Chainlink Price Prediction 2027: Oracle Infrastructure Analysis

Understanding LINK Price Prediction: 2027 Potential

Infrastructure protocols become more valuable as the crypto ecosystem scales and relies on robust middleware. Chainlink provides critical oracle infrastructure where proven utility and deep integrations drive long-term value over retail speculation. Increasing institutional adoption raises demand for professional-grade data delivery and security.

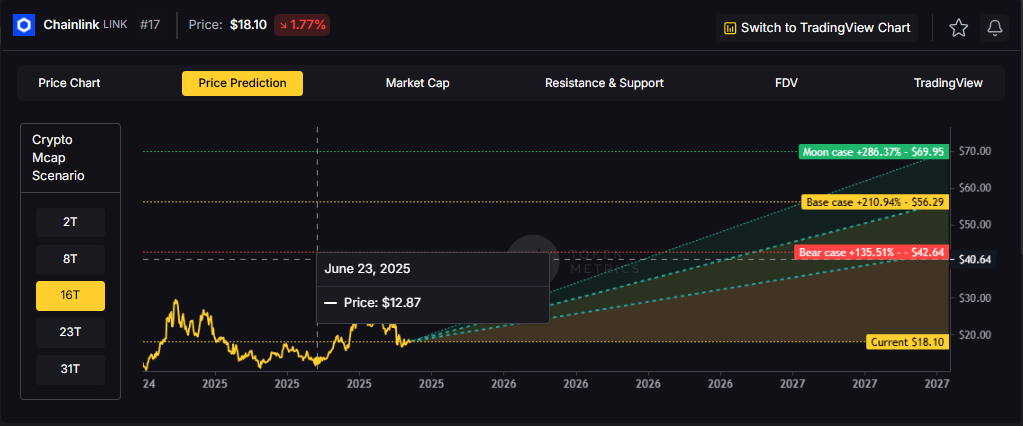

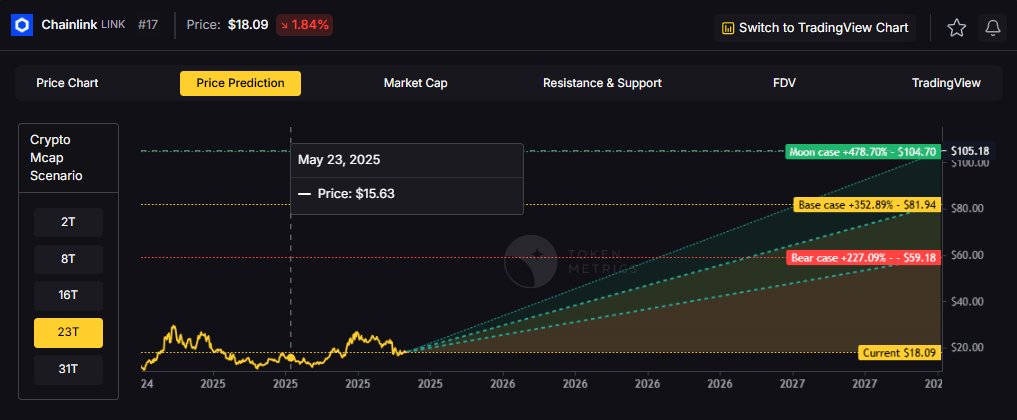

The Token Metrics price projections for LINK below span multiple total crypto market cap scenarios, from conservative to aggressive. Each tier assumes a different level of infrastructure demand as crypto evolves from speculative markets into institutional-grade systems. Together, these bands frame LINK's potential price outcomes through 2027.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

How to Read This LINK Price Prediction

Each band blends historical cycle analogues and market-cap share math with technical-analysis (TA) guardrails. The bear case assumes muted flows and tighter liquidity, the base case assumes steady adoption with a neutral-to-positive macro backdrop, and the moon case layers in a liquidity boom.
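The "market-cap share math" can be illustrated with a short sketch: a token's implied price is its share of the total crypto market cap divided by its circulating supply. This is a minimal illustration under our own assumptions, not Token Metrics' model. The function names are hypothetical, and the 1-billion-token supply in the example is a placeholder, not LINK's actual circulating supply.

```python
def implied_price(total_cap_usd: float, market_share: float, circulating_supply: float) -> float:
    """Token price implied by holding `market_share` of the total crypto market cap."""
    if not 0.0 <= market_share <= 1.0:
        raise ValueError("market_share must be a fraction between 0 and 1")
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    return total_cap_usd * market_share / circulating_supply


def implied_share(total_cap_usd: float, price: float, circulating_supply: float) -> float:
    """Inverse question: what market-cap share would a target price require?"""
    if total_cap_usd <= 0:
        raise ValueError("total_cap_usd must be positive")
    return price * circulating_supply / total_cap_usd


if __name__ == "__main__":
    SUPPLY = 1_000_000_000  # hypothetical supply, NOT LINK's actual circulating supply
    for cap_trillions in (8, 16, 23, 31):  # the four tiers used in this article
        price = implied_price(cap_trillions * 1e12, 0.001, SUPPLY)  # hypothetical 0.1% share
        print(f"{cap_trillions}T at 0.1% share -> ${price:,.2f}")
```

Because share and supply are held fixed here, price scales linearly with total market cap. The inverse helper answers the reverse question of what share a given target price would imply.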

TM Agent baseline: Token Metrics' lead metric for Chainlink ($LINK) is a TM Grade of 23.31%, which translates to a Sell rating, and the trading signal is bearish, indicating short-term downward momentum. In other words, despite strong technology fundamentals, Token Metrics' price prediction models do not currently endorse $LINK as a long-term buy under present conditions.

Live details: Chainlink Token Details

Affiliate Disclosure: We may earn a commission from qualifying purchases made via this link, at no extra cost to you.

Key Takeaways: Chainlink Price Prediction Summary

- Scenario driven: Price prediction outcomes hinge on total crypto market cap; higher liquidity and adoption lift the bands

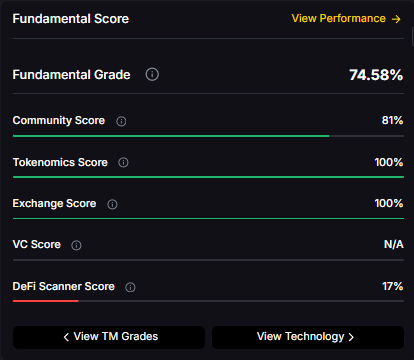

- Fundamentals: Fundamental Grade 74.58% (Community 81%, Tokenomics 100%, Exchange 100%, VC —, DeFi Scanner 17%)

- Technology: Technology Grade 88.50% (Activity 81%, Repository 72%, Collaboration 100%, Security 86%, DeFi Scanner 17%)

- TM Agent gist: Bearish signal with limited upside in price prediction models unless fundamentals or market regime change

- Current rating: Sell (23.31%) with strong tech but weak valuation

- Education only, not financial advice

Chainlink Price Prediction Scenario Analysis

Token Metrics price prediction scenarios span four market cap tiers, each representing different levels of crypto market maturity and liquidity:

8T Market Cap - LINK Price Prediction:

At an $8 trillion total crypto market cap, LINK's price prediction is $26.10 in the bear case, $30.65 in the base case, and $35.20 in the moon case.

16T Market Cap - LINK Price Prediction:

Doubling the total market cap to $16 trillion widens the price prediction range to $42.64 (bear), $56.29 (base), and $69.95 (moon).

23T Market Cap - LINK Price Prediction:

At $23 trillion, the price prediction scenarios show $59.18 (bear), $81.94 (base), and $104.70 (moon).

31T Market Cap - LINK Price Prediction:

In the maximum-liquidity scenario of $31 trillion, LINK price predictions could reach $75.71 (bear), $107.58 (base), or $139.44 (moon).

These price prediction ranges reflect potential infrastructure value capture as crypto markets mature, though current valuation concerns contribute to the Sell rating despite strong technology fundamentals.
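To make the four tiers easier to compare, the sketch below encodes the published bands as data. It computes each scenario's multiple of the $18.09 price quoted in the FAQ and lists the scenarios that clear a given target. The figures are copied from this article. The function names are our own, and $18.09 is a point-in-time snapshot rather than a live quote.

```python
# Published bands: total crypto market cap (USD trillions) -> LINK price (USD) per case.
SCENARIOS = {
    8: {"bear": 26.10, "base": 30.65, "moon": 35.20},
    16: {"bear": 42.64, "base": 56.29, "moon": 69.95},
    23: {"bear": 59.18, "base": 81.94, "moon": 104.70},
    31: {"bear": 75.71, "base": 107.58, "moon": 139.44},
}

REFERENCE_PRICE = 18.09  # snapshot price quoted in the FAQ, not live data


def upside_multiples(reference_price: float) -> dict[tuple[int, str], float]:
    """Each scenario price divided by the reference price (1.5 means +50%)."""
    return {
        (cap, case): price / reference_price
        for cap, bands in SCENARIOS.items()
        for case, price in bands.items()
    }


def scenarios_at_or_above(target: float) -> list[tuple[int, str, float]]:
    """All (tier, case, price) combinations whose projected price reaches `target`."""
    return [
        (cap, case, price)
        for cap, bands in SCENARIOS.items()
        for case, price in bands.items()
        if price >= target
    ]


if __name__ == "__main__":
    for (cap, case), multiple in upside_multiples(REFERENCE_PRICE).items():
        print(f"{cap}T {case:>4}: {multiple:.2f}x")
    print(scenarios_at_or_above(100))
```

Every band, including the 8T bear case, sits above $18.09, and `scenarios_at_or_above(100)` returns the 23T moon, 31T base, and 31T moon cases. The bands also do not scale proportionally with market cap: doubling from 8T to 16T lifts the base case by about 1.84x rather than 2x, so the published figures are not a constant market-cap share, which fits the blended method described earlier.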

Why Consider the Indices with Top-100 Exposure

Chainlink represents one opportunity among hundreds in crypto markets. Token Metrics Indices bundle LINK with the other top 100 assets for systematic exposure to the strongest projects. Single tokens face idiosyncratic risks that diversified baskets mitigate.

Historical index performance can help illustrate how systematic diversification compares with concentrated positions.
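Why a basket mitigates idiosyncratic risk can be shown with the standard variance formula for an equal-weighted portfolio of n assets, each with variance σ² and a common pairwise correlation ρ: Var = σ²/n + ((n − 1)/n)·ρσ². The sketch below assumes identical variances and one average correlation, which is a simplification. The function name and parameter values are illustrative, not estimates for any crypto asset or Token Metrics index.

```python
def basket_variance(n_assets: int, asset_variance: float, avg_correlation: float) -> float:
    """Variance of an equal-weighted basket of n assets.

    Simplifying assumptions: every asset has the same variance and every
    pair of assets shares the same correlation.
    """
    if n_assets < 1:
        raise ValueError("n_assets must be at least 1")
    # Idiosyncratic part: shrinks toward zero as the basket grows.
    idiosyncratic = asset_variance / n_assets
    # Systematic part: converges to avg_correlation * asset_variance and never diversifies away.
    systematic = (n_assets - 1) / n_assets * avg_correlation * asset_variance
    return idiosyncratic + systematic


if __name__ == "__main__":
    sigma_sq = 1.0  # hypothetical single-token variance, normalized to 1
    for n in (1, 10, 100):
        uncorrelated = basket_variance(n, sigma_sq, 0.0)
        correlated = basket_variance(n, sigma_sq, 0.6)  # hypothetical average correlation
        print(n, round(uncorrelated, 4), round(correlated, 4))
```

With zero correlation, a 100-asset basket carries 1% of a single token's variance. With highly correlated assets, only the idiosyncratic part shrinks, so a basket still moves with the broad market.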

What Is Chainlink?

Chainlink is a decentralized oracle network that connects smart contracts to real-world data and systems. It enables secure retrieval and verification of off-chain information, supports computation, and integrates across multiple blockchains. As adoption grows, Chainlink serves as critical infrastructure for reliable data feeds and automation.

The LINK token is used to pay node operators and secure the network's services. Common use cases include DeFi price feeds, insurance, and enterprise integrations, with CCIP extending cross-chain messaging and token transfers—all factors that influence long-term LINK price predictions.

Token Metrics AI Analysis

Token Metrics AI provides comprehensive context informing our LINK price prediction models:

Vision: Chainlink aims to create a decentralized, secure, and reliable network for connecting smart contracts with real-world data and systems. Its vision is to become the standard for how blockchains interact with external environments, enabling trust-minimized automation across industries.

Problem: Smart contracts cannot natively access data outside their blockchain, limiting their functionality. Relying on centralized oracles introduces single points of failure and undermines the security and decentralization of blockchain applications. This creates a critical need for a trustless, tamper-proof way to bring real-world information onto blockchains.

Solution: Chainlink solves this by operating a decentralized network of node operators that fetch, aggregate, and deliver data from off-chain sources to smart contracts. It uses cryptographic proofs, reputation systems, and economic incentives to ensure data integrity. The network supports various data types and computation tasks, allowing developers to build complex, data-driven decentralized applications.
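The aggregation step can be illustrated with a median, a standard way to combine several independent reports so that a minority of faulty or dishonest nodes cannot move the result far. This is a simplified sketch under our own assumptions. `aggregate_reports` and its `min_reports` quorum are hypothetical and the prices are made up. Chainlink's production protocols, such as Off-Chain Reporting, add signing, consensus, and on-chain verification that are not shown here.

```python
from statistics import median


def aggregate_reports(reports: list[float], min_reports: int) -> float:
    """Combine independent node reports into a single value (hypothetical helper).

    The median ignores extreme values, so a minority of faulty or malicious
    reports cannot drag the result outside the range of honest reports.
    """
    if len(reports) < min_reports:
        raise ValueError(f"need at least {min_reports} reports, got {len(reports)}")
    return median(reports)


if __name__ == "__main__":
    # Illustrative LINK/USD observations from five nodes; the last node is faulty.
    observations = [18.08, 18.09, 18.10, 18.11, 950.0]
    print(aggregate_reports(observations, min_reports=3))  # median: 18.1
    print(sum(observations) / len(observations))  # a plain mean is dragged far off
```

The quorum check rejects a round with too few reports, and the median keeps one bad node from distorting the value a smart contract would consume.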

Market Analysis: Chainlink is a market leader in the oracle space and a key infrastructure component in the broader blockchain ecosystem, particularly within Ethereum and other smart contract platforms. It faces competition from emerging oracle networks like Band Protocol and API3, but maintains a strong first-mover advantage and widespread integration across DeFi, NFTs, and enterprise blockchain solutions. Adoption is driven by developer activity, partnerships with major blockchain projects, and demand for secure data feeds. Key risks include technological shifts, regulatory scrutiny on data providers, and execution challenges in scaling decentralized oracle networks. As smart contract usage grows, so does the potential for oracle services, positioning Chainlink at the center of a critical niche, though its success depends on maintaining security and decentralization over time—all critical factors in our price prediction analysis.

Fundamental and Technology Snapshot from Token Metrics

Fundamental Grade: 74.58% (Community 81%, Tokenomics 100%, Exchange 100%, VC —, DeFi Scanner 17%).

Technology Grade: 88.50% (Activity 81%, Repository 72%, Collaboration 100%, Security 86%, DeFi Scanner 17%).

Catalysts That Skew LINK Price Predictions Bullish

- Institutional and retail access expands with ETFs, listings, and integrations

- Macro tailwinds from lower real rates and improving liquidity

- Product or roadmap milestones such as CCIP upgrades, scaling, or partnerships

- Increased adoption of Chainlink oracle services across DeFi protocols

- Enterprise blockchain integrations requiring secure data feeds

- Cross-chain expansion through CCIP (Cross-Chain Interoperability Protocol)

Risks That Skew LINK Price Predictions Bearish

- Macro risk-off from tightening or liquidity shocks

- Regulatory actions targeting oracle networks, or infrastructure outages

- Concentration in node operator economics and competitive displacement

- Current low TM Grade (23.31%) indicating valuation concerns

- Competition from alternative oracle solutions (Band Protocol, API3)

- Token economics challenges that the 100% tokenomics score may not capture

How Token Metrics Can Help

Token Metrics empowers you to analyze Chainlink and hundreds of digital assets with AI-driven ratings, on-chain and fundamental data, and index solutions to manage portfolio risk smartly in a rapidly evolving crypto market. Our price prediction frameworks provide transparent scenario-based analysis even for tokens with Sell ratings.

Chainlink Price Prediction FAQs

Can LINK reach $100?

Yes. Based on our price prediction scenarios, LINK could exceed $100 in the 23T moon case ($104.70) and in both the 31T base ($107.58) and 31T moon ($139.44) cases. However, each of these requires significant market cap expansion and better market conditions than the current Sell rating (23.31%) reflects. Not financial advice.

What price could LINK reach in the moon case?

Moon case price predictions range from $35.20 at 8T to $139.44 at 31T total crypto market cap. These scenarios assume maximum liquidity expansion and strong Chainlink adoption, though current bearish signals suggest caution. Not financial advice.

Should I buy LINK now or wait?

Timing depends on risk tolerance and macro outlook. Current price of $18.09 sits below the 8T bear case in our price prediction scenarios, suggesting potential value. However, the Sell rating (23.31%) and bearish trading signal indicate Token Metrics does not currently endorse LINK at these levels. Dollar-cost averaging may reduce timing risk if you believe in long-term infrastructure value. Not financial advice.

What is the Chainlink price prediction for 2025-2027?

Our LINK price prediction framework suggests Chainlink could trade between $26.10 and $139.44 depending on market conditions and total crypto market capitalization. The base case spans $30.65 (8T) to $107.58 (31T) across market cap environments. Despite strong technology (88.50%) and fundamentals (74.58%), the current Sell rating (23.31%) reflects valuation concerns. Not financial advice.

Can Chainlink reach $50?

Yes. Based on our price prediction scenarios, LINK could reach $56.29 in the 16T base case and higher in 23T/31T scenarios. The $50 target becomes achievable in moderate market cap environments (16T tier), though current bearish momentum suggests this may take time. Not financial advice.

Why does LINK have a Sell rating despite strong technology?

LINK shows excellent technology fundamentals (88.50% grade) with strong development activity, collaboration, and security. However, the overall TM Grade of 23.31% (Sell) reflects current valuation concerns, market positioning, and bearish trading signals. Our price prediction models show potential upside in favorable market conditions, but current metrics suggest waiting for improved entry points. Not financial advice.

Is Chainlink a good investment based on price predictions?

LINK presents a complex investment case: exceptional technology grade (88.50%), solid fundamentals (74.58%), but a Sell rating (23.31%) with bearish momentum. While our price prediction models show significant upside potential in bull market scenarios, current valuation concerns and bearish signals suggest caution. The oracle infrastructure thesis remains compelling long-term, but timing and entry points matter. Consider diversified exposure through indices. Not financial advice.

How does LINK compare to other oracle price predictions?

Chainlink dominates the oracle space with a first-mover advantage and widespread integration. Our price prediction framework suggests LINK could trade between roughly $26 and $139 across scenarios. Competitors like Band Protocol and API3 offer alternatives, but Chainlink's established network effects and enterprise partnerships position it as the infrastructure leader. However, the current Sell rating signals valuation concerns relative to alternatives.

What are the biggest risks to LINK price predictions?

Key risks that could impact Chainlink price predictions include: the current Sell rating (23.31%) indicating valuation concerns, competition from emerging oracle networks, regulatory scrutiny on data providers, node operator centralization risks, macro liquidity shocks, and challenges scaling decentralized oracle infrastructure. Despite strong technology (88.50%), these factors contribute to a bearish near-term outlook.

Will LINK benefit from DeFi growth?

Chainlink is critical infrastructure for DeFi, providing price feeds for lending protocols, derivatives, and stablecoins. Our price prediction scenarios assume LINK captures value from continued DeFi adoption. However, the current Sell rating suggests this thesis isn't reflected in valuation metrics yet. Long-term infrastructure value may require patience and improved market conditions.

Next Steps

Track live grades and signals: Token Details

Want exposure? Buy LINK on MEXC

Why Token Metrics Ratings Matter

Discover the full potential of your crypto research and portfolio management with Token Metrics. Our ratings combine AI-driven analytics, on-chain data, and decades of investing expertise—giving you the edge to navigate fast-changing markets. Try our platform to access scenario-based price prediction targets, token grades, indices, and more for institutional and individual investors. Token Metrics is your research partner through every crypto market cycle.

Why Use Token Metrics for LINK Price Predictions?

- Transparent analysis: Honest Sell ratings (23.31%) even when technology fundamentals are strong (88.50%)

- Scenario-based modeling: Multiple market cap tiers for comprehensive price prediction analysis

- Infrastructure focus: Specialized oracle network analysis and competitive landscape assessment

- Risk-adjusted approach: Balanced view of technology strength versus valuation concerns

- Real-time signals: Trading signals and TM Grades updated regularly

- Diversification tools: Index solutions to spread oracle infrastructure risk

- Comparative analysis: Analyze LINK against Band Protocol, API3, and 6,000+ tokens
