Top Crypto Trading Platforms in 2025


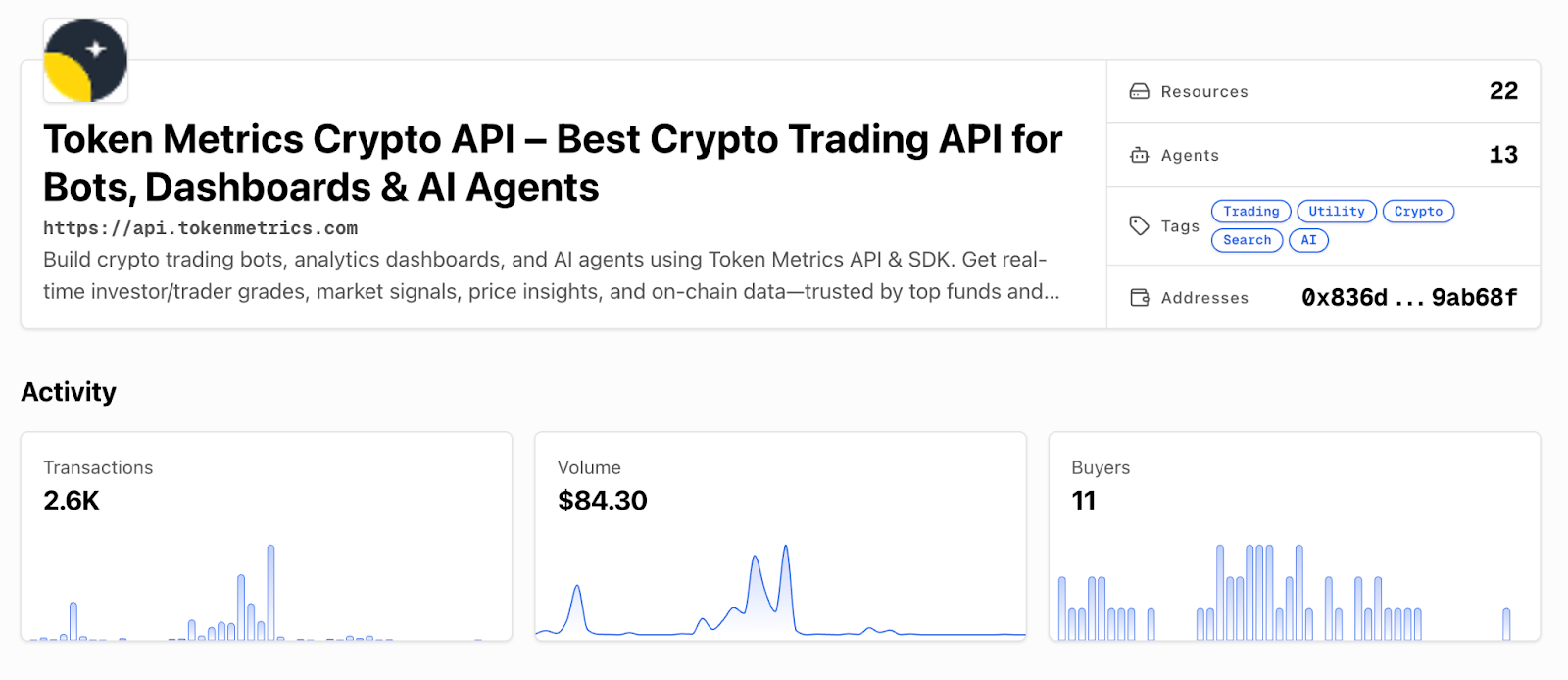

Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend or getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints packed with powerful AI-driven insights right into your lap, including:

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.


DeFi protocols are maturing beyond early ponzi dynamics toward sustainable revenue models. Aave operates in this evolving landscape where real yield and proven product-market fit increasingly drive valuations rather than speculation alone. Growing regulatory pressure on centralized platforms creates tailwinds for decentralized alternatives—factors that inform our comprehensive AAVE price prediction framework.

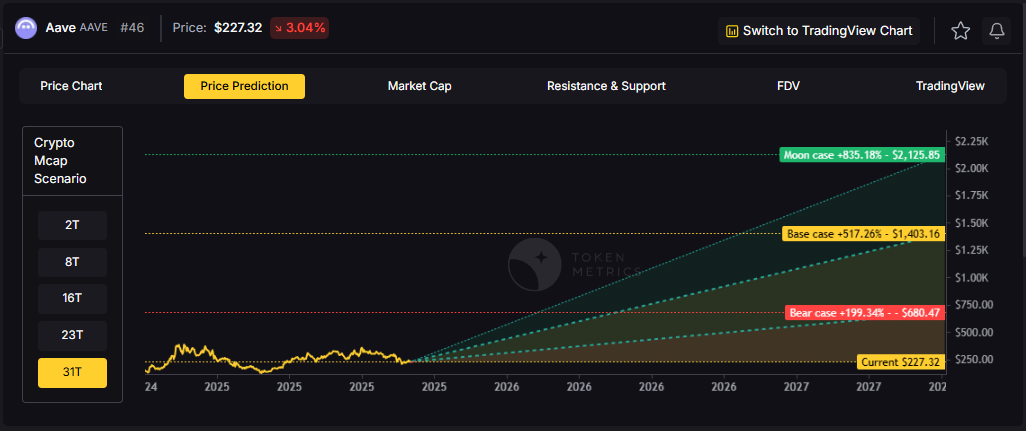

The scenario bands below reflect how AAVE price predictions might perform across different total crypto market cap environments. Each tier represents a distinct liquidity regime, from bear conditions with muted DeFi activity to moon scenarios where decentralized infrastructure captures significant value from traditional finance.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.

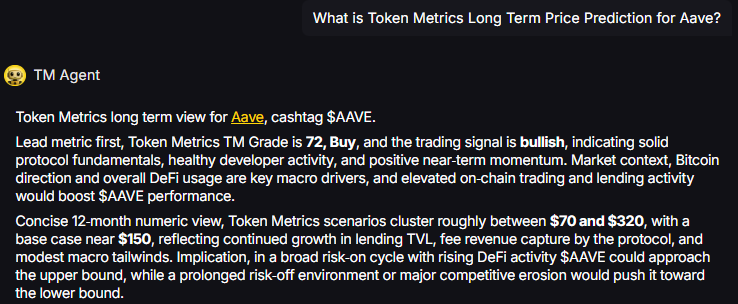

TM Agent baseline: The Token Metrics TM Grade is 72 (Buy) and the trading signal is bullish, indicating solid protocol fundamentals, healthy developer activity, and positive near-term momentum. Over a twelve-month horizon, Token Metrics price prediction scenarios cluster roughly between $70 and $320, with a base case near $150, reflecting continued growth in lending TVL, fee revenue capture by the protocol, and modest macro tailwinds.

Live details: Aave Token Details

Affiliate Disclosure: We may earn a commission from qualifying purchases made via this link, at no extra cost to you.

Our Token Metrics price prediction framework spans four market cap tiers, each representing different levels of crypto market maturity and liquidity:

8T Market Cap - AAVE Price Prediction:

At an 8 trillion dollar total crypto market cap, AAVE projects to $293.45 in the bear case, $396.69 in the base case, and $499.94 in the moon case.

Doubling the market to 16 trillion expands the price prediction range to $427.46 (bear), $732.18 (base), and $1,041.91 (moon).

At 23 trillion, the price prediction scenarios show $551.46 (bear), $1,007.67 (base), and $1,583.86 (moon).

In the maximum liquidity scenario of 31 trillion, AAVE price predictions could reach $680.47 (bear), $1,403.16 (base), or $2,175.85 (moon).

Each tier assumes progressively stronger market conditions, with the base case price prediction reflecting steady growth and the moon case requiring sustained bull market dynamics.

Aave represents one opportunity among hundreds in crypto markets. Token Metrics Indices bundle AAVE with the top 100 assets for systematic exposure to the strongest projects. Single tokens face idiosyncratic risks that diversified baskets mitigate.

Historical index performance demonstrates the value of systematic diversification versus concentrated positions.

Aave is a decentralized lending protocol that operates across multiple EVM-compatible chains including Ethereum, Polygon, Arbitrum, and Optimism. The network enables users to supply crypto assets as collateral and borrow against them in an over-collateralized manner, with interest rates dynamically adjusted based on utilization.

The AAVE token serves as both a governance asset and a backstop for the protocol through the Safety Module, where stakers earn rewards in exchange for assuming shortfall risk. Primary utilities include voting on protocol upgrades, fee switches, collateral parameters, and new market deployments.

Token Metrics AI provides comprehensive context on Aave's positioning and challenges.

Vision: Aave aims to create an open, accessible, and non-custodial financial system where users have full control over their assets. Its vision centers on decentralizing credit markets and enabling seamless, trustless lending and borrowing across blockchain networks.

Problem: Traditional financial systems often exclude users due to geographic, economic, or institutional barriers. Even in crypto, accessing credit or earning yield on idle assets can be complex, slow, or require centralized intermediaries. Aave addresses the need for transparent, permissionless, and efficient lending and borrowing markets in the digital asset space.

Solution: Aave uses a decentralized protocol where users supply assets to liquidity pools and earn interest, while borrowers can draw from these pools by posting collateral. It supports features like variable and stable interest rates, flash loans, and cross-chain functionality through its Layer 2 and multi-chain deployments. The AAVE token is used for governance and as a safety mechanism via its staking program (Safety Module).

Market Analysis: Aave is a leading player in the DeFi lending sector, often compared with protocols like Compound and Maker. It benefits from strong brand recognition, a mature codebase, and ongoing innovation such as Aave Arc for institutional pools and cross-chain expansion. Adoption is driven by liquidity, developer activity, and integration with other DeFi platforms. Key risks include competition from newer lending protocols, regulatory scrutiny on DeFi, and smart contract risks. As a top DeFi project, Aave's performance reflects broader trends in decentralized finance, including yield demand, network security, and user trust. Its multi-chain strategy helps maintain relevance amid shifting ecosystem dynamics.

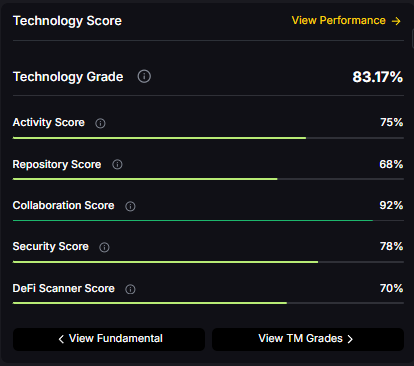

Fundamental Grade: 75.51% (Community 77%, Tokenomics 100%, Exchange 100%, VC 49%, DeFi Scanner 70%).

Technology Grade: 83.17% (Activity 75%, Repository 68%, Collaboration 92%, Security 78%, DeFi Scanner 70%).

Yes. Based on our price prediction scenarios, AAVE could reach $1,007.67 in the 23T base case and $1,041.91 in the 16T moon case. Not financial advice.

At the current price of $228.16, a 10x would reach $2,281.60. That is slightly above the 31T moon case price prediction of $2,175.85 (about 9.5x), so a full 10x would require liquidity expansion beyond even the most optimistic scenario modeled here. Not financial advice.
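A quick way to sanity-check the 10x question is to compare the target against the moon-case figures quoted in this article. The sketch below uses only those quoted numbers; the dictionary layout and variable names are illustrative, not part of any Token Metrics tooling.

```python
# Moon-case scenario prices quoted in this article, keyed by total market cap tier.
MOON_CASE = {"8T": 499.94, "16T": 1041.91, "23T": 1583.86, "31T": 2175.85}

current_price = 228.16  # price quoted in the article
target_10x = round(current_price * 10, 2)

# Multiple of the current price implied by each moon-case scenario.
multiples = {tier: round(price / current_price, 2) for tier, price in MOON_CASE.items()}

# Tiers whose moon case meets or exceeds the 10x target.
tiers_reaching_10x = [tier for tier, price in MOON_CASE.items() if price >= target_10x]

print(target_10x)          # 2281.6
print(multiples["31T"])    # 9.54
print(tiers_reaching_10x)  # []
```

The highest quoted scenario works out to roughly 9.5x the quoted price, which is why a full 10x sits just outside the modeled range.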

Our moon case price predictions range from $499.94 at 8T to $2,175.85 at 31T. These scenarios assume maximum liquidity expansion and strong Aave adoption. Not financial advice.

Our comprehensive 2027 price prediction framework suggests AAVE could trade between $293.45 and $2,175.85, depending on market conditions and total crypto market capitalization. The base case ranges from $396.69 to $1,403.16 across the four market cap environments. Not financial advice.

AAVE shows strong fundamentals (75.51% grade) and technology scores (83.17% grade), with bullish trading signals. However, all price predictions involve uncertainty and risk. Always conduct your own research and consult financial advisors before investing. Not financial advice.

Track live grades and signals: Token Details

Want exposure? Buy AAVE on MEXC



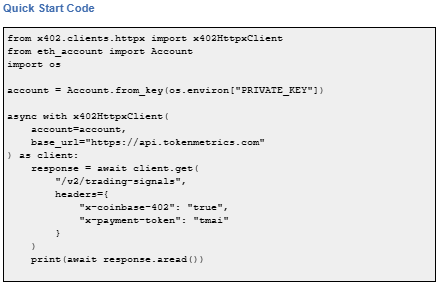

x402 is an open-source, HTTP-native payment protocol developed by Coinbase that enables pay-per-call API access using crypto wallets. It leverages the HTTP 402 Payment Required status code to create seamless, keyless API payments.

It eliminates traditional API keys and subscriptions, allowing agents and applications to pay for exactly what they use in real time. It works across Base and Solana with USDC and selected native tokens such as TMAI.

Start using the Token Metrics x402 integration here: https://www.x402scan.com/server/244415a1-d172-4867-ac30-6af563fd4d25

x402 transforms API access by making payments native to HTTP requests.

| Feature | Traditional APIs | x402 APIs |
| --- | --- | --- |
| Authentication | API keys, tokens | Wallet signature |
| Payment Model | Subscription, prepaid | Pay-per-call |
| Onboarding | Sign up, KYC, billing | Connect wallet |
| Rate Limits | Fixed tiers | Economic (pay more = more access) |
| Commitment | Monthly/annual | Zero, per-call only |

How to use it: Add the x-coinbase-402: true header to any supported endpoint, then sign the payment with your wallet. The API responds immediately after confirming the micro-payment.

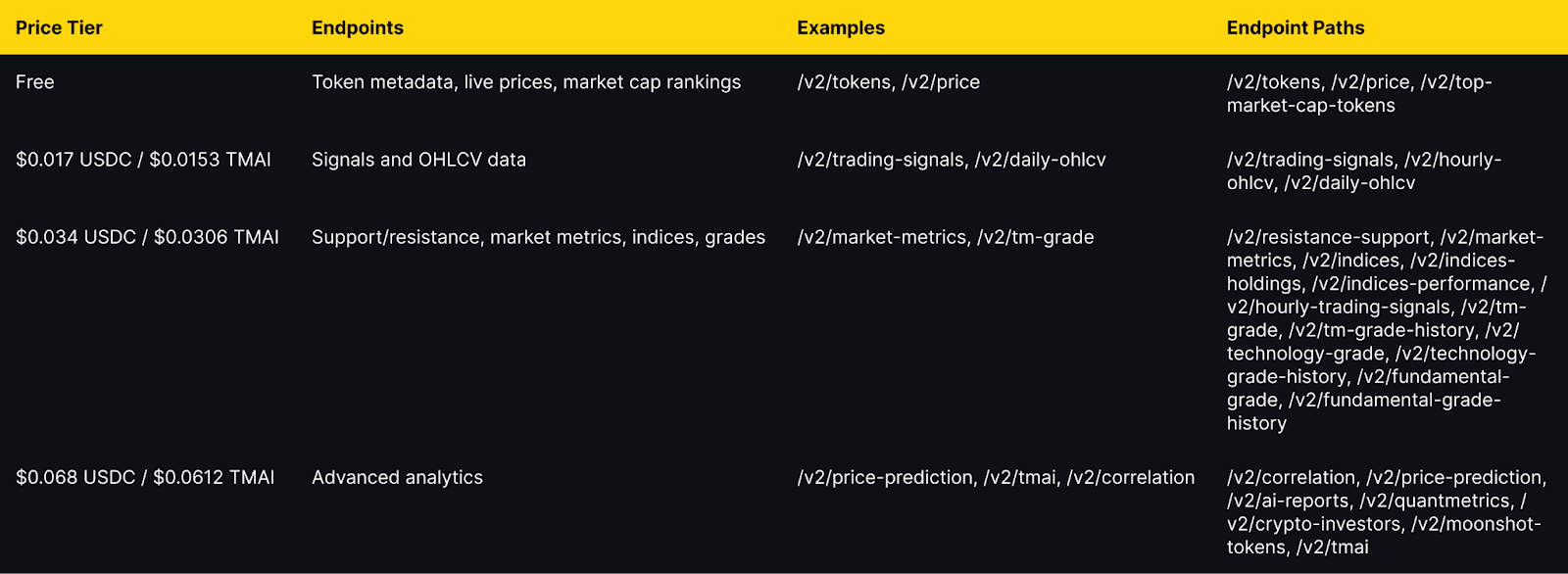

Token Metrics integration: All public endpoints available via x402 with per-call pricing from $0.017 to $0.068 USDC (10% discount with TMAI token).

Explore live agents: https://www.x402scan.com/composer.

The Protocol Flow

The HTTP 402 status code was reserved in HTTP/1.1 in 1997 for future digital payment use cases and was never standardized for any specific payment scheme. x402 activates this path by using 402 responses to coordinate crypto payments during API requests.

Why this matters: It eliminates intermediary payment processors, enables true machine-to-machine commerce, and reduces friction for AI agents.

CoinGecko Recognition

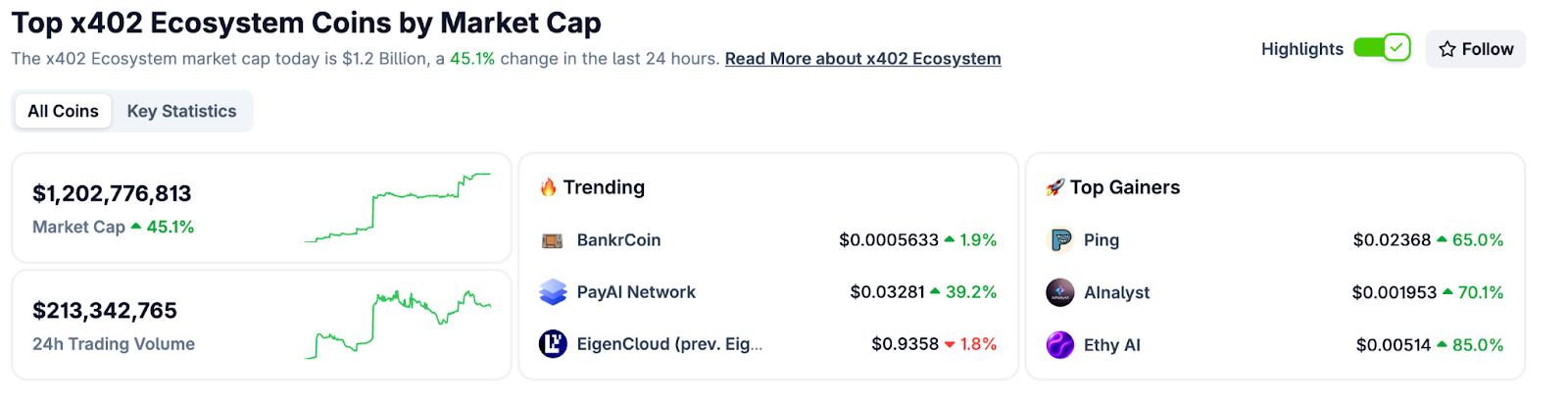

CoinGecko launched a dedicated x402 Ecosystem category in October 2025, tracking 700+ projects with over $1 billion market cap and approximately $213 million in daily trading volume. Top performers include PING and AInalyst, along with established projects like EigenCloud.

Base Network Adoption

Base has emerged as the primary chain for x402 adoption, with 450,000+ weekly transactions by late October 2025, up from near-zero in May. This growth demonstrates real agent and developer usage.

Composer is x402scan's sandbox for discovering and using AI agents that pay per tool call. Users can open any agent, chat with it, and watch tool calls and payments stream in real time.

Top agents include AInalyst, Canza, SOSA, and NewEra. The Composer feed shows live activity across all agents.

Explore Composer: https://x402scan.com/composer

What We Ship

Token Metrics offers all public API endpoints via x402 with no API key required. Pay per call with USDC or TMAI for a 10 percent discount. Access includes trading signals, price predictions, fundamental grades, technology scores, indices data, and the AI chatbot.

Check out the Token Metrics integration on x402: https://www.x402scan.com/server/244415a1-d172-4867-ac30-6af563fd4d25

Data as of October 2025.

Pricing Tiers

Important note: TMAI Spend Limit: TMAI has 18 decimals. Set max payment to avoid overspending. Example: 200 TMAI = 200 * (10 ** 18) in base units.
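The base-unit conversion in the note above can be sketched in a few lines. The helper name to_base_units is hypothetical; the only facts taken from the article are the 18 decimals and the 200 TMAI example.

```python
TMAI_DECIMALS = 18  # stated in the note above


def to_base_units(amount_tmai: int, decimals: int = TMAI_DECIMALS) -> int:
    """Convert a whole-token amount into integer base units."""
    if amount_tmai < 0:
        raise ValueError("amount must be non-negative")
    return amount_tmai * (10 ** decimals)


# Spend cap from the article's example: 200 TMAI.
max_payment = to_base_units(200)
print(max_payment)  # 200000000000000000000
```

Keeping the cap as a Python int avoids floating-point rounding, which matters at 18 decimal places.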

Full integration guide: https://api.tokenmetrics.com

Ecosystem Participants and Tools

Active x402 Endpoints

Key endpoints beyond Token Metrics include Heurist Mesh for crypto intelligence, Tavily extract for structured web content, Firecrawl search for SERP and scraping, Twitter or X search for social discovery, and various DeFi and market data providers.

Infrastructure and Tools

Common Questions About x402

How is x402 different from traditional API keys?

x402 uses wallet signatures instead of API keys. Payment happens per call rather than via subscription. No sign-up, no monthly billing, no rate limit tiers. You pay for exactly what you use.

Which chains support x402?

Currently Base and Solana. Most activity is on Base with USDC as the primary payment token. Some endpoints accept native tokens like TMAI for discounts.

Do I need to trust the API provider with my funds?

No. Payments are on-chain and verifiable. You approve each transaction amount. No escrow or prepayment is required.

What happens if a payment fails?

The API returns 402 Payment Required again with updated payment details. Your client retries automatically. You do not receive data until payment confirms.
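The retry behavior described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the Response type, the "amount" field, the integer price units, and the send and sign_payment callables are all hypothetical stand-ins, not the real x402 wire format or any client library's API.

```python
from dataclasses import dataclass


@dataclass
class Response:
    status: int
    body: dict


def call_with_payment(send, sign_payment, max_amount, max_attempts=3):
    """Retry a request until the server stops answering 402.

    send(payment) performs the request; sign_payment(requirements) returns a
    payment proof. Both are hypothetical callables supplied by the caller.
    """
    payment = None
    for _ in range(max_attempts):
        response = send(payment)
        if response.status != 402:
            return response  # data is only returned once payment is accepted
        requirements = response.body
        if requirements["amount"] > max_amount:
            raise ValueError("requested payment exceeds the spend limit")
        payment = sign_payment(requirements)
    raise RuntimeError("payment was not accepted after retries")


# Fake server and signer, for illustration only (amounts in made-up units).
def fake_server(payment):
    if payment is None:
        return Response(402, {"amount": 17, "asset": "USDC"})
    return Response(200, {"data": "ok"})


def fake_signer(requirements):
    return {"signed": True, "amount": requirements["amount"]}


result = call_with_payment(fake_server, fake_signer, max_amount=68)
print(result.status, result.body)
```

In practice a client library would handle this loop; the point of the sketch is the order of events and the spend-limit check before signing.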

Can I use x402 with existing API clients?

Yes, with x402 client libraries such as x402-axios for Node and x402-httpx for Python. These wrap standard HTTP clients and handle the payment flow automatically.

Getting Started Checklist

Token Metrics x402 Resources

What's Next for x402

Ecosystem expansion. More API providers adopting x402, additional chains beyond Base and Solana, standardization of payment headers and response formats.

Agent sophistication. As x402 matures, expect agents that automatically discover and compose multiple paid endpoints, optimize costs across providers, and negotiate better rates for bulk usage.

Disclosure

Educational content only, not financial advice. API usage and crypto payments carry risks. Verify all transactions before signing. Do your own research.



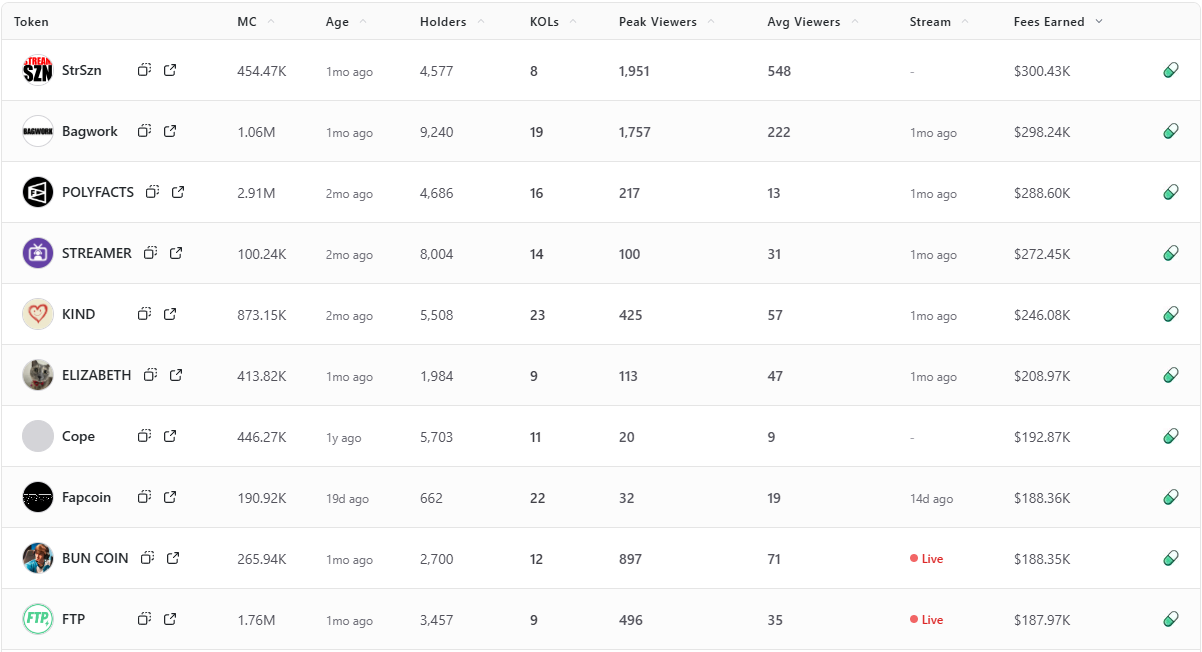

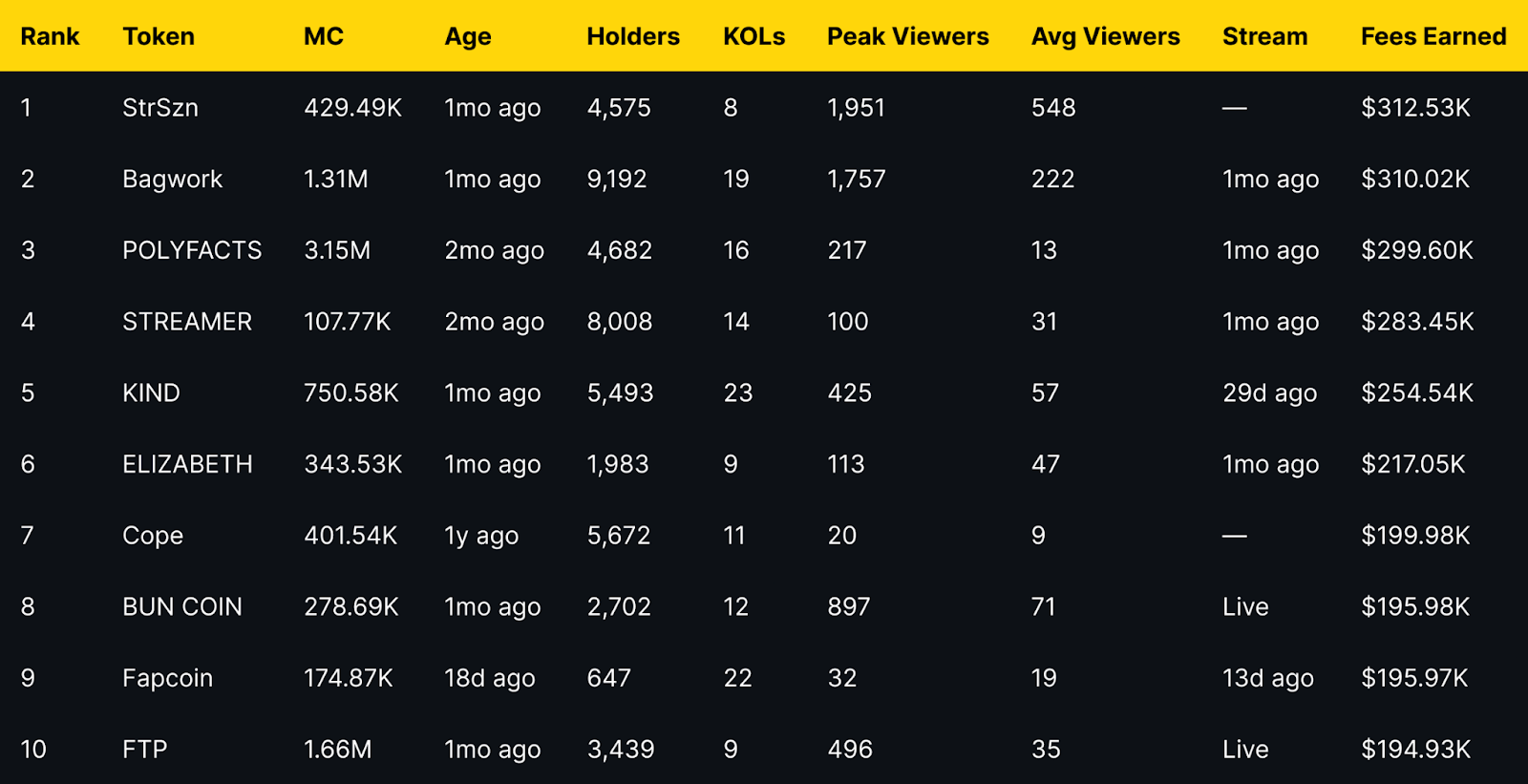

Fees Earned is a clean way to see which livestream tokens convert attention into on-chain activity. This leaderboard ranks the top 10 Pump.fun livestream tokens by Fees Earned, based on the captured screenshot.

The selection rule is simple: the top 10 by Fees Earned from the screenshot, with numbers reproduced exactly as shown. If a field is not in the image, it is recorded as —.

Entity coverage: project names and tickers are taken as listed on Pump.fun; the chain is Solana; the sector is livestream meme tokens and creator tokens.

Token Metrics Live (TMLIVE) brings real-time, data-driven crypto market analysis to Pump.fun. The team has produced live crypto content for 7 years with an audience of more than 500K and a platform of more than 100,000 users. Our public track record includes early coverage of winners like MATIC and Helium in 2018.

TMLIVE Quick Stats, as captured

TLDR: Fees Earned Leaders at a Glance

Short distribution note: the top three sit within a narrow band of each other, while mid-table tokens show a mix of older communities and recent streams. Several names with modest average viewers still appear due to concentrated activity during peaks.

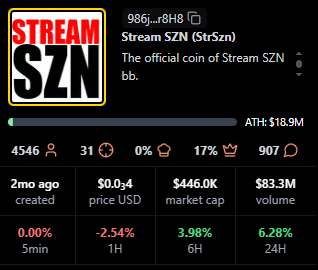

StrSzn

Positioning: Active community meme with consistent viewer base.

Research Blurb: Project details unclear at time of writing. Fees and viewership suggest consistent stream engagement over the last month.

Quick Facts: Chain = Solana, Status = —, Peak Viewers = 1,951, Avg Viewers = 548.

https://pump.fun/coin/986j8mhmidrcbx3wf1XJxsQFvWBMXg7gnDi3mejsr8H8

Bagwork

Positioning: Large holder base with sustained attention.

Research Blurb: Project details unclear at time of writing. Strong holders and KOL presence supported steady audience numbers.

Quick Facts: Chain = Solana, Status = 1mo ago, Holders = 9,192, KOLs = 19.

https://pump.fun/coin/7Pnqg1S6MYrL6AP1ZXcToTHfdBbTB77ze6Y33qBBpump

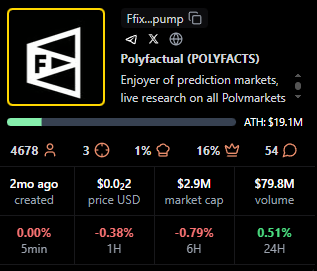

POLYFACTS

Positioning: Higher market cap with light average viewership.

Research Blurb: Project details unclear at time of writing. High market cap with comparatively low average viewers implies fees concentrated in shorter windows.

Quick Facts: Chain = Solana, Status = 1mo ago, MC = 3.15M, Avg Viewers = 13.

https://pump.fun/coin/FfixAeHevSKBZWoXPTbLk4U4X9piqvzGKvQaFo3cpump

STREAMER

Positioning: Community focused around streaming identity.

Research Blurb: Project details unclear at time of writing. Solid holders and moderate KOL count, steady averages over time.

Quick Facts: Chain = Solana, Status = 1mo ago, Holders = 8,008, KOLs = 14.

https://pump.fun/coin/3arUrpH3nzaRJbbpVgY42dcqSq9A5BFgUxKozZ4npump

KIND

Positioning: Heaviest KOL footprint in the top 10.

Research Blurb: Project details unclear at time of writing. The largest KOL count here aligns with above average view metrics and meaningful fees.

Quick Facts: Chain = Solana, Status = 29d ago, KOLs = 23, Avg Viewers = 57.

https://pump.fun/coin/V5cCiSixPLAiEDX2zZquT5VuLm4prr5t35PWmjNpump

ELIZABETH

Positioning: Mid-cap meme with consistent streams.

Research Blurb: Project details unclear at time of writing. Viewer averages and recency indicate steady presence rather than single spike behavior.

Quick Facts: Chain = Solana, Status = 1mo ago, Avg Viewers = 47, Peak Viewers = 113.

https://pump.fun/coin/DiiTPZdpd9t3XorHiuZUu4E1FoSaQ7uGN4q9YkQupump

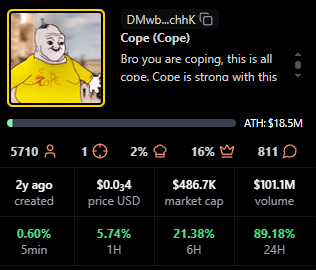

Cope

Positioning: Older token with a legacy community.

Research Blurb: Project details unclear at time of writing. Despite low recent averages, it holds a sizable base and meaningful fees.

Quick Facts: Chain = Solana, Status = —, Age = 1y ago, Avg Viewers = 9.

https://pump.fun/coin/DMwbVy48dWVKGe9z1pcVnwF3HLMLrqWdDLfbvx8RchhK

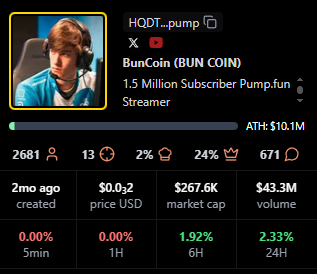

BUN COIN

Positioning: Currently live, strong peaks relative to size.

Research Blurb: Project details unclear at time of writing. Live streaming status often coincides with bursts of activity that lift fees quickly.

Quick Facts: Chain = Solana, Status = Live, Peak Viewers = 897, Avg Viewers = 71.

https://pump.fun/coin/HQDTzNa4nQVetoG6aCbSLX9kcH7tSv2j2sTV67Etpump

Fapcoin

Positioning: Newer token with targeted pushes.

Research Blurb: Project details unclear at time of writing. Recent age and meaningful KOL support suggest orchestrated activations that can move fees.

Quick Facts: Chain = Solana, Status = 13d ago, Age = 18d ago, KOLs = 22.

https://pump.fun/coin/8vGr1eX9vfpootWiUPYa5kYoGx9bTuRy2Xc4dNMrpump

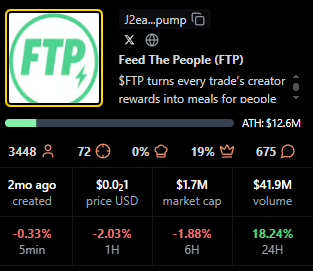

FTP

Positioning: Live status with solid mid-table view metrics.

Research Blurb: Project details unclear at time of writing. Peaks and consistent averages suggest an active audience during live windows.

Quick Facts: Chain = Solana, Status = Live, Peak Viewers = 496, Avg Viewers = 35.

https://pump.fun/coin/J2eaKn35rp82T6RFEsNK9CLRHEKV9BLXjedFM3q6pump

Signals From Fees Earned: Patterns to Watch

Fees Earned often rises with peak and average viewers, but timing matters. Several tokens here show concentrated peaks with modest averages, which implies that well-timed announcements or coordinated segments can still produce high fees.

Age is not a blocker for this board. Newer tokens like Fapcoin appear due to focused activity, while older names such as Cope persist by mobilizing established holders. KOL count appears additive rather than decisive, with KIND standing out as the KOL leader.

For creators, Fees Earned reflects whether livestream moments translate into on-chain action. Design streams around clear calls to action, align announcements with segments that drive peaks, then sustain momentum with repeatable formats that stabilize averages.

For traders, Fees Earned complements market cap, viewers, and age. Look for projects that combine rising averages with consistent peaks, because those patterns suggest repeatable engagement rather than single event spikes.

TV Live is a fast way to follow real-time crypto market news, creator launches, and token breakdowns as they happen. You get context on stream dynamics, audience behavior, and on-chain activity while the story evolves.

Watch TV Live for real-time crypto market news → TV Live

Follow and enable alerts → TV Live

Token Metrics is trusted for transparent data, crypto analytics, on-chain ratings, and investor education. Our platform offers cutting-edge signals and market research to empower your crypto investing decisions.

What is the best way to track Pump.fun livestream leaders?

Tracking Pump.fun livestream leaders starts with the scanner views that show Fees Earned, viewers, and KOLs side by side, paired with live coverage so you see data and narrative shifts together.

Do higher fees predict higher market cap or sustained viewership?

Higher Fees Earned does not guarantee higher market cap or sustained viewership. It indicates conversion in specific windows, while longer-term outcomes still depend on execution and community engagement.

How often do these rankings change?

Rankings can change quickly during active cycles; the entries shown here reflect the exact time of the screenshot.

Next Steps

Disclosure

This article is educational content. Cryptocurrency involves risk. Always do your own research.


API design is no longer just about response time; it is also about developer ergonomics, safety, observability, and the ability to integrate modern AI services. FastAPI (often searched for as "fast api") has become a favored Python framework for building high-performance, async-ready APIs with built-in validation. This article explains the core concepts, best practices, and deployment patterns to help engineering teams build reliable, maintainable APIs that scale.

FastAPI is a Python web framework built on Starlette (an ASGI toolkit) and Pydantic, and it is typically served by an ASGI server such as Uvicorn. It emphasizes developer speed and runtime performance. Key differentiators include automatic request validation via Pydantic, type-driven documentation (OpenAPI/Swagger UI generated automatically), and first-class async support. Practically, that means less boilerplate, clearer contracts between clients and servers, and competitive throughput for I/O-bound workloads.

At the heart of FastAPI’s performance is asynchronous concurrency. By leveraging async/await, FastAPI handles many simultaneous connections efficiently, especially when endpoints perform non-blocking I/O such as database queries, HTTP calls to third-party services, or interactions with AI models. Important performance factors to evaluate:

FastAPI’s integration with Pydantic makes data validation explicit and type-driven. Use Pydantic models for request and response schemas to ensure inputs are sanitized and outputs are predictable. Recommended patterns:

Many modern APIs act as orchestrators for AI models or third-party data services. FastAPI’s async-first design pairs well with calling model inference endpoints or streaming responses. Practical tips when integrating AI services:

Deploying FastAPI to production typically involves containerized ASGI servers, an API gateway, and autoscaling infrastructure. Core operational considerations include:

Build Smarter Crypto Apps & AI Agents with Token Metrics

Token Metrics provides real-time prices, trading signals, and on-chain insights all from one powerful API. Grab a Free API Key

FastAPI is built for the async ASGI ecosystem and emphasizes type-driven validation and automatic OpenAPI documentation. Flask is a synchronous WSGI framework that is lightweight and flexible but requires more manual setup for async support, validation, and schema generation. Choose based on concurrency needs, existing ecosystem, and developer preference.

Use async endpoints when your handler performs non-blocking I/O such as database queries with async drivers, external HTTP requests, or calls to async message brokers. For CPU-heavy tasks, prefer background workers or separate services to avoid blocking the event loop.
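The split between non-blocking I/O and blocking work can be sketched with the standard library alone. The function names below are hypothetical, asyncio.sleep stands in for a real async driver or HTTP call, and the handler is a plain coroutine rather than a FastAPI route.

```python
import asyncio


async def fetch_quote(symbol: str) -> str:
    # Stand-in for non-blocking I/O such as an async database or HTTP call.
    await asyncio.sleep(0.05)
    return f"{symbol}:ok"


def blocking_work(n: int) -> int:
    # Blocking computation; calling it directly in a coroutine stalls the loop.
    return sum(i * i for i in range(n))


async def handler() -> tuple[list[str], int]:
    # I/O-bound calls overlap on the event loop.
    quotes = await asyncio.gather(*(fetch_quote(s) for s in ("BTC", "ETH", "AAVE")))
    # Blocking work is moved off the event loop onto a worker thread.
    total = await asyncio.to_thread(blocking_work, 1000)
    return list(quotes), total


quotes, total = asyncio.run(handler())
print(quotes, total)
```

A worker thread keeps the event loop responsive but does not add CPU parallelism in CPython; for sustained heavy computation, a process pool or a separate worker service, as suggested above, is the sturdier option.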

Pydantic enforces input types and constraints at the boundary of your application, reducing runtime errors and making APIs self-documenting. It also provides clear error messages, supports complex nested structures, and integrates tightly with FastAPI’s automatic documentation.
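To show the idea of validating at the application boundary without extra dependencies, the sketch below uses a plain dataclass as a stand-in. It is not Pydantic and does not reproduce Pydantic's API; the OrderRequest model, its fields, and parse_order are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderRequest:
    symbol: str
    quantity: float

    def __post_init__(self) -> None:
        # Reject bad input before it reaches any business logic.
        if not isinstance(self.symbol, str) or not self.symbol.isalpha():
            raise ValueError("symbol must be an alphabetic string")
        if isinstance(self.quantity, bool) or not isinstance(self.quantity, (int, float)):
            raise ValueError("quantity must be a number")
        if self.quantity <= 0:
            raise ValueError("quantity must be positive")


def parse_order(payload: dict) -> OrderRequest:
    """Build a validated request object from an untrusted payload."""
    try:
        return OrderRequest(symbol=payload["symbol"], quantity=payload["quantity"])
    except KeyError as exc:
        raise ValueError(f"missing field: {exc.args[0]}") from exc


order = parse_order({"symbol": "AAVE", "quantity": 2})
print(order)
```

With Pydantic the same constraints would be declared on the model and surfaced in the generated documentation; the hand-written checks here only illustrate where validation sits.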

Common issues include running blocking code in async endpoints, inadequate connection pooling, missing rate limiting, and insufficient observability. Ensure proper worker/process models, async drivers, and graceful shutdown handling when deploying to production.

Use FastAPI’s TestClient (based on Starlette’s testing utilities) for endpoint tests and pytest for unit and integration tests. Mock external services and use testing databases or fixtures for repeatable test runs. Also include load testing to validate performance under expected concurrency.

Yes. When combined with proper patterns—type-driven design, async-safe libraries, containerization, observability, and scalable deployment—FastAPI is well-suited for production microservices focused on I/O-bound workloads and integrations with AI or external APIs.

Disclaimer

This article is for educational and informational purposes only. It does not constitute professional, legal, or investment advice. Evaluate tools and architectures according to your organization’s requirements and consult qualified professionals when needed.


Free APIs unlock data and functionality for rapid prototyping, research, and lightweight production use. Whether you’re building an AI agent, visualizing on-chain metrics, or ingesting market snapshots, understanding how to evaluate and integrate a free API is essential to building reliable systems without hidden costs.

Not all "free" APIs are created equal. The term generally refers to services that allow access to endpoints without an upfront fee, but differences appear across rate limits, data freshness, feature scope, and licensing. A clear framework for assessment is: access model, usage limits, data latency, security, and terms of service.

Use a methodical approach to compare options. Below is a pragmatic checklist that helps prioritize trade-offs between cost and capability.

For crypto-specific datasets, platforms such as Token Metrics illustrate how integrated analytics and API endpoints can complement raw data feeds by adding model-driven signals and normalized asset metadata.

Free APIs are most effective when integrated with resilient patterns. Below are recommended practices for teams and solo developers alike.

Understanding where a free API fits in your architecture depends on the scenario. Consider three common patterns:

When working with AI agents or automated analytics, instrument data flows and label data quality explicitly. AI-driven research tools can accelerate dataset discovery and normalization, but you should always audit automated outputs and maintain provenance records.


Limits vary by provider but often include reduced daily/monthly call quotas, limited concurrency, and delayed data freshness. Review the provider’s rate-limit policy and test in your deployment region.

Yes for low-volume or non-critical paths, provided you incorporate caching, retries, and fallback logic. For mission-critical systems, evaluate paid tiers for SLAs and enhanced support.
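One way to combine caching, retries, and fallback is sketched below. The TransientError type, the fetch callable, and the cache layout of (timestamp, value) tuples are assumptions made for illustration; real providers signal retryable failures in their own ways.

```python
import time


class TransientError(Exception):
    """Hypothetical error for retryable failures (for example HTTP 429 or 5xx)."""


def fetch_with_resilience(fetch, key, cache, ttl=60.0, retries=3, base_delay=0.01):
    entry = cache.get(key)
    # Serve from cache while the entry is still fresh.
    if entry is not None and time.monotonic() - entry[0] < ttl:
        return entry[1]
    for attempt in range(retries):
        try:
            value = fetch(key)
        except TransientError:
            if attempt < retries - 1:
                time.sleep(base_delay * 2 ** attempt)  # exponential backoff
            continue
        cache[key] = (time.monotonic(), value)
        return value
    if entry is not None:
        return entry[1]  # fall back to stale data rather than failing outright
    raise TransientError(f"no data for {key!r} after {retries} attempts")


calls = {"n": 0}


def flaky_fetch(key):
    # Fails twice, then succeeds, to exercise the retry path.
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("rate limited")
    return {"symbol": key, "price": 228.16}


cache = {}
first = fetch_with_resilience(flaky_fetch, "AAVE", cache)
second = fetch_with_resilience(flaky_fetch, "AAVE", cache)  # served from cache
print(first, calls["n"])
```

The second call never reaches the provider, which is the main way caching stretches a free-tier quota.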

Store keys in environment-specific vaults, avoid client-side exposure, and rotate keys periodically. Use proxy layers to inject keys server-side when integrating client apps.

Some free APIs provide robust historical endpoints, but completeness and retention policies differ. Validate by sampling known events and comparing across providers before depending on the dataset.

AI tools can assist with data cleaning, anomaly detection, and feature extraction, making it easier to derive insight from limited free data. Always verify model outputs and maintain traceability to source calls.

Track request volume, error rates (429/5xx), latency, and data staleness metrics. Set alerts for approaching throughput caps and automate graceful fallbacks to preserve user experience.

Legal permissions depend on the provider’s terms. Some allow caching for display but prohibit redistribution or commercial resale. Always consult the API’s terms of service before storing or sharing data.

Design with decoupled ingestion, caching, and multi-source redundancy so you can swap to paid tiers or alternative providers without significant refactoring.

Yes. Combining multiple sources improves resilience and data quality, but requires normalization, reconciliation logic, and latency-aware merging rules.
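A minimal sketch of normalization and latency-aware merging follows. The quote shape (symbol, price, ts), the 30-second freshness window, and the choice of the median as the reconciliation rule are all assumptions for illustration.

```python
from statistics import median


def merge_quotes(quotes, now, max_age=30.0):
    """Merge per-provider quotes into one price per symbol.

    Quotes older than max_age seconds are dropped, symbols are normalized to
    upper case, and the median of the remaining prices is used.
    """
    by_symbol = {}
    for quote in quotes:
        if now - quote["ts"] > max_age:
            continue  # too stale to merge
        by_symbol.setdefault(quote["symbol"].upper(), []).append(quote["price"])
    return {symbol: median(prices) for symbol, prices in by_symbol.items()}


quotes = [
    {"symbol": "aave", "price": 228.0, "ts": 100.0},
    {"symbol": "AAVE", "price": 229.0, "ts": 95.0},
    {"symbol": "AAVE", "price": 300.0, "ts": 10.0},  # stale outlier, dropped
]
merged = merge_quotes(quotes, now=100.0)
print(merged)  # {'AAVE': 228.5}
```

The median is one reasonable default because a single bad provider cannot drag the result far; a weighted scheme may suit cases where sources differ in reliability.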

Disclaimer

This article is educational and informational only. It does not constitute financial, legal, or investment advice. Evaluate services and make decisions based on your own research and compliance requirements.


Modern web and mobile applications rely heavily on REST APIs to exchange data, integrate services, and enable automation. Whether you're building a microservice, connecting to a third-party data feed, or wiring AI agents to live systems, a clear understanding of REST API fundamentals helps you design robust, secure, and maintainable interfaces.

REST (Representational State Transfer) is an architectural style for distributed systems. A REST API exposes resources—often represented as JSON or XML—using URLs and standard HTTP methods. REST is not a protocol but a set of constraints that favor statelessness, resource orientation, and a uniform interface.

Key benefits include simplicity, broad client support, and easy caching, which makes REST a default choice for many public and internal APIs. Use-case examples include content delivery, telemetry ingestion, authentication services, and integrations between backend services and AI models that require data access.

Understanding core REST principles helps you map business entities to API resources and choose appropriate operations:

Adhering to these constraints makes integrations easier, especially when connecting analytics, monitoring, or AI-driven agents that rely on predictable behavior and clear failure modes.

Building a usable REST API involves choices beyond the basics. Consider these patterns and practices:

For teams building APIs that feed ML or AI pipelines, consistent schemas and semantic versioning are particularly important. They minimize downstream data drift and make model retraining and validation repeatable.

Security and operational visibility are core to production APIs:

Scaling often combines stateless application design, caching (CDNs or reverse proxies), and horizontal autoscaling behind load balancers. For APIs used by data-hungry AI agents, consider async patterns (webhooks, message queues) to decouple long-running tasks from synchronous request flows.


REST emphasizes resources and uses HTTP verbs and status codes. GraphQL exposes a flexible query language letting clients request only needed fields. REST is often simpler to cache and monitor, while GraphQL can reduce over-fetching for complex nested data. Choose based on client needs, caching, and complexity.

Common strategies include URI versioning (/v1/) and header-based versioning. Maintain backward compatibility whenever possible, provide deprecation notices, and publish migration guides. Semantic versioning of your API contract helps client teams plan upgrades.

Require TLS, use strong authentication (OAuth 2.0 or signed tokens), validate inputs, enforce rate limits, and monitor anomalous traffic. Regularly audit access controls and rotate secrets. Security posture should be part of the API lifecycle.

APIs can supply training data, feature stores, and live inference endpoints. Design predictable schemas, low-latency endpoints, and asynchronous jobs for heavy computations. Tooling and observability help detect data drift, which is critical for reliable AI systems. Platforms like Token Metrics illustrate how API-led data can support model-informed insights.

Use synchronous API calls for short, fast operations with immediate results. For long-running tasks (batch processing, complex model inference), use asynchronous patterns: accept a request, return a job ID, and provide status endpoints or webhooks to report completion.

This article is educational and technical in nature. It does not constitute investment, legal, or professional advice. Evaluate tools and architectures against your requirements and risks before deployment.


REST APIs power much of the web and modern integrations—from mobile apps to AI agents that consume structured data. Understanding the principles, common pitfalls, and operational practices that make a REST API reliable and maintainable helps teams move faster while reducing friction when integrating services.

Representational State Transfer (REST) is an architectural style for networked applications. A REST API exposes resources (users, accounts, prices, etc.) via predictable HTTP endpoints and methods (GET, POST, PUT, DELETE). Its simplicity, cacheability, and wide tooling support make REST a go-to pattern for many back-end services and third-party integrations.

Key behavioral expectations include statelessness (each request contains the information needed to process it), use of standard HTTP status codes, and a resource-oriented URI design. These conventions improve developer experience and enable robust monitoring and error handling across distributed systems.

Designing a clear resource model at the outset avoids messy ad-hoc expansions later. Consider these guidelines:

Model relationships thoughtfully: prefer nested resources for clarity (e.g., /projects/42/tasks) but avoid excessive nesting depth. A well-documented schema contract reduces integration errors and accelerates client development.

Security for REST APIs is multi-layered. Common patterns:

Also consider rate limiting, token expiry, and key rotation policies. For APIs that surface sensitive data, adopt least-privilege principles and audit logging so access patterns can be reviewed.

Latency and scalability are often where APIs meet their limits. Practical levers include:

Instrument your API with observability: structured logs, distributed traces, and metrics (latency, error rates, throughput). These signals enable data-driven tuning and prioritized fixes.

Quality APIs are well-tested and easy to adopt. Include:

Automate CI checks that validate linting, schema changes, and security scanning to maintain long-term health.

When REST APIs expose market data, on-chain metrics, or signal feeds for analytics and AI agents, additional considerations apply. Data freshness, deterministic timestamps, provenance metadata, and predictable rate limits matter for reproducible analytics. Design APIs so consumers can:

AI-driven workflows often combine multiple endpoints; consistent schemas and clear quotas simplify orchestration and reduce operational surprises. For example, Token Metrics demonstrates how structured crypto insights can be surfaced via APIs to support research and model inputs for agents.


"REST" refers to the architectural constraints defined by Roy Fielding. "RESTful" is an informal adjective describing APIs that follow REST principles—though implementations vary in how strictly they adhere to the constraints.

Use semantic intent when versioning. URL-based versions (e.g., /v1/) are explicit, while header-based or content negotiation approaches avoid URL churn. Regardless, document deprecation timelines and provide backward-compatible pathways.

REST is simple and cache-friendly for resource-centric models. GraphQL excels when clients need flexible queries across nested relationships. Consider client requirements, caching strategy, and operational complexity when choosing.

Expose limit headers, return standard status codes (e.g., 429), and provide retry-after guidance. Offer tiered quotas and clear documentation so integrators can design backoffs and fallback strategies.

OpenAPI (Swagger) for specs, Postman for interactive exploration, Pact for contract testing, and CI-integrated schema validators are common choices. Combine these with monitoring and API gateways for observability and enforcement.

This article is for educational and technical reference only. It is not financial, legal, or investment advice. Always evaluate tools and services against your own technical requirements and compliance obligations before integrating them into production systems.


REST APIs power most modern web and mobile back ends by providing a uniform, scalable way to exchange data over HTTP. Whether you are building microservices, connecting AI agents, or integrating third‑party feeds, understanding the architectural principles, design patterns, and operational tradeoffs of REST can help you build reliable systems. This article breaks down core concepts, design best practices, security measures, and practical steps to integrate REST APIs with analytics and AI workflows.

REST (Representational State Transfer) is an architectural style for distributed systems. It emphasizes stateless interactions, resource-based URIs, and the use of standard HTTP verbs (GET, POST, PUT, DELETE, PATCH). Key constraints include:

When designing APIs, aim for clear resource models, intuitive endpoint naming, and consistent payload shapes. Consider versioning strategies (URL vs header) from day one to avoid breaking clients as your API evolves.

Good API design balances usability, performance, and maintainability. Adopt these common patterns:

Document endpoints with examples and schemas (OpenAPI/Swagger). Automated documentation and SDK generation reduce integration friction and lower client-side errors.

Security and operational resilience are core concerns for production APIs. Consider the following layers:

For scale, design for statelessness so instances are replaceable, use caching (HTTP cache headers, CDN, or edge caches), and partition data to reduce contention. Use circuit breakers and graceful degradation to maintain partial service during downstream failures.

REST APIs are frequently used to feed AI models, aggregate on‑chain data, and connect analytics pipelines. Best practices for these integrations include:

To accelerate research workflows and reduce time-to-insight, many teams combine REST APIs with AI-driven analytics. For example, external platforms can provide curated market and on‑chain data through RESTful endpoints that feed model training or signal generation. One such option for consolidated crypto data access is Token Metrics, which can be used as part of an analysis pipeline to augment internal data sources.


REST is an architectural style defined by constraints; "RESTful" describes services that adhere to those principles. In practice, many APIs are called RESTful even if they relax some constraints, such as strict HATEOAS.

Version early when breaking changes are likely. Common approaches are path versioning (/v1/) or header-based versioning. Path versioning is simpler for clients, while headers keep URLs cleaner. Maintain compatibility guarantees in your documentation.

REST is straightforward for resource-centric designs and benefits from HTTP caching and simple tooling. GraphQL excels when clients need flexible queries and to reduce over-fetching. Choose based on client needs, caching requirements, and team expertise.

Use token bucket or fixed-window counters, and apply limits per API key, IP, or user. Provide rate limit headers and meaningful status codes (429 Too Many Requests) to help clients implement backoff and retry strategies.
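As a rough sketch of the fixed-window option, the class below counts requests per API key and returns a 429 with rate limit headers once the quota is used up. The `X-RateLimit-*` header names are a common convention rather than a formal standard, and reporting the reset as "seconds until the window ends" is an assumption of this example; the class name and in-memory storage are hypothetical.

```python
import math
import time


class FixedWindowLimiter:
    """Fixed-window request counter per API key (illustrative, in-memory only)."""

    def __init__(self, limit, window_seconds, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.counters = {}  # api_key -> (window_start, request_count)

    def check(self, api_key):
        """Return (status_code, headers) for one incoming request."""
        now = self.clock()
        window_start = now - (now % self.window)
        start, count = self.counters.get(api_key, (window_start, 0))
        if start != window_start:
            # The previous window has ended, so the counter starts over.
            start, count = window_start, 0
        reset_in = max(0, math.ceil(start + self.window - now))
        headers = {
            "X-RateLimit-Limit": str(self.limit),
            "X-RateLimit-Reset": str(reset_in),
        }
        if count >= self.limit:
            headers["X-RateLimit-Remaining"] = "0"
            headers["Retry-After"] = str(reset_in)
            return 429, headers
        count += 1
        self.counters[api_key] = (start, count)
        headers["X-RateLimit-Remaining"] = str(self.limit - count)
        return 200, headers
```

The injectable `clock` exists only to make the behavior easy to test. In a multi-instance deployment the counters would need to live in shared storage rather than in process memory.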

Combine unit and integration tests with contract tests (OpenAPI-driven). For monitoring, collect metrics (latency, error rates), traces, and structured logs. Synthetic checks and alerting on SLA breaches help detect degradations early.

Use OpenAPI/Swagger to provide machine-readable schemas and auto-generate interactive docs. Include examples, authentication instructions, and clear error code tables. Keep docs in version control alongside code.

Disclaimer

This article is educational and informational only. It does not constitute financial, investment, legal, or professional advice. Evaluate tools and services independently and consult appropriate professionals for specific needs.


REST APIs power much of the modern web, mobile apps, and integrations between services. Whether you are building a backend for a product, connecting to external data sources, or composing AI agents that call external endpoints, understanding REST API fundamentals helps you design reliable, maintainable, and performant systems.

Representational State Transfer (REST) is an architectural style that uses simple HTTP verbs to operate on resources identified by URLs. A REST API exposes these resources over HTTP so clients can create, read, update, and delete state in a predictable way. Key benefits include:

REST is not a strict protocol; it is a set of constraints that make APIs easier to consume and maintain. Understanding these constraints enables clearer contracts between services and smoother integration with libraries, SDKs, and API gateways.

Designing a RESTful API starts with resources and consistent use of HTTP semantics. Typical patterns include:

Rely on idempotency to make retries safe: GET, PUT, and DELETE are idempotent by definition, so repeating them should not cause unintended side effects. POST is generally not idempotent unless the client supplies an idempotency key.
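One way an idempotency key can work is shown below. This is a sketch under assumptions: `OrderService`, its in-memory dictionaries, and the choice to answer a repeated key with status 200 and the original order are illustrative, not a description of any particular API.

```python
import uuid


class OrderService:
    """Hypothetical handler that makes POST retries safe with an idempotency key."""

    def __init__(self):
        self.orders = {}
        self.seen_keys = {}  # idempotency key -> ID of the order created for it

    def create_order(self, payload, idempotency_key=None):
        # A repeated key returns the original order instead of creating another.
        if idempotency_key is not None and idempotency_key in self.seen_keys:
            order_id = self.seen_keys[idempotency_key]
            return 200, self.orders[order_id]
        order_id = str(uuid.uuid4())
        self.orders[order_id] = {"id": order_id, **payload}
        if idempotency_key is not None:
            self.seen_keys[idempotency_key] = order_id
        return 201, self.orders[order_id]
```

A client that times out can resend the same request with the same key and receive the order that was already created, rather than placing a duplicate.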

As APIs grow, practical patterns help keep them efficient and stable:

Security and reliability are essential for production APIs. Consider these practices:

These controls reduce downtime and make integration predictable for client teams and third-party developers.

Good testing and clear docs accelerate adoption and reduce bugs:

These measures improve developer productivity and reduce the risk of downstream failures when APIs evolve.


REST is the architectural style; RESTful typically describes APIs that follow REST constraints such as statelessness, resource orientation, and use of HTTP verbs. In practice the terms are often used interchangeably.

PUT generally replaces a full resource and is idempotent; PATCH applies partial changes and may not be idempotent unless designed to be. Choose based on whether clients send full or partial resource representations.

URL versioning (/v1/) is simple and visible to clients, while header versioning is cleaner from a URL standpoint but harder for users to discover. Pick a strategy with a clear migration and deprecation plan.

Typical causes include unoptimized database queries, chatty endpoints that require many requests, lack of caching, and large payloads. Use profiling, caching, and pagination to mitigate these issues.

AI agents often orchestrate multiple data sources and services via REST APIs. Well-documented, authenticated, and idempotent endpoints make it safer for agents to request data, trigger workflows, and integrate model outputs into applications.

OpenAPI/Swagger, Postman, Redoc, and API gateways (e.g., Kong, Apigee) are common. They help standardize schemas, run automated tests, and generate SDKs for multiple languages.

Disclaimer

This article is educational and informational only. It does not constitute professional advice. Evaluate technical choices and platforms based on your project requirements and security needs.


REST APIs are the connective tissue of modern software: from mobile apps to cloud services, they standardize how systems share data. This guide breaks down practical design patterns, security considerations, performance tuning, and testing strategies to help engineers build reliable, maintainable RESTful services.

Good REST API design balances consistency, discoverability, and simplicity. Start with clear resource modeling — treat nouns as endpoints (e.g., /users, /orders) and use HTTP methods semantically: GET for retrieval, POST for creation, PUT/PATCH for updates, and DELETE for removals. Design predictable URIs, favor plural resource names, and use nested resources sparingly when relationships matter.

Other patterns to consider:

Security is foundational. Choose an authentication model that matches your use case: token-based (OAuth 2.0, JWT) is common for user-facing APIs, while mutual TLS or API keys may suit machine-to-machine communication. Regardless of choice, follow these practices:

Designate clear error codes and messages that avoid leaking sensitive information. Security reviews and threat modeling are essential parts of API lifecycle management.

Performance and scalability decisions often shape architecture. Key levers include caching, pagination, and efficient data modeling:

Leverage observability: instrument APIs with metrics (latency, error rates, throughput), distributed tracing, and structured logs. These signals help locate bottlenecks and inform capacity planning. In distributed deployments, design for graceful degradation and retries with exponential backoff to improve resilience.

Robust testing and tooling accelerate safe iteration. Adopt automated tests at multiple levels: unit tests for handlers, integration tests against staging environments, and contract tests to ensure backward compatibility. Use API mocking to validate client behavior early in development.

Versioning strategy matters: embed version in the URL (e.g., /v1/users) or the Accept header. Aim for backwards-compatible changes when possible; when breaking changes are unavoidable, document migration paths.

AI-enhanced tools can assist with schema discovery, test generation, and traffic analysis. For example, Token Metrics and similar platforms illustrate how analytics and automated signals can surface usage patterns and anomalies in request volumes — useful inputs when tuning rate limits or prioritizing endpoints for optimization.


A REST API (Representational State Transfer) is an architectural style for networked applications that uses stateless HTTP requests to manipulate resources represented by URLs and standard methods.

Secure your API by enforcing HTTPS, using robust authentication (OAuth 2.0, short-lived tokens), validating inputs, applying rate limits, and monitoring access logs for anomalies.

Use POST to create resources, PUT to replace a resource entirely, and PATCH to apply partial updates. Choose semantics that align with client expectations and document them clearly.

Common approaches include URL versioning (/v1/...), header versioning (Accept header), or content negotiation. Prefer backward-compatible changes; when breaking changes are required, communicate deprecation timelines.

Return appropriate HTTP status codes, provide consistent error bodies with machine-readable codes and human-readable messages, and avoid exposing sensitive internals. Include correlation IDs to aid debugging.

Use synthetic monitoring, real-user metrics, health checks, distributed tracing, and automated alerting. Combine unit/integration tests with contract tests and post-deployment smoke checks.

Disclaimer

This article is educational and technical in nature. It does not provide financial, legal, or investment advice. Implementation choices depend on your specific context; consult qualified professionals for regulatory or security-sensitive decisions.


The modern digital landscape thrives on interconnected systems that communicate seamlessly across platforms, applications, and services. At the heart of this connectivity lies REST API architecture, a powerful yet elegant approach to building web services that has become the industry standard for everything from social media platforms to cryptocurrency exchanges. Understanding REST APIs is no longer optional for developers but essential for anyone building or integrating with web applications, particularly in rapidly evolving sectors like blockchain technology and digital asset management.

REST, an acronym for Representational State Transfer, represents an architectural style rather than a rigid protocol, giving developers flexibility while maintaining consistent principles. The architecture was introduced by Roy Fielding in his doctoral dissertation, establishing guidelines that have shaped how modern web services communicate. At its essence, REST API architecture treats everything as a resource that can be uniquely identified and manipulated through standard operations, creating an intuitive framework that mirrors how we naturally think about data and operations.

The architectural constraints of REST create systems that are scalable, maintainable, and performant. The client-server separation ensures that user interface concerns remain distinct from data storage concerns, allowing both to evolve independently. This separation proves particularly valuable in cryptocurrency applications where frontend trading interfaces need to iterate rapidly based on user feedback while backend systems handling blockchain data require stability and reliability. Token Metrics leverages this architectural principle in its crypto API design, providing developers with consistent access to sophisticated cryptocurrency analytics while continuously improving the underlying data processing infrastructure.

The stateless constraint requires that each request from client to server contains all information necessary to understand and process the request. The server maintains no client context between requests, instead relying on clients to include authentication credentials, resource identifiers, and operation parameters with every API call. This statelessness enables horizontal scaling, where additional servers can be added to handle increased load without complex session synchronization. For cryptocurrency APIs serving global markets with thousands of concurrent users querying market data, this architectural decision becomes critical to maintaining performance and availability.

Cacheability forms another foundational constraint in REST architecture, requiring that responses explicitly indicate whether they can be cached. This constraint improves performance and scalability by reducing the number of client-server interactions needed. In crypto APIs, distinguishing between frequently changing data like real-time cryptocurrency prices and relatively stable data like historical trading volumes enables intelligent caching strategies that balance freshness with performance. Token Metrics implements sophisticated caching mechanisms throughout its cryptocurrency API infrastructure, ensuring that developers receive rapid responses while maintaining data accuracy for time-sensitive trading decisions.
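In HTTP, a response usually declares its cacheability through the `Cache-Control` header. The mapping below is a small sketch of the idea of treating volatile and stable data differently; the data kinds and the specific `max-age` values are assumptions chosen for illustration, not recommended settings.

```python
# Hypothetical cache policies: short lifetime for volatile data, long for stable data.
CACHE_POLICIES = {
    "realtime_price": "public, max-age=5",
    "historical_volume": "public, max-age=86400",
}


def cache_headers(data_kind):
    """Return response headers stating how long this kind of data may be cached."""
    # Unknown kinds default to "no-store" so nothing is cached by accident.
    return {"Cache-Control": CACHE_POLICIES.get(data_kind, "no-store")}
```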

Understanding HTTP methods represents the cornerstone of effective REST API usage, as these verbs define the operations that clients can perform on resources. The GET method retrieves resource representations without modifying server state, making it safe and idempotent. In cryptocurrency APIs, GET requests fetch market data, retrieve token analytics, query blockchain transactions, or access portfolio information. Because GET is idempotent, repeating an identical request leaves server state unchanged (even though the returned data, such as a live price, may differ between calls), which allows safe retries and caching without unintended side effects.

The POST method creates new resources on the server, typically returning the newly created resource's location and details in the response. When building crypto trading applications, POST requests might submit new orders, create alerts, or register webhooks for market notifications. Unlike GET, POST requests are neither safe nor idempotent, meaning multiple identical POST requests could create multiple resources. Understanding this distinction helps developers implement appropriate error handling and confirmation workflows in their cryptocurrency applications.

PUT requests update existing resources by replacing them entirely with the provided representation. The idempotent nature of PUT ensures that repeating the same update request produces the same final state, regardless of how many times it executes. In blockchain APIs, PUT might update user preferences, modify trading strategy parameters, or adjust portfolio allocations. The complete replacement semantics of PUT require clients to provide all resource fields, even if only updating a subset of values, distinguishing it from PATCH operations.

The PATCH method provides partial updates to resources, modifying only specified fields while leaving others unchanged. This granular control proves valuable when updating complex resources where clients want to modify specific attributes without retrieving and replacing entire resource representations. For cryptocurrency portfolio management APIs, PATCH enables updating individual asset allocations or adjusting specific trading parameters without affecting other settings. DELETE removes resources from the server, completing the standard CRUD operations that map naturally to database operations and resource lifecycle management.
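The difference between replacement and partial update is easy to see on a plain dictionary. The sketch below assumes a hypothetical in-memory `store` keyed by resource ID; the function names are illustrative.

```python
def put_resource(store, resource_id, representation):
    """PUT: replace the stored resource entirely with the given representation."""
    store[resource_id] = dict(representation)
    return store[resource_id]


def patch_resource(store, resource_id, changes):
    """PATCH: update only the supplied fields and leave the rest unchanged."""
    store[resource_id].update(changes)
    return store[resource_id]
```

With a stored portfolio of `{"name": "core", "btc": 0.6, "eth": 0.4}`, patching `{"btc": 0.5}` keeps `name` and `eth`, while putting `{"name": "core", "btc": 0.7}` drops `eth` because the client did not include it.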

Security in REST API design begins with authentication, the process of verifying user identity before granting access to protected resources. Multiple authentication mechanisms exist for REST APIs, each with distinct characteristics and use cases. Basic authentication transmits credentials with each request; it is simple to implement but requires HTTPS to prevent credential exposure. Token-based authentication using JSON Web Tokens has emerged as the preferred approach for modern APIs, providing secure, stateless authentication that scales effectively across distributed systems.

OAuth 2.0 provides a comprehensive authorization framework particularly suited for scenarios where third-party applications need limited access to user resources without receiving actual credentials. In the cryptocurrency ecosystem, OAuth enables portfolio tracking apps to access user holdings across multiple exchanges, trading bots to execute strategies without accessing withdrawal capabilities, and analytics platforms to retrieve transaction history while maintaining security. Token Metrics implements robust OAuth 2.0 support in its crypto API, allowing developers to build sophisticated applications that leverage Token Metrics intelligence while maintaining strict security boundaries.

API key authentication offers a straightforward mechanism for identifying and authorizing API clients, particularly appropriate for server-to-server communications where user context is less relevant. Generating unique API keys for each client application enables granular access control and usage tracking. For cryptocurrency APIs, combining API keys with IP whitelisting provides additional security layers, ensuring that even if keys are compromised, they cannot be used from unauthorized locations. Proper API key rotation policies and secure storage practices prevent keys from becoming long-term security liabilities.
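A minimal sketch of combining an API key check with an IP allowlist might look like the following. The `CLIENTS` registry, the client ID, and the example key are hypothetical; the sketch stores only a hash of each key and compares digests in constant time with `hmac.compare_digest`.

```python
import hashlib
import hmac
import ipaddress


def _digest(api_key):
    """Hash a key so the plain value never has to be stored server-side."""
    return hashlib.sha256(api_key.encode()).hexdigest()


# Hypothetical client registry: key hashes plus the networks each client may call from.
CLIENTS = {
    "bot-1": {
        "key_hash": _digest("example-secret-key"),
        "networks": [ipaddress.ip_network("203.0.113.0/24")],
    }
}


def authorize(client_id, api_key, remote_ip):
    """Allow the request only if the key matches and the caller's IP is allowlisted."""
    client = CLIENTS.get(client_id)
    if client is None:
        return False
    # Constant-time comparison avoids leaking information through timing.
    key_ok = hmac.compare_digest(client["key_hash"], _digest(api_key))
    address = ipaddress.ip_address(remote_ip)
    ip_ok = any(address in network for network in client["networks"])
    return key_ok and ip_ok
```

Rotating a key in this scheme means replacing the stored hash, which invalidates the old key immediately.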

Transport layer security through HTTPS encryption protects data in transit, preventing man-in-the-middle attacks and eavesdropping. This protection becomes non-negotiable for cryptocurrency APIs where intercepted requests could expose trading strategies, portfolio holdings, or authentication credentials. Beyond transport encryption, sensitive data stored in databases or cached in memory requires encryption at rest, ensuring comprehensive protection throughout the data lifecycle. Token Metrics employs end-to-end encryption across its crypto API infrastructure, protecting proprietary algorithms, user data, and sensitive market intelligence from unauthorized access.

Versioning enables REST APIs to evolve without breaking existing client integrations, a critical capability for long-lived APIs supporting diverse client applications. URI versioning embeds the version number directly in the endpoint path, creating explicit, easily discoverable version indicators. A cryptocurrency API might expose endpoints like /api/v1/cryptocurrencies/bitcoin/price and /api/v2/cryptocurrencies/bitcoin/price, allowing old and new clients to coexist peacefully. This approach provides maximum clarity and simplicity, making it the most widely adopted versioning strategy.

Header-based versioning places version information in HTTP headers rather than URIs, keeping endpoint paths clean and emphasizing that different versions represent the same conceptual resource. Clients specify their desired API version through a custom header like API-Version: 2 or a vendor media type such as Accept: application/vnd.tokenmetrics.v2+json. While this approach maintains cleaner URLs, it makes API versions less discoverable and complicates testing since headers are less visible than path components. For cryptocurrency APIs where trading bots and automated systems consume endpoints programmatically, the clarity of URI versioning often outweighs the aesthetic benefits of header-based approaches.
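On the server side, resolving the version from these headers could be sketched as below. The function name, the default version, and the assumption that `headers` is a plain dictionary with canonical key casing are all illustrative; real frameworks usually expose case-insensitive header lookups.

```python
import re

# Matches vendor media types such as application/vnd.tokenmetrics.v2+json.
_VENDOR_TYPE = re.compile(r"application/vnd\.[\w.-]+\.v(\d+)\+json")


def resolve_version(headers, default=1):
    """Pick the API version from API-Version, then from Accept, else the default."""
    explicit = headers.get("API-Version")
    if explicit is not None:
        return int(explicit)
    match = _VENDOR_TYPE.search(headers.get("Accept", ""))
    return int(match.group(1)) if match else default
```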

Content negotiation through Accept headers allows clients to request specific response formats or versions, leveraging HTTP's built-in content negotiation mechanisms. This approach treats different API versions as different representations of the same resource, aligning well with REST principles. However, implementation complexity and reduced discoverability have limited its adoption compared to URI versioning. Token Metrics maintains clear versioning in its cryptocurrency API, ensuring that developers can rely on stable endpoints while the platform continues evolving with new features, data sources, and analytical capabilities.

Deprecation policies govern how long old API versions remain supported and what notice clients receive before version retirement. Responsible API providers announce deprecations well in advance, provide migration guides, and maintain overlapping version support during transition periods. For crypto APIs where trading systems might run unattended for extended periods, generous deprecation timelines prevent unexpected failures that could result in missed trading opportunities or financial losses. Clear communication channels for version updates and deprecation notices help developers plan migrations and maintain system reliability.

Well-designed REST API requests and responses create intuitive interfaces that developers can understand and use effectively. Request design begins with meaningful URI structures that use nouns to represent resources and HTTP methods to indicate operations. Rather than encoding actions in URIs like /api/getCryptocurrencyPrice, REST APIs prefer resource-oriented URIs like /api/cryptocurrencies/bitcoin/price where the HTTP method conveys intent. This convention creates self-documenting APIs that follow predictable patterns across all endpoints.

Query parameters enable filtering, sorting, pagination, and field selection, allowing clients to request exactly the data they need. A cryptocurrency market data API might support queries like /api/cryptocurrencies?marketcap_min=1000000000&sort=volume_desc&limit=50 to retrieve the top 50 cryptocurrencies by trading volume with market capitalizations above one billion. Supporting flexible query parameters reduces the number of specialized endpoints needed while giving clients fine-grained control over responses. Token Metrics provides extensive query capabilities in its crypto API, enabling developers to filter and sort through comprehensive cryptocurrency data to find exactly the insights they need.
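To make the example query concrete, here is a sketch of how a handler might interpret `marketcap_min`, `sort`, and `limit`. The row fields (`marketcap`, `volume`), the `field_direction` sort format, and the defaults are assumptions inferred from the example URL, not a documented API contract.

```python
from urllib.parse import parse_qs, urlsplit

SORTABLE_FIELDS = {"marketcap", "volume"}  # hypothetical allowlist of sort keys


def query_cryptocurrencies(url, rows):
    """Filter, sort, and truncate rows according to the URL's query parameters."""
    params = parse_qs(urlsplit(url).query)
    min_cap = int(params.get("marketcap_min", ["0"])[0])
    sort = params.get("sort", ["volume_desc"])[0]
    limit = int(params.get("limit", ["50"])[0])

    # "volume_desc" splits into the field "volume" and the direction "desc".
    field, _, direction = sort.rpartition("_")
    if field not in SORTABLE_FIELDS:
        raise ValueError(f"unsupported sort parameter: {sort}")

    selected = [row for row in rows if row["marketcap"] >= min_cap]
    selected.sort(key=lambda row: row[field], reverse=(direction == "desc"))
    return selected[:limit]
```

Restricting `sort` to an allowlist keeps clients from sorting on fields the backend cannot serve efficiently.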

Response design focuses on providing consistent, well-structured data that clients can parse reliably. JSON has become the de facto standard for REST API responses, offering a balance of human readability and machine parsability. Consistent property naming conventions, typically camelCase or snake_case used uniformly across all endpoints, eliminate confusion and reduce integration errors. Including metadata like pagination information, request timestamps, and data freshness indicators helps clients understand and properly utilize responses.

HTTP status codes communicate request outcomes, with the first digit indicating the general category of response. Success responses in the 200 range include 200 for successful requests, 201 for successful resource creation, and 204 for successful operations returning no content. Client error responses in the 400 range signal problems with the request, including 400 for malformed requests, 401 for authentication failures, 403 for authorization denials, 404 for missing resources, and 429 for rate limit violations. Server error responses in the 500 range indicate problems on the server side. Proper use of status codes enables intelligent error handling in client applications.
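A client can act on these categories with very little code. The sketch below uses the standard library's `http.HTTPStatus` for the reason phrases; the category labels and the helper names are assumptions of this example.

```python
from http import HTTPStatus


def classify(status_code):
    """Map a status code to its general category based on the first digit."""
    if 200 <= status_code <= 299:
        return "success"
    if 400 <= status_code <= 499:
        return "client_error"
    if 500 <= status_code <= 599:
        return "server_error"
    return "other"


def describe(status_code):
    """Build a short log line; HTTPStatus raises ValueError for unregistered codes."""
    return f"{status_code} {HTTPStatus(status_code).phrase} ({classify(status_code)})"
```

For example, `describe(429)` yields `429 Too Many Requests (client_error)`, which is enough for a client to decide that it should slow down rather than change the request.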

Rate limiting protects REST APIs from abuse and ensures equitable resource distribution among all consumers. Implementing rate limits prevents individual clients from monopolizing server resources, maintains consistent performance for all users, and protects against denial-of-service attacks. For cryptocurrency APIs where market volatility can trigger massive traffic spikes, rate limiting prevents system overload while maintaining service availability. Different rate limiting strategies address different scenarios and requirements.

Fixed window rate limiting counts requests within discrete time windows, resetting counters at window boundaries. This straightforward approach makes it easy to communicate limits like "1000 requests per hour" but can allow burst traffic at window boundaries. Sliding window rate limiting provides smoother traffic distribution by considering rolling time periods, though with increased implementation complexity. Token bucket algorithms offer the most flexible approach, allowing burst capacity while maintaining average rate constraints over time.
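The token bucket idea is compact enough to sketch directly. In this illustration the bucket refills at `rate` tokens per second up to `capacity`, so short bursts are allowed while the long-run average stays bounded. The class name and parameters are hypothetical, and the sketch is single-process and not thread-safe.

```python
import time


class TokenBucket:
    """Token bucket: bursts up to `capacity`, average rate of `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.updated = clock()

    def allow(self, cost=1):
        """Refill for the time elapsed, then spend `cost` tokens if available."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A bucket with `rate=1` and `capacity=2` accepts two requests back to back, rejects a third, and accepts one more after a second has passed.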

Tiered rate limits align with different user segments and use cases, offering higher limits to paying customers or trusted partners while maintaining lower limits for anonymous or free-tier users. Token Metrics implements sophisticated tiered rate limiting across its cryptocurrency API plans, balancing accessibility for developers exploring the platform with the need to maintain system performance and reliability. Developer tiers might support hundreds of requests per minute for prototyping, while enterprise plans provide substantially higher limits suitable for production trading systems.

Rate limit communication through response headers keeps clients informed about their consumption and remaining quota. Standard headers like X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset provide transparent visibility into rate limit status, enabling clients to throttle their requests proactively. For crypto trading applications making time-sensitive market data requests, understanding rate limit status prevents throttling during critical market moments and enables intelligent request scheduling.

Comprehensive error handling distinguishes professional REST APIs from amateur implementations, particularly in cryptocurrency applications where clear diagnostics enable rapid issue resolution. Error responses should provide multiple layers of information including HTTP status codes for machine processing, error codes for specific error identification, human-readable messages for developer understanding, and actionable guidance for resolution. Structured error responses following consistent formats enable clients to implement robust error handling logic.
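The four layers described above (status code, error code, message, guidance) can be carried in one consistent JSON body. The field names and the example error code below are assumptions for illustration; what matters is that every endpoint uses the same shape.

```python
import json


def error_response(status, code, message, hint):
    """Build a structured error: HTTP status, machine code, message, and guidance."""
    body = {
        "error": {
            "status": status,    # HTTP status code for machine processing
            "code": code,        # stable identifier clients can branch on
            "message": message,  # human-readable explanation for developers
            "hint": hint,        # actionable guidance for resolving the problem
        }
    }
    return status, {"Content-Type": "application/json"}, json.dumps(body)
```

A call such as `error_response(404, "UNKNOWN_SYMBOL", "No cryptocurrency with symbol 'XYZ'.", "Check the symbol against the list endpoint.")` gives clients both something to log and something to branch on.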

Client errors in the 400 range typically indicate problems the client can fix by modifying their request. Detailed error messages should specify which parameters are invalid, what constraints were violated, and how to construct valid requests. For cryptocurrency APIs, distinguishing between unknown cryptocurrency symbols, invalid date ranges, malformed addresses, and insufficient permissions enables clients to implement appropriate error recovery strategies. Token Metrics provides detailed error responses throughout its crypto API, helping developers quickly identify and resolve integration issues.

Server errors require different handling since clients cannot directly resolve the underlying problems. Implementing retry logic with exponential backoff helps handle transient failures without overwhelming recovering systems. Circuit breaker patterns prevent cascading failures by temporarily suspending requests to failing dependencies, allowing them time to recover. For blockchain APIs aggregating data from multiple sources, implementing fallback mechanisms ensures partial functionality continues even when individual data sources experience disruptions.
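Retry logic with exponential backoff might look like the sketch below. `TransientError` is a hypothetical exception standing in for whatever a client raises on a 5xx response or timeout, and the default delays are illustrative. The `sleep` and `jitter` parameters are injectable so the behavior can be tested without waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (5xx responses, timeouts)."""


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[], float] = random.random,
) -> T:
    """Call `operation`, doubling the wait after each transient failure."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Scale the delay by a random factor between 0.5 and 1.0 so that
            # many clients recovering at once do not retry in lockstep.
            sleep(delay * (0.5 + jitter() / 2))
    raise ValueError("max_attempts must be at least 1")
```

Only errors that are plausibly transient should be retried; retrying a 400-range error would repeat the same invalid request.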

Validation occurs at multiple levels, from basic format validation of request parameters to business logic validation of operation feasibility. Early validation provides faster feedback and prevents unnecessary processing of invalid requests. For crypto trading APIs, validation might check that order quantities exceed minimum trade sizes, trading pairs are valid and actively traded, and users have sufficient balances before attempting trade execution. Comprehensive validation reduces error rates and improves user experience.
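The layering of format checks before business-rule checks can be sketched as follows. The `OrderRequest` fields, the pair naming, and the minimum trade sizes are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class OrderRequest:
    pair: str        # e.g. "BTC-USD" (hypothetical naming)
    quantity: float  # amount of the base asset to buy
    price: float     # limit price in the quote asset


def validate_order(
    order: OrderRequest,
    active_pairs: Set[str],
    min_sizes: Dict[str, float],
    quote_balance: float,
) -> List[str]:
    """Return a list of validation errors; an empty list means the order is acceptable."""
    # Format-level checks first: they are cheap and need no lookups.
    if order.quantity <= 0 or order.price <= 0:
        return ["quantity and price must be positive"]
    if order.pair not in active_pairs:
        return [f"unknown or inactive trading pair: {order.pair}"]
    # Business-rule checks: report all that apply so the client can fix them together.
    errors: List[str] = []
    minimum = min_sizes.get(order.pair, 0.0)
    if order.quantity < minimum:
        errors.append(f"quantity {order.quantity} is below the minimum trade size {minimum}")
    if order.quantity * order.price > quote_balance:
        errors.append("insufficient balance for this order")
    return errors
```

Returning every applicable business-rule error in one response saves the client from fixing issues one round trip at a time.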

Performance optimization begins with database query efficiency, as database operations typically dominate API response times. Proper indexing strategies ensure that queries retrieving cryptocurrency market data, token analytics, or blockchain transactions execute quickly even as data volumes grow. Connection pooling prevents the overhead of establishing new database connections for each request, particularly important for high-traffic crypto APIs serving thousands of concurrent users.

Caching strategies dramatically improve performance by storing computed results or frequently accessed data in fast-access memory. Distinguishing between different cache invalidation requirements enables optimized caching policies. Cryptocurrency price data might cache for seconds due to rapid changes, while historical data can cache for hours or days. Token Metrics implements multi-level caching throughout its crypto API infrastructure, including application-level caching, database query result caching, and CDN caching for globally distributed access.
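A minimal in-memory sketch shows how different time-to-live values express different invalidation requirements. The class, the key names, and the TTL values are illustrative assumptions; production systems would more likely use a shared cache such as Redis or a CDN.

```python
from typing import Any, Dict, Optional, Tuple


class TTLCache:
    """In-memory cache where each entry carries its own time-to-live."""

    def __init__(self) -> None:
        self._entries: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)

    def set(self, key: str, value: Any, ttl: float, now: float) -> None:
        self._entries[key] = (now + ttl, value)

    def get(self, key: str, now: float) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if now >= expires_at:
            del self._entries[key]  # expired: evict and report a miss
            return None
        return value


cache = TTLCache()
cache.set("price:BTC", 64000.0, ttl=5, now=0.0)                # fast-moving data: seconds
cache.set("history:BTC:2020", [7200.0, 29000.0], ttl=3600, now=0.0)  # historical data: an hour
```

On a miss, the caller recomputes or refetches the value and stores it again with the TTL appropriate to that kind of data.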

Pagination prevents overwhelming clients and servers with massive response payloads. Cursor-based pagination provides consistent results even as underlying data changes, which matters for cryptocurrency market data where new transactions and price updates arrive constantly. Limit-offset pagination is simpler to implement but can skip or repeat items across pages if rows are inserted or deleted while a client is paging. Supporting configurable page sizes lets clients trade off the number of requests against response size based on their specific needs.
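The cursor approach can be sketched over an in-memory list. This example assumes each row has a unique, increasing `id` and encodes the last seen id as an opaque cursor; the function names and response shape are hypothetical. A database-backed version would express the same idea as a `WHERE id > ?` query with a limit.

```python
import base64
import json
from typing import Any, Dict, List, Optional


def encode_cursor(last_id: int) -> str:
    """Wrap the last seen id in an opaque, URL-safe token."""
    return base64.urlsafe_b64encode(json.dumps({"after": last_id}).encode()).decode()


def decode_cursor(cursor: str) -> int:
    return json.loads(base64.urlsafe_b64decode(cursor.encode()))["after"]


def paginate(rows: List[Dict[str, Any]], limit: int, cursor: Optional[str] = None) -> Dict[str, Any]:
    """Return up to `limit` rows with id greater than the cursor position."""
    after = decode_cursor(cursor) if cursor else None
    ordered = sorted(rows, key=lambda row: row["id"])
    candidates = [row for row in ordered if after is None or row["id"] > after]
    page = candidates[:limit]
    # Only hand out a cursor when more rows remain beyond this page.
    next_cursor = encode_cursor(page[-1]["id"]) if len(candidates) > limit else None
    return {"data": page, "next_cursor": next_cursor}
```

Because the cursor records a position in the data rather than a row count, rows added between requests do not shift the contents of later pages.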

Asynchronous processing offloads time-consuming operations from request-response cycles, improving API responsiveness. For complex cryptocurrency analytics that might require minutes to compute, accepting requests and returning job identifiers enables clients to poll for results or receive webhook notifications upon completion. This pattern allows APIs to acknowledge requests immediately while processing continues in the background, preventing timeout failures and improving perceived performance.
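The accept-then-poll pattern can be sketched with a small in-memory job store. Everything here is an illustrative assumption: a real deployment would use a task queue and worker processes, and `run_pending` stands in for those workers so the example stays self-contained.

```python
import uuid
from typing import Any, Callable, Dict


class JobQueue:
    """Accept work immediately, run it later, and let clients poll by job id."""

    def __init__(self) -> None:
        self._jobs: Dict[str, Dict[str, Any]] = {}

    def submit(self, func: Callable[..., Any], *args: Any) -> str:
        """Register the job and return its identifier without running it."""
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = {"status": "pending", "func": func, "args": args, "result": None}
        return job_id

    def run_pending(self) -> None:
        """Stand-in for background workers: execute every pending job."""
        for job in self._jobs.values():
            if job["status"] != "pending":
                continue
            try:
                job["result"] = job["func"](*job["args"])
                job["status"] = "done"
            except Exception as exc:  # record the failure instead of crashing the worker
                job["result"] = str(exc)
                job["status"] = "failed"

    def status(self, job_id: str) -> Dict[str, Any]:
        """What a polling endpoint would return for this job."""
        job = self._jobs[job_id]
        return {"job_id": job_id, "status": job["status"], "result": job["result"]}
```

An API built this way would typically answer the initial request with 202 Accepted and the job identifier, then expose the `status` view through a separate endpoint.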

Testing REST APIs requires comprehensive strategies covering functionality, performance, security, and reliability. Unit tests validate individual endpoint behaviors, ensuring request parsing, business logic, and response formatting work correctly in isolation. For cryptocurrency APIs, unit tests verify that price calculations, technical indicator computations, and trading signal generation functions correctly across various market conditions and edge cases.
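As a small example of unit testing an indicator computation in isolation, the sketch below tests a simple moving average with Python's built-in `unittest` module. The function is a hypothetical stand-in for whatever indicator logic an API exposes.

```python
import unittest
from typing import List


def simple_moving_average(prices: List[float], window: int) -> List[float]:
    """Average of each run of `window` consecutive prices."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [sum(prices[i - window:i]) / window for i in range(window, len(prices) + 1)]


class SimpleMovingAverageTests(unittest.TestCase):
    def test_typical_series(self) -> None:
        self.assertEqual(simple_moving_average([1.0, 2.0, 3.0, 4.0], 2), [1.5, 2.5, 3.5])

    def test_series_shorter_than_window(self) -> None:
        # Edge case: not enough data points to fill a single window.
        self.assertEqual(simple_moving_average([1.0], 3), [])

    def test_rejects_non_positive_window(self) -> None:
        with self.assertRaises(ValueError):
            simple_moving_average([1.0, 2.0], 0)
```

Saved to a file, these tests can be run with `python -m unittest`. The edge cases (short series, invalid window) are the kind of conditions that tend to surface only under unusual market data.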

Integration tests validate how API components work together and interact with external dependencies like databases, blockchain nodes, and third-party data providers. Testing error handling, timeout scenarios, and fallback mechanisms ensures APIs gracefully handle infrastructure failures. Token Metrics maintains rigorous testing protocols for its cryptocurrency API, ensuring that developers receive accurate, reliable market data even when individual data sources experience disruptions or delays.

Contract testing ensures that APIs adhere to documented specifications and maintain backward compatibility across versions. Consumer-driven contract testing validates that APIs meet the specific needs of consuming applications, catching breaking changes before they impact production systems. For crypto APIs supporting diverse clients from mobile apps to trading bots, contract testing prevents regressions that could break existing integrations.

Performance testing validates API behavior under load, identifying bottlenecks and capacity limits before they impact production users. Load testing simulates normal traffic patterns, stress testing pushes systems beyond expected capacity, and soak testing validates sustained operation over extended periods. For cryptocurrency APIs where market events can trigger massive traffic spikes, understanding system behavior under various load conditions enables capacity planning and infrastructure optimization.

Exceptional documentation serves as the primary interface between API providers and developers, dramatically impacting adoption and successful integration. Comprehensive documentation includes conceptual overviews explaining the API's purpose and architecture, getting started guides walking developers through initial integration, detailed endpoint references documenting all available operations, and code examples demonstrating common use cases in multiple programming languages.

Interactive documentation tools like Swagger UI or Redoc enable developers to explore and test endpoints directly from documentation pages, dramatically reducing time from discovery to first successful API call. For cryptocurrency APIs, providing pre-configured examples for common queries like retrieving Bitcoin prices, analyzing trading volumes, or fetching token ratings accelerates integration and helps developers understand response structures. Token Metrics offers extensive API documentation covering its comprehensive cryptocurrency analytics platform, including detailed guides for accessing token grades, market predictions, sentiment analysis, and technical indicators.

SDK development provides language-native interfaces abstracting HTTP request details and response parsing. Official SDKs for Python, JavaScript, Java, and other popular languages accelerate integration and reduce implementation errors. For crypto APIs, SDKs can handle authentication, request signing, rate limiting, error retry logic, and response pagination automatically, allowing developers to focus on building features rather than managing HTTP communications.

Cryptocurrency exchanges represent one of the most demanding use cases for REST APIs, requiring high throughput, low latency, and absolute reliability. Trading APIs enable programmatic order placement, portfolio management, and market data access, supporting both manual trading through web and mobile interfaces and automated trading through bots and algorithms. The financial stakes make security, accuracy, and availability paramount concerns that drive architectural decisions.

Blockchain explorers and analytics platforms leverage REST APIs to provide searchable, queryable access to blockchain data. Rather than requiring every application to run full blockchain nodes and parse raw blockchain data, these APIs provide convenient interfaces for querying transactions, addresses, blocks, and smart contract events. Token Metrics provides comprehensive blockchain API access integrated with advanced analytics, enabling developers to combine raw blockchain data with sophisticated market intelligence and AI-driven insights.

Portfolio management applications aggregate data from multiple sources through REST APIs, providing users with unified views of their cryptocurrency holdings across exchanges, wallets, and blockchain networks. These applications depend on reliable crypto APIs delivering accurate balance information, transaction history, and real-time valuations. The complexity of tracking assets across dozens of blockchain networks and hundreds of exchanges necessitates robust API infrastructure that handles failures gracefully and maintains data consistency.

The evolution of REST APIs continues as new technologies and requirements emerge. GraphQL offers an alternative approach addressing some REST limitations, particularly around fetching nested resources and minimizing overfetching or underfetching of data. While GraphQL has gained adoption, REST remains dominant due to its simplicity, caching characteristics, and broad tooling support. Understanding how these technologies complement each other helps developers choose appropriate solutions for different scenarios.

Artificial intelligence integration within APIs themselves represents a frontier where APIs become more intelligent and adaptive. Machine learning models embedded in cryptocurrency APIs can personalize responses, detect anomalies, predict user needs, and provide proactive insights. Token Metrics leads this convergence, embedding AI-powered analytics directly into its crypto API, enabling developers to access sophisticated market predictions and trading signals through simple REST endpoints.

WebSocket and Server-Sent Events complement REST APIs for real-time data streaming. While REST excels at request-response patterns, WebSocket connections enable bidirectional real-time communication ideal for cryptocurrency price streams, live trading activity, and instant market alerts. Modern crypto platforms combine REST APIs for standard operations with WebSocket streams for real-time updates, leveraging the strengths of each approach.

Evaluating REST APIs for integration requires assessing multiple dimensions beyond basic functionality. Documentation quality directly impacts integration speed and ongoing maintenance, with comprehensive, accurate documentation reducing development time significantly. For cryptocurrency APIs, documentation should address domain-specific scenarios like handling blockchain reorganizations, dealing with stale data, and implementing proper error recovery for trading operations.

Performance characteristics including response times, rate limits, and reliability metrics determine whether an API can support production workloads. Trial periods and sandbox environments enable realistic testing before committing to specific providers. Token Metrics offers comprehensive trial access to its cryptocurrency API, allowing developers to evaluate data quality, response times, and feature completeness before integration decisions.

Pricing structures and terms of service require careful evaluation, particularly for cryptocurrency applications where usage can scale dramatically during market volatility. Understanding rate limits, overage charges, and upgrade paths prevents unexpected costs or service disruptions. Transparent pricing and flexible plans that scale with application growth indicate mature, developer-friendly API providers.

Understanding REST API architecture, security principles, and best practices empowers developers to build robust, scalable applications and make informed decisions when integrating external services. From HTTP methods and status codes to versioning strategies and performance optimization, each aspect contributes to creating APIs that developers trust and applications that deliver value. The principles of REST architecture have proven remarkably durable, adapting to new technologies and requirements while maintaining the core characteristics that made REST successful.

In the cryptocurrency and blockchain space, REST APIs provide essential infrastructure connecting developers to market data, trading functionality, and analytical intelligence. Token Metrics exemplifies excellence in crypto API design, offering comprehensive cryptocurrency analytics, AI-powered market predictions, and real-time blockchain data through a secure, performant, well-documented RESTful interface. Whether building cryptocurrency trading platforms, portfolio management tools, or analytical applications, understanding REST APIs and leveraging powerful crypto APIs like those offered by Token Metrics accelerates development and enhances application capabilities.

As technology evolves and the cryptocurrency ecosystem matures, REST APIs will continue adapting while maintaining the fundamental principles of simplicity, scalability, and reliability that have made them indispensable. Developers who invest in deeply understanding REST architecture position themselves to build innovative applications that leverage the best of modern API design and emerging technologies, creating the next generation of solutions that drive our increasingly connected digital economy forward.

REST APIs power much of the modern web: mobile apps, single-page frontends, third-party integrations, and many backend services communicate via RESTful endpoints. This guide breaks down the core principles, design patterns, security considerations, and practical workflows for building and consuming reliable REST APIs. Whether you are evaluating an external API or designing one for production, the frameworks and checklists here will help you ask the right technical questions and set up measurable controls.