Practical API Testing: Strategies, Tools, and Best Practices

APIs are the connective tissue of modern software. Testing them thoroughly prevents regressions, ensures predictable behavior, and protects downstream systems. This guide breaks API testing into practical steps, frameworks, and tool recommendations so engineers can build resilient interfaces and run their API tests automatically in delivery pipelines.

What is API testing?

API testing verifies that application programming interfaces behave according to specification: returning correct data, enforcing authentication and authorization, handling errors, and performing within expected limits. Unlike UI testing, API tests focus on business logic, data contracts, and integration between systems rather than presentation. Well-designed API tests are fast, deterministic, and suitable for automation, enabling rapid feedback in development workflows.

Types of API tests

- Unit/Component tests: Validate single functions or routes in isolation, often by mocking external dependencies to exercise specific logic.

- Integration tests: Exercise interactions between services, databases, and third-party APIs to verify that components work together and that data stays consistent across them.

- Contract tests: Assert that a provider and consumer agree on request/response shapes and semantics, reducing breaking changes in distributed systems.

- Performance tests: Measure latency, throughput, and resource usage under expected and peak loads to find bottlenecks.

- Security tests: Check authentication, authorization, input validation, and common vulnerabilities (for example injection, broken access control, or insufficient rate limiting).

- End-to-end API tests: Chain multiple API calls to validate workflows that represent real user scenarios across systems.
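
To make the unit/component level concrete, here is a minimal sketch in Python using only the standard library's `unittest` and `unittest.mock`. The `get_user` handler, its `repo` dependency, and the `(status, body)` return convention are hypothetical; the point is that the data layer is replaced by a mock so only the handler's own logic runs.

```python
import unittest
from unittest.mock import Mock


def get_user(user_id, repo):
    """Hypothetical route handler returning an (HTTP status, JSON-like body) pair."""
    if not isinstance(user_id, int) or user_id <= 0:
        return 400, {"error": "invalid user id"}
    user = repo.find_by_id(user_id)  # external dependency, e.g. a database
    if user is None:
        return 404, {"error": "user not found"}
    return 200, {"id": user["id"], "name": user["name"]}


class GetUserHandlerTest(unittest.TestCase):
    def setUp(self):
        # Mock the repository so no test ever touches a real database.
        self.repo = Mock()

    def test_returns_user_when_found(self):
        self.repo.find_by_id.return_value = {"id": 7, "name": "Ada"}
        status, body = get_user(7, self.repo)
        self.assertEqual(status, 200)
        self.assertEqual(body, {"id": 7, "name": "Ada"})
        self.repo.find_by_id.assert_called_once_with(7)

    def test_returns_404_when_missing(self):
        self.repo.find_by_id.return_value = None
        status, _ = get_user(42, self.repo)
        self.assertEqual(status, 404)

    def test_rejects_invalid_id_without_querying(self):
        status, _ = get_user(-1, self.repo)
        self.assertEqual(status, 400)
        self.repo.find_by_id.assert_not_called()
```

Run it with `python -m unittest` (pytest also collects `unittest.TestCase` classes). Asserting on the mock's calls, as in the last test, checks behavior such as "invalid input never reaches the database" that a response assertion alone would miss.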

Designing an API testing strategy

Effective strategies balance scope, speed, and confidence. A common model is the testing pyramid: many fast unit tests, a moderate number of integration and contract tests, and fewer end-to-end or performance tests. Core elements of a robust strategy include:

- Define clear acceptance criteria: Use API specifications (OpenAPI/Swagger) to derive expected responses, status codes, and error formats so tests reflect agreed behavior.

- Prioritize test cases: Focus on critical endpoints, authentication flows, data integrity, and boundary conditions that pose the greatest risk.

- Use contract testing: Make provider/consumer compatibility explicit with frameworks that can generate or verify contracts automatically.

- Maintain test data: Seed environments with deterministic datasets, use fixtures and factories, and isolate test suites from production data.

- Measure coverage pragmatically: Track which endpoints and input spaces are exercised, but avoid chasing 100% coverage if it creates brittle tests.
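
One pragmatic way to track endpoint coverage is to diff the operations declared in the specification against the operations the suite actually called. The sketch below assumes you have already extracted `(method, path)` pairs from an OpenAPI document and recorded the pairs your tests hit; the function name and both example sets are hypothetical.

```python
def endpoint_coverage(spec_ops, exercised_ops):
    """Compare spec-declared operations with those the test suite exercised.

    Both arguments are iterables of (HTTP method, path template) tuples.
    Returns (coverage ratio, sorted list of untested operations).
    """
    spec = {(m.upper(), p) for m, p in spec_ops}
    # Calls to operations outside the spec are ignored for the ratio.
    hit = {(m.upper(), p) for m, p in exercised_ops} & spec
    missing = sorted(spec - hit)
    ratio = len(hit) / len(spec) if spec else 1.0
    return ratio, missing


# Hypothetical operations, e.g. extracted from an OpenAPI "paths" object.
spec_ops = [
    ("GET", "/users"),
    ("POST", "/users"),
    ("GET", "/users/{id}"),
    ("DELETE", "/users/{id}"),
]
# Hypothetical operations recorded while the suite ran.
exercised_ops = [("get", "/users"), ("GET", "/users/{id}"), ("POST", "/users")]

ratio, missing = endpoint_coverage(spec_ops, exercised_ops)
print(f"{ratio:.0%} of operations covered; untested: {missing}")
```

A report like this surfaces gaps such as the untested `DELETE` without demanding 100%; whether that operation deserves a test is still a risk-based decision.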

Tools, automation, and CI/CD

Tooling choices depend on protocols (REST, GraphQL, gRPC) and language ecosystems. Common tools and patterns include:

- Postman & Newman: Rapid exploratory testing, collection sharing, and collection-based automation suited to cross-team collaboration.

- REST-assured / Supertest / pytest + requests: Language-native libraries for integration and unit testing in JVM, Node.js, and Python ecosystems.

- Contract testing tools: Pact for consumer-driven contracts, or schema-based tools such as Schemathesis, which generates property-based tests from an OpenAPI or GraphQL schema, to catch breaking changes between services.

- Load and performance: JMeter, k6, Gatling for simulating traffic and measuring resource limits and latency under stress.

- Security scanners: OWASP ZAP or dedicated fuzzers for input validation, authentication, and common attack surfaces.
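
Dedicated fuzzers are far more thorough, but the core idea can be sketched in a few lines: generate many random, often hostile inputs from a fixed seed, and check that the validator never crashes and never accepts something its rule forbids. `validate_username` and its rule (3 to 20 ASCII letters, digits, or underscores) are hypothetical.

```python
import random
import re
import string

# Hypothetical validation rule for a `username` request field.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,20}")


def validate_username(value):
    """Return True only for strings that fully match the allowed pattern."""
    return isinstance(value, str) and USERNAME_RE.fullmatch(value) is not None


def random_input(rng):
    """Produce a random, frequently malformed, candidate value."""
    choice = rng.randrange(4)
    if choice == 0:  # arbitrary printable text, including quotes and whitespace
        return "".join(rng.choice(string.printable) for _ in range(rng.randrange(0, 40)))
    if choice == 1:  # injection-style and oversized payloads
        return rng.choice(["' OR '1'='1", "<script>", "../../etc/passwd", "a" * 10_000])
    if choice == 2:  # wrong types entirely
        return rng.choice([None, 123, 4.5, [], {}, b"bytes"])
    return "".join(rng.choice(string.ascii_letters + "_") for _ in range(rng.randrange(1, 25)))


def fuzz(iterations=5_000, seed=1234):
    """Feed random inputs to the validator; return (accepted, rejected) counts."""
    rng = random.Random(seed)  # fixed seed makes any failure reproducible
    accepted = rejected = 0
    for _ in range(iterations):
        value = random_input(rng)
        if validate_username(value):  # must never raise, whatever the input
            # Independent oracle: anything accepted must obey the documented rule.
            assert isinstance(value, str) and 3 <= len(value) <= 20, value
            assert all(c.isascii() and (c.isalnum() or c == "_") for c in value), value
            accepted += 1
        else:
            rejected += 1
    return accepted, rejected
```

Seeding the generator is the important design choice: when a run does find a crash or a false accept, the same seed replays it exactly.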

Automation should be baked into CI/CD pipelines: run unit and contract tests on pull requests, integration tests on feature branches or merged branches, and schedule performance/security suites on staging environments. Observability during test runs—collecting metrics, logs, and traces—helps diagnose flakiness and resource contention faster.

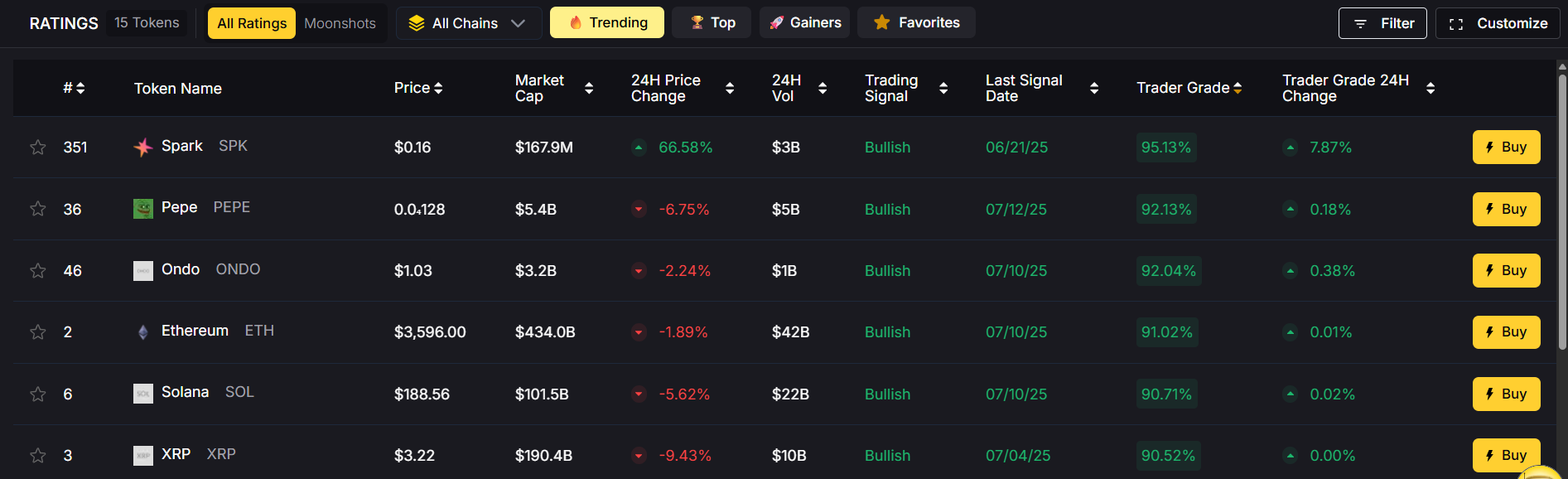

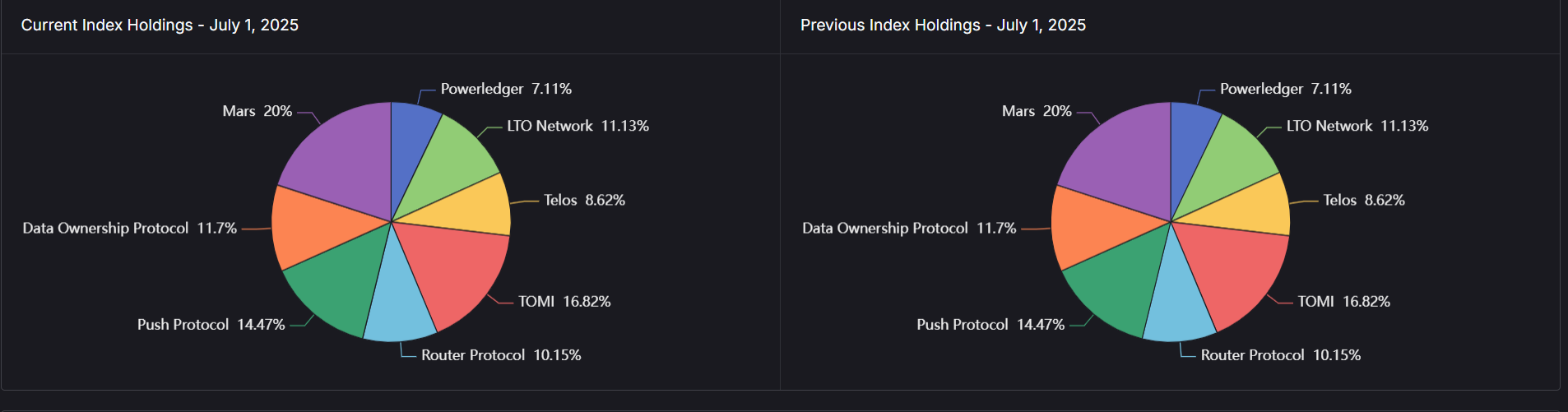

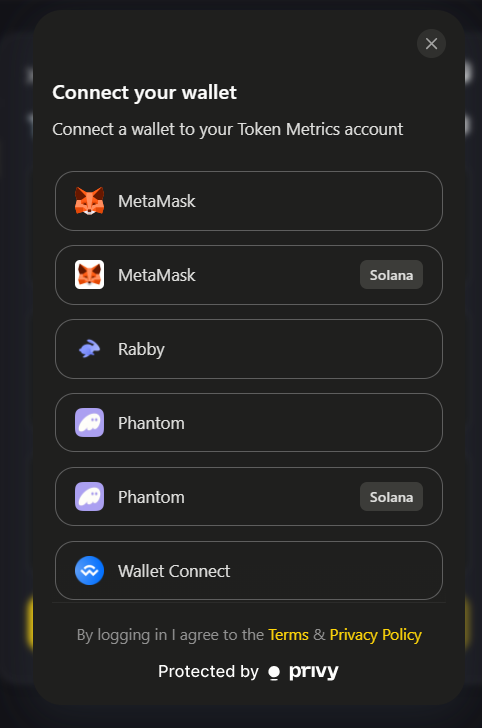

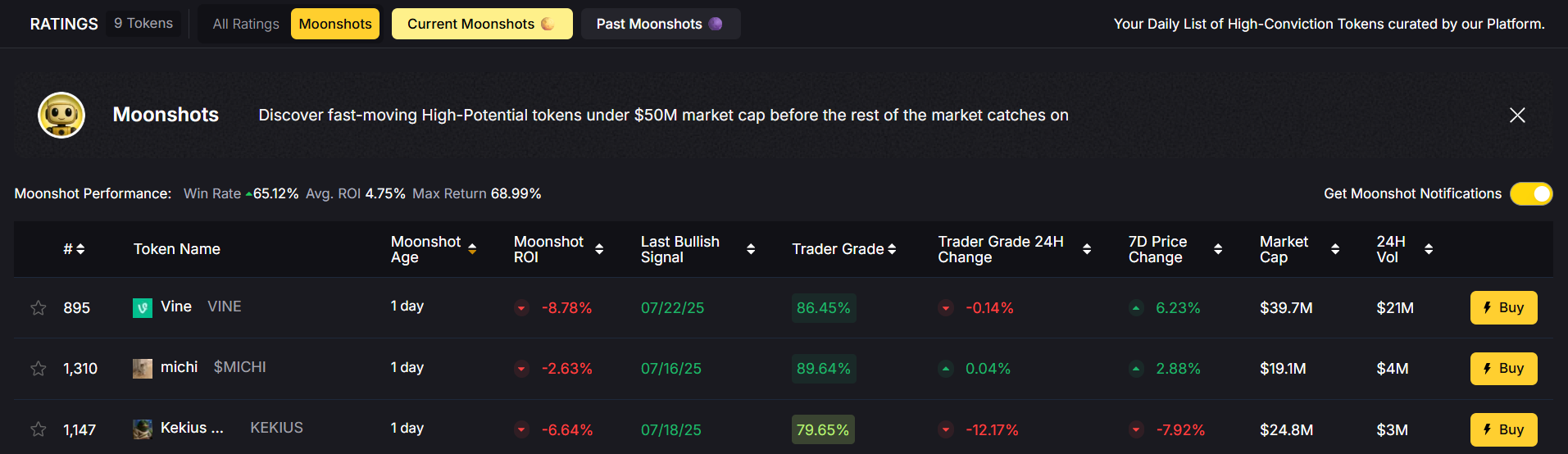

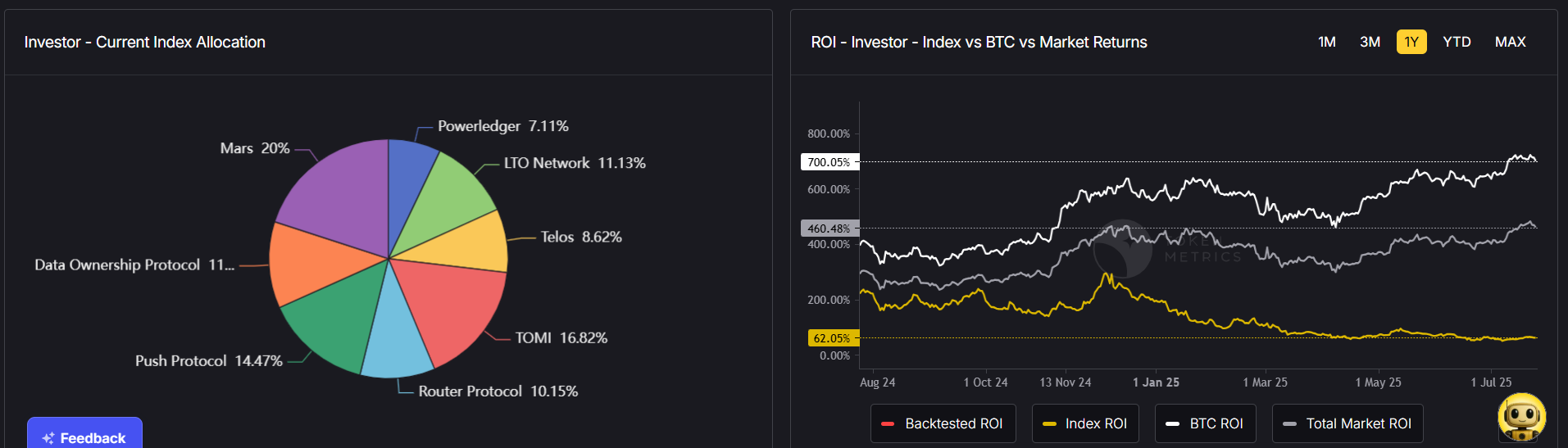

AI-driven analysis can speed up test design and anomaly detection by suggesting high-value test cases and highlighting unusual response patterns. Teams that consume external data feeds can also bring those providers' real-time APIs into test scenarios to validate third-party integrations under realistic conditions. For example, Token Metrics offers datasets and signals that can serve as realistic test inputs or be used to verify an integration with an external data provider.

Frequently asked questions

What is the difference between unit and integration API tests?

Unit tests isolate individual functions or routes using mocks and focus on internal logic. Integration tests exercise multiple components together (for example service + database) to validate interaction, data flow, and external dependencies.
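
By contrast, an integration-style test sends real HTTP requests through the network stack. The sketch below starts a throwaway server on a free local port using only the standard library (`http.server`, `urllib.request`); the `/health` and `/users/<id>` routes and the in-memory `USERS` data are hypothetical. In a real suite the server would be your actual service, often started alongside a seeded test database.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical seeded data standing in for a test database.
USERS = {"1": {"id": 1, "name": "Ada"}}

# Ignore any HTTP(S)_PROXY settings so requests stay on the loopback interface.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class UserApiHandler(BaseHTTPRequestHandler):
    """Hypothetical service exposing /health and /users/<id>."""

    def do_GET(self):
        prefix = "/users/"
        if self.path == "/health":
            self._send_json(200, {"status": "ok"})
        elif self.path.startswith(prefix) and self.path[len(prefix):] in USERS:
            self._send_json(200, USERS[self.path[len(prefix):]])
        else:
            self._send_json(404, {"error": "not found"})

    def _send_json(self, status, payload):
        body = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # keep test output quiet
        pass


def get_json(base_url, path):
    """Return (status, decoded JSON body); 4xx/5xx responses are returned, not raised."""
    try:
        with OPENER.open(base_url + path, timeout=5) as resp:
            return resp.status, json.loads(resp.read())
    except urllib.error.HTTPError as err:
        return err.code, json.loads(err.read())


def run_integration_checks():
    """Start the server, call each route over HTTP, and return (status, body) pairs."""
    server = HTTPServer(("127.0.0.1", 0), UserApiHandler)  # port 0: any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    try:
        return [get_json(base, p) for p in ("/health", "/users/1", "/users/999")]
    finally:
        server.shutdown()
        server.server_close()
```

Unpacking the result as `health, user, missing = run_integration_checks()` lets a test assert on status codes and bodies exactly as a client would see them, including the 404 path.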

How often should I run performance tests?

Run lightweight load tests as part of each release cycle, and schedule comprehensive performance runs on staging before major releases or after architecture changes. The right frequency depends on traffic patterns and how often critical paths change.
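
A lightweight load check can be as small as firing a fixed number of concurrent calls and reporting latency percentiles. The sketch below uses a thread pool and the nearest-rank percentile method; `fake_endpoint` is a hypothetical stand-in that sleeps instead of making a network call. For sustained or distributed load, a dedicated tool such as k6, JMeter, or Gatling remains the better fit.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))  # integer math avoids float drift
    return ordered[rank - 1]


def timed_call(fn):
    """Run fn once and return its wall-clock latency in milliseconds."""
    start = time.perf_counter()
    fn()
    return (time.perf_counter() - start) * 1000


def load_test(fn, total_requests=200, concurrency=20):
    """Call fn total_requests times across `concurrency` threads; return p50/p95/p99."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda _: timed_call(fn), range(total_requests)))
    return {p: percentile(latencies, p) for p in (50, 95, 99)}


def fake_endpoint():
    time.sleep(0.005)  # hypothetical ~5 ms service time
```

Calling `load_test(fake_endpoint)` returns a dict mapping 50, 95, and 99 to latencies in milliseconds; against a real service, `fn` would perform one HTTP request. Tail percentiles are reported because averages hide the slow requests users actually notice.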

Can AI help with API testing?

AI can suggest test inputs, prioritize test cases by risk, detect anomalies in responses, and assist with test maintenance through pattern recognition. Treat AI as a productivity augmenter that surfaces hypotheses requiring engineering validation.

What is contract testing and why use it?

Contract testing ensures providers and consumers agree on the API contract (schemas, status codes, semantics). It reduces integration regressions by failing early when expectations diverge, enabling safer deployments in distributed systems.
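
Tools such as Pact manage contracts between real services, but the underlying assertion is simple: the response must contain the fields the consumer relies on, with the expected types. The sketch below checks a response body against a hand-written, hypothetical contract expressed as a field-to-type mapping with nested objects; it is not Pact's contract format.

```python
# Hypothetical consumer expectations for GET /users/{id}: field name -> type,
# with nested dicts describing nested objects. Not Pact's contract format.
USER_CONTRACT = {
    "id": int,
    "name": str,
    "email": str,
    "address": {"city": str, "postcode": str},
}


def contract_violations(payload, contract, path=""):
    """Return human-readable violations; an empty list means the payload complies.

    Extra fields are ignored on purpose: consumers should depend only on the
    fields they use, so providers stay free to add new ones.
    """
    problems = []
    for field, expected in contract.items():
        where = f"{path}.{field}" if path else field
        if field not in payload:
            problems.append(f"missing field '{where}'")
            continue
        value = payload[field]
        if isinstance(expected, dict):
            if isinstance(value, dict):
                problems.extend(contract_violations(value, expected, where))
            else:
                problems.append(f"'{where}' should be an object")
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        elif not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            problems.append(
                f"'{where}' should be {expected.__name__}, got {type(value).__name__}"
            )
    return problems


# A provider response that adds a field (compatible) but drops one (breaking).
provider_response = {
    "id": 1,
    "name": "Ada",
    "email": "ada@example.com",
    "address": {"city": "London"},
    "plan": "pro",
}
print(contract_violations(provider_response, USER_CONTRACT))
# ["missing field 'address.postcode'"]
```

Run on the provider's side in CI, a check like this fails the build before the consumer ever sees the missing field.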

What are best practices for test data management?

Use deterministic fixtures, isolate test databases, anonymize production data when necessary, seed environments consistently, and prefer schema or contract assertions to validate payload correctness rather than brittle value expectations.
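
Deterministic fixtures usually come down to two habits: seed every source of randomness, and derive masked values from stable inputs instead of copying real ones. The factory below is a hypothetical sketch using only the standard library. It produces identical users for identical seeds and pseudonymizes emails with a keyed HMAC, so the mapping is repeatable but cannot be reversed without the key (strictly pseudonymization rather than full anonymization).

```python
import hashlib
import hmac
import random

PSEUDONYM_KEY = b"test-only-key"  # hypothetical; keep real keys out of source control


def pseudonymize_email(email):
    """Replace an address with a stable, non-identifying one."""
    digest = hmac.new(PSEUDONYM_KEY, email.lower().encode(), hashlib.sha256).hexdigest()
    return f"user_{digest[:12]}@example.test"


def make_users(count, seed=42):
    """Build `count` user fixtures; identical seeds yield identical data."""
    rng = random.Random(seed)  # local RNG: independent of global random state
    first_names = ["Ada", "Grace", "Alan", "Edsger", "Barbara"]
    return [
        {
            "id": i + 1,
            "name": rng.choice(first_names),
            "age": rng.randint(18, 90),
            "email": pseudonymize_email(f"person{i}@corp.example"),
        }
        for i in range(count)
    ]
```

Because `make_users(3) == make_users(3)` always holds, a failing assertion points at the code under test rather than at shifting data.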

How do I handle flaky API tests?

Investigate root causes such as timing, external dependencies, or resource contention. Reduce flakiness by mocking unstable third parties, improving environment stability, adding bounded retries for idempotent requests where appropriate, and capturing diagnostic traces during failures.
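
Retries mask flakiness rather than fix it, so they are best bounded and limited to idempotent operations. The sketch below retries with exponential backoff and full jitter only when the HTTP method is idempotent (GET, HEAD, OPTIONS, TRACE, PUT, and DELETE, per RFC 9110). `TransientError` and `make_flaky` are hypothetical, and the `sleep` and `rng` parameters are injectable so tests run instantly and deterministically.

```python
import random
import time

IDEMPOTENT_METHODS = {"GET", "HEAD", "OPTIONS", "TRACE", "PUT", "DELETE"}  # RFC 9110


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, 502/503/504, ...)."""


def call_with_retries(method, send, attempts=3, base_delay=0.1,
                      sleep=time.sleep, rng=random.random):
    """Call send(); retry transient failures only for idempotent methods."""
    max_attempts = max(1, attempts) if method.upper() in IDEMPOTENT_METHODS else 1
    for attempt in range(1, max_attempts + 1):
        try:
            return send()
        except TransientError:
            if attempt == max_attempts:
                raise  # surface the failure and its trace instead of hiding it
            # Exponential backoff with full jitter: sleep in [0, base * 2^(attempt-1)).
            sleep(base_delay * (2 ** (attempt - 1)) * rng())


def make_flaky(failures):
    """Hypothetical stub that raises TransientError `failures` times, then returns 200."""
    state = {"calls": 0}

    def send():
        state["calls"] += 1
        if state["calls"] <= failures:
            raise TransientError("simulated 503")
        return 200

    return send, state
```

A non-idempotent `POST` fails on the first error, which keeps a retry from silently creating duplicate records and leaves the flaky behavior visible in test reports.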

Disclaimer

This article is educational and technical in nature and does not constitute investment, legal, or regulatory advice. Evaluate tools and data sources independently and test in controlled environments before production use.
