Best Index Providers & Benchmark Services (2025)

Why Crypto Index Providers & Benchmark Services Matter in September 2025

Crypto index providers give institutions and advanced investors rules-based, auditable ways to measure the digital asset market. In one sentence: a crypto index provider designs and administers benchmarks (often regulated), such as price indices or market baskets, that funds, ETPs, quants, and risk teams can track or license. As liquidity deepens and regulation advances, high-integrity benchmarks reduce noise, standardize reporting, and enable products ranging from passive ETPs to factor strategies.

If you’re comparing crypto index providers for portfolio measurement, product launches, or compliance reporting, this guide ranks the best options now—what they do, who they fit, and what to consider across security posture, coverage, costs, and support.

How We Picked (Methodology & Scoring)

- Liquidity (30%) – Does the provider screen venues/liquidity robustly and publish transparent inclusion rules?

- Security & Governance (25%) – Benchmark authorization/registration, governance committees, calculation resilience, and public methodologies/audits.

- Coverage (15%) – Breadth across single-asset, multi-asset, sectors/factors, and region eligibility.

- Costs (15%) – Licensing clarity, data access models, and total cost to operate products.

- UX (10%) – Docs, factsheets, ground rules, rebalancing cadence, client tooling.

- Support (5%) – Responsiveness, custom index build capacity, enterprise integration.
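To make the weighting concrete, here is a minimal Python sketch of how the category weights above combine into a single score. The 0 to 10 scale and the example scores are hypothetical placeholders, not the ratings behind this ranking.

```python
# Category weights from the methodology above (they sum to 100%).
WEIGHTS = {
    "liquidity": 0.30,
    "security_governance": 0.25,
    "coverage": 0.15,
    "costs": 0.15,
    "ux": 0.10,
    "support": 0.05,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-category scores (hypothetical 0-10 scale) into one weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return sum(WEIGHTS[cat] * scores[cat] for cat in WEIGHTS)


# Hypothetical scores for an unnamed provider (not our actual ratings).
example = {
    "liquidity": 9, "security_governance": 10, "coverage": 6,
    "costs": 5, "ux": 8, "support": 7,
}
print(round(weighted_score(example), 2))  # 8.0
```

Because Liquidity and Security & Governance carry 55% of the weight together, a provider that is strong on both can outrank one with broader coverage or lower costs.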

We relied on official product pages, methodologies, and security/governance disclosures; third-party datasets (e.g., venue quality screens) were used only as cross-checks. Last updated September 2025.

Top 10 Crypto Index Providers & Benchmark Services in September 2025

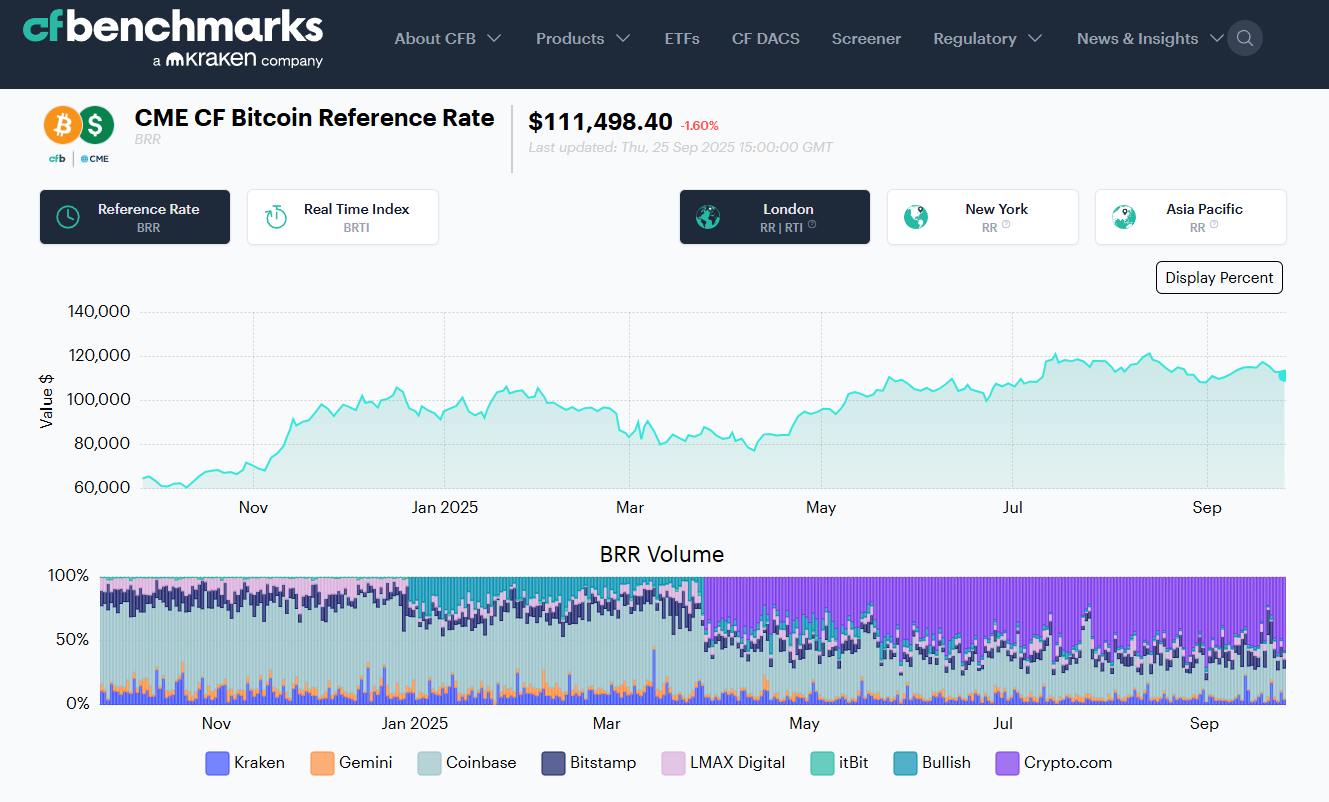

1) CF Benchmarks — Best for regulated settlement benchmarks

Why Use It: Administrator of the CME CF Bitcoin Reference Rate (BRR) and related benchmarks used to settle major futures and institutional products; UK BMR-registered with transparent exchange criteria and daily calculation since 2016. If you need benchmark-grade spot references (BTC, ETH, and more) with deep derivatives alignment, start here. (Sources: CF Benchmarks)

Best For: Futures settlement references; fund NAV/pricing; risk; audit/compliance.

Notable Features: BRR/BRRNY reference rates; multi-exchange liquidity screens; methodology & governance docs; broad suite of real-time indices.

Consider If: You need composite market baskets beyond single assets; pair with a multi-asset provider.

Alternatives: S&P Dow Jones Indices; FTSE Russell.

Regions: Global • Fees/Notes: Licensed benchmarks; enterprise pricing.
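CF Benchmarks publicly describes the BRR as pooling trades from its constituent exchanges over a one-hour window ending at 4:00 p.m. London time, splitting that window into twelve five-minute partitions, taking a volume-weighted median of each partition, and averaging the partition medians with equal weights. The Python sketch below illustrates that general technique on made-up trades. It is not CF Benchmarks' production code: the tie-breaking rule for the median is an assumption, and exchange eligibility, data-quality rules, and empty-partition handling are simplified.

```python
from dataclasses import dataclass


@dataclass
class Trade:
    ts: float     # seconds since the start of the calculation window
    price: float  # USD per BTC
    size: float   # BTC traded


def volume_weighted_median(trades: list[Trade]) -> float:
    """Price at which cumulative volume (trades sorted by price) first reaches half the total.

    Tie-breaking conventions differ between methodologies; this one is an assumption.
    """
    ordered = sorted(trades, key=lambda t: t.price)
    half = sum(t.size for t in ordered) / 2
    cumulative = 0.0
    for t in ordered:
        cumulative += t.size
        if cumulative >= half:
            return t.price
    raise ValueError("no trades")


def partitioned_reference_rate(trades: list[Trade], window_s: float = 3600,
                               partitions: int = 12) -> float:
    """Equal-weighted average of the volume-weighted medians of each time partition."""
    width = window_s / partitions
    buckets: list[list[Trade]] = [[] for _ in range(partitions)]
    for t in trades:
        if 0 <= t.ts < window_s:  # ignore trades outside the calculation window
            buckets[int(t.ts // width)].append(t)
    # Simplification: empty partitions are skipped; real methodologies define this case.
    medians = [volume_weighted_median(b) for b in buckets if b]
    if not medians:
        raise ValueError("no trades in the calculation window")
    return sum(medians) / len(medians)


trades = [
    Trade(10, 100.0, 1.0), Trade(20, 101.0, 3.0),    # first partition
    Trade(400, 102.0, 2.0), Trade(410, 110.0, 1.0),  # second partition
]
print(partitioned_reference_rate(trades, window_s=600, partitions=2))  # 101.5
```

The median step is what makes this style of reference rate resistant to a single outsized print: the 110.0 trade above barely moves the result.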

2) S&P Dow Jones Indices — Best for broad, institution-first crypto baskets

Why Use It: The S&P Cryptocurrency series (including the Broad Digital Market index) brings the index craft, governance, and transparency familiar to traditional asset allocators, making it a natural fit for boards and committees that already use S&P. (Sources: S&P Global)

Best For: Asset managers launching passive products; OCIOs; consultants.

Notable Features: Broad/large-cap/mega-cap indices; single-asset BTC/ETH; published ground rules; established brand trust.

Consider If: You need highly customizable factors or staking-aware baskets—other vendors may move faster here.

Alternatives: MSCI; MarketVector.

Regions: Global • Fees/Notes: Licensing via S&P DJI.
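Broad market baskets are commonly market-capitalization weighted, with a divisor that is adjusted at each rebalance so the index level does not jump when constituents change. The Python sketch below shows that generic divisor mechanic. Prices, supplies, and the starting divisor are made-up inputs, and S&P DJI's actual ground rules (eligibility, supply adjustments, timing) should be checked directly.

```python
def total_market_cap(prices: dict[str, float], supply: dict[str, float]) -> float:
    """Sum of price x supply over the constituents listed in `supply`."""
    return sum(prices[a] * supply[a] for a in supply)


def index_level(prices: dict[str, float], supply: dict[str, float], divisor: float) -> float:
    return total_market_cap(prices, supply) / divisor


def rebalance_divisor(prices, old_supply, new_supply, old_divisor) -> float:
    """Divisor that keeps the index level unchanged when the constituent set changes."""
    level_before = index_level(prices, old_supply, old_divisor)
    return total_market_cap(prices, new_supply) / level_before


def cap_weights_of(prices, supply) -> dict[str, float]:
    """Each constituent's share of total market cap."""
    total = total_market_cap(prices, supply)
    return {a: prices[a] * supply[a] / total for a in supply}


# Hypothetical prices (USD) and supplies (millions of units).
prices = {"BTC": 60_000.0, "ETH": 3_000.0, "SOL": 150.0}
old_supply = {"BTC": 19.0, "ETH": 120.0}
new_supply = {**old_supply, "SOL": 400.0}  # SOL added at the rebalance

divisor = 1_500.0                                     # chosen so the level starts at 1,000
print(index_level(prices, old_supply, divisor))       # 1000.0
new_divisor = rebalance_divisor(prices, old_supply, new_supply, divisor)
print(index_level(prices, new_supply, new_divisor))   # 1000.0
```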

3) MSCI Digital Assets — Best for thematic & institutional risk frameworks

Why Use It: MSCI’s Global Digital Assets and Smart Contract indices apply MSCI’s taxonomy/governance with themed exposures and clear methodologies, which helps when aligning with enterprise risk standards. (Sources: MSCI)

Best For: CIOs needing policy-friendly thematics; due-diligence heavy institutions.

Notable Features: Top-30 market index; smart-contract subset; methodology docs; global brand assurance.

Consider If: You need exchange-by-exchange venue vetting or settlement rates—pair with CF Benchmarks or FTSE Russell.

Alternatives: S&P DJI; FTSE Russell.

Regions: Global • Fees/Notes: Enterprise licensing.

4) FTSE Russell Digital Asset Indices — Best for liquidity-screened, DAR-vetted universes

Why Use It: Built in association with Digital Asset Research (DAR), FTSE Russell screens assets and venues to EU Benchmark-ready standards, making it a strong fit for risk-controlled coverage from large- to micro-cap, plus single-asset series. (Sources: LSEG)

Best For: Product issuers who need venue vetting & governance; EU-aligned programs.

Notable Features: FTSE Global Digital Asset series; single-asset BTC/ETH; ground rules; DAR reference pricing.

Consider If: You require highly custom factor tilts; MarketVector or Vinter may be quicker to deliver bespoke builds.

Alternatives: Wilshire; S&P DJI.

Regions: Global (EU-friendly) • Fees/Notes: Licensed benchmarks.
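Venue vetting and asset eligibility usually work as a two-stage screen: first decide which venues count, then admit only assets with enough vetted venues and liquidity. The Python sketch below shows that shape. The `Venue` type, the `vetted` flag, and both thresholds are hypothetical placeholders, not FTSE Russell or DAR ground rules.

```python
from dataclasses import dataclass


@dataclass
class Venue:
    name: str
    vetted: bool       # passed a (hypothetical) venue due-diligence review
    listed: set[str]   # assets quoted on this venue


def eligible_assets(venues: list[Venue], adv_usd: dict[str, float],
                    min_adv_usd: float = 1_000_000.0, min_venues: int = 2) -> list[str]:
    """Assets quoted on at least `min_venues` vetted venues whose average daily
    volume (adv_usd) meets `min_adv_usd`. Both thresholds are placeholders."""
    counts: dict[str, int] = {}
    for v in venues:
        if not v.vetted:
            continue  # unvetted venues do not count toward eligibility
        for asset in v.listed:
            counts[asset] = counts.get(asset, 0) + 1
    return sorted(a for a, n in counts.items()
                  if n >= min_venues and adv_usd.get(a, 0.0) >= min_adv_usd)


venues = [
    Venue("VenueA", True, {"BTC", "ETH", "XYZ"}),
    Venue("VenueB", True, {"BTC", "ETH"}),
    Venue("VenueC", False, {"XYZ"}),  # fails vetting, so XYZ has only one vetted venue
]
adv = {"BTC": 5e9, "ETH": 2e9, "XYZ": 5e6}
print(eligible_assets(venues, adv))  # ['BTC', 'ETH']
```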

5) Nasdaq Crypto Index (NCI) — Best for flagship, dynamic market representation

Why Use It: NCI is designed to be dynamic, representative, and trackable; it is widely recognized and replicated by ETPs seeking diversified core exposure, which makes it useful as a single “beta” benchmark. (Sources: Nasdaq; Nasdaq Global Index Watch)

Best For: Core market ETPs; CIO benchmarks; sleeve construction.

Notable Features: Rules-driven eligibility; regular reconstitutions; strong market recognition.

Consider If: You want deep sector/thematic granularity—pair with MSCI/MarketVector.

Alternatives: Bloomberg Galaxy (BGCI); MarketVector MVDA.

Regions: Global • Fees/Notes: Licensing via Nasdaq.

6) MarketVector Indexes — Best for broad coverage & custom builds

Why Use It: MarketVector, VanEck’s index business (formerly MV Index Solutions, or MVIS), offers the off-the-shelf MVDA 100 plus sector, staking-aware, and bespoke indices, a combination popular with issuers needing speed to market and depth. (Sources: MarketVector Indexes)

Best For: ETP issuers; quants; asset managers needing customization.

Notable Features: MVDA (100-asset) benchmark; single/multi-asset indices; staking/factor options; robust docs.

Consider If: You prioritize blue-chip simplicity—BGCI/NCI might suffice.

Alternatives: Vinter; S&P DJI.

Regions: Global • Fees/Notes: Enterprise licensing; custom index services.
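A staking-aware index differs from a plain price index mainly in how staking rewards flow into the level. The Python sketch below shows one simplified way to compare the two. It is not MarketVector's methodology: the constant yield, the daily 1/365 accrual, and full reinvestment with no fees or slashing are all assumptions.

```python
def price_and_total_return(prices: list[float], annual_yield: float,
                           start_level: float = 100.0) -> tuple[list[float], list[float]]:
    """Return (price-return levels, staking-reinvested total-return levels).

    Simplifying assumptions: a constant annual staking yield, accrued daily as
    annual_yield / 365 and reinvested, with no validator fees, slashing, or
    lock-up/unbonding effects.
    """
    daily_accrual = annual_yield / 365
    pr, tr = [start_level], [start_level]
    for prev, cur in zip(prices, prices[1:]):
        growth = cur / prev
        pr.append(pr[-1] * growth)
        tr.append(tr[-1] * growth * (1 + daily_accrual))
    return pr, tr


# Hypothetical daily closes and a hypothetical 3.65% annual staking yield.
pr, tr = price_and_total_return([10.0, 10.0, 10.0], annual_yield=0.0365)
print(pr)                # [100.0, 100.0, 100.0]
print(round(tr[-1], 4))  # 100.02
```

When comparing staking-aware products, the questions that matter are exactly the ones this sketch assumes away: how rewards are measured, when they are credited, and what is deducted.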

7) Bloomberg Galaxy Crypto Index (BGCI) — Best for blue-chip, liquid market beta

Why Use It: Co-developed by Bloomberg and Galaxy, BGCI targets the largest, most liquid cryptoassets, with concentration caps and monthly reviews: an institutional “core” that’s widely cited on terminals. (Sources: Galaxy Asset Management)

Best For: CIO benchmarks; performance reporting; media-friendly references.

Notable Features: Capped weights; qualified exchange criteria; Bloomberg governance.

Consider If: You need smaller-cap breadth—MVDA/NCI may cover more names.

Alternatives: NCI; S&P DJI.

Regions: Global • Fees/Notes: License via Bloomberg Index Services.
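Concentration caps like BGCI's are typically applied by capping oversized weights and redistributing the excess to the remaining names until nothing breaches the cap. The Python sketch below implements that generic iterative capping. The `cap` value in the example is hypothetical; BGCI's actual limits and redistribution rules are in its methodology.

```python
def cap_weights(raw: dict[str, float], cap: float, max_iter: int = 100) -> dict[str, float]:
    """Turn raw values (e.g. market caps) into weights with no weight above `cap`.

    Excess above the cap is redistributed pro rata (by raw value) to uncapped
    names, repeating until no uncapped weight exceeds the cap.
    """
    if cap * len(raw) < 1:
        raise ValueError("cap too low for the number of constituents")
    total = sum(raw.values())
    weights = {k: v / total for k, v in raw.items()}
    capped: set[str] = set()
    for _ in range(max_iter):
        over = {k for k, w in weights.items() if k not in capped and w > cap + 1e-12}
        if not over:
            break
        capped |= over
        free_raw = sum(v for k, v in raw.items() if k not in capped)
        remaining = 1 - cap * len(capped)  # weight left for uncapped names
        weights = {k: cap if k in capped else raw[k] / free_raw * remaining for k in raw}
    return weights


# Hypothetical market caps and a hypothetical 35% cap; B breaches only after A's excess moves.
raw = {"A": 60.0, "B": 30.0, "C": 5.0, "D": 5.0}
print({k: round(w, 4) for k, w in cap_weights(raw, cap=0.35).items()})
# {'A': 0.35, 'B': 0.35, 'C': 0.15, 'D': 0.15}
```

The loop matters: a single pass would leave B at 48.75% after A's excess is redistributed.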

8) CoinDesk Indices — Best for reference pricing (XBX) & tradable composites (CoinDesk 20)

Why Use It: Administrator of XBX (Bitcoin Price Index) and the CoinDesk 20, with transparent liquidity weighting and growing exchange integrations, including use in listed products. (Sources: CoinDesk Indices)

Best For: Reference rates; product benchmarks; quant research.

Notable Features: XBX reference rate; CoinDesk 20; governance/methodologies; exchange selection rules.

Consider If: You require UK BMR-registered BTC settlement—CF Benchmarks BRR is purpose-built.

Alternatives: CF Benchmarks; S&P DJI.

Regions: Global • Fees/Notes: Licensing available; contact sales.
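Liquidity weighting means venues with more trading activity count for more in the reference price, usually after filtering out venues whose prices look anomalous. The Python sketch below shows that generic idea: a volume-weighted average across venues with a median-deviation filter. It is not the XBX methodology, and the venue names, volumes, and 5% threshold are hypothetical.

```python
from statistics import median


def liquidity_weighted_price(quotes: dict[str, tuple[float, float]],
                             max_dev: float = 0.05) -> float:
    """Volume-weighted average of venue prices, skipping venues whose price deviates
    from the cross-venue median by more than `max_dev` (a hypothetical 5% default).

    `quotes` maps venue name -> (last price, recent volume).
    """
    mid = median(p for p, _ in quotes.values())
    kept = [(p, vol) for p, vol in quotes.values() if abs(p / mid - 1) <= max_dev]
    total_vol = sum(vol for _, vol in kept)
    if total_vol == 0:
        raise ValueError("no eligible volume")
    return sum(p * vol for p, vol in kept) / total_vol


quotes = {
    "VenueX": (100.0, 300.0),
    "VenueY": (102.0, 100.0),
    "VenueZ": (150.0, 10.0),  # far from the median, so it is excluded
}
print(liquidity_weighted_price(quotes))  # 100.5
```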

9) Vinter — Best for specialist, regulated crypto index construction

Why Use It: A regulated, crypto-native index provider focused on building and maintaining indices tracked by ETPs across Europe; fast on custom thematics and single-asset reference rates. (Sources: vinter.co)

Best For: European ETP issuers; bespoke strategies; rapid prototyping.

Notable Features: BMR-style reference rates; multi-asset baskets; calc-agent services; public factsheets.

Consider If: You need mega-brand recognition for U.S. committees—pair with S&P/MSCI.

Alternatives: MarketVector; Solactive.

Regions: Global (strong EU footprint) • Fees/Notes: Custom build/licensing.

10) Wilshire (FT Wilshire Digital Asset Index Series) — Best for institutional coverage & governance

Why Use It: The FT Wilshire series aims to be an institutional market standard with transparent rules, broad coverage, and exchange quality screens, supported by detailed methodology documents. (Sources: wilshireindexes.com)

Best For: Consultants/OCIOs; plan sponsors; research teams.

Notable Features: Broad Market index; governance via advisory groups; venue vetting; classification scheme.

Consider If: You need media-ubiquitous branding—S&P/Bloomberg carry more name recall.

Alternatives: FTSE Russell; S&P DJI.

Regions: Global • Fees/Notes: Enterprise licensing.

Decision Guide: Best By Use Case

- Regulated settlement benchmarks: CF Benchmarks. (Source: CF Benchmarks)

- Core market beta (simple, liquid): BGCI or NCI. (Sources: Galaxy Asset Management)

- Broad institution-grade baskets: S&P DJI or FTSE Russell. (Sources: S&P Global)

- Thematic exposure (e.g., smart contracts): MSCI Digital Assets. (Source: MSCI)

- Deep coverage & customization: MarketVector or Vinter. (Sources: MarketVector Indexes)

- Reference price + tradable composites: CoinDesk Indices (XBX / CoinDesk 20). (Sources: CoinDesk Indices)

- EU-aligned venue vetting: FTSE Russell (with DAR). (Source: LSEG)

How to Choose the Right Crypto Index Provider (Checklist)

- Region & eligibility: Confirm benchmark status (e.g., UK/EU BMR) and licensing.

- Coverage fit: Single-asset, broad market, sectors/factors, staking yield handling.

- Liquidity screens: How are exchanges qualified and weighted?

- Rebalance/refresh: Frequency and buffers to limit turnover/slippage.

- Data quality & ops: Timestamps, outage handling, fallbacks, NAV timing.

- Costs: Licensing, data access, custom build fees.

- Support: SLAs, client engineering, custom index services.

- Red flags: Opaque methodologies; limited venue vetting.
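Rebalance buffers and turnover are easy to reason about in code. The Python sketch below assumes one common style of buffer rule: incumbents stay while ranked within `target_n + buffer`, and newcomers must rank within `target_n - buffer`. Providers define their own rules, so the function names and parameters here are hypothetical. It also computes one-way turnover as half the sum of absolute weight changes.

```python
def reconstitute(ranks: dict[str, int], current: set[str], target_n: int,
                 buffer: int) -> set[str]:
    """Pick target_n constituents by rank using a hypothetical entry/exit buffer.

    Incumbents survive while ranked <= target_n + buffer; newcomers must rank
    <= target_n - buffer. Leftover slots are filled, or excess names trimmed,
    strictly by rank. Names missing from `ranks` are treated as ineligible.
    """
    keep = {a for a in current if a in ranks and ranks[a] <= target_n + buffer}
    enter = {a for a, r in ranks.items() if a not in current and r <= target_n - buffer}
    chosen = keep | enter
    # Fill empty slots with the best-ranked names (set.add ignores names already chosen).
    for a in sorted(ranks, key=ranks.get):
        if len(chosen) >= target_n:
            break
        chosen.add(a)
    # Trim the worst-ranked names if the buffer left too many.
    while len(chosen) > target_n:
        chosen.remove(max(chosen, key=lambda name: ranks[name]))
    return chosen


def one_way_turnover(old_w: dict[str, float], new_w: dict[str, float]) -> float:
    """Half the sum of absolute weight changes (a common turnover convention)."""
    names = old_w.keys() | new_w.keys()
    return 0.5 * sum(abs(new_w.get(a, 0.0) - old_w.get(a, 0.0)) for a in names)


ranks = {"A": 1, "B": 2, "C": 3, "D": 4, "E": 5}  # hypothetical market-cap ranks
current = {"A", "B", "D"}
print(sorted(reconstitute(ranks, current, target_n=3, buffer=1)))  # ['A', 'B', 'D']
print(sorted(reconstitute(ranks, current, target_n=3, buffer=0)))  # ['A', 'B', 'C']
eq = 1 / 3
print(round(one_way_turnover({"A": eq, "B": eq, "D": eq}, {"A": eq, "B": eq, "C": eq}), 4))  # 0.3333
```

With a one-rank buffer, D (now ranked fourth) survives and the swap for C, one third of the basket in turnover, is avoided.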

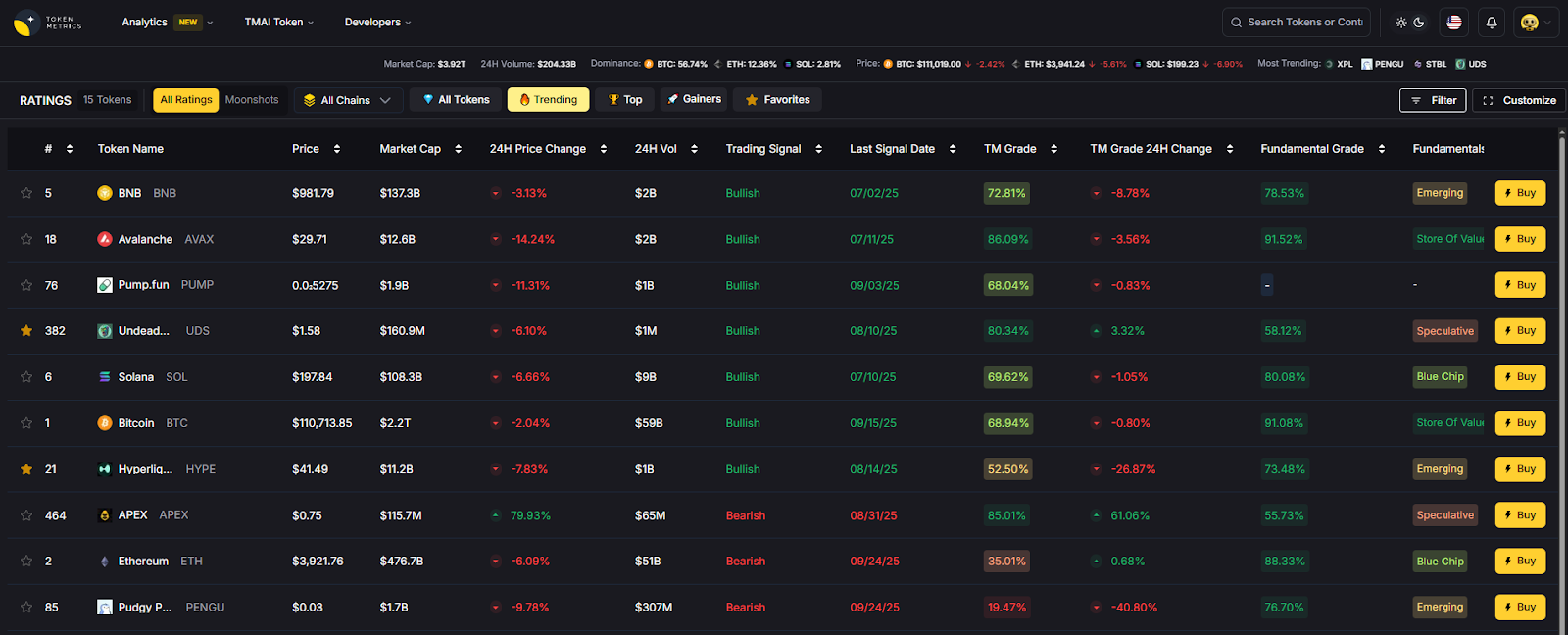

Use Token Metrics With Any Index Provider

- AI Ratings to screen constituents and spot outliers.

- Narrative Detection to see when sectors (e.g., L2s, DePIN) start trending.

- Portfolio Optimization to balance broad index beta with targeted alpha sleeves.

- Alerts & Signals to monitor entries/exits as indices rebalance.

Mini-workflow: Research → Select index/benchmark → Execute via your provider or ETP → Monitor with Token Metrics alerts.

Start a free trial.

Security & Compliance Tips

- Enable 2FA and role-based access for index data portals.

- Map custody and pricing cut-offs to index valuation times.

- Align with KYC/AML when launching index-linked products.

- For RFQ/OTC hedging around rebalances, pre-plan execution windows.

- Staking/bridged assets: verify methodology treatment and risks.
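Mapping cut-offs to valuation times is mostly time-zone arithmetic. CF Benchmarks publishes the BRR as of 4:00 p.m. London time (BRRNY is the New York variant; confirm exact times in each methodology). The Python sketch below checks whether a fixing lands comfortably before a fund's NAV cut-off. The 4:00 p.m. New York cut-off, the 30-minute buffer, and the hard-coded summer UTC offsets are assumptions for the example date only; production schedules should use an IANA time-zone database via `zoneinfo`.

```python
from datetime import datetime, timedelta, timezone

# Fixed summer offsets (BST = UTC+1, EDT = UTC-4), valid for the example date only.
LONDON_SUMMER = timezone(timedelta(hours=1))
NEW_YORK_SUMMER = timezone(timedelta(hours=-4))


def lead_time(fixing: datetime, cutoff: datetime) -> timedelta:
    """Time from a benchmark fixing to an operational cut-off (negative if the fixing is late)."""
    return cutoff - fixing


def fixing_precedes_cutoff(fixing: datetime, cutoff: datetime,
                           min_buffer: timedelta = timedelta(minutes=30)) -> bool:
    """True if the fixing lands at least `min_buffer` (hypothetical default) before the cut-off."""
    return lead_time(fixing, cutoff) >= min_buffer


brr_fixing = datetime(2025, 9, 1, 16, 0, tzinfo=LONDON_SUMMER)    # 4:00 p.m. London
nav_cutoff = datetime(2025, 9, 1, 16, 0, tzinfo=NEW_YORK_SUMMER)  # hypothetical 4:00 p.m. New York NAV strike
print(lead_time(brr_fixing, nav_cutoff))               # 5:00:00
print(fixing_precedes_cutoff(brr_fixing, nav_cutoff))  # True
```

A fixing struck at the same instant as the cut-off would fail this check, which is the kind of mismatch worth catching before launch.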

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Assuming all “broad market” indices hold the same assets/weights.

- Ignoring venue eligibility—liquidity and data quality vary.

- Overlooking reconstitution buffers (can drive turnover and cost).

- Mixing reference rates and investable baskets in reporting.

- Not confirming licensing scope for marketing vs. product use.

FAQs

What is a crypto index provider?

A company that designs, calculates, and governs rules-based benchmarks for digital assets—ranging from single-asset reference rates to diversified market baskets—licensed for reporting or products.

Which crypto index is best for “core beta”?

For simple, liquid market exposure, many institutions look to BGCI or NCI due to broad recognition and liquidity screens; your use case and region may point to S&P/FTSE alternatives. (Sources: Galaxy Asset Management)

How do providers choose exchanges and assets?

They publish ground rules defining eligible venues (liquidity, compliance), asset screening, capping, and rebalances; see S&P, FTSE (with DAR), and CF Benchmarks for examples. (Sources: S&P Global; LSEG)

Can I license a custom crypto index?

Yes. MarketVector and Vinter (among others) frequently build bespoke indices and act as calculation agents for issuers. (Sources: MarketVector Indexes)

What’s the difference between a reference rate and a market basket?

Reference rates (e.g., BRR, XBX) aim to produce a robust price for a single asset; market baskets (e.g., NCI, BGCI) represent diversified multi-asset exposure. (Sources: Galaxy Asset Management; CF Benchmarks; CoinDesk Indices)

Are these benchmarks available in the U.S. and EU?

Most are global; for EU/UK benchmark usage, verify authorization/registration (e.g., CF Benchmarks UK BMR) and your product’s country-specific rules. (Source: CF Benchmarks)

Conclusion + Related Reads

If you need regulated reference pricing for settlement or NAVs, start with CF Benchmarks. For core market beta, BGCI and NCI are widely recognized. For institution-grade breadth, consider S&P DJI or FTSE Russell (with DAR). If you’re launching custom or thematic products, MarketVector and Vinter are strong build partners.

Related Reads:

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025

Sources & Update Notes

We reviewed official product pages, methodologies, and governance documents current as of September 2025. A short list of key sources per provider is below (official sites only; non-official data used only for cross-checks and not linked here).

- CF Benchmarks: “BRR – CME CF Bitcoin Reference Rate”; CME CF Cryptocurrency Benchmarks.

- S&P Dow Jones Indices: “Cryptocurrency – Indices”; “S&P Cryptocurrency Broad Digital Market Index.”

- MSCI: “Digital Assets Solutions”; “Global Digital Assets Index Methodology.”

- FTSE Russell (LSEG): “Digital Asset indices”; FTSE + DAR reference pricing overview/ground rules.

- Nasdaq: “Nasdaq Crypto Index (NCI)” solution page; NCI index overview; Hashdex NCI ETP replication note.

- MarketVector: “Digital Assets Indexes” hub; “MarketVector Digital Assets 100 (MVDA).”

- Bloomberg Galaxy: Galaxy “Bloomberg Indices (BGCI)” page; Bloomberg terminal quote page.

- CoinDesk Indices: “CoinDesk Indices” homepage; “XBX” page; NYSE/ICE collaboration release referencing XBX.

- Vinter: “Making Smarter Crypto Indexes for ETF Issuers”; example single-asset reference rate page.

- Wilshire: FT Wilshire Digital Asset Index Series page; methodology PDF.
