Best Newsletters & Independent Analysts (2025)

Why Crypto Newsletters & Independent Analysts Matter in September 2025

In a market that never sleeps, the best crypto newsletters of 2025 help you filter noise, spot narratives early, and act with conviction. In one line: a great newsletter or analyst condenses complex on-chain, macro, and market structure data into clear, investable insights. Whether you’re a builder, long-term allocator, or active trader, pairing independent analysis with your own process can tighten feedback loops and reduce decision fatigue. In 2025, ETF flows, L2 expansion, AI infra plays, and global regulation shifts mean more data than ever. The picks below focus on consistency, methodology transparency, breadth (on-chain + macro + market), and practical takeaways, blending independent crypto analysts with data-driven research letters and easy-to-digest daily briefs.

This guide also covers crypto research newsletters, weekly on-chain analysis, and who to follow for credible signal over hype.

How We Picked (Methodology & Scoring)

- Scale & authority (30%): Reach, frequency, and signals that move or benchmark the market (ETF/flows, L2 metrics, sector heat).

- Security & transparency (25%): Clear disclosures, methodology notes, sources of data; links to security/research pages when applicable.

- Coverage (15%): On-chain + macro + sector breadth; BTC/ETH plus L2s, DeFi, RWAs, AI infra, and alt cycles.

- Costs (15%): Free tiers, reasonable paid options, and clarity on what’s gated.

- UX (10%): Digestible summaries, archives, and skim-ability.

- Support (5%): Reliability of delivery, community, and documentation.
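
To make the weighting concrete, here is a minimal sketch of how the six criteria above could be combined into a single score. The weights come from the list above; the 0-10 rating scale, the key names, and the example ratings are assumptions for illustration only, since the article does not publish per-criterion ratings.

```python
# Weights taken from the methodology list above (they sum to 100%).
WEIGHTS = {
    "scale_authority": 0.30,
    "security_transparency": 0.25,
    "coverage": 0.15,
    "costs": 0.15,
    "ux": 0.10,
    "support": 0.05,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (hypothetical 0-10 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    # Each criterion contributes its rating multiplied by its weight.
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for an imaginary newsletter, not real review data.
example_ratings = {
    "scale_authority": 8,
    "security_transparency": 9,
    "coverage": 7,
    "costs": 10,
    "ux": 8,
    "support": 6,
}

score = weighted_score(example_ratings)
print(round(score, 2))
```

Because the weights sum to 1, the result stays on the same 0-10 scale as the inputs, which keeps scores comparable across newsletters.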

Data sources used: official sites/newsletter hubs, research/security pages, and widely cited datasets (Glassnode, Coin Metrics, Kaiko, CoinShares) for cross-checks. Last updated September 2025.

Top 10 Crypto Newsletters & Independent Analysts in September 2025

1. Bankless — Best for Daily Crypto & Web3 Digests

- Why Use It: Bankless offers an approachable Daily Brief and deeper thematic series that balance top-of-funnel news with actionable context. If you want a consistent, skimmable daily pulse on crypto, DeFi, and Ethereum, this is a staple.

- Best For: Busy professionals, founders, new-to-intermediate investors, narrative spotters.

- Notable Features: Daily Brief; weekly/thematic issues; Ethereum-centric takes; large archive; clear disclosures.

- Fees Notes: Generous free tier; optional paid communities/products.

- Regions: Global

- Alternatives: The Defiant, Milk Road

- Consider If: You want daily breadth and a friendly voice more than deep quant.

2. The Defiant — Best for DeFi-Native Coverage

- Why Use It: The Defiant’s daily/weekly letters and DeFi Alpha cut straight to on-chain happenings, new protocols, and governance. Expect fast DeFi coverage with practical trader/investor context.

- Best For: DeFi power users, yield seekers, DAO/governance watchers.

- Notable Features: DeFi-focused daily; weekly recaps; Alpha letter; strong reporting cadence.

- Fees Notes: Free newsletter options; premium research tiers available.

- Regions: Global

- Alternatives: Bankless, Delphi Digital

- Consider If: Your focus is DeFi first and you want timely protocol insights.

3. Messari – Unqualified Opinions — Best for Institutional-Grade Daily Takes

- Why Use It: Messari’s daily market commentary and analyst notes are crisp, data-aware, and aligned with institutional workflows. Great for staying current on stablecoins, venture, and macro-market structure.

- Best For: Funds, analysts, founders, policy/market observers.

- Notable Features: Daily commentary; stablecoin weekly; venture weekly; archives; research ecosystem.

- Fees Notes: Free newsletters with deeper research available to paying customers.

- Regions: Global

- Alternatives: Delphi Digital, Coin Metrics SOTN

- Consider If: You value concise institutional context over tutorials.

4. Delphi Digital – Delphi Alpha — Best for Thematic Deep Dives

- Why Use It: Delphi marries thematic research (AI infra, gaming, L2s) with market updates and timely unlocks of longer reports. Great when you want conviction around medium-term narratives.

- Best For: Venture/allocators, founders, narrative investors.

- Notable Features: “Alpha” newsletter; report previews; cross-asset views; long-form research.

- Fees Notes: Free Alpha letter; premium research memberships available.

- Regions: Global

- Alternatives: Messari, The Defiant

- Consider If: You prefer thesis-driven research over daily headlines.

5. Glassnode – The Week On-Chain — Best for On-Chain Market Structure

- Why Use It: The industry’s flagship weekly on-chain letter explains BTC/ETH supply dynamics, holder cohorts, and cycle health with charts you’ll see cited everywhere.

- Best For: Traders, quants, macro/on-chain hybrid readers.

- Notable Features: Weekly on-chain; clear frameworks; historical cycle context; free subscription option.

- Fees Notes: Free newsletter; paid platform tiers for advanced metrics.

- Regions: Global

- Alternatives: Coin Metrics SOTN, Into The Cryptoverse

- Consider If: You want a single, rigorous on-chain read each week.

6. Coin Metrics – State of the Network — Best for Data-First Research Notes

- Why Use It: SOTN blends on-chain and market data into weekly essays on sectors like LSTs, stablecoins, and market microstructure. It’s authoritative, neutral, and heavily cited.

- Best For: Researchers, desk strategists, product teams.

- Notable Features: Weekly SOTN; special insights; transparent data lineage; archives.

- Fees Notes: Free newsletter; enterprise data products available.

- Regions: Global

- Alternatives: Glassnode, Kaiko Research

- Consider If: You want clean methodology and durable references.

7. Kaiko Research Newsletter — Best for Liquidity & Market Microstructure

- Why Use It: Kaiko’s research distills exchange liquidity, spreads, and derivatives structure across venues—useful for routing, slippage, and institutional execution context.

- Best For: Execution teams, market makers, advanced traders.

- Notable Features: Data-driven notes; liquidity dashboards; exchange/venue comparisons.

- Fees Notes: Free research posts; deeper tiers for subscribers/clients.

- Regions: Global

- Alternatives: Coin Metrics, Messari

- Consider If: You care about where liquidity actually is—and why it moves.

8. CoinShares – Digital Asset Fund Flows & Market Update — Best for ETF/Institutional Flow Watchers

- Why Use It: Weekly Fund Flows and macro wrap-ups help you track institutional positioning and sentiment—especially relevant in the ETF era.

- Best For: Allocators, macro traders, desk strategists.

- Notable Features: Monday flows report; Friday market update; AuM trends; asset/region breakdowns.

- Fees Notes: Free reports.

- Regions: Global (some content segmented by jurisdiction)

- Alternatives: Glassnode, Messari

- Consider If: You anchor decisions to capital flows and risk appetite.

9. Milk Road — Best for Quick, Conversational Daily Briefs

- Why Use It: A fast, witty daily that makes crypto easier to follow without dumbing it down. Great second screen with coffee—good for catching headlines, airdrops, and memes that matter.

- Best For: Busy professionals, newcomers, social-narrative trackers.

- Notable Features: Daily TL;DR; approachable tone; growing macro/AI crossover.

- Fees Notes: Free newsletter; sponsored placements disclosed.

- Regions: Global

- Alternatives: Bankless, The Defiant

- Consider If: You want speed and simplicity over deep quant.

10. Lyn Alden – Strategic Investment Newsletter — Best for Macro That Actually Impacts Crypto

- Why Use It: Not crypto-only—yet hugely relevant. Lyn’s macro letters cover liquidity regimes, fiscal/monetary shifts, and energy/AI cycles that drive risk assets, including BTC/ETH.

- Best For: Long-term allocators, macro-minded crypto investors.

- Notable Features: Free macro letters; archives; occasional crypto-specific sections; clear frameworks.

- Fees Notes: Free with optional premium research.

- Regions: Global

- Alternatives: Messari, Delphi Digital

- Consider If: You want a macro north star to frame your crypto thesis.

Decision Guide: Best By Use Case

- DeFi-native coverage: The Defiant

- Daily crypto pulse (friendly): Bankless or Milk Road

- Institutional-style daily notes: Messari – Unqualified Opinions

- Thematic, thesis-driven research: Delphi Digital

- On-chain cycle health: Glassnode – The Week On-Chain

- Data-first weekly (methodology): Coin Metrics – SOTN

- Liquidity & venue quality: Kaiko Research

- ETF & institutional positioning: CoinShares Fund Flows

- Macro framing for crypto: Lyn Alden

How to Choose the Right Crypto Newsletter/Analyst (Checklist)

- Region/eligibility: confirm signup availability and any paywall constraints.

- Breadth vs. depth: daily skim (news) vs. weekly deep dives (research).

- Data lineage: on-chain and market sources are named and reproducible.

- Fees & value: what’s free vs. gated; consider team needs (PM vs. research).

- UX & cadence: archives, searchable tags, consistent schedule.

- Disclosures: positions, sponsorships, methodology explained.

- Community/support: access to Q&A, office hours, or active forums.

- Red flags: vague performance claims; undisclosed affiliations.

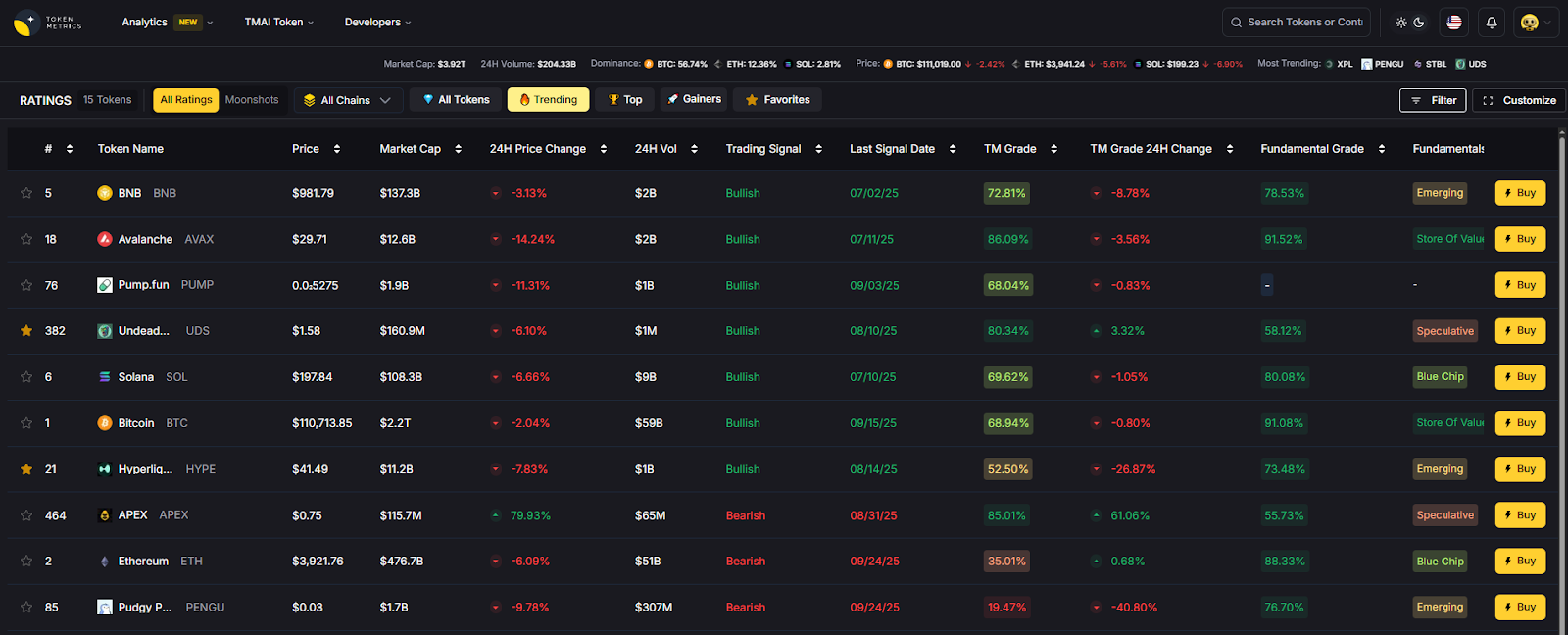

Use Token Metrics With Any Newsletter/Analyst

- AI Ratings to screen sectors/tokens surfacing in the letters you read.

- Narrative Detection to quantify momentum behind themes (L2s, AI infra, RWAs).

- Portfolio Optimization to size convictions with risk-aware allocations.

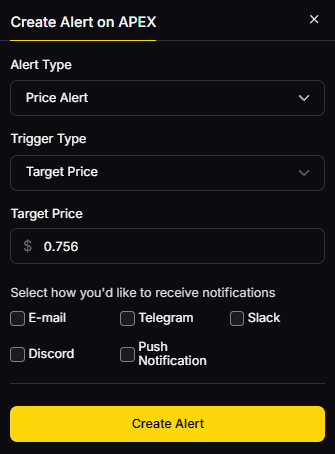

- Alerts/Signals to time entries/exits as narratives evolve.

Workflow: Research in your favorite newsletter → shortlist in Token Metrics → execute on your venue of choice → monitor with Alerts.

Start free trial

Security & Compliance Tips

- Enable 2FA on your email account and any research platform accounts.

- Verify newsletter domains and unsubscribe pages to avoid phishing.

- Respect KYC/AML and regional rules when acting on research.

- For RFQs/execution, confirm venue liquidity and slippage.

- Separate reading devices from hot wallets; practice wallet hygiene.
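
The domain-verification tip above can be partly automated. The sketch below checks whether a link points to a host on a personal allowlist before you click through. The function name `is_trusted_link` is hypothetical, and the allowlist entries are illustrative examples drawn from the source notes in this article; replace them with domains you have verified yourself. It does not catch lookalike (homoglyph) domains, so treat it as a first filter rather than a complete defense.

```python
from urllib.parse import urlsplit

# Illustrative allowlist (assumption); maintain your own verified list.
TRUSTED_DOMAINS = {"glassnode.com", "delphidigital.io", "messari.substack.com"}


def is_trusted_link(url: str, trusted: set[str] = TRUSTED_DOMAINS) -> bool:
    """Return True only for https links whose host is a trusted domain
    or a subdomain of one."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # hostname drops any "user@" prefix and port, and may be None.
    host = (parts.hostname or "").lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in trusted)


print(is_trusted_link("https://insights.glassnode.com/the-week-onchain"))
print(is_trusted_link("https://glassnode.com.evil.example/login"))
```

Matching on the full host suffix, rather than a substring, is what rejects addresses that merely contain a trusted name somewhere in the URL.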

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Treating a newsletter as a signal service—use it as input, not output.

- Ignoring methodology and disclosures.

- Chasing every narrative without a sizing framework.

- Subscribing to too many sources—prioritize quality over quantity.

- Not validating claims with primary data (on-chain/flows).

FAQs

What makes a crypto newsletter “best” in 2025?

Frequency, methodological transparency, and the ability to translate on-chain/macro signals into practical takeaways. Bonus points for archives and clear disclosures.

Are the top newsletters free or paid?

Most offer strong free tiers (daily or weekly). Paid tiers typically unlock deeper research, models, or community access.

Do I need both on-chain and macro letters?

Ideally yes—on-chain explains market structure; macro sets the regime (liquidity, rates, growth). Pairing both creates a more complete view.

How often should I read?

Skim dailies (Bankless/Milk Road) for awareness; reserve time weekly for deep dives (Glassnode/Coin Metrics/Delphi).

Can newsletters replace analytics tools?

No. Treat them as curated insight. Validate ideas with your own data and risk framework (Token Metrics can help).

Which is best for ETF/flows?

CoinShares’ weekly Fund Flows is the go-to for institutional positioning, complemented by Glassnode/Coin Metrics on structure.

Conclusion + Related Reads

If you want a quick pulse, pick a daily (Bankless or Milk Road). For deeper conviction, add one weekly on-chain (Glassnode or Coin Metrics) and one thesis engine (Delphi or Messari). Layer macro (Lyn Alden) to frame the regime, and use Token Metrics to quantify what you read and act deliberately.

Related Reads:

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025

Sources & Update Notes

We reviewed each provider’s official newsletter hub, research pages, and recent posts to confirm availability, cadence, and focus. Updated September 2025 with the latest archives and program pages. Key official references: Bankless newsletter hub; The Defiant newsletter page; Messari newsletter hub and Unqualified Opinions pages; Delphi Digital newsletter page and research site; Glassnode Week On-Chain hub and latest issue; Coin Metrics SOTN hub and archive; Kaiko research/newsletter hub and company site; CoinShares Fund Flows & Research hubs (US/global) and latest weekly example; Milk Road homepage and social proof; Lyn Alden newsletter/archive pages and 2025 issues.

Create Your Free Token Metrics Account
