Leading Metaverse Platforms (2025)

Why Metaverse Platforms Matter in September 2025

The metaverse has moved from hype to practical utility: brands, creators, and gamers now use metaverse platforms to host events, build persistent worlds, and monetize experiences. In one line, a metaverse platform is a shared, real-time 3D world (or network of worlds) where users can create, socialize, and sometimes own digital assets. This matters in 2025 because cross-platform tooling (web, mobile, VR), better creator economics, and smoother wallet flows are making virtual worlds useful rather than merely novel. Whether you’re a creator monetizing UGC, a brand running virtual activations, or a gamer seeking interoperable avatars and items, this guide compares the leading platforms and helps you pick the right fit. Along the way, it also covers web3 ownership models, event and creation tools, and NFT avatars where relevant.

How We Picked (Methodology & Scoring)

- Liquidity (30%): User activity, creator-economy health, and depth of tradable assets (worlds, items).

- Security (25%): Platform transparency, custody/ownership model, documentation, audits, and brand safeguards.

- Coverage (15%): Breadth of supported devices (web/mobile/XR), toolchains (Unity, SDKs), and asset standards.

- Costs (15%): Fees on mints, marketplace trades, land, or subscriptions; fair creator revenue splits.

- UX (10%): Onboarding, performance, no-code tools, creator pipelines.

- Support (5%): Docs, community, and partner success resources.

Data sources: official product/docs pages, security/transparency pages, and (for cross-checks) widely cited market datasets. Last updated September 2025.
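The article does not publish per-platform scores, so the following is only a minimal sketch of how the six weights above could combine into a single number. It assumes each criterion is scored on a hypothetical 0 to 10 scale; `WEIGHTS_PCT` mirrors the listed percentages, while `weighted_score` and the example scores are illustrative and are not the authors' actual scoring tool.

```python
# Minimal sketch: combine per-criterion scores using the methodology weights.
# Assumption: each criterion is scored 0-10; the example scores are hypothetical.

WEIGHTS_PCT = {
    "liquidity": 30,
    "security": 25,
    "coverage": 15,
    "costs": 15,
    "ux": 10,
    "support": 5,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Return the weighted 0-10 score for one platform."""
    if sum(WEIGHTS_PCT.values()) != 100:
        raise ValueError("weights must sum to 100%")
    missing = WEIGHTS_PCT.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name, value in scores.items():
        if not 0 <= value <= 10:
            raise ValueError(f"{name} score {value} is outside 0-10")
    # With integer scores, integer percentages keep the sum exact until the final division.
    return sum(WEIGHTS_PCT[k] * scores[k] for k in WEIGHTS_PCT) / 100


example = {"liquidity": 8, "security": 6, "coverage": 9, "costs": 7, "ux": 9, "support": 5}
print(weighted_score(example))  # prints 7.45
```

Because liquidity and security together carry 55% of the weight, a platform that is strong on those two can outrank one with noticeably better UX or support.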

Top 10 Metaverse Platforms in September 2025

1. Decentraland — Best for open, browser-based social worlds

- Why Use It: One of the earliest browser-native 3D virtual worlds with user-owned land and a strong events culture (conferences, fashion, art). DAO-governed features and open tooling make it a steady choice for brand activations and community hubs. (Source: Decentraland)

- Best For: Web-first events; brand galleries; creator storefronts; DAO communities.

- Notable Features: Land & wearables as NFTs; events calendar; builder & SDK; DAO governance. (Source: Decentraland)

- Fees/Notes: Marketplace fees on assets vary; gas applies for on-chain actions.

- Regions: Global (browser-based).

- Consider If: You want open standards and long-running community tooling over cutting-edge graphics.

- Alternatives: The Sandbox, Spatial.

2. The Sandbox — Best for branded IP and UGC game experiences

- Why Use It: A UGC-driven game world with heavy brand participation and seasonal campaigns that reward play and creation. Strong toolchain (VoxEdit, Game Maker) and high-profile partnerships attract mainstream audiences. (Sources: The Sandbox; Vogue Business)

- Best For: Brands/IP holders; creators building mini-games; seasonal events.

- Notable Features: No-code Game Maker; avatar collections; brand hubs; seasonal reward pools. (Source: The Sandbox)

- Fees/Notes: Asset and land marketplace fees; seasonal reward structures.

- Regions: Global.

- Consider If: You want strong IP gravity and structured events more than fully open worldbuilding.

- Alternatives: Decentraland, Upland.

3. Somnium Space — Best for immersive VR worldbuilding

- Why Use It: A persistent, open VR metaverse with land ownership and deep creator tools, great for immersive meetups, galleries, and simulations. Hardware initiatives (e.g., VR1) signal a VR-first roadmap. (Source: somniumspace.com)

- Best For: VR-native communities; immersive events; simulation builds.

- Notable Features: Persistent VR world; land & parcels; robust creator/SDK docs; hardware ecosystem. (Source: somniumspace.com)

- Fees/Notes: Marketplace and gas fees apply for on-chain assets.

- Regions: Global.

- Consider If: VR performance and hardware availability fit your audience.

- Alternatives: Spatial, Mona.

4. Voxels — Best for lightweight, linkable spaces

- Why Use It: A voxel-style world (formerly Cryptovoxels) known for easy, link-and-share parcels, fast event setups, and a strong indie creator scene. Great for galleries and casual meetups. (Source: Voxels)

- Best For: NFT galleries; indie events; rapid prototyping.

- Notable Features: Parcels & islands; simple building; events; browser-friendly access. (Source: Voxels)

- Fees/Notes: Asset/parcel markets with variable fees; gas for on-chain actions.

- Regions: Global.

- Consider If: You prefer simplicity over realism and AAA graphics.

- Alternatives: Hyperfy, Oncyber.

5. Spatial — Best for cross-device events and no-code worlds

- Why Use It: Polished, cross-platform creation: publish to web, mobile, and XR; strong no-code templates plus a Unity SDK for advanced teams. Used by creators, educators, and brands for scalable events. (Source: Spatial)

- Best For: Brand activations; classrooms & training; cross-device showcases.

- Notable Features: No-code world templates; Unity SDK; web/mobile/XR publishing; multiplayer. (Source: Spatial)

- Fees/Notes: Freemium with paid tiers/features; no crypto requirement to start.

- Regions: Global.

- Consider If: You want frictionless onboarding and device coverage without mandatory wallets.

- Alternatives: Mona, Somnium Space.

6. Mona (Monaverse) — Best for high-fidelity art worlds

- Why Use It: Curated, visually striking worlds favored by digital artists and institutions; interoperable assets and creator-forward tools make it ideal for exhibitions and premium experiences. (Source: monaverse.com)

- Best For: Galleries & museums; premium showcases; art-led communities.

- Notable Features: High-fidelity scenes; curated drops; creator tools; marketplace. (Source: monaverse.com)

- Fees/Notes: Marketplace fees for assets; gas where applicable.

- Regions: Global.

- Consider If: You prioritize aesthetics and curation over mass-market gamification.

- Alternatives: Spatial, Oncyber.

7. Oncyber — Best for instant NFT galleries & creator “multiverses”

- Why Use It: One of the quickest ways to spin up personal worlds or galleries that showcase NFTs, with simple hosting and shareable links; now expanding its creator tools (Studio) for interactive spaces. (Source: oncyber.io)

- Best For: Artists/collectors; quick showcases; brand micro-experiences.

- Notable Features: One-click galleries; wallet connect; customizable spaces; creator studio. (Source: oncyber.io)

- Fees/Notes: Free to start; marketplace/transaction fees where applicable.

- Regions: Global.

- Consider If: You need speed and simplicity, not complex game loops.

- Alternatives: Voxels, Mona.

8. Nifty Island — Best for creator-led islands & social play

- Why Use It: A free-to-play social game world where communities build islands, run quests, and bring compatible NFTs in-world; expanding UGC features and events. (Source: Nifty Island)

- Best For: Streamers & communities; UGC map makers; social gaming guilds.

- Notable Features: Island builder; quests; NFT avatar/item support; leaderboards. (Source: Nifty Island)

- Fees/Notes: Free to play; optional marketplace economy.

- Regions: Global.

- Consider If: You want a fun, social loop with creator progression over real-estate speculation.

- Alternatives: Worldwide Webb, The Sandbox.

9. Upland — Best for real-world-mapped city building

- Why Use It: A city-builder mapped to real-world geographies, emphasizing digital property, development, and an open economy; it is popular with strategy players and brand pop-ups. (Source: Upland)

- Best For: Property flippers; city-sim fans; brand tie-ins linked to real locations.

- Notable Features: Real-world maps; property trading; dev APIs; avatar integrations. (Source: Upland)

- Fees/Notes: Marketplace fees; token/withdrawal rules vary by region.

- Regions: Global (availability varies).

- Consider If: You want geo-tied gameplay and an economy centered on property.

- Alternatives: The Sandbox, Decentraland.

10. Otherside — Best for large-scale, interoperable metaRPGs

- Why Use It: Yuga Labs’ in-development metaRPG aims for massive, real-time multiplayer with NFT interoperability, suited to large communities seeking events and game loops at scale. (Source: otherside.xyz)

- Best For: Big communities; interoperable avatar projects; large-scale events.

- Notable Features: MetaRPG vision; NFT-native design; real-time massive sessions. (Source: otherside.xyz)

- Fees/Notes: Economy details evolving; expect on-chain transactions for assets.

- Regions: Global (under development; access windows vary).

- Consider If: You’re comfortable with active development and staged releases.

- Alternatives: Nifty Island, The Sandbox.

Decision Guide: Best By Use Case

- Regulated/corporate events, low friction: Spatial

- Open web3 land & wearables: Decentraland

- Brand/IP campaigns & UGC seasons: The Sandbox

- High-fidelity art exhibitions: Mona

- VR-native immersion: Somnium Space

- Instant NFT galleries: Oncyber

- Social UGC gameplay: Nifty Island

- Geo-tied city building/economy: Upland

- Massive interoperable metaRPG (developing): Otherside

- Lightweight, link-and-share worlds: Voxels

How to Choose the Right Metaverse Platform (Checklist)

- Confirm region/eligibility (and any content or cash-out restrictions).

- Match your use case: events vs. galleries vs. UGC games vs. VR immersion.

- Check device coverage (web, mobile, XR) and tooling (no-code, Unity/SDK).

- Review ownership/custody of assets; does it require a wallet?

- Compare costs: land, mints, marketplace fees, subscriptions.

- Evaluate performance & UX for your target hardware and connection speeds.

- Look for support/docs and active community channels.

- Red flags: locked ecosystems with poor export options; unclear TOS on IP/royalties.
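To make the "compare costs" step concrete, here is a minimal sketch that computes what a seller keeps from a secondary-market sale. The fee rates, the flat network (gas) cost, and the `FeeSchedule`/`net_proceeds` names are hypothetical; check each platform's current fee pages for real values, since marketplace fees and royalties vary by platform and gas fluctuates.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class FeeSchedule:
    """Hypothetical fee terms for one marketplace (not real platform rates)."""
    marketplace_bps: int  # platform fee in basis points (100 bps = 1%)
    royalty_bps: int      # creator royalty deducted from a resale, in basis points
    network_fee: Decimal  # flat on-chain (gas) cost per sale, same currency as price


def net_proceeds(price: Decimal, fees: FeeSchedule) -> Decimal:
    """Amount the seller keeps after percentage fees and the flat network fee."""
    pct_fees = price * (fees.marketplace_bps + fees.royalty_bps) / Decimal(10_000)
    return price - pct_fees - fees.network_fee


# Two made-up schedules: lower percentage fees plus gas vs. higher fees and no gas.
platform_a = FeeSchedule(marketplace_bps=250, royalty_bps=500, network_fee=Decimal("1.50"))
platform_b = FeeSchedule(marketplace_bps=500, royalty_bps=500, network_fee=Decimal("0"))

for price in (Decimal("10"), Decimal("100")):
    print(price, net_proceeds(price, platform_a), net_proceeds(price, platform_b))
```

Which schedule is cheaper depends on the sale price: at a price of 10, schedule B leaves the seller more (9 vs. 7.75), while at 100, schedule A does (91 vs. 90). `Decimal` is used so currency amounts are not distorted by binary floating-point rounding.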

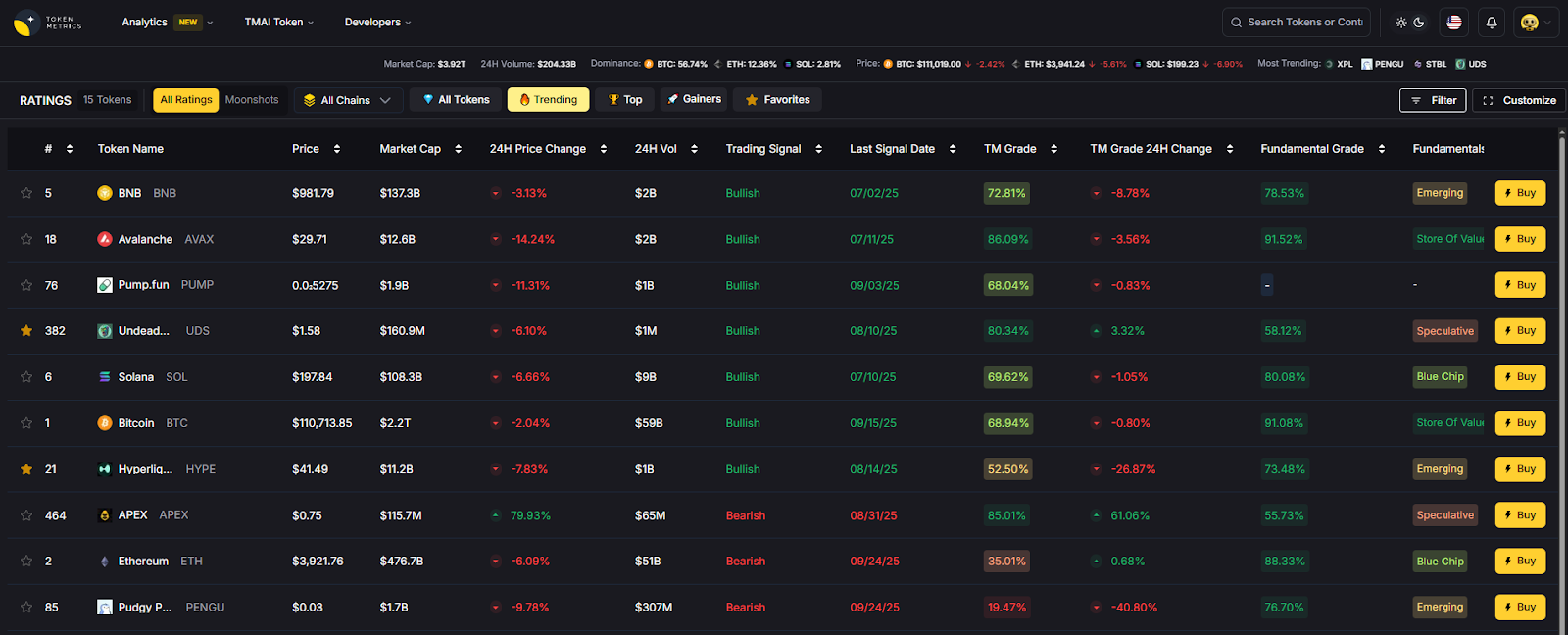

Use Token Metrics With Any Metaverse Platform

- AI Ratings to screen tokens and ecosystems tied to these platforms.

- Narrative Detection to spot momentum in metaverse, gaming, and creator-economy sectors.

- Portfolio Optimization to balance exposure across platform tokens and gaming assets.

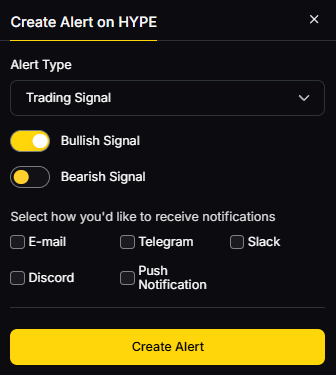

- Alerts & Signals to monitor entries/exits as narratives evolve.

Workflow: Research on Token Metrics → Select a platform/asset → Execute in your chosen world → Monitor with alerts.

Start a free Token Metrics trial.

Security & Compliance Tips

- Enable 2FA on marketplaces/accounts; safeguard seed phrases if using wallets.

- Separate hot vs. cold storage for valuable assets; use hardware wallets where appropriate.

- Comply with KYC/AML rules on fiat on- and off-ramps, and respect regional restrictions.

- Use official clients/links only; beware spoofed mints and fake airdrops.

- For events/UGC, implement moderation and IP policies before going live.
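The "use official clients/links only" tip can be partly automated. Below is a minimal sketch that accepts a URL only if it uses HTTPS and its hostname is an allowlisted domain or a subdomain of one. The allowlist holds just a few domains named in this article's sources (somniumspace.com, monaverse.com, oncyber.io, otherside.xyz) as an illustration; `OFFICIAL_DOMAINS` and `is_official_link` are hypothetical names, and you should populate the list yourself from domains you have verified. A check like this does not replace reviewing what a wallet is asked to sign.

```python
from urllib.parse import urlparse

# Illustrative allowlist only: populate it with domains you have verified yourself.
OFFICIAL_DOMAINS = {"somniumspace.com", "monaverse.com", "oncyber.io", "otherside.xyz"}


def is_official_link(url: str) -> bool:
    """True if url is HTTPS and its host is an allowlisted domain or a subdomain of one."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False
    host = parsed.hostname  # lowercased by urlparse; any "user@" prefix is excluded
    if host is None:
        return False
    host = host.rstrip(".")  # treat "oncyber.io." the same as "oncyber.io"
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


for link in [
    "https://oncyber.io/studio",
    "https://oncyber.io.claim-airdrop.example/mint",  # lookalike: real name used as a prefix
    "https://oncyber.io@phish.example/",              # userinfo trick: actual host is phish.example
    "http://oncyber.io",                              # not HTTPS
]:
    print(link, is_official_link(link))
```

Matching on the parsed hostname, rather than searching the raw string for a brand name, is what rejects the lookalike and userinfo tricks above; anything not on the allowlist, including misspelled domains, is rejected by default.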

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Buying land/assets before validating actual foot traffic or event needs.

- Ignoring device compatibility (mobile/XR) for your audience.

- Underestimating build time—even “no-code” worlds need iteration.

- Skipping wallet safety and permissions review.

- Chasing hype without checking fees and creator revenue splits.

FAQs

What is a metaverse platform?

A shared, persistent 3D environment where users can create, socialize, and sometimes own assets (via wallets/NFTs). Some focus on events and galleries; others on UGC games or VR immersion.

Do I need crypto to use these platforms?

Not always. Spatial and some other worlds allow non-crypto onboarding. Web3-native platforms often require wallets for asset ownership and trading.

Which platform is best for branded events?

The Sandbox (IP partnerships, seasons) and Spatial (cross-device ease) are top picks; Decentraland also hosts large community events.

What about VR?

Somnium Space is VR-first; Spatial also supports XR publishing. Confirm device lists and performance requirements.

Are assets portable across worlds?

Interoperability is improving (avatars, file formats), but true portability varies. Always check import/export support and license terms.

How do these platforms make money?

Typically via land sales, marketplace fees, subscriptions, or seasonal passes/rewards. Review fee pages and terms before committing.

What risks should I consider?

Platform changes, token volatility, phishing, and evolving terms. Start small, use official links, and secure wallets.

Conclusion + Related Reads

If you’re brand-led or IP-driven, start with The Sandbox or Spatial. For open web3 communities and DAO-style governance, consider Decentraland. Creators seeking premium visuals may prefer Mona, while Somnium Space fits VR die-hards. Social UGC gamers can thrive on Nifty Island; geo-builders on Upland; galleries on Oncyber; lightweight events on Voxels; and large NFT communities should watch Otherside as it develops.

Related Reads:

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025

Sources & Update Notes

We validated claims on official product/docs pages and public platform documentation, and cross-checked positioning with widely cited datasets when needed. Updated September 2025; we’ll refresh as platforms ship major features or change terms.

- Decentraland: Home, Docs, DAO pages.

- The Sandbox: Home, Avatars/Seasons, brand news (The Sandbox; Vogue Business).

- Somnium Space: Home, Docs, VR1 store.

- Voxels: Home/Parcels (formerly Cryptovoxels).

- Spatial: Home, categories, Unity SDK.

- Mona: Home, brand copy.

- Oncyber: Home, Studio.

- Nifty Island: Home, FAQs/updates (Nifty Island; Epic Games Store).

- Upland: Home (product), community channels.

- Otherside: Home, Yuga overview.

Create Your Free Token Metrics Account
