Top Derivatives Platforms for Futures & Options (2025)

If you trade crypto futures and options, picking the right derivatives platforms can make or break your results. In this guide, we sort the top exchanges and on-chain venues by liquidity, security, costs, and product depth so you can match your strategy to the right venue—fast. You’ll find quick answers near the top, deeper context below, and links to official resources. We cover crypto futures, crypto options, and perpetual swaps for both centralized and decentralized platforms.

Quick answer: The best platform for you depends on region/eligibility, contract types (perps, dated futures, options), fee structure, margin system, and support quality. Below we score each provider and map them to common use cases.

How We Picked (Methodology & Scoring)

We scored each provider using the weights below (0–100 total):

- Liquidity (30%) – Depth, spreads, and market resilience during volatility.

- Security (25%) – Operational history, custody model, risk controls, and disclosures.

- Coverage (15%) – Contract variety (BTC/ETH majors, alt perps, dated futures, options).

- Costs (15%) – Trading/withdrawal fees, funding rates context, rebates.

- UX (10%) – Execution workflow, APIs, mobile, analytics/tools.

- Support (5%) – Docs, status pages, client service, institutional access.

Sources: Official platform pages, help centers, and product docs; public disclosures and product catalogs; our hands-on review and long-term coverage of derivatives venues. Last updated September 2025.

Top 10 Derivatives Platforms in September 2025

Each summary includes why it stands out, who it’s best for, and what to consider. Always check regional eligibility.

1. Binance Futures — Best for global liquidity at scale Binance+2Binance+2

Why Use It: Binance Futures offers some of the deepest books and widest perp listings, with robust APIs and portfolio margin. It’s a go-to for active traders who need speed and breadth.

Best For: High-frequency/active traders; systematic/API users; altcoin perp explorers.

Notable Features: Perpetuals and dated futures, options module, copy trading, portfolio margin.

Consider If: You need U.S.-regulated access—availability may vary by region.

Alternatives: OKX, Bybit.

2. OKX — Best for breadth + toolset OKX+2OKX+2

Why Use It: Strong product coverage (perps, dated futures, options) with solid liquidity and a polished interface. Good balance of features for discretionary and API traders.

Best For: Multi-instrument traders; users wanting options + perps under one roof.

Notable Features: Unified account, options chain, pre-market perps, apps and API.

Consider If: Region/eligibility and KYC rules may limit access.

Alternatives: Binance Futures, Bybit.

3. Bybit Derivatives — Best for active perps traders Bybit+2Bybit+2

Why Use It: Competitive fees, broad perp markets, solid tooling, and a large user base make Bybit attractive for day traders and swing traders alike.

Best For: Perps power users; copy-trading and mobile-first traders.

Notable Features: USDT/USDC coin-margined perps, options, demo trading, OpenAPI.

Consider If: Check your local rules—service availability varies by region.

Alternatives: Binance Futures, Bitget.

4. Deribit — Best for BTC/ETH options liquidity deribit.com+1

Why Use It: Deribit is the reference venue for crypto options on BTC and ETH, with deep liquidity across maturities and strikes; it also offers futures.

Best For: Options traders (directional, spreads, volatility) and institutions.

Notable Features: Options analytics, block trading tools, test environment, 24/7 support.

Consider If: Regional access may be limited; primarily majors vs. broad alt coverage.

Alternatives: Aevo (on-chain), CME (regulated futures/options).

5. CME Group — Best for U.S.-regulated institutional futures Reuters+3CME Group+3CME Group+3

Why Use It: For institutions needing CFTC-regulated access, margin efficiency, and robust market infrastructure, CME is the standard for BTC/ETH futures and options.

Best For: Funds, corporates, and professionals with FCM relationships.

Notable Features: Standard and micro contracts, options, benchmarks, data tools.

Consider If: Requires brokerage/FCM onboarding; no altcoin perps.

Alternatives: Coinbase Derivatives (U.S.), Kraken Futures (institutions).

6. dYdX — Best decentralized perps (self-custody) dYdX Chain+2dydx.xyz+2

Why Use It: dYdX v4 runs on its own chain with on-chain settlement and pro tooling. Traders who want non-custodial perps and transparent mechanics gravitate here.

Best For: DeFi-native traders; users prioritizing self-custody and transparency.

Notable Features: On-chain orderbook, staking & trading rewards, API, incentives.

Consider If: Wallet/key management and gas/network dynamics add complexity.

Alternatives: Aevo (options + perps), GMX (alt DEX perps).

7. Kraken Futures — Best for compliance-minded access incl. U.S. roll-out Kraken+2Kraken+2

Why Use It: Kraken offers crypto futures for eligible regions, with a growing U.S. footprint via Kraken Derivatives US and established institutional services.

Best For: Traders who value brand trust, support, and clear documentation.

Notable Features: Pro interface, institutional onboarding, status and support resources.

Consider If: Product scope and leverage limits can differ by jurisdiction.

Alternatives: Coinbase Derivatives, CME.

8. Coinbase Derivatives — Best for U.S.-regulated access + education AP News+3Coinbase+3Coinbase+3

Why Use It: NFA-supervised futures for eligible U.S. customers and resources that explain contract types. Outside the U.S., Coinbase also offers derivatives via separate entities.

Best For: U.S. traders needing regulated access; Coinbase ecosystem users.

Notable Features: Nano BTC/ETH contracts, 24/7 trading, learn content, FCM/FCM-like flows.

Consider If: Contract lineup is narrower than global offshore venues.

Alternatives: CME (institutional), Kraken Futures.

9. Bitget — Best for alt-perps variety + copy trading Bitget+3Bitget+3Bitget+3

Why Use It: Bitget emphasizes a wide perp catalog, social/copy features, and frequent product updates—useful for traders rotating across narratives.

Best For: Altcoin perp explorers; copy-trading users; mobile-first traders.

Notable Features: USDT/USDC-margined perps, copy trading, frequent listings, guides.

Consider If: Check eligibility and risk—breadth can mean uneven depth in tail assets.

Alternatives: Bybit, OKX.

10. Aevo — Best on-chain options + perps with unified margin Aevo Documentation+3Aevo+3Aevo Documentation+3

Why Use It: Aevo runs a custom L2 (OP-stack based) and offers options, perps, and pre-launch futures with unified margin—bridging CEX-like speed with on-chain settlement.

Best For: Options/perps traders who want DeFi custody with pro tools.

Notable Features: Unified margin, off-chain matching + on-chain settlement, pre-launch markets, detailed docs and fee specs.

Consider If: On-chain workflows (bridging, gas) and product scope differ from CEXs.

Alternatives: Deribit (options liquidity), dYdX (perps DEX).

Decision Guide: Best By Use Case

- Deep global perp liquidity: Binance Futures, OKX, Bybit. Binance+2OKX+2

- BTC/ETH options liquidity: Deribit. deribit.com

- U.S.-regulated futures (retail/pro): CME (via FCMs), Coinbase Derivatives, Kraken Futures (jurisdiction dependent). Kraken+3CME Group+3CME Group+3

- Self-custody perps (on-chain): dYdX. dYdX Chain

- On-chain options + unified margin: Aevo. Aevo Documentation

- Altcoin perps + copy trading: Bitget, Bybit. Bitget+1

- Education + tight CEX ecosystem: Coinbase Derivatives. Coinbase

How to Choose the Right Platform (Checklist)

- Region & Eligibility: Confirm KYC/AML rules and whether your country is supported.

- Coverage & Liquidity: Check your contract list (majors vs. alts), order-book depth, and spreads.

- Custody & Security: Decide CEX custody vs. self-custody (DEX). Review incident history and controls.

- Costs: Compare maker/taker tiers, funding mechanics, and rebates across your actual volumes.

- Margin & Risk: Portfolio margin availability, liquidation engine design, circuit breakers.

- UX & API: If you automate, verify API limits and docs; assess mobile/desktop parity.

- Support & Docs: Look for status pages, live chat, and clear product specs.

- Red flags: Vague disclosures; no status page; no detail on risk/liquidation systems.

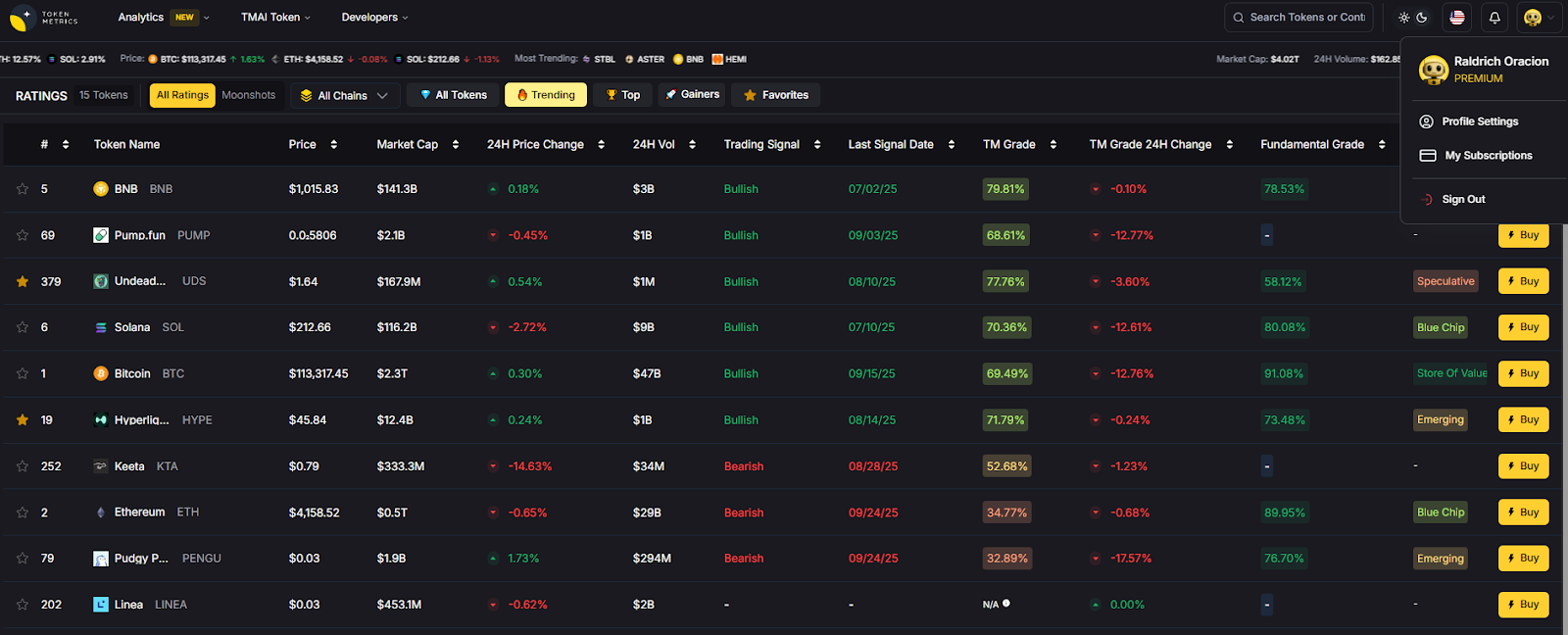

Use Token Metrics With Any Derivatives Platform

- AI Ratings & Signals: Spot changing trends before the crowd.

- Narrative Detection: Track sectors and catalysts that may drive perp flows.

- Portfolio Optimization: Size positions with risk-aware models and scenario tools.

- Alerts: Get notified on grade moves, momentum changes, and volatility spikes.

Workflow (1–4): Research with Token Metrics → Pick venue(s) above → Execute perps/options → Monitor with alerts and refine.

Primary CTA: Start free trial

Security & Compliance Tips

- Enable 2FA, withdrawal allow-lists, and API key scopes/rotations.

- For DEXs, practice wallet hygiene (hardware wallet, clean approvals).

- Use proper KYC/AML where required; understand tax obligations.

- If using options or leverage, set pre-trade max loss and test position sizing.

- For block/OTC execution, compare quotes and confirm settlement instructions.

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Trading perps without understanding funding and how it impacts P&L.

- Ignoring region restrictions and onboarding to non-eligible venues.

- Oversizing positions without a liquidation buffer.

- Mixing custodial and self-custodial workflows without a key plan.

- Chasing low-liquidity alts where slippage can erase edge.

FAQs

What’s the difference between perps and traditional futures?

Perpetual swaps have no expiry, so you don’t roll contracts; instead, a funding rate nudges perp prices toward spot. Dated futures expire and may require roll management. Binance+1

Where can U.S. traders access regulated crypto futures?

Through CFTC/NFA-supervised venues like CME (via FCMs) and Coinbase Derivatives for eligible customers; availability and contract lists vary by account type. CME Group+2Coinbase+2

What’s the leading venue for BTC/ETH options liquidity?

Deribit has long been the primary market for BTC/ETH options liquidity used by pros and market makers. deribit.com

Which DEXs offer serious perps trading?

dYdX is purpose-built for on-chain perps with a pro workflow; Aevo blends options + perps with unified margin on a custom L2. dYdX Chain+1

How do I keep fees under control?

Use maker orders where possible, seek fee tier discounts/rebates, and compare funding rates over your expected holding time. Each venue publishes fee schedules and specs.

Conclusion + Related Reads

If you want deep global perps, start with Binance, OKX, or Bybit. For BTC/ETH options, Deribit remains the benchmark. If you need U.S.-regulated access, look at CME via an FCM or Coinbase Derivatives; Kraken is expanding its futures footprint. Prefer self-custody? dYdX and Aevo are solid on-chain choices. Match the venue to your region, contracts, and risk process—then let Token Metrics surface signals and manage the watchlist.

Related Reads

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025

Create Your Free Token Metrics Account

.png)

%201.svg)

%201.svg)

%201.svg)

.svg)

.png)