Top Influencers/KOLs (Twitter, YouTube, TikTok) 2025

Why Crypto Influencers & KOLs Matter in September 2025

The flood of information in crypto makes trusted voices indispensable. The top crypto influencers of 2025 help you filter noise, spot narratives early, and pressure-test ideas across Twitter/X, YouTube, and TikTok. This guide ranks the most useful creators and media brands for research, education, and market awareness, whether you’re an individual investor, a builder, or an institution.

Definition: A crypto influencer/KOL (key opinion leader) is a creator or publication with outsized reach and a demonstrated ability to shape attention, educate audiences, and surface on-chain or market insights. We emphasize track record, transparency, and multi-platform presence.

How We Picked (Methodology & Scoring)

- Scale & reach (30%): Multi-platform presence; consistent engagement on X/Twitter, YouTube, and/or TikTok.

- Security & integrity (25%): Clear disclosures, brand reputation, and risk-aware education (no guaranteed-profit claims).

- Coverage & depth (15%): Breadth of topics (macro, on-chain, DeFi, trading, security) and depth of analysis.

- Costs (15%): Free content availability; paid tiers optional and transparent.

- UX (10%): Clarity, production quality, and beginner-friendliness.

- Support (5%): Community resources (newsletters, podcasts, docs, learning hubs).
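As a rough illustration of how the weights above combine, here is a minimal sketch of a weighted composite score. The 0-10 sub-score scale, the function name `composite_score`, and the example values are assumptions made for illustration; the article does not publish per-creator sub-scores or state the scale it used.

```python
# Weights taken from the methodology list above; they sum to 100%.
WEIGHTS = {
    "scale_reach": 0.30,
    "security_integrity": 0.25,
    "coverage_depth": 0.15,
    "costs": 0.15,
    "ux": 0.10,
    "support": 0.05,
}


def composite_score(sub_scores: dict[str, float]) -> float:
    """Return the weighted sum of per-criterion scores.

    Assumes each sub-score is on a 0-10 scale (an assumption, not
    something the methodology states).
    """
    missing = WEIGHTS.keys() - sub_scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * sub_scores[name] for name in WEIGHTS)


# Hypothetical sub-scores for an imaginary creator, not real ratings.
example = {
    "scale_reach": 8,
    "security_integrity": 9,
    "coverage_depth": 7,
    "costs": 10,
    "ux": 8,
    "support": 6,
}

print(round(composite_score(example), 2))  # 8.3
```

Because scale and integrity together carry 55% of the weight, a creator with a large audience but weak disclosures would still score poorly under this scheme.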

Data sources: official websites, channels, and about pages; we cross-checked scale and focus with widely cited datasets when needed. Last updated September 2025.

Top 10 Crypto Influencers & KOLs in September 2025

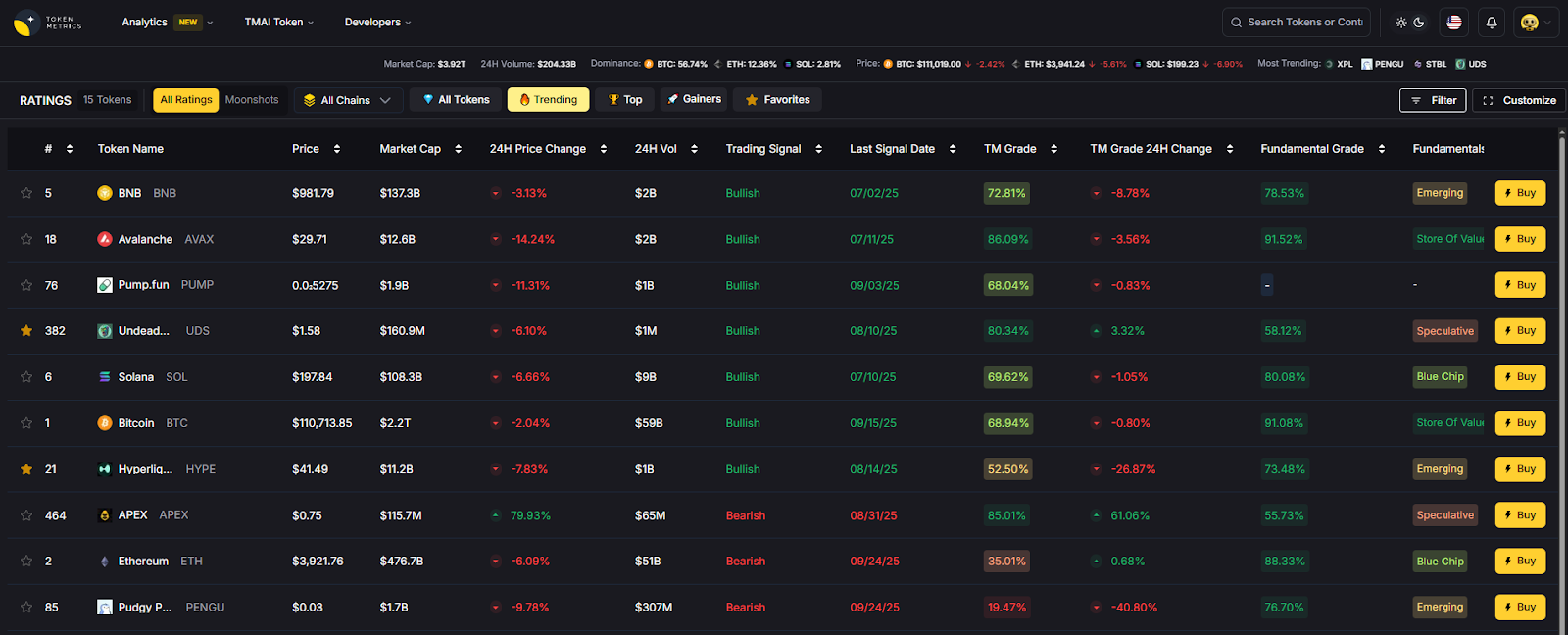

1. Token Metrics — Best for AI-driven research + multi-format education

Why Use It: Token Metrics combines human analysts with AI ratings and on-chain/quant models, packaging insights via YouTube shows, tutorials, and research articles. The mix of data-driven screening and narrative detection makes it a strong daily driver for both retail and pro users.

Best For: Retail investors, swing traders, token research teams, and institutions seeking systematic signals.

Notable Features: AI Ratings & Signals; narrative heat detection; portfolio tooling; explainers and live shows.

Fees Notes: Free videos/reports; paid analytics tiers available.

Regions: Global.

Alternatives: Coin Bureau, Bankless.

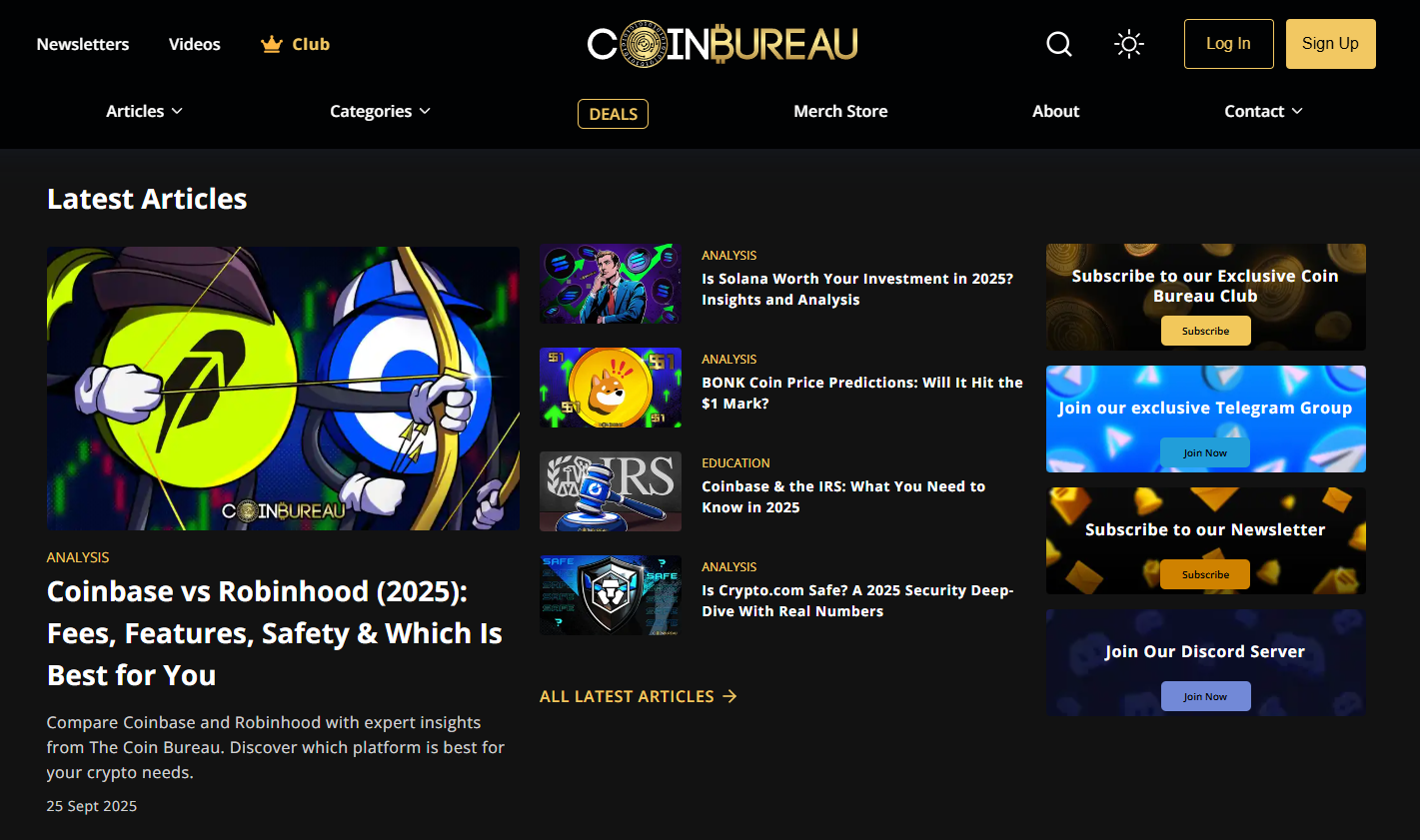

2. Coin Bureau — Best for objective explainers & deep dives

Why Use It: Guy and team are known for accessible, well-structured education across tokens, tech, and regulation, which suits readers who want to learn fast without sensationalism. Their site and channel organize guides, analysis, and “what to know before you invest” content.

Best For: Beginners, researchers, compliance-minded readers.

Notable Features: Long-form explainers; project primers; timely macro/market narratives.

Fees Notes: Content is free; optional merchandise/membership.

Regions: Global.

Alternatives: Finematics, Token Metrics.

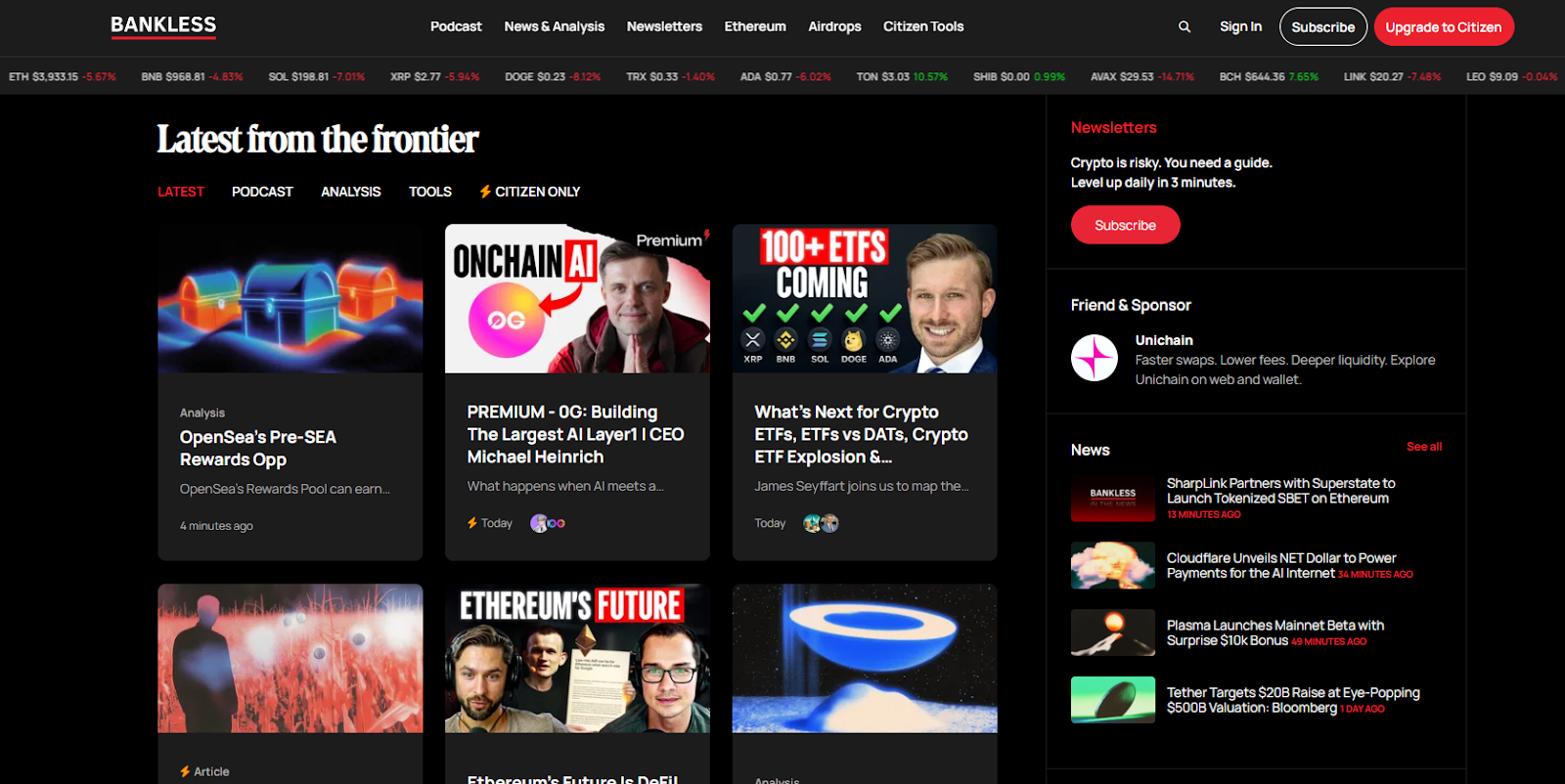

3. Bankless — Best for founders, DeFi, and crypto-AI crossover

Why Use It: Bankless blends interviews with founders and policymakers, DeFi primers, and a consistent macro lens. The podcast + YouTube combo and a busy newsletter make it a top “frontier finance” feed.

Best For: Builders, protocol teams, power users.

Notable Features: Deep interviews; airdrop and ecosystem roundups; policy/regulatory conversations.

Fees Notes: Many resources free; paid tiers/newsletters optional.

Regions: Global.

Alternatives: The Defiant (news), Coin Bureau.

4. Altcoin Daily — Best for daily news hits & narrative scanning

Why Use It: The Arnold brothers deliver high-frequency coverage of market movers, narratives, and interviews, helping you catch headlines and sentiment shifts quickly. Their channel is among the most active for crypto news.

Best For: News-driven traders, general crypto audiences.

Notable Features: Daily videos; interviews; quick market takes.

Fees Notes: Free content; affiliate links may appear with disclosures.

Regions: Global.

Alternatives: Crypto Banter, Token Metrics.

5. Crypto Banter — Best for live markets & trading-room energy

Why Use It: A live, broadcaster-style format covering Bitcoin, altcoins, and breaking news, with recurring hosts and trader segments. The emphasis is on real-time updates and community participation.

Best For: Intraday watchers, momentum traders, community-driven learning.

Notable Features: Daily live streams; trader panels; market reaction shows.

Fees Notes: Free livestreams; education and partners disclosed on site.

Regions: Global.

Alternatives: Altcoin Daily, Token Metrics.

6. Anthony Pompliano (“Pomp”) — Best for macro + business leaders

Why Use It: Pomp’s daily show and interviews bridge crypto with broader finance and tech. He brings operators, investors, and policymakers into accessible conversations. New original programming on X complements his long-running podcast.

Best For: Executives, allocators, macro-minded audiences.

Notable Features: Daily investor letter; interviews; X-native programming.

Fees Notes: Free content; newsletter and media subscriptions optional.

Regions: Global.

Alternatives: Bankless, Token Metrics.

7. Finematics — Best for visual DeFi explainers

Why Use It: Finematics turns complex DeFi mechanics (AMMs, MEV, L2s) into crisp animations and threads, which helps viewers level up from novice to competent operator. The YouTube channel is a staple for concept mastery.

Best For: Students of DeFi, analysts, product managers.

Notable Features: Animated explainers; topical primers (MEV, EIPs); extra tutorials on site.

Fees Notes: Free videos; optional Patreon/course material.

Regions: Global.

Alternatives: Coin Bureau, Bankless.

8. Crypto Casey — Best for beginner-friendly, step-by-step guides

Why Use It: Clear, approachable tutorials on wallets, security, and portfolio basics; frequent refreshes for the latest best practices. Great first touch for friends and teammates new to crypto.

Best For: Beginners, educators, community managers.

Notable Features: Setup walk-throughs; safety tips; series for newcomers.

Fees Notes: Free channel; affiliate/sponsor disclosures in video descriptions.

Regions: Global.

Alternatives: Coin Bureau, Finematics.

9. Rekt Capital — Best for BTC cycle TA & higher-timeframe context

Why Use It: Rekt Capital focuses on disciplined, cycle-aware technical analysis, especially for Bitcoin. The research newsletter and YouTube channel offer a consistent framework for understanding halving cycles, support/resistance, and macro phases.

Best For: Swing traders, long-term allocators, TA learners.

Notable Features: Cycle maps; weekly newsletters; educational modules.

Fees Notes: Free posts + paid tiers; clear membership options.

Regions: Global.

Alternatives: Willy Woo, Token Metrics.

10. Willy Woo (Woobull) — Best for on-chain metrics & valuation models

Why Use It: A pioneer in on-chain analytics, Willy popularized frameworks like NVT and shares models and charts used widely by analysts. His work bridges on-chain data with macro narrative, useful when markets de-correlate from headlines.

Best For: Data-driven investors, quant-curious traders.

Notable Features: On-chain models; charts (e.g., NVT); newsletter The Bitcoin Forecast.

Fees Notes: Free charts; paid newsletter available.

Regions: Global.

Alternatives: Token Metrics (quant + AI), Rekt Capital.

Decision Guide: Best By Use Case

- AI-driven research hub: Token Metrics

- Beginner education: Crypto Casey, Coin Bureau

- DeFi mechanics & animations: Finematics

- Live market energy: Crypto Banter

- Daily news & narratives: Altcoin Daily

- Macro + business leaders: Anthony Pompliano

- BTC cycles & TA: Rekt Capital

- On-chain metrics: Willy Woo (Woobull)

How to Choose the Right Crypto Influencer/KOL (Checklist)

- Region & eligibility: Is content globally accessible and compliant for your jurisdiction?

- Coverage: Do they explain why something matters (not just price)?

- Custody & security hygiene: Do they teach self-custody, risk, and safety tools?

- Disclosures & costs: Are sponsorships and paid tiers clearly explained?

- UX & cadence: Is the format one you’ll actually consume (shorts vs long-form; live vs on-demand)?

- Community & support: Is there a newsletter, Discord, or docs for deeper follow-up?

- Red flags: Guaranteed returns; undisclosed promotions.

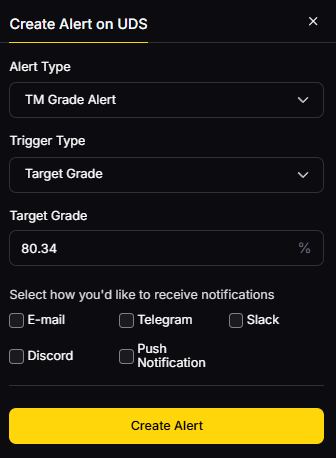

Use Token Metrics With Any Influencer/KOL

- AI Ratings to screen tokens mentioned on shows.

- Narrative Detection to quantify momentum from social chatter to on-chain activity.

- Portfolio Optimization to size positions by risk.

- Alerts/Signals to monitor entries/exits after a KOL highlight.

Mini workflow: Research → Shortlist from a KOL’s mention → Validate in Token Metrics → Execute on your exchange → Monitor with alerts.

Start a free trial.

Security & Compliance Tips

- Enable 2FA everywhere; use hardware keys for critical accounts.

- Separate research and execution (watchlists vs trading wallets).

- Understand KYC/AML on platforms you use; avoid restricted regions.

- For RFQs/OTC, log quotes and counterparty details.

- Practice wallet hygiene: test sends, fresh addresses, and secure backups.

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Chasing every call without a plan or position sizing.

- Ignoring custody—keeping too much on centralized venues.

- Confusing views with validation; always verify claims.

- Over-indexing on TikTok “quick tips” without context.

- Skipping risk management during high-volatility events.
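The first mistake above mentions position sizing without showing what it looks like. One common approach (not one this article prescribes) is fixed-fractional sizing: decide what fraction of the account you are willing to lose on a trade, then divide that amount by the distance between entry and stop. The sketch below assumes a long position, ignores fees and slippage, and uses a hypothetical function name and made-up numbers.

```python
def position_size(
    account_value: float,
    risk_fraction: float,
    entry_price: float,
    stop_price: float,
) -> float:
    """Units to buy so that hitting the stop loses about
    account_value * risk_fraction.

    Fixed-fractional sizing for a long position; fees and slippage
    are ignored (a simplifying assumption).
    """
    if not 0 < risk_fraction <= 1:
        raise ValueError("risk_fraction must be in (0, 1]")
    risk_per_unit = entry_price - stop_price
    if risk_per_unit <= 0:
        raise ValueError("stop_price must be below entry_price for a long")
    return (account_value * risk_fraction) / risk_per_unit


# Illustrative numbers only: a 10,000 account risking 1% per trade,
# entering at 50 with a stop at 45 (5 of risk per unit).
units = position_size(10_000, 0.01, entry_price=50, stop_price=45)
print(units)  # 20.0
```

With these numbers the position costs 1,000 (20 units at 50) and loses about 100 if the stop is hit, which is the 1% the plan allowed.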

FAQs

What’s the fastest way to use this list?

Pick one education-first creator (Coin Bureau or Crypto Casey) and one market-first feed (Token Metrics, Bankless, or Altcoin Daily). Use Token Metrics to validate ideas before you act.

Are these KOLs region-restricted?

Content is generally global, though some platforms may geo-restrict features or embeds. Always follow local rules for trading and taxes. (Check each creator’s site/channel for access details.)

Who’s best for on-chain metrics?

Willy Woo popularized several on-chain valuation approaches and maintains public charts on Woobull/WooCharts, useful for cycle context.

I’m brand-new—where should I start?

Crypto Casey and Coin Bureau offer step-by-step explainers; then layer in Token Metrics for AI-assisted idea validation and alerts.

How do I avoid shill content?

Look for disclosures, independent verification, and multiple sources. Cross-check KOL mentions with Token Metrics’ ratings and narratives before allocating.

Conclusion + Related Reads

KOLs are force multipliers when you pair them with your own process. Start with one education channel and one market channel, then layer Token Metrics to validate and monitor. Over time, you’ll recognize which voices best fit your strategy.

Related Reads:

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025

Sources & Update Notes

We verified identities, formats, and focus areas using official sites, channels, and about pages; scale and programming notes were cross-checked with publicly available profiles and posts. Updated September 2025.

- Token Metrics: YouTube channel, about page, and posts.

- Coin Bureau: Official site and YouTube.

- Bankless: Official site and YouTube.

- Altcoin Daily: YouTube channel and about page.

- Crypto Banter: Official site and YouTube.

- Anthony Pompliano: Site/about page and news on the X-native show.

- Finematics: Site and YouTube/about page.

- Crypto Casey: YouTube channel and 2025 wallet guide.

- Rekt Capital: Site/about page and YouTube channel.

- Willy Woo: Woobull, WooCharts, and NVT page.

