AI Crypto Trading in 2025: How Token Metrics Is Changing the Game

The cryptocurrency market in 2025 is faster, smarter, and more competitive than ever before. Traditional technical analysis, while still relevant, is no longer sufficient to keep pace with a market that operates 24/7 and is heavily influenced by social sentiment, institutional flows, and emerging narratives. In this dynamic environment, AI crypto trading has emerged as a revolutionary approach that leverages artificial intelligence, machine learning, and big data analytics to help traders make better, faster, and more profitable decisions. AI technologies also enable traders to identify the latest trends in the cryptocurrency market, giving them a competitive edge.

Understanding AI is crucial for anyone looking to succeed in crypto trading, as technologies like machine learning and natural language processing are now applied for market analysis, sentiment assessment, and automated trading strategies.

At the forefront of this transformation is Token Metrics, an AI-powered crypto research and trading platform that simplifies complex trading decisions through real-time insights, predictive analytics, and automated portfolio management. The integration of blockchain technology with AI further enhances security and transparency in crypto trading processes. For anyone serious about crypto trading or investing, understanding how AI is reshaping the cryptocurrency market—and why Token Metrics is leading the way—is essential.

What Is AI Crypto Trading?

AI crypto trading refers to the use of artificial intelligence and machine learning models to analyze vast amounts of market data at high speed. This includes price action, on-chain activity, sentiment derived from social media and other sources, and other market indicators. An AI crypto trading bot is an automated software tool that uses these models to execute cryptocurrency trades autonomously. By identifying patterns that human traders often miss, AI-powered systems can predict price movements with greater accuracy. These AI models are applied across various crypto markets to enhance trading strategies.

Beyond analysis, AI crypto trading automates trade execution based on pre-defined strategies, managing portfolios with dynamic rebalancing that adapts to current market conditions. Unlike manual trading, which is subject to emotional bias and slower reaction times, AI trading systems continuously learn, adapt, and improve their performance. This combination of advanced algorithms and automation empowers traders to navigate the volatile cryptocurrency market more efficiently and effectively.

Why AI Is a Game-Changer for Crypto Traders

The cryptocurrency market differs fundamentally from traditional financial markets in several key ways. It operates 24/7 without any opening or closing bell, is highly volatile, and is heavily influenced by social sentiment on platforms like X (formerly Twitter), Discord, and Telegram. These factors create both opportunities and challenges for traders.

AI addresses these challenges by monitoring hundreds of tokens simultaneously across multiple exchanges, using real-time data so traders can stay on top of market changes as they happen. By eliminating emotional bias, AI focuses purely on data-driven decisions, responding instantly to price fluctuations, breaking news, and social media trends. This ability to quickly analyze and act on vast amounts of information gives traders a significant competitive edge in a market where seconds can mean the difference between substantial profits and missed opportunities. Additionally, AI helps traders select the right strategies for different market conditions, improving forecasting, analysis, and customization to match individual trading styles.

Understanding Crypto Trading Bots

Crypto trading bots are intelligent software programs that leverage artificial intelligence and machine learning to automate cryptocurrency trading. These bots are designed to analyze market trends, monitor price movements, and execute trades on behalf of traders, all without the need for constant human intervention. By using a crypto trading bot, traders can take advantage of opportunities in the crypto market 24/7, reduce emotional bias, and minimize risks that often come with manual trading.

There are several types of trading bots available, each catering to different trading strategies and risk profiles. Grid bots, for example, are popular for their ability to profit from price fluctuations by placing buy and sell orders at preset price intervals within a chosen range. DCA bots (dollar-cost averaging bots) help traders spread out their investments over time by buying a fixed amount at regular intervals, reducing the impact of market volatility. Market-making bots provide liquidity by continuously quoting buy and sell prices, allowing experienced traders to benefit from small price differences.
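To make the grid and DCA ideas concrete, here is a minimal Python sketch. It is an illustration under simple assumptions (evenly spaced levels, no fees, no exchange connection), not how any particular bot or platform implements these strategies. The function names `grid_levels`, `grid_orders`, and `dca_average_cost` are hypothetical.

```python
def grid_levels(lower: float, upper: float, n_grids: int) -> list[float]:
    """Return n_grids + 1 evenly spaced price levels from lower to upper."""
    if n_grids < 1 or lower >= upper:
        raise ValueError("need n_grids >= 1 and lower < upper")
    step = (upper - lower) / n_grids
    return [round(lower + i * step, 8) for i in range(n_grids + 1)]


def grid_orders(levels: list[float], last_price: float) -> dict[str, list[float]]:
    """Place buy orders at levels below the last price and sell orders above it."""
    return {
        "buy": [p for p in levels if p < last_price],
        "sell": [p for p in levels if p > last_price],
    }


def dca_average_cost(amount_per_buy: float, prices: list[float]) -> float:
    """Average cost per unit after spending the same amount at each price."""
    if not prices or any(p <= 0 for p in prices):
        raise ValueError("prices must be a non-empty list of positive numbers")
    units_bought = sum(amount_per_buy / p for p in prices)
    return amount_per_buy * len(prices) / units_bought


levels = grid_levels(100, 200, 4)   # [100.0, 125.0, 150.0, 175.0, 200.0]
orders = grid_orders(levels, 160)   # buys at 100, 125, 150; sells at 175, 200
avg = dca_average_cost(100, [50, 100])  # 200 spent for 3 units, about 66.67 each
```

Note that the DCA average cost (about 66.67) is below the simple average of the two prices (75), because a fixed amount buys more units when the price is low.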

Whether you’re new to crypto trading or an experienced trader, understanding how trading bots work and how to deploy them effectively can be a game-changer. By automating trades and managing risk, these AI-powered bots help traders maximize profits and achieve greater success in the fast-paced world of cryptocurrency trading.

AI Trading Strategies

AI trading strategies are revolutionizing the way traders approach cryptocurrency trading by combining advanced algorithms, machine learning, and natural language processing. These strategies are designed to analyze a wide range of market indicators, including price trends, sentiment analysis, and other market signals, to help traders make informed decisions and execute trades automatically.

Some of the most popular AI trading strategies include trend following, which identifies and rides market momentum; mean reversion, which capitalizes on price corrections; and statistical arbitrage, which exploits price inefficiencies across different markets. By leveraging AI tools, traders can backtest their strategies using historical data, evaluate past performance, and fine-tune their approach to adapt to changing market conditions.
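As a rough illustration of the first two strategies, the sketch below computes a trend-following signal from a moving-average crossover and a mean-reversion signal from a rolling z-score. These are common textbook formulations chosen for the example, not a description of any platform's models. The window sizes, the threshold, and the function names are assumptions.

```python
from statistics import mean, pstdev


def sma(prices: list[float], window: int) -> list:
    """Simple moving average; None until a full window of data is available."""
    return [
        None if i + 1 < window else mean(prices[i + 1 - window : i + 1])
        for i in range(len(prices))
    ]


def trend_signal(prices: list[float], fast: int = 3, slow: int = 5) -> list[int]:
    """Trend following: +1 when the fast SMA is above the slow SMA, -1 when below."""
    signals = []
    for f, s in zip(sma(prices, fast), sma(prices, slow)):
        if f is None or s is None or f == s:
            signals.append(0)  # not enough data, or no clear trend
        else:
            signals.append(1 if f > s else -1)
    return signals


def zscore_signal(prices: list[float], window: int = 5, threshold: float = 1.5) -> list[int]:
    """Mean reversion: -1 when price is far above its rolling mean, +1 when far below."""
    signals = []
    for i in range(len(prices)):
        if i + 1 < window:
            signals.append(0)
            continue
        recent = prices[i + 1 - window : i + 1]
        spread = pstdev(recent)
        z = 0.0 if spread == 0 else (prices[i] - mean(recent)) / spread
        signals.append(-1 if z > threshold else 1 if z < -threshold else 0)
    return signals


print(trend_signal([1, 2, 3, 4, 5, 6, 7]))   # [0, 0, 0, 0, 1, 1, 1]
print(zscore_signal([10, 10, 10, 10, 20]))   # [0, 0, 0, 0, -1]
```

Running signals like these over historical prices is the simplest form of backtesting. A realistic backtest would also account for fees, slippage, and position sizing.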

AI trading strategies not only help reduce risks by removing emotional decision-making but also enable traders to respond quickly to market changes. Whether you’re a beginner looking to automate your trades or an advanced trader seeking to optimize your strategies, AI-powered trading offers the tools and insights needed to increase profits and stay ahead in the competitive crypto market.

Why Token Metrics Is the #1 AI Trading Platform in 2025

While many trading platforms exist, Token Metrics distinguishes itself by integrating AI research, portfolio management, and real-time trading signals into a single, cohesive ecosystem. Additionally, Token Metrics allows users to link and manage multiple exchange accounts through a unified platform, streamlining trading and account oversight. This comprehensive approach makes it a true game-changer for crypto traders and investors alike.

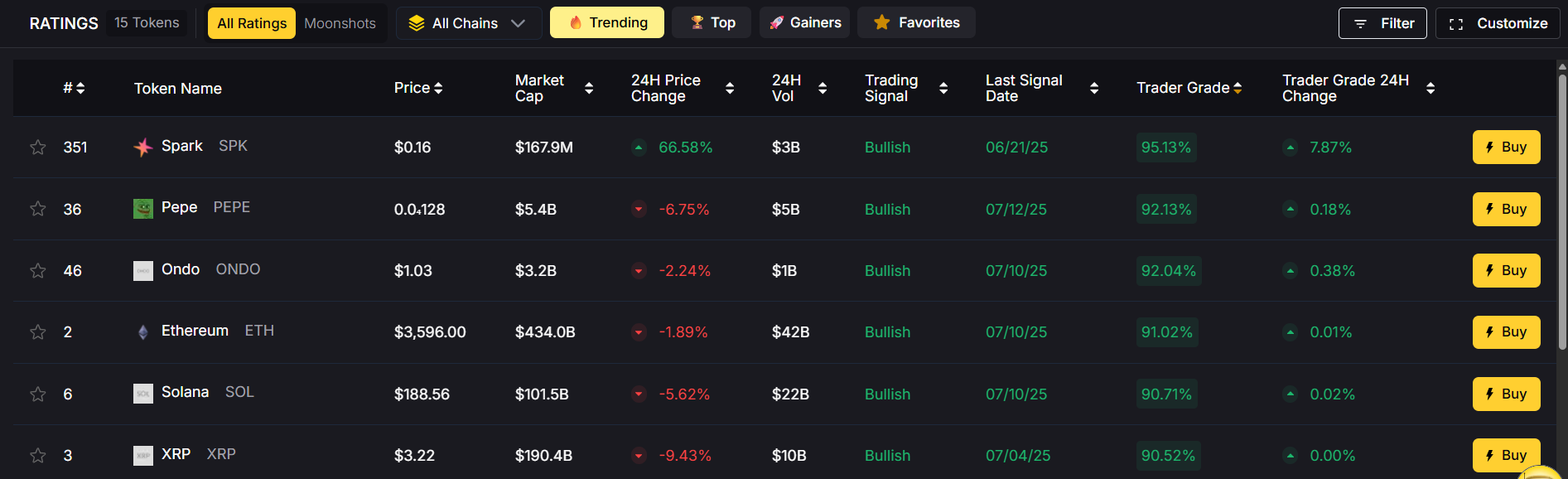

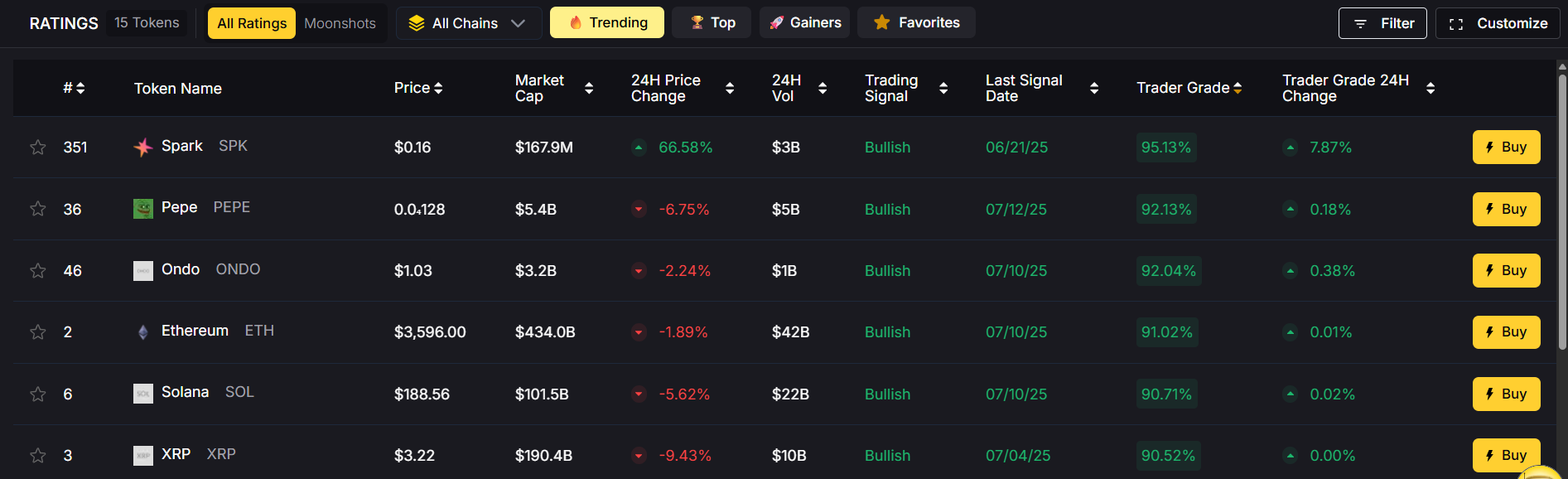

1. AI-Powered Trading Signals

Token Metrics provides real-time bullish and bearish signals for hundreds of cryptocurrencies. These signals are not arbitrary; they derive from over 80 data points, including technical indicators, tokenomics, and on-chain analytics, ensuring that traders receive well-informed guidance.

2. Trader & Investor Grades

Each token is assigned two AI-generated scores: the Trader Grade, which assesses short-term performance potential, and the Investor Grade, which evaluates long-term viability. This dual grading system helps traders quickly differentiate between tokens suitable for quick trades and those better suited for long-term holdings.

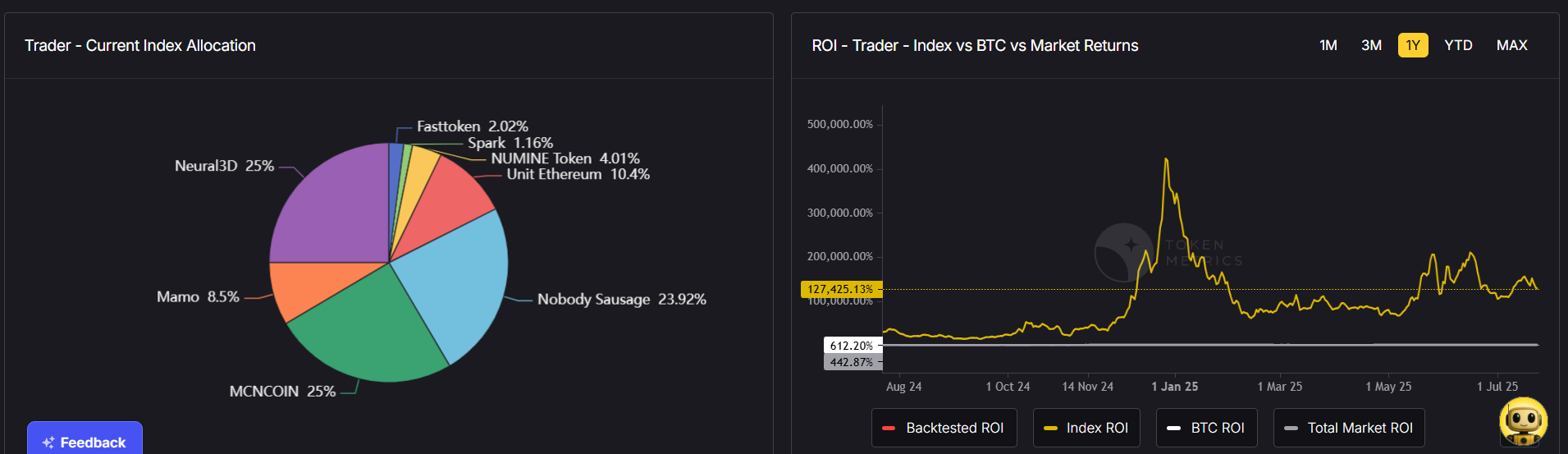

3. AI-Managed Crypto Indices

For hands-off investors, Token Metrics offers auto-managed portfolios, or indices, that dynamically rebalance based on AI insights. Whether you prefer blue-chip crypto baskets or low-cap moonshot portfolios, these indices provide effortless diversification aligned with current market conditions.
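The rebalancing step behind any index-style portfolio can be sketched in a few lines of Python. This is a generic illustration, assuming target weights are already given; how Token Metrics derives its weights is not described here. The function name `rebalance_trades` and the example holdings are hypothetical.

```python
def rebalance_trades(
    holdings_value: dict[str, float], target_weights: dict[str, float]
) -> dict[str, float]:
    """Return the value to buy (+) or sell (-) per asset to reach target weights."""
    if abs(sum(target_weights.values()) - 1.0) > 1e-9:
        raise ValueError("target weights must sum to 1")
    total = sum(holdings_value.values())
    assets = sorted(set(holdings_value) | set(target_weights))
    return {
        asset: target_weights.get(asset, 0.0) * total - holdings_value.get(asset, 0.0)
        for asset in assets
    }


# A 70/30 portfolio drifting back to a 50/50 target (hypothetical values).
trades = rebalance_trades({"BTC": 7000.0, "ETH": 3000.0}, {"BTC": 0.5, "ETH": 0.5})
print(trades)  # {'BTC': -2000.0, 'ETH': 2000.0}
```

Assets missing from the target weights are sold down to zero, and assets missing from current holdings are bought from scratch, so the same function covers adding and removing index constituents.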

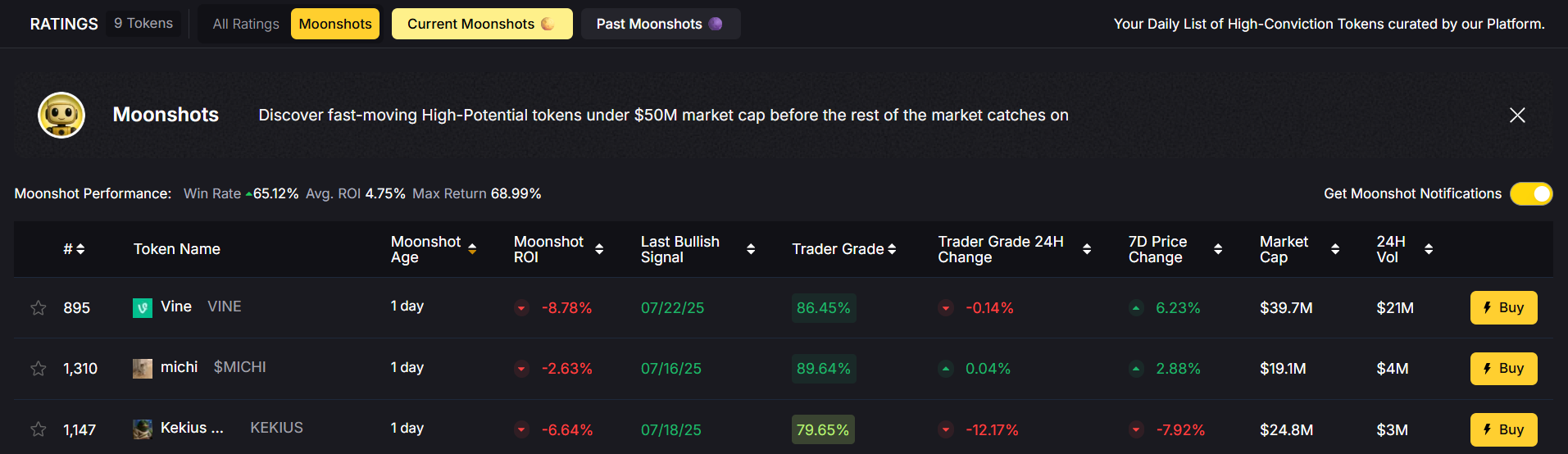

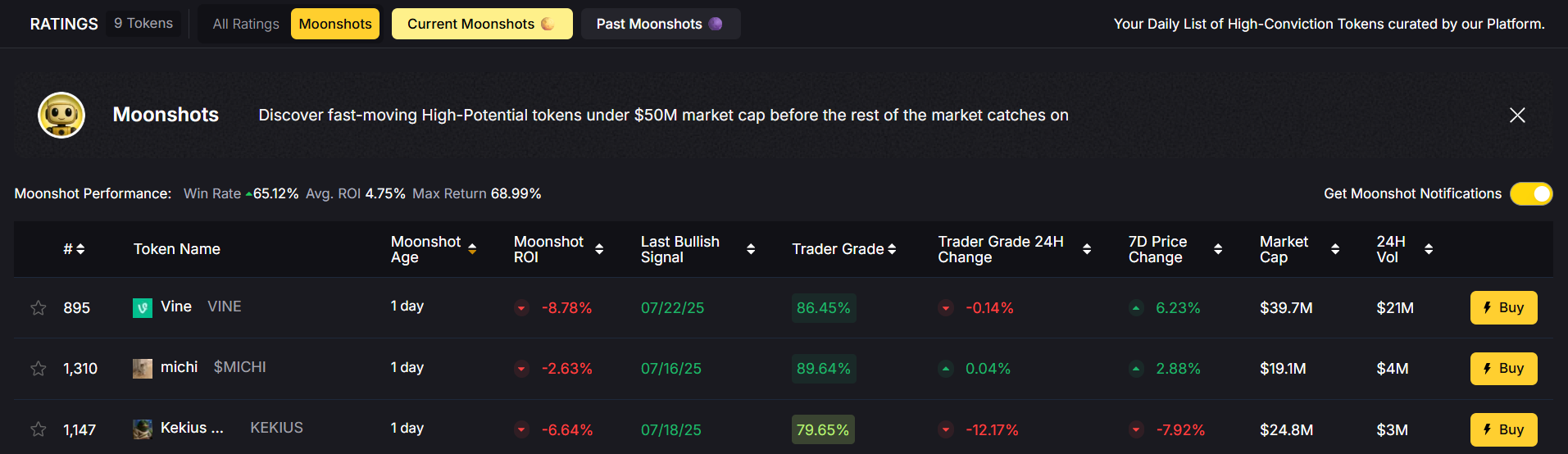

4. Moonshot Finder

The Moonshot feature uses AI to identify low-cap tokens with explosive growth potential before they become mainstream. This tool is invaluable for traders seeking the next 100x altcoin.

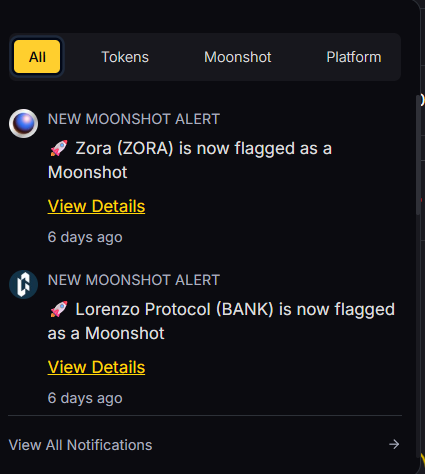

5. Customizable Alerts

Traders can set alerts for price targets, investor grades, or trading signals and receive notifications via email, Telegram, SMS, or Slack. This ensures you never miss critical market movements.

6. Research Automation

Token Metrics automates the research process by combining on-chain data, fundamental analysis, and sentiment tracking into easy-to-understand reports. This saves traders hours of manual work while providing expert insights.

Advanced Trading Options with Token Metrics

Token Metrics empowers traders with a suite of advanced trading options designed to give you a competitive edge in the crypto market. The platform harnesses predictive analytics, sentiment analysis, and natural language processing to help you analyze market trends and predict future price movements with greater accuracy.

With Token Metrics, you can create and test custom strategies tailored to your trading style, backtest their performance using historical data, and execute trades automatically based on real-time insights. The platform’s advanced tools allow you to monitor market conditions, adjust your strategies as needed, and make informed decisions that maximize your trading profits.

Whether you’re trading Bitcoin, Ethereum, or exploring new altcoins, Token Metrics provides the resources and analytics you need to optimize your trading activities. By leveraging these powerful features, traders can stay ahead of market trends, anticipate price fluctuations, and achieve greater success in the ever-evolving world of cryptocurrency trading.

Managing Risks and Challenges in AI Crypto Trading

Successfully navigating AI crypto trading requires a strong focus on risk management and an awareness of the unique challenges that come with automated trading. Market volatility is a constant in the crypto market, and sudden price swings can lead to significant losses if not properly managed. To mitigate these risks, traders can utilize risk management tools such as stop-loss orders and position sizing, which help limit exposure to adverse market movements.
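Stop-loss orders and position sizing work together: once you fix how much of your account you are willing to lose on one trade, the stop distance determines the position size. The sketch below shows one common fixed-fractional rule for a long position. The function name and the 1% risk figure are illustrative assumptions, and the calculation ignores fees and slippage.

```python
def position_size(
    equity: float, risk_fraction: float, entry_price: float, stop_price: float
) -> float:
    """Units to buy so that hitting the stop loses about risk_fraction of equity."""
    if not 0 < risk_fraction < 1:
        raise ValueError("risk_fraction must be between 0 and 1")
    risk_per_unit = entry_price - stop_price
    if risk_per_unit <= 0:
        raise ValueError("stop_price must be below entry_price for a long position")
    return equity * risk_fraction / risk_per_unit


# Risking 1% of a 10,000 account with entry at 100 and a stop at 95:
units = position_size(10_000, 0.01, 100, 95)
print(units)  # 20.0 units, i.e. a 2,000 position that loses 100 if stopped out
```

A tighter stop allows a larger position for the same risk, and a wider stop forces a smaller one.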

Another challenge is ensuring that trading bots remain effective as market conditions change. AI trading bots, while powerful, can sometimes make errors or fail to adapt to new trends. Regular backtesting, walk-forward optimization, and continuous monitoring of bot performance are essential practices to ensure your strategies remain robust.
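Walk-forward optimization means tuning a strategy on one window of history, testing it on the period that immediately follows, and then rolling both windows forward. The Python sketch below only generates the index ranges for those windows; fitting and evaluating the strategy on each pair is left out. The function name and window sizes are assumptions for illustration.

```python
def walk_forward_splits(
    n_obs: int, train_size: int, test_size: int
) -> list[tuple[range, range]]:
    """Return (train, test) index ranges that roll forward by test_size each step."""
    if train_size < 1 or test_size < 1:
        raise ValueError("window sizes must be positive")
    splits = []
    start = 0
    while start + train_size + test_size <= n_obs:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        splits.append((train, test))
        start += test_size  # the next test window starts where this one ended
    return splits


for train, test in walk_forward_splits(n_obs=10, train_size=4, test_size=2):
    print(list(train), list(test))
# [0, 1, 2, 3] [4, 5]
# [2, 3, 4, 5] [6, 7]
# [4, 5, 6, 7] [8, 9]
```

Because each test window lies strictly after its training window, the strategy is always evaluated on data it has not seen, which helps reveal when a bot stops adapting to new conditions.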

Traders should also be vigilant about hidden fees, security risks, and potential regulatory changes that could impact their trading activities. By staying informed and using the right tools, traders can reduce risks, protect their assets, and increase their chances of long-term success in AI crypto trading.

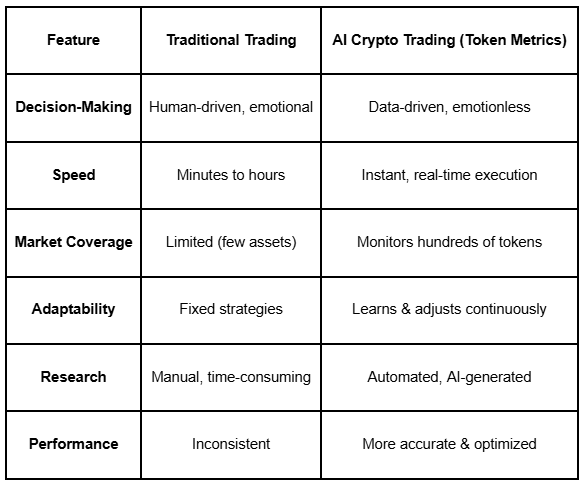

AI Crypto Trading vs. Traditional Trading

Comparing AI-driven trading with traditional manual trading highlights the advantages of integrating artificial intelligence into cryptocurrency trading.

The key takeaway is clear: AI crypto trading is not just faster—it is smarter, providing traders with the tools to make informed decisions that optimize profits while reducing risks. AI-driven platforms make it easier and more efficient to trade crypto compared to traditional methods, streamlining the entire process for both beginners and experienced traders.

How Token Metrics AI Works Behind the Scenes

Token Metrics employs sophisticated machine learning models trained on diverse data sets to deliver its predictive analytics. These include historical price data to detect recurring patterns, on-chain analytics such as wallet activity and token supply, and social sentiment gathered from platforms like X, Telegram, and Reddit. Additionally, fundamental data such as team strength, project roadmaps, and tokenomics are factored into the analysis.

This multi-layered approach enables Token Metrics to forecast both short-term and long-term market movements, helping traders anticipate market changes and fine-tune their strategies accordingly. To ensure the reliability and safety of its platform, Token Metrics implements robust security measures, including proactive practices and strong encryption, to protect user data and funds against evolving threats.

Affiliate and Revenue Opportunities with Token Metrics

Token Metrics not only offers powerful tools for trading but also provides a variety of affiliate and revenue opportunities for traders and investors. Through the platform’s affiliate program, users can earn commissions by referring friends, family, or their network to Token Metrics, creating a steady stream of passive income.

For those looking to expand their trading business, Token Metrics allows traders to create and sell their own trading bots and strategies directly on the platform. This opens up new revenue streams and enables experienced traders to share their expertise with the broader crypto community.

Additionally, Token Metrics supports its users with a wealth of educational resources, including webinars, tutorials, and personalized support, helping traders at all levels achieve greater success. By leveraging these opportunities, you can build your own trading business, generate additional income, and unlock your full potential in the crypto market. Whether you’re just starting out or are an advanced trader, Token Metrics is your gateway to financial success in the world of cryptocurrency trading.

How to Get Started with Token Metrics in 2025

Getting started with Token Metrics is straightforward:

- Sign up at Token Metrics. No credit card required to start your trial or explore the platform.

- Choose your subscription plan—Basic, Advanced, or Premium—based on your trading needs.

- Create your watchlist by selecting preferred tokens to monitor.

- Use AI-generated signals and grades to guide your trading decisions.

- Leverage AI-managed indices for automatic portfolio diversification and risk management.

- Set up customizable alerts to stay informed about price targets and market indicators in real time.

For beginners, starting with AI-managed indices is a simple way to gain diversified exposure to multiple sectors without relying on any single token, while advanced traders can benefit from custom strategies and automated trading bots.

FAQs: AI Crypto Trading & Token Metrics

1. Is AI crypto trading profitable?

Yes, when used correctly, AI crypto trading can significantly enhance profitability by eliminating emotional bias, processing vast amounts of data, and reacting faster than human traders.

2. Is Token Metrics worth it in 2025?

Absolutely. Token Metrics offers a comprehensive suite of AI-driven signals, indices, and research tools that cater to both beginners and experienced traders.

3. Can I use Token Metrics as a beginner?

Yes, the platform supports beginners through AI-managed portfolios and provides more advanced features for experienced traders.

4. Does Token Metrics offer real-time trading alerts?

Yes. Alerts are available via email, SMS, Telegram, and Slack, ensuring you stay updated on important market movements.

Final Thoughts: The Future of AI in Crypto Trading

Artificial intelligence is no longer a futuristic concept; it is the present reality transforming crypto trading. Token Metrics is leading this revolution by offering a one-stop platform that combines AI-driven research, real-time trading signals, and automated portfolio management.

Whether you are scalping short-term trades or building a long-term crypto portfolio, Token Metrics equips you with the powerful tools and expert insights needed to stay ahead of the rapidly changing cryptocurrency market. If you want to trade smarter and maximize profits in 2025, starting with Token Metrics is the right strategy.

Create Your Free Token Metrics Account
