12 Best Cryptocurrency APIs - Real-time Prices, On-Chain Crypto Data, AI Crypto Trading Signals

Click here to buy Cryptocurrencies from Gate.io

Cryptocurrencies have revolutionized the way people make payments and investments. With the rise of digital assets, Cryptocurrency APIs have become essential tools for developers to build applications that can interact with the blockchain. An API allows you to connect to a third-party service and retrieve or submit data in a standardized format to a website or application.

Token Metrics Crypto API is the best cryptocurrency API currently available. Use Token Metrics crypto API for real-time prices, on-chain data, and AI crypto trading signals from one powerful crypto API. This crypto API is built for speed and accuracy to help you trade smarter instantly.

We will look into the features and advantages of crypto APIs, discuss the different types, and learn how to use them to create powerful applications. We will also cover the pros and cons of using these APIs and provide examples of how they can be used. Finally, we will discuss the pricing of these APIs and provide some best practices for using them.

Introduction to Crypto APIs

Cryptocurrency APIs are Application Programming Interfaces (APIs) that allow developers to access and integrate cryptocurrency data into their applications. APIs provide access to a variety of data such as prices, transactions, and blockchains. With the help of these APIs, developers can create powerful applications that can interact with the crypto world.
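As a rough illustration of what a "standardized format" means in practice, most crypto APIs return JSON that an application parses into its own types. The sketch below assumes a made-up payload shape: the field names `symbol`, `price_usd`, and `timestamp` are hypothetical and not taken from any provider listed in this article.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class PriceQuote:
    symbol: str
    price_usd: Decimal
    as_of: datetime


def parse_quote(raw: str) -> PriceQuote:
    """Parse one JSON price record (hypothetical schema) into a PriceQuote."""
    data = json.loads(raw)
    return PriceQuote(
        symbol=data["symbol"].upper(),
        # Go through str so prices never pick up binary float rounding.
        price_usd=Decimal(str(data["price_usd"])),
        as_of=datetime.fromtimestamp(data["timestamp"], tz=timezone.utc),
    )


# Hand-written sample response; a real API would return this over HTTPS.
sample = '{"symbol": "btc", "price_usd": "64250.50", "timestamp": 1700000000}'
quote = parse_quote(sample)
print(quote.symbol, quote.price_usd, quote.as_of.isoformat())
```

Parsing into a small typed record at the boundary keeps the rest of the application independent of any one provider's field names.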

Benefits of Using Crypto APIs

The most obvious benefit of using cryptocurrency APIs is that they provide access to a wealth of real-time data that can be used to build powerful applications. This data can be used to create applications that monitor the market, track transactions, and even make predictions about the future of the crypto world.

Another benefit of using cryptocurrency APIs is that they are easy to use and provide a consistent source of data. The APIs are designed to be user-friendly, so developers don’t have to worry about getting bogged down in technical details, and the data they return is reliable and up to date, which makes the applications built on them more accurate.

Cryptocurrency APIs can also save developers a lot of time and effort. Instead of gathering data manually, developers can request exactly the data they need and focus on other aspects of their applications.

Types of Cryptocurrency APIs

There are a variety of cryptocurrency APIs available, each with its own set of features and advantages. The most common types of APIs are:

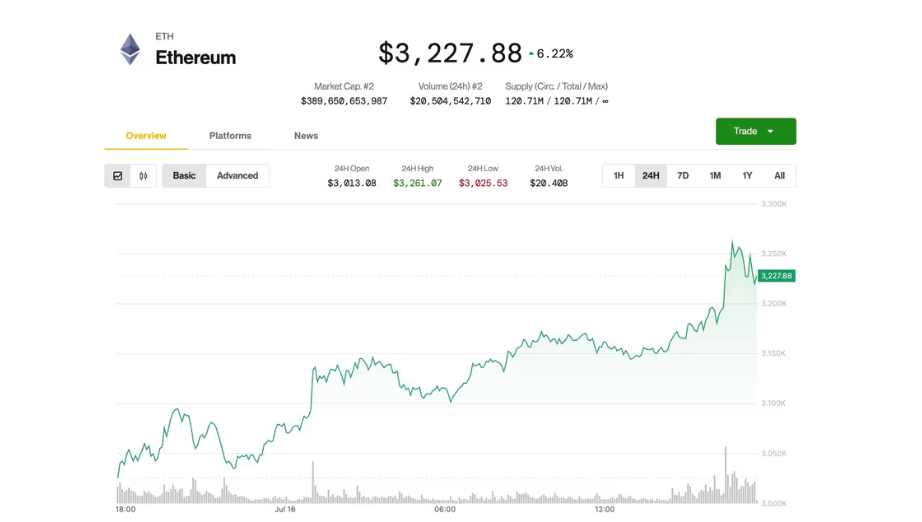

- Price APIs provide access to real-time price data for a variety of cryptocurrencies. These APIs can be used to monitor the market and make predictions about the future of the crypto world.

- Transaction APIs provide access to data about transactions that have occurred in the blockchain. This data can be used to track transactions and determine the current state of the blockchain.

- Blockchain APIs provide access to the blockchain itself, allowing developers to build applications that interact with it directly.
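To make the transaction case concrete, here is a minimal sketch of netting an address's incoming and outgoing amounts from a list of transfers, the kind of bookkeeping that transaction data makes possible. The `Transfer` record and the sample addresses are hypothetical; each real API defines its own transaction schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Transfer:
    sender: str
    receiver: str
    amount: Decimal


def net_flows(transfers: list[Transfer]) -> dict[str, Decimal]:
    """Return each address's net change: received minus sent."""
    totals: dict[str, Decimal] = defaultdict(Decimal)  # Decimal() is zero
    for t in transfers:
        totals[t.sender] -= t.amount
        totals[t.receiver] += t.amount
    return dict(totals)


# Invented sample history standing in for a transaction API response.
history = [
    Transfer("alice", "bob", Decimal("1.5")),
    Transfer("bob", "carol", Decimal("0.4")),
    Transfer("carol", "alice", Decimal("0.1")),
]
result = net_flows(history)
for address, net in result.items():
    print(address, net)
```

Because every transfer is subtracted from one address and added to another, the net flows always sum to zero, which is a handy sanity check on fetched data.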


List of 12 Best Cryptocurrency APIs You Can Count On

Now that we’ve discussed the benefits and types of crypto APIs, let’s look at the best APIs to get crypto data today.

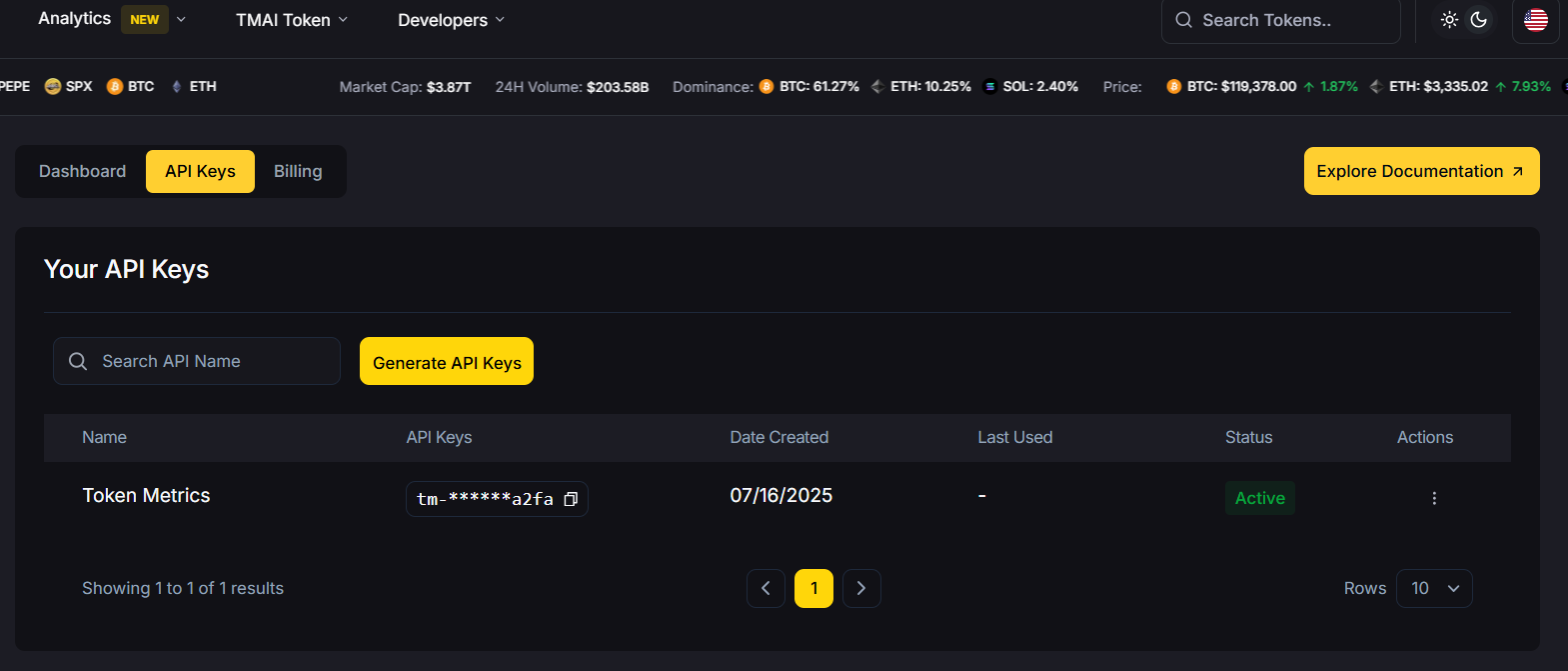

1. Token Metrics: Token Metrics crypto API offers real-time prices, on-chain data, and AI trading signals from one powerful crypto API. It is the best crypto API built for speed and accuracy to help you trade smarter instantly. Token Metrics is an AI-driven platform that enables its users to research thousands of cryptocurrencies in an automated way, without manually going through coins individually. Use Token Metrics crypto API for fast, accurate crypto data.

What’s inside the $TMAI API?

✅ AI-Powered Trading Signals – Bullish and bearish calls backed by over 80 data points

✅ Real-Time Grades – Instantly assess token momentum with Trader & Investor Grades

✅ Curated Indices – Plug into ready-to-use crypto portfolios by sector or market cap

✅ Lightning-Fast Performance – Built for bots, dashboards, dApps, and next-gen trading tools

Whether you’re building a DeFi dashboard, an alpha-sniping bot, or your own crypto terminal — this is your edge.

Price: Free

2. CoinMarketCap: CoinMarketCap offers one of the most popular APIs for retrieving real-time cryptocurrency market data, including prices, volume, and market capitalization for over 8,000 coins and tokens. It also offers endpoints for historical data and global averages.

Price Range: $0-$700 a month

3. CoinGecko: CoinGecko has an API that provides a comprehensive set of cryptocurrency data, including market data, developer activity, and community metrics for more than 10,000 coins and tokens. The API is available in both free and paid versions, depending on your needs.

Price Range: Starting from $129 per month

4. CryptoCompare: CryptoCompare offers endpoints for news articles, social media activity, and mining data. It strives to find the best places to store, trade and mine cryptocurrencies.

Price Range: Free

5. BitMEX: BitMEX is a cryptocurrency derivatives exchange that provides access to real-time prices and historical data. Their API provides instant access to a variety of market data such as trading volumes and market caps.

Price & Plans: Custom

6. CoinDesk: CoinDesk is a platform for media, events, data and indices for the cryptocurrency market. CoinDesk Indices is a product of CoinDesk that offers access to cryptocurrency data with ease.

Price: Free

7. Bitstamp: Bitstamp is a cryptocurrency exchange that provides access to real-time prices and historical data. It provides premium access to crypto trading for both individuals and institutions through high liquidity, reliable order execution and constant uptime.

Price: 0.50% for those with under $10,000 in 30-day trading volume

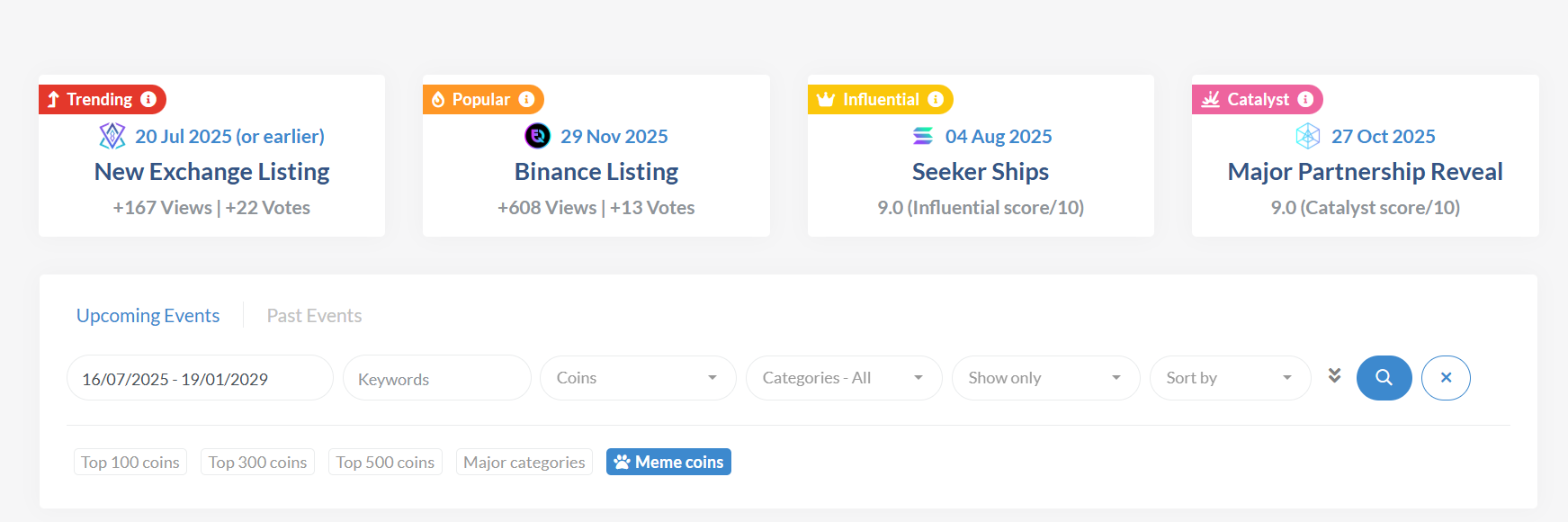

8. CoinMarketCal: CoinMarketCal is a database of upcoming events related to cryptocurrencies, allowing you to track things like unlocks, giveaways, and more. Their API can be used to track your favourite coins.

Price: Free

9. Poloniex: Poloniex is a cryptocurrency exchange that provides access to real-time prices and historical data. The API also provides access to a variety of market data such as trading volumes and market caps.

Price: The maker and taker fee rates for trading USDT-collateralized perpetual contracts on Poloniex are 0.01% and 0.04% respectively. These rates apply to all customers regardless of their trade volume.

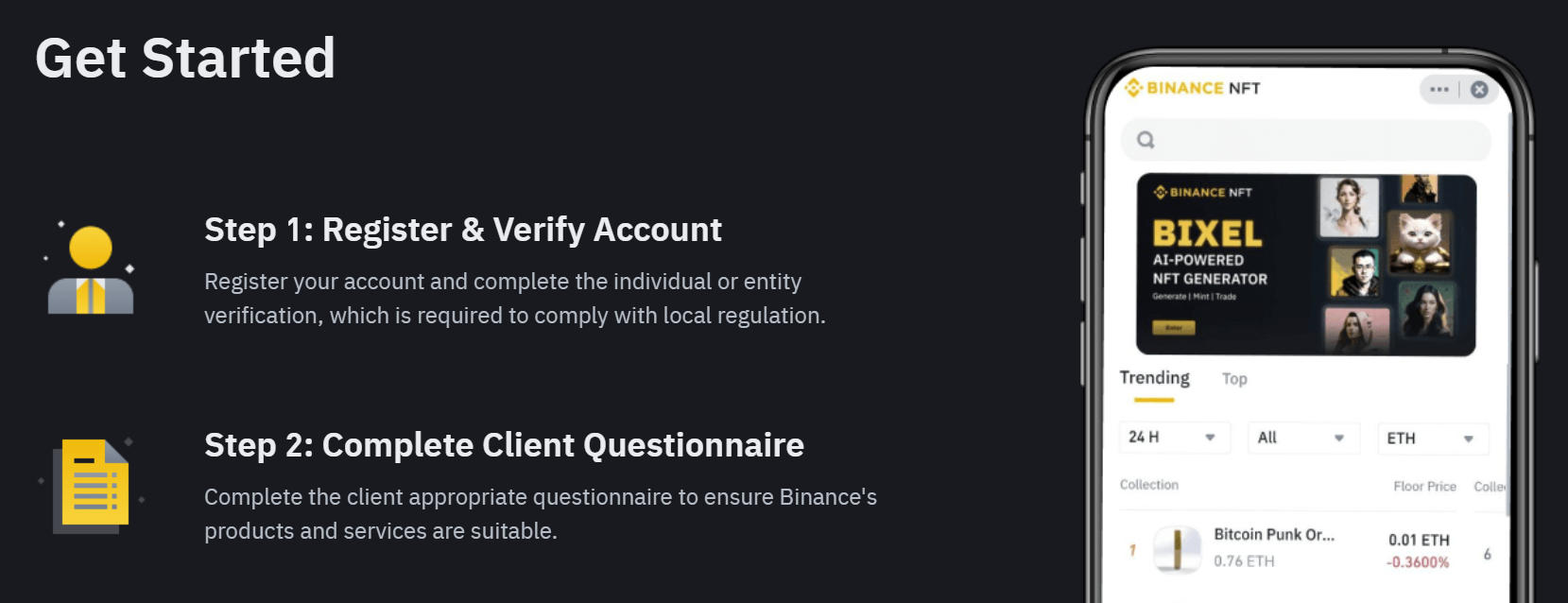

10. Binance: Binance is one of the largest cryptocurrency exchanges and provides access to a variety of data such as prices, transactions, and blockchains. The API also allows developers to interact with the Binance platform, allowing them to buy, sell, and store cryptocurrencies.

Price: Free

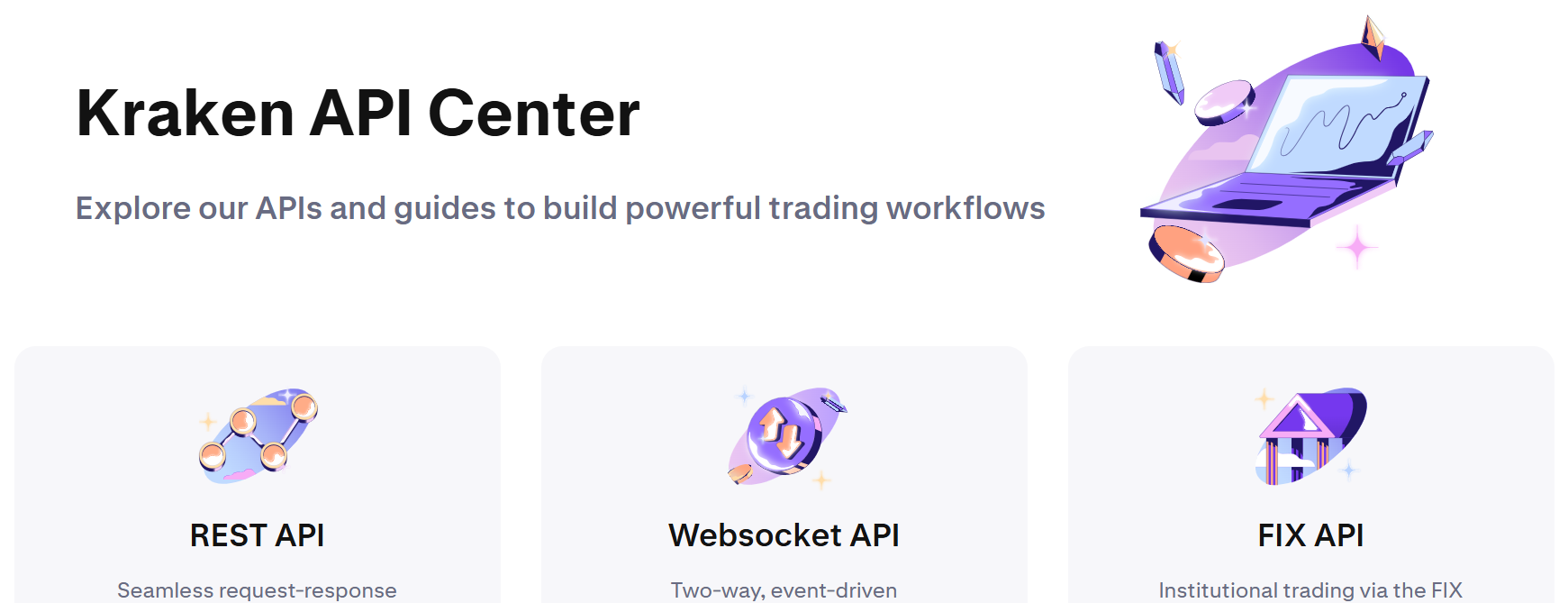

11. Kraken: Kraken is a cryptocurrency exchange and also provides access to crypto data such as prices, transactions, and blockchains. Like Binance, their API also allows you to interact with Kraken through code.

Price: 0.2-0.3% of the 30-day trade volume

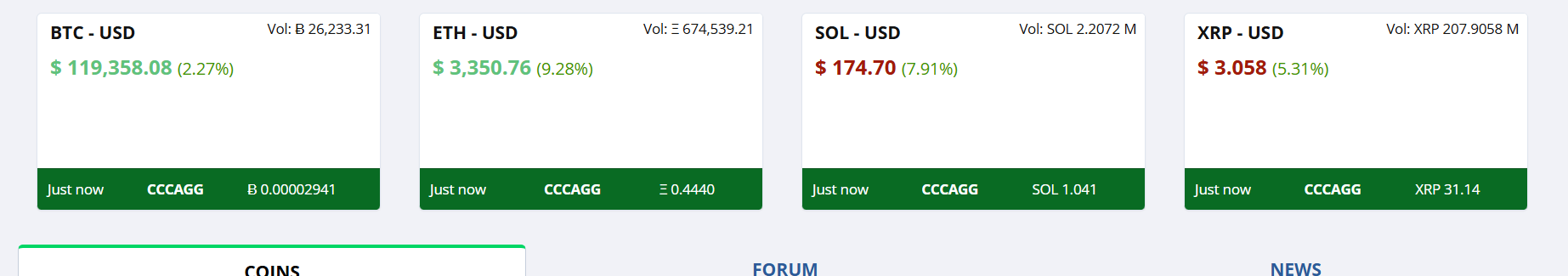

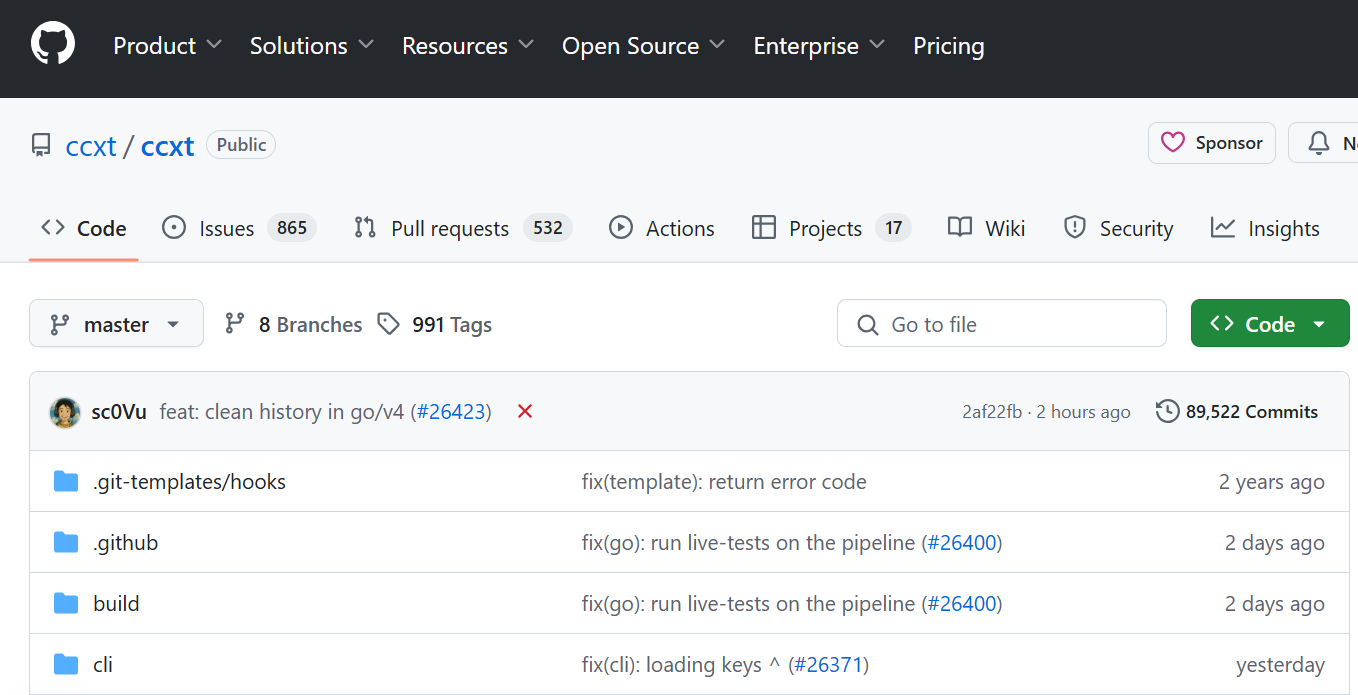

12. CCXT: CCXT is a library for cryptocurrency trading and e-commerce with support for many bitcoin/ether/altcoin exchange markets and merchant APIs.

Price: $0-$29

Note: The prices are subject to change or may have already changed.
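For the exchanges that quote percentage fees, the cost of a trade is simple arithmetic. The sketch below uses the rates quoted in the list above (0.50% for the Bitstamp tier, 0.01% maker and 0.04% taker for Poloniex perpetual contracts). The $2,000 trade size is an arbitrary example, and since the rates may have changed, treat them as placeholders.

```python
from decimal import Decimal


def trade_fee(notional_usd: Decimal, rate_percent: Decimal) -> Decimal:
    """Fee in USD for a trade of the given size, rounded to cents."""
    fee = notional_usd * rate_percent / Decimal(100)
    return fee.quantize(Decimal("0.01"))


# Rates as quoted in this article; they may be out of date.
QUOTED_RATES = {
    "Bitstamp (under $10,000 volume)": Decimal("0.50"),
    "Poloniex perpetuals, maker": Decimal("0.01"),
    "Poloniex perpetuals, taker": Decimal("0.04"),
}

trade = Decimal("2000")  # arbitrary example trade size in USD
fees = {name: trade_fee(trade, rate) for name, rate in QUOTED_RATES.items()}
for name, fee in fees.items():
    print(f"{name}: ${fee}")
```

Using `Decimal` rather than floats avoids rounding surprises when the amounts are money.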

How to Choose the Best Crypto API

Choosing the best cryptocurrency API for your application can be a daunting task. There are a variety of APIs available, each with its own set of features and advantages. So, it’s important to take the time to research the different APIs and determine which one is right for your application.

When choosing a Cryptocurrency API, it’s important to consider the features it provides.

Here are a few questions you need answers to:

- Does the API provide access to real-time price data?

- Does it provide access to the blockchain?

- Does it provide access to a variety of market data?

It’s also important to consider the pricing of the API. As mentioned above, some APIs are free, while others require a subscription fee. It’s important to consider the cost of the API and make sure it fits within your budget.
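The checklist and the budget constraint can be expressed as a simple filter. The sketch below is illustrative only: the candidate names, feature flags, and monthly costs are invented placeholders, not claims about any provider in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    features: frozenset
    monthly_cost_usd: float


def shortlist(candidates, required, budget_usd):
    """Keep candidates that offer every required feature within budget,
    cheapest first."""
    fits = [
        c for c in candidates
        if set(required) <= c.features and c.monthly_cost_usd <= budget_usd
    ]
    return sorted(fits, key=lambda c: c.monthly_cost_usd)


# Placeholder data: fill these in from each provider's own documentation.
candidates = [
    Candidate("Example API A", frozenset({"realtime_prices", "market_data"}), 0),
    Candidate("Example API B", frozenset(
        {"realtime_prices", "blockchain_access", "market_data"}), 49),
    Candidate("Example API C", frozenset(
        {"realtime_prices", "blockchain_access", "market_data"}), 300),
]

picks = shortlist(candidates, {"realtime_prices", "blockchain_access"}, 100)
print([c.name for c in picks])
```

Here only the second placeholder survives: the first lacks blockchain access and the third exceeds the $100 budget.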

Best Practices for Using Crypto APIs

Using crypto APIs can be a great way to access data and create powerful applications. However, there are a few best practices to keep in mind when using these APIs.

- The first best practice is to make sure the API is reliable: it should be kept up to date and return accurate data.

- The second best practice is to make sure the API is secure and that the provider has the necessary security measures in place.

- The third best practice is to use the API responsibly: stay within the provider's usage terms rather than abusing the service.

- Finally, make sure the API is easy to use and quick to understand.
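Two of these practices translate directly into code: keeping the API key out of the codebase, and retrying failed requests with growing pauses instead of hammering the service. The following is a minimal sketch, not any provider's recommended client. The environment variable name `CRYPTO_API_KEY` and the helpers `load_api_key` and `call_with_backoff` are hypothetical.

```python
import os
import time


def load_api_key(var_name: str = "CRYPTO_API_KEY") -> str:
    """Read the key from the environment so it never lives in the codebase."""
    key = os.environ.get(var_name)
    if not key:
        raise RuntimeError(f"Set the {var_name} environment variable first")
    return key


def call_with_backoff(request, attempts: int = 4, base_delay: float = 0.5,
                      sleep=time.sleep):
    """Call request(); on a network error wait base_delay, then 2x, 4x, ...
    and try again, giving up after `attempts` calls."""
    for attempt in range(attempts):
        try:
            return request()
        except OSError:  # covers ConnectionError and urllib's URLError
            if attempt == attempts - 1:
                raise  # out of retries: surface the last error
            sleep(base_delay * 2 ** attempt)


# Demo with a stand-in request that fails twice before succeeding.
calls = {"count": 0}


def flaky_request():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated network failure")
    return {"status": "ok"}


waits = []  # record the delays instead of actually sleeping
response = call_with_backoff(flaky_request, sleep=waits.append)
print(response, waits)
```

Passing `sleep` as a parameter keeps the demo instant and makes the retry schedule easy to inspect. In real use the default `time.sleep` applies.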

To explore our FREE Token Metrics crypto API, click here.

