Best Crypto Liquidity Management & Market-Making Tools (2025)

Who this guide is for. Token teams, exchanges, funds, and DAOs comparing liquidity management and market-making tools to tighten spreads, balance inventories, and support healthy markets.

Top three picks.

- Flowdesk — compliance-first platform + MMaaS across 140+ venues. (flowdesk.co)

- Keyrock — full-stack liquidity (MM, OTC, LP mgmt, NFTs). (Keyrock)

- Wintermute — leading algorithmic liquidity partner across CeFi/DeFi. (wintermute.com)

One key caveat. Fees and engagement models vary widely (retainer, inventory loan/call, performance); confirm scope, reporting, and legal terms before signing. (flowdesk.co)

Introduction: Why Crypto Liquidity Management & Market-Making Tools Matter in November 2025

In crypto, liquidity management and market-making tools keep order books tight, reduce slippage, and stabilize price discovery across centralized and decentralized venues. The primary keyword here is liquidity management and market-making tools, and the right stack blends execution algorithms, risk controls, analytics, and clear reporting so projects can support listings and users can trade efficiently.

In 2025, onchain liquidity is increasingly concentrated and active on AMMs while institutions expect 24/7 coverage and API connectivity across spot, perps, and options. Mature providers now offer compliance-forward processes, automated vaults for concentrated liquidity, and multi-venue execution with transparent documentation. This guide ranks ten credible options, explains trade-offs (costs, custody, venues), and gives you a practical checklist to choose confidently.

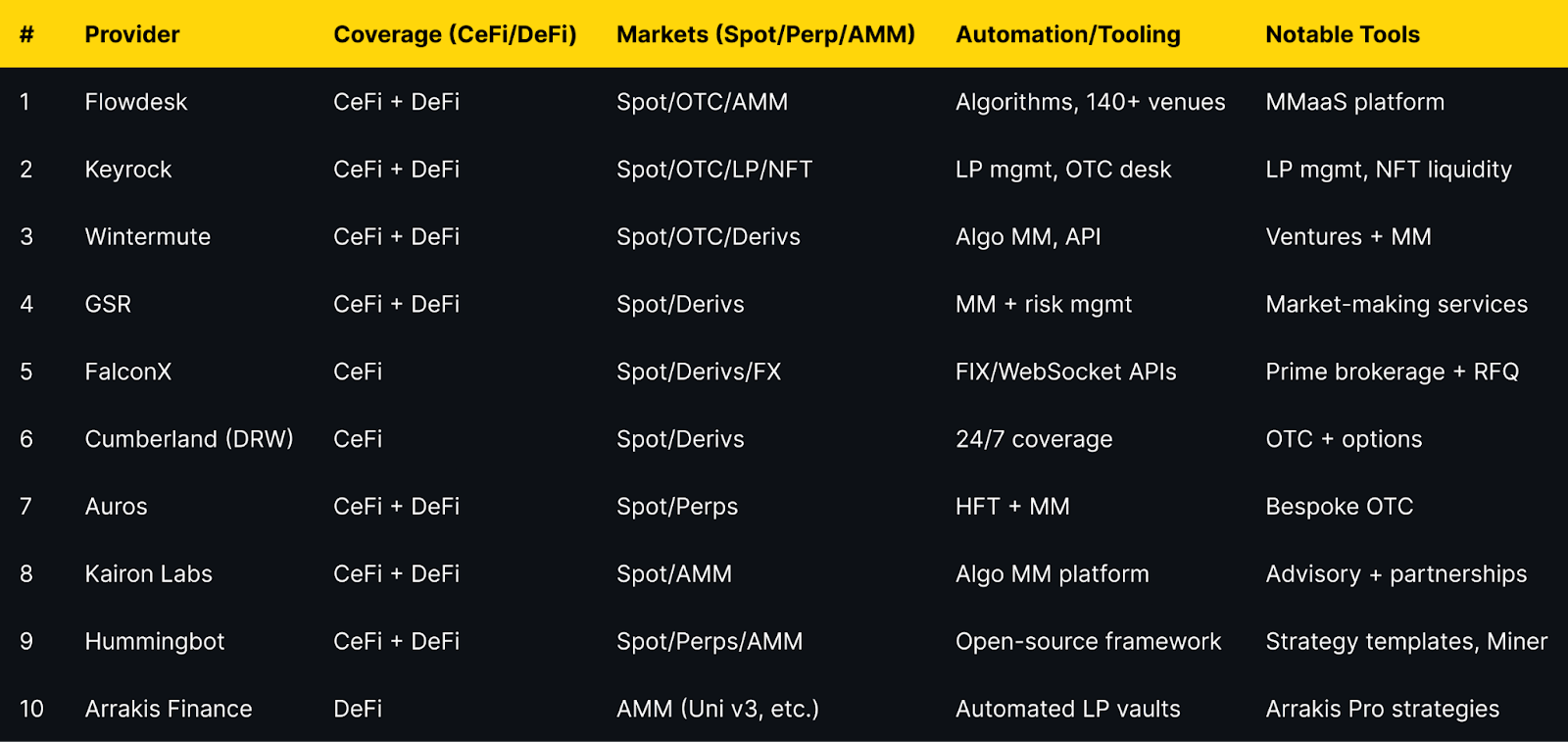

Best Crypto Liquidity Management & Market-Making Tools in November 2025 (Comparison Table)

Evidence for venue coverage and capabilities appears in provider sections below.

Top 10 Crypto Liquidity Management & Market-Making Tools in November 2025

1. Flowdesk — Best for compliance-first MMaaS at global scale

Why Use It. Flowdesk runs a compliance-first market-making and OTC platform with proprietary execution algos and integrations across 140+ centralized and decentralized exchanges, suitable for token issuers that need unified coverage and reporting. (flowdesk.co)

Best For. Token issuers; exchanges; DAOs with multi-venue liquidity needs; teams prioritizing compliance.

Notable Features. Low-latency infrastructure; MMaaS with 24/7 teams; 140+ venue connectivity; internal policies & compliance center. (flowdesk.co)

Consider If. You want documented models (retainer vs loan/call) and clear KPIs before engagement. (flowdesk.co)

Fees Notes. Custom; contract-based; network/exchange fees apply.

Regions. Global; subject to local licensing and restrictions (France DASP registration referenced on site). (flowdesk.co)

Alternatives. Keyrock; GSR.

2. Keyrock — Best for full-stack liquidity (MM, OTC, LP, NFTs)

Why Use It. Keyrock delivers market making, OTC trading, treasury solutions, and liquidity pool management for token issuers and venues; it also offers NFT liquidity and publishes security awareness and terms. (Keyrock)

Best For. Issuers needing both CeFi and DeFi coverage; platforms adding NFT or LP liquidity.

Notable Features. Liquidity pool management; OTC/options; NFT liquidity; research/insights. (Keyrock)

Consider If. You want a single counterparty handling MM + LP mgmt with documented terms. (Keyrock)

Fees Notes. Custom; scope-based; network/exchange fees may apply.

Regions. Global; services subject to applicable laws and platform eligibility.

Alternatives. Flowdesk; Wintermute.

3. Wintermute — Best for algorithmic crypto liquidity at scale

Why Use It. Wintermute is a leading algorithmic trading firm and liquidity partner that supports efficient markets across centralized and decentralized venues, with a broader ventures arm for strategic projects. (wintermute.com)

Best For. Larger token issuers and institutions that want deep, programmatic liquidity and breadth of venues.

Notable Features. Algorithmic MM; OTC; venture support; expanding US presence. (fnlondon.com)

Consider If. You need institutional processes and policy engagement in the US market. (fnlondon.com)

Fees Notes. Custom; inventory/retainer structures typical.

Regions. Global.

Alternatives. GSR; FalconX.

4. GSR — Best for crypto liquidity + risk management depth

Why Use It. GSR offers market-making and risk management across spot and derivatives, working with exchanges, token issuers, and institutions; it publishes regular research and insights. (gsr.io)

Best For. Issuers seeking experienced MM with derivatives coverage and institutional process.

Notable Features. Trading + market making services; exchange connectivity; research hub. (gsr.io)

Consider If. You need structured reporting and risk frameworks across venues.

Fees Notes. Custom; scope-based; exchange/network fees apply.

Regions. Global.

Alternatives. Wintermute; Flowdesk.

5. FalconX — Best prime brokerage + deep crypto liquidity access

Why Use It. FalconX is a prime broker with $2T+ executed and access to 400+ tokens, offering unified spot/derivs/FX execution, OTC, and APIs (WebSocket/FIX/REST). (falconx.io)

Best For. Institutions wanting a single counterparty with RFQ, streaming, and FIX integration.

Notable Features. Deep liquidity pool; algorithmic/TWAP tools; ETF issuer liquidity support; Talos integrations. (falconx.io)

Consider If. You want prime services plus execution algos rather than a pure MM retainer.

Fees Notes. Custom; execution- and volume-based; venue/network fees apply.

Regions. Global (token availability may vary). (falconx.io)

Alternatives. Cumberland; GSR.

6. Cumberland (DRW) — Best for institutional OTC and options

Why Use It. A DRW subsidiary active since 2014 in digital assets, Cumberland provides 24/7 institutional liquidity across spot, listed derivatives, bilateral options, and NDFs, with relationship coverage. (cumberland.io)

Best For. Institutions needing OTC block liquidity and derivatives structures.

Notable Features. OTC/RFQ; BTC/ETH options; futures basis; DRW backing. (cumberland.io)

Consider If. You need large, bespoke trades and derivatives hedging under institutional processes.

Fees Notes. Custom; RFQ spreads/commissions; venue/network fees apply.

Regions. Global, subject to applicable regulations.

Alternatives. FalconX; Wintermute.

7. Auros — Best for HFT-driven market-making with bespoke design

Why Use It. Auros combines high-frequency trading and strategic market making across CeFi and DeFi with bespoke OTC and transparency-oriented reporting for token stability. (Auros)

Best For. Projects seeking a partner for token launch support and stability across venues.

Notable Features. HFT + MM stack; CeFi/DeFi coverage; insights & reporting. (Auros)

Consider If. You want tailored strategies and comms during volatility.

Fees Notes. Custom; scope-based; network/venue fees apply.

Regions. Global.

Alternatives. Kairon Labs; GSR.

8. Kairon Labs — Best issuer-focused market-making + advisory

Why Use It. Kairon Labs provides algorithmic market making, liquidity provision, partnerships, and advisory for issuers, with educational content on MM models (designated vs principal). (kaironlabs.com)

Best For. Small–mid cap issuers needing hands-on guidance plus execution.

Notable Features. Issuer-centric services; partnerships support; model education. (kaironlabs.com)

Consider If. You want advisory plus MM under one roof.

Fees Notes. Custom; scope-based; exchange/network fees apply.

Regions. Global.

Alternatives. Auros; Flowdesk.

9. Hummingbot — Best open-source framework for DIY market making

Why Use It. Hummingbot is an open-source Python framework to run automated strategies on any CEX/DEX, with built-in templates for pure market making and perpetual MM and extensive docs. (hummingbot.org)

Best For. Developers, quant hobbyists, and small desks wanting DIY automation.

Notable Features. Strategy library; Docker/API quickstarts; Miner rewards marketplace. (hummingbot.org)

Consider If. You accept self-hosting and operational overhead instead of a service contract.

Fees Notes. Software is free; trading/withdrawal/network fees still apply.

Regions. Global (open-source).

Alternatives. Arrakis (for LP vaults); Keyrock (for managed LP).

10. Arrakis Finance — Best for automated onchain LP management (Uni v3 & more)

Why Use It. Arrakis provides automated LP vaults and Arrakis Pro strategies for token issuers to manage concentrated liquidity with rebalancing and inventory targeting. (arrakis.finance)

Best For. Projects prioritizing DeFi AMM depth and capital efficiency on Uniswap v3-style DEXs.

Notable Features. Ongoing inventory management; automated rebalancing; issuer-specific vault programs. (arrakis.finance)

Consider If. You need onchain, non-custodial liquidity programs over CeFi MM retainers.

Fees Notes. Protocol/vault fees; gas costs on supported chains.

Regions. Global (onchain).

Alternatives. Hummingbot (DIY); GSR (CeFi/MM).

Decision Guide: Best By Use Case

- Regulated, compliance-first MMaaS: Flowdesk. (flowdesk.co)

- One-stop liquidity incl. NFTs & LP mgmt: Keyrock. (Keyrock)

- Algorithmic MM at institutional scale: Wintermute or GSR. (wintermute.com)

- Prime brokerage + FIX/WebSocket execution: FalconX. (falconx.io)

- OTC blocks + options structures: Cumberland (DRW). (cumberland.io)

- Launch support with HFT expertise: Auros. (Auros)

- Issuer-centric MM + advisory: Kairon Labs. (kaironlabs.com)

- DIY automation (open-source): Hummingbot. (hummingbot.org)

- Onchain concentrated liquidity programs: Arrakis Finance. (arrakis.finance)

How to Choose the Right Crypto Liquidity Management & Market-Making Tool (Checklist)

- Region eligibility & licensing: Confirm provider registrations and legal terms in your jurisdictions.

- Venue coverage: CeFi exchanges, perps venues, and DeFi AMMs you actually need.

- Inventory model: Retainer vs. inventory loan/call; required collateral and risks. (flowdesk.co)

- Execution stack: APIs (FIX/WebSocket/REST), algos, latency, and monitoring. (falconx.io)

- Onchain LP management: If DeFi-first, evaluate vault design, rebalancing, and transparency. (arrakis.finance)

- Reporting & SLAs: Daily/weekly liquidity KPIs, spread targets, uptime, incident process.

- Security & compliance: Insider-trading controls, conflict-of-interest policies, audits/policies page. (flowdesk.co)

- Costs & fees: Understand spread capture, performance fees, platform fees, and gas.

- Offboarding plan: Access to accounts, revocation of keys, vault migrations, and documentation.

Red flags: No written terms, vague reporting, or inability to name supported venues.

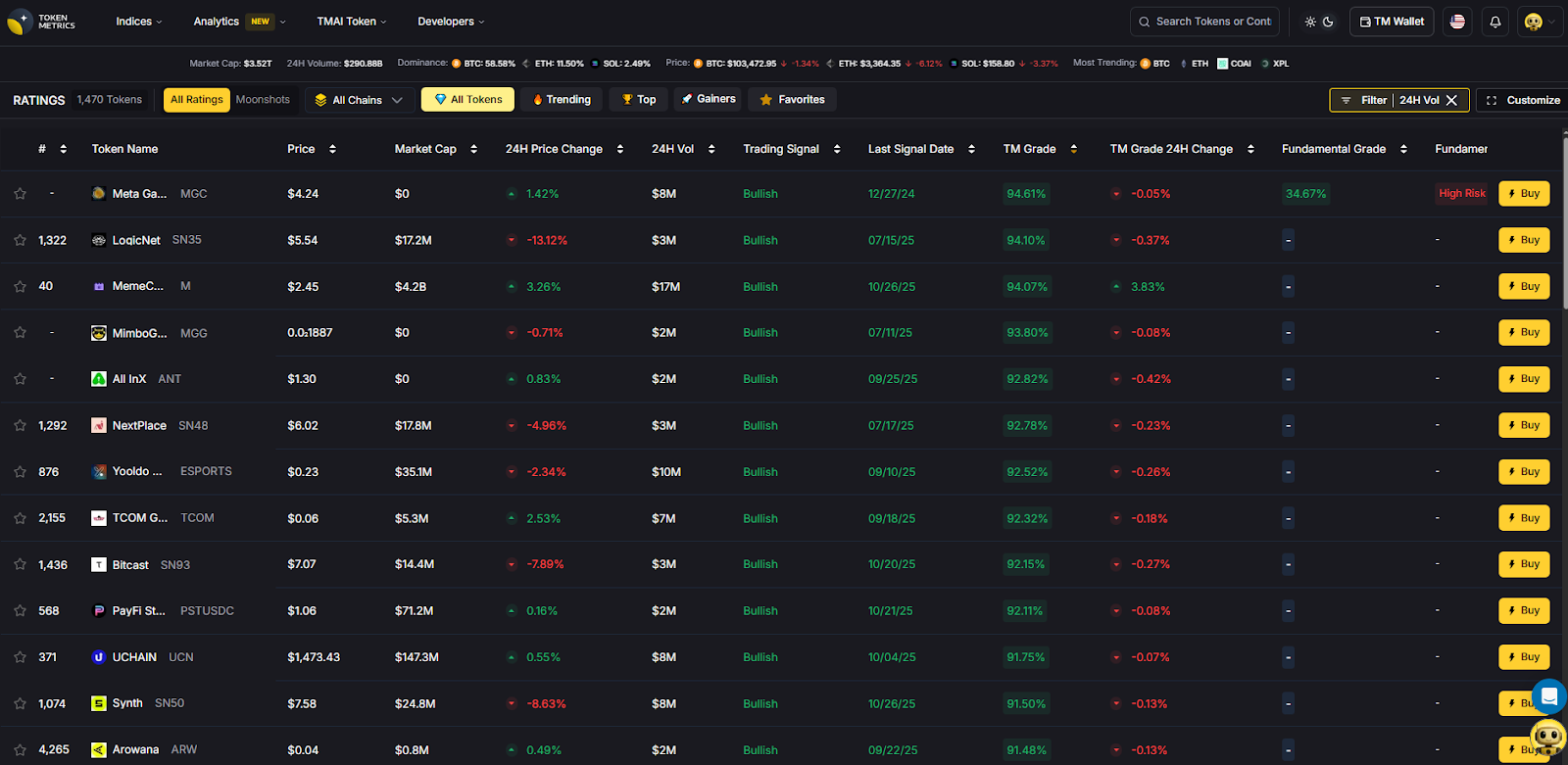

Use Token Metrics With Any Crypto Liquidity Provider

- AI Ratings to screen assets by quality and momentum before listings.

- Narrative Detection to catch early theme shifts that can impact liquidity.

- Portfolio Optimization to size inventory across chains and LPs.

- Alerts & Signals to time entries/exits and rebalance LP ranges.

Workflow: Research in Token Metrics → Select provider → Execute on-chain/CeFi → Monitor with alerts.

Start free trial to screen assets and time entries with AI.

Security & Compliance Tips

- Prefer partners that publish policies/compliance pages and name registrations. (flowdesk.co)

- Segregate exchange accounts and use least-privilege API keys; rotate regularly.

- For DeFi vaults, verify non-custodial design, fee schedules, and admin controls. (arrakis.finance)

- Confirm reporting cadence (inventory, spreads, volume, venue list).

- Use official domains and channels to avoid impersonation. (Keyrock)

- Understand engagement models (retainer vs loan/call) and associated risks. (flowdesk.co)

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Signing without clear KPIs (spread, depth, venue list).

- Ignoring region restrictions or licensing.

- Overlooking DeFi vault mechanics (rebalance rules, fees, inventories). (arrakis.finance)

- Mixing treasury and MM wallets without operational controls.

- Choosing CeFi-only when you need AMM depth (or vice versa).

- Underestimating implementation: APIs, custody, exchange listings, oracle feeds.

How We Picked (Methodology & Scoring)

We scored each provider using the following weights:

- Liquidity — 30% (depth, spreads, execution venues)

- Security — 25% (controls, disclosures, compliance posture)

- Coverage — 15% (CeFi/DeFi, spot/derivs, chain support)

- Costs — 15% (fee clarity, model fit, onchain costs)

- UX — 10% (integration, tooling, reporting)

- Support — 5% (24/7 coverage, responsiveness)

Data sources: official product, docs, pricing/terms, security/policies, and status pages; reputable market datasets used only to cross-check scale and venues. Last updated November 2025.

FAQs

What are liquidity management and market-making tools?

Software platforms and service providers that supply bids/asks, balance inventory, and manage onchain liquidity so markets remain liquid with tighter spreads and lower slippage (CeFi and DeFi).

Are managed market makers or DIY bots safer?

Managed providers handle execution, risk, and reporting under contracts; DIY bots like Hummingbot provide control but require operational expertise and monitoring. Choose based on team capacity and risk tolerance. (hummingbot.org)

How do providers charge?

Common models include retainers, inventory loan/call structures, execution fees/spreads, and protocol/vault fees on DeFi. Clarify model, caps, and KPI targets before engagement. (flowdesk.co)

Can I combine CeFi MM with DeFi vaults?

Yes. Many issuers use a CeFi MM for order books plus an onchain LP manager (e.g., Arrakis) for AMM depth, with shared reporting and risk limits. (arrakis.finance)

Do these tools work in the US/EU/APAC?

Most providers are global but subject to local regulations, listings, and counterparty restrictions. Check each provider’s terms/compliance pages and confirm venue eligibility. (flowdesk.co)

Conclusion + Related Reads

If you want compliance-centric, multi-venue coverage, start with Flowdesk or Keyrock. For institutional scale, add Wintermute or GSR. If you need prime services and execution, consider FalconX or Cumberland. For DIY or onchain-first, evaluate Hummingbot and Arrakis.

Related Reads (Token Metrics):

.svg)

Create Your Free Token Metrics Account

.png)

%201.svg)

%201.svg)

%201.svg)

.svg)

.png)