Best NFT Marketplaces (2025)

Why NFT Marketplaces Matter in September 2025

NFT marketplaces are where collectors buy, sell, and mint digital assets across Ethereum, Bitcoin Ordinals, Solana, and gaming-focused L2s. If you’re researching the best NFT marketplaces to use right now, this guide ranks the leaders for liquidity, security, fees, and user experience, so you can move from research to purchase with confidence. The short answer: choose a regulated venue for fiat on-ramps and beginner safety, a pro venue for depth and tools, or a chain specialist for the collections you care about. We cover cross-chain players (ETH, SOL, BTC), creator-centric platforms, and gaming ecosystems, including marketplace fees, Bitcoin Ordinals venues, and where to buy NFTs.

How We Picked (Methodology & Scoring)

- Liquidity (30%): Active buyers/sellers, depth across top collections, and cross-chain coverage.

- Security (25%): Venue track record, custody options, proof-of-reserves (where relevant), scam countermeasures, fee/royalty transparency.

- Coverage (15%): Chains (ETH/BTC/SOL/Immutable, etc.), creator tools, launchpads, aggregators.

- Costs (15%): Marketplace fees, gas impact, royalty handling, promos.

- UX (10%): Speed, analytics, mobile, bulk/sweep tools.

- Support (5%): Docs, help centers, known regional constraints.
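
The weights above combine into a single score as a weighted sum. The sketch below is only an illustration of that arithmetic: the 0-100 subscore scale, the function name `weighted_score`, and the sample subscores are assumptions, not the ratings used for this ranking.

```python
# Weights copied from the methodology list; they sum to 1.0.
WEIGHTS = {
    "liquidity": 0.30,
    "security": 0.25,
    "coverage": 0.15,
    "costs": 0.15,
    "ux": 0.10,
    "support": 0.05,
}


def weighted_score(subscores: dict[str, float]) -> float:
    """Combine per-criterion subscores (assumed 0-100 scale) into one score."""
    missing = WEIGHTS.keys() - subscores.keys()
    if missing:
        raise ValueError(f"missing subscores: {sorted(missing)}")
    return sum(WEIGHTS[name] * subscores[name] for name in WEIGHTS)


# Hypothetical subscores for illustration only; not this article's actual ratings.
example = {
    "liquidity": 90,
    "security": 80,
    "coverage": 85,
    "costs": 70,
    "ux": 75,
    "support": 60,
}
print(round(weighted_score(example), 2))
```

Because liquidity and security carry 55% of the weight between them, a venue with thin order books tends to rank low under this scheme even if its fees and UX score well.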

We used official product pages, docs and help centers, and security/fee pages, and cross-checked directional volume trends against widely cited market datasets. We link only to official provider sites in this article. Last updated September 2025.

Top 10 NFT Marketplaces in September 2025

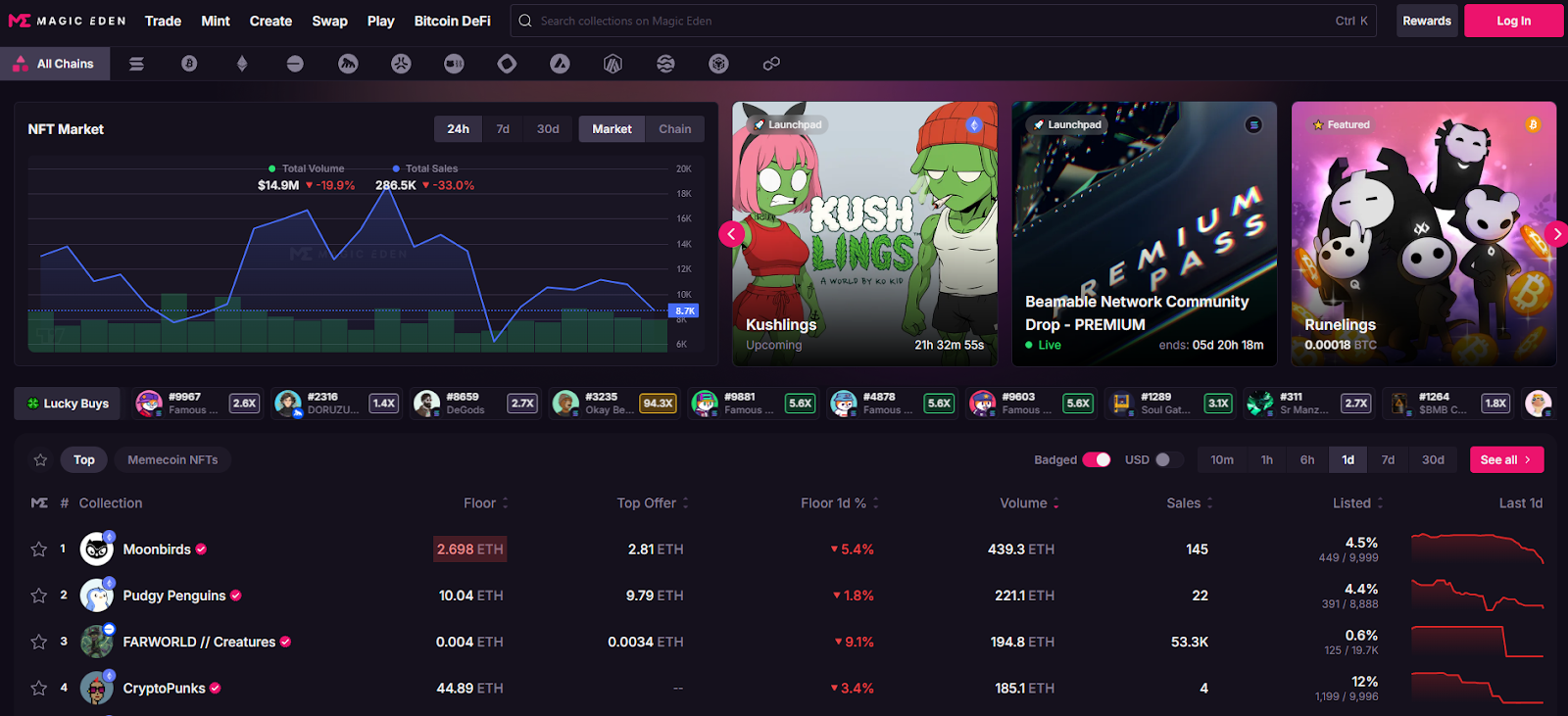

1. Magic Eden — Best for cross-chain collectors (ETH, SOL, BTC & more)

Why Use It: Magic Eden has evolved into a true cross-chain hub spanning Solana, Bitcoin Ordinals, Ethereum, Base and more, with robust discovery, analytics, and aggregation so you don’t miss listings. Fees are competitive and clearly documented, and Ordinals/SOL support is best-in-class for traders and creators. Best For: Cross-chain collectors, Ordinals buyers, SOL natives, launchpad users.

Notable Features: Aggregated listings; trait-level offers; launchpad; cross-chain swap/bridge learning; pro charts/analytics. Consider If: You want BTC/SOL liquidity with low friction; note differing fees per chain. Alternatives: Blur (ETH pro), Tensor (SOL pro).

Regions: Global • Fees Notes: 2% on BTC/SOL; 0.5% on many EVM trades (creator royalties optional per metadata).

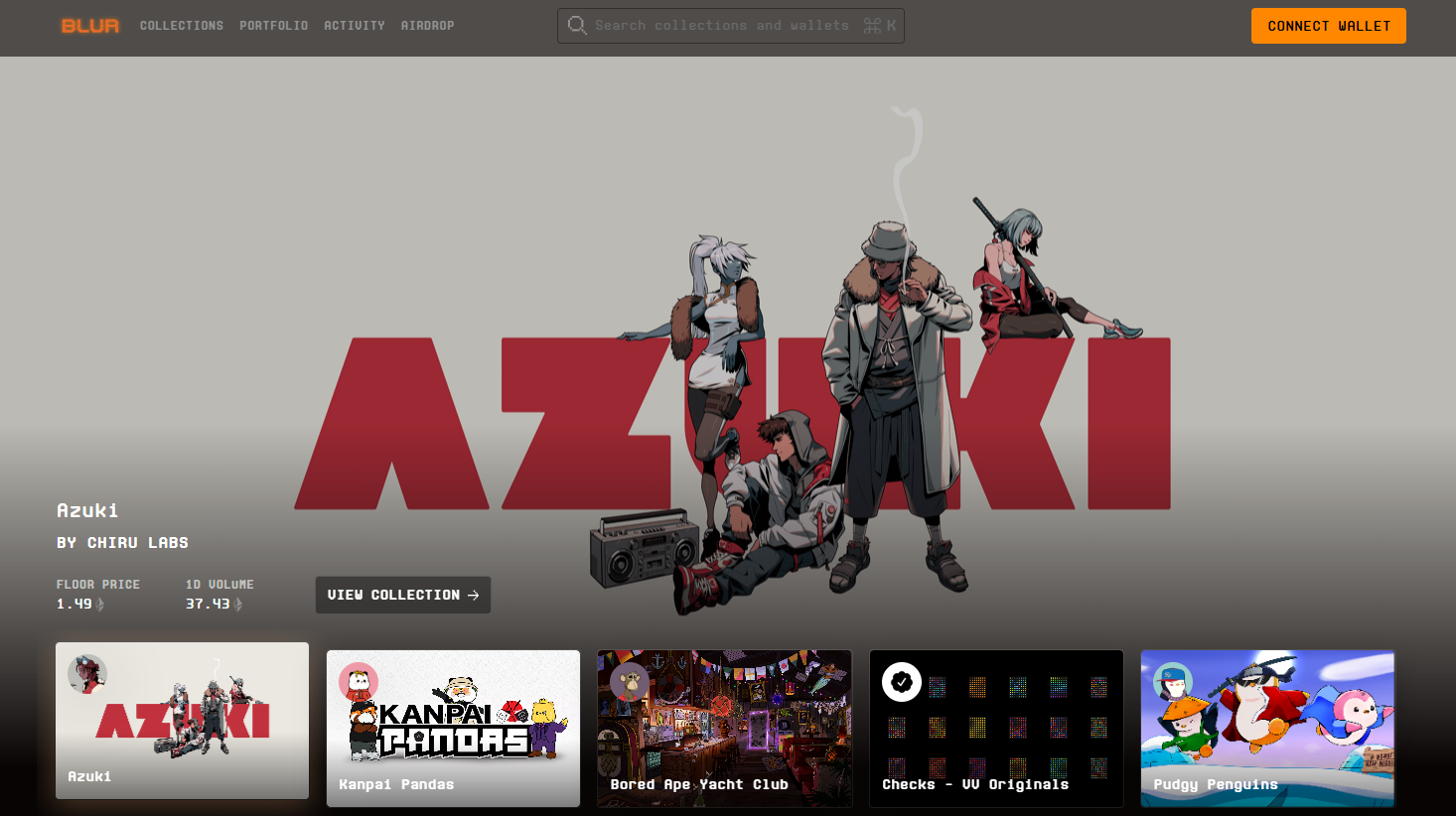

2. Blur — Best for pro ETH traders (zero marketplace fees)

Why Use It: Blur is built for speed, depth, and sweeps. It aggregates multiple markets, offers advanced portfolio analytics, and historically charges 0% marketplace fees—popular with high-frequency traders. Rewards seasons have reinforced liquidity. Best For: Power users, arbitrage/sweep traders, analytics-driven collectors.

Notable Features: Multi-market sweep; fast reveals/snipes; portfolio tools; rewards. Consider If: You prioritize pro tools and incentives over hand-holding UX.

Alternatives: OpenSea (broad audience), Magic Eden (cross-chain).

Regions: Global • Fees Notes: 0% marketplace fee shown on site; royalties subject to collection rules.

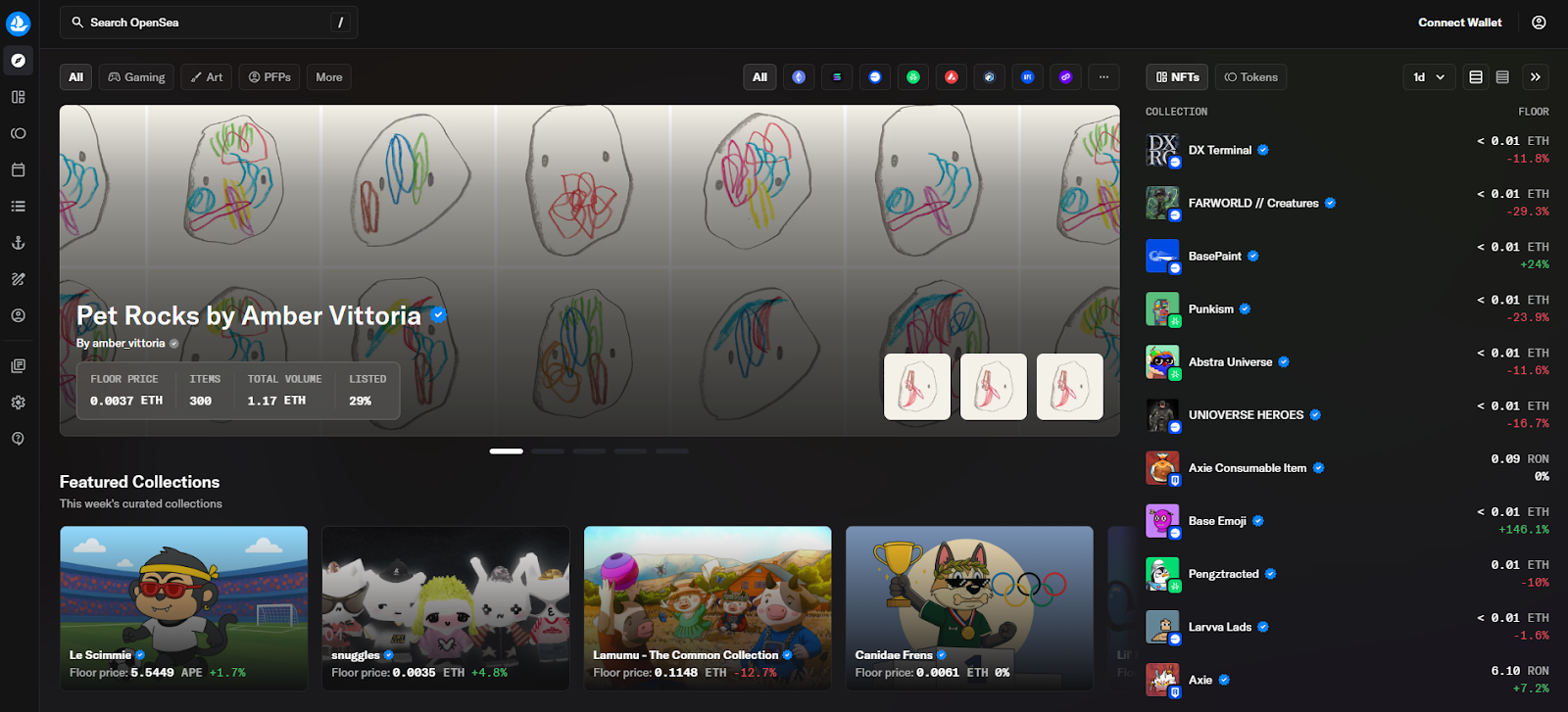

3. OpenSea — Best for mainstream access & breadth

Why Use It: The OG multi-chain marketplace with onboarding guides, wide wallet support, and large catalog coverage. OpenSea’s “OS2” revamp and recent fee policy updates keep it relevant for mainstream collectors who want familiar UX plus broad discovery. Best For: Newcomers, multi-chain browsing, casual collectors.

Notable Features: Wide collection breadth; OpenSea Pro aggregator; flexible royalties; clear TOS around third-party/gas fees. Consider If: You want broadest brand recognition; be aware fees may change. Alternatives: Blur (pro ETH), Rarible (community markets).

Regions: Global (note U.S. regulatory headlines under review) • Fees Notes: Reported trading fee ~1% as of mid-September 2025; creator earnings and gas are separate.

4. Tensor — Best for pro Solana traders

Why Use It: Tensor is the Solana power-user venue with enforced-royalty logic, maker/taker clarity, and pro-grade bidding/escrow. Fast UI, Solana-native depth, and creator tools make it the advanced SOL choice. Best For: SOL traders, market-makers, bid/AMM-style flows.

Notable Features: 0% maker / ~2% taker; enforced royalties paid by taker; shared escrow; price-lock mechanics highlighted in community docs. Consider If: You want pro tools on Solana; fees differ from Magic Eden. Alternatives: Magic Eden (SOL/BTC/ETH), Hyperspace (agg).

Regions: Global • Fees Notes: 2% taker / 0% maker; royalties per collection rules.

5. OKX NFT Marketplace — Best for multi-chain aggregation + Ordinals

Why Use It: OKX’s NFT market integrates with the OKX Web3 Wallet, aggregates across chains, and caters to Bitcoin Ordinals buyers with an active marketplace. Docs highlight multi-chain support and low listing costs. Note potential restrictions for U.S. residents. Best For: Multi-chain deal-hunters, Ordinals explorers, exchange users.

Notable Features: Aggregation; OKX Wallet; BTC/SOL/Polygon support; zero listing fees per help docs. Consider If: You’re outside the U.S. or comfortable with exchange-affiliated wallets. Alternatives: Magic Eden (multi-chain), Kraken NFT (U.S. friendly).

Regions: Global (U.S. access limited) • Fees Notes: Zero listing fee; trading fees vary by venue/collection.

6. Kraken NFT — Best for U.S. compliance + zero gas on trades

Why Use It: Kraken’s marketplace emphasizes security, compliance, and a simple experience with zero gas fees on trades (you pay network gas only when moving NFTs in/out). Great for U.S. users who prefer a regulated exchange brand. Best For: U.S. collectors, beginners, compliance-first buyers.

Notable Features: Zero gas on trades; creator earnings support; fiat rails via the exchange. Consider If: You prioritize regulated UX over max liquidity.

Alternatives: OpenSea (breadth), OKX NFT (aggregation).

Regions: US/EU • Fees Notes: No gas on trades; royalties and marketplace fees vary by collection.

7. Rarible — Best for community marketplaces & no-code storefronts

Why Use It: Rarible lets projects spin up branded marketplaces with custom fee routing (even 0%), while the main Rarible front-end serves multi-chain listings. Transparent fee schedules and community tooling appeal to creators and DAOs. Best For: Creators/DAOs launching branded stores; community traders.

Notable Features: No-code community marketplace builder; regressive fee schedule on main site; ETH/Polygon support. Consider If: You want custom fees/branding or to route fees to a treasury. Alternatives: Zora (creator mints), Foundation (curated art).

Regions: Global • Fees Notes: Regressive service fees on main Rarible; community markets can set fees to 0%.

8. Zora — Best for creator-friendly mints & social coins

Why Use It: Zora powers on-chain mints with a simple flow and a small protocol mint fee that’s partially shared with creators and referrers, and it now layers social “content coins.” Great for artists who prioritize distribution and rewards over secondary-market depth. Best For: Artists, indie studios, open editions, mint-first strategies.

Notable Features: One-click minting; protocol rewards; Base/L2 focus; social posting with coins. Consider If: You value creator economics; secondary liquidity may be thinner than pro venues.

Alternatives: Rarible (community stores), Foundation (curation).

Regions: Global • Fees Notes: Typical mint fee ~0.000777 ETH; reward splits for creators/referrals per docs.

9. Gamma.io — Best for Bitcoin Ordinals creators & no-code launchpads

Why Use It: Gamma focuses on Ordinals with no-code launchpads and a clean flow for inscribing and trading on Bitcoin. If you want exposure to BTC-native art and collections, Gamma is a friendly on-ramp. Best For: Ordinals creators/collectors, BTC-first communities.

Notable Features: No-code minting; Ordinals marketplace; education hub. Consider If: You want BTC exposure vs EVM/SOL liquidity; check fee line items. Alternatives: Magic Eden (BTC), UniSat (wallet+market).

Regions: Global • Fees Notes: Commission on mints/sales; see support article.

10. TokenTrove — Best for Immutable (IMX/zkEVM) gaming assets

Why Use It: TokenTrove is a top marketplace in the Immutable gaming ecosystem with stacked listings, strong filters, and price history—ideal for trading in-game items like Gods Unchained, Illuvium, and more. It plugs into Immutable’s global order book and fee model. Best For: Web3 gamers, IMX/zkEVM collectors, low-gas trades.

Notable Features: Immutable integration; curated gaming collections; alerts; charts. Consider If: You mainly collect gaming assets and want L2 speed with predictable fees.

Alternatives: OKX (aggregation), Sphere/AtomicHub (IMX partners).

Regions: Global • Fees Notes: Immutable protocol fee of ~2% charged to the buyer, plus marketplace maker/taker fees that vary by venue.

Decision Guide: Best By Use Case

- Regulated U.S. access & zero gas on trades: Kraken NFT.

- Global liquidity + cross-chain coverage (BTC/SOL/ETH): Magic Eden.

- Pro ETH tools & zero marketplace fees: Blur.

- Pro Solana depth & maker/taker clarity: Tensor.

- Bitcoin Ordinals creators & no-code launch: Gamma.io.

- Gaming items on Immutable: TokenTrove.

- Community marketplaces (custom fees/branding): Rarible.

- Creator-first minting + rewards: Zora.

How to Choose the Right NFT Marketplace (Checklist)

- Region & eligibility: Are you U.S.-based or restricted? (OKX may limit U.S. users.)

- Collection coverage & chain: ETH/SOL/BTC/IMX? Go where your target collections trade.

- Liquidity & tools: Depth, sweep/bulk bids, analytics, trait offers.

- Fees/royalties: Marketplace fee, royalty policy, and gas impact per chain.

- Security & custody: Exchange-custodied vs self-custody; wallet best practices.

- Support & docs: Clear fee pages, dispute and help centers.

- Red flags: Opaque fee changes, poor communication, or region-blocked access when depositing/withdrawing.

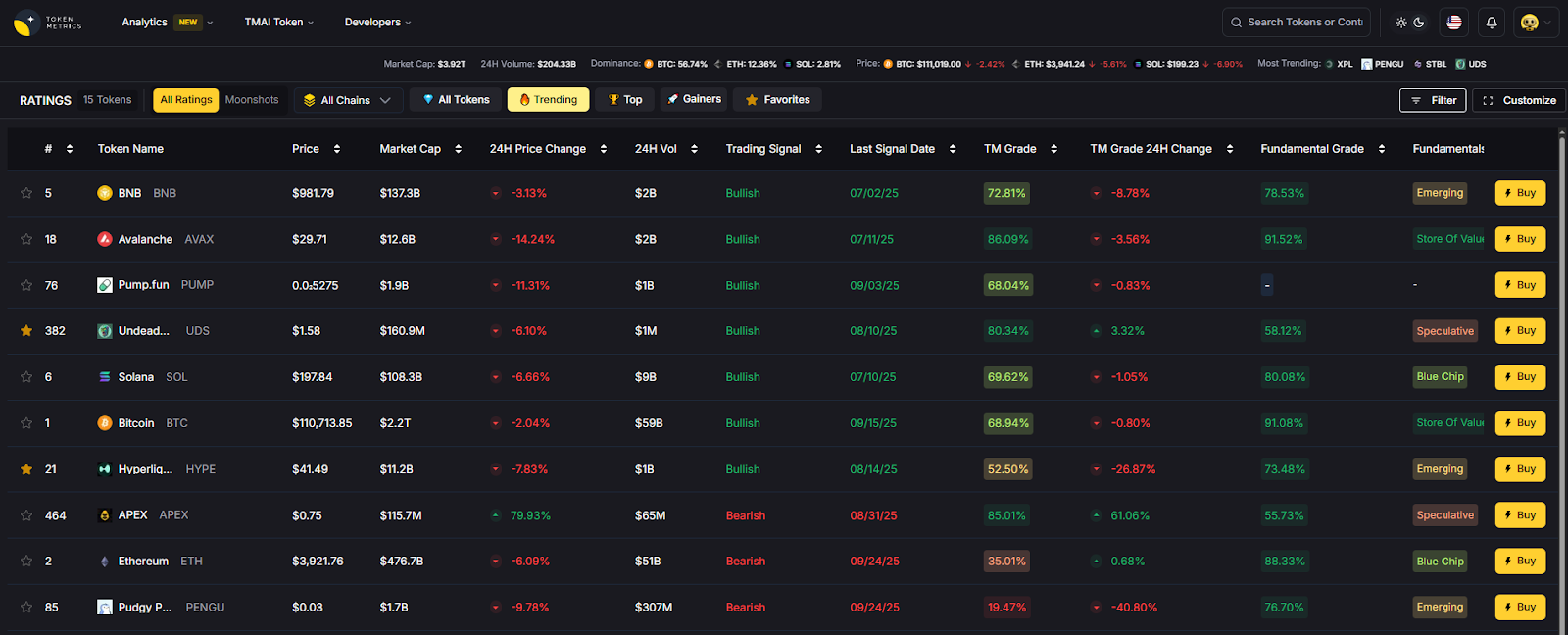

Use Token Metrics With Any NFT Marketplace

- AI Ratings: Screen collections/coins surrounding NFT ecosystems.

- Narrative Detection: Spot momentum across chains (Ordinals, gaming L2s).

- Portfolio Optimization: Balance exposure to NFTs/tokens linked to marketplaces.

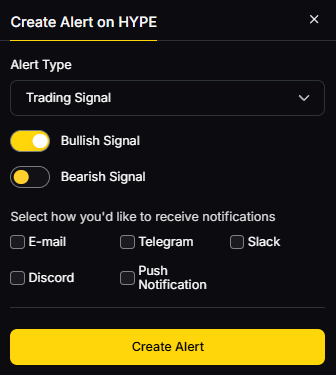

- Alerts & Signals: Track entries/exits and on-chain flows.

Workflow: Research on TM → Pick marketplace above → Execute buys/mints → Monitor with TM alerts.

Security & Compliance Tips

- Enable 2FA and protect seed phrases; prefer hardware wallets for valuable assets.

- Understand custody: exchange-custodied (simpler) vs self-custody (control).

- Complete KYC/AML where required; mind regional restrictions.

- Verify collection royalties and contract addresses to avoid fakes.

- Practice wallet hygiene: revoke stale approvals; separate hot/cold wallets.

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Ignoring fees (marketplace + gas + royalties) that change effective prices.

- Buying unverified collections or wrong contract addresses.

- Using one wallet for everything instead of separating hot and cold funds.

- Skipping region checks (e.g., U.S. access on some exchange-run markets).

- Over-relying on hype without checking liquidity and historical sales.

FAQs

What is an NFT marketplace?

An NFT marketplace is a platform where users mint, buy, and sell NFTs (digital assets recorded on a blockchain). Marketplaces handle listings, bids, and transfers—often across multiple chains like ETH, BTC, or SOL.

Which NFT marketplace has the lowest fees?

Blur advertises 0% marketplace fees on ETH; Magic Eden lists 0.5% on many EVM trades and ~2% on SOL/BTC; Tensor uses 0% maker/2% taker. Always factor gas and royalties.
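
To see how those rates change what you actually pay, here is a rough sketch. It assumes the marketplace fee and royalty are percentages added on top of the listing price and paid by the buyer; real venues differ on who pays and how, so treat it as a simplified model. The function name `all_in_cost`, the 5% royalty, and the gas figure are hypothetical values chosen for illustration.

```python
def all_in_cost(price: float, marketplace_fee: float,
                royalty: float = 0.0, gas: float = 0.0) -> float:
    """Estimate a buyer's total cost in the chain's native token.

    Simplifying assumption: fee and royalty are fractions of the listing
    price charged on top of it; gas is a flat amount in the same token.
    """
    return price * (1.0 + marketplace_fee + royalty) + gas


# Marketplace fee rates as quoted in the answer above.
VENUE_FEES = {
    "Blur (ETH)": 0.0,
    "Magic Eden (EVM)": 0.005,
    "Magic Eden (SOL/BTC)": 0.02,
    "Tensor (taker)": 0.02,
}

# Hypothetical inputs: 1.0 token listing, 5% royalty, 0.002 token gas.
for venue, fee in VENUE_FEES.items():
    cost = all_in_cost(price=1.0, marketplace_fee=fee, royalty=0.05, gas=0.002)
    print(f"{venue}: {cost:.4f}")
```

In this model a 0% marketplace fee does not make a trade free: the royalty and gas terms still apply, which is why the lowest headline fee is not always the lowest all-in price.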

What’s best for Bitcoin Ordinals?

Magic Eden and Gamma are strong choices; UniSat’s wallet integrates with a marketplace as well. Pick based on fees and tooling.

What about U.S.-friendly options?

Kraken NFT is positioned for U.S. users with zero gas on trades. Check any exchange venue’s regional policy before funding.

Are royalties mandatory?

Policies vary: some venues enforce royalties (e.g., Tensor enforces per collection); others make royalties optional. Review each collection’s page and marketplace rules.

Do I still pay gas?

Yes, on most chains. Some custodial venues remove gas on trades but charge gas when you deposit/withdraw.

Conclusion + Related Reads

If you want cross-chain liquidity and discovery, start with Magic Eden. For pro ETH execution, Blur leads; for pro SOL, choose Tensor. U.S. newcomers who value compliance and predictability should consider Kraken NFT. Gaming collectors on Immutable can lean on TokenTrove.

Related Reads:

- Best Cryptocurrency Exchanges 2025

- Top Derivatives Platforms 2025

- Top Institutional Custody Providers 2025
