Chainlink (LINK) Price Prediction 2025, 2030 - Forecast Analysis

Chainlink (LINK) has emerged as a prominent player in the cryptocurrency market, offering a decentralized network of oracles that connect smart contracts with real-world data sources.

With its unique value proposition and growing adoption, many investors are curious about the future price potential of Chainlink.

In this article, we will comprehensively analyze Chainlink's price prediction, covering various timeframes, expert opinions, and market trends.

Chainlink Overview

Chainlink is an open-source network of oracles that connects smart contracts and external data sources. It acts as a bridge between blockchain platforms and real-world data, ensuring the accuracy and reliability of data inputs into smart contracts.

Built on the Ethereum blockchain, Chainlink offers services to various blockchains with smart contract functionality. The native cryptocurrency of Chainlink is called LINK, which serves two primary purposes within the network.

Firstly, it incentivizes node operators or oracles to provide reliable, accurate off-chain data. Secondly, it is staked by node operators to participate in the network and earn more LINK tokens.

Chainlink has found applications in various sectors, including decentralized finance (DeFi), gaming, insurance, and traditional systems.

In DeFi, Chainlink provides reliable price feeds, interest rates, and collateralization confirmation for lending protocols and decentralized exchanges.

It also offers solutions for generating on-chain verifiable randomness in gaming applications. Additionally, Chainlink enables traditional systems to make their data and services accessible to blockchain networks through its oracles.

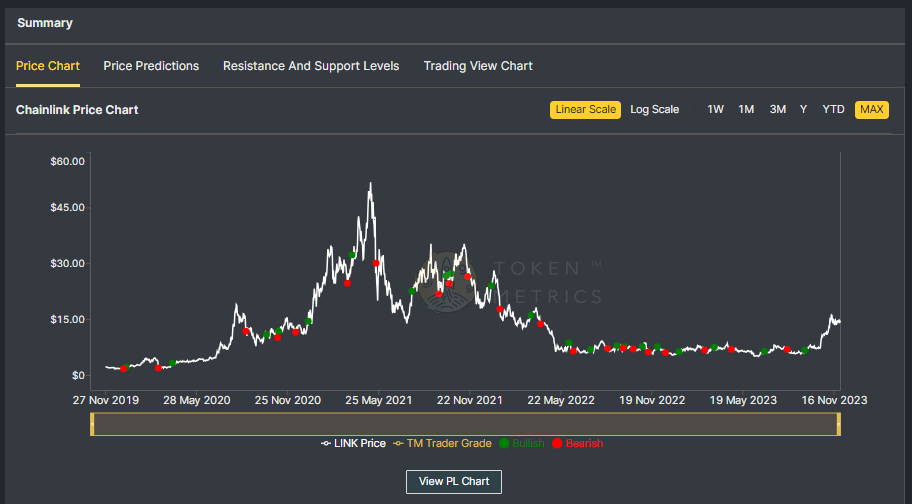

Historical Performance of Chainlink

To understand the potential future price of Chainlink, it is essential to analyze its historical performance. Chainlink has experienced significant growth since its launch in 2017.

The price of LINK surged from its ICO price of $0.11 to an all-time high of $54.40. However, like any cryptocurrency, it has also experienced price volatility and periods of correction.

Chainlink's price has been bullish in recent years, reaching significant milestones in market capitalization and adoption.

Despite facing resistance around $12, Chainlink has maintained its position as the 13th largest cryptocurrency by market cap. The current market cap of Chainlink is $8.2 billion.

Chainlink (LINK) Current Fundamentals

Before making any price predictions, it is crucial to assess the current fundamentals of Chainlink. The network's partnerships, developments, and technological advancements significantly influence its future price potential.

Chainlink has formed strategic collaborations with prominent cryptocurrency and traditional finance entities. For example, Coinbase Cloud is now a Chainlink node operator, enhancing security and network capacity.

The platform has also achieved interoperability with multiple blockchains, including Bitcoin, Polkadot, and Binance Smart Chain.

Furthermore, Chainlink's solutions have been widely adopted in various industries. In decentralized finance, Chainlink's oracles provide reliable price data for lending protocols like Aave.

The network has also been utilized in gaming applications, insurance markets, and traditional systems to automate processes and access real-time data.

Chainlink Price Prediction - Scenario Analysis

To forecast Chainlink's future price, it is crucial to consider multiple scenarios based on different market conditions and expert opinions. Let's explore some potential price predictions for Chainlink.

Chainlink Price Prediction: Bullish Market

In a bull market scenario where the overall cryptocurrency market experiences significant growth, Chainlink could benefit from increased adoption and demand for its services. Industry experts predict that Chainlink's price could reach new highs in this case.

If the total crypto market cap reaches $3 trillion and Chainlink retains its current 0.54% dominance, its price could rise to $29.39.

In a more bullish scenario in which the crypto market surges to $10 trillion, Chainlink's price could climb to $97.97, a potential return of roughly 7x for investors.
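
The arithmetic behind these scenario figures can be sketched in a few lines of Python. This is a minimal illustration rather than the model actually used for the forecast: it assumes that price equals total crypto market cap times LINK dominance divided by circulating supply. No supply figure is quoted in this article, so the sketch backs out an implied supply from the $3 trillion case. The function and variable names are hypothetical.

```python
# Sketch of the dominance-based scenario arithmetic (illustrative only).
# Assumption: price = total crypto market cap * LINK dominance / circulating supply.

def implied_price(total_market_cap: float, dominance: float, supply: float) -> float:
    """Return the token price implied by a market-wide cap and a dominance share."""
    return total_market_cap * dominance / supply

DOMINANCE = 0.0054        # 0.54% dominance, as quoted in the text
CURRENT_LINK_CAP = 8.2e9  # $8.2 billion LINK market cap, as quoted in the text

# Assumption: no circulating supply is quoted, so back it out of the
# "$3 trillion -> $29.39" case instead of inventing a number.
implied_supply = 3e12 * DOMINANCE / 29.39

price_at_10t = implied_price(10e12, DOMINANCE, implied_supply)

# Baseline price implied by the quoted $8.2 billion market cap.
baseline_price = CURRENT_LINK_CAP / implied_supply
return_multiple = price_at_10t / baseline_price

print(f"Implied supply:        {implied_supply:,.0f} LINK")
print(f"Price at $10T market:  ${price_at_10t:.2f}")
print(f"Multiple vs. baseline: {return_multiple:.1f}x")
```

Under these assumptions, the $10 trillion case reproduces the $97.97 figure, and the multiple against the price implied by the $8.2 billion market cap comes out a little under 7x.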

It is important to note that these predictions are based on various factors and assumptions, and market conditions can change rapidly. However, bullish market sentiment and increased adoption of Chainlink's solutions could drive its price to new heights.

Chainlink Price Prediction: Bearish Market

In a bearish market scenario where the overall cryptocurrency market faces a downturn or stagnation, Chainlink's price could experience a decline or slower growth. Market sentiment and external factors can significantly impact the price of cryptocurrencies.

In this case, the price of Chainlink may face downward pressure, with its dominance falling to 0.27%.

In this scenario, if the crypto market cap hits $3 trillion, Chainlink could trade around $14.89 in the short term and struggle to surpass $48.98 by 2030, even if the total crypto market cap surpasses the $10 trillion mark.
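
Turning the same relationship around shows how much total market growth a given price target would require at reduced dominance. The sketch below is illustrative only: it assumes that price equals total crypto market cap times dominance divided by circulating supply, and it backs out an implied supply from the "$10 trillion at 0.27% gives $48.98" case, because no supply figure is quoted in this article. The function and variable names are hypothetical.

```python
# Sketch: invert the dominance relationship to get the total market cap
# required for a target LINK price (illustrative only).

def required_total_cap(target_price: float, dominance: float, supply: float) -> float:
    """Total crypto market cap at which `target_price` is reached for a given dominance."""
    return target_price * supply / dominance

BEAR_DOMINANCE = 0.0027  # 0.27%, as quoted in the text
BASE_DOMINANCE = 0.0054  # 0.54%, the current dominance quoted in the bullish scenario
ALL_TIME_HIGH = 54.40    # all-time high quoted earlier in the article

# Assumption: back the circulating supply out of the
# "$10 trillion at 0.27% -> $48.98" case rather than inventing a number.
implied_supply = 10e12 * BEAR_DOMINANCE / 48.98

cap_needed_bear = required_total_cap(ALL_TIME_HIGH, BEAR_DOMINANCE, implied_supply)
cap_needed_base = required_total_cap(ALL_TIME_HIGH, BASE_DOMINANCE, implied_supply)

print(f"Cap needed at 0.27% dominance: ${cap_needed_bear / 1e12:.1f} trillion")
print(f"Cap needed at 0.54% dominance: ${cap_needed_base / 1e12:.1f} trillion")
```

Because price scales linearly with dominance in this simplified model, halving dominance doubles the total market cap needed to reach the same price target.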

It is crucial to consider potential risks and challenges that Chainlink may face. Factors such as regulatory developments, competition from other projects, and technological advancements can affect the growth trajectory of Chainlink.

Chainlink Price Prediction - Industry Expert Opinion

Coinpedia predicts that Chainlink could reach $213.10 to $253.51 by 2030. Experts from Changelly forecast minimum and maximum prices of $30.69 and $38.26 by the end of 2025, rising to a range of $196.53 to $244.09 by the end of 2030.

Other industry analysts have varying opinions on the future price of Chainlink. Ambcrypto.com predicts that Chainlink could reach $40.30 to $60.45 by 2030.

Is Chainlink a Good Investment?

The question of whether Chainlink is a good investment depends on various factors, including the investor's risk tolerance, investment goals, and market conditions. Chainlink has shown significant potential with its innovative solutions and growing adoption in various sectors.

Chainlink's decentralized oracle network addresses a critical limitation of smart contracts, enabling them to access real-world data seamlessly.

This unique value proposition has attracted the attention of cryptocurrency enthusiasts and traditional businesses looking to leverage blockchain technology.

However, conducting thorough research and due diligence is important before investing in cryptocurrency.

Investors should consider the project's fundamentals, team expertise, market demand, and potential risks. Consulting with financial advisors or experts in the cryptocurrency space can also provide valuable insights.

Risks and Rewards

As with any investment, Chainlink carries both risks and rewards. It is important to consider these factors before making investment decisions.

Risks

- Market Volatility: Cryptocurrency markets are known for their volatility, and Chainlink is no exception. Price fluctuations can be significant, and investors should be prepared for potential losses.

- Regulatory Uncertainty: The regulatory landscape for cryptocurrencies is evolving. Changes in regulations or government actions can impact the adoption and growth of Chainlink.

- Competition: The blockchain industry is highly competitive, with several projects offering similar solutions. Chainlink faces competition from established players and emerging projects in the oracle space.

Rewards

- Growing Adoption: Chainlink has gained significant traction in various industries, including DeFi, gaming, and insurance. Increased adoption of Chainlink's solutions can drive demand for its services and potentially lead to price appreciation.

- Technological Advancements: Chainlink continues to innovate and improve its offerings. Developing new features and partnerships can enhance the network's capabilities and attract more users.

- Strong Fundamentals: Chainlink's decentralized oracle network has solid fundamentals, addressing a critical need in the blockchain ecosystem. Its value proposition and expanding use cases position it as a potential leader in the space.

Chainlink Current Developments and Future Potential

Chainlink has been making significant strides in its development and partnerships, positioning itself for future growth. The network's recent collaborations with Coinbase and its interoperability across multiple blockchains demonstrate its potential to expand its reach and impact.

Additionally, Chainlink's solutions have gained recognition in the decentralized finance sector, where reliable price data and oracles are essential to the functioning of lending protocols and decentralized exchanges.

As the DeFi industry grows, Chainlink's services will likely remain in high demand. The future potential of Chainlink lies in its ability to continue innovating and addressing the evolving needs of the blockchain ecosystem.

The network's scalability, security, and reliability will be crucial in attracting more users and establishing itself as a critical player in the industry.

Conclusion

Chainlink's price prediction involves various factors, including market conditions, expert opinions, and the project's fundamentals.

While external factors and market trends can influence the future price of Chainlink, its strong fundamentals, growing adoption, and expanding use cases position it as a promising project in the blockchain space.

Investors should conduct thorough research, assess risk tolerance, and consider market conditions before making investment decisions. Chainlink's unique value proposition, partnerships, and technological advancements make it an intriguing project to watch in the coming years.

Disclaimer

The information provided on this website does not constitute investment advice, financial advice, trading advice, or any other advice, and you should not treat any of the website's content as such.

Token Metrics does not recommend that any cryptocurrency should be bought, sold, or held by you. Conduct your due diligence and consult your financial advisor before making investment decisions.
