How Does Bitcoin Differ From Ethereum: A Comprehensive 2025 Analysis

The cryptocurrency space continues to evolve rapidly, and Bitcoin and Ethereum remain the two dominant digital assets in the crypto market. Both run on blockchain technology, yet they differ fundamentally in design, purpose, and investment profile. This article compares Bitcoin and Ethereum and explains the key differences between them, which investors and enthusiasts need to understand to navigate the cryptocurrency market of 2025 effectively.

Introduction to Bitcoin and Ethereum

Bitcoin and Ethereum are the two most prominent digital assets in the cryptocurrency market, with a combined market capitalization well above $2 trillion. Both use blockchain technology, which provides a decentralized and secure way to record and verify transactions. Despite this shared foundation, their purposes and functionality diverge significantly.

Bitcoin is widely recognized as digital gold—a decentralized digital currency designed to serve as a store of value and a hedge against inflation. Its primary function is to enable peer-to-peer transactions without the need for a central authority, making it a pioneering force in the world of digital money. In contrast, Ethereum is a decentralized platform that goes beyond digital currency. It empowers developers to build and deploy smart contracts and decentralized applications (dApps), opening up a world of possibilities for programmable finance and innovation.

Understanding the underlying technology, value propositions, and investment potential of both bitcoin and ethereum is crucial for anyone looking to participate in the evolving landscape of digital assets. Whether you are interested in the stability and scarcity of bitcoin or the versatility and innovation of the ethereum network, both offer unique opportunities in the rapidly growing world of blockchain technology.

Fundamental Purpose and Design Philosophy

Bitcoin launched in 2009 as the first decentralized digital currency and is often described as “digital gold.” It was designed as a peer-to-peer electronic cash system and store of value that operates without a central authority or intermediaries such as a central bank, independent of traditional financial systems. Bitcoin prioritizes simplicity, security, decentralization, and a predictable long-term monetary policy, aiming to enable trustless transactions while serving as a hedge against inflation. Its simplicity sets it apart from more complex blockchain platforms and supports its long-term stability and adoption. Its supply is capped at 21 million bitcoins, and this finite supply gives it a scarcity akin to that of precious metals.
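The 21 million cap is not stored as a single constant; it follows from the issuance schedule. The minimal sketch below assumes the commonly documented parameters (an initial subsidy of 50 BTC per block, halved every 210,000 blocks, with amounts tracked in integer satoshis of 10^-8 BTC and each halving done as a right shift) and sums the block subsidies until they reach zero. The constant and function names are illustrative, not taken from Bitcoin Core.

```python
# Illustrative model of Bitcoin's issuance schedule (not Bitcoin Core code).
SATOSHIS_PER_BTC = 100_000_000
INITIAL_SUBSIDY = 50 * SATOSHIS_PER_BTC  # block reward at launch, in satoshis
HALVING_INTERVAL = 210_000               # blocks between subsidy halvings


def total_supply_satoshis() -> int:
    """Sum every block subsidy until integer halving drives it to zero."""
    total = 0
    subsidy = INITIAL_SUBSIDY
    while subsidy > 0:
        total += subsidy * HALVING_INTERVAL
        subsidy >>= 1  # halve, discarding any fractional satoshi
    return total


if __name__ == "__main__":
    sats = total_supply_satoshis()
    print(f"{sats:,} satoshis = {sats / SATOSHIS_PER_BTC:,.8f} BTC")
```

Under these assumptions the sum comes to 2,099,999,997,690,000 satoshis, just under 21 million BTC, because each halving rounds the subsidy down to a whole satoshi.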

In contrast, Ethereum, launched in 2015, represents a major shift from a mere digital currency to a programmable blockchain platform. Often referred to as “the world computer,” Ethereum enables developers to create decentralized applications (dApps) and smart contracts—self-executing code that runs on the blockchain without downtime or interference. This capability allows the Ethereum ecosystem to support a vast array of decentralized finance (DeFi) protocols, tokenized assets, and automated agreements, making it a core infrastructure for innovation in the cryptocurrency space.

Understanding the Developers

The ongoing development of Bitcoin and Ethereum reflects the strength and vision of their respective communities. Bitcoin was launched by the pseudonymous Satoshi Nakamoto, whose identity remains unknown, and its evolution is now guided by a global network of developers. These contributors collaborate on Bitcoin Core, the open-source reference implementation of the Bitcoin protocol, working to keep the network secure, reliable, and decentralized.

Ethereum, on the other hand, was conceived by Vitalik Buterin and is supported by the Ethereum Foundation, a non-profit organization dedicated to advancing the Ethereum network. The foundation supports the work of developers, researchers, and entrepreneurs who drive innovation across the platform. A cornerstone of Ethereum's technical architecture is the Ethereum Virtual Machine (EVM), which executes smart contracts and decentralized applications. The EVM allows the Ethereum network to support a wide range of programmable use cases, from decentralized finance to tokenized assets.

Both bitcoin and ethereum benefit from active, passionate developer communities that continually enhance their networks. The collaborative nature of these projects ensures that both platforms remain at the forefront of blockchain technology and digital asset innovation.

Market Capitalization and Performance in 2025

As of 2025, Bitcoin's market capitalization of approximately $2.3 trillion is more than four times Ethereum's market capitalization of about $530 billion. Ethereum's, in turn, is about three times that of the next-largest cryptocurrency, giving it a commanding lead over the rest of the market.

The two assets' price performance has also diverged. After Bitcoin's April 2024 halving, which cut the block subsidy from 6.25 to 3.125 BTC and so slowed the rate at which new bitcoins are created, Bitcoin's price rose by around 16% through March 2025. Ethereum fell by nearly 50% over the same period, reflecting its higher volatility and sensitivity to broader market trends. It has since rebounded by more than 50%, underscoring the different risk and reward profiles of the two assets.
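Percentage moves compound rather than add, so a rebound of the same percentage does not undo a drop. The short sketch below uses round illustrative figures (a 50% decline followed by a 50% gain), not the exact moves cited above, to show where such a sequence leaves the price relative to its starting point.

```python
from functools import reduce


def compound(returns: list[float]) -> float:
    """Ending value of 1 unit after applying each fractional return in turn."""
    return reduce(lambda value, r: value * (1 + r), returns, 1.0)


# Illustrative figures only: a 50% drop, then a 50% rebound.
ending = compound([-0.50, 0.50])
print(f"Ending value: {ending:.2f} of the starting price")  # 0.75

# Gain required to fully recover from a 50% drop.
recovery_needed = 1 / (1 - 0.50) - 1
print(f"Gain needed to recover a 50% drop: {recovery_needed:.0%}")  # 100%
```

A 50% rebound after a 50% fall leaves the asset at 75% of where it started; recovering fully would take a 100% gain.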

Technical Architecture, Blockchain Technology, and Consensus Mechanisms

Bitcoin and Ethereum differ significantly in their underlying technology and consensus mechanisms, the rules by which a network's participants validate transactions and agree on the state of the ledger. Proof-of-Work (PoW) and Proof-of-Stake (PoS) are the two mechanisms relevant here. Bitcoin uses Proof-of-Work: transactions are grouped into blocks, and miners compete to find a block whose hash meets a difficulty target, a computational puzzle that can only be solved by trial and error. The winning miner appends the block to Bitcoin's blockchain, the network's decentralized ledger, and earns the block reward. This process is highly secure and decentralized, but it consumes substantial energy. For example, the figure of roughly 112 trillion often cited for Bitcoin mining in 2025 is the network's difficulty; since one unit of difficulty corresponds to about 2^32 hashes, finding a single block takes on the order of 4.8 × 10^23 hash attempts on average. To address Bitcoin's limited transaction speed and scalability, the Lightning Network, a payment protocol layered on top of Bitcoin, has been developed to enable faster, lower-cost payments.
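The trial-and-error search at the heart of Proof-of-Work can be sketched in a few lines. This is a toy model under simplifying assumptions: it hashes an arbitrary byte string with double SHA-256 (the hash function Bitcoin uses) and accepts a nonce once the hash, read as an integer, falls below a target with a chosen number of leading zero bits. Real block headers have a fixed 80-byte layout and the target is encoded in a compact `bits` field, neither of which is modeled here; `mine` and `expected_hashes_per_block` are hypothetical helper names.

```python
import hashlib


def double_sha256(data: bytes) -> bytes:
    """Bitcoin-style double SHA-256."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def mine(block_data: bytes, zero_bits: int) -> tuple[int, str]:
    """Try nonces until the hash, as a 256-bit integer, is below the target.

    A target of 2**(256 - zero_bits) needs about 2**zero_bits attempts on average.
    Bitcoin itself reads hash bytes in little-endian order; big-endian is used
    here so the leading zeros are visible in the hex output.
    """
    target = 1 << (256 - zero_bits)
    nonce = 0
    while True:
        digest = double_sha256(block_data + nonce.to_bytes(8, "little"))
        if int.from_bytes(digest, "big") < target:
            return nonce, digest.hex()
        nonce += 1


def expected_hashes_per_block(difficulty: float) -> float:
    """Approximate average hash attempts per block: difficulty * 2**32."""
    return difficulty * 2**32


if __name__ == "__main__":
    nonce, digest = mine(b"example block: alice pays bob 1 BTC", zero_bits=16)
    print(f"nonce={nonce} hash={digest}")
    print(f"{expected_hashes_per_block(112e12):.2e} hashes per block at difficulty 112T")
```

Each extra zero bit doubles the expected work, which is how the network raises or lowers difficulty to keep blocks arriving roughly every ten minutes.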

Ethereum originally used a similar PoW system but switched to Proof-of-Stake (PoS) in September 2022 through an upgrade known as “The Merge.” Under PoS, validators secure the Ethereum network by staking (locking up) its native cryptocurrency, ETH, instead of mining, and validators that act dishonestly can lose part of their stake. The switch cut Ethereum's energy consumption by more than 99% while maintaining network security, although it did not by itself raise throughput or lower fees; scaling is pursued separately, mainly through Layer 2 networks. The change positions Ethereum as a more environmentally sustainable platform than Bitcoin, with its energy-intensive approach.
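The core idea of PoS, that a validator's chance of being chosen to propose a block scales with the amount it has staked, can be illustrated with a stake-weighted random draw. This is a deliberately simplified model: Ethereum's actual protocol derives its randomness from RANDAO and weights validators by effective balance, with further rules not shown here. The validator names, stake sizes, and `pick_proposer` helper are hypothetical.

```python
import random
from collections import Counter


def pick_proposer(stakes: dict[str, float], rng: random.Random) -> str:
    """Choose one validator with probability proportional to its stake."""
    validators = list(stakes)
    weights = [stakes[v] for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


if __name__ == "__main__":
    # Hypothetical validators and their staked ETH.
    stakes = {"validator_a": 64.0, "validator_b": 32.0, "validator_c": 32.0}
    rng = random.Random(42)  # fixed seed so the simulation is reproducible
    counts = Counter(pick_proposer(stakes, rng) for _ in range(10_000))
    for name, count in sorted(counts.items()):
        print(f"{name}: chosen {count / 10_000:.1%} of the time")
```

With half of the total stake, `validator_a` should be selected close to half the time; no computational race is involved, which is where the energy savings come from.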

Scalability and Transaction Throughput

Bitcoin and Ethereum also differ in transaction speed and scalability. The Bitcoin network processes roughly 7 transactions per second, enough for a decentralized payment network but a clear limit on throughput. Ethereum's main layer handles about 15 transactions per second, roughly double Bitcoin's capacity. Ethereum's larger scalability advantage, however, comes from Layer 2 and scaling networks such as Arbitrum, Optimism, and Polygon, which process transactions outside Ethereum's main chain to increase throughput and reduce transaction fees.
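Throughput figures like these come from simple arithmetic: average transactions per block divided by the average time between blocks. The sketch below assumes Bitcoin's ~600-second block target and Ethereum's 12-second slot time; the per-block transaction counts are illustrative inputs chosen to reproduce the rates quoted above, not measurements.

```python
def transactions_per_second(tx_per_block: float, block_time_s: float) -> float:
    """Average throughput given transactions per block and seconds per block."""
    return tx_per_block / block_time_s


# Illustrative inputs (assumed, not measured):
bitcoin_tps = transactions_per_second(tx_per_block=4_200, block_time_s=600)  # ~10-minute blocks
ethereum_tps = transactions_per_second(tx_per_block=180, block_time_s=12)    # 12-second slots

print(f"Bitcoin ~{bitcoin_tps:.0f} TPS, Ethereum L1 ~{ethereum_tps:.0f} TPS")
print(f"Ratio: {ethereum_tps / bitcoin_tps:.1f}x")
```

The formula also shows the two levers for raising throughput: fit more transactions into each block or produce blocks more often. Layer 2 networks sidestep both limits by moving most activity off the main chain.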

These scaling networks help Ethereum support a growing number of decentralized applications and DeFi protocols that need fast, low-cost transactions. Ethereum transactions can also carry executable code, which is what makes smart contracts and decentralized applications possible. ETH, the network's native currency, is used to pay transaction fees (known as gas) on every transaction, from simple transfers to smart contract calls and contract deployments.

ETH's supply also works differently from Bitcoin's. Instead of a fixed cap, Ethereum has a dynamic supply: new ETH is issued to validators, while the base-fee portion of every transaction fee is burned (permanently removed) under the EIP-1559 fee mechanism. Depending on network activity, net supply can therefore grow or shrink. Ethereum continues to pursue technical improvements that enhance scalability, making it a preferred platform for developers and users seeking efficient decentralized finance solutions.
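The fee burn and its link to supply can be sketched with the base-fee update rule from EIP-1559: each block has a gas target, and the next block's base fee rises or falls by up to one-eighth (12.5%) depending on how far gas used was above or below that target. `next_base_fee` follows the formula in the EIP's specification but omits everything else a client validates; `base_fee_burned`, `net_supply_change`, and all numeric inputs are hypothetical illustrations.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # from EIP-1559: at most a 12.5% change per block


def next_base_fee(parent_base_fee: int, parent_gas_used: int, parent_gas_target: int) -> int:
    """Base fee (wei per gas) for the next block, per the EIP-1559 update rule."""
    if parent_gas_used == parent_gas_target:
        return parent_base_fee
    if parent_gas_used > parent_gas_target:
        gas_used_delta = parent_gas_used - parent_gas_target
        delta = max(
            parent_base_fee * gas_used_delta // parent_gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR,
            1,  # a busier-than-target block always raises the fee by at least 1 wei
        )
        return parent_base_fee + delta
    gas_used_delta = parent_gas_target - parent_gas_used
    delta = parent_base_fee * gas_used_delta // parent_gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return parent_base_fee - delta


def base_fee_burned(gas_used: int, base_fee: int) -> int:
    """Hypothetical helper: wei destroyed by a block's base fees."""
    return gas_used * base_fee


def net_supply_change(issued_wei: int, burned_wei: int) -> int:
    """Hypothetical helper: negative means ETH supply shrank over the period."""
    return issued_wei - burned_wei


GWEI = 10**9
TARGET = 15_000_000  # illustrative gas target

print(next_base_fee(100 * GWEI, 2 * TARGET, TARGET) / GWEI)  # full block: 112.5
print(next_base_fee(100 * GWEI, 0, TARGET) / GWEI)           # empty block: 87.5
```

When sustained demand keeps blocks full, the base fee and therefore the amount burned climb block after block, which is how heavy network activity can push net ETH issuance below zero.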

Community and Ecosystem

The communities and ecosystems surrounding Bitcoin and Ethereum are among the most dynamic in the cryptocurrency space. The bitcoin network boasts a mature and well-established ecosystem, with widespread adoption as a decentralized digital currency and a robust infrastructure supporting everything from payment solutions to secure storage.

In contrast, the ethereum ecosystem is renowned for its focus on decentralized finance (DeFi) and the proliferation of decentralized applications. The ethereum network has become a hub for innovation, hosting a vast array of dApps, tokens, stablecoins, and non-fungible tokens (NFTs). This vibrant environment attracts developers, investors, and users who are eager to explore new financial products and services built on blockchain technology.

Both Bitcoin and Ethereum owe much of their success to engaged, diverse communities that not only develop the underlying technology but also drive adoption and create new use cases. For investors, understanding the strengths and focus areas of each ecosystem is key to evaluating the long-term potential and value proposition of these leading digital assets. The key takeaways are the importance of community-driven growth, ongoing innovation, and the expanding possibilities of decentralized applications and finance.

Use Cases and Real-World Applications

Bitcoin’s primary use cases revolve around its role as digital gold and a decentralized digital currency. It is used for cross-border payments and remittances, and institutions and corporations hold it as an inflation hedge. Many companies now keep bitcoin as a treasury reserve asset, valuing it as scarce, finite-supply digital money that operates independently of central banks and traditional currencies. Unlike national currencies, which governments issue and regulate, Bitcoin was created as an alternative medium of exchange and store of value outside the control of any single nation.

Ethereum, on the other hand, offers a broader range of applications through its programmable blockchain. It powers decentralized finance protocols, enabling lending, borrowing, and trading without intermediaries. Ethereum also supports non-fungible tokens (NFTs), decentralized autonomous organizations (DAOs), and enterprise blockchain solutions. The ethereum network’s ability to execute smart contracts and host decentralized applications makes it a foundational platform for the future of tokenized assets and innovative financial products.

Investment Characteristics and Risk Profiles

From an investment perspective, Bitcoin and Ethereum present distinct profiles. Bitcoin is often viewed as the more established store of value, with strong institutional validation, and it appeals to more conservative investors seeking security and a macroeconomic hedge. Its simplicity and fixed supply reinforce its perception as digital gold.

Ethereum is a more growth-oriented investment, offering exposure to the expanding decentralized finance ecosystem and to technological innovation, but with higher volatility and risk. Planned upgrades aim to extend its capabilities further, attracting investors interested in growing crypto adoption and the broader use of blockchain technology. Still, Ethereum's future remains uncertain: competition, ongoing technical challenges, and the outcomes of recent upgrades will all shape its long-term prospects and value proposition.

Price Predictions and Market Outlook

Market analysts remain cautiously optimistic about both Bitcoin and Ethereum through 2025. Some projections suggest Ethereum could reach $5,400 by the end of the year and approach $6,100 by 2029. Ethereum's price nonetheless remains subject to large swings: depending on market conditions and regulatory developments, it could rise above $5,000 or fall below $2,000.

Bitcoin's outlook is similarly shaped by institutional adoption, regulatory clarity, and macroeconomic trends. Its status as the first cryptocurrency and a decentralized payment network underpins its resilience in global markets. Investors should weigh these dynamics against their investment objectives and risk tolerance when evaluating either asset.

The Role of Advanced Analytics in Crypto Investment

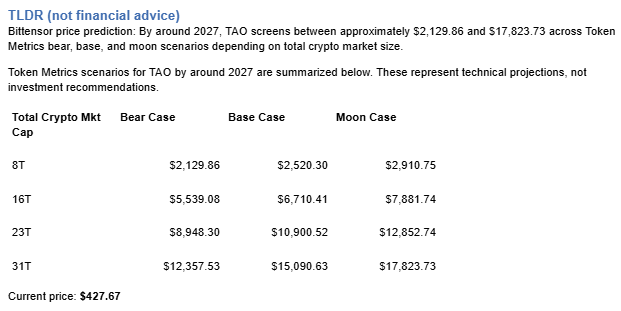

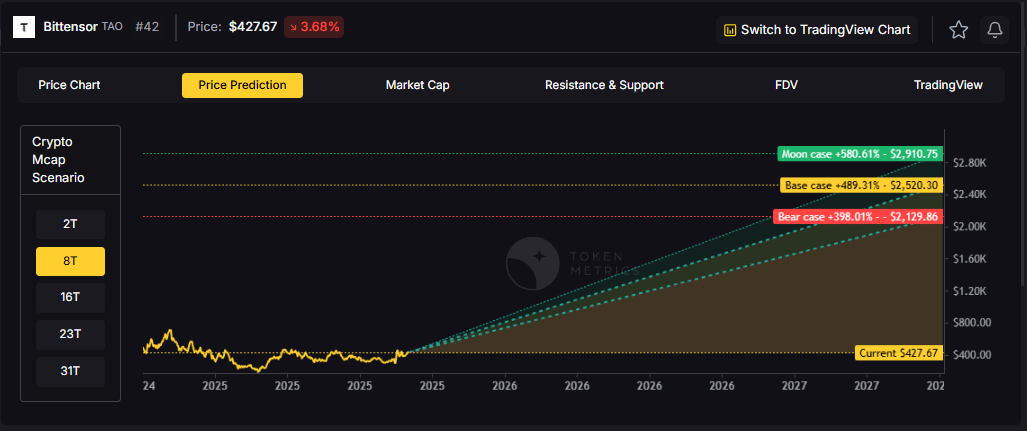

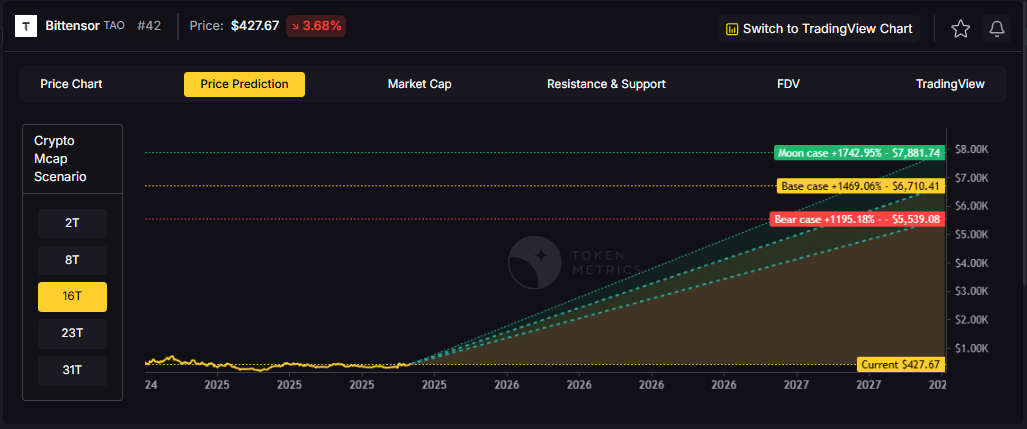

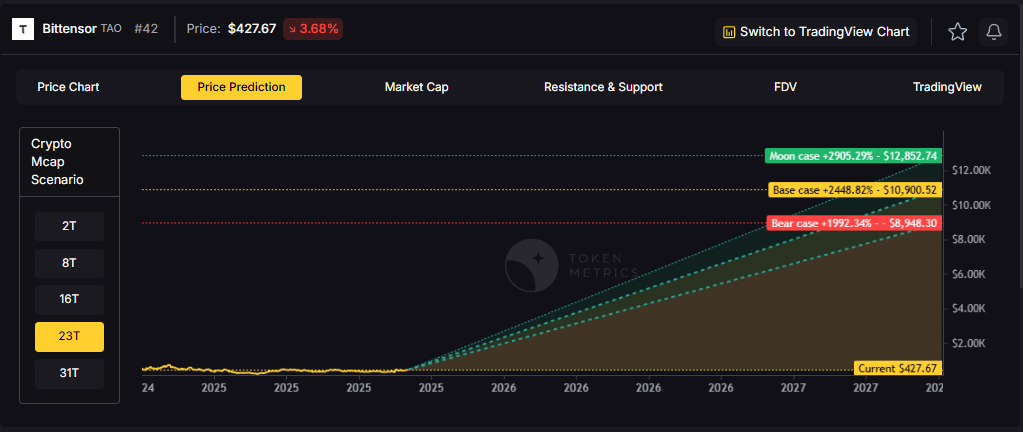

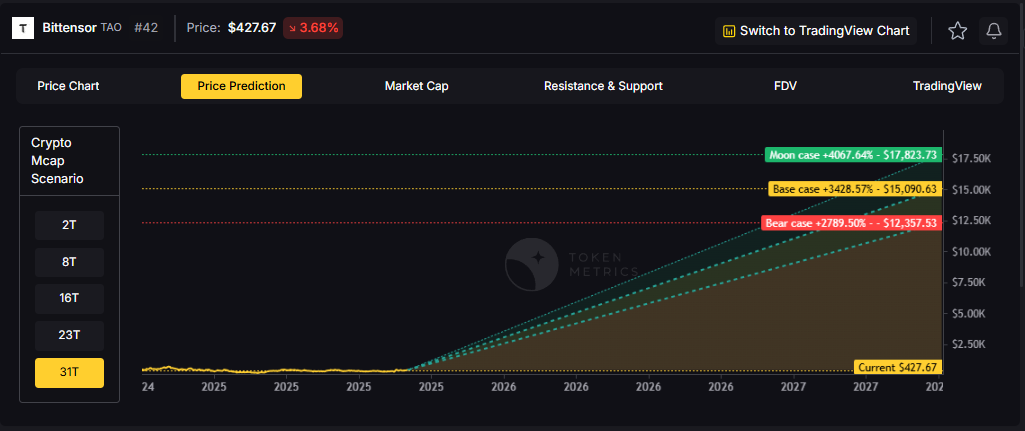

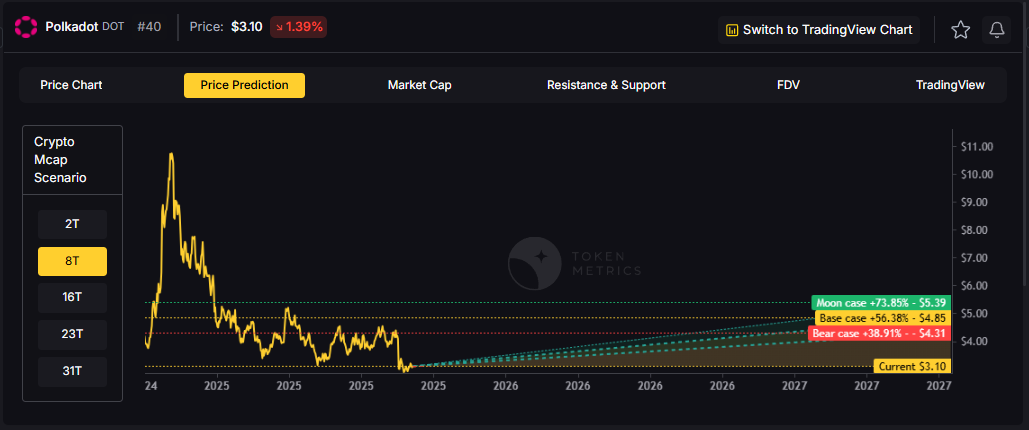

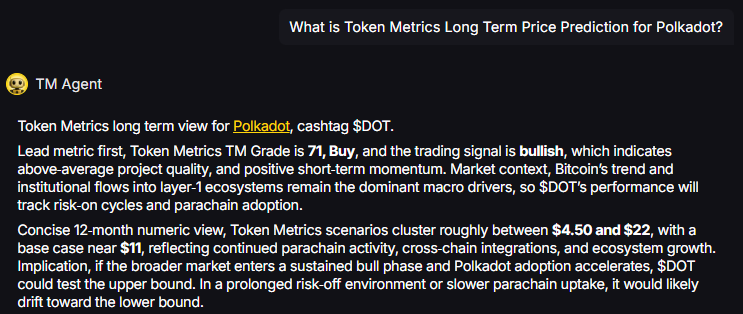

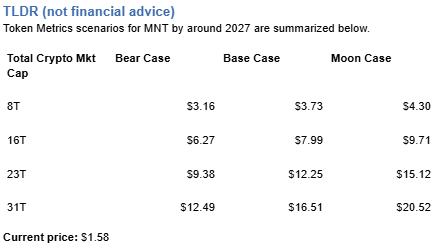

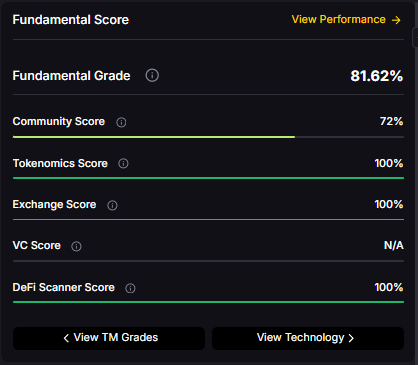

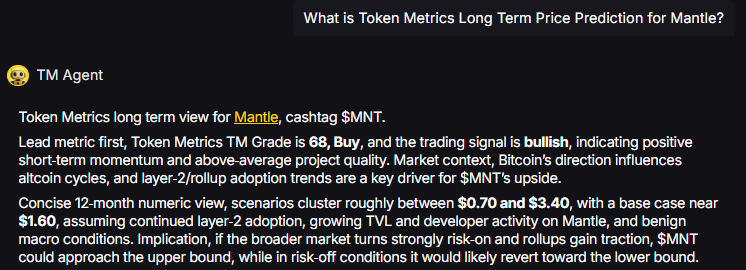

Navigating the complex cryptocurrency market requires sophisticated tools and data-driven insights. Platforms like Token Metrics have emerged as invaluable resources for investors aiming to make informed decisions. Token Metrics is an AI-powered crypto research and investment platform that consolidates market analysis, portfolio management, and real-time insights.

By leveraging artificial intelligence and machine learning, Token Metrics offers comprehensive research tools, back-tested bullish signals, and sector trend analysis. Its AI-driven X agent provides actionable insights that help investors identify opportunities and manage risks in the 24/7 crypto market. This advanced analytics platform is especially beneficial for those looking to optimize their investment strategy in both bitcoin and ethereum.

Portfolio Allocation Strategies

For investors considering both bitcoin and ethereum, a diversified portfolio approach is advisable. Bitcoin's stability and role as digital gold complement Ethereum's growth potential in decentralized finance and technology-driven applications. Depending on risk tolerance and investment goals, allocations might vary:

- Conservative investors may allocate 70% to Bitcoin and 30% to Ethereum.

- Moderate-risk investors might consider a 60% Bitcoin and 40% Ethereum split.

- Growth-focused investors could tilt their portfolio toward 40% Bitcoin and 60% Ethereum.

This approach draws on the distinct strengths of both cryptocurrencies, balancing Bitcoin's relative stability against Ethereum's growth potential while spreading exposure across different segments of the cryptocurrency ecosystem.
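Maintaining any of these splits requires periodic rebalancing, because price moves shift the actual weights away from the targets. The minimal sketch below uses hypothetical holdings and the 60/40 moderate split from the list above to compute how much of each asset to buy (positive) or sell (negative) to restore the target weights. `rebalance_trades` is an illustrative helper, and it ignores fees, taxes, and minimum trade sizes.

```python
def rebalance_trades(holdings: dict[str, float], targets: dict[str, float]) -> dict[str, float]:
    """Amount of currency to buy (+) or sell (-) per asset to reach the target weights."""
    if abs(sum(targets.values()) - 1.0) > 1e-9:
        raise ValueError("target weights must sum to 1")
    total = sum(holdings.values())
    return {asset: targets[asset] * total - holdings.get(asset, 0.0) for asset in targets}


# Hypothetical portfolio after Bitcoin outperformed: now 70/30 instead of 60/40.
holdings = {"BTC": 7_000.0, "ETH": 3_000.0}
targets = {"BTC": 0.60, "ETH": 0.40}

for asset, amount in rebalance_trades(holdings, targets).items():
    action = "buy" if amount > 0 else "sell"
    print(f"{action} {abs(amount):,.2f} of {asset}")
```

Here the portfolio would sell 1,000 of BTC and buy 1,000 of ETH to return to 60/40. Rebalancing on a schedule or when weights drift past a threshold keeps the chosen risk profile from shifting silently as prices move.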

Conclusion

Bitcoin and Ethereum offer distinct but complementary value propositions in the cryptocurrency space. Bitcoin, the first cryptocurrency, remains a decentralized payment network and a trusted store of value often likened to digital gold. Ethereum, powered by its programmable blockchain and smart contracts, drives innovation in decentralized finance and applications, shaping the future of the crypto market.

Choosing between bitcoin and ethereum—or deciding on an allocation between both—depends on individual investment objectives, risk appetite, and confidence in blockchain technology’s future. Both assets have a place in a well-rounded portfolio, serving different roles in the evolving digital economy.

For investors serious about cryptocurrency investing in 2025, utilizing advanced analytics platforms like Token Metrics can provide a competitive edge. With AI-powered insights, comprehensive research tools, and real-time market analysis, Token Metrics stands out as a leading platform to navigate the complexities of the cryptocurrency market.

Whether your preference is bitcoin’s simplicity and stability or ethereum’s innovation and versatility, success in the cryptocurrency market increasingly depends on access to the right data, analysis, and tools to make informed decisions in this exciting and fast-changing landscape.

Disclaimer: Certain cryptocurrency investment products, such as ETFs or trusts, are not registered as investment companies under the Investment Company Act of 1940. As a result, these products are not subject to the same regulatory requirements as traditional mutual funds. This article does not provide tax advice. For personalized tax advice or guidance on regulatory classifications, consult a qualified professional.
