Maximize Your Profits with AI Crypto Trading: A Practical Guide

Introduction to AI Trading

Cryptocurrency trading is fast-paced and complex, but the rise of artificial intelligence has given traders powerful tools to pursue higher profits while managing risk. AI crypto trading uses advanced algorithms and machine learning to analyze vast amounts of data, enabling smarter and more efficient trading decisions. Because they automate trades, AI crypto trading bots operate 24/7 and can seize opportunities in the volatile crypto market at any hour. These AI agents help traders overcome emotional biases and improve decision-making by relying on data-driven insights. AI also enables real-time analysis of social media sentiment that affects cryptocurrency prices, giving traders a deeper understanding of market dynamics. Whether you are a beginner or an advanced trader, getting started with AI crypto trading can elevate your trading experience and help you stay ahead in the competitive cryptocurrency market.

Understanding Trading Bots

Trading bots have become essential tools for crypto traders looking to automate their strategies and enhance performance. There are various types of trading bots, including grid bots and dollar-cost averaging (DCA) bots, each designed to execute a specific trading style. Grid bots place buy and sell orders at preset price intervals to profit from price fluctuations, while DCA bots help investors steadily accumulate assets by buying at regular time intervals regardless of market conditions. These bots assist with risk management by analyzing market trends and indicators, allowing traders to automate complex trading strategies without constant monitoring. A reliable AI trading bot should integrate strong risk management tools, such as stop-loss orders, to further safeguard investments. Popular crypto trading bots can manage multiple assets and execute trades across multiple exchanges, improving overall trading efficiency. Choosing the right crypto trading bot depends on your trading goals, preferred strategies, and the bot’s features, such as strategy templates, custom strategies, and exchange support.
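To make the grid and stop-loss ideas concrete, here is a minimal Python sketch. It assumes an evenly spaced grid and a percentage-based stop-loss; the function names (`grid_levels`, `plan_grid_orders`, `stop_loss_hit`) are hypothetical and do not come from any particular bot or platform.

```python
def grid_levels(lower: float, upper: float, n_levels: int) -> list[float]:
    """Return n_levels evenly spaced prices from lower to upper, inclusive."""
    if n_levels < 2 or lower >= upper:
        raise ValueError("need at least 2 levels and lower < upper")
    step = (upper - lower) / (n_levels - 1)
    return [round(lower + i * step, 2) for i in range(n_levels)]


def plan_grid_orders(levels: list[float], current_price: float) -> dict[str, list[float]]:
    """Levels below the current price become buys; levels above become sells."""
    return {
        "buy": [p for p in levels if p < current_price],
        "sell": [p for p in levels if p > current_price],
    }


def stop_loss_hit(current_price: float, entry_price: float, max_loss_pct: float) -> bool:
    """True once the price has fallen max_loss_pct percent or more below entry."""
    return current_price <= entry_price * (1 - max_loss_pct / 100)


if __name__ == "__main__":
    levels = grid_levels(90.0, 110.0, 5)
    print(levels)
    print(plan_grid_orders(levels, current_price=100.0))
    print(stop_loss_hit(current_price=94.0, entry_price=100.0, max_loss_pct=5.0))
```

A real grid bot would also size each order, handle fills, and re-place orders as the price moves; this sketch only shows where the orders would sit.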

Managing Market Volatility

Market volatility is a defining characteristic of the cryptocurrency market, making risk management crucial for successful trading. AI-powered trading tools excel at managing volatility by analyzing real-time data and market indicators to provide timely insights. These tools help traders spot trends, predict market movements, and adjust their strategies to evolving market conditions. For instance, AI crypto trading bots can incorporate sentiment analysis and moving averages to forecast price fluctuations and optimize entry and exit points. However, bots that rely heavily on historical data may underperform during sudden volatility, when the market departs from the patterns they learned, which highlights the importance of adaptive algorithms. By leveraging AI’s ability to process complex data quickly, traders can reduce emotional decision-making and better navigate periods of high market volatility. Incorporating risk management techniques alongside AI-driven insights helps your crypto portfolio remain resilient amid unpredictable market changes.
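As an illustration of how moving averages can inform entry and exit points, the sketch below implements a simple moving-average crossover. This is one common textbook rule, chosen here as an example and not taken from any specific bot; the function name `crossover_signal` and the default window sizes are assumptions.

```python
def _mean(values: list[float]) -> float:
    return sum(values) / len(values)


def crossover_signal(prices: list[float], fast: int = 3, slow: int = 5) -> str:
    """Compare a fast and a slow simple moving average on the latest two bars.

    Returns "buy" when the fast average crosses above the slow one,
    "sell" when it crosses below, and "hold" otherwise.
    """
    if fast <= 0 or fast >= slow:
        raise ValueError("need 0 < fast < slow")
    if len(prices) < slow + 1:
        return "hold"  # not enough history to compare two consecutive bars

    fast_now = _mean(prices[-fast:])
    slow_now = _mean(prices[-slow:])
    fast_prev = _mean(prices[-fast - 1:-1])
    slow_prev = _mean(prices[-slow - 1:-1])

    if fast_prev <= slow_prev and fast_now > slow_now:
        return "buy"
    if fast_prev >= slow_prev and fast_now < slow_now:
        return "sell"
    return "hold"


if __name__ == "__main__":
    print(crossover_signal([10, 10, 10, 10, 10, 10, 12]))
    print(crossover_signal([10, 10, 10, 10, 10, 10, 8]))
```

Because the rule looks only at past prices, it illustrates the limitation noted above: a signal tuned on calm history can lag badly when volatility spikes.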

Exchange Accounts and AI Trading

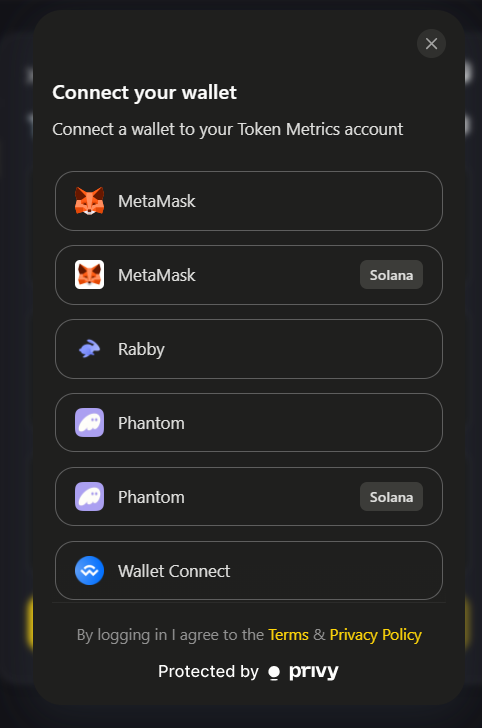

Connecting your exchange accounts to AI trading platforms unlocks the potential for fully automated trading across multiple crypto exchanges. This integration allows AI crypto trading bots to execute trades seamlessly based on your chosen strategies, freeing you from manual order placement. Robust security measures, such as encrypted API keys and secure authentication, are vital to protect your assets and personal information. AI tools also enable efficient management of multiple exchange accounts, allowing you to diversify your trading activities and capitalize on arbitrage opportunities, which arise when the same asset trades at different prices on different exchanges. For example, 3Commas is a popular AI-powered trading platform that lets users manage assets from multiple exchanges in one interface, streamlining the trading process. Additionally, AI-powered platforms provide comprehensive analytics to monitor and analyze your trading performance across different exchanges, helping you fine-tune your strategies and maximize returns.
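Whether a price gap between two exchanges is worth trading depends on fees. The following sketch estimates the net return of buying on one exchange and selling on another. The function name `net_arbitrage_pct` and the fee figures are illustrative assumptions, and the calculation deliberately ignores withdrawal fees, transfer delays, and slippage.

```python
def net_arbitrage_pct(
    buy_price: float,
    sell_price: float,
    buy_fee_pct: float,
    sell_fee_pct: float,
) -> float:
    """Percent return from buying on one exchange and selling on another, after trading fees."""
    if buy_price <= 0 or sell_price <= 0:
        raise ValueError("prices must be positive")
    cost = buy_price * (1 + buy_fee_pct / 100)          # what you pay per unit, fee included
    proceeds = sell_price * (1 - sell_fee_pct / 100)    # what you receive per unit, fee deducted
    return (proceeds - cost) / cost * 100


if __name__ == "__main__":
    # Hypothetical quotes: 100.0 on exchange A, 101.0 on exchange B, 0.1% fee on each side.
    print(round(net_arbitrage_pct(100.0, 101.0, 0.1, 0.1), 3))
```

With these example numbers the gap survives the fees; narrow the spread to 0.1% and the same trade loses money, which is why a bot should check the net figure before acting.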

The Role of Machine Learning

Machine learning is at the heart of AI crypto trading, enabling systems to learn from historical data and improve their predictions over time. By analyzing vast datasets of past market trends and price movements, machine learning algorithms can forecast future performance and identify profitable trading opportunities. These advanced algorithms facilitate the development of complex trading strategies that adapt dynamically to changing market conditions. Kryll.io simplifies strategy creation with a visual editor that allows for no-code trading strategies, making it accessible even to those without technical expertise. Utilizing machine learning in your crypto trading allows for automated decision making that reduces emotional bias and enhances consistency. Staying ahead of the cryptocurrency market requires continuous learning, and machine learning empowers AI trading bots to evolve with the latest trends and expert insights, making your trading smarter and more effective.

Decision Making with AI Agents

AI agents play a pivotal role in enhancing decision-making within crypto trading by processing real-time market data and generating actionable insights. These intelligent systems analyze multiple market indicators, including price fluctuations and sentiment signals, to predict future market movements. By automating trading decisions, AI agents help reduce the emotional biases that often impair human traders. They optimize your trading strategy by continuously learning from market changes and fine-tuning trade execution to improve performance. Leveraging AI agents allows you to trade crypto more confidently, spot trends early, and capitalize on market opportunities with precision.

Future Performance and Predictions

Predicting future market movements is essential for successful cryptocurrency trading, and AI provides powerful tools to make these predictions more accurate. By combining historical data analysis with current market trends, AI crypto trading bots can generate price forecasts and flag potential market changes. This capability enables traders to optimize their strategies proactively, adjusting their positions based on anticipated movements rather than reacting after the fact. Automated trading powered by AI reduces emotional decision-making and enhances consistency in execution, which is critical in fast-moving markets. To maximize your trading performance, it is important to leverage AI tools that incorporate both advanced algorithms and real-time data for comprehensive market analysis.

Affiliate Programs and Trading

Affiliate programs offer a unique opportunity for crypto traders to monetize their trading experience by promoting AI crypto trading platforms. By joining these programs, traders can earn commissions for referring new users, creating an additional income stream beyond trading profits. Many popular AI trading platforms provide attractive commission structures and marketing materials to support affiliates. Engaging in affiliate programs allows you to share your knowledge of AI crypto trading and help others discover the benefits of automated trading. Getting started is straightforward, and participating in an affiliate program can complement your trading activities while expanding your network within the cryptocurrency market community.

Getting Started with a Free Plan

For those new to AI crypto trading, starting with a free plan is an excellent way to test and optimize your trading strategies without paying a subscription fee. Free plans typically offer access to essential features such as automated trading, strategy templates, and real-time data, allowing you to familiarize yourself with the platform’s capabilities. While these plans may have limitations on the number of trades or supported exchanges, they provide valuable insights into how AI trading bots operate. As your confidence and trading needs grow, upgrading to a paid plan unlocks advanced features, increased exchange support, and more powerful tools to enhance your trading experience. Beginning with a free plan offers a low-cost introduction to AI crypto trading and helps you build a solid foundation.

Advanced Trading Strategies

Advanced trading strategies are crucial for traders aiming to maximize returns and manage risks effectively. AI crypto trading bots enable the execution of complex trading strategies that incorporate multiple market indicators, sentiment analysis, and market making techniques. Dollar cost averaging (DCA) is another popular strategy facilitated by AI tools, allowing traders to mitigate the impact of price volatility by purchasing assets at regular intervals. Using AI to automate these strategies ensures precision and consistency, while also allowing customization to fit your unique trading style. Understanding the risks and rewards associated with advanced strategies is important, and AI-powered platforms often provide simulation tools to test strategies before deploying them in live markets. Embracing advanced strategies with AI support can significantly elevate your trading performance.
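The effect of dollar cost averaging on your average purchase price can be checked with a few lines of arithmetic. The sketch below is a toy simulation with made-up prices, not output from any platform's simulation tool; `dca_summary` is a hypothetical helper name.

```python
def dca_summary(prices: list[float], amount_per_buy: float) -> dict[str, float]:
    """Invest a fixed amount at each price; report units held, total spent, and average cost."""
    if not prices or any(p <= 0 for p in prices) or amount_per_buy <= 0:
        raise ValueError("need at least one positive price and a positive amount")
    units = sum(amount_per_buy / p for p in prices)  # cheaper prices buy more units
    spent = amount_per_buy * len(prices)
    return {"units": units, "spent": spent, "avg_cost": spent / units}


if __name__ == "__main__":
    # Hypothetical prices at three regular purchase dates.
    prices = [100.0, 50.0, 200.0]
    result = dca_summary(prices, amount_per_buy=100.0)
    print(result)
    print("simple average of prices:", sum(prices) / len(prices))
```

Because a fixed amount buys more units when the price is low, the average cost per unit comes out at or below the simple average of the prices paid. That is the sense in which DCA softens the impact of volatility; it does not protect against a sustained decline.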

User-Friendly Interface

A user-friendly interface is essential for maximizing the benefits of AI crypto trading for traders at all experience levels. Intuitive dashboards and easy-to-use platforms simplify the process of setting up trading bots, monitoring performance, and customizing strategies. Many AI trading platforms offer smart trading terminals that integrate multiple assets and exchange accounts into a single interface accessible on both desktop and mobile devices. Customization options allow traders to fine-tune their bots according to preferred trading styles and risk tolerance. By combining powerful AI tools with a seamless user experience, these platforms empower traders to automate their trading decisions confidently and efficiently.

Robust Security Measures

Security is paramount in cryptocurrency trading, and AI crypto trading platforms implement robust measures to safeguard your assets and personal data. Encryption protocols and secure authentication methods protect your exchange accounts and API keys from unauthorized access. AI tools also monitor for suspicious activity and potential threats, providing an additional layer of defense against losses. Choosing a platform with strong security features ensures peace of mind as you automate your trading across multiple exchanges. Staying informed about security best practices and regularly updating your credentials contribute to maintaining a secure trading environment.
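One widely used pattern for API authentication is to keep credentials out of source code and sign each request with HMAC-SHA256. The sketch below shows that generic pattern using only the Python standard library. It is an assumption for illustration: the environment variable names and function names are hypothetical, and the exact signing scheme (parameter order, encoding, headers) varies by exchange, so consult your exchange's API documentation before relying on it.

```python
import hashlib
import hmac
import os
from urllib.parse import urlencode


def load_credentials(prefix: str = "EXCHANGE") -> tuple[str, str]:
    """Read the API key and secret from environment variables (names are hypothetical)."""
    key = os.environ.get(f"{prefix}_API_KEY")
    secret = os.environ.get(f"{prefix}_API_SECRET")
    if not key or not secret:
        raise RuntimeError(f"set {prefix}_API_KEY and {prefix}_API_SECRET first")
    return key, secret


def sign_request(params: dict, secret: str) -> str:
    """Return a hex HMAC-SHA256 signature over the URL-encoded, key-sorted parameters."""
    query = urlencode(sorted(params.items()))
    return hmac.new(secret.encode(), query.encode(), hashlib.sha256).hexdigest()


if __name__ == "__main__":
    # Demo with a placeholder secret; never hard-code a real one.
    signature = sign_request({"symbol": "BTCUSDT", "timestamp": 1}, "placeholder-secret")
    print(len(signature))
```

Sorting the parameters makes the signature independent of dictionary order. When creating API keys for a bot, it is also common practice to grant trading permission only and leave withdrawal permission disabled where the exchange supports that option.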

Responsive Customer Support

Reliable customer support is a critical component of a successful crypto trading experience. Many AI crypto trading platforms offer responsive support channels such as live chat, email, and comprehensive help centers. Prompt assistance helps resolve technical issues, clarify platform features, and guide users through setup and strategy optimization. AI-powered support systems can provide instant responses to common queries, enhancing overall support efficiency. Access to expert insights and timely help ensures that traders can focus on their strategies without unnecessary interruptions, making customer support an integral part of the trading journey.

Community Engagement

Engaging with the crypto trading community provides valuable learning opportunities and fosters collaboration among traders. Forums, social media groups, and community events allow users to share experiences, discuss market trends, and exchange tips on AI crypto trading. AI tools can facilitate community engagement by connecting traders with similar interests and providing curated content based on market changes. Participating in these communities helps traders stay updated on the latest trends, discover new strategies, and gain insights from advanced traders and asset managers. Building a network within the cryptocurrency market enhances both knowledge and trading confidence.

Additional Resources

Continuous education is vital for success in the rapidly evolving cryptocurrency market. Many AI crypto trading platforms offer additional resources such as tutorials, webinars, and strategy guides to help traders improve their skills. These educational materials cover a wide range of topics, from basic crypto trading concepts to advanced AI trading techniques and strategy development. Leveraging these resources enables traders to better understand market indicators, test strategies, and refine their trading style. AI tools can personalize learning paths, ensuring that traders receive relevant content to enhance their trading experience and stay ahead of market trends.

AI Agent Integration

Integrating AI agents with your trading bots is a powerful way to optimize your crypto trading strategy. AI agent integration allows seamless coordination between different bots and trading tools, enabling automated execution of custom strategies across multiple assets and exchanges. This integration supports strategy optimization by continuously analyzing market conditions and adjusting parameters to improve performance. Popular AI agent integration tools offer compatibility with a variety of crypto exchanges and support advanced features such as backtesting and real-time data analysis. By harnessing AI agent integration, traders can take full advantage of automated trading, fine-tune their strategies, and elevate their trading to new levels of sophistication and profitability.
