Polkadot Price Prediction 2027 | DOT Forecast & Scenarios

Understanding Polkadot's 2027 Potential

The Layer 1 competitive landscape is consolidating as markets reward specialization over undifferentiated "Ethereum killers". Polkadot positions itself in a multi-chain world through shared security and parachain interoperability. Infrastructure maturity around custody and bridges makes alternate L1s more accessible into 2026.

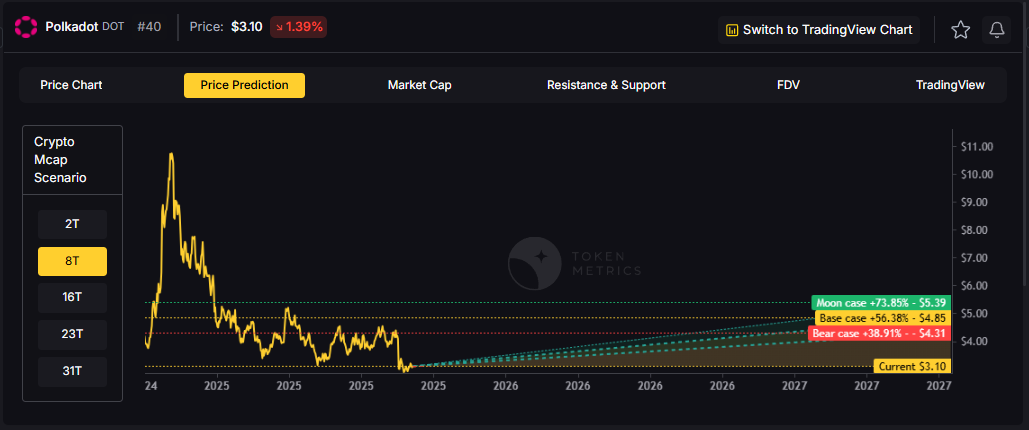

The scenario projections below map DOT market-share outcomes across different total crypto market sizes. Base cases assume Polkadot maintains its current ecosystem momentum, moon scenarios factor in accelerated adoption, and bear cases reflect increased competitive pressure.

Disclosure

For educational purposes only, not financial advice. Crypto is volatile; do your own research and manage risk.

How to read our price prediction methodology:

Each band blends cycle analogues and market-cap share math with technical analysis (TA) guardrails. Base assumes steady adoption and a neutral or positive macro backdrop. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.
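The market-cap share math mentioned above can be sketched as a simple identity: implied price equals total crypto market cap times the token's share of that cap, divided by circulating supply. The snippet below is a minimal illustration, not Token Metrics' actual model. The function name `implied_price` and every input value in the example (the 0.1% share and the 1.5 billion supply) are hypothetical placeholders, not figures from this article or real DOT data.

```python
def implied_price(total_market_cap_usd, market_share, circulating_supply):
    """Implied token price from a market-cap share assumption.

    total_market_cap_usd: assumed total crypto market cap in USD
    market_share: token's assumed fraction of that total (0.001 means 0.1%)
    circulating_supply: assumed number of tokens in circulation
    """
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    if not 0 <= market_share <= 1:
        raise ValueError("market_share must be between 0 and 1")
    token_market_cap = total_market_cap_usd * market_share
    return token_market_cap / circulating_supply


# Hypothetical inputs for illustration only (not actual DOT figures).
EXAMPLE_SHARE = 0.001        # placeholder: 0.1% of total crypto market cap
EXAMPLE_SUPPLY = 1.5e9       # placeholder: 1.5 billion tokens

for total_cap in (8e12, 16e12):
    price = implied_price(total_cap, EXAMPLE_SHARE, EXAMPLE_SUPPLY)
    print(f"total cap ${total_cap:,.0f} -> implied price ${price:.2f}")
```

Holding share and supply fixed, the implied price scales linearly with total market cap; the bear, base, and moon bands would then correspond to different share assumptions at each market size.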

Polkadot (DOT) Price Prediction: TM Agent Baseline

This is the Token Metrics long-term price prediction view for Polkadot (cashtag $DOT). The lead metric, the Token Metrics TM Grade, is 71% (Buy), and the trading signal is bullish, which indicates above-average project quality and positive short-term momentum. For market context, Bitcoin's trend and institutional flows into layer-1 ecosystems remain the dominant macro drivers, so $DOT's performance will likely track risk-on cycles and parachain adoption.

The 12-month numeric view: Token Metrics scenarios cluster roughly between $4.50 and $22, with a base case near $11, reflecting continued parachain activity, cross-chain integrations, and ecosystem growth. The implication is that if the broader market enters a sustained bull phase and Polkadot adoption accelerates, $DOT could test the upper bound. In a prolonged risk-off environment or with slower parachain uptake, it would likely drift toward the lower bound.

Affiliate Disclosure: We may earn a commission from qualifying purchases made via this link, at no extra cost to you.

Key Takeaways

- Scenario-driven price predictions: outcomes hinge on total crypto market cap, and higher liquidity and adoption lift the bands.

- TM Agent gist: a range of $4.50 to $22 with a base near $11. Upside requires adoption and liquidity; downside ties to risk-off conditions.

- Education only, not financial advice.

Polkadot Price Prediction: Scenario Analysis

Token Metrics price prediction scenarios span four market cap tiers, each representing different levels of crypto market maturity and liquidity:

- 8T Price Prediction: At an eight trillion dollar total crypto market cap, DOT projects to $4.31 in the bear case, $4.85 in the base case, and $5.39 in the moon case.

- 16T Price Prediction: Doubling the market to sixteen trillion expands the range to $6.82 (bear), $8.44 (base), and $10.07 (moon).

- 23T Price Prediction: At twenty-three trillion, the scenarios show $9.33 (bear), $12.04 (base), and $14.75 (moon).

- 31T Price Prediction: In the maximum liquidity scenario of thirty-one trillion, DOT could reach $11.84 (bear), $15.63 (base), or $19.43 (moon).

Each tier assumes progressively stronger market conditions, with the base case reflecting steady growth and the moon case requiring sustained bull market dynamics.
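For readers who want to work with these bands programmatically, here is a small sketch that encodes the scenario prices listed above and answers two simple questions: how wide is the bear-to-moon band at each tier, and which tiers reach a given target price in a given case. The names `SCENARIOS`, `band_width`, and `tiers_reaching` are illustrative choices, not part of any Token Metrics tool.

```python
# Scenario prices in USD, copied from the list above and keyed by
# total crypto market cap tier.
SCENARIOS = {
    "8T": {"bear": 4.31, "base": 4.85, "moon": 5.39},
    "16T": {"bear": 6.82, "base": 8.44, "moon": 10.07},
    "23T": {"bear": 9.33, "base": 12.04, "moon": 14.75},
    "31T": {"bear": 11.84, "base": 15.63, "moon": 19.43},
}


def band_width(tier):
    """Moon price minus bear price for a tier, rounded to cents."""
    prices = SCENARIOS[tier]
    return round(prices["moon"] - prices["bear"], 2)


def tiers_reaching(target, case):
    """Tiers whose price in the given case meets or exceeds the target."""
    return [tier for tier, prices in SCENARIOS.items() if prices[case] >= target]


for tier in SCENARIOS:
    print(f"{tier}: bear-to-moon band width ${band_width(tier):.2f}")

print("Base case reaches $15 at:", tiers_reaching(15, "base"))
print("Moon case reaches $15 at:", tiers_reaching(15, "moon"))
```

The band widens as the assumed market grows, so the larger tiers carry a wider spread of outcomes in dollar terms as well as higher prices.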

Why Consider the Indices with Top-100 Exposure

Polkadot represents one opportunity among hundreds in crypto markets. Token Metrics Indices bundle DOT with top-100 assets for systematic exposure to the strongest projects. Single tokens face idiosyncratic risks that diversified baskets mitigate.

Historical index performance demonstrates the value of systematic diversification versus concentrated positions.

What Is Polkadot?

Polkadot is a network designed to connect specialized blockchains, called parachains, to a central Relay Chain for shared security and interoperability. Its architecture aims to enable cross-chain messaging and upgrades without hard forks.

DOT is the native token, used for staking to secure the network, on-chain governance, and bonding to add new parachains. Developers and users interact across parachains for use cases spanning DeFi, infrastructure, and cross-chain applications.

Token Metrics AI Analysis

Token Metrics AI provides comprehensive context on Polkadot's positioning and challenges.

Vision: Polkadot's vision is to create a decentralized web where independent blockchains can operate securely while communicating and sharing data across networks. It aims to enable a fully interoperable and scalable ecosystem that supports innovation in decentralized technologies.

Problem: The blockchain space faces fragmentation, with networks operating in isolation, limiting data and value transfer. This siloed structure hampers scalability, security, and user experience. Polkadot addresses the need for cross-chain communication and shared security, allowing blockchains to benefit from collective strength without sacrificing autonomy.

Solution: Polkadot uses a relay chain to coordinate a network of parachains, each with specialized functionality. It employs a nominated proof-of-stake (NPoS) consensus mechanism to secure the network and enable governance. Parachains lease slots via auctions, allowing projects to build custom blockchains with shared security and interoperability. The system supports cross-chain message passing, enabling data and asset transfers between different blockchains.

Market Analysis: Polkadot operates in the layer-0 and interoperability segment, competing with platforms like Cosmos and emerging multi-chain ecosystems. It differentiates itself through shared security, on-chain governance, and a robust parachain model. Adoption is driven by developer interest, parachain diversity, and integration with DeFi, NFTs, and enterprise solutions. Market conditions for Polkadot are influenced by broader crypto trends, regulatory developments, and execution of its technological roadmap. While it ranks among major smart contract platforms, it faces strong competition from Ethereum and high-throughput chains like Solana. Price and adoption depend on network usage, ecosystem growth, and macroeconomic factors in the crypto market.

Catalysts That Skew Bullish for DOT Price Predictions

- Institutional and retail access expands with ETFs, listings, and integrations.

- Macro tailwinds from lower real rates and improving liquidity.

- Product or roadmap milestones such as upgrades, scaling, or partnerships.

Risks That Skew Bearish on DOT Price Predictions

- Macro risk-off from tightening or liquidity shocks.

- Regulatory actions or infrastructure outages.

- Concentration risk, validator economics, and competitive displacement.

FAQs About Polkadot Price Prediction

Will DOT hit $15 by 2027?

The 31T base case price prediction shows DOT at $15.63, which exceeds $15. The 23T moon case at $14.75 does not reach $15. Outcome depends on total crypto market cap growth and Polkadot maintaining market share. Not financial advice.

Can DOT 10x from current levels?

At the current price of $3.10, a 10x would reach $31.00. None of the price prediction scenarios reaches that level by 2027; the highest, the 31T moon case, is $19.43. A 10x return would require substantially greater market cap expansion. Not financial advice.
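The arithmetic behind this answer can be checked in a few lines. This is a minimal sketch using only the two figures quoted above (the $3.10 current price and the $19.43 high in the 31T moon case); the helper name `price_multiple` is an illustrative choice.

```python
CURRENT_PRICE = 3.10      # current DOT price quoted in this FAQ
HIGHEST_SCENARIO = 19.43  # 31T moon case, the top of all scenarios


def price_multiple(target_price, current_price):
    """How many times the current price a target price represents."""
    if current_price <= 0:
        raise ValueError("current_price must be positive")
    return target_price / current_price


ten_x_target = 10 * CURRENT_PRICE
best_multiple = price_multiple(HIGHEST_SCENARIO, CURRENT_PRICE)

print(f"10x target: ${ten_x_target:.2f}")
print(f"Highest scenario multiple: {best_multiple:.2f}x")
print("Any scenario reaches 10x:", HIGHEST_SCENARIO >= ten_x_target)
```

Under these figures the most optimistic scenario works out to a bit more than a 6x move, well short of the 10x threshold.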

What price could DOT reach in the moon case?

Moon case price predictions range from $5.39 at 8T to $19.43 at 31T. These scenarios assume maximum liquidity expansion and strong Polkadot adoption. Not financial advice.

Next Steps

- Track live grades and signals: Token Details

- Join Indices Early Access

- Want exposure? Buy DOT on Gemini

Why Use Token Metrics for Polkadot Price Prediction Investing?

Actionable AI-driven Ratings: Access live Token Metrics grades and signals for Polkadot and hundreds of crypto assets.

Scenario Forecasting: Visualize DOT upside and downside with rigorous price prediction scenario math, not unsubstantiated hype.

Portfolio Diversification: Token Metrics Indices let you systematically diversify among top projects, mitigating single-token risk.

Start your Polkadot price prediction research with institutional-grade tools from Token Metrics.
