Ripple (XRP) Price Prediction Analysis - Can It Reach $500 in the Future?

Ripple (XRP) has been a prominent digital asset in the cryptocurrency space since its inception in 2013. Throughout its history, XRP has experienced significant price fluctuations, reaching an all-time high of $3.84 in early 2018.

However, regulatory uncertainty and delistings from major exchanges have caused XRP's price to retreat over the years.

In this article, we will delve into the factors that could contribute to XRP's growth, analyze expert opinions on its potential price trajectory, and evaluate whether XRP has a chance of reaching $500.

Ripple (XRP) Overview

Ripple is a cryptocurrency and a digital payment protocol designed for fast and low-cost international money transfers.

Unlike other cryptocurrencies, Ripple's primary focus is facilitating seamless cross-border transactions for financial institutions. Its native digital asset, XRP, acts as a bridge currency for transferring value between different fiat currencies.

Historical Performance of Ripple (XRP)

XRP has experienced both significant highs and lows throughout its existence. In early 2018, when the cryptocurrency market was in a state of euphoria, XRP reached its all-time high of $3.84. At that time, its market capitalization stood at $139.4 billion, accounting for 20% of the entire crypto market.

However, regulatory challenges and negative sentiment surrounding XRP led to a substantial price retracement. Currently, XRP is trading at around $0.50, a significant drop from its ATH. The current market capitalization of XRP is $26.29 billion, representing around 2.5% of the total crypto market capitalization.
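The figures quoted above can be cross-checked with a little arithmetic. The sketch below is illustrative only: it takes the article's numbers at face value, the function name is hypothetical, and because the market-share percentages are rounded ("around 2.5%"), the implied totals are rough estimates rather than reported data.

```python
def implied_total_market(asset_market_cap, share_of_market):
    """Total market size implied by an asset's cap and its fractional share."""
    return asset_market_cap / share_of_market


# Figures quoted in the article (assumed accurate for this sketch).
ath_price, current_price = 3.84, 0.50
ath_cap, ath_share = 139.4e9, 0.20
current_cap, current_share = 26.29e9, 0.025

# Percentage decline from the all-time high to the current price.
drawdown_pct = (ath_price - current_price) / ath_price * 100

# Total crypto market size implied at each point in time.
total_at_ath = implied_total_market(ath_cap, ath_share)
total_now = implied_total_market(current_cap, current_share)

# Circulating supply implied by the current cap and price.
implied_supply = current_cap / current_price

print(f"Drawdown from ATH: {drawdown_pct:.1f}%")
print(f"Implied total market at ATH: ${total_at_ath / 1e9:,.0f} billion")
print(f"Implied total market now: ${total_now / 1e9:,.0f} billion")
print(f"Implied circulating supply: {implied_supply / 1e9:.2f} billion XRP")
```

Read this way, the drop from $3.84 to $0.50 is a decline of roughly 87%, and XRP's smaller share reflects both its lower valuation and a larger overall crypto market.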

Ripple (XRP) Current Fundamentals

Despite the price volatility, Ripple (XRP) has established strong partnerships and collaborations within the financial industry. It has joined forces with companies like Mastercard and Bank of America, as well as central banks worldwide. These partnerships demonstrate the potential for XRP to play a significant role in the global financial ecosystem.

Moreover, XRP has a decentralized circulating supply, with the top 10 addresses holding only 10.7% of the total supply. This decentralization sets XRP apart from other cryptocurrencies like Dogecoin and Ethereum, where a small number of addresses control a significant portion of the circulating supply.

Ripple (XRP) Price Prediction - Industry Experts Opinion

When it comes to predicting the future price of XRP, there is a wide range of opinions among industry experts. Let's explore some of the insights shared by analysts and traders.

Technical Analysis Predictions - Technical analysis is a popular method used to forecast price movements based on historical data and chart patterns. While it's important to consider other factors, technical analysis can provide valuable insights into potential price trends.

One technical analyst, known as NeverWishing on TradingView, has predicted that XRP could reach $33 by the end of the year. Their analysis suggests a potential correction in October, followed by a bullish surge in November.

Note - Start Your Free Trial Today and Uncover Your Token's Price Prediction and Forecast on Token Metrics.

Is Ripple (XRP) a Good Investment?

Whether Ripple (XRP) is a good investment depends on various factors, including individual risk tolerance, investment goals, and market conditions.

It's essential to conduct thorough research and seek professional advice before making any investment decisions.

Ripple's solid partnerships and focus on solving real-world cross-border payment challenges have positioned it as a potential disruptor in the financial industry.

If Ripple continues to expand its network and gain regulatory clarity, it could attract more institutional investors and potentially drive up the price of XRP.

However, it's crucial to note that investing in cryptocurrencies carries inherent risks, including price volatility and regulatory uncertainties. Investors should carefully consider these risks before allocating capital to XRP or any other digital asset.

Can XRP Reach 500 Dollars?

No. Given current market conditions and XRP's fundamentals, a $500 price is highly unlikely in the foreseeable future, although it remains a topic of debate among analysts and traders. While it is theoretically possible, several factors put this target far out of reach for now.

To reach $500, XRP's price would need to increase by approximately 100,000% from its current price of $0.50. This would result in a market capitalization of over $26 trillion, surpassing the combined value of the four largest public companies in the world - Apple, Microsoft, Saudi Aramco, and Alphabet.
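The arithmetic behind these figures can be checked with a short sketch. It assumes the article's quoted price ($0.50) and market capitalization ($26.29 billion), and it assumes circulating supply stays constant so that market cap scales directly with price. The function name is illustrative only.

```python
def price_target_math(current_price, current_market_cap, target_price):
    """Return (multiple, percent_increase, implied_market_cap) for a target price.

    Assumes circulating supply stays fixed, so market cap scales with price.
    """
    multiple = target_price / current_price
    percent_increase = (multiple - 1) * 100
    implied_market_cap = current_market_cap * multiple
    return multiple, percent_increase, implied_market_cap


# Figures quoted in the article: $0.50 price, $26.29 billion market cap.
multiple, pct, cap = price_target_math(0.50, 26.29e9, 500.0)

print(f"Required multiple: {multiple:,.0f}x")
print(f"Percent increase: {pct:,.0f}%")
print(f"Implied market cap: ${cap / 1e12:,.2f} trillion")
```

Under these assumptions the price must rise 1,000-fold, an increase of 99,900% (which the text rounds to about 100,000%), and the implied market capitalization is about $26.29 trillion.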

While XRP has demonstrated its potential for growth in the past, achieving such a high price target would require unprecedented market adoption and widespread usage of XRP in global financial transactions.

Risks and Rewards

Investing in XRP, like any other cryptocurrency, comes with risks and potential rewards. It's essential to consider these factors before making any investment decisions.

Risks:

- Regulatory Uncertainty: XRP's status as a security has been a point of contention, leading to legal challenges and regulatory scrutiny. Any adverse regulatory decisions could negatively impact XRP's price and market sentiment.

- Market Volatility: Cryptocurrencies, including XRP, are known for their price volatility. Sharp price fluctuations can result in substantial gains or losses, making it a high-risk investment.

- Competition: XRP faces competition from other cryptocurrencies and digital payment solutions in the cross-border payment space. The success of XRP depends on its ability to differentiate itself and gain market share.

Rewards:

- Potential for Growth: XRP has demonstrated its growth potential, reaching significant price highs. If Ripple continues to forge partnerships and gain regulatory clarity, XRP could experience further price appreciation.

- Disruptive Technology: Ripple's technology has the potential to revolutionize cross-border payments by making them faster, more cost-effective, and more accessible. Increased adoption of Ripple's solutions could drive up the demand for XRP.

- Diversification: Including XRP in an investment portfolio can provide diversification benefits, as cryptocurrencies often have a low correlation with traditional asset classes like stocks and bonds.

Future Potential of Ripple (XRP)

While reaching $500 in the near term is highly unlikely, Ripple (XRP) still holds potential for growth and innovation in the long run. The company's partnerships, focus on solving real-world payment challenges, and disruptive technology position it well for future success.

As the global financial industry embraces digitalization and seeks more efficient cross-border payment solutions, Ripple and XRP could play a significant role in shaping the future of finance.

Finding Crypto Moonshots: How Token Metrics Helps You Spot the Next 100x Opportunity

While XRP remains a strong contender in the digital payments space, the biggest gains in every crypto bull market often come from lesser-known, low-cap assets known as moonshots. A moonshot in crypto refers to a high-potential altcoin—typically with a market capitalization under $100 million—that is positioned to deliver outsized returns, often 10x to 100x or more. These tokens tend to fly under the radar until momentum, innovation, or narrative alignment triggers exponential growth. However, identifying the right moonshot before the crowd catches on requires more than luck—it demands deep research, data analysis, and precise timing.

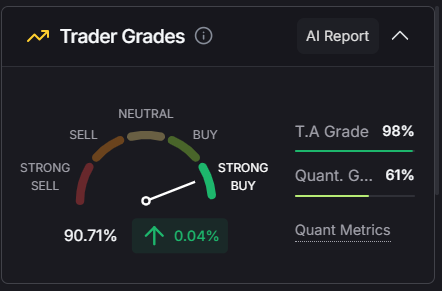

That’s where Token Metrics becomes an essential tool for any crypto investor. Powered by AI, data science, and years of market intelligence, Token Metrics makes it possible to discover altcoin moonshots before they go mainstream. The platform’s Moonshots Ratings Page surfaces under-the-radar crypto projects based on real-time performance data, low market cap, high trader/investor grade, and strong narrative alignment across sectors like AI, DePIN, Real-World Assets (RWAs), and Layer-1 ecosystems.

Finding a moonshot on Token Metrics is simple:

- Step 1: Visit the Ratings section and click on the Moonshots tab.

- Step 2: Filter tokens by market cap, volume, and recent ROI to identify breakout candidates.

- Step 3: Analyze each token’s fundamentals via the Token Details page—including price charts, token holders, on-chain activity, and AI-generated forecasts.

- Step 4: Compare with historical Past Moonshots to see which types of projects outperformed during previous cycles.

- Step 5: Take action directly from the Moonshots page using Token Metrics’ integrated swap widget—making it fast and easy to buy when opportunity strikes.

What sets Token Metrics apart is its use of AI to track more than 80 metrics, giving you a data-driven edge to act before the rest of the market. It doesn't just highlight the next promising token; it gives you the context to build conviction. With features like Token Metrics AI Agent, you can ask questions like “What’s the best AI token under $50M?” or “Which moonshots have performed best this quarter?” and get tailored answers based on real data.

In a volatile market where timing is everything, having a reliable tool to detect moonshots early can mean the difference between a 2x and a 100x. Whether you're diversifying beyond large caps like XRP or looking to deploy capital into asymmetric opportunities, Token Metrics offers the most powerful moonshot discovery engine in crypto. Start your free trial today to uncover the next breakout token before it hits the headlines—and potentially turn small bets into life-changing gains.

Conclusion

In conclusion, the possibility of XRP reaching $500 is a topic of debate. While some technical analysts and traders have made bullish predictions, the consensus among experts suggests that such a price target is highly unlikely in the near term.

Investors considering XRP should carefully evaluate its fundamentals, market conditions, and individual risk tolerance. While XRP has the potential for growth and innovation, investing in cryptocurrencies carries inherent risks that should not be overlooked.

As with any investment, it is crucial to conduct thorough research, seek professional advice, and make informed decisions based on your financial goals and risk tolerance.

Frequently Asked Questions

Q1. How was Ripple (XRP) first introduced to the cryptocurrency market?

Ripple (XRP) was first introduced to the cryptocurrency market in 2013 and has become a prominent digital asset.

Q2. Why is Ripple's focus primarily on financial institutions?

Ripple aims to revolutionize the traditional financial transaction system by providing fast and low-cost international transfers. Focusing on financial institutions lets it target the root of many cross-border transaction inefficiencies.

Q3. Has XRP ever been the subject of regulatory actions or legal challenges?

Yes, XRP has faced regulatory uncertainties and challenges regarding its status as a security, which has impacted its market sentiment and price.

Q4. How does XRP's decentralization compare to that of Bitcoin?

While XRP prides itself on a decentralized circulating supply, with the top 10 addresses holding only 10.7% of the total supply, Bitcoin is also decentralized but with different distribution metrics.

Q5. Are there any major industry players who have expressed optimism or pessimism about XRP's future?

While the article does mention partnerships and collaborations, the sentiment of other major industry players varies, and thorough research is advised before investing.

Q6. How does XRP aim to differentiate itself from other cryptocurrencies in the cross-border payment space?

XRP's main differentiation is its primary focus on solving real-world cross-border payment challenges, its partnerships with major financial institutions, and its potential to provide faster, more cost-effective transactions.

Q7. What factors should be considered when deciding the right time to invest in XRP?

Prospective investors should consider XRP's historical performance, current market conditions, regulatory environment, partnerships, and individual risk tolerance before investing.

Q8. Where can potential investors seek professional advice specifically about XRP investments?

Potential investors should consult financial advisors, cryptocurrency experts, or investment firms familiar with the crypto market to get tailored advice about XRP investments.

Disclaimer

The information provided on this website does not constitute investment advice, financial advice, trading advice, or any other sort of advice, and you should not treat any of the website's content as such.

Token Metrics does not recommend that any cryptocurrency should be bought, sold, or held by you. Conduct your due diligence and consult your financial advisor before making investment decisions.
