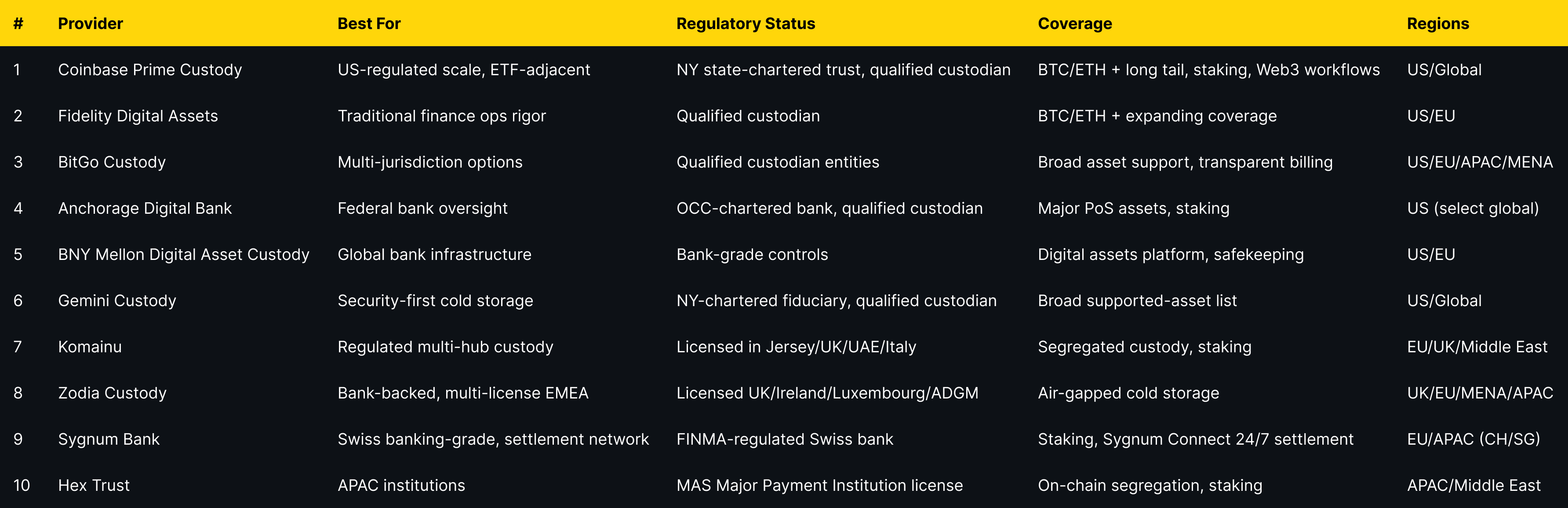

Top Institutional Crypto Custody Providers (2025)

Why Institutional Crypto Custody Providers Matter in September 2025

Institutional custody is the backbone of professional digital-asset operations. The right institutional custody provider can safeguard private keys, segregate client assets, streamline settlement, and enable workflows like staking, financing, and governance. In one sentence: an institutional crypto custodian is a regulated organization that safekeeps private keys and operationalizes secure asset movements for professional clients. In 2025, rising ETF inflows, tokenization pilots, and on-chain settlement networks make safe storage and compliant operations non-negotiable. This guide is for funds, treasuries, brokers, and corporates evaluating digital asset custody partners across the US, EU, and APAC. We compare security posture, regulatory status (e.g., qualified custodian where applicable), asset coverage, fees, and enterprise UX—so you can shortlist fast and execute confidently.

How We Picked (Methodology & Scoring)

- Liquidity (30%): Depth/venues connected, settlement rails, prime/brokerage adjacency.

- Security (25%): Key management (HSM/MPC), offline segregation, audits/SOC reports, insurance disclosures.

- Coverage (15%): Supported assets (BTC/ETH + long tail), staking, tokenized products.

- Costs (15%): Transparent billing, AUC bps tiers, network fee handling, minimums.

- UX (10%): Console quality, policy controls, APIs, reporting.

- Support (5%): White-glove ops, SLAs, incident response, onboarding speed.

Data sources: Official product/docs, trust/security pages, regulatory/licensing pages, and custodian legal/fee disclosures. Market size/sentiment cross-checked with widely cited datasets; we did not link third parties in-body.

Last updated September 2025.

Top 10 Institutional Crypto Custody Providers in September 2025

1. Coinbase Prime Custody — Best for US-regulated scale

Why Use It: Coinbase Custody Trust Company is a NY state-chartered trust and qualified custodian, integrated with Prime trading, staking, and Web3 workflows. Institutions get segregated cold storage, SOC 1/2 audits, and policy-driven approvals within a mature prime stack.

Best For: US managers, ETF service providers, funds/treasuries that need deep liquidity + custody.

Notable Features:

- Qualified custodian (NY Banking Law) with SOC 1/2 audits

- Vault architecture + policy engine; Prime integration

- Staking and governance support via custody workflows.

Consider If: You want a single pane for execution and custody with US regulatory clarity.

Alternatives: Fidelity Digital Assets, BitGo

Fees/Notes: Enterprise bps on AUC; network fees pass-through.

Regions: US/Global (eligibility varies).

2. Fidelity Digital Assets — Best for traditional finance ops rigor

Why Use It: A division of Fidelity with an integrated custody + execution stack designed for institutions, offering cold-storage execution without moving assets and traditional operational governance.

Best For: Asset managers, pensions, corporates seeking a blue-chip brand and conservative controls.

Notable Features:

- Integrated custody + multi-venue execution

- Operational governance and reporting ethos from TradFi

- Institutional research and coverage expansion.

Consider If: You prioritize a legacy financial brand with institutional processes.

Alternatives: BNY Mellon, Coinbase Prime

Fees/Notes: Bespoke enterprise pricing.

Regions: US/EU (eligibility varies).

3. BitGo Custody — Best for multi-jurisdiction options

Why Use It: BitGo operates qualified custody entities with coverage across North America, EMEA, and APAC, plus robust policy controls and detailed billing methodology for AUC.

Best For: Funds, market makers, and enterprises needing global entity flexibility.

Notable Features:

- Qualified custodian entities; segregated wallets

- Rich policy tooling and operational controls

- Transparent AUC billing methodology (bps)

Consider If: You need multi-region setup or bespoke operational segregation.

Alternatives: Komainu, Zodia Custody

Fees/Notes: Tiered AUC bps; bespoke network ops.

Regions: US/EU/APAC/MENA.

4. Anchorage Digital Bank — Best for federal bank oversight

Why Use It: The only crypto-native bank with an OCC charter in the US; a qualified custodian with staking and governance alongside institutional custody.

Best For: US institutions that want bank-level oversight and crypto-native tech.

Notable Features:

- OCC-chartered bank; qualified custodian

- Staking across major PoS assets

- Institutional console + policy workflows

Consider If: You need federal oversight and staking inside custody.

Alternatives: Coinbase Prime Custody, Fidelity Digital Assets

Fees/Notes: Enterprise pricing; staking terms by asset.

Regions: US (select global clients).

5. BNY Mellon Digital Asset Custody — Best for global bank infrastructure

Why Use It: America’s oldest bank runs an institutional Digital Assets Platform for safekeeping and on-chain services, built on its global custody foundation—ideal for asset-servicing integrations.

Best For: Asset servicers, traditional funds, and banks needing large-scale controls.

Notable Features:

- Integrated platform for safekeeping/servicing

- Bank-grade controls and lifecycle tooling

- Enterprise reporting and governance

Consider If: You prefer a global bank custodian with mature ops.

Alternatives: Fidelity Digital Assets, Sygnum Bank

Fees/Notes: Custom; bank service bundles.

Regions: US/EU (eligibility varies).

6. Gemini Custody — Best for security-first cold storage

Why Use It: Gemini Trust Company is a NY-chartered fiduciary and qualified custodian with air-gapped cold storage, role-based governance, and SOC reports—plus optional insurance coverage for certain assets.

Best For: Managers and corporates prioritizing conservative cold storage.

Notable Features:

- Qualified custodian; segregated cold storage

- Role-based governance and biometric access

- Broad supported-asset list

Consider If: You need straightforward custody without bundled trading.

Alternatives: BitGo, Coinbase Prime Custody

Fees/Notes: Tailored plans; network fees apply.

Regions: US/Global (eligibility varies).

7. Komainu — Best for regulated multi-hub custody (Jersey/UK/UAE/EU)

Why Use It: Nomura-backed Komainu operates regulated custody with segregation and staking, supported by licenses/registrations across Jersey, the UAE (Dubai VARA), the UK, and Italy—useful for cross-border institutions.

Best For: Institutions needing EMEA/Middle East optionality and staking within custody.

Notable Features:

- Regulated, segregated custody

- Institutional staking from custody

- Governance & audit frameworks

Consider If: You require multi-jurisdiction regulatory coverage.

Alternatives: Zodia Custody, BitGo

Fees/Notes: Enterprise pricing on request.

Regions: EU/UK/Middle East (global eligibility varies).

8. Zodia Custody — Best for bank-backed, multi-license EMEA coverage

Why Use It: Backed by Standard Chartered, Zodia provides institutional custody with air-gapped cold storage, standardized controls, and licensing/registrations across the UK, Ireland, Luxembourg, and Abu Dhabi (ADGM).

Best For: Asset managers and treasuries seeking bank-affiliated custody in EMEA.

Notable Features:

- Air-gapped cold storage & policy controls

- Multi-region regulatory permissions (EMEA/MENA)

- Institutional onboarding and reporting

Consider If: You want bank-backed governance and EU/Middle East reach.

Alternatives: Komainu, BNY Mellon

Fees/Notes: Custom pricing.

Regions: UK/EU/MENA/APAC (per license/authorization).

9. Sygnum Bank — Best for Swiss banking-grade custody + settlement network

Why Use It: FINMA-regulated Swiss bank providing off-balance-sheet crypto custody, staking, and Sygnum Connect—a 24/7 instant settlement network for fiat, crypto, and stablecoins.

Best For: EU/Asia institutions valuing Swiss regulation and bank-grade controls.

Notable Features:

- Off-balance-sheet, ring-fenced custody

- Staking from custody and asset risk framework

- Instant multi-asset settlement (Sygnum Connect)

Consider If: You want Swiss regulatory assurances + 24/7 settlement.

Alternatives: AMINA Bank, BNY Mellon

Fes/Notes: AUC bps; see price list. Regions: EU/APAC (CH/SG).

10. Hex Trust — Best for APAC institutions with MAS-licensed stack

Why Use It: A fully licensed APAC custodian offering on-chain segregation, role-segregated workflows, staking, and—in 2025—obtained a MAS Major Payment Institution license to offer DPT services in Singapore, rounding out custody + settlement.

Best For: Funds, foundations, and corporates across Hong Kong, Singapore, and the Middle East.

Notable Features:

- On-chain segregated accounts; auditability

- Policy controls with granular sub-accounts

- Staking & integrated markets services

Consider If: You want APAC-native licensing and operational depth.

Alternatives: Sygnum Bank, Komainu

Fees/Notes: Enterprise pricing; insurance program noted. Regions: APAC/Middle East (licensing dependent).

Decision Guide: Best By Use Case

- US-regulated & ETF-adjacent: Coinbase Prime Custody; Anchorage Digital Bank; Fidelity Digital Assets.

- Bank-backed in EMEA: BNY Mellon; Zodia Custody.

- Multi-jurisdiction flexibility: BitGo; Komainu.

- Swiss banking model: Sygnum Bank (and consider AMINA Bank).

- APAC-first compliance: Hex Trust.

- Cold-storage emphasis with simple pricing: Gemini Custody.

How to Choose the Right Institutional Custody Provider (Checklist)

- Regulatory fit: Qualified custodian or bank charter where required by your advisors/LPAs.

- Asset coverage: BTC/ETH + the specific long-tail tokens or staking assets you need.

- Operational controls: Policy rules, role segregation, whitelists, hardware/MPC key security.

- Settlement & liquidity: RFQ/OTC rails, prime integration, or instant networks.

- Fees: AUC bps, network fee handling, staking commissions, onboarding costs.

- Reporting & audit: SOC attestations, proof of segregated ownership, audit trails.

- Support: 24/7 ops desk, SLAs, incident processes.

Red flags: Commingled wallets, unclear ownership/legal structure, limited disclosures.

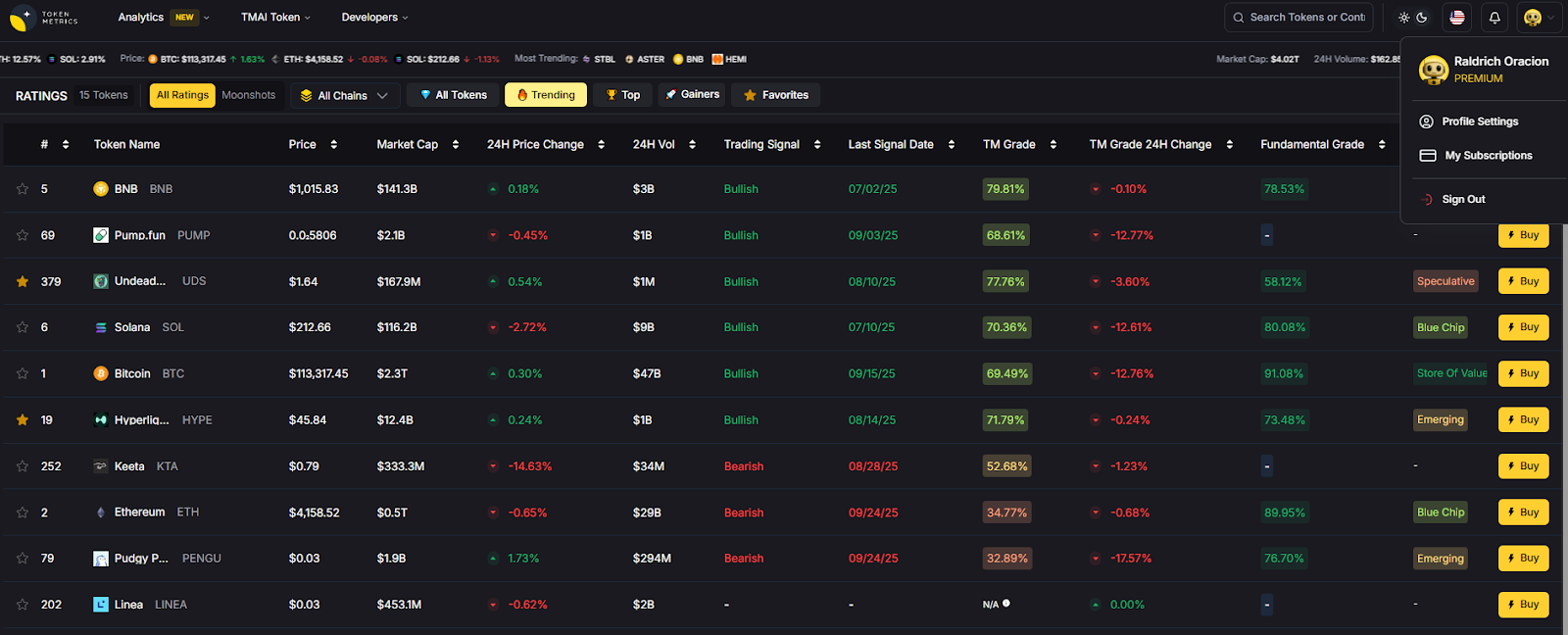

Use Token Metrics With Any Custodian

- AI Ratings: Screen assets with on-chain + quant scores to narrow to high-conviction picks.

- Narrative Detection: Identify sector momentum early (L2s, RWAs, staking).

- Portfolio Optimization: Balance risk/return before you allocate from custody.

- Alerts & Signals: Monitor entries/exits and risk while assets stay safekept.

Workflow (1–4): Research in Token Metrics → Select assets → Execute via your custodian’s trading rails/prime broker → Monitor with TM alerts.

Start free trial

Security & Compliance Tips

- Enforce hardware/MPC key ceremonies and multi-person approvals.

- Use role-segregated policies and allowlisting for withdrawals.

- Align KYC/AML and travel-rule workflows with fund docs and auditors.

- Document staking/airdrop entitlements and slashing risk treatment.

- Keep treasury cold storage separate from hot routing wallets.

This article is for research/education, not financial advice.

Beginner Mistakes to Avoid

- Picking a non-qualified entity when your mandate requires a qualified custodian.

- Underestimating operational lift (approvals, whitelists, reporting).

- Ignoring region-specific licensing/eligibility limitations.

- Focusing only on fees without evaluating security controls.

- Mixing trading and custody without strong policy separation.

FAQs

What is a qualified custodian in crypto?

A qualified custodian is a regulated entity (e.g., trust company or bank) authorized to hold client assets with segregation and audited controls, often required for investment advisers. Look for clear disclosures, SOC reports, and trust/bank charters on official pages.

Do I need a qualified custodian for my fund?

Many US advisers and institutions require qualified custody under their compliance frameworks; your legal counsel should confirm. When in doubt, choose a trust/bank chartered provider with documented segregation and audits.

Which providers support staking from custody?

Anchorage, Coinbase Prime, Komainu, Sygnum, and Hex Trust offer staking workflows from custody (asset lists vary). Confirm asset-by-asset support and commissions.

How are fees structured?

Most providers price custody in annualized basis points (bps) on average assets under custody; some publish methodologies or fee schedules. Network fees are usually passed through.

Can I keep assets off-exchange and still trade?

Yes—prime/custody integrations and instant-settlement networks let you trade while keeping keys in custody, reducing counterparty risk. Examples include Coinbase Prime and Sygnum Connect.

Are there regional restrictions I should know about?

Licensing/availability varies (e.g., Hex Trust operates under MAS MPI in Singapore; Zodia holds permissions across UK/EU/ADGM). Always confirm eligibility for your entity and region.

Conclusion + Related Reads

If you operate in the US with strict compliance needs, start with Coinbase Prime, Fidelity, or Anchorage. For bank-backed EMEA coverage, look to BNY Mellon or Zodia. For Swiss banking controls and instant settlement, Sygnum stands out; in APAC, Hex Trust offers strong licensing and workflows. BitGo and Komainu excel when you need multi-jurisdiction flexibility.

.svg)

Create Your Free Token Metrics Account

.png)

%201.svg)

%201.svg)

%201.svg)

.svg)

.png)