Top Solana Projects in 2024 - Token Metrics Moon Awards

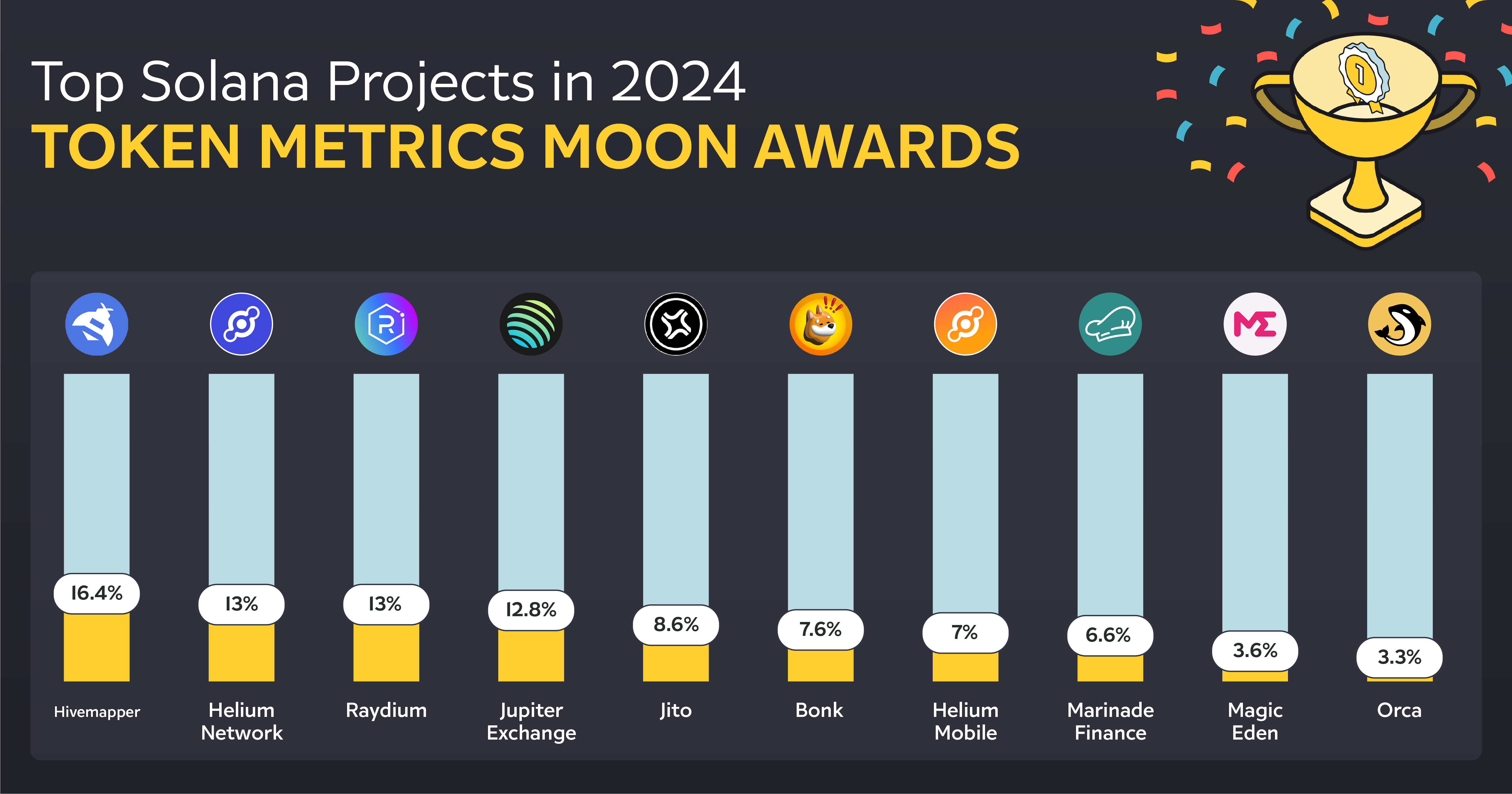

Welcome to the Token Metrics Moon Awards, a prestigious cryptocurrency industry accolade that recognizes platforms, projects, and initiatives that have significantly impacted the industry. The latest addition to the Moon Awards is the "Top Solana Projects in 2024."

Selection Process

At Token Metrics, we emphasize our audience, considering them the foundation of our operations. Our selection process is firmly rooted in engaging with the community, ensuring that our awards' results authentically reflect crypto enthusiasts' sentiments and experiences.

Through engagement with our community members, we have compiled a comprehensive list of the Top Solana Projects in 2024 based on extensive survey data and user votes.

This article aims to provide a comprehensive guide to the top Solana projects in 2024, showcasing their contributions to the ecosystem's growth despite facing challenges.

Through an in-depth exploration, we will uncover how these projects drive innovation, the technological advancements they bring, and their role in shaping the future of decentralized applications on Solana.

Solana Ecosystem Overview

Solana's ecosystem is rapidly evolving, with several competitive advantages propelling it to the forefront of blockchain technology. Notably, its ability to process transactions quickly and cheaply has made it a popular choice for developers and investors alike. Some of the highlights include:

Competitive Advantages:

- Cheap transactions

- Faster processing speed

- Growing meme coins contributing to network activity

Resurgence and Growth:

- Price recovery and zero network outages since February 2023

- Over 125 protocols, handling 100 million transactions daily

- Increased attention from investors and developers, especially in Decentralized Physical Infrastructure Networks (DePIN)

These elements underscore Solana's robust and dynamic ecosystem, setting the stage for its continued growth and innovation in 2024.

List of Top Solana Projects in 2024

Delving into the bustling Solana ecosystem for 2024, we uncover a spectrum of projects that stand out for their innovation, utility, and contribution to the blockchain space.

These projects span various domains, including decentralized exchanges (DEXs), lending protocols, NFT marketplaces, and more, showcasing the versatility and robustness of the Solana network.

Here's a closer look at some of the top Solana projects set to make significant impacts in 2024:

1. Hivemapper

In the Moon Awards survey, Hivemapper secured the top position with 16.4% of the total votes.

Hivemapper emerges as a revolutionary force within the Solana ecosystem, championing the cause of decentralized mapping. This crypto-enabled dashcam project is not just about mapping the world; it's about reimagining who owns and contributes to that map.

With nearly 140 million kilometers mapped across 2,503 regions worldwide, Hivemapper exemplifies the shift from traditional, centralized mapping services to a more democratic, user-driven approach.

This transformation is pivotal for accurate, up-to-date maps essential for navigation and urban planning.

Key Features and Innovations:

- Decentralized Mapping: Leveraging the Solana blockchain, it offers a democratic way for global contributors to participate in map creation and updates.

- Comprehensive Coverage: The project maps nearly 140 million kilometers, covering 2,503 regions globally.

- Reward System: Contributors are rewarded for their input. The full reward opportunity for each road resets every 7 days, which encourages continuous updates.

Hivemapper's technological backbone is equally impressive. The platform employs its in-house Titan engine to process massive datasets. The engine performs sensor fusion, machine learning and computer vision processing, and crowd consensus review, and it ensures the continuity of network data.

Furthermore, Hivemapper is pioneering in hardware development, working with multiple partners in the United States to build dashcams that collect 4K imagery and various telemetry data, all encrypted for security.

2. Helium Network

In the Moon Awards survey, Helium Network secured the 2nd position with 13% of the total votes.

The Helium Network, under the stewardship of the Helium Foundation, represents a paradigm shift in decentralized wireless technologies. Established in 2021, the Foundation is a US-based non-profit organization at the forefront of protocol governance and the technical evolution of the Helium Network.

It's instrumental in educating the public about the advantages of decentralized wireless networks and fostering a vibrant community of network builders, users, and developers.

Key Aspects of the Helium Network:

- Foundation's Role: The Helium Foundation is central to driving the network's codebase and operations, ensuring a seamless experience for all stakeholders involved. It's committed to expanding public awareness and enhancing the network's utility through innovative use cases.

- Membership Program: Encompassing Hardware Manufacturers, Network Operators, Service Providers, and Application Developers, the program is set to open its doors to individual enthusiasts, including Hotspot hosts, in 2024.

- Migration to Solana: A significant milestone was the network's migration to Solana in April 2023, which brought substantial performance and scalability improvements. This move has also paved the way for the network to delve into the mobile and IoT sectors, with initiatives allowing users to earn tokens by supporting the network.

The Helium Network's journey through 2023 into 2024, marked by a 500% surge following its venture into mobile services, reflects its growing influence within the Solana ecosystem.

With the Helium Wallet now supporting all tokens on the Solana blockchain, the network is poised for further expansion, leveraging its DePIN model to offer decentralized connectivity services.

3. Raydium

In the Moon Awards survey, Raydium secured the 3rd position with 13% of the total votes.

Raydium stands as a pivotal Solana project, acting as a bridge between the worlds of decentralized finance (DeFi) and automated market makers (AMMs).

This platform has carved out a niche for itself within the Solana ecosystem by offering a suite of financial services that cater to both novice and seasoned crypto enthusiasts.

Key Features and Innovations:

- AMM Protocol: At its core, Raydium leverages the power of Solana's high-speed blockchain to facilitate lightning-fast trades and transactions. This not only enhances user experience but also contributes to the overall liquidity of the Solana ecosystem.

- Dual Functionality: Raydium functions as both an AMM and a liquidity provider to a central limit order book: originally the Serum DEX and, since Serum was wound down in late 2022, its community fork OpenBook. This positioning allows Raydium users to access order-book liquidity and a wide range of trading pairs.
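To make the AMM idea concrete, here is a minimal sketch of a swap against a constant-product pool (x * y = k), the textbook AMM design. The function name, the reserve figures, and the 0.25% fee are illustrative assumptions and are not taken from Raydium's on-chain programs.

```python
def constant_product_swap(reserve_in: float, reserve_out: float,
                          amount_in: float, fee: float = 0.0025) -> float:
    """Return the output amount for a swap against an x * y = k pool.

    `fee` is a hypothetical pool fee (0.25% here), charged on the input.
    """
    if reserve_in <= 0 or reserve_out <= 0 or amount_in <= 0:
        raise ValueError("reserves and amount_in must be positive")
    if not 0 <= fee < 1:
        raise ValueError("fee must be in [0, 1)")

    # The fee is taken from the input before the invariant is applied.
    effective_in = amount_in * (1 - fee)

    # Keeping (reserve_in + effective_in) * (reserve_out - out) equal to
    # reserve_in * reserve_out and solving for `out` gives:
    return reserve_out * effective_in / (reserve_in + effective_in)


if __name__ == "__main__":
    # Hypothetical pool holding 1,000 units of each token.
    print(constant_product_swap(1000.0, 1000.0, 10.0))
```

Because the output comes from the pool's reserves, larger trades relative to pool depth receive a worse effective price, which is why liquidity matters so much to traders.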

Technological Advancements:

- High Throughput: Utilizing Solana's capability of processing thousands of transactions per second, Raydium offers a seamless and efficient trading experience.

- Yield Farming: Raydium introduces users to yield farming opportunities, enabling them to earn rewards by providing liquidity to various pools.

4. Jupiter Exchange

In the Moon Awards survey, Jupiter Exchange secured the 4th position with 12.8% of the total votes.

Jupiter Exchange (JUP) is a cutting-edge swap aggregator within the vibrant Solana ecosystem. It harnesses Solana's speed and efficiency to offer various advanced trading features, making it a standout choice for traders seeking flexibility and depth in their trading strategies. Here's a closer look at what makes Jupiter Exchange a notable Solana project:

Advanced Trading Features:

- Dollar Cost Averaging (DCA): Allows users to spread their investment over time, reducing the impact of volatility.

- Limit Orders: Users can set specific price points at which their trades automatically execute, offering greater control over trading outcomes.

- Perpetual Trading: Provides the opportunity for traders to engage in contracts that do not have an expiry date, enhancing trading flexibility.
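The effect of dollar cost averaging can be shown with a little arithmetic. The sketch below assumes a fixed budget per purchase and a hypothetical price series; it is not Jupiter's API, only the underlying calculation.

```python
from typing import Sequence


def dca_average_cost(budget_per_buy: float, prices: Sequence[float]) -> float:
    """Average cost per token when spending a fixed budget at each price."""
    if budget_per_buy <= 0 or not prices or any(p <= 0 for p in prices):
        raise ValueError("budget and all prices must be positive")

    # A fixed budget buys more tokens when the price is low.
    tokens_bought = sum(budget_per_buy / p for p in prices)
    total_spent = budget_per_buy * len(prices)
    return total_spent / tokens_bought


if __name__ == "__main__":
    prices = [100.0, 50.0, 200.0]          # hypothetical prices at each buy
    average_cost = dca_average_cost(100.0, prices)
    simple_mean = sum(prices) / len(prices)
    print(f"DCA average cost: {average_cost:.2f}")
    print(f"Simple mean price: {simple_mean:.2f}")
```

Since equal amounts of money buy more tokens at lower prices, the resulting average cost is the harmonic mean of the purchase prices, which never exceeds their simple average.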

Jupiter's commitment to efficiency, low transaction costs, and innovative offerings like swap services and perpetual exchange capabilities, coupled with a significant daily trading volume of $400 million and 90,000 unique wallets, underscores its importance within the Solana projects landscape.

5. Jito

In the Moon Awards survey, Jito secured the 5th position with 8.6% of the total votes.

In the vibrant Solana ecosystem, Jito stands as a beacon of innovation, particularly in the space of high-frequency trading on decentralized exchanges.

This Solana project is meticulously designed to address the common pain points encountered in decentralized finance (DeFi), offering solutions that significantly enhance trading efficiency and user experience.

High-Frequency Trading Capabilities:

- Jito utilizes the Solana blockchain's high throughput and low latency to facilitate high-frequency trading, a feature traditionally reserved for centralized financial markets.

- This allows traders to execute orders at lightning speed, capitalizing on market movements in real time.

Slippage Reduction:

- One of the platform's key innovations is its ability to drastically reduce slippage, a common issue that traders face in less liquid markets.

- By aggregating liquidity across multiple sources, Jito ensures that trades are executed at the most favorable prices, minimizing the cost impact on large orders.
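As a rough illustration of why aggregating liquidity reduces slippage, the sketch below compares one fee-free constant-product pool with an order split across two pools in proportion to their depth. The pool model, function names, and figures are assumptions made for this example and do not describe Jito's actual routing.

```python
from typing import Sequence


def price_impact(reserve_in: float, amount_in: float) -> float:
    """Fraction of value lost versus the spot price in a fee-free x * y = k pool."""
    if reserve_in <= 0 or amount_in <= 0:
        raise ValueError("reserve_in and amount_in must be positive")
    # The trade receives reserve_in / (reserve_in + amount_in) of the spot
    # quote, so the shortfall is amount_in / (reserve_in + amount_in).
    return amount_in / (reserve_in + amount_in)


def blended_price_impact(pool_reserves: Sequence[float], amount_in: float) -> float:
    """Impact when the order is split across pools in proportion to depth."""
    total_depth = sum(pool_reserves)
    impact = 0.0
    for reserve in pool_reserves:
        share = reserve / total_depth
        # Weight each pool's impact by the share of the order it receives.
        impact += share * price_impact(reserve, amount_in * share)
    return impact


if __name__ == "__main__":
    print(price_impact(1000.0, 100.0))                     # one pool
    print(blended_price_impact([1000.0, 1000.0], 100.0))   # two pools
```

In this simplified model, splitting the same order across two equally deep pools roughly halves the price impact, which is the intuition behind routing across multiple liquidity sources.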

Its emphasis on speed, efficiency, and reduced slippage positions Jito as a critical player among Solana projects, pushing the boundaries of what's possible in the realm of decentralized trading.

6. Bonk

In the Moon Awards survey, Bonk secured the 6th position with 7.6% of the total votes.

Bonk (BONK), a meme coin within the Solana ecosystem, has captured the attention of the cryptocurrency market with its significant traction.

- BONKrewards by ArmadaFi: This initiative allows users to lock BONK for rewards, driving adoption and utility within the Solana ecosystem.

- Competition and Roadmap: Bonk faces competition from other meme coins, so its development roadmap and the team's efforts could influence investor sentiment and its market position.

Bonk's deflationary nature, with over 65% of its total circulating supply burnt, alongside a 10,000% gain in 2023, underscores its status as the hottest meme coin of 2024.

The Bonk DAO's investment in early-stage startups and the engaging Bonk March Madness event further highlight its community-driven approach and innovative use within the Solana projects landscape.

7. Helium Mobile

In the Moon Awards survey, Helium Mobile secured the 7th position with 7% of the total votes.

Helium Mobile's integration into the Solana ecosystem marks a significant leap forward in decentralized wireless communication, offering users an innovative and expansive service.

With the Helium Wallet's upgrade to support a wide range of digital assets on the Solana blockchain, users gain a streamlined experience in managing their Solana-based tokens.

This move not only enhances the utility of the Helium Wallet but also demonstrates Helium's dedication to embracing crypto diversity. The exclusive Pixel NFT, available in the "Badges" tab, rewards users for their engagement, adding a unique element to the user experience.

Key Developments:

- Coinbase Support: Full trading support for Helium Mobile on Coinbase amplifies its accessibility and potential for widespread adoption.

- Growth Metrics: The remarkable growth, evidenced by 12,398 active 5G nodes and a surge in new subscribers, indicates a rapidly expanding user base, with projections suggesting nearly 530,000 new subscribers within the year.

Helium Mobile's strategic adjustments to mining rules and the relationship between MOBILE and HNT tokens showcase a thoughtful approach to maintaining a balanced supply-demand dynamic.

8. Marinade Finance

In the Moon Awards survey, Marinade Finance secured the 8th position with 6.6% of the total votes.

Marinade Finance simplifies the concept of staking on the Solana blockchain, offering users an innovative approach to maximize their returns.

By automating the staking process across over 100 top-performing validators and providing an automated rebalancing feature, Marinade ensures that users' SOL is always working hard for them.

This automation not only optimizes returns but also democratizes access to high-quality staking opportunities, previously only available to those with extensive blockchain knowledge.

Key features that set Marinade Finance apart include:

- Instant Unstake: Users can exit their stake positions instantly at the best available price, offering unparalleled flexibility in managing their investments.

- Liquid Staking: By unlocking the liquidity of staked SOL, Marinade enables users to participate in DeFi, further enhancing the utility of their assets.

- mSOL Receipt Token: Users who stake SOL with Marinade receive mSOL, a fungible receipt token that can be used as collateral across various DeFi projects.
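The receipt-token idea can be sketched with a simplified accounting model: deposits mint receipt tokens at the current exchange rate, and staking rewards raise the value of each receipt token. The class and method names below are hypothetical, and the model ignores fees and unstaking delays; it is not Marinade's on-chain program.

```python
from dataclasses import dataclass


@dataclass
class LiquidStakingPool:
    """Toy model of a liquid staking pool (hypothetical, fee-free)."""
    total_sol: float = 0.0        # SOL staked through the pool
    receipt_supply: float = 0.0   # receipt tokens in circulation

    def price(self) -> float:
        """SOL value of one receipt token; 1.0 for an empty pool."""
        if self.receipt_supply == 0:
            return 1.0
        return self.total_sol / self.receipt_supply

    def deposit(self, sol: float) -> float:
        """Stake SOL and return the number of receipt tokens minted."""
        if sol <= 0:
            raise ValueError("deposit must be positive")
        minted = sol / self.price()
        self.total_sol += sol
        self.receipt_supply += minted
        return minted

    def accrue_rewards(self, sol: float) -> None:
        """Staking rewards grow the pool without minting new tokens."""
        self.total_sol += sol


if __name__ == "__main__":
    pool = LiquidStakingPool()
    pool.deposit(100.0)
    pool.accrue_rewards(10.0)
    print(pool.price())
```

In this model the holder's token balance stays constant while the exchange rate rises, which is what lets a receipt token circulate in DeFi while the underlying SOL keeps earning staking rewards.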

Moreover, Marinade Finance provides a comprehensive platform for users to review and understand the performance of Solana validators. Utilizing a detailed scoring system, it ranks validators based on yield economics, block production, and stake concentration, ensuring transparency and informed decision-making.
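A scoring system of this kind can be pictured as a weighted sum over the three criteria. The weights, field names, and sample validators below are purely hypothetical and are not Marinade's published formula; the sketch only shows the general shape of such a ranking.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ValidatorStats:
    name: str
    yield_score: float          # 0..1, higher is better
    block_production: float     # 0..1, share of assigned blocks produced
    stake_concentration: float  # 0..1, higher means more concentrated stake


# Hypothetical weights chosen only for illustration.
WEIGHTS = {"yield": 0.5, "blocks": 0.3, "decentralization": 0.2}


def score(v: ValidatorStats) -> float:
    """Weighted score; concentrated stake is penalized."""
    return (WEIGHTS["yield"] * v.yield_score
            + WEIGHTS["blocks"] * v.block_production
            + WEIGHTS["decentralization"] * (1 - v.stake_concentration))


def rank(validators: List[ValidatorStats]) -> List[ValidatorStats]:
    """Return validators ordered from highest to lowest score."""
    return sorted(validators, key=score, reverse=True)


if __name__ == "__main__":
    sample = [
        ValidatorStats("validator-b", 0.95, 0.80, 0.60),  # made-up figures
        ValidatorStats("validator-a", 0.90, 0.95, 0.10),
    ]
    for v in rank(sample):
        print(v.name, round(score(v), 3))
```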

9. Magic Eden

In the Moon Awards survey, Magic Eden secured the 9th position with 3.6% of the total votes.

Magic Eden has established itself as a premier NFT marketplace within the Solana ecosystem, facilitating the buying, selling, and discovery of unique digital assets across multiple blockchains. Several key features underscore its significance:

User and Creator Benefits:

- User-Friendly Interface: Ensures an intuitive navigation experience.

- Minimal Fees & Flexible Royalties: A 2% transaction fee for buyers and sellers, with optional royalties for creators.

- Magic Eden Wallet: Acts as a multi-purpose web3 navigator for storing crypto assets and interacting with NFTs.

Supported Blockchains and Collections:

- Blockchains: Solana, Ethereum, Polygon, and Bitcoin (Ordinals).

- Hot Collections Highlighted: Includes NodeMonkes, Bored Ape Yacht Club, Transdimensional Fox Federation, and Trump Digital Trading Cards, among others.

Magic Eden's blend of extensive collections, low transaction fees, and a user-friendly interface positions it as a hub for artists, creators, and enthusiasts from various blockchain backgrounds.

10. Orca

In the Moon Awards survey, Orca secured the 10th position with 3.3% of the total votes.

Orca emerges as a standout Concentrated Liquidity Automated Market Maker (AMM) on the Solana Network, designed to optimize the trading experience for both liquidity providers and traders. It achieves this through several innovative features and community-driven governance:

Concentrated Liquidity AMM:

- Liquidity providers can specify price ranges for their tokens, enhancing capital efficiency.

- Liquidity providers earn a share of the trading fees generated on the platform as an incentive, which can mean higher returns.
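The capital-efficiency claim can be checked with a short calculation. The sketch below assumes the widely used concentrated-liquidity math popularized by Uniswap v3, in which a position with liquidity L in the range [lower, upper] holds L * (1/sqrt(P) - 1/sqrt(upper)) of the base token and L * (sqrt(P) - sqrt(lower)) of the quote token at price P. Whether Orca's implementation matches these formulas exactly is an assumption here, and the function name is hypothetical.

```python
import math


def capital_efficiency(price: float, lower: float, upper: float) -> float:
    """How many times less capital a ranged position needs than a
    full-range position to provide the same liquidity at `price`."""
    if not 0 < lower < price < upper:
        raise ValueError("expected 0 < lower < price < upper")

    sqrt_p = math.sqrt(price)

    # Capital (in quote tokens) per unit of liquidity for a full-range position.
    full_range_capital = 2 * sqrt_p

    # Capital per unit of liquidity when confined to [lower, upper]:
    # base-token value plus quote-token amount.
    base_value = price * (1 / sqrt_p - 1 / math.sqrt(upper))
    quote_amount = sqrt_p - math.sqrt(lower)
    ranged_capital = base_value + quote_amount

    return full_range_capital / ranged_capital


if __name__ == "__main__":
    # Hypothetical range of roughly -17% to +21% around a price of 100.
    print(capital_efficiency(100.0, 100.0 / 1.21, 121.0))
```

The narrower the chosen range, the larger this multiplier becomes. The trade-off is that the position stops earning fees whenever the market price moves outside the range.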

Community Governance:

- Governed by the Orca DAO and the DAO council, utilizing the ORCA token for decision-making.

- ORCA token is accessible on major exchanges like Kucoin, Gate, and Coinbase, ensuring wide availability.

Orca's platform is not just about trading; it's about creating a comprehensive ecosystem for DeFi activities on Solana. Key features include:

- Whirlpools: For concentrated liquidity, allowing for higher returns and lower slippage.

- DCA Feature & Decentralized Perpetuals Trading Platform: Offers up to 100x leverage, catering to various trading strategies.

- User-Friendly Interface: Ensures minimal transaction fees and lower latency than Ethereum-based DEXs, alongside a fair price guarantee.

Conclusion

Throughout this exploration of Solana's diverse and dynamic ecosystem, we've delved into the vast potential and innovation displayed by leading Solana projects set to shape the blockchain frontier in 2024.

From the decentralized mapping marvels of Hivemapper to the financial ingenuity of Orca, each project brings its unique contributions, marking Solana's unwavering position in the digital space.

These endeavors not only illuminate the robust capabilities of the Solana blockchain in supporting high-speed, cost-effective applications, but they also underline the ecosystem's versatility across various domains, including DeFi, wireless communication, NFT marketplaces, and beyond.

Disclaimer

The information provided on this website does not constitute investment advice, financial advice, trading advice, or any other advice, and you should not treat any of the website's content as such.

Token Metrics does not recommend buying, selling, or holding any cryptocurrency. Conduct your due diligence and consult your financial advisor before making investment decisions.
