Tron Price Prediction 2026: Low-Cost Blockchain Bull Market Analysis

Why 2026 Looks Bullish for Tron Price Prediction: Market Context

The crypto market is shifting toward a broadly bullish regime into 2026 as liquidity improves and risk appetite normalizes.

Regulatory clarity across major regions is reshaping the classic four-year cycle: flows can arrive earlier and persist longer. Institutional access keeps expanding through ETFs and qualified custody, while L2 scaling and real-world integrations broaden utility. Infrastructure maturity lowers frictions for capital, which supports deeper order books and more persistent participation.

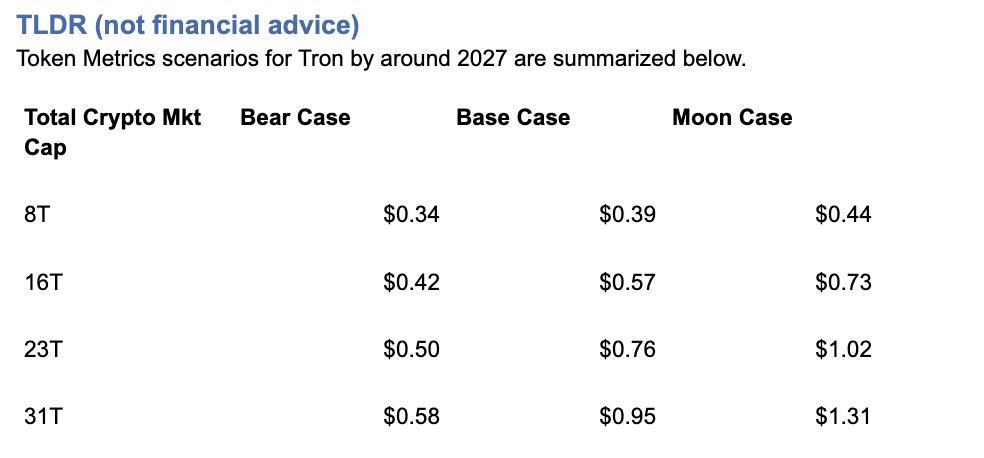

This bullish backdrop frames our Tron price prediction scenario work for TRX. The bands below map potential outcomes to different total crypto market sizes, providing comprehensive price prediction analysis for investors navigating the stablecoin transfer and payments-focused blockchain space.

Current price: $0.2971.

How to Read This TRX Price Prediction

Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.
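The market-cap share math mentioned above can be sketched roughly as follows. This is a minimal illustration and not the Token Metrics model: the function name `implied_price`, the share value, and the supply figure are hypothetical placeholders chosen only to show the arithmetic, and the article does not publish the actual inputs.

```python
def implied_price(total_market_cap_usd: float,
                  token_share: float,
                  circulating_supply: float) -> float:
    """Token price implied by holding `token_share` of the total crypto market cap."""
    if not 0.0 <= token_share <= 1.0:
        raise ValueError("token_share must be a fraction between 0 and 1")
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    # Token market cap = total cap * share; price = token market cap / supply.
    return total_market_cap_usd * token_share / circulating_supply


# Hypothetical inputs for illustration only; NOT figures from this article.
HYPOTHETICAL_SHARE = 0.005      # assumed 0.5% share of total crypto market cap
HYPOTHETICAL_SUPPLY = 100e9     # assumed 100 billion tokens in circulation

TIERS_USD = {"8T": 8e12, "16T": 16e12, "23T": 23e12}

for tier, cap in TIERS_USD.items():
    price = implied_price(cap, HYPOTHETICAL_SHARE, HYPOTHETICAL_SUPPLY)
    print(f"{tier}: implied price ${price:.2f}")
```

Holding share and supply fixed, the implied price scales linearly with total market cap. In practice a scenario band would presumably vary the share as well, which is where the bear, base, and moon cases would differ.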

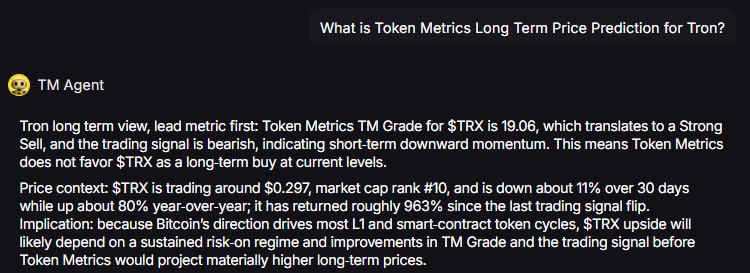

TM Agent baseline: Token Metrics TM Grade for $TRX is 19.06, which translates to a Strong Sell, and the trading signal is bearish, indicating short-term downward momentum. Price context: $TRX is trading around $0.297 with a market cap rank of #10. It is down about 11% over 30 days and up about 80% year-over-year, and it has returned roughly 963% since the last trading signal flip. Despite strong historical returns, current price prediction models reflect caution.

Live details: Tron Token Details → https://app.tokenmetrics.com/en/tron

Buy TRX: https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

Key Takeaways: Tron Price Prediction Summary

- Scenario driven: Price prediction outcomes hinge on total crypto market cap; higher liquidity and adoption lift the bands

- TM Agent gist: Bearish near term in price prediction models; upside depends on sustained risk-on regime and improvements in TM Grade and trading signal

- Current rating: Strong Sell (19.06) despite bullish 2026 macro backdrop and 80% YoY gains

- Stablecoin focus: TRX benefits from USDT transfer volume on Tron network

- $1 target: Achievable in 23T+ moon scenarios per price prediction framework

- Education only, not financial advice

Tron Price Prediction Scenario Analysis

Token Metrics price prediction scenarios span four market cap tiers, each representing different levels of crypto market maturity and liquidity:

8T Market Cap - TRX Price Prediction:

At an 8 trillion dollar total crypto market cap, our TRX price prediction framework projects specific targets in bear, base, and bullish scenarios. The 8T tier reflects conservative market conditions with steady stablecoin transfer adoption.

16T Market Cap - TRX Price Prediction:

Doubling the market to 16 trillion expands the TRX price prediction range significantly, reflecting increased institutional stablecoin usage and payments adoption that benefit Tron's high-throughput, low-cost infrastructure.

23T Market Cap - TRX Price Prediction:

At 23 trillion total crypto market cap, the price prediction scenarios show substantial upside potential, with the moon case projecting $1.02—achieving the psychologically important $1.00 milestone for TRX holders.

Each tier in our price prediction framework assumes progressively stronger market conditions, with particular emphasis on stablecoin transfer volume and payments use cases that uniquely position Tron in the blockchain ecosystem.

Why Consider the Indices with Top-100 Exposure

Diversification matters. Tron is compelling, yet concentrated bets can be volatile—especially when price predictions carry Strong Sell ratings despite bullish macro conditions. Token Metrics Indices hold TRX alongside the top one hundred tokens for broad exposure to leaders and emerging winners.

Our backtests indicate that owning the full market with diversified indices has historically outperformed both the total market and Bitcoin in many regimes due to diversification and rotation benefits, reducing single-asset risk.

Get early access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview

What Is Tron?

Tron is a smart-contract blockchain focused on low-cost, high-throughput transactions and cross-border settlement. The network supports token issuance and a broad set of dApps, with an emphasis on stablecoin transfer volume and payments—critical factors that influence TRX price predictions.

TRX is the native asset that powers fees and staking for validators and delegators within the network. Developers and enterprises use the chain for predictable costs and fast finality, which supports consumer-facing use cases. Tron has become a dominant network for USDT (Tether) transfers, creating organic demand for TRX that impacts long-term price prediction trajectories.

Catalysts That Skew TRX Price Predictions Bullish

- Institutional and retail access expands with ETFs, listings, and integrations

- Macro tailwinds from lower real rates and improving liquidity expected in 2026

- Product or roadmap milestones such as upgrades, scaling, or partnerships

- Growing USDT stablecoin transfer volume on Tron network

- Increased cross-border payment adoption and remittance use cases

- Continued low-fee competitive advantage versus Ethereum

- Enterprise blockchain partnerships leveraging Tron's throughput

Risks That Skew TRX Price Predictions Bearish

- Macro risk-off from tightening or liquidity shocks

- Regulatory actions targeting Tron or Justin Sun, or infrastructure outages

- Current Strong Sell rating (19.06) indicating significant fundamental concerns

- Concentration risk in validator economics and potential centralization issues

- Competitive displacement from faster, lower-cost L1s and L2 solutions

- Regulatory scrutiny on stablecoin operations affecting Tether/USDT

- Reputational concerns related to founder Justin Sun

- Competition from Solana, BNB Chain for stablecoin transfers

Special Offer — Token Metrics Advanced Plan with 20% Off

Unlock platform-wide intelligence on every major crypto asset. Use code ADVANCED20 at checkout for twenty percent off.

- AI powered ratings on thousands of tokens for traders and investors

- Interactive TM AI Agent to ask any crypto question

- Indices explorer to surface promising tokens and diversified baskets

- Signal dashboards, backtests, and historical performance views

- Watchlists, alerts, and portfolio tools to track what matters

- Early feature access and enhanced research coverage

Start with Advanced today → https://www.tokenmetrics.com/token-metrics-pricing

Tron Price Prediction FAQs

Can TRX reach $1?

Yes. The 23T moon case in our price prediction framework shows $1.02 and the 31T moon case shows $1.31, which imply a path to $1 in higher-liquidity regimes. From the current price of $0.2971, reaching $1 represents gains of approximately 240%. However, achieving this requires the bullish 2026 thesis to materialize with strong stablecoin adoption, and it requires overcoming the concerns behind the current Strong Sell rating (19.06). Not financial advice.
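The gain figures quoted in these FAQs follow from simple percentage arithmetic. A minimal sketch, using only the current price and the moon-case targets stated in this article (the helper name `pct_gain` is ours, for illustration):

```python
def pct_gain(current: float, target: float) -> float:
    """Percentage gain required to move from `current` to `target`."""
    if current <= 0:
        raise ValueError("current price must be positive")
    return (target / current - 1.0) * 100.0


CURRENT_PRICE = 0.2971  # current price quoted in the article

# Targets quoted in the article, plus the $1 and $2 round-number levels.
TARGETS = {
    "$1 milestone": 1.00,
    "23T moon case": 1.02,
    "31T moon case": 1.31,
    "$2 level": 2.00,
}

for label, target in TARGETS.items():
    print(f"{label}: ${target:.2f} needs about {pct_gain(CURRENT_PRICE, target):.0f}%")
```

Run against the quoted price, this gives roughly 237% for $1.00, 243% for $1.02, 341% for $1.31, and 573% for $2.00, consistent with the "approximately 240%" and "570%+" figures used in the answers.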

Is TRX a good long-term investment?

TRX presents a complex investment case with a Strong Sell rating (19.06) despite impressive historical returns (80% YoY, 963% since last signal flip). Our price prediction models show potential upside to $1.02-$1.31 in bullish scenarios, but the outcome depends heavily on adoption, liquidity regime, competition, regulatory environment, and supply dynamics. The stablecoin transfer use case provides organic demand, but fundamental concerns reflected in the Strong Sell rating suggest caution. Diversify and size positions responsibly. Not financial advice.

What is the Tron price prediction for 2026-2027?

Our TRX price prediction framework for 2026-2027 suggests Tron could reach $1.02 in the 23T moon scenario and $1.31 in the 31T moon scenario, representing significant upside from the current price of $0.2971. Base case scenarios across market cap tiers provide more conservative targets. Despite the bullish 2026 macro thesis and strong stablecoin utility, the Strong Sell rating (19.06) and bearish trading signal indicate current fundamental concerns. Not financial advice.

Should I buy TRX now or wait?

TRX has a Strong Sell rating (19.06), indicating that Token Metrics does not endorse Tron at current levels despite bullish 2026 macro conditions and 80% YoY gains. The current price of $0.2971 is down 11% over 30 days, with bearish trading signals. However, historical outperformance (963% since last signal flip) and stablecoin transfer utility create a paradox. If you believe in the 2026 bull thesis and Tron's stablecoin dominance, dollar-cost averaging may reduce timing risk. Consider diversified exposure through indices. Not financial advice.
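To illustrate how dollar-cost averaging smooths entry timing, here is a minimal sketch. The price path and purchase size are hypothetical values invented for the example, not forecasts or data from this article, and the helper name `dca_average_cost` is our own.

```python
def dca_average_cost(prices: list[float], amount_per_buy: float) -> float:
    """Average cost per token when spending a fixed amount at each price."""
    if not prices or any(p <= 0 for p in prices):
        raise ValueError("prices must be a non-empty list of positive values")
    # A fixed dollar amount buys more tokens when the price is lower.
    total_units = sum(amount_per_buy / p for p in prices)
    total_spent = amount_per_buy * len(prices)
    return total_spent / total_units


# Hypothetical price path for illustration only (NOT from the article).
hypothetical_prices = [0.30, 0.25, 0.35, 0.28]

avg_cost = dca_average_cost(hypothetical_prices, amount_per_buy=100.0)
simple_mean = sum(hypothetical_prices) / len(hypothetical_prices)

print(f"DCA average cost per token: ${avg_cost:.4f}")
print(f"Simple mean of the prices:  ${simple_mean:.4f}")
```

Because equal dollar amounts buy more units at lower prices, the DCA average cost is the harmonic mean of the purchase prices and never exceeds their simple average. It reduces sensitivity to any single entry point but does not protect against a sustained decline.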

Why does TRX have a Strong Sell rating despite 80% YoY gains?

TRX receives a Strong Sell rating (19.06) based on current fundamental analysis, technical indicators, and valuation metrics—despite impressive historical performance (80% YoY, 963% since last signal flip). Ratings reflect forward-looking risk assessment including regulatory concerns, centralization issues, reputational factors, and competitive threats. Our price prediction framework shows potential upside in favorable scenarios, but the Strong Sell rating suggests significant risks that could prevent achieving bullish targets. Past performance doesn't guarantee future results. Not financial advice.

How does TRX benefit from stablecoin transfers?

Tron has become the dominant network for USDT (Tether) transfers due to extremely low fees and fast settlement. This creates organic demand for TRX as users need the native token for transaction fees. Our price prediction models assume continued growth in stablecoin transfer volume, which supports base case scenarios. The 23T and 31T price predictions of $1.02-$1.31 factor in sustained stablecoin dominance. However, competition from other low-cost chains and regulatory scrutiny on Tether operations represent risks. Not financial advice.

Can TRX reach $2?

TRX reaching $2 would exceed our current price prediction models' highest scenario of $1.31 (31T moon case). From the current price of $0.2971, $2 represents gains of approximately 570%. This would require extraordinary market conditions beyond the 31T total crypto market cap tier, exceptional acceleration in stablecoin adoption, and overcoming the significant fundamental concerns reflected in the Strong Sell rating (19.06). While possible in extreme bull scenarios, $2 lies beyond the range our price prediction framework covers. Not financial advice.

What are the biggest risks to TRX price predictions?

Key risks that could derail Tron price predictions include: the Strong Sell rating (19.06) indicating severe fundamental concerns, regulatory actions targeting the Tron network or founder Justin Sun, centralization concerns in validator economics, regulatory scrutiny of Tether/USDT operations affecting transfer volumes, competitive displacement by Solana or BNB Chain for stablecoin transfers, reputational issues, macro liquidity shocks ending the 2026 bull thesis, and potential security vulnerabilities. Despite strong utility, these risks warrant careful position sizing. Not financial advice.

How does Tron compare to other payment blockchain price predictions?

Tron's price prediction framework suggests $0.30-$1.31 across scenarios, positioning it as a specialized payments and stablecoin transfer network. Compared to Ethereum's dominance and Solana's speed, Tron differentiates through extremely low fees and USDT transfer volume leadership. However, the Strong Sell rating (19.06) reflects concerns that this niche may not sustain long-term value versus more decentralized, diversified platforms. Tron trades at rank #10, indicating established but controversial market position. Not financial advice.

Will TRX benefit from the 2026 bull market?

Yes. Our price prediction scenarios are specifically framed around the bullish 2026 thesis, where improving liquidity, institutional participation, and stablecoin adoption create favorable conditions. TRX's low-fee infrastructure and USDT dominance position it to capture bull market momentum in payments use cases. However, the Strong Sell rating (19.06) and bearish momentum suggest TRX may underperform versus fundamentally stronger tokens. Muted base case projections reflect this tension between macro tailwinds and micro concerns. Not financial advice.

Is the $1 target realistic for TRX?

The $1 target appears achievable in our price prediction framework's 23T moon case ($1.02) and 31T moon case ($1.31). From the current price of $0.2971, this represents gains of approximately 240% and requires sustained stablecoin adoption, bullish macro conditions materializing, and overcoming the concerns behind the Strong Sell rating. Historical volatility (963% returns since last signal flip) demonstrates TRX's ability to deliver explosive gains, but fundamental weaknesses create significant uncertainty. The target is possible but high-risk, so manage expectations accordingly. Not financial advice.

What needs to happen for TRX to reach price prediction targets?

For TRX to reach our price prediction targets of $1.02-$1.31, several catalysts must materialize: (1) continued dominance in USDT stablecoin transfers, (2) bullish 2026 macro conditions with improving liquidity, (3) resolution of regulatory concerns around Tron and Justin Sun, (4) an improvement in TM Grade from Strong Sell (19.06) territory, (5) institutional adoption of Tron for cross-border payments, (6) successful competition against Solana and BNB Chain, and (7) a maintained low-fee advantage as scaling solutions proliferate. The Strong Sell rating suggests these catalysts aren't currently materializing at the required pace.

Next Steps

Track live grades and signals: Token Details → https://app.tokenmetrics.com/en/tron

Join Indices Early Access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview

Want exposure? Buy TRX on MEXC → https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

Why Use Token Metrics for Tron Price Predictions?

- Transparent analysis: Honest Strong Sell ratings (19.06) even when historical returns are exceptional (963%)

- Scenario-based modeling: Four market cap tiers for comprehensive price prediction analysis

- Stablecoin specialization: Understanding Tron's unique position in USDT transfer ecosystem

- Risk-adjusted approach: Balanced view of utility strengths versus fundamental concerns

- Real-time signals: Trading signals and TM Grades are updated regularly and reflect moves such as TRX's roughly 11% decline over the past 30 days

- Diversification tools: Index solutions to spread payment blockchain risk

- Comparative analysis: Analyze TRX against BNB, SOL, and 6,000+ tokens
