What is a Blockchain Node and What Does It Do? A Complete Guide for 2025

The blockchain revolution has fundamentally transformed the way we handle digital transactions, data storage, and decentralized systems. At the core of every blockchain network lies a crucial component that many users overlook but absolutely depend on: blockchain nodes. Their primary function is to maintain the blockchain's public ledger and keep the network in consensus, which is what preserves the system's decentralization and integrity. Understanding what a blockchain node is and what it does is essential for anyone involved in cryptocurrency trading or blockchain development, and for anyone curious about how nodes validate transactions, store data, and keep a decentralized network running.

Understanding Blockchain Nodes: The Network's Backbone

A blockchain node is a computer or device that actively participates in a blockchain network by maintaining a copy of the distributed ledger and helping validate new transactions. Each node is an independent participant in a shared, decentralized database that no single entity controls, which makes the network resilient and censorship-resistant. A network that relied on a single node would fail or be compromised along with that node; many independent nodes provide greater decentralization, stability, and security.

When you send cryptocurrency from one wallet to another, the transaction isn't processed by a bank or any other central entity. Instead, it is broadcast to blockchain nodes around the world, which independently verify that it is legitimate: the signature is valid, the sender has sufficient funds, and the same coins are not being spent twice (double-spending). Nodes that accept the transaction relay it to their peers until it has propagated across the entire network, and this repeated, independent checking is what protects the integrity of the ledger.

Because full nodes each keep a copy of the entire blockchain history, the network gains remarkable durability. Every node runs protocol software (for Bitcoin, a client such as Bitcoin Core) to participate in the network and communicate with its peers. Unlike centralized systems with a single point of failure, a blockchain network keeps functioning even if many nodes go offline. This redundancy is a large part of what makes networks such as Bitcoin, which relies on thousands of independent nodes and miners, so robust and secure.

The Blockchain Network: How Nodes Connect and Communicate

A blockchain network is a decentralized network made up of countless blockchain nodes that work in harmony to validate, record, and secure blockchain transactions. Unlike traditional systems that rely on a central authority, a blockchain network distributes responsibility across all participating nodes, creating a robust and resilient infrastructure.

Every full node maintains its own copy of the blockchain ledger, so participants can check the same history independently instead of trusting a central record. New transactions are broadcast across the network, typically within seconds, and wait in each node's pool of unconfirmed transactions (the mempool) until a block includes them; when a new block arrives, each node validates it and appends it to its copy of the ledger. This works because of a peer-to-peer architecture in which every node can both send and receive data, so there is no single point of failure.
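
The relay behaviour described above can be illustrated with a toy flooding model. This is a minimal sketch, assuming a hypothetical five-node topology in which each node forwards a new transaction once to every peer that has not yet seen it. Real clients announce transactions with inventory messages, add random delays, and limit their peer counts, none of which is modelled here.

```python
from collections import deque


def gossip(peers: dict[str, list[str]], origin: str) -> list[str]:
    """Flood a message from `origin`; each node relays it once to its peers.

    Returns the nodes in the order they first received the message.
    """
    seen = {origin}
    order = [origin]
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for peer in peers.get(node, []):
            if peer not in seen:  # relay only to peers that haven't seen it yet
                seen.add(peer)
                order.append(peer)
                queue.append(peer)
    return order


# Hypothetical peer-to-peer topology: no node is connected to everyone.
network = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C", "E"],
    "E": ["D"],
}
print(gossip(network, "A"))  # ['A', 'B', 'C', 'D', 'E']
```

Even though node E is only connected to D, the transaction still reaches it, which is why a well-connected mesh does not need a central server to distribute data.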

Within this decentralized network, nodes store and verify blockchain data according to their roles. Full nodes store the entire blockchain ledger and independently validate every transaction and block. Light nodes (or SPV nodes, for Simplified Payment Verification) store only the data needed to check that a transaction was included in a block, which makes them suitable for devices with limited resources. Mining nodes validate transactions and add new blocks to the blockchain by solving a computational puzzle: repeatedly hashing candidate blocks until one meets the network's difficulty target. Authority nodes, used in permissioned or proof-of-authority networks, are pre-approved participants that authenticate transactions and ensure the network operates according to its established rules.

Archival nodes go a step further by retaining the complete history, including historical data that ordinary full nodes may discard, which is essential for services that need to query any past transaction or state. Staking nodes participate in proof-of-stake networks, where they validate transactions and propose new blocks in proportion to the amount of cryptocurrency they lock up, or “stake”, as collateral. Super nodes and master nodes perform specialized tasks such as implementing protocol changes, maintaining network stability, and, on some networks, enabling features like instant transactions or privacy enhancements.

The seamless operation of a blockchain network relies on a consensus mechanism: a set of rules that all nodes follow to agree on the validity and order of new transactions and blocks. Because every node checks new blocks against these rules independently, no single node can rewrite the ledger, and the mechanism also determines who may add the next block. For example, the Bitcoin blockchain uses a proof-of-work consensus mechanism, while other networks use proof-of-stake or other protocols.
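
To make proof-of-work concrete, here is a minimal sketch of the nonce search. It assumes a simplified block made of a text string and a single SHA-256 hash; Bitcoin actually double-hashes an 80-byte binary header and encodes its target differently, so treat `mine`, `verify`, and `difficulty_bits` as illustrative names only.

```python
import hashlib


def _hash_int(block_data: str, nonce: int) -> tuple[int, str]:
    """Hash the block data plus nonce; return the hash as an integer and as hex."""
    digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
    return int(digest, 16), digest


def mine(block_data: str, difficulty_bits: int, max_nonce: int = 10_000_000):
    """Try nonces until the hash falls below the target (more bits = harder)."""
    target = 2 ** (256 - difficulty_bits)
    for nonce in range(max_nonce):
        value, digest = _hash_int(block_data, nonce)
        if value < target:
            return nonce, digest
    return None  # gave up without finding a valid nonce


def verify(block_data: str, nonce: int, difficulty_bits: int) -> bool:
    """Checking a solution takes one hash, however long finding it took."""
    value, _ = _hash_int(block_data, nonce)
    return value < 2 ** (256 - difficulty_bits)


result = mine("block #1: alice->bob:10", difficulty_bits=16)
print(result)
```

The asymmetry is the point of the design: a miner needs on average about 2^16 attempts at this difficulty, while every other node can verify the result with a single hash.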

Innovations such as Lightning Network nodes move transactions off-chain, reducing the load on the main blockchain and allowing faster, more scalable payments. As the blockchain ecosystem evolves, new types of nodes and consensus mechanisms continue to emerge, each contributing to the network's security, efficiency, and decentralized nature.

In essence, blockchain nodes are the backbone of any blockchain network. By working together to validate and record transactions, these nodes ensure the integrity and reliability of the entire system. Understanding how different types of blockchain nodes connect and communicate provides valuable insight into the complexity and power of decentralized networks, and highlights why blockchain technology is revolutionizing the way we think about data, trust, and digital value.

Types of Blockchain Nodes: Different Roles, Different Functions

Not all blockchain nodes perform the same functions. There are several variations, each playing a distinct role in maintaining the blockchain ecosystem and keeping the network running smoothly. They include super nodes, the rarest type, which are created on demand for specialized tasks, as well as master nodes and the other types described below.

Full nodes are the most comprehensive type of node. They download and store the entire blockchain ledger, every transaction and block since the very first (genesis) block, and independently verify each one against the network's consensus rules, so that only valid data is added to their copy of the chain. These nodes form the backbone of the network's security because they reject invalid or malicious transactions and blocks, no matter who submits them.
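
One part of a full node's job, checking that each block correctly references the one before it, can be sketched as below. This assumes hypothetical blocks stored as Python dicts and hashed as canonical JSON; real nodes hash binary headers and also check proof-of-work, signatures, and every transaction, which this sketch leaves out.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(block: dict) -> str:
    # Simplified: hash a canonical JSON encoding instead of a binary header
    return hashlib.sha256(json.dumps(block, sort_keys=True).encode()).hexdigest()


def make_chain(tx_batches: list[list[str]]) -> list[dict]:
    """Build a toy chain in which every block commits to its predecessor's hash."""
    chain, prev = [], GENESIS_PREV
    for height, txs in enumerate(tx_batches):
        block = {"height": height, "prev_hash": prev, "txs": txs}
        chain.append(block)
        prev = block_hash(block)
    return chain


def validate_chain(chain: list[dict]) -> bool:
    """Recompute each hash and confirm the next block points at it."""
    prev = GENESIS_PREV
    for block in chain:
        if block["prev_hash"] != prev:
            return False
        prev = block_hash(block)
    return True


chain = make_chain([["coinbase->alice:50"], ["alice->bob:10"], ["bob->carol:5"]])
print(validate_chain(chain))  # True
chain[1]["txs"][0] = "alice->bob:1000"  # tamper with recorded history
print(validate_chain(chain))  # False
```

Editing block 1 changes its hash, so block 2's stored `prev_hash` no longer matches and any node re-running the check rejects the altered history.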

In contrast, light nodes (or SPV nodes) download only essential data, such as block headers, rather than the full blockchain. They need far less storage, bandwidth, and processing power, which makes them ideal for mobile devices and lightweight wallets. Light nodes sacrifice some independence, because they rely on full nodes to supply the transactions and proofs they check, but they still broaden participation in the network by confirming that a transaction was included in a block without storing the entire blockchain history.
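
The "proofs" a light node checks are usually Merkle proofs. The sketch below uses Bitcoin-style double SHA-256 and duplicates the last hash on odd-sized levels, but for simplicity it hashes raw transaction bytes as leaves (Bitcoin uses transaction IDs directly), so treat it as an illustration rather than a consensus-compatible implementation. The function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    # Double SHA-256, as Bitcoin uses for Merkle tree nodes
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    level = [h(x) for x in leaves]
    while len(level) > 1:
        if len(level) % 2:  # odd count: duplicate the last hash
            level.append(level[-1])
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag says if the sibling is on the right."""
    level = [h(x) for x in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_proof(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """What a light node runs: only the leaf, the proof, and the header's root."""
    acc = h(leaf)
    for sibling, sibling_on_right in proof:
        acc = h(acc + sibling) if sibling_on_right else h(sibling + acc)
    return acc == root


txs = [b"tx-a", b"tx-b", b"tx-c"]
root = merkle_root(txs)        # the light node reads this from a block header
proof = merkle_proof(txs, 2)   # a full node supplies this on request
print(verify_proof(b"tx-c", proof, root))  # True
print(verify_proof(b"tx-x", proof, root))  # False
```

The proof grows with the logarithm of the number of transactions in the block, which is why a phone can check inclusion without downloading the block itself.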

Mining nodes (also called miner nodes) combine the functions of full nodes with the additional task of creating new blocks. They compete to find a block whose hash falls below the network's difficulty target, which takes an enormous number of guesses; the first miner to succeed adds the next block to the main chain and earns the block reward plus transaction fees. In proof-of-stake networks, a staking node or validator node performs a similar function, securing the network with its staked funds instead of computational power. Validators are selected to propose and attest to blocks, and they must meet protocol requirements to qualify, such as a minimum stake (32 ETH per validator on Ethereum).
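
The idea that validators are chosen in proportion to their stake can be sketched with weighted random selection. This is a simplified stand-in, assuming hypothetical validators and using Python's seeded `random.Random` in place of the on-chain randomness that real protocols use (Ethereum, for example, derives it from RANDAO); it is not any network's actual selection algorithm.

```python
import random


def pick_proposer(stakes: dict[str, float], seed: int) -> str:
    """Choose a block proposer with probability proportional to stake."""
    rng = random.Random(seed)  # stand-in for protocol-level randomness
    validators = sorted(stakes)  # fixed order: same seed always gives same result
    weights = [stakes[v] for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


# Hypothetical validators and stake sizes
stakes = {"alice": 32, "bob": 64, "carol": 128}
counts = {v: 0 for v in stakes}
for slot in range(10_000):
    counts[pick_proposer(stakes, seed=slot)] += 1
print(counts)  # carol is picked roughly four times as often as alice
```

Making the selection reproducible from a shared seed matters: every node must be able to compute the same proposer for a slot and reject blocks from anyone else.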

Another specialized type is the archival full node, which retains the blockchain's complete transaction history along with historical data that other full nodes may prune. Because they keep everything, archival nodes are vital for blockchain explorers and analytics platforms that must answer queries about any past transaction, and they preserve a complete, independently verifiable record of the chain.

Other variations include pruned full nodes, which validate the entire chain but keep only the most recent blocks on disk, deleting the oldest block data once a configured storage limit is reached. Because a pruned node retains the current ledger state (in Bitcoin, the set of unspent transaction outputs), it can still verify new transactions and blocks while using a fraction of the storage of an archival node.
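
The pruning policy can be sketched as a block store with a byte budget that evicts the oldest blocks first. `PrunedBlockStore` is a hypothetical class written for illustration; it does not reflect how any client lays out its files. In Bitcoin Core the equivalent setting is the `prune` option, a target size in MiB with a minimum of 550.

```python
from collections import OrderedDict


class PrunedBlockStore:
    """Keep raw block data within a byte budget, dropping the oldest blocks first."""

    def __init__(self, max_bytes: int):
        self.max_bytes = max_bytes
        self.blocks = OrderedDict()  # height -> raw block bytes, oldest first
        self.used = 0
        self.tip_height = -1

    def add_block(self, height: int, raw: bytes) -> None:
        # A real node fully validates the block before storing it (omitted here)
        self.blocks[height] = raw
        self.used += len(raw)
        self.tip_height = height
        # Evict the oldest blocks until back under budget, always keeping the tip
        while self.used > self.max_bytes and len(self.blocks) > 1:
            _, oldest = self.blocks.popitem(last=False)
            self.used -= len(oldest)

    def has_block(self, height: int) -> bool:
        return height in self.blocks


store = PrunedBlockStore(max_bytes=3_000)
for height in range(10):
    store.add_block(height, b"x" * 1_000)  # hypothetical 1 KB blocks
print(sorted(store.blocks))  # [7, 8, 9]
print(store.tip_height)      # 9
```

The node has still seen and validated all ten blocks; it simply no longer keeps the first seven, so it cannot serve them to peers that are syncing from scratch.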

Lightning nodes address congestion and slow confirmation times on busy blockchains by enabling near-instant payments. They open payment channels funded by an on-chain transaction, exchange any number of payments off-chain inside those channels, and later settle the final balances back on-chain, which reduces network congestion, lowers transaction fees, and speeds up transactions.
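
Why channels save on-chain space can be shown with a toy two-party channel. This minimal sketch, assuming a hypothetical `PaymentChannel` class with amounts in satoshis, only tracks balances and a state counter; real Lightning channels rely on a 2-of-2 multisig funding output, signed commitment transactions, revocation keys, and HTLCs for routing, none of which appear here.

```python
class PaymentChannel:
    """Toy channel: only opening and closing would touch the blockchain."""

    def __init__(self, alice_deposit: int, bob_deposit: int):
        self.balances = {"alice": alice_deposit, "bob": bob_deposit}
        self.state_number = 0  # each update supersedes the previous state
        self.open = True

    def pay(self, sender: str, receiver: str, amount: int) -> None:
        """Off-chain update: no block space is used."""
        if not self.open:
            raise RuntimeError("channel is closed")
        if amount <= 0 or self.balances[sender] < amount:
            raise ValueError("invalid amount or insufficient channel balance")
        self.balances[sender] -= amount
        self.balances[receiver] += amount
        self.state_number += 1  # in Lightning both parties would sign this state

    def close(self) -> dict:
        """Return the final balances that one on-chain transaction would settle."""
        self.open = False
        return dict(self.balances)


ch = PaymentChannel(alice_deposit=100_000, bob_deposit=0)
for _ in range(50):
    ch.pay("alice", "bob", 1_000)
print(ch.state_number)  # 50
print(ch.close())       # {'alice': 50000, 'bob': 50000}
```

Fifty payments collapse into two on-chain transactions (open and close), which is where the fee and congestion savings come from.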

In proof-of-authority networks, authority nodes (also known as approved nodes) are selected through a vetting process to ensure trustworthiness and accountability. More generally, spreading nodes (sometimes called blockchain hosts) across many independent operators and locations strengthens a network's robustness, security, and decentralization by distributing authority and making infiltration harder. Many blockchain networks exist, each with different features, governance models, and privacy options, ranging from transparent to pseudonymous transactions and supporting different forms of community participation.
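
A proof-of-authority rule can be as simple as "only vetted signers may seal blocks, in turn". The sketch below assumes a hypothetical fixed list of authorities taking strict round-robin turns and omits signature verification; real PoA engines are more flexible, for example letting another authority seal a block when the scheduled one is offline.

```python
def expected_signer(authorities: list[str], block_number: int) -> str:
    """Simplified round-robin: vetted authorities take turns in a fixed order."""
    return authorities[block_number % len(authorities)]


def accept_block(authorities: list[str], block_number: int, signer: str) -> bool:
    """Accept a block only if it was sealed by the authority whose turn it is.

    A real node would also verify the signer's cryptographic signature.
    """
    return signer == expected_signer(authorities, block_number)


# Hypothetical vetted authority set
authorities = ["validator-1", "validator-2", "validator-3"]
print(accept_block(authorities, 4, "validator-2"))  # True (4 % 3 == 1)
print(accept_block(authorities, 4, "validator-1"))  # False: not its turn
print(accept_block(authorities, 4, "outsider"))     # False: not an authority
```

Because the authority set is known in advance, blocks can be produced without mining, at the cost of trusting the vetting process that chose the authorities.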

How Blockchain Nodes Maintain Network Security

The security of a blockchain network emerges from the collective efforts of thousands of independent nodes operating without a central authority. When a new transaction is broadcast, blockchain nodes immediately verify it by checking its digital signatures, confirming that the funds it spends exist and have not already been spent, and ensuring it follows the blockchain protocol. Each node performs these checks for itself rather than trusting its peers.
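
The balance and double-spend checks above can be sketched for a Bitcoin-style UTXO (unspent transaction output) model. This assumes hypothetical data structures and replaces real signature verification, which would need an elliptic-curve library, with a simple owner comparison that is clearly not secure on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TxOut:
    owner: str
    amount: int  # in satoshis


def validate_tx(utxos: dict, inputs: list, outputs: list, signer: str) -> bool:
    """utxos maps (txid, index) -> TxOut; inputs are the (txid, index) refs spent."""
    if not inputs or not outputs:
        return False
    if len(set(inputs)) != len(inputs):
        return False  # the same output listed twice in one transaction
    total_in = 0
    for ref in inputs:
        out = utxos.get(ref)
        if out is None:
            return False  # unknown or already-spent output: a double-spend attempt
        if out.owner != signer:
            return False  # placeholder for a real digital-signature check
        total_in += out.amount
    if any(o.amount <= 0 for o in outputs):
        return False
    return sum(o.amount for o in outputs) <= total_in  # any difference is the fee


utxos = {("tx1", 0): TxOut("alice", 50_000)}
pay_bob = [TxOut("bob", 30_000), TxOut("alice", 19_000)]  # 1,000 sat fee
print(validate_tx(utxos, [("tx1", 0)], pay_bob, signer="alice"))                 # True
print(validate_tx(utxos, [("tx1", 0)], [TxOut("bob", 60_000)], signer="alice"))  # False
print(validate_tx(utxos, [("tx1", 0), ("tx1", 0)], pay_bob, signer="alice"))     # False
```

Once a transaction is confirmed, a node removes its inputs from the UTXO set, so any later transaction trying to spend them fails the lookup and is rejected.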

This multi-layered verification process strengthens the network's security. Cryptographic signatures ensure that only the rightful owners can spend their cryptocurrency. Cryptographic hashing links each block to the one before it, so altering a past transaction would invalidate every later block and be rejected by honest nodes. The consensus mechanism decides which valid chain the network follows: in proof-of-work, nodes follow the chain with the most accumulated work, so rewriting history would require controlling a majority of the network's total hash power (or, in proof-of-stake, a majority of the staked funds), not merely a majority of nodes. On a large, globally distributed network, acquiring that much hash power or stake is extraordinarily expensive.
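
How quickly a minority attacker's chances shrink can be computed with the formula Satoshi Nakamoto gives in the Bitcoin whitepaper: the probability that an attacker controlling a fraction `q` of the hash power ever overtakes the honest chain after the merchant waits for `z` confirmations. The Python below is a direct translation of that calculation; the parameter values in the loop are only examples.

```python
import math


def attacker_success(q: float, z: int) -> float:
    """Probability an attacker with hash-power share q catches up from z blocks behind."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # with a majority of hash power the attacker eventually wins
    lam = z * (q / p)  # expected attacker progress while z honest blocks are mined
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total


for z in range(6):
    print(z, round(attacker_success(0.1, z), 7))  # z=1 gives about 0.2045873
```

With 10% of the hash power, the attacker's odds fall below one in a thousand after five confirmations, which is why merchants wait for several blocks before treating a payment as final.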

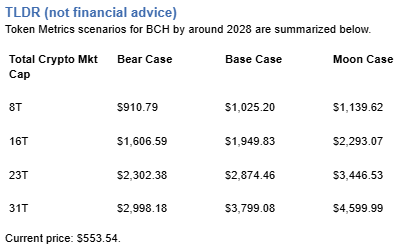

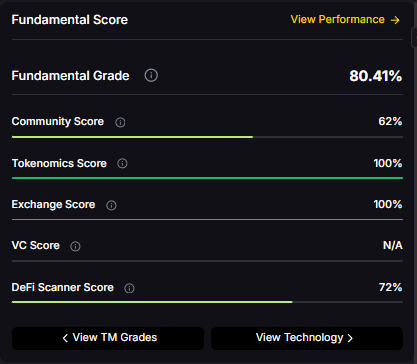

For investors and traders, understanding the distribution and health of blockchain nodes offers valuable insights into the long-term viability and security of a blockchain network. Platforms like Token Metrics incorporate node metrics into their analysis, helping users evaluate the fundamental strength of blockchain networks beyond just price trends.

The Economics of Running Blockchain Nodes

Running a blockchain node involves costs and incentives that help maintain network security and decentralization. Although full nodes generally receive no direct financial reward, they give operators important benefits: better privacy, since their wallets need not reveal addresses to a third-party server; the ability to verify payments independently; and a say in network governance, because each node accepts only blocks that follow the rules it runs. A larger, more widely distributed node population also makes the network more resilient, although adding nodes does not by itself increase how many transactions the network can process.

On the other hand, mining nodes and staking nodes receive block rewards and transaction fees as compensation for their work securing the blockchain. However, operating these nodes requires significant investment in hardware, electricity, and maintenance. Profitability depends on factors like cryptocurrency prices, network difficulty, and energy costs, making mining a dynamic and competitive economic activity.
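
The trade-off between rewards and running costs reduces to simple arithmetic on expected values. The sketch below assumes a Bitcoin-like network producing about 144 blocks per day (one every ten minutes). Every input in the example call is a hypothetical placeholder, not current market data, and real payouts vary around this expectation because block discovery is random.

```python
def daily_mining_profit(miner_hashrate: float, network_hashrate: float,
                        block_reward_btc: float, btc_price_usd: float,
                        power_kw: float, usd_per_kwh: float,
                        blocks_per_day: int = 144) -> float:
    """Expected daily profit in USD: share of block rewards minus electricity."""
    share = miner_hashrate / network_hashrate  # fraction of total hash power
    revenue = share * blocks_per_day * block_reward_btc * btc_price_usd
    cost = power_kw * 24 * usd_per_kwh  # electricity for one day
    return revenue - cost


# Hypothetical inputs: a 100 TH/s miner drawing 3 kW, a 500 EH/s network,
# 3.125 BTC per block (fees ignored), $60,000 per BTC, $0.10 per kWh.
print(round(daily_mining_profit(100e12, 500e18, 3.125, 60_000, 3.0, 0.10), 2))  # -1.8
```

Under these assumed numbers the machine earns about $5.40 a day but spends $7.20 on power, showing how a rise in network hash power (difficulty) or electricity prices can turn mining unprofitable even at a constant coin price.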

Many node operators run full nodes for ideological reasons, supporting the network's decentralization without expecting monetary gain. This voluntary participation strengthens the blockchain ecosystem and reflects the community's commitment to a peer-to-peer network free from any central entity.

Choosing and Setting Up Your Own Node

Setting up a blockchain node has become more accessible thanks to improved software and detailed guides from many blockchain projects, though requirements vary widely. Running a Bitcoin full node, for example, requires several hundred gigabytes of storage to hold the entire blockchain ledger. Keeping that full transaction history is what lets a node verify the integrity of the network for itself, and because the history is public, anyone running a node can audit every past transaction.
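
Once a node is running, you can check its sync status programmatically. This sketch assumes a local Bitcoin Core node with its JSON-RPC server enabled on the default mainnet port 8332; the username and password are placeholders for whatever you configured (cookie-file authentication is a common alternative). It uses the real `getblockchaininfo` RPC and reads only a few of the fields that call returns.

```python
import base64
import json
import urllib.request


def build_rpc_request(method: str, params=None, url: str = "http://127.0.0.1:8332/",
                      user: str = "rpcuser", password: str = "rpcpassword"):
    """Build a JSON-RPC POST request for Bitcoin Core (credentials are placeholders)."""
    body = json.dumps({"jsonrpc": "1.0", "id": "guide",
                       "method": method, "params": params or []}).encode()
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url, data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
    )


def call_rpc(method: str, params=None, **kwargs):
    """Send the request and return the `result` field of the JSON reply."""
    with urllib.request.urlopen(build_rpc_request(method, params, **kwargs),
                                timeout=10) as resp:
        reply = json.load(resp)
    if reply.get("error"):
        raise RuntimeError(reply["error"])
    return reply["result"]


def print_node_status(**kwargs) -> None:
    info = call_rpc("getblockchaininfo", **kwargs)
    print("block height:", info["blocks"])
    print("sync progress:", info["verificationprogress"])
    print("pruned:", info["pruned"], "| bytes on disk:", info["size_on_disk"])
```

Calling `print_node_status(user=..., password=...)` against a running node shows how far it has synced and how much disk it uses; a `verificationprogress` close to 1 means it has caught up with the chain tip.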

For beginners, a light node or lightweight wallet offers an easy way to engage with blockchain technology without the technical complexity or storage demands of full nodes. A light node stores only block headers and relies on full nodes for transaction validation, making it suitable for devices with limited resources. As users become more experienced, they may choose to run full nodes to enhance security, privacy, and autonomy.

Cloud-based node services provide an alternative for those who want full node access without investing in hardware. While convenient, these services introduce a level of trust in third parties, which partially contradicts the trustless principles of blockchain technology.

The Future of Blockchain Nodes

Blockchain node architecture is evolving rapidly to meet the demands of scalability, security, and usability. Layer-2 scaling solutions are introducing new node types that process transactions off the main blockchain, reducing congestion while retaining security guarantees. Cross-chain protocols require specialized bridge nodes to facilitate communication between different blockchain networks.

The potential for mobile and IoT devices to operate nodes could dramatically enhance decentralization, though challenges like limited storage, bandwidth, and battery life remain significant hurdles. Innovations in consensus mechanisms and data structures aim to make node operation more efficient and accessible without compromising security.

For traders and investors, staying informed about these developments is crucial. Platforms like Token Metrics offer insights into how advancements in node technology influence network fundamentals and investment opportunities within the expanding blockchain ecosystem.

Understanding what a blockchain node is and what it does lays the foundation for anyone serious about blockchain technology and cryptocurrency. These often-invisible components form the underlying infrastructure of decentralized networks, enabling the secure, trustless, and censorship-resistant financial systems that are reshaping the future of digital interactions.
