What is Self-Sovereign Identity in Web3? The Complete Guide to Digital Freedom in 2025

In today’s digital world, our identities define how we interact online, from accessing services to proving who we are. However, traditional identity management systems place control of your personal information in the hands of centralized authorities such as governments, corporations, or social media platforms. This centralized control exposes users to risks like data breaches, identity theft, and loss of privacy. Enter Self-Sovereign Identity (SSI), a digital identity model aligned with the core principles of Web3: decentralization, user empowerment, and true digital ownership. Understanding what self-sovereign identity means in Web3 is essential in 2025 for anyone who wants to take control of their digital identity and navigate the decentralized future safely and securely.

Understanding Self-Sovereign Identity: The Foundation of Digital Freedom

At its core, self-sovereign identity is a digital identity model that enables individuals to own, manage, and control their identity data without relying on any central authority. In traditional identity systems, identity data is stored and controlled by centralized servers or platforms, such as social media companies or government databases. SSI instead makes users the custodians of their own digital identity.

The self-sovereign identity model allows users to securely store their identity information, including credentials such as a driver’s license or proof of a bank account, in a personal digital wallet app. This wallet acts as a self-sovereign identity wallet, enabling users to selectively share parts of their identity information with others through verifiable credentials. These credentials are cryptographically signed by trusted issuers, making them tamper-evident and verifiable by any party that has the issuer’s public key, without needing to contact the issuer directly.

This approach gives users control over their identity information: they decide what data to share, with whom, and for how long. Because users manage their digital identities independently, SSI removes the need for a central identity provider to hold that data, which reduces the risk of large-scale data breaches and unauthorized access to sensitive information.

The Web3 Context: Why SSI Matters Now

The emergence of Web3—a decentralized internet powered by blockchain and peer-to-peer networks—has brought new challenges and opportunities for digital identity management. Traditional login methods relying on centralized platforms like Google or Facebook often result in users surrendering control over their personal data, which is stored on centralized servers vulnerable to hacks and misuse.

In contrast, Web3 promotes decentralized identity, where users own and control their digital credentials without intermediaries. Self-sovereign identity matters in Web3 because it is the key to realizing this vision of a user-centric, privacy-respecting digital identity model.

In 2025, businesses and developers building for the Web3 ecosystem are increasingly encouraged to adopt self-sovereign identity systems. These systems leverage blockchain technology and decentralized networks to create a secure, transparent, and user-controlled identity infrastructure that is fundamentally different from traditional centralized identity management.

The Three Pillars of Self-Sovereign Identity

SSI’s robust framework is built on three essential components that work together to create a secure and decentralized identity ecosystem:

1. Blockchain Technology

Blockchain serves as a distributed ledger that records information across a peer-to-peer network without relying on a central database or centralized servers. This decentralized nature makes blockchain a natural backbone for SSI: records are hard to alter once written, and anyone can audit them.

By storing only digital identifiers, public keys, and cryptographic proofs on a blockchain, while personal data stays off-chain in the user’s wallet, SSI systems can verify identity data without exposing the data itself or compromising user privacy. This avoids the single point of failure behind many of the data breaches seen in traditional identity systems.
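The idea of recording a proof on the ledger rather than the data itself can be sketched in a few lines. This is a minimal illustration, not a description of any particular SSI network: the `ledger` dictionary is an in-memory stand-in for a blockchain, and the `anchor` and `matches_anchor` helpers and the `did:example:` identifier are hypothetical names chosen for the example.

```python
import hashlib
import json

# In-memory stand-in for an append-only ledger (an assumption for this sketch).
ledger: dict[str, str] = {}


def digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys, compact separators)."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def anchor(identifier: str, record: dict) -> None:
    """Publish only the digest; the record itself stays with the user."""
    ledger[identifier] = digest(record)


def matches_anchor(identifier: str, record: dict) -> bool:
    """Check a record presented off-chain against its digest on the ledger."""
    return ledger.get(identifier) == digest(record)


record = {"name": "Alice", "license_class": "B"}
anchor("did:example:alice#license", record)

print(matches_anchor("did:example:alice#license", record))    # True

tampered = {**record, "license_class": "C"}
print(matches_anchor("did:example:alice#license", tampered))  # False
```

A plain hash of low-entropy personal data can be guessed by brute force, so a real design would more likely anchor public keys or salted commitments than a direct hash of personal attributes.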

2. Decentralized Identifiers (DIDs)

A Decentralized Identifier (DID) is a new kind of globally unique digital identifier that users fully control. Unlike traditional identifiers such as usernames or email addresses, which depend on centralized authorities, DIDs do not require a central registry. Many DID methods register identifiers on decentralized networks like blockchains, while others (for example, did:key and did:web) need no ledger at all.

DIDs give users control over their identity by letting them create and manage identifiers without relying on a central authority. This means users can establish secure connections and authenticate themselves directly, enhancing data privacy and reducing reliance on centralized identity providers.
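To make the shape of a DID concrete, here is a small sketch that parses the `did:<method>:<identifier>` syntax and builds a minimal DID document. It assumes the placeholder `did:example:` method that the W3C specification reserves for examples; the regular expression is a simplification of the official grammar (percent-encoding is omitted), and `parse_did`, `new_did_document`, and the `zPLACEHOLDER` key value are hypothetical.

```python
import re
import secrets

# Simplified pattern for did:<method>:<method-specific-id>.
# The full W3C grammar also allows percent-encoded characters.
DID_PATTERN = re.compile(
    r"^did:([a-z0-9]+):((?:[A-Za-z0-9._-]*:)*[A-Za-z0-9._-]+)$"
)


def parse_did(did: str) -> tuple[str, str]:
    """Split a DID into (method, method-specific identifier)."""
    match = DID_PATTERN.match(did)
    if match is None:
        raise ValueError(f"not a valid DID: {did!r}")
    return match.group(1), match.group(2)


def new_did_document(public_key_multibase: str) -> dict:
    """Build a minimal DID document for a freshly generated identifier."""
    did = f"did:example:{secrets.token_hex(16)}"
    key_id = f"{did}#key-1"
    return {
        "@context": "https://www.w3.org/ns/did/v1",
        "id": did,
        "verificationMethod": [
            {
                "id": key_id,
                "type": "Ed25519VerificationKey2020",
                "controller": did,
                "publicKeyMultibase": public_key_multibase,
            }
        ],
        # Keys listed here may be used to authenticate as this DID.
        "authentication": [key_id],
    }


document = new_did_document("zPLACEHOLDER")  # placeholder, not a real key
print(parse_did(document["id"])[0])  # example
```

The document carries only an identifier and public key material. No personal data appears in it, which is what allows it to be published openly.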

3. Verifiable Credentials (VCs)

Verifiable Credentials are cryptographically secure digital documents that prove certain attributes about an individual, organization, or asset. Issued by trusted parties, these credentials can represent anything from a university diploma to a government-issued driver’s license.

VCs are designed to be tamper-evident and easily verifiable without contacting the issuer: the verifier checks the issuer’s cryptographic signature against the issuer’s public key, which can be looked up through the issuer’s DID (for example, on a blockchain). This ensures enhanced security and trustworthiness in digital identity verification processes, while allowing users to share only the necessary information through selective disclosure.
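The sketch below shows why a verifier needs only the issuer's public key, and no call to the issuer, to detect tampering. Production systems typically use signature schemes such as Ed25519 or ECDSA; to stay runnable with the Python standard library alone, this example swaps in a Lamport one-time signature built from SHA-256. The credential fields, the `did:example:` identifiers, and all function names are assumptions made for illustration.

```python
import hashlib
import json
import secrets


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _message_bits(message: bytes) -> list[int]:
    """The 256 bits of the message's SHA-256 digest."""
    d = _sha256(message)
    return [(d[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def generate_keypair():
    """Lamport one-time keypair: 256 pairs of secrets and their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(_sha256(a), _sha256(b)) for a, b in private]
    return private, public


def sign(private, message: bytes) -> list[bytes]:
    """Reveal one secret per digest bit. A key must sign only one message."""
    return [private[i][bit] for i, bit in enumerate(_message_bits(message))]


def verify(public, message: bytes, signature: list[bytes]) -> bool:
    """Check each revealed secret against the matching public hash."""
    bits = _message_bits(message)
    return len(signature) == 256 and all(
        _sha256(sig) == public[i][bit]
        for i, (bit, sig) in enumerate(zip(bits, signature))
    )


def canonical(credential: dict) -> bytes:
    return json.dumps(credential, sort_keys=True, separators=(",", ":")).encode("utf-8")


# Issuer: sign the credential once and hand it to the holder.
issuer_private, issuer_public = generate_keypair()
credential = {
    "type": ["VerifiableCredential", "UniversityDegreeCredential"],
    "issuer": "did:example:university",
    "credentialSubject": {"id": "did:example:alice", "degree": "BSc"},
}
proof = sign(issuer_private, canonical(credential))

# Verifier: needs only the issuer's public key, not a call to the issuer.
print(verify(issuer_public, canonical(credential), proof))  # True

forged = {**credential, "credentialSubject": {"id": "did:example:alice", "degree": "PhD"}}
print(verify(issuer_public, canonical(forged), proof))      # False
```

In a deployed system the verifier would obtain `issuer_public` by resolving the issuer's DID rather than receiving it directly.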

How SSI Works: The Trust Triangle

The operation of SSI revolves around a trust triangle involving three key participants:

- Holder: The individual who creates their decentralized identifier using a digital wallet and holds their digital credentials.

- Issuer: A trusted entity authorized to issue verifiable credentials to the holder, such as a government, university, or bank.

- Verifier: An organization or service that requests proof of identity or attributes from the holder to validate their claims.

When a verifier requests identity information, the holder uses their self-sovereign identity wallet to decide which credentials to share, so control and privacy stay with the holder. This interaction removes the need for centralized intermediaries and reduces the risk of identity theft.
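The holder's role in the triangle can be sketched as a wallet that releases a credential only when the verifier asked for it and the holder approved it. This is a simplified model under stated assumptions: the `Credential` and `Wallet` classes, the credential type names, and the `did:example:` issuers are hypothetical, and signature checking is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Credential:
    credential_type: str
    issuer: str
    claims: dict


@dataclass
class Wallet:
    credentials: list = field(default_factory=list)

    def present(self, requested: set, approved: set) -> list:
        """Share a credential only if it was both requested and approved."""
        allowed = requested & approved
        return [c for c in self.credentials if c.credential_type in allowed]


wallet = Wallet([
    Credential("DriversLicense", "did:example:dmv", {"license_class": "B"}),
    Credential("UniversityDegree", "did:example:university", {"degree": "BSc"}),
])

# The verifier asks for two credential types; the holder consents to one.
shared = wallet.present(
    requested={"DriversLicense", "UniversityDegree"},
    approved={"UniversityDegree"},
)
print([c.credential_type for c in shared])  # ['UniversityDegree']
```

The verifier never sees the wallet's full contents, only the intersection of what it requested and what the holder agreed to release.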

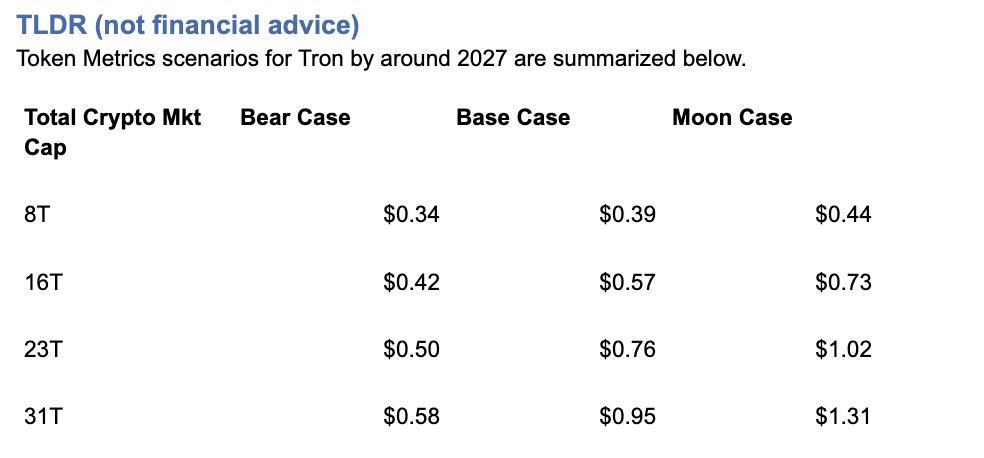

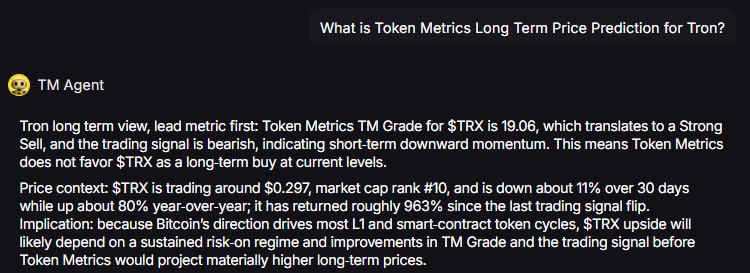

Token Metrics: Leading the Charge in Web3 Analytics and Security

As SSI platforms gain traction, understanding their underlying token economies and security is critical for investors and developers. Token Metrics is a leading analytics platform that provides deep insights into identity-focused projects within the Web3 ecosystem.

By analyzing identity tokens used for governance and utility in SSI systems, Token Metrics helps users evaluate project sustainability, security, and adoption potential. This is crucial given the rapid growth of the digital identity market, projected to reach over $30 billion by 2025.

Token Metrics offers comprehensive evaluations, risk assessments, and performance tracking, empowering stakeholders to make informed decisions in the evolving landscape of blockchain-based self-sovereign identity projects.

Real-World Applications of SSI in 2025

Financial Services and DeFi

SSI streamlines Know Your Customer (KYC) processes by enabling users to reuse verifiable credentials issued by one institution across multiple services. This reduces redundancy and accelerates onboarding, while significantly lowering identity fraud, which currently costs billions annually.

Healthcare and Education

SSI enhances the authenticity and privacy of medical records, educational certificates, and professional licenses. Universities can issue digital diplomas as VCs, simplifying verification and reducing fraud.

Supply Chain and Trade

By assigning DIDs to products and issuing VCs, SSI improves product provenance and combats counterfeiting. Consumers gain verifiable assurance of ethical sourcing and authenticity.

Gaming and NFTs

SSI allows users to prove ownership of NFTs and other digital assets without exposing their entire wallet, adding a layer of privacy and security to digital asset management.

Advanced SSI Features: Privacy and Security

Selective Disclosure

SSI enables users to share only specific attributes of their credentials. For example, a holder can reveal just the license class from a driver’s license while keeping their address and birthdate private, which protects sensitive personal information during verification.
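One common way to share only specific attributes is to have the issuer hash each claim together with a random salt and sign the list of hashes; the holder then reveals the salt and value only for the claims they choose. The sketch below follows that salted-hash idea, similar in spirit to SD-JWT. The function names and sample claims are assumptions, and the issuer's signature over the digest list is omitted for brevity.

```python
import hashlib
import json
import secrets


def claim_digest(name: str, value, salt: str) -> str:
    """Hash one claim together with its salt."""
    payload = json.dumps([salt, name, value], separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def issue(claims: dict):
    """Issuer: salt every claim and return (digests, disclosures).

    The digest list is what the issuer would sign; the disclosures
    (salt and value per claim) are given to the holder only.
    """
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(claim_digest(n, v, s) for n, (s, v) in disclosures.items())
    return digests, disclosures


def present(disclosures: dict, reveal: list) -> dict:
    """Holder: pick which claims to reveal."""
    return {name: disclosures[name] for name in reveal}


def verify(digests: list, presented: dict) -> bool:
    """Verifier: every revealed claim must hash to a signed digest."""
    return all(claim_digest(n, v, s) in digests for n, (s, v) in presented.items())


claims = {"name": "Alice", "license_class": "B", "address": "1 Main St"}
digests, disclosures = issue(claims)

shown = present(disclosures, ["license_class"])
print(list(shown))             # ['license_class']
print(verify(digests, shown))  # True

salt, _ = disclosures["license_class"]
altered = {"license_class": (salt, "C")}
print(verify(digests, altered))  # False
```

Because each digest includes a random salt, the verifier cannot guess the hidden claims from the digest list.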

Zero-Knowledge Proofs

Zero-knowledge proofs (ZKPs) allow users to prove statements about their identity without revealing the underlying data. For instance, a user can prove they are over 18 without sharing their exact birthdate, enhancing privacy and security in digital interactions.
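Full zero-knowledge proof systems are too involved for a short example, so the sketch below uses a much simpler hash-chain technique that serves the same over-18 goal: the verifier learns that the holder's age meets a threshold without learning the age itself. It is not a zero-knowledge proof in the formal sense, and it omits the issuer's signature over the commitment as well as replay protection. All function names are hypothetical.

```python
import hashlib
import secrets


def hash_n(value: bytes, times: int) -> bytes:
    """Apply SHA-256 repeatedly."""
    for _ in range(times):
        value = hashlib.sha256(value).digest()
    return value


def issue_age_commitment(age: int):
    """Issuer: pick a secret seed and hash it `age` times.

    The seed goes to the holder; the commitment is what the issuer would sign.
    """
    seed = secrets.token_bytes(32)
    return seed, hash_n(seed, age)


def prove_at_least(seed: bytes, age: int, threshold: int) -> bytes:
    """Holder: hash the seed (age - threshold) times."""
    if age < threshold:
        raise ValueError("cannot prove a threshold above the actual age")
    return hash_n(seed, age - threshold)


def verify_at_least(proof: bytes, threshold: int, commitment: bytes) -> bool:
    """Verifier: hashing the proof `threshold` more times must hit the commitment."""
    return hash_n(proof, threshold) == commitment


seed, commitment = issue_age_commitment(age=25)

proof = prove_at_least(seed, age=25, threshold=18)
print(verify_at_least(proof, 18, commitment))  # True

# The same proof does not support a higher threshold.
print(verify_at_least(proof, 21, commitment))  # False
```

A holder younger than the threshold would need to run the hash function backwards to forge a proof, which is computationally infeasible for SHA-256.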

Current SSI Implementations and Projects

Several initiatives showcase the practical adoption of SSI:

- ID Union (Germany): A decentralized identity network involving banks and government bodies.

- Sovrin Foundation: A nonprofit that governs the Sovrin Network, an open-source public ledger built for SSI and verifiable credentials.

- European Blockchain Services Infrastructure (EBSI): Supports cross-border digital diplomas and identity.

- Finland’s MyData: Empowers citizens with control over personal data across sectors.

These projects highlight SSI’s potential to transform identity management globally.

Challenges and Considerations

Technical Challenges

Managing private keys is critical; losing a private key can mean losing access to one’s identity. Solutions like multi-signature wallets and biometric authentication are being developed to address this.

Regulatory Landscape

Global regulations, including the General Data Protection Regulation (GDPR) and emerging frameworks like Europe’s eIDAS 2.0, are shaping SSI adoption. Ensuring compliance while maintaining decentralization is a key challenge.

Adoption Barriers

Despite the promise, some critics argue the term "self-sovereign" is misleading because issuers and infrastructure still play roles. Improving user experience and educating the public are essential for widespread adoption.

The Future of SSI in Web3

In 2025 and beyond, self-sovereign identity systems are expected to become vital for secure, private, and user-centric digital interactions. Key trends shaping SSI’s future include:

- Enhanced Interoperability between blockchains and DID methods.

- Improved User Experience through intuitive wallets and interfaces.

- Regulatory Clarity supporting SSI frameworks.

- Integration with AI for advanced cryptographic verification.

Implementation Guidelines for Businesses

Businesses aiming to adopt SSI should:

- Utilize blockchain platforms like Ethereum or Hyperledger Indy that support SSI.

- Prioritize user-friendly digital wallets to encourage adoption.

- Ensure compliance with global data protection laws.

- Collaborate across industries and governments to build a robust SSI ecosystem.

Conclusion: Embracing Digital Sovereignty

Self-Sovereign Identity is more than a technological innovation; it represents a fundamental shift towards digital sovereignty—where individuals truly own and control their online identities. As Web3 reshapes the internet, SSI offers a secure, private, and user-centric alternative to centralized identity systems that have long dominated the digital world.

For professionals, investors, and developers, understanding self-sovereign identity in Web3 and leveraging platforms like Token Metrics is crucial to navigating this transformative landscape. The journey toward a decentralized, privacy-respecting digital identity model has begun, and those who embrace SSI today will lead the way in tomorrow’s equitable digital world.
