Top Crypto Trading Platforms in 2025

Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend and getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints packed with powerful AI-driven insights right into your lap, including:

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.

Free APIs unlock data and functionality for rapid prototyping, research, and lightweight production use. Whether you’re building an AI agent, visualizing on-chain metrics, or ingesting market snapshots, understanding how to evaluate and integrate a free API is essential to building reliable systems without hidden costs.

Not all "free" APIs are created equal. The term generally refers to services that allow access to endpoints without an upfront fee, but providers differ in rate limits, data freshness, feature scope, and licensing. A clear assessment framework covers five dimensions: access model, usage limits, data latency, security, and terms of service.

Use a methodical approach to compare options. Below is a pragmatic checklist that helps prioritize trade-offs between cost and capability.

For crypto-specific datasets, platforms such as Token Metrics illustrate how integrated analytics and API endpoints can complement raw data feeds by adding model-driven signals and normalized asset metadata.

Free APIs are most effective when integrated with resilient patterns. Below are recommended practices for teams and solo developers alike.

Understanding where a free API fits in your architecture depends on the scenario. Consider three common patterns:

When working with AI agents or automated analytics, instrument data flows and label data quality explicitly. AI-driven research tools can accelerate dataset discovery and normalization, but you should always audit automated outputs and maintain provenance records.

Build Smarter Crypto Apps & AI Agents with Token Metrics

Token Metrics provides real-time prices, trading signals, and on-chain insights all from one powerful API. Grab a Free API Key

Limits vary by provider but often include reduced daily/monthly call quotas, limited concurrency, and delayed data freshness. Review the provider’s rate-limit policy and test in your deployment region.

Yes for low-volume or non-critical paths, provided you incorporate caching, retries, and fallback logic. For mission-critical systems, evaluate paid tiers for SLAs and enhanced support.
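As a rough illustration of the caching, retry, and fallback logic mentioned above, the Python sketch below wraps an arbitrary fetch function. The names `fetch_with_resilience` and `TransientError`, along with the default TTL, retry count, and delays, are assumptions made for this example and do not come from any provider's SDK.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TransientError(Exception):
    """Hypothetical stand-in for a retryable failure such as HTTP 429 or 5xx."""


def fetch_with_resilience(
    key: str,
    fetch: Callable[[str], Any],
    cache: Dict[str, Tuple[float, Any]],
    ttl: float = 60.0,
    retries: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
    now: Callable[[], float] = time.monotonic,
) -> Any:
    # 1. Serve from cache while the entry is younger than the TTL.
    entry = cache.get(key)
    if entry is not None and now() - entry[0] < ttl:
        return entry[1]

    # 2. Otherwise call the API, backing off exponentially between attempts.
    for attempt in range(retries):
        try:
            value = fetch(key)
        except TransientError:
            if attempt < retries - 1:
                sleep(base_delay * 2 ** attempt)
            continue
        cache[key] = (now(), value)
        return value

    # 3. Fallback: a stale cached value is better than nothing on a non-critical path.
    if entry is not None:
        return entry[1]
    raise TransientError(f"no fresh or cached value for {key!r}")
```

Passing `sleep` and `now` as parameters keeps the function easy to test without real delays. In a real deployment the stale-value fallback should probably be surfaced to the caller so that downstream code can label the data as stale.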

Store keys in environment-specific vaults, avoid client-side exposure, and rotate keys periodically. Use proxy layers to inject keys server-side when integrating client apps.
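A minimal sketch of the server-side key injection described above might look like the following. The environment variable name `EXAMPLE_API_KEY` and the `x-api-key` header are placeholders chosen for illustration; the header a given provider expects may differ, so check its documentation.

```python
import os
from typing import Dict, Mapping


def load_api_key(
    var_name: str = "EXAMPLE_API_KEY",
    environ: Mapping[str, str] = os.environ,
) -> str:
    # Read the key from the environment (populated by a vault or secret manager),
    # never from source code or client-side configuration.
    key = environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"{var_name} is not set")
    return key


def build_upstream_headers(client_headers: Mapping[str, str], api_key: str) -> Dict[str, str]:
    # Drop any credential-like headers sent by the client, then attach the
    # server-held key. "x-api-key" is an assumed header name.
    blocked = {"authorization", "x-api-key"}
    headers = {k: v for k, v in client_headers.items() if k.lower() not in blocked}
    headers["x-api-key"] = api_key
    return headers
```

A proxy built this way forwards client requests upstream with `build_upstream_headers(...)`, so the key never reaches the browser or mobile app.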

Some free APIs provide robust historical endpoints, but completeness and retention policies differ. Validate by sampling known events and comparing across providers before depending on the dataset.

AI tools can assist with data cleaning, anomaly detection, and feature extraction, making it easier to derive insight from limited free data. Always verify model outputs and maintain traceability to source calls.

Track request volume, error rates (429/5xx), latency, and data staleness metrics. Set alerts for approaching throughput caps and automate graceful fallbacks to preserve user experience.
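The metrics listed above can be tracked with very little code. The sketch below keeps a sliding window of recent calls and reports request volume, error rate, and whether usage is approaching a quota. The class name `UsageMonitor` and the 80% alert threshold are assumptions for this example; a production system would more likely export these values to an existing metrics stack.

```python
from collections import deque
from typing import Deque, Tuple


class UsageMonitor:
    """Sliding-window tracker for API call volume and error rate (illustrative)."""

    def __init__(self, quota: int, window_seconds: float = 60.0, alert_ratio: float = 0.8) -> None:
        self.quota = quota
        self.window = window_seconds
        self.alert_ratio = alert_ratio
        self._events: Deque[Tuple[float, int]] = deque()  # (timestamp, HTTP status)

    def record(self, timestamp: float, status: int) -> None:
        self._events.append((timestamp, status))
        # Evict calls that have aged out of the window.
        while self._events and timestamp - self._events[0][0] >= self.window:
            self._events.popleft()

    def request_count(self) -> int:
        return len(self._events)

    def error_rate(self) -> float:
        # Counts 429 (rate limited) and 5xx (server error) responses.
        if not self._events:
            return 0.0
        errors = sum(1 for _, status in self._events if status == 429 or status >= 500)
        return errors / len(self._events)

    def near_quota(self) -> bool:
        # True once usage in the window reaches alert_ratio of the quota.
        return len(self._events) >= self.alert_ratio * self.quota
```

When `near_quota()` returns true, the caller can slow down, serve cached data, or switch to a secondary source before the provider starts returning 429 responses.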

Legal permissions depend on the provider’s terms. Some allow caching for display but prohibit redistribution or commercial resale. Always consult the API’s terms of service before storing or sharing data.

Design with decoupled ingestion, caching, and multi-source redundancy so you can swap to paid tiers or alternative providers without significant refactoring.

Yes. Combining multiple sources improves resilience and data quality, but requires normalization, reconciliation logic, and latency-aware merging rules.
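One simple reconciliation rule, shown here only as a sketch, is to discard quotes that are too old and take the median of what remains. The `Quote` type, the 30-second freshness cutoff, and the choice of the median are all assumptions made for this illustration; real merging rules depend on the providers and the asset.

```python
from dataclasses import dataclass
from statistics import median
from typing import Iterable


@dataclass(frozen=True)
class Quote:
    source: str       # provider name, after normalizing symbols and units
    price: float      # price in a common quote currency
    timestamp: float  # seconds on a shared clock


def merge_quotes(quotes: Iterable[Quote], now: float, max_age: float = 30.0) -> float:
    # Latency-aware step: ignore quotes older than max_age seconds.
    fresh = [q.price for q in quotes if now - q.timestamp <= max_age]
    if not fresh:
        raise ValueError("no fresh quotes available")
    # Reconciliation step: the median resists a single outlying provider.
    return median(fresh)
```

For example, with two fresh quotes at 100.0 and 101.0 and one stale quote at 250.0, the stale value is dropped and the merged price is 100.5.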

Disclaimer

This article is educational and informational only. It does not constitute financial, legal, or investment advice. Evaluate services and make decisions based on your own research and compliance requirements.

Modern web and mobile applications rely heavily on REST APIs to exchange data, integrate services, and enable automation. Whether you're building a microservice, connecting to a third-party data feed, or wiring AI agents to live systems, a clear understanding of REST API fundamentals helps you design robust, secure, and maintainable interfaces.

REST (Representational State Transfer) is an architectural style for distributed systems. A REST API exposes resources—often represented as JSON or XML—using URLs and standard HTTP methods. REST is not a protocol but a set of constraints that favor statelessness, resource orientation, and a uniform interface.

Key benefits include simplicity, broad client support, and easy caching, which makes REST a default choice for many public and internal APIs. Use-case examples include content delivery, telemetry ingestion, authentication services, and integrations between backend services and AI models that require data access.

Understanding core REST principles helps you map business entities to API resources and choose appropriate operations:

Adhering to these constraints makes integrations easier, especially when connecting analytics, monitoring, or AI-driven agents that rely on predictable behavior and clear failure modes.

Building a usable REST API involves choices beyond the basics. Consider these patterns and practices:

For teams building APIs that feed ML or AI pipelines, consistent schemas and semantic versioning are particularly important. They minimize downstream data drift and make model retraining and validation repeatable.

Security and operational visibility are core to production APIs:

Scaling often combines stateless application design, caching (CDNs or reverse proxies), and horizontal autoscaling behind load balancers. For APIs used by data-hungry AI agents, consider async patterns (webhooks, message queues) to decouple long-running tasks from synchronous request flows.

REST emphasizes resources and uses HTTP verbs and status codes. GraphQL exposes a flexible query language letting clients request only needed fields. REST is often simpler to cache and monitor, while GraphQL can reduce over-fetching for complex nested data. Choose based on client needs, caching, and complexity.

Common strategies include URI versioning (/v1/) and header-based versioning. Maintain backward compatibility whenever possible, provide deprecation notices, and publish migration guides. Semantic versioning of your API contract helps client teams plan upgrades.

Require TLS, use strong authentication (OAuth 2.0 or signed tokens), validate inputs, enforce rate limits, and monitor anomalous traffic. Regularly audit access controls and rotate secrets. Security posture should be part of the API lifecycle.
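To make the "signed tokens" idea concrete, here is a minimal HMAC request-signing sketch using Python's standard library. The canonical message layout (method, path, timestamp, and body joined by newlines) and the five-minute clock-skew limit are assumptions for this example, not a standard. OAuth 2.0 flows are a separate mechanism and are not shown.

```python
import hashlib
import hmac


def sign_request(secret: bytes, method: str, path: str, body: bytes, timestamp: int) -> str:
    # Assumed canonical form: METHOD \n path \n timestamp \n body
    message = b"\n".join(
        [method.upper().encode(), path.encode(), str(timestamp).encode(), body]
    )
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(
    secret: bytes,
    method: str,
    path: str,
    body: bytes,
    timestamp: int,
    signature: str,
    now: int,
    max_skew: int = 300,
) -> bool:
    # Reject old or future-dated requests to limit replay attacks.
    if abs(now - timestamp) > max_skew:
        return False
    expected = sign_request(secret, method, path, body, timestamp)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, signature)
```

The client sends the timestamp and signature alongside the request; the server recomputes the signature with the shared secret and rejects mismatches.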

APIs can supply training data, feature stores, and live inference endpoints. Design predictable schemas, low-latency endpoints, and asynchronous jobs for heavy computations. Tooling and observability help detect data drift, which is critical for reliable AI systems. Platforms like Token Metrics illustrate how API-led data can support model-informed insights.

Use synchronous APIs for short, fast operations with immediate results. For long-running tasks (batch processing, complex model inference), use asynchronous patterns: accept a request, return a job ID, and provide status endpoints or webhooks to report completion.
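The accept-then-poll pattern described above can be sketched in a few lines. This in-memory `JobService` is a hypothetical illustration: a real service would persist job state, expose `submit` and `status` as HTTP endpoints, and possibly notify clients through webhooks.

```python
import uuid
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Any, Callable, Dict


class JobService:
    """In-memory sketch of the 'return a job ID, poll for status' pattern."""

    def __init__(self, max_workers: int = 2) -> None:
        self._pool = ThreadPoolExecutor(max_workers=max_workers)
        self._jobs: Dict[str, Future] = {}

    def submit(self, task: Callable[..., Any], *args: Any) -> str:
        # Accept the request, start the work in the background, return an ID at once.
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = self._pool.submit(task, *args)
        return job_id

    def status(self, job_id: str) -> Dict[str, Any]:
        # What a status endpoint would return for this job.
        future = self._jobs.get(job_id)
        if future is None:
            return {"state": "unknown"}
        if not future.done():
            return {"state": "pending"}
        error = future.exception()
        if error is not None:
            return {"state": "failed", "error": str(error)}
        return {"state": "done", "result": future.result()}

    def shutdown(self) -> None:
        # Block until all submitted jobs have finished.
        self._pool.shutdown(wait=True)
```

A client would call `submit(...)`, store the returned ID, and poll `status(job_id)` until the state is `done` or `failed`.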

This article is educational and technical in nature. It does not constitute investment, legal, or professional advice. Evaluate tools and architectures against your requirements and risks before deployment.

REST APIs power much of the web and modern integrations—from mobile apps to AI agents that consume structured data. Understanding the principles, common pitfalls, and operational practices that make a REST API reliable and maintainable helps teams move faster while reducing friction when integrating services.

Representational State Transfer (REST) is an architectural style for networked applications. A REST API exposes resources (users, accounts, prices, etc.) via predictable HTTP endpoints and methods (GET, POST, PUT, DELETE). Its simplicity, cacheability, and wide tooling support make REST a go-to pattern for many back-end services and third-party integrations.

Key behavioral expectations include statelessness (each request contains the information needed to process it), use of standard HTTP status codes, and a resource-oriented URI design. These conventions improve developer experience and enable robust monitoring and error handling across distributed systems.

Designing a clear resource model at the outset avoids messy ad-hoc expansions later. Consider these guidelines:

Model relationships thoughtfully: prefer nested resources for clarity (e.g., /projects/42/tasks) but avoid excessive nesting depth. A well-documented schema contract reduces integration errors and accelerates client development.

Security for REST APIs is multi-layered. Common patterns:

Also consider rate limiting, token expiry, and key rotation policies. For APIs that surface sensitive data, adopt least-privilege principles and audit logging so access patterns can be reviewed.

Latency and scalability are often where APIs meet their limits. Practical levers include:

Instrument your API with observability: structured logs, distributed traces, and metrics (latency, error rates, throughput). These signals enable data-driven tuning and prioritized fixes.

Quality APIs are well-tested and easy to adopt. Include:

Automate CI checks that validate linting, schema changes, and security scanning to maintain long-term health.

When REST APIs expose market data, on-chain metrics, or signal feeds for analytics and AI agents, additional considerations apply. Data freshness, deterministic timestamps, provenance metadata, and predictable rate limits matter for reproducible analytics. Design APIs so consumers can:

AI-driven workflows often combine multiple endpoints; consistent schemas and clear quotas simplify orchestration and reduce operational surprises. For example, Token Metrics demonstrates how structured crypto insights can be surfaced via APIs to support research and model inputs for agents.

"REST" refers to the architectural constraints defined by Roy Fielding. "RESTful" is an informal adjective describing APIs that follow REST principles—though implementations vary in how strictly they adhere to the constraints.

Use semantic intent when versioning. URL-based versions (e.g., /v1/) are explicit, while header-based or content negotiation approaches avoid URL churn. Regardless, document deprecation timelines and provide backward-compatible pathways.
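As an illustration of supporting both styles at once, the sketch below resolves the requested version from a URL prefix first and falls back to a header. The header name `X-API-Version` and the default version `"1"` are assumptions for this example; header-based schemes vary between APIs.

```python
import re
from typing import Mapping, Tuple

# Matches a leading "/v<digits>" path segment, e.g. "/v2" or "/v2/prices".
_PATH_VERSION = re.compile(r"^/v(\d+)(?=/|$)")


def resolve_version(path: str, headers: Mapping[str, str], default: str = "1") -> Tuple[str, str]:
    """Return (version, path without the version prefix)."""
    match = _PATH_VERSION.match(path)
    if match:
        # URL-based versioning is explicit, so it takes priority.
        return match.group(1), path[match.end():] or "/"
    for name, value in headers.items():
        # Header names are case-insensitive in HTTP.
        if name.lower() == "x-api-version":
            return value.strip(), path
    return default, path
```

Here `resolve_version("/v2/prices", {})` yields version `"2"` with the path `/prices`, while a request to `/prices` with the header set to `3` resolves to version `"3"`.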

REST is simple and cache-friendly for resource-centric models. GraphQL excels when clients need flexible queries across nested relationships. Consider client requirements, caching strategy, and operational complexity when choosing.

Expose limit headers, return standard status codes (e.g., 429), and provide retry-after guidance. Offer tiered quotas and clear documentation so integrators can design backoffs and fallback strategies.
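A fixed-window limiter is one simple way to produce the status codes and headers described above. In this sketch, `Retry-After` is the standard HTTP header, while `X-RateLimit-Limit` and `X-RateLimit-Remaining` are a common convention rather than a formal standard. The class name and window policy are assumptions for illustration.

```python
import math
from typing import Dict, Tuple


class FixedWindowLimiter:
    """Per-client fixed-window rate limiter returning (status, headers)."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window = window_seconds
        self._state: Dict[str, Tuple[float, int]] = {}  # client -> (window start, count)

    def check(self, client_id: str, now: float) -> Tuple[int, Dict[str, str]]:
        window_start, count = self._state.get(client_id, (now, 0))
        if now - window_start >= self.window:
            # The previous window has expired, so start a new one.
            window_start, count = now, 0

        if count >= self.limit:
            # Tell the client how many whole seconds to wait before retrying.
            retry_after = max(1, math.ceil(window_start + self.window - now))
            return 429, {
                "Retry-After": str(retry_after),
                "X-RateLimit-Limit": str(self.limit),
                "X-RateLimit-Remaining": "0",
            }

        count += 1
        self._state[client_id] = (window_start, count)
        return 200, {
            "X-RateLimit-Limit": str(self.limit),
            "X-RateLimit-Remaining": str(self.limit - count),
        }
```

Fixed windows are easy to reason about but allow short bursts at window boundaries. Token-bucket or sliding-window limiters smooth this out at the cost of slightly more state.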

OpenAPI (Swagger) for specs, Postman for interactive exploration, Pact for contract testing, and CI-integrated schema validators are common choices. Combine these with monitoring and API gateways for observability and enforcement.

This article is for educational and technical reference only. It is not financial, legal, or investment advice. Always evaluate tools and services against your own technical requirements and compliance obligations before integrating them into production systems.

The landscape of digital assets and blockchain technology has expanded rapidly over recent years, bringing forth a new realm known as Web3 alongside the burgeoning crypto ecosystem. For individuals curious about allocating resources into this sphere, questions often arise: should the focus be on cryptocurrencies or Web3 companies? This article aims to provide an educational and analytical perspective on these options, highlighting considerations without providing direct investment advice.

Before exploring the nuances between investing in crypto assets and Web3 companies, it's important to clarify what each represents.

Web3 companies often develop decentralized applications (dApps), offer blockchain-based services, or build infrastructure layers for the decentralized web.

Deciding between crypto assets or Web3 companies involves analyzing different dynamics:

To approach these complex investment types thoughtfully, frameworks can assist in structuring analysis:

Due to the rapidly evolving and data-intensive nature of crypto and Web3 industries, AI-powered platforms can enhance analysis by processing vast datasets and providing insights.

For instance, Token Metrics utilizes machine learning to rate crypto assets by analyzing market trends, project fundamentals, and sentiment data. Such tools support an educational and neutral perspective by offering data-driven research support rather than speculative advice.

When assessing Web3 companies, AI tools can assist with identifying emerging technologies, tracking developmental progress, and monitoring regulatory developments relevant to the decentralized ecosystem.

To gain a well-rounded understanding, consider the following steps:

Both crypto assets and Web3 companies involve unique risks that warrant careful consideration:

Deciding between crypto assets and Web3 companies involves analyzing different dimensions including technological fundamentals, market dynamics, and risk profiles. Employing structured evaluation frameworks along with AI-enhanced research platforms such as Token Metrics can provide clarity in this complex landscape.

It is essential to approach this domain with an educational mindset focused on understanding rather than speculative intentions. Staying informed and leveraging analytical tools supports sound comprehension of the evolving world of blockchain-based digital assets and enterprises.

This article is intended for educational purposes only and does not constitute financial, investment, or legal advice. Readers should conduct their own research and consult with professional advisors before making any decisions related to cryptocurrencies or Web3 companies.

The evolution from Web2 to Web3 marks a significant paradigm shift in how we interact with digital services. While Web2 platforms have delivered intuitive and seamless user experiences, Web3—the decentralized internet leveraging blockchain technology—still faces considerable user experience (UX) challenges. This article explores the reasons behind the comparatively poor UX in Web3 and the technical, design, and infrastructural hurdles contributing to this gap.

Web2 represents the current mainstream internet experience characterized by centralized servers, interactive social platforms, and streamlined services. Its UX benefits from consistent standards, mature design patterns, and direct control over data.

In contrast, Web3 aims at decentralization, enabling peer-to-peer interactions through blockchain protocols, decentralized applications (dApps), and user-owned data ecosystems. While promising increased privacy and autonomy, Web3 inherently introduces complexity in UX design.

Several intrinsic technical barriers impact the Web3 user experience:

The nascent nature of Web3 results in inconsistent and sometimes opaque design standards:

Web2 giants have invested billions over decades fostering developer communities, design systems, and customer support infrastructure. In contrast, Web3 is still an emerging ecosystem characterized by:

Such factors contribute to a user experience that feels fragmented and inaccessible to mainstream audiences.

Emerging tools powered by artificial intelligence and data analytics can help mitigate some UX challenges in Web3 by:

Integrating such AI-driven research and analytic tools enables developers and users to progressively enhance Web3 usability.

For users trying to adapt to Web3 environments, the following tips may help:

For developers, focusing on the following can improve UX outcomes:

The current disparity between Web3 and Web2 user experience primarily stems from decentralization complexities, immature design ecosystems, and educational gaps. However, ongoing innovation in AI-driven analytics, comprehensive rating platforms like Token Metrics, and community-driven UX improvements are promising. Over time, these efforts could bridge the UX divide to make Web3 more accessible and user-friendly for mainstream adoption.

This article is for educational and informational purposes only and does not constitute financial advice or an endorsement. Users should conduct their own research and consider risks before engaging in any blockchain or cryptocurrency activities.

Smart contracts have become an integral part of blockchain technology, enabling automated, trustless agreements across various platforms. Understanding what languages are used for smart contract development is essential for developers entering this dynamic field, as well as for analysts and enthusiasts who want to deepen their grasp of blockchain ecosystems. This article offers an analytical and educational overview of popular programming languages for smart contract development, discusses their characteristics, and provides insights on how analytical tools like Token Metrics can assist in evaluating smart contract projects.

Smart contract languages are specialized programming languages designed to create logic that runs on blockchains. Ethereum is currently the most prominent blockchain for smart contracts, but other blockchains have their own languages as well. The following section outlines some of the most widely used smart contract languages.

Developers evaluate smart contract languages based on various factors such as security, expressiveness, ease of use, and compatibility with blockchain platforms. Below are some important criteria:

Solidity remains the dominant language due to Ethereum's market position and is well-suited for developers familiar with JavaScript or object-oriented paradigms. It continuously evolves with community input and protocol upgrades.

Vyper has a smaller user base but appeals to projects requiring stricter security standards, as its design deliberately omits complex features that can introduce vulnerabilities.

Rust is leveraged by newer chains that aim to combine blockchain decentralization with high throughput and low latency. Developers familiar with systems programming find Rust a robust choice.

Michelson’s niche is in formal verification-heavy projects where security is paramount, such as financial contracts and governance mechanisms on Tezos.

Move and Clarity represent innovative approaches to contract safety and complexity management, focusing on deterministic execution and resource constraints.

Artificial Intelligence (AI) and machine learning have become increasingly valuable in analyzing and researching blockchain projects, including smart contracts. Platforms such as Token Metrics provide AI-driven ratings and insights by analyzing codebases, developer activity, and on-chain data.

Such tools facilitate the identification of patterns that might indicate strong development practices or potential security risks. While they do not replace manual code audits or thorough research, they support investors and developers by presenting data-driven evaluations that help in filtering through numerous projects.

Developers choosing a smart contract language should consider the blockchain platform’s restrictions and the nature of the application. Those focused on DeFi might prefer Solidity or Vyper for Ethereum, while teams aiming for cross-chain applications might lean toward Rust or Move.

Analysts seeking to understand a project’s robustness can utilize resources like Token Metrics for AI-powered insights combined with manual research, including code reviews and community engagement.

Security should remain a priority as vulnerabilities in smart contract code can lead to significant issues. Therefore, familiarizing oneself with languages that encourage safer programming paradigms contributes to better outcomes.

Understanding what languages are used for smart contract development is key to grasping the broader blockchain ecosystem. Solidity leads the field due to Ethereum’s prominence, but alternative languages like Vyper, Rust, Michelson, Move, and Clarity offer different trade-offs in security, performance, and usability. Advances in AI-driven research platforms such as Token Metrics play a supportive role in evaluating the quality and safety of smart contract projects.

This article is intended for educational purposes only and does not constitute financial or investment advice. Readers should conduct their own research and consult professionals before making decisions related to blockchain technologies and smart contract development.

With the increasing popularity of cryptocurrencies, selecting a trusted crypto exchange is an essential step for anyone interested in participating safely in the market. Crypto exchanges serve as platforms that facilitate the buying, selling, and trading of digital assets. However, the diversity and complexity of available exchanges make the selection process both important and challenging. This article delves into some trusted crypto exchanges, alongside guidance on how to evaluate them, all while emphasizing the role of analytical tools like Token Metrics in supporting well-informed decisions.

Crypto exchanges can broadly be categorized into centralized and decentralized platforms. Centralized exchanges (CEXs) act as intermediaries holding users’ assets and facilitating trades within their systems, while decentralized exchanges (DEXs) allow peer-to-peer transactions without a central authority. Each type offers distinct advantages and considerations regarding security, liquidity, control, and regulatory compliance.

When assessing trusted crypto exchanges, several fundamental factors come into focus, including security protocols, regulatory adherence, liquidity, range of supported assets, user interface, fees, and customer support. Thorough evaluation of these criteria assists in identifying exchanges that prioritize user protection and operational integrity.

Security Measures: Robust security is critical to safeguarding digital assets. Trusted exchanges implement multi-factor authentication (MFA), cold storage for the majority of funds, and regular security audits. Transparency about security incidents and response strategies further reflects an exchange’s commitment to protection.

Regulatory Compliance: Exchanges operating within clear regulatory frameworks demonstrate credibility. Registration with financial authorities and adherence to Anti-Money Laundering (AML) and Know Your Customer (KYC) policies are important markers of legitimacy.

Liquidity and Volume: High liquidity ensures competitive pricing and smooth order execution. Volume trends can be analyzed via publicly available data or through analytics platforms such as Token Metrics to gauge an exchange’s level of activity.

Range of Cryptocurrencies: The diversity of supported digital assets allows users flexibility in managing their portfolios. Trusted exchanges often list major cryptocurrencies alongside promising altcoins, with transparent listing criteria.

User Experience and Customer Support: A user-friendly interface and responsive support contribute to efficient trading and problem resolution, enhancing overall trust.

While numerous crypto exchanges exist, a few have earned reputations for trustworthiness based on their operational history and general acceptance in the crypto community. Below is an educational overview without endorsement.

These examples illustrate the diversity of trusted exchanges, highlighting the importance of matching exchange characteristics to individual security preferences and trading needs.

The rapid evolution of the crypto landscape underscores the value of AI-driven research tools in navigating exchange assessment. Platforms like Token Metrics provide data-backed analytics, including exchange ratings, volume analysis, security insights, and user sentiment evaluation. Such tools equip users with comprehensive perspectives that supplement foundational research.

Integrating these insights allows users to monitor exchange performance trends, identify emerging risks, and evaluate service quality over time, fostering a proactive and informed approach.

Despite due diligence, crypto trading inherently involves risks. Common concerns linked to exchanges encompass hacking incidents, withdrawal delays, regulatory actions, and operational failures. Reducing exposure includes diversifying asset holdings, using hardware wallets for storage, and continuously monitoring exchange announcements.

Educational tools such as Token Metrics contribute to ongoing awareness by highlighting risk factors and providing updates that reflect evolving market and regulatory conditions.

Choosing a trusted crypto exchange requires comprehensive evaluation across security, regulatory compliance, liquidity, asset diversity, and user experience dimensions. Leveraging AI-based analytics platforms such as Token Metrics enriches the decision-making process by delivering data-driven insights. Ultimately, informed research and cautious engagement are key components of navigating the crypto exchange landscape responsibly.

This article is for educational purposes only and does not constitute financial, investment, or legal advice. Readers should conduct independent research and consult professionals before making decisions related to cryptocurrency trading or exchange selection.

Blockchain technology has rapidly evolved into a foundational innovation affecting many industries. For newcomers eager to understand the basics, finding reliable and informative platforms to ask beginner blockchain questions is essential. This guide explores where you can pose your questions, engage with experts, and leverage analytical tools to deepen your understanding.

Blockchain, despite its increasing adoption, remains a complex and multifaceted topic involving cryptography, decentralized networks, consensus mechanisms, and smart contracts. Beginners often require clear explanations to grasp fundamental concepts. Asking questions helps clarify misunderstandings, connect with experienced individuals, and stay updated with evolving trends and technologies.

Online communities are often the first port of call for learners. They foster discussion, provide resources, and offer peer support. Some trusted platforms include:

Several courses and online platforms integrate Q&A functionalities to help learners ask questions in context, such as:

Advanced tools now assist users in analyzing blockchain projects and data, complementing learning and research efforts. Token Metrics is an example of an AI-powered platform that provides ratings, analysis, and educational content about blockchain technologies.

By using such platforms, beginners can strengthen their foundational knowledge through data-backed insights. Combining this with community Q&A interactions enhances overall understanding.

To get useful responses, consider these tips when posting questions:

Besides Q&A, structured learning is valuable. Consider:

This article is intended solely for educational purposes and does not constitute financial, investment, or legal advice. Always conduct independent research and consult professional advisors before making decisions related to blockchain technology or cryptocurrency.

The emergence of Web3 technologies has transformed the digital landscape, introducing decentralized applications, blockchain-based protocols, and novel governance models. For participants and observers alike, understanding how to measure success in Web3 projects remains a complex yet critical challenge. Unlike traditional businesses, where financial indicators are predominant, Web3 ventures often require multifaceted assessment frameworks that capture technological innovation, community engagement, and decentralization.

This article delves into the defining success factors for Web3 projects, offering a structured exploration of the key performance metrics, analytical frameworks, and tools available, including AI-driven research platforms such as Token Metrics. Our goal is to provide a clear, educational perspective on how participants and researchers can evaluate Web3 initiatives rigorously and holistically.

Success within Web3 projects is inherently multidimensional. While financial performance and market capitalization remain important, other dimensions include:

These factors may vary in relevance depending on the project type—be it DeFi protocols, NFTs, layer-one blockchains, or decentralized autonomous organizations (DAOs). Thus, establishing clear, context-specific benchmarks is essential for effective evaluation.

Below are critical performance indicators broadly used to gauge Web3 success. These metrics provide quantifiable insights into various aspects of project health and growth.

Systematic evaluation benefits from established frameworks:

Combining these frameworks with data-driven metrics allows for comprehensive, nuanced insights into project status and trajectories.

Artificial intelligence and machine learning increasingly support the evaluation of Web3 projects by processing vast datasets and uncovering patterns not readily apparent to human analysts. Token Metrics exemplifies this approach by offering AI-driven ratings, risk assessments, and project deep-dives that integrate quantitative data with qualitative signals.

These platforms aid in parsing complex variables such as token velocity, developer momentum, and community sentiment, providing actionable intelligence that relies less on subjective judgment. Importantly, using such analytical tools facilitates continuous monitoring and reassessment as Web3 landscapes evolve.

For individuals or organizations assessing the success potential of Web3 projects, these steps are recommended:

While metrics and frameworks aid evaluation, it is essential to recognize the dynamic nature of Web3 projects and the ecosystem's inherent uncertainties. Metrics may fluctuate due to speculative behavior, regulatory shifts, or technological disruptions. Moreover, quantifiable indicators only capture parts of the overall picture, and qualitative factors such as community values and developer expertise also matter.

Therefore, success measurement in Web3 should be viewed as an ongoing process, employing diverse data points and contextual understanding rather than static criteria.

Measuring success in Web3 projects requires a multidimensional approach combining on-chain metrics, community engagement, development activity, and security considerations. Frameworks such as fundamental and scenario analysis facilitate structured evaluation, while AI-powered platforms like Token Metrics provide advanced tools to support data-driven insights.

By applying these methods with a critical and educational mindset, stakeholders can better understand project health and longevity without relying on speculative or financial advice.

This article is for educational and informational purposes only. It does not constitute financial, investment, or legal advice. Readers should conduct their own research and consult professionals before making decisions related to Web3 projects.


Smart contracts are self-executing contracts with the terms of the agreement directly written into lines of code. They run on blockchain platforms, such as Ethereum, enabling decentralized, automated agreements that do not require intermediaries. Understanding how to write a smart contract involves familiarity with blockchain principles, programming languages, and best practices for secure and efficient development.

Before diving into development, it is essential to grasp what smart contracts are and how they function within blockchain ecosystems. Essentially, smart contracts enable conditional transactions that automatically execute when predefined conditions are met, providing transparency and reducing dependency on third parties.

These programs are stored and executed on blockchain platforms, making them immutable and distributed, which adds security and reliability to the contract's terms.

Writing a smart contract starts with selecting an appropriate blockchain platform. Ethereum is among the most widely used platforms with robust support for smart contracts, which are primarily written in Solidity, a statically typed, contract-oriented programming language.

Other platforms like BNB Smart Chain (formerly Binance Smart Chain), Polkadot, and Solana also support smart contracts with differing languages and frameworks. Selecting a platform depends on the project requirements, intended network compatibility, and resource accessibility.

The most commonly used language for writing Ethereum smart contracts is Solidity. It is designed to implement smart contracts with syntax similar to JavaScript, making it approachable for developers familiar with web programming languages.

Other languages include Vyper, a Pythonic language focusing on security and simplicity, and Rust or C++ for platforms like Solana. Learning the syntax, data types, functions, and event handling of the chosen language is foundational.

Development of smart contracts typically requires a suite of tools for editing, compiling, testing, and deploying code:

Writing a smart contract involves structuring the code to define its variables, functions, and modifiers. Key steps include:

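Use the `contract` keyword to declare the contract and its name.

Example snippet in Solidity:

```solidity
pragma solidity ^0.8.0;

contract SimpleStorage {
    // Single unsigned integer kept in contract storage.
    uint storedData;

    // Overwrite the stored value; any account may call this.
    function set(uint x) public {
        storedData = x;
    }

    // Read the stored value without modifying state.
    function get() public view returns (uint) {
        return storedData;
    }
}
```

Testing is crucial to ensure smart contracts operate as intended and to prevent bugs or vulnerabilities. Strategies include: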

Adopting rigorous testing can reduce the risk of exploits or loss of funds caused by contract errors.

Deployment involves publishing the compiled smart contract bytecode to the blockchain. This includes:

Deploying first to a test network such as Sepolia is recommended to validate functionality without incurring real costs. Older Ethereum testnets such as Ropsten, Rinkeby, and Goerli have been deprecated.
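The real costs avoided on a test network are gas fees. Under Ethereum's EIP-1559 fee model, a transaction's fee is the gas used multiplied by the sum of the base fee and the priority fee per unit of gas. The arithmetic can be sketched as follows; the function name and all figures are hypothetical, and integer gwei inputs are assumed.

```python
from decimal import Decimal

WEI_PER_GWEI = 10**9
WEI_PER_ETH = 10**18


def deployment_fee_eth(gas_used, base_fee_gwei, priority_fee_gwei):
    """Return the fee in ETH for a deployment transaction.

    fee = gas_used * (base fee + priority fee), per the EIP-1559 fee model.
    Inputs are assumed to be integers so the wei amount stays exact.
    """
    fee_wei = gas_used * (base_fee_gwei + priority_fee_gwei) * WEI_PER_GWEI
    return Decimal(fee_wei) / Decimal(WEI_PER_ETH)


# Hypothetical numbers: 200,000 gas at a 20 gwei base fee plus a 2 gwei tip.
print(deployment_fee_eth(200_000, 20, 2))
```

Actual gas usage depends on the size of the contract bytecode and its constructor logic, so an estimate from the development framework is a better input than a guess.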

Emerging AI-driven platforms can assist developers and analysts with smart contract evaluation, security analysis, and market sentiment interpretation. For instance, tools like Token Metrics provide algorithmic research that can support understanding of blockchain projects and smart contract implications in the ecosystem.

Combining these tools with manual audits supports more thorough assessments and better-informed development decisions.

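Writing secure smart contracts requires awareness of common vulnerabilities such as reentrancy attacks, integer overflows (checked by default since Solidity 0.8, but still possible inside `unchecked` blocks and in contracts compiled with older versions), and improper access controls. Best practices include: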

Robust security practices are critical because deployed smart contracts are immutable: a flaw that reaches the blockchain cannot simply be patched in place.
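To show why the order of operations matters for reentrancy, here is a small Python model of a vault, not Solidity and not a real contract. The `Vault` class, the `attack` helper, and the balances are all hypothetical; the callback stands in for the external call a contract makes when it sends funds. In the unsafe ordering the callback runs before the balance is cleared, so it can re-enter `withdraw`; the safe ordering follows the checks-effects-interactions pattern.

```python
class Vault:
    """Toy model of a contract that holds deposits for several accounts."""

    def __init__(self, safe):
        self.safe = safe      # True: update state before the external call
        self.balances = {}
        self.total = 0        # funds currently held by the vault

    def deposit(self, who, amount):
        self.balances[who] = self.balances.get(who, 0) + amount
        self.total += amount

    def withdraw(self, who, on_receive):
        amount = self.balances.get(who, 0)
        # Checks: nothing to pay out, or vault cannot cover it.
        if amount == 0 or self.total < amount:
            return
        if self.safe:
            self.balances[who] = 0    # effects first
            self.total -= amount
            on_receive(amount)        # interaction last
        else:
            self.total -= amount
            on_receive(amount)        # interaction first: can re-enter
            self.balances[who] = 0    # balance cleared too late


def attack(vault, max_calls=3):
    """Deposit 10 as the attacker, then re-enter withdraw from the callback."""
    received = []

    def on_receive(amount):
        received.append(amount)
        if len(received) < max_calls:
            vault.withdraw("attacker", on_receive)

    vault.deposit("victim", 20)
    vault.deposit("attacker", 10)
    vault.withdraw("attacker", on_receive)
    return sum(received)


print("unsafe ordering paid out:", attack(Vault(safe=False)))
print("safe ordering paid out:", attack(Vault(safe=True)))
```

With the unsafe ordering the attacker's 10-unit deposit drains the victim's funds as well. With the safe ordering the re-entrant call finds a zero balance and returns immediately.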

Writing a smart contract involves a combination of blockchain knowledge, programming skills, and adherence to security best practices. From choosing a platform and language to coding, testing, and deploying, each step plays an important role in the development lifecycle.

Leveraging AI-powered tools like Token Metrics can add valuable insights for developers aiming to enhance their understanding and approach to smart contract projects.

All information provided in this article is for educational purposes only and does not constitute financial or investment advice. Readers should conduct their own research and consult professional sources where appropriate.


Decentralized Autonomous Organizations (DAOs) represent an innovative model for decentralized governance and decision-making in the blockchain space. With the increasing integration of artificial intelligence (AI) into DAOs for automating processes and enhancing efficiency, it is vital to understand the risks associated with allowing AI to control or heavily influence DAOs. This article provides a comprehensive analysis of these risks, exploring technical, ethical, and systemic factors. Additionally, it outlines how analytical platforms like Token Metrics can support informed research around such emerging intersections.

DAOs are blockchain-based entities designed to operate autonomously through smart contracts and collective governance, without centralized control. AI technologies can offer advanced capabilities by automating proposal evaluation, voting mechanisms, or resource allocation within these organizations. While this combination promises increased efficiency and responsiveness, it also introduces complexities and novel risks.

One significant category of risks involves technical vulnerabilities arising from AI integration into DAOs:

Integrating AI into DAO governance raises complex questions around control, transparency, and accountability:

The autonomous nature of AI presents unique security concerns:

Beyond technical risks, the interaction between AI and DAOs also introduces ethical and regulatory considerations:

Understanding and managing these risks require robust research and analytical frameworks. Platforms such as Token Metrics provide data-driven insights supporting comprehensive evaluation of blockchain projects, governance models, and emerging technologies combining AI and DAOs.

The fusion of AI and DAOs promises innovative decentralized governance but comes with substantial risks. Technical vulnerabilities, governance challenges, security threats, and ethical concerns highlight the need for vigilant risk assessment and careful integration. Utilizing advanced research platforms like Token Metrics enables more informed and analytical approaches for stakeholders navigating this evolving landscape.

This article is for educational purposes only and does not constitute financial, legal, or investment advice. Readers should perform their own due diligence and consult professionals where appropriate.


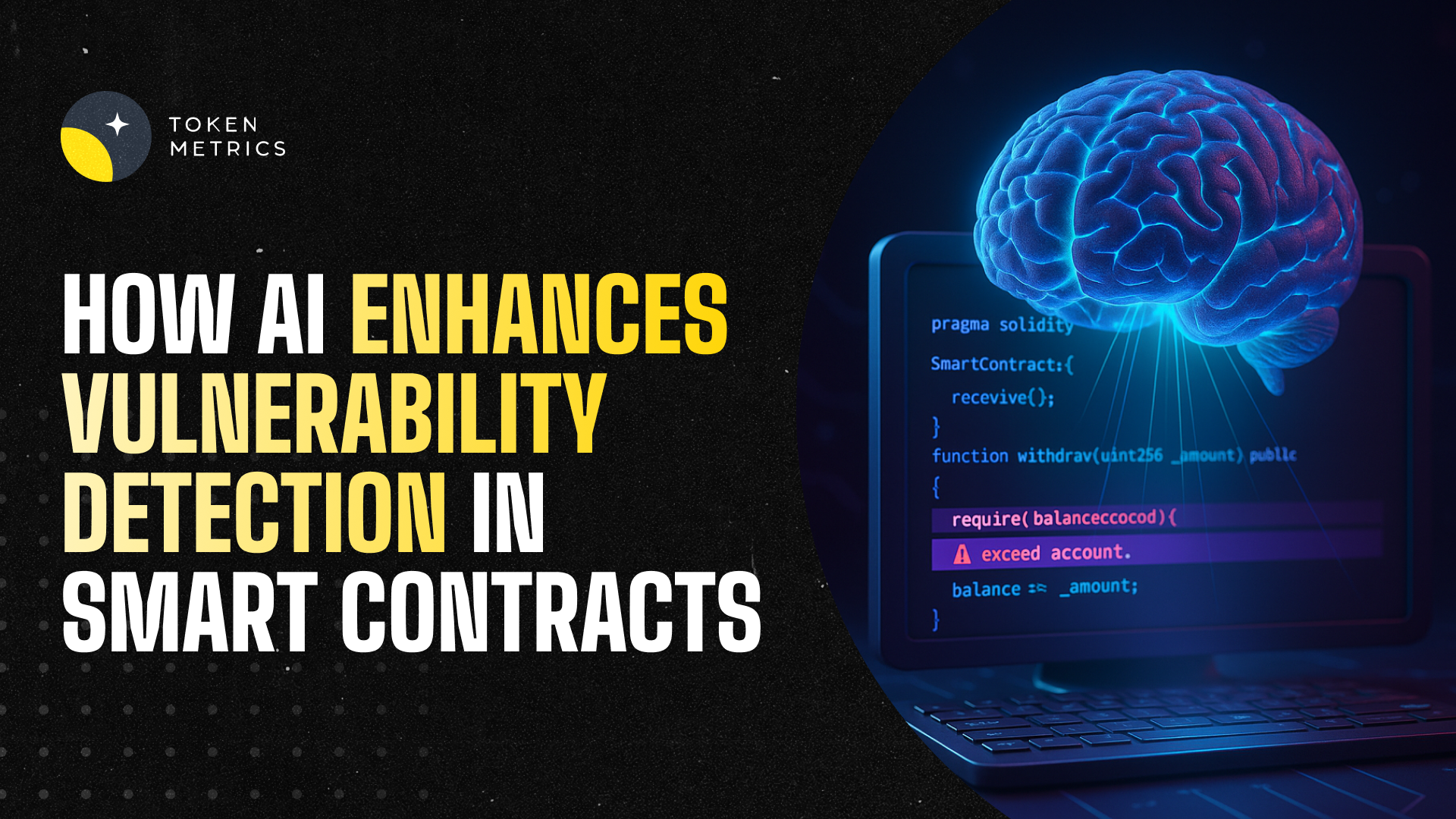

Smart contracts are self-executing contracts with the terms directly written into code, widely used across blockchain platforms to automate decentralized applications (DApps) and financial protocols. However, despite their innovation and efficiency, vulnerabilities in smart contracts pose significant risks, potentially leading to loss of funds, exploits, or unauthorized actions.

With the increasing complexity and volume of smart contracts being deployed, traditional manual auditing methods struggle to keep pace. This has sparked interest in leveraging Artificial Intelligence (AI) to enhance the identification and mitigation of vulnerabilities in smart contracts.

Smart contract vulnerabilities typically arise from coding errors, logic flaws, or insufficient access controls. Common categories include reentrancy attacks, integer overflows, timestamp dependencies, and unchecked external calls. Identifying such vulnerabilities requires deep code analysis, often across millions of lines of code in decentralized ecosystems.
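To make two of these categories concrete, here is a deliberately naive pattern scanner. It is a rule-based baseline, not an AI technique and not how any particular auditing tool works; the rule names, regular expressions, and sample contract are illustrative assumptions. A scanner like this produces false positives and misses real issues, which is part of why deeper analysis is needed.

```python
import re

# Hypothetical rules: each maps a label to a pattern worth a manual look.
RULES = {
    "timestamp-dependence": re.compile(r"\bblock\.timestamp\b"),
    "low-level-call": re.compile(r"\.call\s*[({]"),
}


def scan(source):
    """Return (line_number, rule_name) pairs for every rule match."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        code = line.split("//", 1)[0]   # ignore trailing line comments
        for name, pattern in RULES.items():
            if pattern.search(code):
                findings.append((lineno, name))
    return findings


SAMPLE = """contract Lottery {
    function draw() public {
        if (block.timestamp % 2 == 0) {
            msg.sender.call{value: 1 ether}("");
        }
    }
}"""

for lineno, rule in scan(SAMPLE):
    print(f"line {lineno}: {rule}")
```

The scanner only sees text. It cannot tell whether the return value of the call is checked later or whether the timestamp actually influences an outcome that matters, which requires understanding control and data flow.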

Manual audits by security experts are thorough but time-consuming and expensive. Moreover, the human factor can result in missed weaknesses, especially in complex contracts. As the blockchain ecosystem evolves, utilizing AI to assist in this process has become a promising approach.

AI techniques, particularly machine learning (ML) and natural language processing (NLP), can analyze smart contract code by learning from vast datasets of previously identified vulnerabilities and exploits. The primary roles of AI here include:

Several AI-based methodologies have been adopted to aid vulnerability detection:

Some emerging platforms integrate such AI techniques to provide developers and security teams with enhanced vulnerability scanning capabilities.

Compared to manual or rule-based approaches, AI provides several notable benefits:

Despite these advantages, AI is complementary to expert review rather than a replacement, as audits require contextual understanding and judgment that AI currently cannot fully replicate.

While promising, AI application in this domain faces several hurdles:

Developers and security practitioners can optimize the benefits of AI by:

AI has a growing and important role in identifying vulnerabilities within smart contracts by providing scalable, consistent, and efficient analysis. While challenges remain, the combined application of AI tools with expert audits paves the way for stronger blockchain security.

As AI models and training data improve, and as platforms integrate these capabilities more seamlessly, users can expect increasingly proactive and precise identification of risks in smart contracts.

This article is for educational and informational purposes only. It does not constitute financial, investment, or legal advice. Always conduct your own research and consider consulting professionals when dealing with blockchain security.

9450 SW Gemini Dr

PMB 59348

Beaverton, Oregon 97008-7105 US


Token Metrics Media LLC is a regular publication of information, analysis, and commentary focused especially on blockchain technology and business, cryptocurrency, blockchain-based tokens, market trends, and trading strategies.

Token Metrics Media LLC does not provide individually tailored investment advice and does not take a subscriber’s or anyone’s personal circumstances into consideration when discussing investments; nor is Token Metrics Advisers LLC registered as an investment adviser or broker-dealer in any jurisdiction.

Information contained herein is not an offer or solicitation to buy, hold, or sell any security. The Token Metrics team has advised and invested in many blockchain companies. A complete list of their advisory roles and current holdings can be viewed here: https://tokenmetrics.com/disclosures.html/

Token Metrics Media LLC relies on information from various sources believed to be reliable, including clients and third parties, but cannot guarantee the accuracy and completeness of that information. Additionally, Token Metrics Media LLC does not provide tax advice, and investors are encouraged to consult with their personal tax advisors.

All investing involves risk, including the possible loss of money you invest, and past performance does not guarantee future performance. Ratings and price predictions are provided for informational and illustrative purposes, and may not reflect actual future performance.