Top Crypto Trading Platforms in 2025

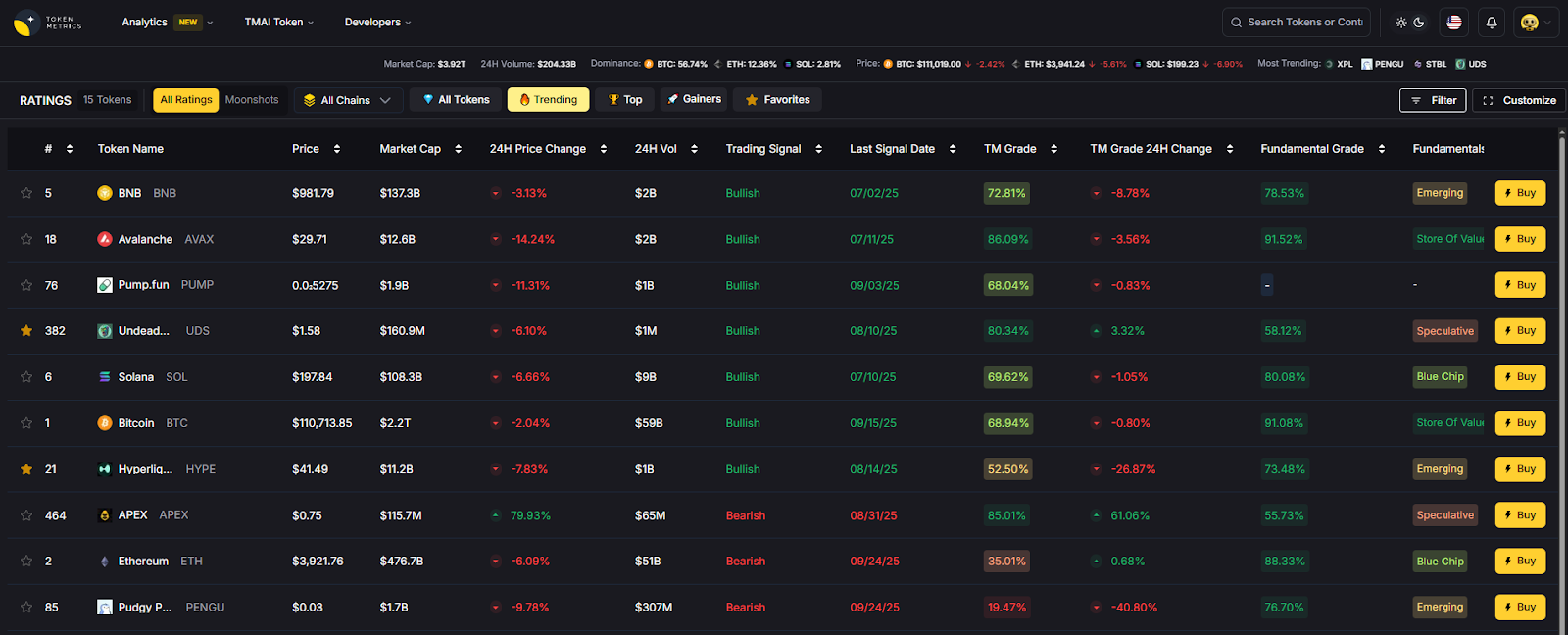

Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend or getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints that put AI-driven insights directly in your hands.

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.

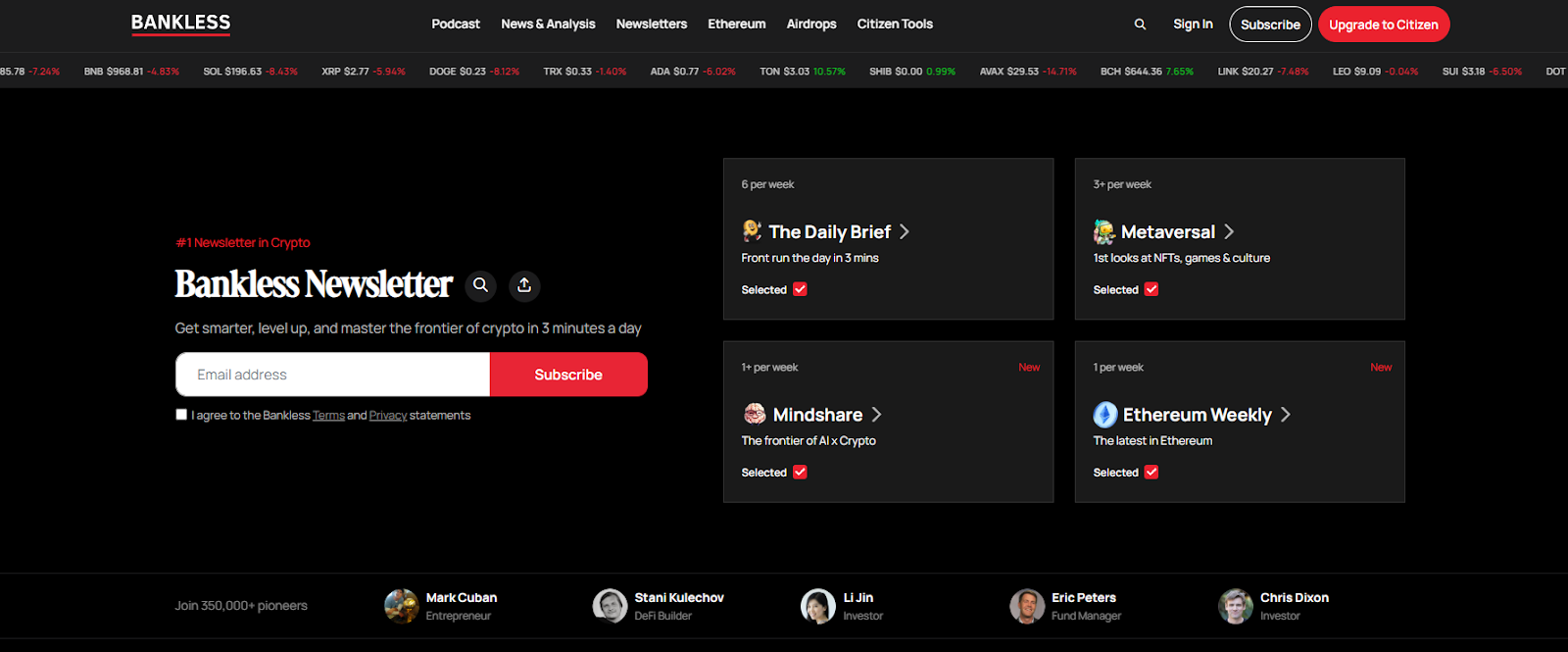

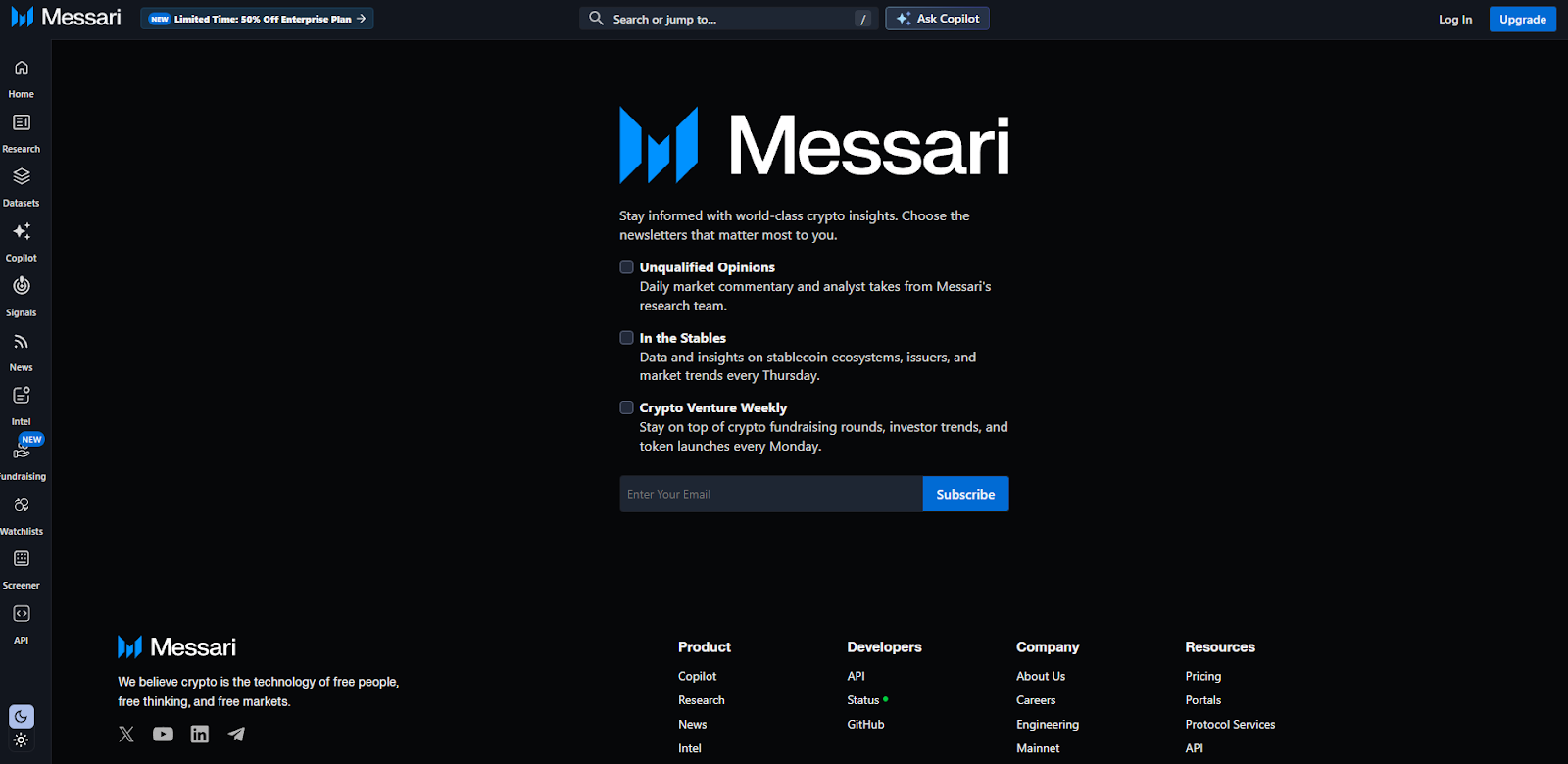

In a market that never sleeps, the best crypto newsletters of 2025 help you filter noise, spot narratives early, and act with conviction. In one line: a great newsletter or analyst condenses complex on-chain, macro, and market structure data into clear, investable insights. Whether you’re a builder, long-term allocator, or active trader, pairing independent analysis with your own process can tighten feedback loops and reduce decision fatigue. In 2025, ETF flows, L2 expansion, AI infra plays, and global regulation shifts mean more data than ever. The picks below focus on consistency, methodology transparency, breadth (on-chain + macro + market), and practical takeaways, blending independent crypto analysts with data-driven research letters and easy-to-digest daily briefs.

This article is for research/education, not financial advice.

What makes a crypto newsletter “best” in 2025?

Frequency, methodological transparency, and the ability to translate on-chain/macro signals into practical takeaways. Bonus points for archives and clear disclosures.

Are the top newsletters free or paid?

Most offer strong free tiers (daily or weekly). Paid tiers typically unlock deeper research, models, or community access.

Do I need both on-chain and macro letters?

Ideally yes—on-chain explains market structure; macro sets the regime (liquidity, rates, growth). Pairing both creates a more complete view.

How often should I read?

Skim dailies (Bankless/Milk Road) for awareness; reserve time weekly for deep dives (Glassnode/Coin Metrics/Delphi).

Can newsletters replace analytics tools?

No. Treat them as curated insight. Validate ideas with your own data and risk framework (Token Metrics can help).

Which is best for ETF/flows?

CoinShares’ weekly Fund Flows is the go-to for institutional positioning, complemented by Glassnode/Coin Metrics on structure.

If you want a quick pulse, pick a daily (Bankless or Milk Road). For deeper conviction, add one weekly on-chain (Glassnode or Coin Metrics) and one thesis engine (Delphi or Messari). Layer macro (Lyn Alden) to frame the regime, and use Token Metrics to quantify what you read and act deliberately.

We reviewed each provider’s official newsletter hub, research pages, and recent posts to confirm availability, cadence, and focus. Updated September 2025 with the latest archives and program pages. Key official references: Bankless newsletter hub; The Defiant newsletter page; Messari newsletter hub and Unqualified Opinions pages; Delphi Digital newsletter page and research site; Glassnode Week On-Chain hub and latest issue; Coin Metrics SOTN hub and archive; Kaiko research/newsletter hub and company site; CoinShares Fund Flows & Research hubs (US/global) and latest weekly example; Milk Road homepage and social proof; Lyn Alden newsletter/archive pages and 2025 issues.

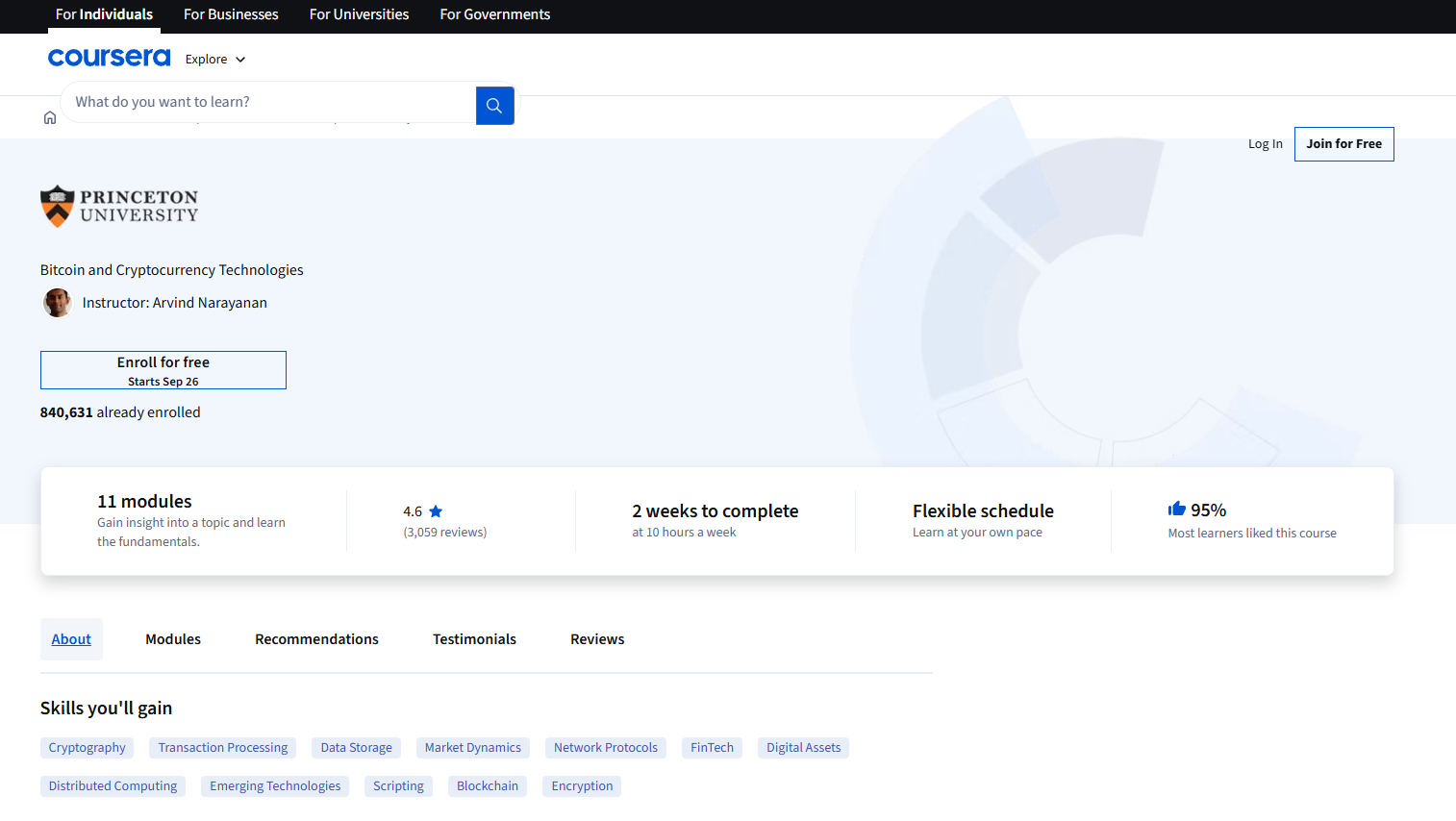

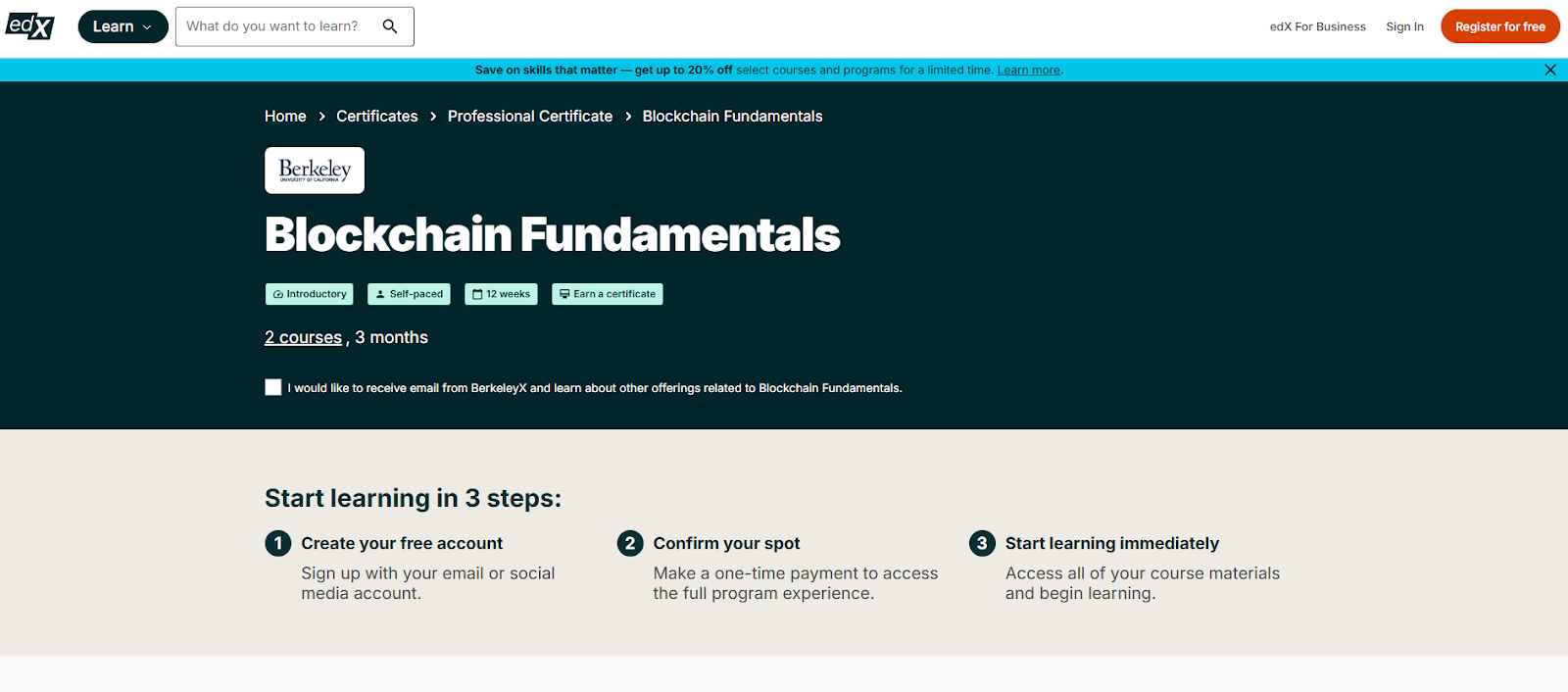

Crypto moves fast—and the gap between hype and real skills can be costly. If you’re evaluating the best crypto courses or structured paths to go from zero to fluent (or from power user to builder), the right program can compress months of trial-and-error into weeks. In short: a crypto education platform is any structured program, course catalog, or academy that teaches blockchain, Web3, or digital-asset topics with clear outcomes (e.g., literacy, developer skills, startup readiness).

This guide curates 10 credible options across beginner literacy, smart-contract engineering, and founder tracks. We blend SERP research with hands-on criteria so you can match a course to your goals, time, and budget—without the fluff.

Data sources: official provider pages (program docs, security/FAQ, curriculum), plus widely cited market datasets for cross-checks only. Last updated September 2025.

This article is for research/education, not financial advice.

What’s the fastest way to start learning crypto in 2025?

Start with a free literacy hub (Binance Academy or Coinbase Learn), then audit a university course (Coursera/edX) before committing to a paid bootcamp. This builds intuition and saves money.

Which course is best if I want to become a Solidity developer?

Alchemy University is a free, hands-on path with in-browser coding; ConsenSys Academy adds mentor-led structure and team projects for professional polish.

Do I need a formal degree for crypto careers?

Not strictly. A portfolio of projects often trumps certificates, but formal programs like UNIC’s MSc can help for policy, compliance, or academia-adjacent roles.

Are these programs global and online?

Most are fully online and globally accessible; accelerators like a16z CSX may run cohorts in specific cities, so check the latest cohort details.

Will these courses cover wallet and security best practices?

University and dev bootcamps typically include security modules; literacy hubs also publish safety guides. Always cross-check with official docs and practice on testnets.

If your goal is literacy and safe onboarding, start with Binance Academy or Coinbase Learn; for academic depth, layer in Coursera (Princeton) or edX (Berkeley). Builders should choose Alchemy University (free) and consider ConsenSys Academy for mentor-led polish. For credentials, UNIC stands out. Founders ready to ship and raise should explore a16z Crypto’s CSX.

We verified each provider’s official pages for curriculum, format, and access. Third-party datasets were used only to cross-check prominence. Updated September 2025.

The flood of information in crypto makes trusted voices indispensable. The top crypto influencers of 2025 help you filter noise, spot narratives early, and pressure-test ideas across Twitter/X, YouTube, and TikTok. This guide ranks the most useful creators and media brands for research, education, and market awareness, whether you’re an individual investor, a builder, or an institution.

Definition: A crypto influencer, or key opinion leader (KOL), is a creator or publication with outsized reach and demonstrated ability to shape attention, educate audiences, and surface on-chain or market insights. We emphasize track record, transparency, and multi-platform presence.

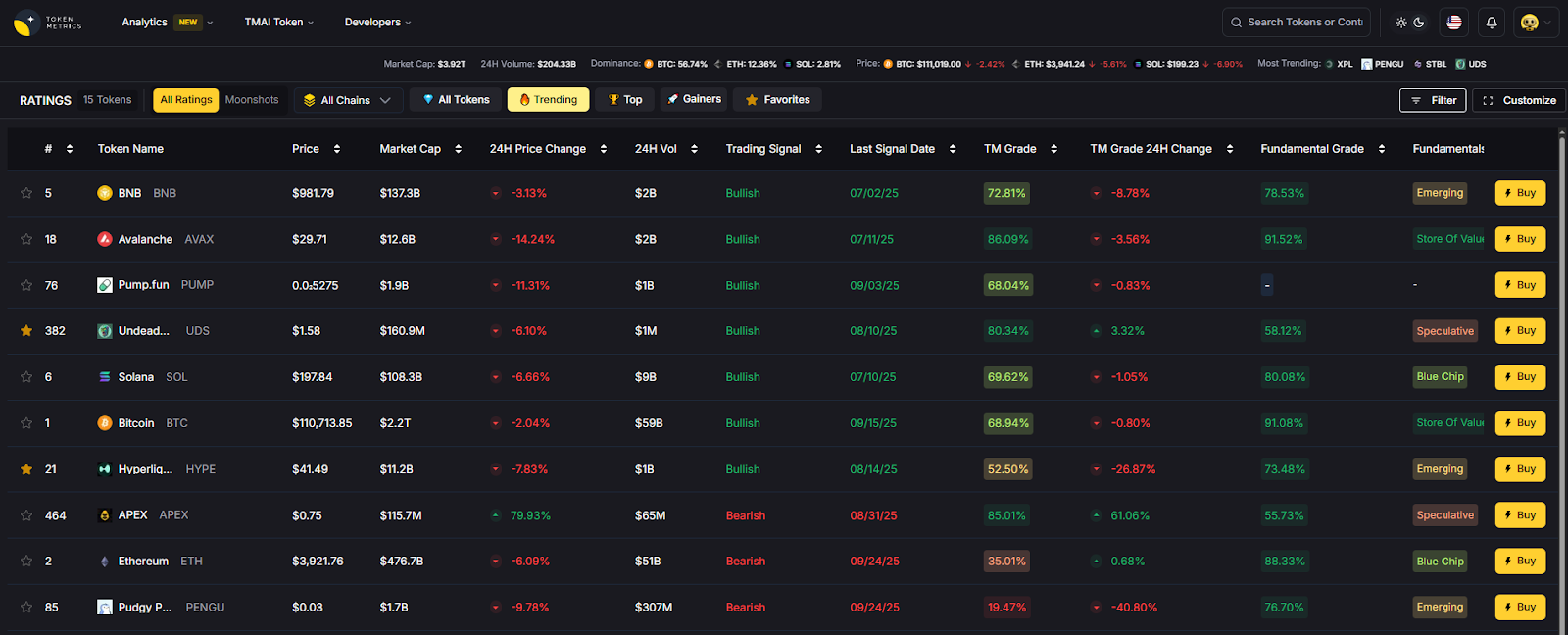

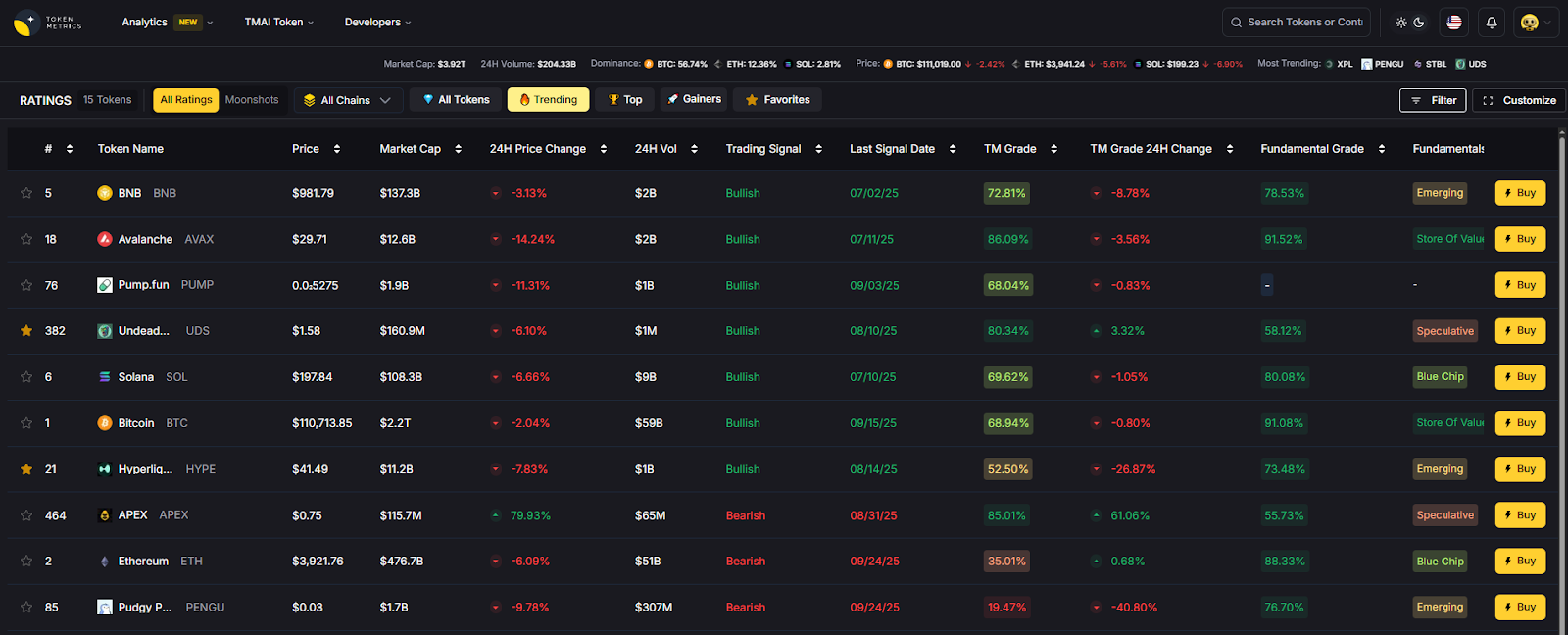

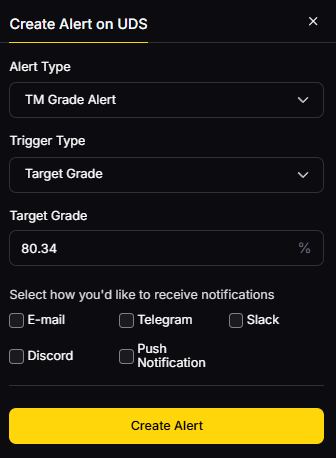

Why Use It: Token Metrics combines human analysts with AI ratings and on-chain/quant models, packaging insights via YouTube shows, tutorials, and research articles. The mix of data-driven screening and narrative detection makes it a strong daily driver for both retail and pro users.

Best For: Retail investors, swing traders, token research teams, and institutions seeking systematic signals.

Notable Features: AI Ratings & Signals; narrative heat detection; portfolio tooling; explainers and live shows.

Fees Notes: Free videos/reports; paid analytics tiers available.

Regions: Global.

Alternatives: Coin Bureau, Bankless.

Why Use It: Coin Bureau's Guy and team are known for accessible, well-structured education across tokens, tech, and regulation, ideal for learning fast without sensationalism. Their site and channel organize guides, analysis, and “what to know before you invest” content.

Best For: Beginners, researchers, compliance-minded readers.

Notable Features: Long-form explainers; project primers; timely macro/market narratives.

Fees Notes: Content is free; optional merchandise/membership.

Regions: Global.

Alternatives: Finematics, Token Metrics.

Why Use It: Bankless blends interviews with founders and policymakers, DeFi primers, and a consistent macro lens. The podcast + YouTube combo and a busy newsletter make it a top “frontier finance” feed.

Best For: Builders, protocol teams, power users.

Notable Features: Deep interviews; airdrop and ecosystem roundups; policy/regulatory conversations.

Fees Notes: Many resources free; paid tiers/newsletters optional.

Regions: Global.

Alternatives: The Defiant (news), Coin Bureau.

Why Use It: The Arnold brothers of Altcoin Daily deliver high-frequency coverage of market movers, narratives, and interviews, helping you catch headlines and sentiment shifts quickly. Their channel is among the most active for crypto news.

Best For: News-driven traders, general crypto audiences.

Notable Features: Daily videos; interviews; quick market takes.

Fees Notes: Free content; affiliate links may appear with disclosures.

Regions: Global.

Alternatives: Crypto Banter, Token Metrics.

Why Use It: Crypto Banter runs a live, broadcaster-style format covering Bitcoin, altcoins, and breaking news, with recurring hosts and trader segments. The emphasis is on real-time updates and community participation.

Best For: Intraday watchers, momentum traders, community-driven learning.

Notable Features: Daily live streams; trader panels; market reaction shows.

Fees Notes: Free livestreams; education and partners disclosed on site.

Regions: Global.

Alternatives: Altcoin Daily, Token Metrics.

Why Use It: Anthony Pompliano's (“Pomp”) daily show and interviews bridge crypto with broader finance and tech. He brings operators, investors, and policymakers into accessible conversations. New original programming on X complements his long-running podcast.

Best For: Executives, allocators, macro-minded audiences.

Notable Features: Daily investor letter; interviews; X-native programming.

Fees Notes: Free content; newsletter and media subscriptions optional.

Regions: Global.

Alternatives: Bankless, Token Metrics.

Why Use It: Finematics turns complex DeFi mechanics (AMMs, MEV, L2s) into crisp animations and threads, great for leveling up from novice to competent operator. The YouTube channel is a staple for concept mastery.

Best For: Students of DeFi, analysts, product managers.

Notable Features: Animated explainers; topical primers (MEV, EIPs); extra tutorials on site.

Fees Notes: Free videos; optional Patreon/course material.

Regions: Global.

Alternatives: Coin Bureau, Bankless.

Why Use It: Crypto Casey offers clear, approachable tutorials on wallets, security, and portfolio basics, with frequent refreshes for the latest best practices. Great first touch for friends and teammates new to crypto.

Best For: Beginners, educators, community managers.

Notable Features: Setup walk-throughs; safety tips; series for newcomers.

Fees Notes: Free channel; affiliate/sponsor disclosures in video descriptions.

Regions: Global.

Alternatives: Coin Bureau, Finematics.

Why Use It: Rekt Capital focuses on disciplined, cycle-aware technical analysis, especially for Bitcoin. The research newsletter and YouTube channel offer a consistent framework for understanding halving cycles, support/resistance, and macro phases.

Best For: Swing traders, long-term allocators, TA learners.

Notable Features: Cycle maps; weekly newsletters; educational modules.

Fees Notes: Free posts + paid tiers; clear membership options.

Regions: Global.

Alternatives: Willy Woo, Token Metrics.

Why Use It: A pioneer in on-chain analytics, Willy Woo popularized frameworks like NVT (Network Value to Transactions) and shares models and charts used widely by analysts. His work bridges on-chain data with macro narrative, useful when markets de-correlate from headlines.

Best For: Data-driven investors, quant-curious traders.

Notable Features: On-chain models; charts (e.g., NVT); newsletter The Bitcoin Forecast.

Fees Notes: Free charts; paid newsletter available.

Regions: Global.

Alternatives: Token Metrics (quant + AI), Rekt Capital.
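
For readers new to the NVT ratio mentioned above, a minimal sketch may help. NVT is commonly defined as network value (market capitalization) divided by the USD value transmitted on-chain per day. The function name and input figures below are hypothetical and chosen only for illustration. They are not real market data, and published NVT charts may use different data sources and smoothing.

```python
def nvt_ratio(market_cap_usd: float, daily_tx_volume_usd: float) -> float:
    """Return the NVT ratio: market cap divided by daily on-chain volume (both in USD)."""
    if daily_tx_volume_usd <= 0:
        # A zero or negative volume would make the ratio undefined.
        raise ValueError("daily_tx_volume_usd must be positive")
    return market_cap_usd / daily_tx_volume_usd


# Hypothetical inputs for illustration only (not real market data).
example = nvt_ratio(market_cap_usd=1_000_000_000_000,
                    daily_tx_volume_usd=20_000_000_000)
print(example)  # 50.0
```

Read loosely, a higher value means the network is valued richly relative to the value it settles on-chain, and a lower value means the opposite. Treat it as one input for cycle context, not a standalone signal.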

This article is for research/education, not financial advice.

What’s the fastest way to use this list?

Pick one education-first creator (Coin Bureau or Crypto Casey) and one market-first feed (Token Metrics, Bankless, or Altcoin Daily). Use Token Metrics to validate ideas before you act.

Are these KOLs region-restricted?

Content is generally global, though some platforms may geo-restrict features or embeds. Always follow local rules for trading and taxes. (Check each creator’s site/channel for access details.)

Who’s best for on-chain metrics?

Willy Woo popularized several on-chain valuation approaches and maintains public charts on Woobull/WooCharts, useful for cycle context.

I’m brand-new—where should I start?

Crypto Casey and Coin Bureau offer step-by-step explainers; then layer in Token Metrics for AI-assisted idea validation and alerts.

How do I avoid shill content?

Look for disclosures, independent verification, and multiple sources. Cross-check KOL mentions with Token Metrics’ ratings and narratives before allocating.

KOLs are force multipliers when you pair them with your own process. Start with one education channel and one market channel, then layer Token Metrics to validate and monitor. Over time, you’ll recognize which voices best fit your strategy.

We verified identities, formats, and focus areas using official sites, channels, and about pages; scale and programming notes were cross-checked with publicly available profiles and posts. Updated September 2025.

Willy Woo: Woobull, WooCharts, and NVT page.