Top Crypto Trading Platforms in 2025

Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend and getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints packed with powerful AI-driven insights right into your lap, including:

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.

Shiba Inu operates as a community-driven meme token where price action stems primarily from social sentiment, attention cycles, and speculative trading rather than fundamental value drivers. SHIB exhibits extreme volatility with no defensive characteristics or revenue-generating mechanisms typical of utility tokens. The Token Metrics scenarios below provide technical price predictions across different market cap environments, though meme tokens correlate more strongly with viral trends and community engagement than with systematic market cap models. Positions in SHIB should be sized as high-risk speculative bets with potential for total loss.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity. For meme tokens, actual outcomes depend heavily on social trends and community momentum beyond what market cap models capture.

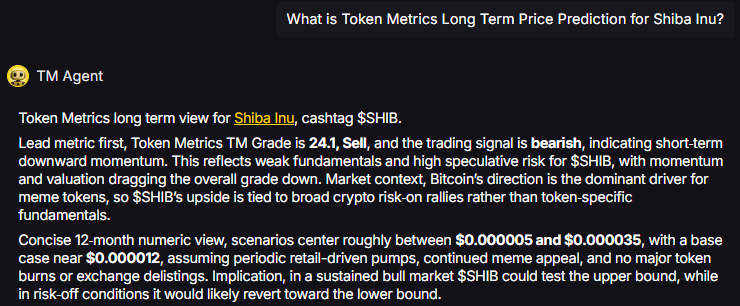

TM Agent baseline: Token Metrics TM Grade is 24.1%, Sell, with a bearish trading signal. The concise 12-month numeric view centers roughly between $0.000005 and $0.000035, with a base case near $0.000012.

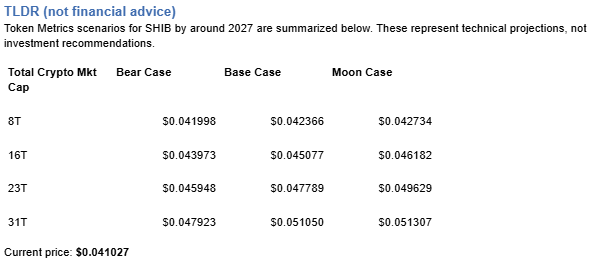

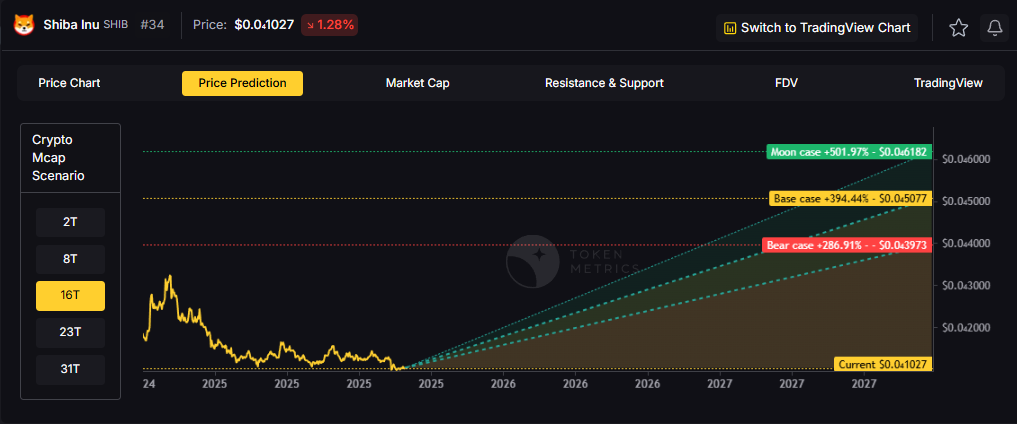

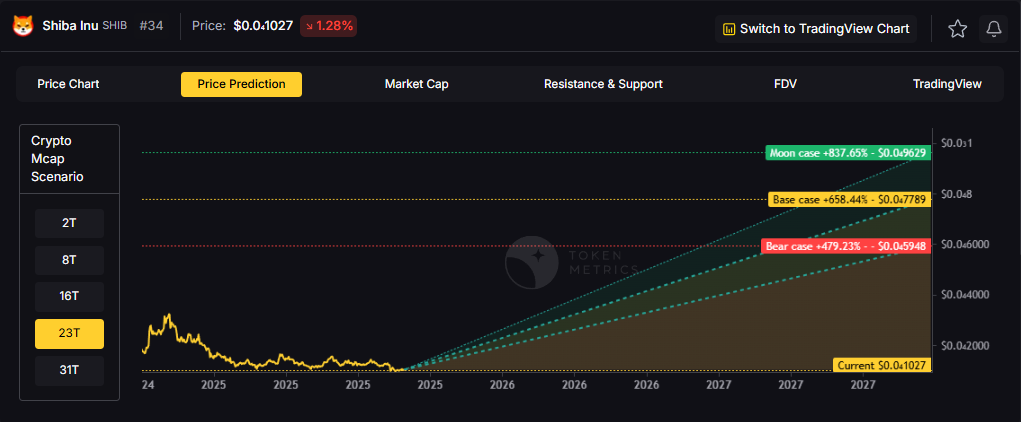

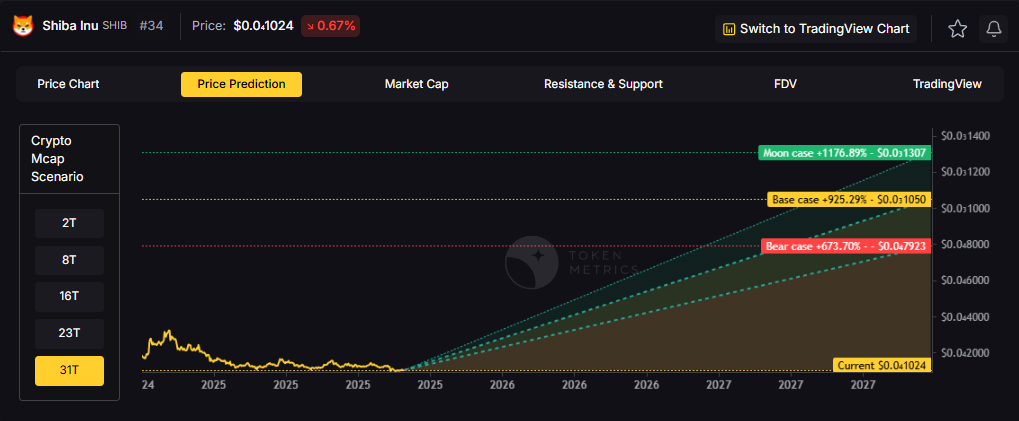

Token Metrics scenarios provide technical price bands across market cap tiers:

8T: At 8 trillion total crypto market cap, SHIB projects to $0.041998 (bear), $0.042366 (base), and $0.042734 (moon).

16T: At 16 trillion total crypto market cap, SHIB projects to $0.043973 (bear), $0.045077 (base), and $0.046182 (moon).

23T: At 23 trillion total crypto market cap, SHIB projects to $0.045948 (bear), $0.047789 (base), and $0.049629 (moon).

31T: At 31 trillion total crypto market cap, SHIB projects to $0.047923 (bear), $0.051050 (base), and $0.051307 (moon).

These technical ranges assume meme tokens maintain market cap share proportional to overall crypto growth. Actual outcomes for speculative tokens typically exhibit higher variance and stronger correlation to social trends than these models predict.

Shiba Inu is a meme-born crypto project that centers on community and speculative culture. Unlike utility tokens with specific use cases, SHIB operates primarily as a speculative asset and community symbol. The project focuses on community engagement and entertainment value.

SHIB has demonstrated viral moments and community loyalty within the broader meme token category. The token trades on community sentiment and attention cycles more than fundamentals. Market performance depends heavily on social media attention and broader meme coin cycles.

Token Metrics provides technical analysis, scenario math, and rigorous risk evaluation for hundreds of crypto tokens. Want to dig deeper? Explore our AI-powered ratings and scenario tools.

Will SHIB 10x from here?

Answer: At the current price of $0.041027, a 10x reaches $0.41027. This level does not appear in any of the listed bear, base, or moon scenarios across the 8T, 16T, 23T, or 31T tiers. Meme tokens can 10x rapidly during viral moments but can also lose 90%+ just as quickly. Position sizing for potential total loss is critical. Not financial advice.

What are the biggest risks to SHIB?

Answer: Primary risks include attention shifting to newer memes, community fragmentation, developer abandonment, regulatory crackdowns, and liquidity collapse during downturns. Unlike utility tokens with defensive characteristics, SHIB has zero fundamental floor. Price can approach zero if community interest disappears. Total loss is a realistic outcome. Not financial advice.

Next Steps

Disclosure

Educational purposes only, not financial advice. SHIB is a highly speculative asset with extreme volatility and high risk of total loss. Meme tokens operate as entertainment and gambling instruments rather than investments. Only allocate capital you can afford to lose entirely. Do your own research and manage risk appropriately.

Exchange tokens like WhiteBIT Coin offer leveraged exposure to overall market activity, creating concentration risk around a single platform's success. While WBT can deliver outsized returns during bull markets with high trading volumes, platform-specific risks like regulatory action, security breaches, or competitive displacement amplify downside exposure. Portfolio theory suggests balancing such concentrated bets with broader sector exposure.

The scenarios below show how WBT might perform across different crypto market cap environments. Rather than betting entirely on WhiteBIT Coin's exchange succeeding, diversified strategies blend exchange tokens with L1s, DeFi protocols, and infrastructure plays to capture crypto market growth while mitigating single-platform risk.

Portfolio theory teaches that diversification is the only free lunch in investing. WBT concentration violates this principle by tying your crypto returns to one protocol's fate. Token Metrics Indices blend WhiteBIT Coin with the top one hundred tokens, providing broad exposure to crypto's growth while smoothing volatility through cross-asset diversification. This approach captures market-wide tailwinds without overweighting any single point of failure.

Systematic rebalancing within index strategies creates an additional return source that concentrated positions lack. As some tokens outperform and others lag, regular rebalancing mechanically sells winners and buys laggards, exploiting mean reversion and volatility. Single-token holders miss this rebalancing alpha and often watch concentrated gains evaporate during corrections while index strategies preserve more gains through automated profit-taking.
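The mechanics of "sell winners, buy laggards" can be sketched in a few lines. This is a minimal illustration only, assuming a hypothetical two-token portfolio (`AAA`, `BBB`), made-up prices, and equal target weights; it is not the methodology Token Metrics Indices actually use, and it ignores fees and slippage.

```python
def rebalance(holdings, prices, target_weights):
    """Return the trade (in token units) that restores each target weight.

    Negative values mean "sell", positive values mean "buy".
    """
    values = {token: holdings[token] * prices[token] for token in holdings}
    total = sum(values.values())
    trades = {}
    for token, weight in target_weights.items():
        target_value = total * weight
        trades[token] = (target_value - values[token]) / prices[token]
    return trades


# Hypothetical portfolio that started at 50/50 before AAA rallied.
holdings = {"AAA": 10.0, "BBB": 100.0}
prices = {"AAA": 15.0, "BBB": 0.5}  # AAA is now worth 150, BBB only 50
trades = rebalance(holdings, prices, {"AAA": 0.5, "BBB": 0.5})

for token, units in trades.items():
    action = "sell" if units < 0 else "buy"
    print(f"{action} {abs(units):.2f} {token}")
```

The outperformer (`AAA`) is trimmed and the laggard (`BBB`) is topped up, so each ends at half of the portfolio value again. Whether that produces extra return depends on prices actually mean-reverting, which the sketch does not model.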

Beyond returns, diversified indices improve the investor experience by reducing emotional decision-making. Concentrated WBT positions subject you to severe drawdowns that trigger panic selling at bottoms. Indices smooth the ride through natural diversification, making it easier to maintain exposure through full market cycles. Get early access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.

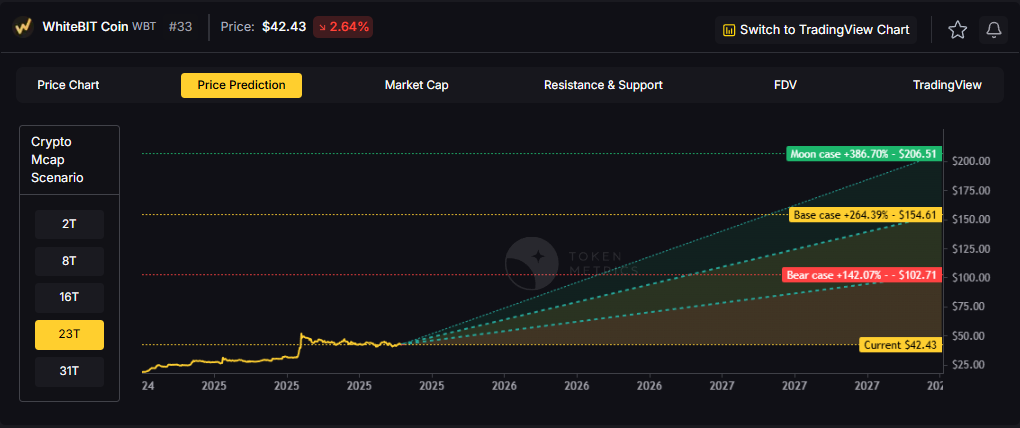

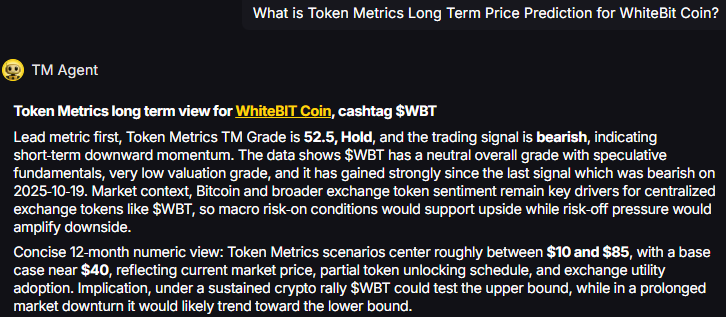

TM Agent baseline: Token Metrics' long-term view for WhiteBIT Coin ($WBT). The TM Grade is 52.5% (Hold), and the trading signal is bearish, indicating short-term downward momentum. Over a 12-month horizon, Token Metrics scenarios center roughly between $10 and $85, with a base case near $40.

Token Metrics scenarios span four market cap tiers, each representing different levels of crypto market maturity and liquidity:

8T: At an 8 trillion dollar total crypto market cap, WBT projects to $54.50 in bear conditions, $64.88 in the base case, and $75.26 in bullish scenarios.

16T: Doubling the market to 16 trillion expands the range to $78.61 (bear), $109.75 (base), and $140.89 (moon).

23T: At 23 trillion, the scenarios show $102.71 (bear), $154.61 (base), and $206.51 (moon).

31T: In the maximum liquidity scenario of 31 trillion, WBT could reach $126.81 (bear), $199.47 (base), or $272.13 (moon).

These ranges illustrate potential outcomes for concentrated WBT positions, but investors should weigh whether single-asset exposure matches their risk tolerance or whether diversified strategies better suit their objectives.

WhiteBIT Coin is the native exchange token associated with the WhiteBIT ecosystem. It is designed to support utility on the platform and related services.

WBT typically provides fee discounts and ecosystem benefits where supported. Usage depends on exchange activity and partner integrations.

Token Metrics AI provides comprehensive context on WhiteBIT Coin's positioning and challenges.

Vision: The stated vision for WhiteBIT Coin centers on enhancing user experience within the WhiteBIT exchange ecosystem by providing tangible benefits such as reduced trading fees, access to exclusive features, and participation in platform governance or rewards programs. It aims to strengthen user loyalty and engagement by aligning token holders’ interests with the exchange’s long-term success. While not positioned as a decentralized protocol token, its vision reflects a broader trend of exchanges leveraging tokens to build sustainable, incentivized communities.

Problem: Centralized exchanges often face challenges in retaining active users and differentiating themselves in a competitive market. Users may be deterred by high trading fees, limited reward mechanisms, or lack of influence over platform developments. WhiteBIT Coin aims to address these frictions by introducing a native incentive layer that rewards participation, encourages platform loyalty, and offers cost-saving benefits. This model seeks to improve user engagement and create a more dynamic trading environment on the WhiteBIT platform.

Solution: WhiteBIT Coin serves as a utility token within the WhiteBIT exchange, offering users reduced trading fees, staking opportunities, and access to special events such as token sales or airdrops. It functions as an economic lever to incentivize platform activity and user retention. While specific governance features are not widely documented, such tokens often enable voting on platform upgrades or listing decisions. The solution relies on integrating the token deeply into the exchange’s operational model to ensure consistent demand and utility for holders.

Market Analysis: Exchange tokens like WhiteBIT Coin operate in a competitive landscape led by established players such as Binance Coin (BNB) and KuCoin Token (KCS). While BNB benefits from a vast ecosystem including a launchpad, decentralized exchange, and payment network, WBT focuses on utility within its native exchange. Adoption drivers include the exchange’s trading volume, security track record, and the attractiveness of fee discounts and staking yields. Key risks involve regulatory pressure on centralized exchanges and competition from other exchange tokens that offer similar benefits.

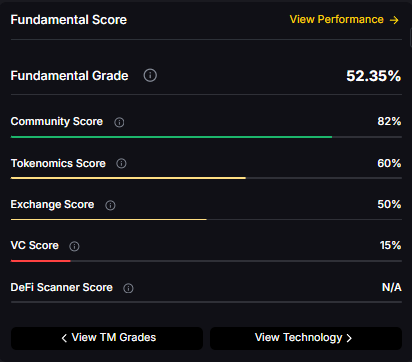

Fundamental Grade: 52.35% (Community 82%, Tokenomics 60%, Exchange 50%, VC —, DeFi Scanner N/A).

Can WBT reach $100?

Answer: Based on the scenarios, WBT first clears $100 in the 16T base case, which projects $109.75; the 16T bear case stays below that level at $78.61. Achieving this requires both broad market cap expansion and WhiteBIT Coin maintaining its competitive position. Not financial advice.
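The same check can be run against the full scenario table. The sketch below simply encodes the WBT figures listed in this article and filters them by a target price; the names `WBT_SCENARIOS` and `scenarios_at_or_above` are illustrative, not part of any Token Metrics API.

```python
# Scenario prices (USD) as listed in this article, keyed by total crypto market cap tier.
WBT_SCENARIOS = {
    "8T": {"bear": 54.50, "base": 64.88, "moon": 75.26},
    "16T": {"bear": 78.61, "base": 109.75, "moon": 140.89},
    "23T": {"bear": 102.71, "base": 154.61, "moon": 206.51},
    "31T": {"bear": 126.81, "base": 199.47, "moon": 272.13},
}


def scenarios_at_or_above(target, scenarios=WBT_SCENARIOS):
    """List every (tier, case, price) whose projected price meets the target."""
    return [
        (tier, case, price)
        for tier, cases in scenarios.items()
        for case, price in cases.items()
        if price >= target
    ]


hits = scenarios_at_or_above(100.0)
for tier, case, price in hits:
    print(f"{tier} {case}: ${price:.2f}")
```

No 8T scenario reaches $100, and from 23T upward even the bear case does, which is why the answer hinges on total market cap expansion more than on the bear/base/moon split.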

What's the risk/reward profile for WBT?

Answer: Risk and reward span from $54.50 in the lowest bear case to $272.13 in the highest moon case. Downside risks include regulatory actions and competitive displacement, while upside drivers include expanding access and favorable macro liquidity. Concentrated positions amplify both tails, while diversified strategies smooth outcomes.

What gives WBT value?

Answer: WBT accrues value through fee discounts, staking rewards, access to special events, and potential participation in platform programs. Demand drivers include exchange activity, user growth, and security reputation. While these fundamentals matter, diversified portfolios capture value accrual across multiple tokens rather than betting on one protocol's success.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, concentration amplifies risk, and diversification is a fundamental principle of prudent portfolio construction. Do your own research and manage risk appropriately.

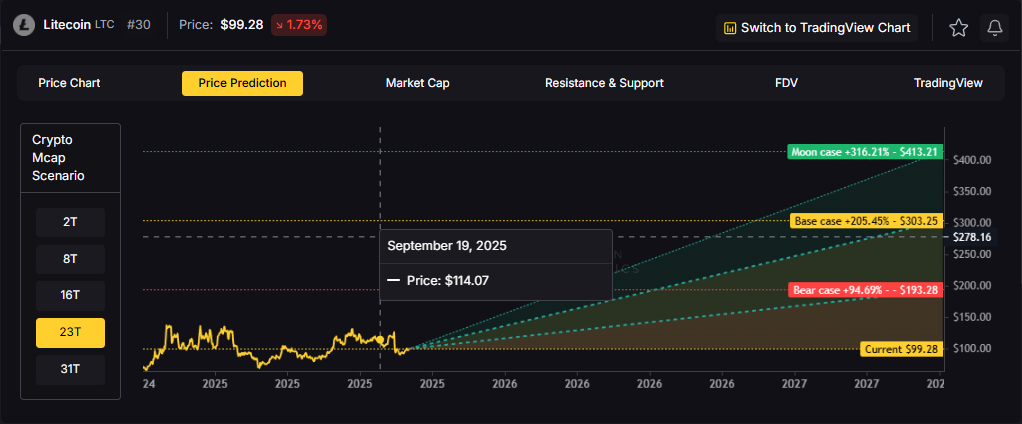

Layer 1 tokens capture value through transaction fees and miner economics. Litecoin processes blocks every 2.5 minutes using Proof of Work, targeting fast, low-cost payments. The scenarios below model LTC outcomes across different total crypto market sizes, reflecting network adoption and transaction volume.

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.

TM Agent baseline: Token Metrics scenarios center roughly between $35 and $160, with a base case near $75, assuming gradual adoption, occasional retail rotation into major alts, and no major network issues. In a broad crypto rally LTC could test the upper bound, while in risk-off conditions it would likely drift toward the lower bound.

Token Metrics scenarios span four market cap tiers reflecting different crypto market maturity levels:

8T: At an 8 trillion dollar total crypto market cap, LTC projects to $115.80 in bear conditions, $137.79 in the base case, and $159.79 in bullish scenarios.

16T: At 16 trillion, the range expands to $154.54 (bear), $220.52 (base), and $286.50 (moon).

23T: The 23 trillion tier shows $193.28 (bear), $303.25 (base), and $413.21 (moon).

31T: In the maximum liquidity scenario at 31 trillion, LTC reaches $232.03 (bear), $385.98 (base), or $539.92 (moon).

Litecoin is a peer-to-peer cryptocurrency launched in 2011 as an early Bitcoin fork. It uses Proof of Work with Scrypt and targets faster settlement, processing blocks roughly every 2.5 minutes with low fees.

LTC is the native token used for transaction fees and miner rewards. Its primary utilities are fast, low-cost payments and serving as a testing ground for Bitcoin-adjacent upgrades, with adoption in retail payments, remittances, and exchange trading pairs.

Token Metrics AI provides additional context on Litecoin's technical positioning and market dynamics.

Vision: Litecoin's vision is to serve as a fast, low-cost, and accessible digital currency for everyday transactions. It aims to complement Bitcoin by offering quicker settlement times and a more efficient payment system for smaller, frequent transfers.

Problem: Bitcoin's relatively slow block times and rising transaction fees during peak usage make it less ideal for small, frequent payments. This creates a need for a cryptocurrency that maintains security and decentralization while enabling faster and cheaper transactions suitable for daily use.

Solution: Litecoin addresses this by using a 2.5-minute block time and the Scrypt algorithm, which initially allowed broader participation in mining and faster transaction processing. It functions primarily as a payment-focused blockchain, supporting peer-to-peer transfers with low fees and high reliability, without the complexity of smart contract functionality.

Market Analysis: Litecoin operates in the digital payments segment of the cryptocurrency market, often compared to Bitcoin but positioned as a more efficient medium of exchange. While it lacks the smart contract capabilities of platforms like Ethereum or Solana, its simplicity, long-standing network security, and brand recognition give it a stable niche. It competes indirectly with other payment-focused cryptocurrencies like Bitcoin Cash and Dogecoin. Adoption is sustained by its integration across major exchanges and payment services, but growth is limited by the broader shift toward ecosystems offering decentralized applications.

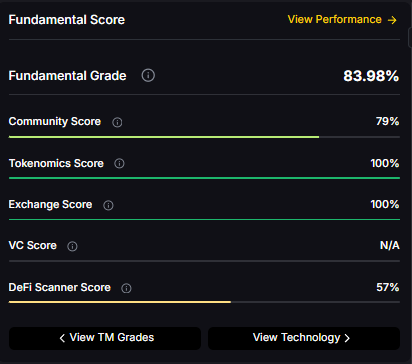

Fundamental Grade: 83.98% (Community 79%, Tokenomics 100%, Exchange 100%, VC —, DeFi Scanner 57%).

Technology Grade: 46.67% (Activity 51%, Repository 72%, Collaboration 60%, Security 20%, DeFi Scanner 57%).

For comprehensive Litecoin ratings, on-chain analysis, AI-powered price forecasts, and trading signals, go to Token Metrics.

What is LTC used for?

Answer: Primary use cases include fast peer-to-peer payments, low-cost remittances, and exchange settlement/liquidity pairs. LTC holders primarily pay transaction fees and support miner incentives. Adoption depends on active addresses and payment integrations.

What price could LTC reach in the moon case?

Answer: Moon case projections range from $159.79 at 8T to $539.92 at 31T. These scenarios require maximum market cap expansion and strong adoption dynamics. Not financial advice.

Next Steps

• Track live grades and signals: Token Details

Disclosure

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

FastAPI has become a go-to framework for teams that need production-ready, high-performance APIs in Python. It combines modern Python features, automatic type validation via pydantic, and ASGI-based async support to deliver low-latency endpoints. This post breaks down pragmatic patterns for building, testing, and scaling FastAPI services, with concrete guidance on performance tuning, deployment choices, and observability so you can design robust APIs for real-world workloads.

FastAPI is an ASGI framework that emphasizes developer experience and runtime speed. It generates OpenAPI docs automatically, enforces request/response typing, and integrates cleanly with async workflows. Compare FastAPI to traditional WSGI stacks (Flask, Django sync endpoints): FastAPI excels when concurrency and I/O-bound tasks dominate, and when you want built-in validation and schema-driven design.

Use-case scenarios where FastAPI shines:

FastAPI leverages async/await to let a single worker handle many concurrent requests when operations are I/O-bound. Key principles:

Performance tuning checklist:

FastAPI's dependency injection and pydantic models enable clear separation of concerns. Recommended practices:

Scenario analysis: for CPU-bound workloads (e.g., heavy data processing), prefer external workers or serverless functions. For high-concurrency I/O-bound workloads, carefully tuned async endpoints perform best.

Deploying FastAPI requires choices around containers, orchestration, and observability:

CI/CD tips: include a test matrix for schema validation, contract tests against OpenAPI, and canary deploys for backward-incompatible changes.

Build Smarter Crypto Apps & AI Agents with Token Metrics

Token Metrics provides real-time prices, trading signals, and on-chain insights all from one powerful API. Grab a Free API Key

FastAPI is a modern, ASGI-based Python framework focused on speed and developer productivity. It differs from traditional frameworks by using type hints for validation, supporting async endpoints natively, and automatically generating OpenAPI documentation.

Prefer async endpoints for I/O-bound operations like network calls or async DB drivers. If your code is CPU-bound, spawning background workers or using synchronous workers with more processes may be better to avoid blocking the event loop.
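The distinction can be illustrated with the standard library alone, without FastAPI. In this rough sketch, `fetch_quote` is a hypothetical stand-in for an awaitable network call and `crunch` is a stand-in for blocking work; neither comes from a real API.

```python
import asyncio
import time


async def fetch_quote(symbol: str) -> str:
    # Stand-in for an awaitable network call (hypothetical).
    await asyncio.sleep(0.05)
    return f"{symbol}:ok"


def crunch(n: int) -> int:
    # Stand-in for blocking work that would stall the event loop if run inline.
    return sum(i * i for i in range(n))


async def main():
    start = time.perf_counter()
    # I/O-bound: the three waits overlap on a single event loop.
    quotes = await asyncio.gather(*(fetch_quote(s) for s in ("BTC", "ETH", "LTC")))
    elapsed = time.perf_counter() - start

    # Blocking call: hand it to a worker thread so the loop stays responsive.
    total = await asyncio.to_thread(crunch, 10_000)
    return quotes, elapsed, total


quotes, elapsed, total = asyncio.run(main())
print(quotes, f"{elapsed:.3f}s", total)
```

The three simulated calls finish in roughly the time of one because their waits overlap. `asyncio.to_thread` only keeps the loop free; for genuinely CPU-heavy Python code, a process pool or an external worker queue is usually the better fit, as the paragraph above suggests.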

There is no one-size-fits-all worker count. Start with the CPU core count as a baseline and adjust based on latency and throughput measurements. For async I/O-bound workloads, fewer workers with higher concurrency can be more efficient; for blocking workloads, increase the worker count or externalize tasks.

Enforce strong input validation with pydantic, use HTTPS, validate and sanitize user data, implement authentication and authorization (OAuth2, JWT), and apply rate limiting and request size limits at the gateway.

Use TestClient from FastAPI for unit and integration tests, mock external dependencies, write contract tests against OpenAPI schemas, and include load tests in CI to catch performance regressions early.

This article is for educational purposes only. It provides technical and operational guidance for building APIs with FastAPI and does not constitute professional or financial advice.

APIs are the connective tissue of modern software. Testing them thoroughly prevents regressions, ensures predictable behavior, and protects downstream systems. This guide breaks API testing into practical steps, frameworks, and tool recommendations so engineers can build resilient interfaces and integrate them into automated delivery pipelines.

API testing verifies that application programming interfaces behave according to specification: returning correct data, enforcing authentication and authorization, handling errors, and performing within expected limits. Unlike UI testing, API tests focus on business logic, data contracts, and integration between systems rather than presentation. Well-designed API tests are fast, deterministic, and suitable for automation, enabling rapid feedback in development workflows.

Effective strategies balance scope, speed, and confidence. A common model is the testing pyramid: many fast unit tests, a moderate number of integration and contract tests, and fewer end-to-end or performance tests. Core elements of a robust strategy include:

Tooling choices depend on protocols (REST, GraphQL, gRPC) and language ecosystems. Common tools and patterns include:

Automation should be baked into CI/CD pipelines: run unit and contract tests on pull requests, integration tests on feature branches or merged branches, and schedule performance/security suites on staging environments. Observability during test runs—collecting metrics, logs, and traces—helps diagnose flakiness and resource contention faster.

AI-driven analysis can accelerate test coverage and anomaly detection by suggesting high-value test cases and highlighting unusual response patterns. For teams that integrate external data feeds into their systems, services that expose robust, real-time APIs and analytics can be incorporated into test scenarios to validate third-party integrations under realistic conditions. For example, Token Metrics offers datasets and signals that can be used to simulate realistic inputs or verify integrations with external data providers.

Unit tests isolate individual functions or routes using mocks and focus on internal logic. Integration tests exercise multiple components together (for example service + database) to validate interaction, data flow, and external dependencies.

Run lightweight load tests during releases and schedule comprehensive performance runs on staging before major releases or after architecture changes. Frequency depends on traffic patterns and how often critical paths change.

AI can suggest test inputs, prioritize test cases by risk, detect anomalies in responses, and assist with test maintenance through pattern recognition. Treat AI as a productivity augmenter that surfaces hypotheses requiring engineering validation.

Contract testing ensures providers and consumers agree on the API contract (schemas, status codes, semantics). It reduces integration regressions by failing early when expectations diverge, enabling safer deployments in distributed systems.

Use deterministic fixtures, isolate test databases, anonymize production data when necessary, seed environments consistently, and prefer schema or contract assertions to validate payload correctness rather than brittle value expectations.
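A schema-style assertion can be as small as the sketch below. The contract (`EXPECTED_SCHEMA`) and the payloads are hypothetical; a real suite would more likely validate against the provider's OpenAPI document or a dedicated schema library.

```python
# Hypothetical contract: field name -> expected Python type.
EXPECTED_SCHEMA = {"id": int, "symbol": str, "price": float}


def schema_violations(payload: dict, schema: dict) -> list[str]:
    """Return human-readable problems; an empty list means the shape matches."""
    problems = []
    for field, expected_type in schema.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


good = {"id": 1, "symbol": "LTC", "price": 137.79}
bad = {"id": "1", "symbol": "LTC"}

print(schema_violations(good, EXPECTED_SCHEMA))
print(schema_violations(bad, EXPECTED_SCHEMA))
```

The test asserts on the shape of the response rather than on `price == 137.79`, so it keeps passing when the live value changes but fails as soon as a field disappears or changes type.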

Investigate root causes such as timing, external dependencies, or resource contention. Reduce flakiness by mocking unstable third parties, improving environment stability, adding idempotent retries where appropriate, and capturing diagnostic traces during failures.

This article is educational and technical in nature and does not constitute investment, legal, or regulatory advice. Evaluate tools and data sources independently and test in controlled environments before production use.

APIs power modern software by letting systems communicate without exposing internal details. Whether you're building an AI agent, integrating price feeds for analytics, or connecting wallets, understanding the core concept of an "API" — and the practical rules around using one — is essential. This article defines what an API is, explains common types, highlights evaluation criteria, and outlines best practices for secure, maintainable integrations.

API stands for Application Programming Interface. At its simplest, an API is a contract: a set of rules that lets one software component request data or services from another. The contract specifies available endpoints (or methods), required inputs, expected outputs, authentication requirements, and error semantics. APIs abstract implementation details so consumers can depend on a stable surface rather than internal code.

Think of an API as a menu in a restaurant: the menu lists dishes (endpoints), describes ingredients (parameters), and sets expectations for what arrives at the table (responses). Consumers don’t need to know how the kitchen prepares the dishes — only how to place an order.

APIs come in several architectural styles. The three most common today are:

Choosing a style depends on use case: REST for simple, cacheable resources; GraphQL for complex client-driven queries; gRPC/WebSocket for low-latency or streaming scenarios.

Documentation quality often determines integration time and reliability. When evaluating an API, check for:

For crypto and market data APIs, also verify the latency SLAs, the freshness of on‑chain reads, and whether historical data is available in a form suitable for research or model training.

APIs expose surface area; securing that surface is critical. Key practices include:

Security and resilience are especially important in finance and crypto environments where integrity and availability directly affect analytics and automated systems.

APIs are central to AI-driven research and crypto tooling. When integrating APIs into data pipelines or agent workflows, consider these steps:

AI models and agents can benefit from structured, versioned APIs that provide deterministic responses; integrating dataset provenance and schema validation improves repeatability in experiments.

An API is an interface that defines how two software systems communicate. It lists available operations, required inputs, and expected outputs so developers can use services without understanding internal implementations.

REST exposes fixed resource endpoints and relies on HTTP semantics. GraphQL exposes a flexible query language letting clients fetch precise fields in one request. REST favors caching and simplicity; GraphQL favors efficiency for complex client queries.

Confirm data freshness, historical coverage, authentication methods, rate limits, and the provider’s documentation. Also verify uptime, SLA terms if relevant, and whether the API provides proof or verifiable on‑chain reads for critical use cases.

Rate limits set a maximum number of requests per time window, often per API key or IP. Providers may return headers indicating remaining quota and reset time; implement exponential backoff and caching to stay within limits.
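Exponential backoff can be sketched as follows. The `RateLimited` exception and the `request` callable are assumptions standing in for whatever your HTTP client raises on a 429 response; the delays and jitter factor are illustrative defaults, not provider recommendations.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the (hypothetical) client when the provider answers HTTP 429."""


def call_with_backoff(request, max_attempts=5, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep):
    """Call request(), doubling the wait after each rate-limit error."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Small random jitter keeps many clients from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 10))
```

Passing `sleep` as a parameter makes the helper easy to test without real waiting. If the provider returns a reset-time header, waiting until that time is generally preferable to a blind doubling schedule.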

AI-driven research tools can summarize documentation, detect breaking changes, and suggest integration patterns. For provider-specific signals and token research, platforms like Token Metrics combine multiple data sources and models to support analysis workflows.

This article is educational and informational only. It does not constitute financial, legal, or investment advice. Readers should perform independent research and consult qualified professionals before making decisions related to finances, trading, or technical integrations.

Modern distributed systems rely on effective traffic control, security, and observability at the edge. An API gateway centralizes those responsibilities, simplifying client access to microservices and serverless functions. This guide explains what an API gateway does, common architectural patterns, deployment and performance trade-offs, and design best practices for secure, scalable APIs.

An API gateway is a server-side component that sits between clients and backend services. It performs request routing, protocol translation, aggregation, authentication, rate limiting, and metrics collection. Instead of exposing each service directly, teams present a single, consolidated API surface to clients through the gateway. This centralization reduces client complexity, standardizes cross-cutting concerns, and can improve operational control.

Think of an API gateway as a policy and plumbing layer: it enforces API contracts, secures endpoints, and implements traffic shaping while forwarding requests to appropriate services.

API gateways vary in capability but commonly include:

Common patterns include:

Choosing where and how to deploy an API gateway affects performance, resilience, and operational cost. Key models include:

Performance trade-offs to monitor:

Adopt practical rules to keep gateways maintainable and secure:

AI and analytics tools can accelerate gateway design and operating decisions by surfacing traffic patterns, anomaly detection, and vulnerability signals. For example, products that combine real-time telemetry with model-driven insights help prioritize which endpoints need hardened policies.

An API gateway and a service mesh complement rather than replace each other. The API gateway handles north-south traffic (client to cluster), enforcing authentication and exposing public endpoints. A service mesh focuses on east-west traffic (service-to-service), offering fine-grained routing, mTLS, and telemetry between microservices. Many architectures use a gateway at the edge and a mesh internally for granular control.

A gateway introduces processing overhead for each request, which can increase end-to-end latency. Mitigations include optimizing filters, enabling HTTP/2 multiplexing, using local caches, and scaling gateway instances horizontally.

A gateway is not always necessary. Small monoliths or single-service deployments may not require one. For microservices, public APIs, or multi-tenant platforms, a gateway adds value by centralizing cross-cutting concerns and simplifying client integrations.

At minimum, the gateway should enforce TLS, validate authentication tokens, apply rate limits, and perform input validation. Additional controls include IP allowlists, web application firewall (WAF) rules, and integration with identity providers for RBAC.
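Rate limiting is the most algorithmic of these controls. The sketch below uses a token bucket, one common approach among several; the class name and the injectable `now` parameter are illustrative choices made so the behavior can be tested without real sleeps.

```python
import time
from typing import Optional


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, now: Optional[float] = None):
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity  # start with a full bucket
        self.updated = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True and consume one token if the request may proceed."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill for the elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

In a gateway, one bucket would typically be kept per API key or client IP; a rejected request maps naturally to an HTTP 429 response.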

Yes, a gateway can aggregate responses. Aggregation reduces client round trips by composing responses from multiple backends. Use caching and careful error handling to avoid coupling the performance of one service to that of another.
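One way to keep a slow or failing backend from dragging down an aggregated response is to call backends concurrently with a per-call timeout and degrade to a partial result. This is a sketch under that assumption; `aggregate` and the two stand-in backends are hypothetical names.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict


async def aggregate(
    calls: Dict[str, Callable[[], Awaitable[Any]]],
    timeout: float = 1.0,
) -> Dict[str, Any]:
    """Call every backend concurrently; a slow or failing one yields None."""

    async def guarded(call: Callable[[], Awaitable[Any]]) -> Any:
        try:
            return await asyncio.wait_for(call(), timeout)
        except Exception:
            # Timeouts and backend errors degrade to a missing section
            # instead of failing the whole aggregated response.
            return None

    names = list(calls)
    results = await asyncio.gather(*(guarded(calls[n]) for n in names))
    return dict(zip(names, results))


# Stand-in backends for illustration only.
async def fetch_profile() -> dict:
    return {"name": "Ada"}


async def fetch_orders() -> list:
    raise RuntimeError("orders backend down")
```

Calling `asyncio.run(aggregate({"profile": fetch_profile, "orders": fetch_orders}))` returns the profile and `None` for orders, so the client can still render a partial view.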

Use a staging environment to run synthetic loads and functional tests against gateway policies. Store configurations in version control, run CI checks for syntax and policy conflicts, and roll out changes via canary deployments.

Managed gateways reduce operational overhead and provide scalability out of the box, while self-hosted gateways offer deeper customization and potentially lower long-term costs. Choose based on team expertise, compliance needs, and expected traffic patterns.

This article is for educational and technical information only. It does not constitute investment, legal, or professional advice. Readers should perform their own due diligence when selecting and configuring infrastructure components.

APIs are the connective tissue of modern applications; among them, RESTful APIs remain a dominant style because they map cleanly to HTTP semantics and scale well across distributed systems. This article breaks down what a RESTful API is, pragmatic design patterns, security controls, and practical monitoring and testing workflows. If you build or consume APIs, understanding these fundamentals reduces integration friction and improves reliability.

A RESTful API (Representational State Transfer) is an architectural style for designing networked applications. At its core, REST leverages standard HTTP verbs (GET, POST, PUT, PATCH, DELETE) and status codes to perform operations on uniquely identified resources, typically represented as URLs. Key characteristics include:

REST is a pragmatic guideline rather than a strict protocol; many APIs labeled "RESTful" adopt REST principles while introducing pragmatic extensions (e.g., custom headers, versioning strategies).

Good REST design begins with clear resource modeling. Ask: what are the nouns in the domain, and how do they relate? Use predictable URL structures and rely on HTTP semantics:

Design tips to improve usability and longevity:

Security is a primary concern for any public-facing API. Typical controls and patterns include:

Designing for security also means operational readiness: automated certificate rotation, secrets management, and periodic security reviews reduce long-term risk.

Performance tuning for RESTful APIs covers latency, throughput, and reliability. Practical strategies include caching (HTTP Cache-Control, ETags), connection pooling, and database query optimization. Use observability tools to collect metrics (error rates, latency percentiles), distributed traces, and structured logs for rapid diagnosis.
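As an illustration of ETag-based caching, the sketch below derives an ETag from the response body and answers a conditional GET with 304 when the client's copy is current. The function names and the 60-second `max-age` are assumptions for the example, and the `If-None-Match` handling is simplified to a single exact value (no lists, weak validators, or `*`).

```python
import hashlib
from typing import Dict, Optional, Tuple


def make_etag(body: bytes) -> str:
    """Derive an ETag from the response body (quoted, as HTTP requires)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def conditional_get(
    body: bytes, if_none_match: Optional[str]
) -> Tuple[int, Dict[str, str], bytes]:
    """Return (status, headers, payload) for a GET with an optional If-None-Match."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "max-age=60"}
    if if_none_match == etag:
        # The client's cached copy is still valid: send no body.
        return 304, headers, b""
    return 200, headers, body
```

The 304 path saves bandwidth but still costs a round trip; `Cache-Control` is what lets clients skip the request entirely while the entry is fresh.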

AI-assisted tools can accelerate many aspects of API development and operations: anomaly detection in request patterns, automated schema inference from traffic, and intelligent suggestions for endpoint design or documentation. While these tools improve efficiency, validate automated changes through testing and staged rollouts.

When selecting tooling, evaluate clarity of integrations, support for your API architecture, and the ability to export raw telemetry for custom analysis.

REST focuses on resources and uses HTTP semantics; GraphQL centralizes queries into a single endpoint with flexible queries, and gRPC emphasizes high-performance RPCs with binary protocols. Choose based on client needs, performance constraints, and schema evolution requirements.

Common approaches include URL versioning (e.g., /v1/), header-based versioning, or semantic versioning of the API contract. Regardless of method, document deprecation timelines and provide migration guides and compatibility layers where possible.

Combine unit tests for business logic with integration tests that exercise endpoints and mocks for external dependencies. Use contract tests to ensure backward compatibility and end-to-end tests in staging environments. Automate tests in CI/CD to catch regressions early.

Additive changes (new fields, endpoints) are generally safe; avoid removing fields, changing response formats, or repurposing status codes. Feature flags and content negotiation can help introduce changes progressively.

Provide clear endpoint descriptions, request/response examples, authentication steps, error codes, rate limits, and code samples in multiple languages. Machine-readable specs (OpenAPI/Swagger) enable client generation and testing automation.

Disclaimer: This content is educational and informational only. It does not constitute professional, legal, security, or investment advice. Test and validate any architectural, security, or operational changes in environments that match your production constraints before rollout.

The Claude API is increasingly used to build context-aware AI assistants, document summarizers, and conversational workflows. This guide breaks down what the API offers, integration patterns, capability trade-offs, and practical safeguards to consider when embedding Claude models into production systems.

The Claude API exposes access to Anthropic’s Claude family of large language models. At a high level, it lets developers send prompts and structured instructions and receive text outputs, completions, or assistant-style responses. Key delivery modes typically include synchronous completions, streaming tokens for low-latency interfaces, and tools for handling multi-turn context. Understanding input/output semantics and token accounting is essential before integrating Claude into downstream applications.

Claude models are designed for safety-focused conversational AI and often emphasize instruction following and helpfulness while applying content filters. Typical features to assess:

Designing a robust integration with the Claude API means balancing performance, cost, and safety. Practical guidance:

Claude API use cases span chat assistants, summarization, prompt-driven code generation, and domain-specific Q&A. For each area evaluate these risk vectors:

Tooling around Claude often mirrors other LLM APIs: HTTP/SDK clients, streaming libraries, and orchestration frameworks. Combine the Claude API with retrieval-augmented generation (RAG) systems, vector stores for semantic search, and lightweight caching layers. AI-driven research platforms such as Token Metrics can complement model outputs by providing analytics and signal overlays when integrating market or on-chain data into prompts.

The Claude API is an interface for sending prompts and receiving text-based model outputs from the Claude family. It supports completions, streaming responses, and multi-turn conversations, depending on the provider’s endpoints.

Implement a retrieval-augmented generation (RAG) approach: index documents into a vector store, use semantic search to fetch relevant segments, and summarize or stitch results before sending a concise prompt to Claude. Also consider chunking and progressive summarization when documents exceed context limits.
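The chunking step can be sketched without any model or vector store. The helper below splits text into overlapping word windows; counting words instead of model tokens is a simplification, and the default sizes are arbitrary assumptions to tune per model and corpus.

```python
from typing import List


def chunk_words(text: str, size: int = 200, overlap: int = 20) -> List[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk, at the cost of indexing some words twice.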

Optimize prompts to be concise, cache common responses, batch non-interactive requests, and choose lower-capacity model variants for non-critical tasks. Monitor token usage and set alerts for unexpected spikes.

Combine Claude’s built-in safety mechanisms with application-level filters, content validation, and human review workflows. Avoid sending regulated or sensitive data without proper agreements and minimize reliance on unverified outputs.

Use streaming for interactive chat interfaces where perceived latency matters. Batch completions are suitable for offline processing, analytics, and situations where full output is required before downstream steps.

This article is for educational purposes only and does not constitute professional, legal, or financial advice. It explains technical capabilities and integration considerations for the Claude API without endorsing specific implementations. Review service terms, privacy policies, and applicable regulations before deploying AI systems in production.

Every modern integration, from a simple weather widget to a crypto analytics agent, relies on API credentials to authenticate requests. An API key is one of the simplest and most widely used credentials, but simplicity invites misuse. This article explains what an API key is, how it functions, practical security patterns, and how developers can manage keys safely in production.

An API key is a short token issued by a service to identify and authenticate an application or user making an HTTP request. Unlike full user credentials, API keys are typically static strings passed in a header, a query parameter, or the request body. On the server side, the receiving API validates the key against its database, checks permissions and rate limits, and then either serves the request or rejects it.

Technically, API keys are a form of bearer token: possession of the key is sufficient to access associated resources. Because they do not necessarily carry user-level context or scopes by default, many providers layer additional access-control mechanisms (scopes, IP allowlists, or linked user tokens) to reduce risk.

API keys are popular because they are easy to generate and integrate: you create a key in a dashboard and paste it into your application. Typical use cases include server-to-server integrations, analytics pulls, and third-party widgets. In crypto and AI applications, keys often control access to market data, trading endpoints, or model inference APIs.

Limitations: API keys alone lack strong cryptographic proof of origin (compared with signed requests), are vulnerable if embedded in client-side code, and remain exposed for longer if they are never rotated. For higher-security scenarios, consider combining keys with stronger authentication approaches like OAuth 2.0, mutual TLS, or request signing.
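Request signing, one of the stronger options mentioned above, can be sketched with an HMAC over the request details. The message layout (method, path, timestamp, body joined by newlines) and the 300-second skew window are assumptions for illustration; real providers each define their own canonical format.

```python
import hashlib
import hmac


def sign_request(secret: bytes, method: str, path: str, timestamp: int, body: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the request details."""
    message = b"\n".join(
        [method.upper().encode(), path.encode(), str(timestamp).encode(), body]
    )
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(
    secret: bytes,
    method: str,
    path: str,
    timestamp: int,
    body: bytes,
    signature: str,
    now: int,
    max_skew: int = 300,
) -> bool:
    """Reject stale timestamps, then compare signatures in constant time."""
    if abs(now - timestamp) > max_skew:
        return False  # too old or too far in the future: possible replay
    expected = sign_request(secret, method, path, timestamp, body)
    return hmac.compare_digest(expected, signature)
```

Unlike a bare key, the secret never travels with the request, and a captured signature is only useful for the exact request and time window it was computed for.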

Secure handling of API keys reduces the chance of leaks and abuse. Key best practices include:

These patterns are practical to implement: for example, many platforms offer scoped keys and rotation APIs so you can automate revocation and issuance without manual intervention.

Crypto data feeds, trading APIs, and model inference endpoints commonly require API keys. In these contexts, the attack surface often includes automated agents, cloud functions, and browser-based dashboards. Treat any key embedded in an agent as potentially discoverable and design controls accordingly.

Operational tips for crypto and AI projects:

Platforms such as Token Metrics provide APIs tailored to crypto research and can be configured with scoped keys for safe consumption in analytics pipelines and AI agents.

An API key is a token that applications send with requests to identify and authenticate themselves to a service. It is often used for simple authentication, usage tracking, and applying access controls such as rate limits.

Store API keys outside of code: use environment variables, container secrets, or a managed secrets store. Ensure access to those stores is role-restricted and audited. Never commit keys to public repositories or client-side bundles.
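A minimal sketch of the environment-variable approach follows. The variable name `EXAMPLE_API_KEY` and both helper names are hypothetical; the point is to fail fast when the key is missing and to avoid ever logging it in full.

```python
import os


class MissingKeyError(RuntimeError):
    """Raised when the expected environment variable is absent or empty."""


def load_api_key(var_name: str = "EXAMPLE_API_KEY") -> str:
    """Read the key from the environment instead of hard-coding it."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise MissingKeyError(f"environment variable {var_name} is not set")
    return key


def redact(key: str, visible: int = 4) -> str:
    """Return a log-safe form that shows only the last few characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```

Logging `redact(key)` instead of the key itself keeps enough of the value to tell keys apart during debugging without leaking the credential.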

API keys are static identifiers primarily for application-level authentication. OAuth tokens represent delegated user authorization and often include scopes and expiration. OAuth is generally more suitable for user-centric access control, while API keys are common for machine-to-machine interactions.

Rotation frequency depends on risk tolerance and exposure: a common pattern is scheduled rotation every 30–90 days, with immediate rotation upon suspected compromise. Automate the rotation process to avoid service interruptions.

Watch for abnormal usage patterns: sudden spikes in requests, calls from unexpected IPs or geographic regions, attempts to access endpoints outside expected scopes, or errors tied to rate-limit triggers. Configure alerts for such anomalies.

Many providers allow IP allowlisting or referrer restrictions. This reduces the attack surface by ensuring keys only work from known servers or client domains. Use this in combination with short lifetimes and least-privilege scopes.
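On the provider side, an IP allowlist check amounts to testing the caller's address against a set of networks. The sketch below uses Python's standard `ipaddress` module; the function name and the idea of passing the allowlist as CIDR strings are assumptions for illustration.

```python
import ipaddress
from typing import Iterable


def is_allowed(client_ip: str, allowlist: Iterable[str]) -> bool:
    """Return True if client_ip falls inside any allowlisted address or CIDR range."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # malformed addresses are rejected, not guessed at
    return any(
        addr in ipaddress.ip_network(cidr, strict=False) for cidr in allowlist
    )
```

For example, `is_allowed("10.0.0.5", ["10.0.0.0/24"])` accepts the caller, while an address outside the range or an unparsable string is refused.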

AI agents that call external services should use securely stored keys injected at runtime. Limit their permissions to only what the agent requires, rotate keys regularly, and monitor agent activity to detect unexpected behavior.

This article is educational and informational in nature. It is not investment, legal, or security advice. Evaluate any security approach against your project requirements and consult qualified professionals for sensitive implementations.

Location data powers modern products: discovery, logistics, analytics, and personalized experiences all lean on accurate mapping services. The Google Maps API suite is one of the most feature-rich options for embedding maps, geocoding addresses, routing vehicles, and enriching UX with Places and Street View. This guide breaks the platform down into practical sections—what each API does, how to get started securely, design patterns to control costs and latency, and where AI can add value.

The Maps Platform is modular: you enable only the APIs and SDKs your project requires. Key components include:

Each API exposes different latency, quota, and billing characteristics. Plan around the functional needs (display vs. heavy batch geocoding vs. real-time routing).

Begin in the Google Cloud Console: create or select a project, enable the specific Maps Platform APIs your app requires, and generate an API key. Key operational steps:

Authentication and identity management are foundational—wider access means higher risk of unexpected charges and data leakage.

Successful integrations optimize performance, cost, and reliability. Consider these patterns:

The Maps Platform uses a pay-as-you-go model with billing tied to API calls, SDK sessions, or map loads depending on the product. To control costs:

Budgeting requires monitoring real usage patterns and aligning product behavior (e.g., map refresh frequency) with cost objectives.

Combining location APIs with machine learning unlocks advanced features: predictive ETA models, demand heatmaps, intelligent geofencing, and dynamic routing that accounts for historic traffic patterns. AI models can also enrich POI categorization from Places API results or prioritize search results based on user intent.

For teams focused on research and signals, AI-driven analytical tools can help surface patterns from large location datasets, cluster user behavior, and integrate external data feeds for richer context. Tools built for crypto and on-chain analytics illustrate how API-driven datasets can be paired with models to create actionable insights in other domains—similarly, map and location data benefit from model-driven enrichment that remains explainable and auditable.

Google offers a free usage tier and a recurring monthly credit for Maps Platform customers. Beyond the free allocation, usage is billed based on API calls, map loads, or SDK sessions. Monitor your project billing and set alerts to avoid unexpected charges.

The Places API provides address and place autocomplete features tailored for UX-focused address entry. For server-side address validation or bulk geocoding, pair it with Geocoding APIs and implement server-side caching.
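Server-side caching for geocoding can be as simple as normalizing the address and memoizing the result. In this sketch the `geocode` callable is a stand-in for whatever client actually calls the Geocoding API, and the in-memory dictionary is an assumption; check the Maps Platform terms for how long results may be stored before adopting a persistent cache.

```python
from typing import Callable, Dict, Tuple

Coordinates = Tuple[float, float]


def normalize(address: str) -> str:
    """Collapse case and whitespace so equivalent inputs share one cache entry."""
    return " ".join(address.lower().split())


class CachedGeocoder:
    """Wrap a geocoding function with an in-memory cache keyed by normalized address."""

    def __init__(self, geocode: Callable[[str], Coordinates]):
        self._geocode = geocode  # stand-in for a real API client call
        self._cache: Dict[str, Coordinates] = {}
        self.misses = 0  # number of billable upstream calls made

    def lookup(self, address: str) -> Coordinates:
        key = normalize(address)
        if key not in self._cache:
            self.misses += 1
            self._cache[key] = self._geocode(key)
        return self._cache[key]
```

Tracking `misses` gives a direct view of how many upstream calls the cache is saving.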

Apply application restrictions (HTTP referrers for web, package name & SHA-1 for Android, bundle ID for iOS) and limit the key to only the required APIs. Rotate keys periodically, keep production keys out of source control, and avoid shipping unrestricted keys in client-side code.

Yes—the Directions and Distance Matrix APIs support routing and travel-time estimates. For large-scale fleet optimization, consider server-side batching, rate-limit handling, and hybrid solutions that combine routing APIs with custom optimization logic to manage complexity and cost.

Common issues include unbounded API keys, lack of caching for geocoding, excessive map refreshes that drive costs, and neglecting offline/mobile behavior. Planning for quotas, testing under realistic loads, and instrumenting telemetry mitigates these pitfalls.

This article is for educational and technical information only. It does not constitute financial, legal, or professional advice. Evaluate features, quotas, and pricing on official Google documentation and consult appropriate professionals for specific decisions.

Discord's API is the backbone of modern community automation, moderation, and integrations. Whether you're building a utility bot, connecting an AI assistant, or streaming notifications from external systems, understanding the Discord API's architecture, constraints, and best practices helps you design reliable, secure integrations that scale.

The Discord API exposes two main interfaces: the Gateway (a persistent WebSocket) for real-time events and the REST API for one-off requests such as creating messages, managing channels, and configuring permissions. Together they let developers build bots and services that respond to user actions, post updates, and manage server state.

Key concepts to keep in mind:

Authentication is based on tokens. Bots use a bot token (issued in the Discord Developer Portal) to authenticate both the Gateway and REST calls. When building or auditing a bot, treat tokens like secrets: rotate them when exposed and store them securely in environment variables or a secrets manager.

Intents let you opt in to categories of events. For example, message content intent is required to read message text in many cases. Use the principle of least privilege: request only the intents you need to reduce data exposure and improve performance.

Practical steps:

Rate limits are enforced per route, with an additional global limit. Familiarize yourself with the headers returned by the REST API (X-RateLimit-Limit, X-RateLimit-Remaining, X-RateLimit-Reset) and adopt respectful retry strategies. For Gateway connections, avoid rapid reconnects; follow exponential backoff and obey the recommended identify rate limits.
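As a sketch of how a client might act on those headers, the helper below computes how long to wait before the next call on a route. It assumes `X-RateLimit-Reset` carries the reset time as epoch seconds and that headers arrive as a plain string mapping; confirm both against the current Discord documentation before relying on them.

```python
from typing import Mapping


def seconds_until_retry(headers: Mapping[str, str], now: float) -> float:
    """Return how long to wait before the next request on this route."""
    remaining = int(headers.get("X-RateLimit-Remaining", "1"))
    if remaining > 0:
        return 0.0  # budget left in this window: no need to wait
    # Assumed to be epoch seconds; fall back to "now" if the header is absent.
    reset_at = float(headers.get("X-RateLimit-Reset", now))
    return max(0.0, reset_at - now)
```

Most client libraries implement this bookkeeping internally, so a hand-rolled version is mainly useful for custom HTTP clients or for understanding library behavior.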

Design patterns to improve resilience:

Webhooks are lightweight for sending messages into channels without a bot token and are excellent for notifications from external systems. Interactions and slash commands provide structured, discoverable commands that integrate naturally into the Discord UI.

Best practices when using webhooks and interactions:

Security goes beyond token handling. Consider these areas:

Combining Discord bots with AI or external data APIs can produce helpful automation, moderation aids, or analytics dashboards. When integrating, separate concerns: keep the Discord-facing layer thin and stateless where possible, and offload heavy processing to dedicated services.

For crypto- and market-focused integrations, external APIs can supply price feeds, on-chain indicators, and signals which your bot can surface to users. AI-driven research platforms such as Token Metrics can augment analysis by providing structured ratings and on-chain insights that your integration can query programmatically.

Begin by creating an application in the Discord Developer Portal, add a bot user, and generate a bot token. Choose a client library (for example, discord.js or discord.py and its alternatives) to handle Gateway and REST interactions. Test in a private server before inviting the bot to production servers.

Intents are event categories that determine which events the Gateway will send to your bot. Enable only the intents your features require. Some intents, like message content, are privileged and require justification for larger bots or those in many servers.

Respect rate-limit headers, use client libraries that implement request queues, batch operations when possible, and shard your bot appropriately. Implement exponential backoff for retries and monitor request patterns to identify hotspots.
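Exponential backoff with jitter can be sketched in a few lines. `RetryableError` is a hypothetical marker for failures worth retrying (for instance an HTTP 429 or 5xx), and the `sleep` and `rng` parameters exist only so the behavior can be exercised without real delays.

```python
import random
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Hypothetical marker for errors worth retrying (e.g. HTTP 429 or 5xx)."""


def backoff_delays(
    base: float = 0.5, factor: float = 2.0, cap: float = 30.0, attempts: int = 5
) -> List[float]:
    """Return the capped exponential delay for each attempt."""
    return [min(cap, base * factor ** i) for i in range(attempts)]


def call_with_backoff(
    fn: Callable[[], T],
    attempts: int = 5,
    base: float = 0.5,
    cap: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Call fn, retrying on RetryableError with jittered exponential delays."""
    delays = backoff_delays(base=base, cap=cap, attempts=attempts)
    for i, delay in enumerate(delays):
        try:
            return fn()
        except RetryableError:
            if i == len(delays) - 1:
                raise  # out of attempts: surface the last error
            # Full jitter: wait a random fraction of the current delay so
            # many clients do not retry in lockstep.
            sleep(delay * rng())
```

When the server supplies an explicit retry delay, honoring that value should take precedence over the computed backoff.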

Webhooks are simpler for sending messages from external systems because they don't require a bot token and have a low setup cost. Bots are required for interactive features, slash commands, moderation, and actions that require user-like behavior.

Validate interaction signatures using Discord's public key. Verify timestamps to prevent replay attacks and ensure your endpoint only accepts expected request types. Keep validation code in middleware for consistency.

This article is educational and technical in nature. It does not provide investment, legal, or financial advice. Implementations described here focus on software architecture, integration patterns, and security practices; adapt them to your own requirements and compliance obligations.

9450 SW Gemini Dr

PMB 59348

Beaverton, Oregon 97008-7105 US

Token Metrics Media LLC is a regular publication of information, analysis, and commentary focused especially on blockchain technology and business, cryptocurrency, blockchain-based tokens, market trends, and trading strategies.

Token Metrics Media LLC does not provide individually tailored investment advice and does not take a subscriber’s or anyone’s personal circumstances into consideration when discussing investments; nor is Token Metrics Advisers LLC registered as an investment adviser or broker-dealer in any jurisdiction.

Information contained herein is not an offer or solicitation to buy, hold, or sell any security. The Token Metrics team has advised and invested in many blockchain companies. A complete list of their advisory roles and current holdings can be viewed here: https://tokenmetrics.com/disclosures.html/

Token Metrics Media LLC relies on information from various sources believed to be reliable, including clients and third parties, but cannot guarantee the accuracy and completeness of that information. Additionally, Token Metrics Media LLC does not provide tax advice, and investors are encouraged to consult with their personal tax advisors.

All investing involves risk, including the possible loss of money you invest, and past performance does not guarantee future performance. Ratings and price predictions are provided for informational and illustrative purposes, and may not reflect actual future performance.