Top Crypto Trading Platforms in 2025


Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend or getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints packed with powerful AI-driven insights right into your lap, including:

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.


Why 2026 Looks Bullish, And What It Could Mean for TRX

The crypto market is shifting toward a broadly bullish regime into 2026 as liquidity improves and risk appetite normalizes.

Regulatory clarity across major regions is reshaping the classic four-year cycle; flows can arrive earlier and persist longer.

Institutional access keeps expanding through ETFs and qualified custody, while L2 scaling and real-world integrations broaden utility.

Infrastructure maturity lowers frictions for capital, which supports deeper order books and more persistent participation.

This backdrop frames our scenario work for TRX.

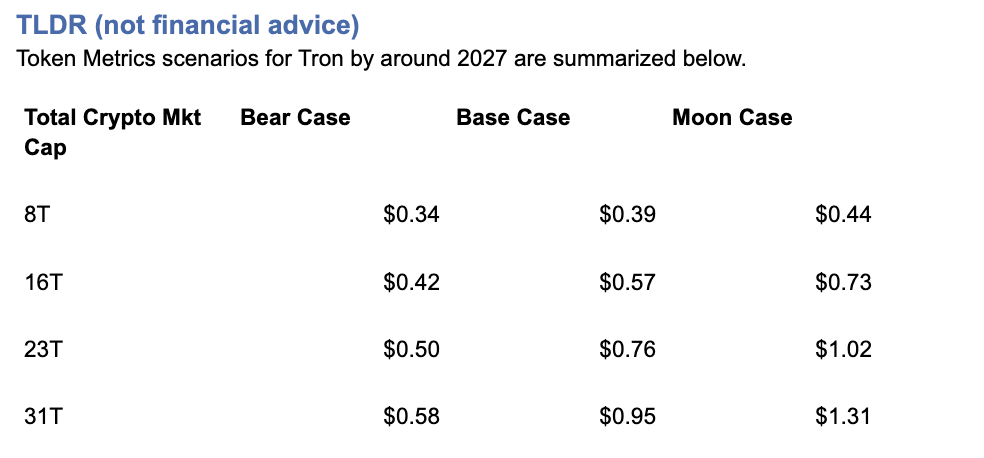

The bands below map potential outcomes to different total crypto market sizes.

Use the table as a quick benchmark, then layer in live grades and signals for timing.

Current price: $0.2971.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.

TM Agent baseline: Token Metrics TM Grade for $TRX is 19.06, which translates to a Strong Sell, and the trading signal is bearish, indicating short-term downward momentum.

Price context: $TRX is trading around $0.297 with a market cap rank of #10. It is down about 11% over 30 days and up about 80% year-over-year, and it has returned roughly 963% since the last trading signal flip.

Live details: Tron Token Details → https://app.tokenmetrics.com/en/tron

Buy TRX: https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

Scenario driven: outcomes hinge on total crypto market cap, and higher liquidity and adoption lift the bands.

TM Agent gist: bearish near term, upside depends on a sustained risk-on regime and improvements in TM Grade and the trading signal.

Education only, not financial advice.

8T:

16T:

23T:

Diversification matters.

Tron is compelling, yet concentrated bets can be volatile.

Token Metrics Indices hold TRX alongside the top one hundred tokens for broad exposure to leaders and emerging winners.

Our backtests indicate that owning the full market with diversified indices has historically outperformed both the total market and Bitcoin in many regimes due to diversification and rotation.

Get early access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview


Tron is a smart-contract blockchain focused on low-cost, high-throughput transactions and cross-border settlement.

The network supports token issuance and a broad set of dApps, with an emphasis on stablecoin transfer volume and payments.

TRX is the native asset that powers fees and staking for validators and delegators within the network.

Developers and enterprises use the chain for predictable costs and fast finality, which supports consumer-facing use cases.

• Institutional and retail access expands with ETFs, listings, and integrations.

• Macro tailwinds from lower real rates and improving liquidity.

• Product or roadmap milestones such as upgrades, scaling, or partnerships.

• Macro risk-off from tightening or liquidity shocks.

• Regulatory actions or infrastructure outages.

• Concentration or validator economics and competitive displacement.

Unlock platform-wide intelligence on every major crypto asset. Use code ADVANCED20 at checkout for twenty percent off.

AI powered ratings on thousands of tokens for traders and investors.

Interactive TM AI Agent to ask any crypto question.

Indices explorer to surface promising tokens and diversified baskets.

Signal dashboards, backtests, and historical performance views.

Watchlists, alerts, and portfolio tools to track what matters.

Early feature access and enhanced research coverage.

Start with Advanced today → https://www.tokenmetrics.com/token-metrics-pricing

Can TRX reach $1?

Yes, the 23T moon case shows $1.02 and the 31T moon case shows $1.31, which imply a path to $1 in higher-liquidity regimes. Not financial advice.

Is TRX a good long-term investment?

Outcome depends on adoption, liquidity regime, competition, and supply dynamics. Diversify and size positions responsibly.

Track live grades and signals: Token Details → https://app.tokenmetrics.com/en/tron

Join Indices Early Access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview

Want exposure? Buy TRX on MEXC → https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

Educational purposes only, not financial advice. Crypto is volatile, do your own research and manage risk.

Token Metrics delivers AI-powered crypto ratings, research, and portfolio tools for every level of investor and trader seeking an edge.


Bitcoin

TL;DR (not financial advice): Token Metrics scenarios put BTC at ~$177k–$219k in an $8T total crypto market, $301k–$427k at $16T, $425k–$635k at $24T, and $548k–$843k at $32T by ~2027.

Baseline long-term view from TM Agent: $100k–$250k if macro stays favorable; $20k–$40k downside in a prolonged risk-off regime.

→ Deep dive & live signals: Bitcoin Token Details

→ Want to buy BTC? Use our partner link: MEXC sign-up

Scenario-driven: BTC outcomes hinge on total crypto market cap. Higher aggregate liquidity/adoption = higher BTC bands.

Fundamentals strong: Fundamental Grade 89.53% (Tokenomics 100%, Exchange 100%, Community 84%).

Tech solid: Technology Grade 69.78% (Repo 79%, Collaboration 70%, Activity 63%).

TM Agent baseline: multi-year $100k–$250k with upside if institutions & macro cooperate; risk to $20k–$40k in a severe risk-off.

This article is education only; not financial advice.

| Total Crypto Market Cap | Bear Case | Base Case | Moon Case |
| --- | --- | --- | --- |
| $8T | $176,934 | $197,959 | $218,985 |
| $16T | $300,766 | $363,842 | $426,918 |
| $24T | $424,598 | $529,725 | $634,852 |
| $32T | $548,430 | $695,608 | $842,786 |

Current price when modeled: ~$115.6k.

How to read it: Each band blends cycle analogues + market-cap share math and applies TA guardrails.

The base path assumes steady ETF/treasury adoption and neutral-to-positive macro; moon adds a liquidity boom + accelerated institutional flows; bear assumes muted flows and tighter liquidity.
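The market-cap share math behind these bands can be inverted as a rough sanity check. The sketch below takes the scenario prices from the table and back-computes the share of total crypto market cap each price would imply. The circulating supply of 19.9 million BTC is an assumption made for illustration, not a figure from this article, and the function names are hypothetical.

```python
# Back-of-the-envelope check of the scenario bands.
# ASSUMPTION: circulating supply of ~19.9 million BTC (not stated in the article).
ASSUMED_BTC_SUPPLY = 19_900_000

# Scenario prices from the table: total market cap (USD) -> (bear, base, moon).
SCENARIOS = {
    8e12: (176_934, 197_959, 218_985),
    16e12: (300_766, 363_842, 426_918),
    24e12: (424_598, 529_725, 634_852),
    32e12: (548_430, 695_608, 842_786),
}


def implied_share(price, total_market_cap, supply=ASSUMED_BTC_SUPPLY):
    """Fraction of total crypto market cap BTC would hold at `price`."""
    return price * supply / total_market_cap


def implied_multiple(price, modeled_price=115_600):
    """Scenario price as a multiple of the ~$115.6k price used when modeling."""
    return price / modeled_price


if __name__ == "__main__":
    for cap, (bear, base, moon) in SCENARIOS.items():
        shares = ", ".join(f"{implied_share(p, cap):.1%}" for p in (bear, base, moon))
        print(f"${cap / 1e12:.0f}T: shares {shares}; "
              f"base = {implied_multiple(base):.2f}x modeled price")
```

Under the assumed supply, the base-case share drifts from roughly 49% of an $8T market to roughly 43% of a $32T market, so the bands appear to assume that BTC grows more slowly than the rest of the market as total capitalization expands.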

8T MCap Scenario

16T MCap Scenario

24T MCap Scenario

32T MCap Scenario

Spot ETF flows, corporate/treasury allocations, and global liquidity are the swing factors that push BTC between the $100k–$250k baseline and the higher scenario bands.

If real rates fall and risk appetite rises, the system can support $16T–$24T crypto, putting BTC’s base case in the $364k–$530k zone.

Programmatic issuance cuts keep the scarcity story intact; historically, post-halving windows have supported asymmetric upside as demand shocks meet slower new supply.

Fundamental Grade 89.53% with perfect Tokenomics and Exchange access supports liquidity and distribution.

Technology Grade 69.78% (Repo 79%, Collaboration 70%) signals a mature, continuously maintained codebase—even if raw dev “Activity” cycles with market phases.

With price recently around $115k, the $8T path implies a medium-term corridor of $177k–$219k if crypto caps stall near cycle mid.

Reclaims above prior weekly supply zones (mid-$100ks to high-$100ks) would bias toward the $16T track ($301k–$427k).

A macro/liquidity slump that undercuts weekly supports could revisit the TM Agent downside zone ($20k–$40k), though that would require a deep and sustained risk-off.

For live support/resistance levels and signals, open: Bitcoin Token Details.

Fundamental Grade: 89.53%

Community: 84%

Tokenomics: 100%

Exchange availability: 100%

DeFi Scanner: 77%

VC Score: N/A

Technology Grade: 69.78%

Activity: 63%

Repository: 79%

Collaboration: 70%

Security: N/A

DeFi Scanner: 77%

Interpretation: Liquidity/access + pristine token mechanics keep BTC the market’s base collateral; tech metrics reflect a conservative, security-first core with steady maintenance rather than hype-driven burst commits.

• ETF/retirement channel penetration broadens demand beyond crypto-native cohorts.

• Treasury adoption (corporates, macro funds) increases “digital collateral” utility.

• Macro easing / falling real yields can push total crypto mkt cap toward $16T–$24T.

• Global tightening (higher real rates, QT) compresses risk premiums.

• Regulatory shocks curtail flows or custody rails.

• Vol/liquidity pockets amplify drawdowns; deep retests remain possible.

Can BTC hit $200k–$250k?

Yes—those sit inside our $8T–$16T bands (base/mid), contingent on continued institutional adoption and constructive macro. Not guaranteed.

Could BTC reach $500k–$800k?

Those levels map to $24T–$32T total crypto scenarios (base → moon). They require a powerful liquidity cycle plus broader balance-sheet adoption.

What invalidates the bull case?

Sustained high real rates, policy tightening, or adverse regulation that throttles ETF/fiat rails—conditions aligned with the TM Agent $20k–$40k downside.

Track the live grade & signals: Bitcoin Token Details

Set alerts around key breakout/retest levels inside Token Metrics.

Want exposure? Consider our partner: Buy BTC on MEXC

Disclosure & disclaimer: This content is for educational purposes only and not financial advice. Cryptocurrency is volatile; do your own research and manage risk.


Cryptocurrency's digital nature creates unprecedented investment opportunities—24/7 global markets, instant transactions, and direct ownership without intermediaries.

But this same digital nature introduces unique security challenges absent from traditional investing.

You can't lose your stock certificates to hackers, but you absolutely can lose your cryptocurrency to theft, scams, or user error.

Industry estimates suggest billions of dollars in cryptocurrency are lost or stolen annually through hacks, phishing attacks, forgotten passwords, and fraudulent schemes.

For many prospective crypto investors, security concerns represent the primary barrier to entry.

"What if I get hacked?" "How do I keep my crypto safe?" "What happens if I lose my password?"

These aren't trivial concerns—they're legitimate questions demanding thoughtful answers before committing capital to digital assets.

Token Metrics AI Indices approach security holistically, addressing not just portfolio construction and performance but the entire ecosystem of risks facing crypto investors.

From selecting fundamentally secure cryptocurrencies to providing guidance on safe custody practices, Token Metrics prioritizes investor protection alongside return generation.

This comprehensive guide explores the complete landscape of crypto security risks, reveals best practices for protecting your investments, and demonstrates how Token Metrics' systematic approach enhances safety across multiple dimensions.

Exchange Hacks and Platform Vulnerabilities

Cryptocurrency exchanges—platforms where users buy, sell, and store digital assets—represent prime targets for hackers given the enormous value they custody.

History is littered with devastating exchange failures, including Mt. Gox (2014), where 850,000 Bitcoin were stolen, worth about $450 million at the time and billions today; Coincheck (2018), where $530 million in NEM tokens were stolen; QuadrigaCX (2019), where $190 million was lost when the founder died holding the only access to the cold wallets; and FTX (2022), whose collapse resulted in billions in customer losses.

These incidents highlight fundamental custody risks. When you hold cryptocurrency on exchanges, you don't truly control it—the exchange does.

The industry saying captures this reality: "Not your keys, not your coins." Exchange bankruptcy, hacking, or fraud can result in total loss of funds held on platforms.

Token Metrics addresses exchange risk by never directly holding user funds—the platform provides investment guidance and analysis, but users maintain custody of their assets through personal wallets or trusted custodians they select.

This architecture eliminates single-point-of-failure risks inherent in centralized exchange custody.

Private Key Loss and User Error

Unlike traditional bank accounts where forgotten passwords can be reset, cryptocurrency relies on cryptographic private keys providing sole access to funds.

Lose your private key, and your cryptocurrency becomes permanently inaccessible—no customer service department can recover it.

Studies suggest 20% of all Bitcoin (worth hundreds of billions of dollars) is lost forever due to forgotten passwords, discarded hard drives, or deceased holders without key succession plans.

This user-error risk proves particularly acute for non-technical investors unfamiliar with proper key management.

Token Metrics provides educational resources on proper key management, wallet selection, and security best practices.

The platform emphasizes that regardless of how well indices perform, poor personal security practices can negate all investment success.

Phishing, Social Engineering, and Scams

Crypto scams exploit human psychology rather than technical vulnerabilities.

Common schemes include phishing emails impersonating legitimate platforms, fake customer support targeting victims through social media, romance scams building relationships before requesting crypto, pump-and-dump schemes artificially inflating token prices, and fake investment opportunities promising unrealistic returns.

These scams succeed because they manipulate emotions—fear, greed, trust. Even sophisticated investors occasionally fall victim to well-crafted social engineering.

Token Metrics protects users by vetting all cryptocurrencies included in indices, filtering out known scams and suspicious projects.

The platform's AI analyzes on-chain data, code quality, team credentials, and community sentiment, identifying red flags invisible to casual investors. This comprehensive due diligence provides first-line defense against fraudulent projects.

Smart Contract Vulnerabilities

Many cryptocurrencies operate on smart contract platforms where code executes automatically.

Bugs in smart contract code can be exploited, resulting in fund loss. Notable incidents include the DAO hack (2016), in which $50 million was stolen through a smart contract vulnerability; the Parity wallet bug (2017), which froze $280 million permanently; and numerous DeFi protocol exploits that drained millions from liquidity pools.

Token Metrics' analysis evaluates code quality and security audits for projects included in indices.

The AI monitors for smart contract risks, deprioritizing projects with poor code quality or unaudited contracts. This systematic evaluation reduces but doesn't eliminate smart contract risk, which is inherent to DeFi investing.

Regulatory and Compliance Risks

Cryptocurrency's evolving regulatory landscape creates risks including sudden regulatory restrictions limiting trading or access, tax compliance issues from unclear reporting requirements, securities law violations for certain tokens, and jurisdictional complications from crypto's borderless nature.

Token Metrics monitors regulatory developments globally, adjusting index compositions when regulatory risks emerge.

If specific tokens face heightened regulatory scrutiny, the AI can reduce or eliminate exposure, protecting investors from compliance-related losses.

Understanding Wallet Types

Cryptocurrency storage options exist along a security-convenience spectrum. Hot wallets (software wallets connected to the internet) offer convenience for frequent trading but increased hacking vulnerability.

Cold wallets (hardware wallets or paper wallets offline) provide maximum security but reduced convenience for active trading. Custodial wallets (exchanges holding keys) offer simplicity but require trusting third parties.

For Token Metrics investors, the recommended approach depends on portfolio size and trading frequency.

Smaller portfolios with frequent rebalancing might warrant hot wallet convenience. Larger portfolios benefit from cold wallet security, moving only amounts needed for rebalancing to hot wallets temporarily.

Hardware Wallet Security

Hardware wallets—physical devices storing private keys offline—represent the gold standard for cryptocurrency security. Popular options include Ledger, Trezor, and others providing "cold storage" immunity to online hacking.

Best practices for hardware wallets include:

• Purchasing directly from manufacturers

• Never buying used

• Verifying device authenticity through manufacturer verification

• Storing recovery seeds securely (physical copies in safe locations)

• Using strong PINs and never sharing device access

For substantial Token Metrics allocations, hardware wallets prove essential.

The modest cost ($50-200) pales compared to security benefits for portfolios exceeding several thousand dollars.

Multi-Signature Security

Multi-signature (multisig) wallets require multiple private keys to authorize transactions—for example, requiring 2-of-3 keys. This protects against single-point-of-failure risks: if one key is compromised, funds remain secure; if one key is lost, remaining keys still enable access.

Advanced Token Metrics investors with substantial holdings should explore multisig solutions through platforms like Gnosis Safe or Casa.

While more complex to set up, multisig dramatically enhances security for large portfolios.
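The 2-of-3 rule described above can be illustrated with a toy model. This sketch only models the threshold logic (how many distinct, recognized keys have approved); it does not perform real cryptographic signing and is not how any particular wallet implements multisig. The class name and key labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MultisigPolicy:
    """Toy M-of-N approval policy: threshold logic only, no real signatures."""
    signers: frozenset
    threshold: int

    def __post_init__(self):
        if not 1 <= self.threshold <= len(self.signers):
            raise ValueError("threshold must be between 1 and the number of signers")

    def is_authorized(self, approvals):
        # Count each recognized key at most once; ignore unknown keys.
        valid = set(approvals) & self.signers
        return len(valid) >= self.threshold


if __name__ == "__main__":
    policy = MultisigPolicy(frozenset({"laptop", "phone", "safe"}), threshold=2)
    print(policy.is_authorized({"laptop"}))         # one compromised key is not enough
    print(policy.is_authorized({"phone", "safe"}))  # one lost key does not lock funds
```

With three keys and a threshold of two, a thief holding a single key cannot move funds, and the owner who loses a single key can still transact with the other two.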

Institutional Custody Solutions

For investors with six-figure+ crypto allocations, institutional custody services provide professional-grade security including:

• Regulated custodians holding cryptocurrency with insurance

• Cold storage with enterprise security protocols

• Compliance with financial industry standards

Services like Coinbase Custody, Fidelity Digital Assets, and others offer insured custody for qualified investors.

While expensive (typically basis points on assets), institutional custody eliminates personal security burdens for substantial holdings.

Password Management and Two-Factor Authentication

Basic security hygiene proves critical for crypto safety.

Use unique, complex passwords for every exchange and platform; password managers like 1Password or Bitwarden make this practical. Enable two-factor authentication (2FA) using authenticator apps (Google Authenticator, Authy) rather than SMS, which can be intercepted.

Never reuse passwords across platforms. A data breach exposing credentials from one service could compromise all accounts using identical passwords. Token Metrics recommends comprehensive password management as a foundational security practice.
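To show why authenticator apps do not depend on the phone network, here is a minimal sketch of the time-based one-time password scheme standardized in RFC 6238 (built on RFC 4226), which authenticator apps commonly implement. The code is derived locally from a shared secret and the current time, so nothing travels over SMS. This is for illustration only; use a maintained library in production.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low nibble of the last byte
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a 30-second counter."""
    now = time.time() if at_time is None else at_time
    return hotp(secret, int(now // step), digits)


if __name__ == "__main__":
    # Test secret from the RFCs; a real secret comes from the QR code at enrollment.
    print(totp(b"12345678901234567890"))
```

The server holds the same secret and runs the same computation, so a code is only valid for a short window and an intercepted text message is never part of the flow.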

Recognizing and Avoiding Phishing

Phishing attacks impersonate legitimate services to steal credentials. Red flags include emails requesting immediate action or login, suspicious sender addresses with subtle misspellings, links to domains not matching official websites, and unsolicited contact from "customer support."

Always navigate directly to platforms by typing URLs rather than clicking email links. Verify sender authenticity before responding to any crypto-related communications. Token Metrics will never request passwords, private keys, or urgent fund transfers—any such requests are fraudulent.
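The "links to domains not matching official websites" check can be partly automated. The sketch below parses a URL with the standard library and accepts it only if it uses HTTPS and its hostname is an allowlisted domain or a subdomain of one. The allowlist contents and the function name are assumptions for illustration; build your own list from addresses you have verified.

```python
from urllib.parse import urlsplit

# ASSUMPTION: illustrative allowlist; maintain your own from verified bookmarks.
OFFICIAL_DOMAINS = frozenset({"tokenmetrics.com"})


def is_official_link(url, official_domains=OFFICIAL_DOMAINS):
    """True only for HTTPS URLs whose host is an official domain or its subdomain."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower().rstrip(".")
    if parts.scheme != "https" or not host:
        return False
    return any(host == d or host.endswith("." + d) for d in official_domains)


if __name__ == "__main__":
    for link in (
        "https://app.tokenmetrics.com/en/tron",
        "https://tokenmetrics.com.login-verify.example/reset",  # official name as a prefix
        "https://tokenrnetrics.com/login",                      # "rn" imitating "m"
    ):
        print(link, "->", is_official_link(link))
```

Matching on the end of the hostname, rather than searching for the brand name anywhere in the URL, is what defeats the common trick of putting the real domain in front of an attacker-controlled one.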

Device Security and Network Safety

Maintain device security by:

• Keeping operating systems and software updated

• Running antivirus/anti-malware software

• Avoiding public WiFi for crypto transactions

• Considering dedicated devices for high-value crypto management

The computer or phone accessing crypto accounts represents a potential vulnerability.

Compromised devices enable keyloggers capturing credentials or malware stealing keys. For substantial portfolios, dedicated devices used only for crypto management enhance security.

Cold Storage for Long-Term Holdings

For cryptocurrency not needed for active trading—long-term holdings in Token Metrics indices not requiring frequent rebalancing—cold storage provides maximum security.

Generate addresses on air-gapped computers, transfer funds to cold storage addresses, and store private keys/recovery seeds in physical safes or bank safety deposit boxes.

This approach trades convenience for security—appropriate for the majority of holdings requiring only occasional access.

No Custody Model

Token Metrics' fundamental security advantage is never taking custody of user funds. Unlike exchanges that become honeypots for hackers by concentrating billions in crypto, Token Metrics operates as an information and analytics platform. Users implement index strategies through their own chosen custody solutions.

This architecture eliminates platform hacking risk to user funds. Even if the Token Metrics platform experienced a data breach (which its security measures are designed to prevent), user cryptocurrency would remain safe in personal or custodial wallets.

Data Security and Privacy

Token Metrics implements enterprise-grade security for user data including:

• Encrypted data transmission and storage

• Regular security audits and penetration testing

• Access controls limiting employee data access

• Compliance with data protection regulations

While Token Metrics doesn't hold crypto, protecting user data—account information, portfolio holdings, personal details—remains paramount.

The platform's security infrastructure meets standards expected of professional financial services.

API Security and Access Control

For users implementing Token Metrics strategies through API connections to exchanges, the platform supports secure API practices including:

• Read-only API keys when possible (avoiding withdrawal permissions)

• IP whitelisting restricting API access to specific addresses

• Regularly rotating API keys as security best practice

Never grant withdrawal permissions through API keys unless absolutely necessary.

Token Metrics strategies can be implemented through read-only keys providing portfolio data without risking unauthorized fund movement.
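The three practices above (read-only scope, IP restriction, regular rotation) lend themselves to a simple periodic self-audit. The sketch below checks a description of an exchange API key against them. The permission names, the 90-day rotation window, and the function itself are hypothetical and not tied to any real exchange or to the Token Metrics API; adapt them to whatever your exchange reports.

```python
from datetime import date


def audit_api_key(permissions, ip_allowlist, created_on, today=None, max_age_days=90):
    """Return warnings for a (hypothetical) description of an exchange API key."""
    today = today or date.today()
    warnings = []
    if "withdraw" in permissions:
        warnings.append("key can withdraw funds; prefer a read-only key")
    if not ip_allowlist:
        warnings.append("no IP allowlist; restrict the key to known addresses")
    if (today - created_on).days > max_age_days:
        warnings.append(f"key is older than {max_age_days} days; rotate it")
    return warnings


if __name__ == "__main__":
    issues = audit_api_key(
        permissions={"read", "withdraw"},
        ip_allowlist=[],
        created_on=date(2024, 1, 1),
        today=date(2025, 1, 1),
    )
    for issue in issues:
        print("WARNING:", issue)
```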

Continuous Monitoring and Threat Detection

Token Metrics employs active security monitoring including:

• Unusual activity detection flagging suspicious account access

• Threat intelligence monitoring for emerging crypto security risks

• Rapid incident response protocols should breaches occur

This proactive approach identifies and addresses security threats before they impact users, maintaining platform integrity and protecting user interests.

Diversification as Risk Management

Security isn't just about preventing theft—it's also about preventing portfolio devastation through poor investment decisions. Token Metrics' diversification inherently provides risk management by:

• Preventing over-concentration in any single cryptocurrency

• Spreading exposure across projects with different risk profiles

• Combining assets with low correlations to reduce portfolio volatility

This diversification protects against the "secure wallet, worthless holdings" scenario where cryptocurrency is safely stored but becomes valueless due to project failure or market collapse.
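The effect of low correlation on portfolio volatility follows from the standard portfolio variance formula, the sum over all asset pairs of weight_i × weight_j × vol_i × vol_j × correlation_ij. The sketch below applies it to two made-up assets; the 80% volatilities and the correlation values are illustrative assumptions, not Token Metrics data.

```python
import math


def portfolio_volatility(weights, vols, corr):
    """Portfolio volatility from weights, per-asset volatilities, and a correlation matrix."""
    n = len(weights)
    variance = sum(
        weights[i] * weights[j] * vols[i] * vols[j] * corr[i][j]
        for i in range(n)
        for j in range(n)
    )
    return math.sqrt(variance)


if __name__ == "__main__":
    weights = [0.5, 0.5]
    vols = [0.80, 0.80]  # ASSUMPTION: both assets have 80% annualized volatility
    for rho in (1.0, 0.2):
        corr = [[1.0, rho], [rho, 1.0]]
        print(f"correlation {rho}: {portfolio_volatility(weights, vols, corr):.1%}")
```

With perfectly correlated assets the portfolio is as volatile as either holding (80%); at a correlation of 0.2 the same 50/50 mix comes out at about 62%, even though neither asset became less risky on its own.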

Liquidity Risk Management

Liquidity (the ability to buy or sell without significantly impacting price) represents an important risk dimension. Token Metrics indices prioritize liquid cryptocurrencies with substantial trading volumes, multiple exchange listings, and deep order books.

This liquidity focus ensures you can implement index strategies efficiently and exit positions when necessary without severe slippage.

Illiquid tokens might offer higher theoretical returns but expose investors to inability to realize those returns when selling.
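Slippage can be estimated before placing an order by walking the order book. The sketch below fills a market buy against a list of ask levels and compares the average fill price to the best ask. The two order books are invented for illustration, and the function name is hypothetical.

```python
def estimate_buy_slippage(asks, quantity):
    """Walk ask levels (price, size), sorted by ascending price.

    Returns (average_fill_price, slippage_vs_best_ask_as_fraction).
    """
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        fill = min(remaining, size)
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("order book too thin to fill the requested quantity")
    average = cost / quantity
    return average, average / asks[0][0] - 1


if __name__ == "__main__":
    deep_book = [(100.0, 50), (100.5, 50)]              # made-up liquid market
    thin_book = [(100.0, 5), (105.0, 5), (110.0, 5)]    # made-up illiquid market
    for name, book in (("deep", deep_book), ("thin", thin_book)):
        avg, slip = estimate_buy_slippage(book, 10)
        print(f"{name}: average fill {avg:.2f}, slippage {slip:.2%}")
```

The same 10-unit order fills at the best ask in the deep book but pays about 2.5% more in the thin one, which is the cost the liquidity filter is meant to avoid.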

Regulatory Compliance and Tax Security

Following applicable laws and regulations protects against government enforcement actions, penalties, or asset seizures. Token Metrics provides transaction histories supporting tax compliance, but users must maintain detailed records of all crypto activities, including purchases, sales, rebalancing transactions, and transfers between wallets.

Consider working with crypto-specialized tax professionals ensuring full compliance with reporting requirements. The cost of professional tax assistance proves trivial compared to risks from non-compliance.

Emergency Preparedness and Succession Planning

Comprehensive security includes planning for emergencies including:

• Documenting wallet access instructions for trusted individuals

• Maintaining secure backup of recovery seeds and passwords

• Creating crypto asset inventory for estate planning

• Considering legal documents addressing cryptocurrency inheritance

Without proper planning, your cryptocurrency could become inaccessible to heirs upon death. Many families have lost access to substantial crypto holdings due to lack of succession planning.

Assessing Your Security Needs

Security requirements scale with portfolio size and complexity.

For small portfolios under $5,000, reputable exchange custody with 2FA and strong passwords may suffice. For portfolios of $5,000-$50,000, hardware wallets become essential for the majority of holdings.

For portfolios exceeding $50,000, multisig or institutional custody warrant serious consideration. For portfolios exceeding $500,000, professional security consultation and institutional custody become prudent.

Assess your specific situation honestly, implementing security measures appropriate for your holdings and technical capabilities.
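The tiers above can be written down as a small lookup, which is handy as a reminder inside a personal portfolio script. The thresholds come from the text; how the exact boundary values ($50,000 and $500,000) are assigned is an assumption, and the function name is hypothetical. Treat the output as a starting point, not a rule.

```python
def recommended_custody(portfolio_usd):
    """Map a portfolio size in USD to the custody tier suggested in the text."""
    if portfolio_usd < 0:
        raise ValueError("portfolio size cannot be negative")
    if portfolio_usd < 5_000:
        return "reputable exchange custody with 2FA and strong passwords"
    if portfolio_usd <= 50_000:  # ASSUMPTION: boundary value falls in this tier
        return "hardware wallet for the majority of holdings"
    if portfolio_usd <= 500_000:  # ASSUMPTION: boundary value falls in this tier
        return "multisig or institutional custody"
    return "professional security consultation and institutional custody"


if __name__ == "__main__":
    for size in (2_000, 20_000, 120_000, 750_000):
        print(f"${size:,}: {recommended_custody(size)}")
```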

Creating Security Checklists

Develop systematic security checklists covering:

• Regular security audits of wallet configurations

• Password rotation schedules

• 2FA verification across all platforms

• Recovery seed backup verification

• Device security updates

Regular checklist execution ensures security doesn't degrade over time as you become complacent. Set quarterly reminders for comprehensive security reviews.

Continuous Education

Crypto security threats evolve constantly. Stay informed through:

• Token Metrics educational resources and platform updates

• Cryptocurrency security news and advisories

• Community forums discussing emerging threats

• Periodic security webinars and training

Knowledge proves the most powerful security tool. Understanding the threat landscape enables proactive defense rather than reactive damage control.

Cryptocurrency's revolutionary potential means nothing if your investment is lost to theft, hacks, or user error.

Security isn't an afterthought—it's the foundation enabling confident long-term investing. Without proper security measures, even the most sophisticated investment strategies become meaningless.

Token Metrics AI Indices provide comprehensive security through multiple dimensions—selecting fundamentally secure cryptocurrencies, providing educational resources on custody best practices, implementing platform-level security protecting user data, and maintaining no-custody architecture eliminating single-point-of-failure risks.

But ultimately, security requires your active participation. Token Metrics provides tools, knowledge, and guidance, but you must implement proper custody solutions, maintain operational security hygiene, and stay vigilant against evolving threats.

The investors who build lasting crypto wealth aren't just those who select winning tokens—they're those who protect their investments with appropriate security measures. In cryptocurrency's digital landscape where irreversible transactions and pseudonymous attackers create unique challenges, security determines who ultimately enjoys their gains and who watches helplessly as value evaporates.

Invest intelligently with Token Metrics' AI-powered indices. Protect that investment with comprehensive security practices. This combination—sophisticated strategy plus robust security—positions you for long-term success in cryptocurrency's high-opportunity, high-risk environment.

Your crypto investments deserve professional-grade portfolio management and professional-grade security. Token Metrics delivers both.

At Token Metrics, safeguarding your crypto assets is fundamentally built into our platform.

We never take custody of client funds; instead, our AI-driven indices provide guidance, education, and advanced risk screening so you retain full control over your assets at all times.

Our robust platform-level security—encompassing encrypted communications, role-based access, and continuous threat monitoring—offers enterprise-grade protection for your data and strategies.

Whether you want to analyze secure projects, develop stronger portfolio management, or combine expert research with your own secure storage, Token Metrics provides a comprehensive support system to help you invest confidently and safely.

Use unique, complex passwords for every platform, enable two-factor authentication using authenticator apps (not SMS), avoid custodial wallets on exchanges for long-term holdings, store large balances in hardware wallets, and never share your private keys with anyone.

Hardware wallets offer the highest level of security for most users. For substantial balances, using multi-signature wallets or institutional custodians (for qualified investors) adds protection. Always keep backup recovery phrases in secure physical locations.

AI indices, such as those from Token Metrics, systematically vet projects for smart contract vulnerabilities, regulatory issues, code security, liquidity, and signs of fraudulent activity, thus reducing exposure to compromised or risky assets.

Do not interact with the suspicious message. Instead, independently visit the platform’s website by typing the URL directly and contact official customer support if needed. Never provide passwords or private keys to unsolicited contacts.

Document wallet access information and recovery instructions for trusted family or legal representatives. Maintain secure, physical records of all backup phrases, and consider legal estate planning that addresses your digital assets.

This blog is for informational and educational purposes only and does not constitute investment advice, a recommendation, or an offer to buy or sell any cryptocurrency or digital asset. You should consult your own legal, tax, and financial professionals before making any investment or security decisions. While every effort was made to ensure accuracy, neither Token Metrics nor its contributors accept liability for losses or damages resulting from information in this blog.


The landscape of cryptocurrency and Web3 has evolved dramatically in recent years, offering investors an expanding array of opportunities within the digital economy. As we navigate through October 2025, with Bitcoin trading above $124,000 and the total crypto market capitalization exceeding $4.15 trillion, many investors face a critical question: should I invest in crypto or Web3 companies? The reality is that both options present compelling potential, and understanding their differences, risks, and benefits is essential for making an informed investment decision.

Web3, often referred to as the decentralized web, represents the next evolution of the world wide web—one that empowers internet users with greater control, privacy, and ownership of their digital assets. Unlike traditional internet platforms controlled by centralized entities, Web3 leverages blockchain technology to create decentralized networks and applications. This shift enables users to interact, transact, and store digital assets in a more secure and transparent environment.

At the core of the Web3 movement is the crypto ecosystem, which includes a wide range of crypto assets such as cryptocurrencies and non-fungible tokens (NFTs). Built on blockchain technology, these digital assets facilitate peer-to-peer transactions without intermediaries. As internet users seek innovative investment options, decentralized apps and networks are gaining popularity for their ability to offer new ways to invest, earn, and participate in the digital economy.

The journey of Web3 began in 2014 when Gavin Wood, co-founder of Ethereum, introduced the concept as a vision for a more open and user-centric internet. Since then, the decentralized ecosystem has experienced rapid growth, fueled by blockchain technology and the emergence of unique digital assets. This foundation has enabled the development of decentralized applications (dApps) and new investment avenues previously unimaginable.

Recently, focus has shifted from centralized platforms to decentralized networks, giving users unprecedented control over data and assets. For example, decentralized finance (DeFi) has revolutionized crypto asset investment, offering innovative technologies that bypass traditional financial intermediaries. This progression has expanded investment opportunities and empowered users to participate directly in the digital economy.

Navigating the Web3 ecosystem requires a clear understanding of its main components, including digital currencies, dApps, and blockchain networks. For investors entering crypto, it’s vital to recognize that the ecosystem is multifaceted and constantly evolving. Digital assets range from established cryptocurrencies to innovative tokens powering decentralized platforms.

Conducting thorough research and staying updated on emerging trends are crucial for effective investment outcomes. Artificial intelligence increasingly supports Web3 projects by validating transactions, enhancing security, and improving user experience across platforms. Understanding how these technologies interact within the broader crypto ecosystem allows investors to make more informed decisions and capitalize on new opportunities.

The crypto market has matured significantly, demonstrating institutional adoption, clearer regulations, and sustained growth. Bitcoin recently surpassed $120,000, driven by institutional interest through ETFs and macroeconomic factors. Ethereum’s performance also exhibited resilience, climbing from around $3,500 to over $4,200 in Q3 2025.

Meanwhile, the Web3 sector—including blockchain infrastructure, dApps, and internet tech—has grown impressively. By mid-2025, market capitalization of Web3 companies exceeded $62.19 billion, with forecasts surpassing $65 billion by 2032. This parallel expansion indicates robust opportunities in both cryptocurrencies and Web3 companies, enhancing the appeal of diversified investment approaches.

Investing directly in cryptocurrencies provides exposure to digital assets without intermediary fees or corporate overhead. Buying tokens like Bitcoin or Ethereum offers potential for price appreciation and control over assets secured in digital wallets.

Cryptocurrency exchanges serve as primary platforms, ensuring liquidity and security. Current forecasts anticipate Bitcoin trading in the range of $80,440 to $151,200 in 2025, supported by institutional interest from firms like BlackRock and Fidelity. Crypto markets operate 24/7, enabling rapid responses to market shifts.

The growing Web3 crypto job market, which surged 300% from 2023 to 2025, reflects real economic activity. Platforms like Token Metrics support this approach by providing AI-powered analytics, real-time data, and integrated trading tools—making digital asset research and management more accessible for investors.

Investing in Web3 companies involves acquiring equity in firms developing infrastructure and platforms for the decentralized web. Instead of holding tokens, investors gain exposure through stocks like Coinbase, valued at nearly $58 billion, which has appreciated over 313% in the past year.

Technology giants such as Nvidia, with a market cap above $3 trillion, benefit from Web3 growth through computing hardware critical for blockchain mining and AI. Web3 stocks often offer diversification within the tech sector. ETFs focusing on Web3 companies provide diversified exposure without selecting individual stocks, though single-stock risks remain.

The regulatory landscape has become more favorable for cryptocurrencies and Web3 firms, with bipartisan support in Congress and new legislation like the GENIUS Act of July 2025 establishing clearer rules for stablecoins and digital assets. This clarity fosters a more secure environment for investments, building confidence in the industry’s longevity and sustainability.

Investments in crypto and Web3 stocks carry distinct risks. Crypto assets face high volatility, security challenges with wallets, and technical complexities. Effective security practices, device management, and continuous research are essential to mitigate these risks.

Web3 stock investments involve considerations such as market execution risk, competition, and broader economic fluctuations. A blended portfolio—including both digital assets and equities—can optimize potential returns while diversifying risks.

Platforms like Token Metrics offer tools for risk management, including automation, analytics, and portfolio monitoring—helping investors navigate volatility with data-driven insights.

The Web3 landscape is expanding with decentralized finance (DeFi), gaming, and tokenization. DeFi enables lending, borrowing, and trading without intermediaries, while Web3 gaming has seen a 60% rise in active users. The tokenization market, representing real-world assets on blockchain, has grown by about 23%, creating new investment niches in art, real estate, and securities.

Bitcoin’s growth from a niche experiment to a trillion-dollar asset exemplifies the decentralized financial revolution. Ethereum has facilitated the development of smart contracts and dApps, fueling innovation in multiple sectors. NFTs have revolutionized digital ownership, empowering artists and creators to monetize unique digital assets. These success stories highlight the evolving potential and inherent risks of investing in decentralized assets.

Choosing between crypto and Web3 stocks depends on your investment timeline, risk tolerance, technical knowledge, and goals. Cryptocurrencies may offer faster appreciation but demand active management; stocks tend to provide steadier, long-term growth. A diversified approach combining both strategies can help balance potential upside with risk management.

In 2025, both cryptocurrencies and Web3 company stocks present significant opportunities within the growing digital economy. Market maturation, clearer regulations, and technological advances support sustained growth. A diversified portfolio, combined with advanced tools like Token Metrics, can help investors navigate this complex landscape effectively. As the Web3 ecosystem continues to expand, the key question shifts from whether to invest to how to do so wisely, maximizing opportunities while managing risks in this evolving digital frontier.


As cryptocurrency becomes increasingly mainstream, knowing how to calculate capital gains on crypto is essential for every investor. The IRS treats cryptocurrency as property rather than currency, meaning each sale, trade, or purchase made with crypto triggers a taxable event that must be carefully documented. Cryptocurrency is therefore taxed similarly to other forms of property, with gains and losses reported for each transaction. This article serves as a comprehensive crypto tax guide, helping you understand how to accurately calculate your crypto capital gains so you can manage your tax bill effectively and avoid costly compliance issues.

Capital gains on cryptocurrency arise when you sell, trade, or spend your crypto for more than you originally paid. At its core, the calculation is straightforward: your proceeds (sale price) minus your cost basis (purchase price) equals your capital gain or loss. These gains are subject to crypto capital gains tax. However, the reality is far more complex, especially for active traders who manage multiple positions across various exchanges and wallets.

The IRS distinguishes between short-term capital gains and long-term capital gains based on how long you hold your crypto assets. If you hold your cryptocurrency for one year or less, any gains are considered short-term and taxed at your ordinary income tax rates, which range from 10% to 37% depending on your total taxable income. Conversely, assets held for more than one year qualify for preferential long-term capital gains tax rates of 0%, 15%, or 20%, based on your income and filing status. How crypto is taxed therefore depends on the holding period, so it is essential to know whether each gain is classified as short-term or long-term. This distinction can create significant tax planning opportunities for investors who strategically time their sales.

To calculate crypto capital gains accurately, you need three critical pieces of information for each transaction: your cost basis, your proceeds, and your holding period. Your cost basis is the original purchase price of your crypto, including any transaction fees directly related to the purchase. Proceeds are the amount you receive when you dispose of the crypto, minus any fees related to the sale. The difference between your proceeds and cost basis is your taxable gain, which is the amount subject to capital gains tax.

For example, imagine you bought 1 Bitcoin in June 2024 for $70,000 and sold it four months later for $80,000. Your capital gain is $80,000 minus $70,000, or $10,000. This $10,000 is your taxable gain and must be reported. Since you held the Bitcoin for less than a year, this gain is short-term and taxed at your ordinary income tax rate. If your other taxable income is $85,000, your total taxable income becomes $95,000, which for a single filer falls in the 22% federal tax bracket for 2024. This means you owe approximately $2,200 in federal taxes on that gain.

If you instead held the Bitcoin for 13 months before selling, the $10,000 gain qualifies for long-term capital gains treatment. With the same income, your tax rate on the gain would be 15%, resulting in a $1,500 tax bill, a $700 savings just by holding the asset longer.
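The arithmetic in this example can be sketched in a few lines of Python. This is a minimal illustration, not tax software: the `Disposal` class and the function names are hypothetical, the exact purchase and sale days are assumed (the text only gives months), and the 15% rate is simply the long-term rate quoted above, supplied by hand.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Disposal:
    """One purchase-and-sale pair (hypothetical structure for illustration)."""
    acquired: date
    sold: date
    purchase_price: float
    sale_price: float
    buy_fee: float = 0.0   # fees directly related to the purchase
    sell_fee: float = 0.0  # fees directly related to the sale


def one_year_after(d: date) -> date:
    """Return the one-year anniversary of d (Feb 29 maps to Feb 28)."""
    try:
        return d.replace(year=d.year + 1)
    except ValueError:
        return d.replace(year=d.year + 1, day=28)


def capital_gain(x: Disposal) -> float:
    """Proceeds (sale price minus sale fees) minus cost basis (price plus purchase fees)."""
    cost_basis = x.purchase_price + x.buy_fee
    proceeds = x.sale_price - x.sell_fee
    return proceeds - cost_basis


def is_long_term(x: Disposal) -> bool:
    """Long-term only if held for MORE than one year."""
    return x.sold > one_year_after(x.acquired)


# Assumed dates: bought 1 June 2024, sold four months or thirteen months later.
short_hold = Disposal(date(2024, 6, 1), date(2024, 10, 1), 70_000, 80_000)
long_hold = Disposal(date(2024, 6, 1), date(2025, 7, 1), 70_000, 80_000)

for label, d in (("4 months", short_hold), ("13 months", long_hold)):
    term = "long-term" if is_long_term(d) else "short-term"
    print(f"{label}: gain ${capital_gain(d):,.0f} ({term})")

# Long-term tax at the 15% rate quoted in the text (rate is an input, not looked up).
print(f"Long-term tax: ${capital_gain(long_hold) * 0.15:,.0f}")
```

The gain is identical in both cases; only the holding-period classification changes, and with it the rate that applies.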

While the basic formula seems simple, real-world crypto investing introduces many complexities. Take Sarah, an investor who bought Bitcoin at various prices: $5,000, $10,000, $15,000, and $20,000. When she sells part of her holdings, which purchase price should she use to calculate her cost basis?

This question highlights the importance of selecting a cost basis method. The IRS permits several approaches: FIFO (First In, First Out) uses the oldest purchase price; LIFO (Last In, First Out) uses the most recent purchase price; and HIFO (Highest In, First Out) uses the highest purchase price to minimize gains. These are all different cost basis methods, and the accounting method you choose can significantly affect your tax liability.
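To make the difference between these methods concrete, here is a small sketch using Sarah's four purchase prices. It assumes, purely for illustration, that each purchase was exactly 1 BTC and that the lots are listed oldest first; the function name `cost_basis_sold` and the lot layout are hypothetical.

```python
# Sarah's lots, oldest first, as (price_per_btc, quantity).
# Assumption for illustration: each purchase was exactly 1 BTC.
SARAH_LOTS = [(5_000, 1), (10_000, 1), (15_000, 1), (20_000, 1)]


def cost_basis_sold(lots, quantity, method="FIFO"):
    """Return the total cost basis of `quantity` units under the given method."""
    if method == "FIFO":      # oldest lots are sold first
        ordered = list(lots)
    elif method == "LIFO":    # newest lots are sold first
        ordered = list(reversed(lots))
    elif method == "HIFO":    # most expensive lots are sold first
        ordered = sorted(lots, key=lambda lot: lot[0], reverse=True)
    else:
        raise ValueError(f"unknown method: {method}")

    remaining = quantity
    basis = 0.0
    for price, qty in ordered:
        if remaining <= 0:
            break
        used = min(qty, remaining)  # take as much of this lot as needed
        basis += used * price
        remaining -= used
    if remaining > 0:
        raise ValueError("not enough units in the lots to cover the sale")
    return basis


for method in ("FIFO", "LIFO", "HIFO"):
    print(method, cost_basis_sold(SARAH_LOTS, 1, method))
```

Because Sarah's purchase prices rise over time, LIFO and HIFO pick the same lot here; they diverge only when the most recent purchase is not also the most expensive. For a sale of 1 BTC, FIFO gives the lowest basis ($5,000) and therefore the largest reported gain.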

Complications also arise from trading on multiple exchanges and moving crypto between different wallets. Most investors don’t stick to one platform—they might buy on Coinbase, trade on Binance, stake on other platforms, and transfer assets between wallets. Each platform maintains separate transaction records, and consolidating these into a complete transaction history is like assembling a complex puzzle. Tracking your crypto cost basis for each asset is crucial, especially when dealing with multiple transactions across different platforms.

Calculating capital gains on crypto involves more than just selling for fiat currency. Several other actions involving digital assets are considered taxable events, including trading one cryptocurrency for another and spending crypto on goods or services.

You owe capital gains tax whenever you dispose of or convert digital assets through these types of crypto transactions. The tax treatment of each event depends on the nature of the transaction, and the IRS provides specific guidance on how to report and classify these activities.

Not all crypto activities generate taxable events. Simply buying and holding digital assets doesn’t trigger a tax bill until you dispose of them. Transferring crypto between your own wallets is also non-taxable, though keeping detailed records of these crypto transactions is vital to track your cost basis accurately. Additionally, gifting crypto under the annual gift tax exclusion (set at $19,000 per recipient for 2025) doesn’t create taxable gains for the giver, but the recipient inherits the giver's cost basis (the original purchase price and acquisition date) for tax purposes. Proper documentation of the giver's cost basis is important for future tax reporting. The tax treatment of gifts and other crypto transactions should always be considered from a tax perspective to ensure compliance.

Crypto income encompasses a range of earnings from activities like mining, staking, airdrops, and earning interest through crypto lending platforms. For tax purposes, the IRS treats all these forms of crypto income as ordinary income, meaning they are taxed at your regular income tax rates based on your total taxable income. The key factor in determining your tax bill is the fair market value of the crypto assets at the time you receive them. For example, if you receive $1,000 worth of Bitcoin as a mining reward, you must report that $1,000 as taxable income on your tax return for the year.
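As a rough sketch of that rule, the snippet below totals ordinary income from a list of receipts, valuing each one at its fair market value when received. The function name, the tuple layout, and the staking entry (including its price) are hypothetical; the mining entry is sized to match the $1,000 example above.

```python
from decimal import Decimal


def ordinary_income(receipts):
    """Sum units * fair market value (USD per unit) at the time of receipt."""
    return sum(units * fmv for _label, units, fmv in receipts)


# (label, units received, assumed USD price per unit at receipt)
receipts = [
    ("mining reward (BTC)", Decimal("0.0125"), Decimal("80000")),  # worth $1,000
    ("staking reward (ETH)", Decimal("0.1"), Decimal("2500")),     # hypothetical entry
]

print(f"Ordinary income to report: ${ordinary_income(receipts):,.2f}")
```

`Decimal` is used instead of floats so that small fractional coin amounts do not pick up rounding noise.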

Accurate reporting of crypto income starts with maintaining a complete transaction history. You should record the date, time, amount, and fair market value of each crypto asset received. This information is essential for calculating your tax liability and ensuring your tax return is accurate. Using tax software or a crypto tax calculator can greatly simplify this process by automatically importing your transaction data from exchanges and wallets, calculating your gains and losses, and generating a comprehensive tax report.

Beyond mining and staking rewards, other types of crypto income—such as interest from lending platforms or profits from trading—are also subject to crypto tax. Each of these activities can have unique tax implications, so it’s wise to consult a tax professional or use specialized tax software to ensure you’re following IRS rules and reporting all taxable income correctly. By understanding how crypto income is taxed and taking steps to accurately calculate and report it, you can avoid unexpected tax bills and minimize your overall tax liability.

Given the complexities of calculating crypto capital gains across multiple exchanges, wallets, and hundreds of transactions, having robust tracking tools is essential. This is where Token Metrics, a leading crypto trading and analytics platform, comes into play.

Token Metrics provides comprehensive portfolio tracking by aggregating your positions across exchanges and wallets, giving you real-time visibility into your entire crypto portfolio. This unified view simplifies the daunting task of compiling transaction records from disparate sources—a critical first step in accurate tax calculation. Organizing your transactions by tax year is essential for proper reporting and ensures you meet IRS deadlines for each tax year.

Beyond tracking, Token Metrics offers advanced analytics that empower investors to make tax-efficient trading decisions year-round, rather than scrambling during tax season. By understanding your current cost basis, holding periods, and potential tax implications before executing trades, you can optimize timing to minimize your tax liability. The platform’s insights help you plan around the one-year holding period that distinguishes short-term from long-term capital gains rates.

For active traders with complex portfolios, Token Metrics provides detailed performance attribution and reconstructs your cost basis accurately. Its reporting features generate comprehensive documentation to support your tax calculations, which is crucial for IRS compliance and audit defense. Token Metrics helps users report crypto transactions accurately and assists in reporting crypto gains for tax compliance, making it easier to meet regulatory requirements.

Token Metrics also aids in identifying opportunities for tax-loss harvesting, a strategy where you sell depreciated assets to realize losses that offset capital gains. By clearly showing which positions are underwater and by how much, the platform enables strategic loss realization that reduces your overall tax bill while maintaining your desired market exposure. Tools like Token Metrics are invaluable for managing cryptocurrency taxes and streamlining the entire tax preparation process.

Missing cost basis is a common challenge for crypto investors, especially those who have been active in the market for several years or have moved assets between multiple wallets and exchanges. The cost basis is the original purchase price of your crypto asset, including any transaction fees. Without this information, it becomes difficult to accurately calculate your capital gains or losses when you sell, trade, or otherwise dispose of your crypto.

To resolve missing cost basis, start by gathering as much information as possible about the original transaction. Check your exchange records, wallet transaction histories, and any other documentation that might indicate the purchase price, date, and amount of the crypto asset. If you’re unable to locate the original purchase price, some tax software can help estimate your cost basis based on available transaction records. However, using an estimated cost basis can be risky, as the IRS may scrutinize these calculations during an audit.

Maintaining accurate and complete transaction records is the best way to avoid missing cost basis issues in the future. Platforms like Token Metrics can help you track and calculate cost basis for each crypto asset, generate a detailed tax report, and ensure you’re prepared for tax season. If you’re unsure about how to calculate cost basis or need to estimate it due to missing information, consulting a tax professional is highly recommended. By resolving missing cost basis issues and keeping thorough records, you can accurately calculate your capital gains, comply with IRS rules, and minimize your tax liability.

There are a few strategies you can use to reduce your tax bill when dealing with cryptocurrency. These include tax-loss harvesting, holding assets for long-term gains, and careful planning of your transactions.

Capital losses can be a powerful tool for managing your tax bill. You can use capital losses to offset capital gains dollar-for-dollar, lowering your taxable income. If your losses exceed your gains, you can deduct up to $3,000 of net capital loss against ordinary income each year, with remaining losses carrying forward to future tax years.
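The netting rule described here can be sketched as follows. This is a deliberately simplified model: it treats all gains and losses as a single pool, ignoring the separate short-term and long-term netting steps and any filing-status differences, and the function name is hypothetical.

```python
ANNUAL_LOSS_DEDUCTION_CAP = 3_000  # net capital loss deductible against ordinary income per year


def net_capital_result(total_gains, total_losses):
    """Offset gains with losses, then apply the annual cap to any excess loss."""
    net = total_gains - total_losses
    if net >= 0:
        # Losses were fully absorbed by gains; the remainder is taxable gain.
        return {"taxable_gain": net, "deduction": 0, "carryforward": 0}
    excess_loss = -net
    deduction = min(excess_loss, ANNUAL_LOSS_DEDUCTION_CAP)
    return {
        "taxable_gain": 0,
        "deduction": deduction,                     # reduces ordinary income this year
        "carryforward": excess_loss - deduction,    # carried into future tax years
    }


print(net_capital_result(10_000, 4_000))  # gains exceed losses
print(net_capital_result(2_000, 9_000))   # losses exceed gains
```

In the second call, $2,000 of gains is wiped out, $3,000 of the remaining $7,000 loss is deducted this year, and $4,000 carries forward.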

Savvy investors practice tax-loss harvesting throughout the year, especially during market downturns. This approach is similar to strategies used for traditional investments like stocks. By selling depreciated positions to realize losses, they generate tax deductions and may repurchase similar assets to maintain exposure. It’s important to note that the IRS wash sale rule, which disallows losses on securities repurchased within 30 days, currently does not apply to cryptocurrency, though proposed regulations could change this.
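Finding candidates for tax-loss harvesting amounts to comparing each position's cost basis with its current market value. The sketch below shows one way to do that; the position names and dollar figures are made up for illustration, and `harvest_candidates` is a hypothetical helper, not part of any platform's API.

```python
def harvest_candidates(positions):
    """Return (name, unrealized_loss) pairs for underwater positions, largest loss first.

    positions maps a name to (cost_basis_usd, current_market_value_usd).
    """
    losses = [
        (name, basis - value)
        for name, (basis, value) in positions.items()
        if value < basis  # only positions worth less than their cost basis
    ]
    return sorted(losses, key=lambda item: item[1], reverse=True)


# Hypothetical portfolio: (cost basis, current market value)
portfolio = {
    "BTC": (70_000, 80_000),  # in profit, not a candidate
    "ETH": (4_000, 3_200),
    "SOL": (2_500, 1_500),
}

for name, loss in harvest_candidates(portfolio):
    print(f"{name}: unrealized loss ${loss:,}")
```

Whether to actually sell any of these positions depends on the rest of the portfolio and on the tax rules in force at the time.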

You are required to pay taxes on gains from crypto activities, including trading, selling, or spending your crypto. Holding crypto for over a year before selling can substantially reduce your tax liability. The difference between ordinary income tax rates (up to 37%) and long-term capital gains rates (max 20%) can save tens of thousands of dollars on large gains. Patient investors who plan their sales strategically can significantly lower their tax liability.

If you mine cryptocurrency or operate as a self-employed individual, you may also be subject to self-employment tax, which covers Social Security and Medicare contributions, in addition to income and capital gains taxes.

Starting in 2025, cryptocurrency exchanges are required to report your transactions and wallet addresses directly to the IRS, making meticulous record keeping for all your digital assets more important than ever. You must maintain detailed documentation including transaction dates, amounts, fair market values at transaction time, involved parties, and the purpose of each transaction.

For tax reporting, you’ll use IRS Form 8949 to report your capital gains and losses, transferring totals to Schedule D. Income from mining, staking, or business activities, such as operating a crypto mining business, is reported on Schedule 1 or Schedule C. Due to the complexity of these forms, many investors rely on tax preparation software or consult a tax professional to ensure accuracy.

Platforms like Token Metrics simplify this process by maintaining a complete transaction history and providing organized reports ready for tax filing. Instead of manually reconstructing hundreds or thousands of transactions from multiple exchanges and wallets, you get centralized, accurate records that streamline your tax return preparation.

Federal taxes are only part of your overall tax obligation. Depending on your state of residence, you may owe additional state taxes on your crypto gains. States such as California, New York, and New Jersey impose significant taxes on investment income, while others like Texas, Florida, and Nevada have no state income tax. Your total tax liability is the sum of your federal and state obligations, so it’s important to understand your local tax rules.

Learning how to calculate capital gains on crypto is crucial to managing your cryptocurrency investments responsibly and minimizing your tax burden. Calculating capital gains requires understanding IRS rules, maintaining detailed records, selecting appropriate accounting methods, and planning around holding periods and loss harvesting.

The complexity of cryptocurrency taxation, especially for active traders, makes reliable analytics and reporting tools indispensable. Token Metrics offers the comprehensive tracking, analysis, and reporting capabilities you need to navigate crypto taxes confidently. Its real-time portfolio visibility, accurate cost basis calculations, and tax-efficient trading insights transform the daunting task of crypto tax compliance into a manageable process.

As IRS enforcement intensifies and cryptocurrency tax regulations evolve, having sophisticated tools and accurate data becomes more valuable than ever. Whether you’re a casual investor with a few transactions or an active trader managing complex portfolios, understanding how to calculate capital gains correctly—and leveraging platforms like Token Metrics—protects you from costly errors while optimizing your tax position.


As cryptocurrency portfolios grow in value, understanding the safest way to store large crypto holdings becomes a critical concern for investors. In 2024 alone, over $2.2 billion was stolen through various crypto hacks and scams, highlighting the vulnerabilities in digital asset protection and the risk of loss or theft that comes with storing large amounts of cryptocurrency. Recent high-profile incidents, such as Coinbase’s May 2025 cyberattack that exposed customer information, underscore the urgent need for robust crypto security measures. Unlike traditional bank accounts that benefit from FDIC insurance and fraud protection, stolen cryptocurrency cannot be refunded or insured through conventional means. This reality makes choosing the right storage method essential for anyone holding significant crypto assets.

When it comes to crypto storage, the fundamental distinction lies in whether wallets are connected to the internet. There are different types of crypto wallets, each offering unique benefits and security features. Hot wallets are always online, making them convenient for trading, transactions, and quick access to funds. However, their constant internet connection makes them inherently vulnerable to hacking, phishing, and malware attacks. Examples include mobile, desktop, and web-based wallets, which are often used for daily spending or quick access to tokens.

On the other hand, cold wallets—also known as cold storage—store private keys completely offline. This means they are disconnected from the internet, drastically reducing the risk of remote attacks. Cold wallets are ideal for long term storage of large crypto assets, where security takes precedence over convenience. A custodial wallet is another option, where a third-party provider, such as an exchange, manages and holds your private keys on your behalf, offering convenience but less direct control compared to non-custodial wallets.

Think of hot wallets as your checking account: convenient but not meant for holding large sums. Cold wallets function like a safety deposit box, providing secure storage for assets you don’t need to access frequently. Crypto wallets use a public key as an address to receive funds, while the private key is used to sign transactions. For large holdings, experts recommend a tiered approach: keep only small amounts in hot wallets for active use, while storing the majority in cold storage. This balances security and access while limiting how much can be lost if a hot wallet is compromised.

Among cold storage options, hardware wallets are widely regarded as the safest and most practical solution for individual investors managing large cryptocurrency holdings. These physical devices, often resembling USB drives, securely store your private keys offline and only connect to the internet briefly when signing transactions.

Leading hardware wallets in 2025 include the Ledger Nano X, Ledger Flex, and Trezor Safe 5. These devices use secure element chips (the same technology found in credit cards and passports) to safeguard keys even if the hardware is physically compromised. By keeping private keys offline, hardware wallets protect your assets from malware, hacking, and remote theft.

To maximize safety when using hardware wallets, always purchase devices directly from manufacturers like Ledger or Trezor to avoid tampered products. When you create your wallet, securely generate and store your seed phrase or recovery phrase by writing it on paper or metal backup solutions. Another option is a paper wallet, which is a physical printout of your private and public keys, used as a form of cold storage for cryptocurrencies. Store these backups in multiple secure locations such as fireproof safes or safety deposit boxes. For example, you might keep one copy of your paper wallet or backup phrase in a home safe and another in a bank safety deposit box to reduce the risk of loss. Never store recovery phrases digitally or photograph them, as this increases the risk of theft.

Enable all available security features, including PIN protection and optional passphrases, for an extra layer of encryption. For very large holdings, consider distributing assets across multiple hardware wallets from different manufacturers to eliminate single points of failure. The main limitation of hardware wallets is their physical vulnerability: if lost or destroyed without proper backup, your funds become irretrievable, making diligent backup practices essential.

For even greater protection, especially among families, businesses, and institutional investors, multi-signature (multisig) wallets provide distributed control over funds. Unlike traditional wallets that require a single private key to authorize transactions, multisig wallets require multiple keys to sign off, reducing the risk of theft or loss.

A common configuration is a 2-of-3 setup, where any two of three keys are needed to sign a transaction. Funds can be moved only when the required number of keys is available, which provides both redundancy and security. If one key is lost, the other two can still access the funds, while an attacker would need to compromise two keys at once. More complex configurations like 3-of-5 are common for very large holdings, allowing keys to be geographically distributed to further safeguard assets.
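The threshold logic behind an m-of-n setup can be illustrated with a toy model. This is not real multisig: actual wallets verify cryptographic signatures, whereas the sketch below only counts how many distinct authorized keys were presented. All names (`can_authorize`, the key labels) are hypothetical.

```python
def can_authorize(authorized_keys, presented_keys, threshold):
    """True if at least `threshold` distinct authorized keys were presented."""
    valid = set(presented_keys) & set(authorized_keys)  # ignore unknown keys and duplicates
    return len(valid) >= threshold


# A 2-of-3 setup with keys held in three different places (labels are illustrative).
KEYS = {"key_home", "key_bank", "key_trustee"}
THRESHOLD = 2

# Normal use: any two keys can move funds.
print(can_authorize(KEYS, {"key_home", "key_bank"}, THRESHOLD))      # True

# One key lost: the remaining two still suffice.
print(can_authorize(KEYS, {"key_bank", "key_trustee"}, THRESHOLD))   # True

# Attacker holding a single stolen key cannot move funds.
print(can_authorize(KEYS, {"key_home"}, THRESHOLD))                  # False
```

Raising the parameters to 3-of-5 only changes the size of `KEYS` and the value of `THRESHOLD`; the check itself stays the same.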

Popular multisig wallet providers in 2025 include BitGo, which supports over 1,100 digital assets and offers insurance coverage up to $250 million for funds stored. BitGo’s wallets combine hot and cold storage with multisig security, meeting regulatory standards for institutional clients. Other notable solutions include Gnosis Safe (now known as Safe) for Ethereum and EVM-compatible chains, and Unchained, which manages over 100,000 Bitcoin using 2-of-3 multisig vaults tailored for Bitcoin holders. While multisig wallets require more technical setup and can slow transaction processing due to the need for multiple signatures, their enhanced security makes them ideal for large holdings where protection outweighs convenience.

An innovative advancement in crypto storage is Multi-Party Computation (MPC) technology, rapidly becoming the standard for institutional custody. Unlike multisig wallets where multiple full private keys exist, MPC splits a single private key into encrypted shares distributed among several parties. The full key never exists in one place—not during creation, storage, or signing—greatly reducing the risk of theft.

MPC offers advantages over traditional multisig: it works seamlessly across all blockchains, transactions appear identical to regular ones on-chain enhancing privacy, and it avoids coordination delays common in multisig setups. Leading MPC custody providers like Fireblocks have demonstrated the security benefits of this approach. However, Fireblocks also revealed vulnerabilities in competing threshold signature wallets in 2022, highlighting the importance of ongoing security audits in this evolving field.

For individual investors, MPC-based wallets like Zengo provide keyless security without requiring a seed phrase, distributing key management across secure locations. Nevertheless, MPC solutions are primarily adopted by institutions, with firms like BitGo, Fireblocks, and Copper offering comprehensive custody services for family offices and corporations.

For extremely large holdings—often in the millions of dollars—professional institutional custody services offer unparalleled security infrastructure, insurance coverage, and regulatory compliance. These platforms typically facilitate not only secure storage but also the buying and selling of crypto assets as part of their comprehensive service offerings. Institutional custody solutions are commonly used to store bitcoin and other major cryptocurrencies securely, protecting them from theft, loss, and unauthorized access.

Regulated custodians implement multiple layers of protection. They undergo regular third-party audits and SOC certifications to verify their security controls. Many maintain extensive insurance policies covering both hot and cold storage breaches, sometimes with coverage reaching hundreds of millions of dollars. Professional key management minimizes user errors, and 24/7 security monitoring detects and responds to threats in real-time.

Despite these advantages, institutional custody carries counterparty risk. The Coinbase cyberattack in May 2025, which exposed customer personal information (though not passwords or private keys), served as a reminder that even the most secure platforms can be vulnerable. Similarly, the collapse of platforms like FTX, Celsius, and BlockFi revealed that custodial services can fail catastrophically, sometimes taking customer funds with them.

Therefore, thorough due diligence is essential when selecting institutional custodians. Verify their regulatory licenses, audit reports, insurance coverage, and operational history before entrusting significant funds.

Securing large crypto holdings is not just about storage—it also involves smart portfolio management and timely decision-making. Sophisticated analytics platforms have become essential tools for this purpose. Token Metrics stands out as a leading AI-powered crypto trading and analytics platform designed to help users manage large cryptocurrency portfolios effectively. While hardware wallets and multisig solutions protect your keys, Token Metrics provides real-time market intelligence across hundreds of cryptocurrencies, enabling holders to make informed decisions about when to move assets between hot wallets and cold storage. The platform also assists users in determining the optimal times to buy crypto as part of their overall portfolio management strategy, ensuring that purchases align with market trends and security considerations.

The platform’s AI-driven analysis helps investors identify market conditions that warrant moving assets out of cold storage to capitalize on trading opportunities or to secure profits by returning funds to cold wallets. This strategic timing can significantly enhance portfolio performance without compromising security. Token Metrics also offers customizable risk alerts, allowing holders to respond quickly to significant market movements without constant monitoring. Since launching integrated trading capabilities in March 2025, the platform provides an end-to-end solution connecting research, analysis, and execution. This is especially valuable for users managing hot wallets for active trading while keeping the bulk of their crypto assets securely stored offline. With AI-managed indices, portfolio rebalancing recommendations, and detailed token grades assessing both short-term and long-term potential, Token Metrics equips large holders with the analytical infrastructure necessary to safeguard and optimize their holdings.

Even the most secure storage methods can fail without proper security hygiene. Regardless of your chosen storage solution, certain best practices are essential:

When it comes to crypto storage, having a robust backup and recovery plan is just as essential as choosing the right wallet. No matter how secure your hardware wallet, hot wallet, or cold wallet may be, losing access to your private keys or recovery phrase can mean losing your crypto assets forever. That’s why safeguarding your ability to restore access is a cornerstone of crypto security.

For users of hardware wallets like the Ledger Nano or a Trezor model, the most critical step is to securely record your recovery phrase (also known as a seed phrase) when you first set up your device. This unique string of words is the master key to your wallet: if your hardware wallet is lost, stolen, or damaged, the recovery phrase allows you to restore your funds on a new device. Write your seed phrase down on paper or, for even greater protection, use a metal backup solution designed to withstand fire and water damage. Never store your recovery phrase digitally, such as in a note-taking app or cloud storage, as these methods are vulnerable to hacking and malware.

It’s best practice to store your backup in a location separate from your hardware wallet—think a safe deposit box, a home safe, or another secure, private spot. For added security, consider splitting your backup between multiple locations or trusted individuals, especially if you’re managing significant crypto assets. This way, even if one location is compromised, your funds remain protected.

Non-custodial wallets, whether hardware or software-based, give you full control over your private keys and, by extension, your crypto. With this control comes responsibility: if you lose your recovery phrase or private key, there’s no customer support or password reset to help you regain access. That’s why diligent backup practices are non-negotiable for anyone serious about storing bitcoin or other digital assets securely.

For those seeking even greater protection, multi-signature wallets add another layer of security. By requiring multiple keys to authorize transactions, multi-signature setups make it much harder for hackers or thieves to access your funds—even if one key or device is lost or compromised. This method is especially valuable for families, businesses, or anyone managing large holdings who wants to reduce single points of failure.

If you ever suspect your wallet or recovery phrase has been compromised, act immediately: transfer your funds to a new wallet with a freshly generated seed phrase, and update your backup procedures. Similarly, if a hot wallet on your mobile device or desktop is hacked, move your assets to a secure cold wallet as quickly as possible.

Ultimately, backup and recovery are not just technical steps; they are your safety net. Whether you use hardware wallets, hot wallets, cold wallets, or even paper wallets, always create and securely store a backup of your recovery phrase. Regularly review your backup strategy, and make sure trusted individuals know how to access your assets in case of emergency. By taking these precautions, you ensure that your crypto assets remain safe, secure, and accessible, no matter what happens.

For large cryptocurrency holdings, a multi-layered storage strategy offers the best balance of security and accessibility. A common approach for portfolios exceeding six figures includes:

This tiered framework ensures that even if one layer is compromised, the entire portfolio remains protected. Combined with platforms like Token Metrics for market intelligence and risk management, this strategy offers both security and operational flexibility.
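The arithmetic behind a tiered split is simple to sketch. The tier names and weights below are hypothetical, chosen only to illustrate keeping the majority in cold storage and a small portion accessible for trading; they are not a recommendation and should be adjusted to your own risk tolerance.

```python
from decimal import Decimal

# Hypothetical tier weights for illustration only; they must sum to 1.
TIERS = {
    "cold_multisig_or_custody": Decimal("0.70"),
    "hardware_wallet": Decimal("0.25"),
    "hot_wallet": Decimal("0.05"),
}


def allocate(total_usd: Decimal) -> dict[str, Decimal]:
    """Split a portfolio value across the tiers, rounded to whole cents."""
    if sum(TIERS.values()) != Decimal("1"):
        raise ValueError("tier weights must sum to 1")
    return {
        tier: (total_usd * weight).quantize(Decimal("0.01"))
        for tier, weight in TIERS.items()
    }


plan = allocate(Decimal("500000"))
for tier, amount in plan.items():
    print(f"{tier}: ${amount}")
```

With these assumed weights, a compromise of the hot wallet exposes only the smallest slice of the portfolio.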

In 2025, securing large cryptocurrency holdings requires a deep understanding of various storage technologies and the implementation of layered security strategies. Hardware wallets remain the gold standard for individual investors, while multisig wallets and MPC solutions provide enhanced protection for very large or institutional holdings.

There is no one-size-fits-all answer to what's the safest way to store large crypto holdings. The ideal approach depends on factors like portfolio size, technical skill, transaction frequency, and risk tolerance. Most large holders benefit from distributing assets across multiple storage methods, keeping the majority in cold storage and a smaller portion accessible for trading.

Ultimately, cryptocurrency security hinges on effective private key management. Protecting these keys from unauthorized access while ensuring you can access them when needed is paramount. By combining robust storage solutions, disciplined security practices, and advanced analytics tools like Token Metrics, investors can safeguard their crypto assets effectively while maintaining the flexibility to seize market opportunities.

As the cryptocurrency landscape evolves, so will storage technologies. Stay informed, regularly review your security setup, and never become complacent. In the world of digital assets, your security is your responsibility, and with large holdings, that responsibility matters more than ever.


The cryptocurrency industry experienced a turning point on July 18, 2025, when President Donald Trump signed the GENIUS Act into law. This landmark law is the first major federal crypto legislation ever passed by Congress and fundamentally reshapes the regulatory landscape for stablecoins. The GENIUS Act brings much-needed clarity and oversight to digital assets, including digital currency, signaling a dramatic shift in how the United States approaches the rapidly evolving crypto space. For anyone involved in cryptocurrency investing, trading, or innovation, understanding what the GENIUS Act is and how it affects crypto is essential to navigating this new era of regulatory clarity.

The digital asset landscape is undergoing a profound transformation, with the GENIUS Act representing a pivotal moment in establishing national innovation for U.S. stablecoins. Digital assets—ranging from cryptocurrencies and stablecoins to digital tokens and digital dollars—are at the forefront of financial innovation, reshaping how individuals, businesses, and financial institutions interact with money and value. As decentralized finance (DeFi) and digital finance continue to expand, the need for regulatory clarity and robust consumer protections has never been greater.

The GENIUS Act aims to address these needs by introducing clear rules for stablecoin issuers and setting a new standard for regulatory oversight in the crypto industry. By requiring permitted payment stablecoin issuers to maintain 1:1 reserves in highly liquid assets such as U.S. treasury bills, the Act ensures that stablecoin holders can trust in the stable value of their digital assets. This move not only protects consumers but also encourages greater participation from traditional banks, credit unions, and other financial institutions that had previously been wary of the regulatory uncertainties surrounding digital currencies.
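The 1:1 backing requirement reduces to a simple ratio: liquid reserves divided by tokens outstanding must be at least one. The sketch below illustrates that check with hypothetical issuer figures; the function names are illustrative, and the statute's actual definitions of eligible reserve assets and reporting rules are more detailed than this.

```python
from decimal import Decimal


def reserve_ratio(liquid_reserves_usd: Decimal, tokens_outstanding: Decimal) -> Decimal:
    """Return dollars of liquid reserves held per token in circulation."""
    if tokens_outstanding <= 0:
        raise ValueError("tokens_outstanding must be positive")
    return liquid_reserves_usd / tokens_outstanding


def meets_one_to_one(liquid_reserves_usd: Decimal, tokens_outstanding: Decimal) -> bool:
    """True when every outstanding token is backed by at least one dollar."""
    return reserve_ratio(liquid_reserves_usd, tokens_outstanding) >= Decimal("1")


# Hypothetical issuer figures, for illustration only.
fully_backed = meets_one_to_one(Decimal("1000000000"), Decimal("1000000000"))
under_backed = meets_one_to_one(Decimal("950000000"), Decimal("1000000000"))
```

An issuer holding $950 million against one billion tokens has a ratio of 0.95 and would fail the check.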

One of the GENIUS Act’s most significant contributions is its comprehensive regulatory framework, which brings together federal and state regulators, the Federal Reserve, and the Federal Deposit Insurance Corporation to oversee payment stablecoin issuers. The Act also opens the door for foreign issuers to operate in the U.S. under specific conditions, further enhancing the role of cross-border payments in the global digital asset ecosystem. By aligning stablecoin regulation with the Bank Secrecy Act, the GENIUS Act requires issuers to implement robust anti-money laundering and customer identification measures, strengthening the integrity of the digital asset market.

President Trump’s signing of the GENIUS Act into law marks a turning point for both the crypto space and the broader financial markets. The Act’s focus on protecting consumers, fostering stablecoin adoption, and promoting financial innovation is expected to drive significant growth in digital finance. Crypto companies and major financial institutions now have a clear regulatory pathway, enabling them to innovate with confidence and contribute to the ongoing evolution of digital currencies.

As the digital asset market matures, staying informed about regulatory developments, such as the GENIUS Act and the proposed Digital Asset Market Clarity Act, is essential for anyone looking to capitalize on the opportunities presented by digital finance. The GENIUS Act establishes a solid foundation for the regulation of payment stablecoins, ensuring legal protections for stablecoin buyers and holders, and setting the stage for future advancements in the crypto industry. With clear rules, strong consumer protections, and a commitment to national innovation for U.S. stablecoins, the GENIUS Act is shaping the future of digital assets and guiding the next era of financial markets.

The GENIUS Act, officially known as the Guiding and Establishing National Innovation for U.S. Stablecoins Act, establishes the first comprehensive federal regulatory framework specifically designed for stablecoins in the United States. Introduced by Senator Bill Hagerty (R-Tennessee) on May 1, 2025, the bill received strong bipartisan support, passing the Senate 68-30 on June 17, 2025, before clearing the House on July 17, 2025.

Stablecoins are a class of cryptocurrencies engineered to maintain a stable value by pegging their worth to another asset, typically the U.S. dollar. Unlike highly volatile crypto assets such as Bitcoin or Ethereum, stablecoins provide price stability, making them ideal for payments, trading, and serving as safe havens during market turbulence. At the time of the GENIUS Act’s passage, the two largest stablecoins—Tether (USDT) and USD Coin (USDC)—dominated a $238 billion stablecoin market.

This legislation emerged after years of regulatory uncertainty that left stablecoin issuers operating in a legal gray zone. The collapse of TerraUSD in 2022, which wiped out billions of dollars in value, underscored the risks of unregulated stablecoins and accelerated calls for federal oversight. The GENIUS Act aims to address these concerns by establishing clear standards for reserve backing, consumer protection, and operational transparency, thereby fostering national innovation in digital finance.