Top Crypto Trading Platforms in 2025

Big news: We’re cranking up the heat on AI-driven crypto analytics with the launch of the Token Metrics API and our official SDK (Software Development Kit). This isn’t just an upgrade – it's a quantum leap, giving traders, hedge funds, developers, and institutions direct access to cutting-edge market intelligence, trading signals, and predictive analytics.

Crypto markets move fast, and having real-time, AI-powered insights can be the difference between catching the next big trend or getting left behind. Until now, traders and quants have been wrestling with scattered data, delayed reporting, and a lack of truly predictive analytics. Not anymore.

The Token Metrics API delivers 32+ high-performance endpoints packed with powerful AI-driven insights right into your lap, including:

Getting started with the Token Metrics API is simple:

At Token Metrics, we believe data should be decentralized, predictive, and actionable.

The Token Metrics API & SDK bring next-gen AI-powered crypto intelligence to anyone looking to trade smarter, build better, and stay ahead of the curve. With our official SDK, developers can plug these insights into their own trading bots, dashboards, and research tools – no need to reinvent the wheel.

Crypto is transitioning into a broadly bullish regime into 2026 as liquidity improves and adoption deepens.

Regulatory clarity is reshaping the classic four-year cycle: flows can arrive earlier and persist longer as institutions gain confidence.

Access and infrastructure continue to mature with ETFs, qualified custody, and faster L2 scaling that reduce frictions for new capital.

Real‑world integrations expand the surface area for crypto utility, which supports sustained participation across market phases.

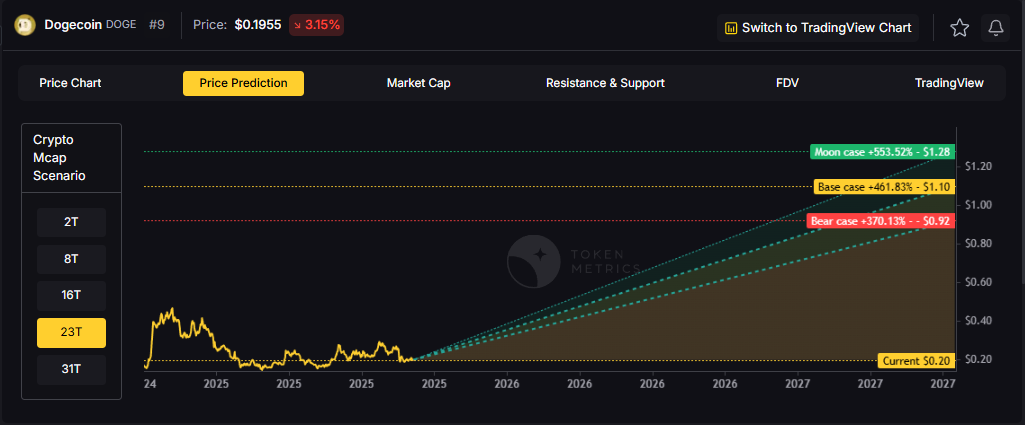

This backdrop frames our scenario work for DOGE. The bands below reflect different total market sizes and DOGE's share dynamics.

Read the TLDR first, then dive into grades, catalysts, and risks.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.
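The market-cap share component of a band can be sketched in a few lines. This is only an illustration of the arithmetic: the `implied_price` function, the supply figure, and the share figure are hypothetical placeholders rather than Token Metrics inputs, and the cycle analogues and TA guardrails mentioned above are not modeled.

```python
def implied_price(total_market_cap_usd: float,
                  token_share: float,
                  circulating_supply: float) -> float:
    """Price implied if a token holds `token_share` of total crypto market cap."""
    if not 0 <= token_share <= 1:
        raise ValueError("token_share must be a fraction between 0 and 1")
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    return total_market_cap_usd * token_share / circulating_supply


# Hypothetical inputs, for illustration only (not Token Metrics figures).
ASSUMED_SUPPLY = 100e9   # assumed circulating supply, in tokens
ASSUMED_SHARE = 0.005    # assumed 0.5% share of total market cap

for cap_trillions in (8, 16, 23, 31):
    price = implied_price(cap_trillions * 1e12, ASSUMED_SHARE, ASSUMED_SUPPLY)
    print(f"{cap_trillions}T total market cap -> implied price ${price:.3f}")
```

Holding the share fixed, the implied price scales linearly with total market cap, which is why the larger market-size tiers lift every band.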

TM Agent baseline: Token Metrics' lead metric, TM Grade, is 22.65 (Sell), and the trading signal is bearish, indicating short-term downward momentum. Price context: $DOGE is trading around $0.193, rank #9, down about 3.1% in 24 hours and roughly 16% over 30 days. Implication: upside likely requires a broader risk-on environment and renewed retail or celebrity-driven interest.

Live details: Dogecoin Token Details → https://app.tokenmetrics.com/en/dogecoin

• Scenario-driven: outcomes hinge on total crypto market cap, and higher liquidity and adoption lift the bands.

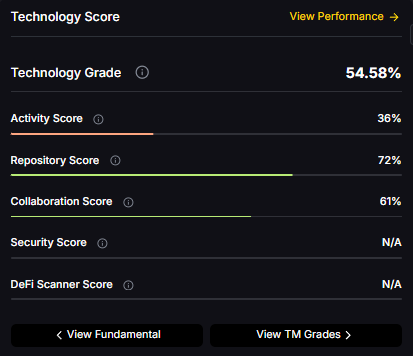

• Technology: Technology Grade 54.58% (Activity 36%, Repository 72%, Collaboration 61%, Security N/A, DeFi Scanner N/A).

• TM Agent gist: cautious long‑term stance until grades and momentum improve.

• Education only, not financial advice.

8T:

16T:

23T:

31T:

Diversification matters. Dogecoin is compelling, yet concentrated bets can be volatile. Token Metrics Indices hold DOGE alongside the top one hundred tokens for broad exposure to leaders and emerging winners.

Our backtests indicate that owning the full market with diversified indices has historically outperformed both the total market and Bitcoin in many regimes due to diversification and rotation.

Dogecoin is a peer-to-peer cryptocurrency that began as a meme but has evolved into a widely recognized digital asset used for tipping, payments, and community-driven initiatives. It runs on its own blockchain with inflationary supply mechanics. The token’s liquidity and brand awareness create periodic speculative cycles, especially during broad risk-on phases.

Technology Snapshot from Token Metrics

Technology Grade: 54.58% (Activity 36%, Repository 72%, Collaboration 61%, Security N/A, DeFi Scanner N/A).

Catalysts That Skew Bullish

• Institutional and retail access expands with ETFs, listings, and integrations.

• Macro tailwinds from lower real rates and improving liquidity.

• Product or roadmap milestones such as upgrades, scaling, or partnerships.

Risks That Skew Bearish

• Macro risk-off from tightening or liquidity shocks.

• Regulatory actions or infrastructure outages.

• Concentration or validator economics and competitive displacement.

Special Offer — Token Metrics Advanced Plan with 20% Off

Unlock platform-wide intelligence on every major crypto asset. Use code ADVANCED20 at checkout for twenty percent off.

• AI-powered ratings on thousands of tokens for traders and investors.

• Interactive TM AI Agent to ask any crypto question.

• Indices explorer to surface promising tokens and diversified baskets.

• Signal dashboards, backtests, and historical performance views.

• Watchlists, alerts, and portfolio tools to track what matters.

• Early feature access and enhanced research coverage.

Can DOGE reach $1.00?

Yes, multiple tiers imply levels above $1.00 by the 2027 horizon, including the 23T Base and all 31T scenarios. Not financial advice.

Is DOGE a good long-term investment?

Outcome depends on adoption, liquidity regime, competition, and supply dynamics. Diversify and size positions responsibly.

• Track live grades and signals: Token Details

• Join Indices Early Access

• Want exposure? Buy DOGE on MEXC

Disclosure

For educational purposes only, not financial advice. Crypto is volatile; do your own research and manage risk.

The crypto market is shifting toward a broadly bullish regime into 2026 as liquidity improves and risk appetite normalizes.

Regulatory clarity across major regions is reshaping the classic four-year cycle: flows can arrive earlier and persist longer.

Institutional access keeps expanding through ETFs and qualified custody, while L2 scaling and real-world integrations broaden utility.

Infrastructure maturity lowers frictions for capital, which supports deeper order books and more persistent participation.

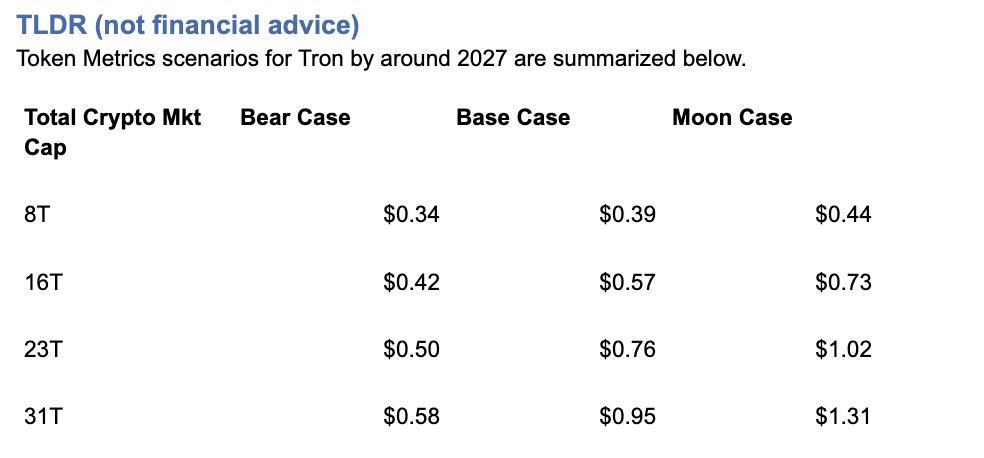

This backdrop frames our scenario work for TRX. The bands below map potential outcomes to different total crypto market sizes.

Use the table as a quick benchmark, then layer in live grades and signals for timing.

Current price: $0.2971.

How to read it: Each band blends cycle analogues and market-cap share math with TA guardrails. Base assumes steady adoption and neutral or positive macro. Moon layers in a liquidity boom. Bear assumes muted flows and tighter liquidity.

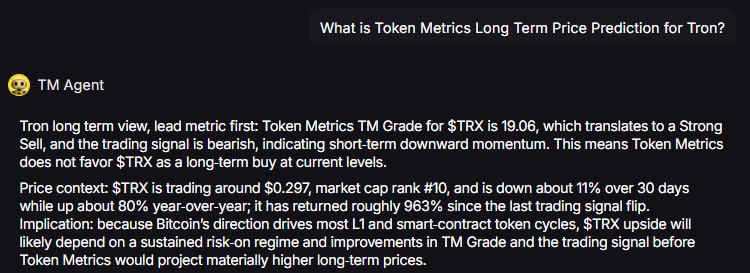

TM Agent baseline: Token Metrics TM Grade for $TRX is 19.06, which translates to a Strong Sell, and the trading signal is bearish, indicating short-term downward momentum. Price context: $TRX is trading around $0.297, market cap rank #10, and is down about 11% over 30 days while up about 80% year-over-year. It has returned roughly 963% since the last trading signal flip.

Live details: Tron Token Details → https://app.tokenmetrics.com/en/tron

Buy TRX: https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

• Scenario-driven: outcomes hinge on total crypto market cap, and higher liquidity and adoption lift the bands.

• TM Agent gist: bearish near term, upside depends on a sustained risk-on regime and improvements in TM Grade and the trading signal.

• Education only, not financial advice.

8T:

16T:

23T:

Diversification matters. Tron is compelling, yet concentrated bets can be volatile. Token Metrics Indices hold TRX alongside the top one hundred tokens for broad exposure to leaders and emerging winners.

Our backtests indicate that owning the full market with diversified indices has historically outperformed both the total market and Bitcoin in many regimes due to diversification and rotation.

Get early access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview

Tron is a smart-contract blockchain focused on low-cost, high-throughput transactions and cross-border settlement.

The network supports token issuance and a broad set of dApps, with an emphasis on stablecoin transfer volume and payments.

TRX is the native asset that powers fees and staking for validators and delegators within the network.

Developers and enterprises use the chain for predictable costs and fast finality, which supports consumer-facing use cases.

Catalysts That Skew Bullish

• Institutional and retail access expands with ETFs, listings, and integrations.

• Macro tailwinds from lower real rates and improving liquidity.

• Product or roadmap milestones such as upgrades, scaling, or partnerships.

Risks That Skew Bearish

• Macro risk-off from tightening or liquidity shocks.

• Regulatory actions or infrastructure outages.

• Concentration or validator economics and competitive displacement.

Unlock platform-wide intelligence on every major crypto asset. Use code ADVANCED20 at checkout for twenty percent off.

• AI-powered ratings on thousands of tokens for traders and investors.

• Interactive TM AI Agent to ask any crypto question.

• Indices explorer to surface promising tokens and diversified baskets.

• Signal dashboards, backtests, and historical performance views.

• Watchlists, alerts, and portfolio tools to track what matters.

• Early feature access and enhanced research coverage.

Start with Advanced today → https://www.tokenmetrics.com/token-metrics-pricing

Can TRX reach $1?

Yes, the 23T moon case shows $1.02 and the 31T moon case shows $1.31, which imply a path to $1 in higher-liquidity regimes. Not financial advice.

Is TRX a good long-term investment?

Outcome depends on adoption, liquidity regime, competition, and supply dynamics. Diversify and size positions responsibly.

Track live grades and signals: Token Details → https://app.tokenmetrics.com/en/tron

Join Indices Early Access: https://docs.google.com/forms/d/1AnJr8hn51ita6654sRGiiW1K6sE10F1JX-plqTUssXk/preview

Want exposure? Buy TRX on MEXC → https://www.mexc.com/acquisition/custom-sign-up?shareCode=mexc-2djd4

Disclosure

For educational purposes only, not financial advice. Crypto is volatile; do your own research and manage risk.

The cryptocurrency market presents unprecedented wealth-building opportunities, but it also poses significant challenges.

With thousands of tokens competing for investor attention and market volatility that can erase gains overnight, success in crypto investing requires more than luck—it demands a strategic, data-driven approach.

Token Metrics AI Indices have emerged as a game-changing solution for investors seeking to capitalize on crypto's growth potential while managing risk effectively.

This comprehensive guide explores how to leverage these powerful tools to build, manage, and optimize your cryptocurrency portfolio for maximum returns in 2025 and beyond.

The traditional approach to crypto investing involves countless hours of research, technical analysis, and constant market monitoring.

For most investors, this proves unsustainable.

Token Metrics solves this challenge by offering professionally managed, AI-driven index portfolios that automatically identify winning opportunities and rebalance based on real-time market conditions.

What makes Token Metrics indices unique is their foundation in machine learning technology.

The platform analyzes over 6,000 cryptocurrencies daily, processing more than 80 data points per asset including technical indicators, fundamental metrics, on-chain analytics, sentiment data, and exchange information.

This comprehensive evaluation far exceeds what individual investors can accomplish manually.

The indices employ sophisticated AI models including gradient boosting decision trees, recurrent neural networks, random forests, natural language processing algorithms, and anomaly detection frameworks.

These systems continuously learn from market patterns, adapt to changing conditions, and optimize portfolio allocations to maximize risk-adjusted returns.

Token Metrics offers a diverse range of indices designed to serve different investment objectives, risk tolerances, and market outlooks.

Understanding these options is crucial for building an effective crypto portfolio.

Conservative Indices: Stability and Long-Term Growth

For investors prioritizing capital preservation and steady appreciation, conservative indices focus on established, fundamentally sound cryptocurrencies with proven track records.

These indices typically allocate heavily to Bitcoin and Ethereum while including select large-cap altcoins with strong fundamentals.

The Investor Grade Index exemplifies this approach, emphasizing projects with solid development teams, active communities, real-world adoption, and sustainable tokenomics.

This index is ideal for retirement accounts, long-term wealth building, and risk-averse investors seeking exposure to crypto without excessive volatility.

Balanced Indices: Growth with Measured Risk

Balanced indices strike a middle ground between stability and growth potential.

These portfolios combine major cryptocurrencies with promising mid-cap projects that demonstrate strong technical momentum and fundamental strength.

The platform's AI identifies tokens showing positive divergence across multiple indicators—rising trading volume, improving developer activity, growing social sentiment, and strengthening technical patterns.

Balanced indices typically rebalance weekly or bi-weekly, capturing emerging trends while maintaining core positions in established assets.

Aggressive Growth Indices: Maximum Upside Potential

For investors comfortable with higher volatility in pursuit of substantial returns, aggressive growth indices target smaller-cap tokens with explosive potential.

These portfolios leverage Token Metrics' Trader Grade system to identify assets with strong short-term momentum and technical breakout patterns.

Aggressive indices may include DeFi protocols gaining traction, Layer-1 blockchains with innovative technology, AI tokens benefiting from market narratives, and memecoins showing viral adoption patterns.

While risk is higher, the potential for 10x, 50x, or even 100x returns makes these indices attractive for portfolio allocation strategies that embrace calculated risk.

Sector-Specific Indices: Thematic Investing

Token Metrics offers specialized indices targeting specific cryptocurrency sectors, allowing investors to align portfolios with their market convictions and thematic beliefs.

• DeFi Index: Focuses on decentralized finance protocols including lending platforms, decentralized exchanges, yield aggregators, and synthetic asset platforms.

• Layer-1 Index: Concentrates on base-layer blockchains competing with Ethereum, including Solana, Avalanche, Cardano, Polkadot, and emerging ecosystems.

• AI and Machine Learning Index: Targets tokens at the intersection of artificial intelligence and blockchain technology.

• Memecoin Index: Contrary to traditional wisdom dismissing memecoins as purely speculative, Token Metrics recognizes that community-driven tokens can generate extraordinary returns.

This index uses AI to identify memecoins with genuine viral potential, active communities, and sustainable momentum before they become mainstream.

Success with Token Metrics indices requires more than simply choosing an index—it demands a comprehensive portfolio strategy tailored to your financial situation, goals, and risk tolerance.

Step 1: Assess Your Financial Profile

Begin by honestly evaluating your investment capacity, time horizon, and risk tolerance.

Ask yourself critical questions: How much capital can I allocate to crypto without compromising financial security? What is my investment timeline—months, years, or decades? How would I react emotionally to a 30% portfolio drawdown? What returns do I need to achieve my financial goals?

Your answers shape your portfolio construction.

Conservative investors with shorter timelines should emphasize stable indices, while younger investors with longer horizons can embrace more aggressive strategies.

Step 2: Determine Optimal Allocation Percentages

Financial advisors increasingly recommend including cryptocurrency in diversified portfolios, but the appropriate allocation varies significantly based on individual circumstances.

• Conservative Allocation (5-10% of portfolio): Suitable for investors approaching retirement or with low risk tolerance. Focus 80% on conservative indices, 15% on balanced indices, and 5% on sector-specific themes you understand deeply.

• Moderate Allocation (10-20% of portfolio): Appropriate for mid-career professionals building wealth. Allocate 50% to conservative indices, 30% to balanced indices, and 20% to aggressive growth or sector-specific indices.

• Aggressive Allocation (20-30%+ of portfolio): Reserved for younger investors with high risk tolerance and long time horizons. Consider 30% conservative indices for stability, 30% balanced indices for steady growth, and 40% split between aggressive growth and thematic sector indices.
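The three allocation tiers above reduce to simple weight tables. The sketch below turns a crypto budget into dollar amounts per index category. The dictionary keys and the `split_allocation` helper are illustrative names, not part of any Token Metrics tool.

```python
# Weights copied from the three tiers described above; key names are illustrative.
PROFILES = {
    "conservative": {"conservative": 0.80, "balanced": 0.15, "sector": 0.05},
    "moderate": {"conservative": 0.50, "balanced": 0.30, "growth_or_sector": 0.20},
    "aggressive": {"conservative": 0.30, "balanced": 0.30, "growth_and_sector": 0.40},
}


def split_allocation(crypto_budget: float, profile: str) -> dict:
    """Split a crypto budget (in dollars) across index categories for a profile."""
    weights = PROFILES[profile]
    # Guard against a weight table that does not sum to 100%.
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError(f"weights for {profile!r} do not sum to 1")
    return {name: round(crypto_budget * w, 2) for name, w in weights.items()}


# Example: a 5% crypto slice of a $500,000 portfolio, using the conservative tier.
print(split_allocation(500_000 * 0.05, "conservative"))
```

Keeping the weights in one table makes it easy to confirm that each tier sums to 100% before any money moves.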

Step 3: Implement Dollar-Cost Averaging

Rather than investing your entire allocation at once, implement a dollar-cost averaging strategy over 3-6 months.

This approach reduces timing risk and smooths out entry prices across market cycles.

For example, if allocating $10,000 to Token Metrics indices, invest $2,000 monthly over five months.

This strategy proves particularly valuable in volatile crypto markets where timing the perfect entry proves nearly impossible.
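As a minimal sketch of the arithmetic, the helper below splits an allocation into equal monthly installments and computes the resulting average entry price. The function names and the price series are hypothetical and chosen only to show the calculation.

```python
def dca_schedule(total: float, months: int) -> list:
    """Split `total` dollars into `months` near-equal installments (exact to the cent)."""
    if months <= 0:
        raise ValueError("months must be positive")
    total_cents = round(total * 100)
    base, extra = divmod(total_cents, months)
    # Spread any leftover cents over the first few installments.
    return [(base + (1 if i < extra else 0)) / 100 for i in range(months)]


def average_entry_price(installments: list, prices: list) -> float:
    """Total dollars invested divided by total units bought."""
    units = sum(amount / price for amount, price in zip(installments, prices))
    return sum(installments) / units


schedule = dca_schedule(10_000, 5)             # the $10,000 over five months example
hypothetical_prices = [100, 80, 125, 90, 110]  # made-up index prices, one per month
print(schedule)
print(round(average_entry_price(schedule, hypothetical_prices), 2))
```

Because each fixed installment buys more units when the price is low, the average entry price lands at or below the simple average of the purchase prices.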

Step 4: Set Up Automated Rebalancing

Token Metrics indices automatically rebalance based on AI analysis, but you should also establish personal portfolio rebalancing rules.

Review your overall allocation quarterly and rebalance if any index deviates more than 10 percentage points from your target allocation.

If aggressive growth indices perform exceptionally well and grow from 20% to 35% of your crypto portfolio, take profits and rebalance back to your target allocation.

This disciplined approach ensures you systematically lock in gains and maintain appropriate risk levels.
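The quarterly check can be sketched as a small drift test. The example assumes the 10% rule is measured in percentage points of the crypto portfolio, which is one reading of the text. The `rebalance_trades` helper and the dollar figures are illustrative.

```python
def rebalance_trades(holdings: dict, targets: dict, threshold: float = 0.10) -> dict:
    """Return dollar trades that restore target weights, or {} if drift is within threshold.

    holdings: current dollar value per index
    targets:  target weight per index (fractions that sum to 1)
    Positive trade values mean buy, negative values mean sell.
    """
    total = sum(holdings.values())
    if total <= 0:
        raise ValueError("portfolio value must be positive")
    drifted = any(abs(holdings[name] / total - targets[name]) > threshold
                  for name in targets)
    if not drifted:
        return {}
    return {name: round(targets[name] * total - holdings[name], 2) for name in targets}


# Aggressive growth has grown from a 20% target to 35% of a $10,000 crypto portfolio.
holdings = {"conservative": 4000, "balanced": 2500, "aggressive": 3500}
targets = {"conservative": 0.50, "balanced": 0.30, "aggressive": 0.20}
print(rebalance_trades(holdings, targets))
```

In this example the aggressive sleeve sits 15 points above target, so the function proposes selling part of it and topping up the other two sleeves.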

Step 5: Monitor Performance and Adjust Strategy

While Token Metrics indices handle day-to-day portfolio management, you should conduct quarterly reviews assessing overall performance, comparing returns to benchmarks like Bitcoin and Ethereum, evaluating whether your risk tolerance has changed, and considering whether emerging market trends warrant allocation adjustments.

Use Token Metrics' comprehensive analytics to track performance metrics including total return, volatility, Sharpe ratio, maximum drawdown, and correlation to major cryptocurrencies.

These insights inform strategic decisions about continuing, increasing, or decreasing exposure to specific indices.
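For readers who want to see what these metrics measure, here is a rough sketch that computes four of them from a series of portfolio values. It uses textbook definitions and daily annualization as assumptions. It is not how Token Metrics calculates its analytics, and correlation is left out for brevity.

```python
import math
import statistics


def performance_metrics(prices, periods_per_year=365, risk_free_rate=0.0):
    """Summary statistics for a series of portfolio values, oldest first."""
    if len(prices) < 3:
        raise ValueError("need at least three observations")
    # Simple period-over-period returns.
    returns = [prices[i] / prices[i - 1] - 1 for i in range(1, len(prices))]
    period_vol = statistics.stdev(returns)
    mean_excess = statistics.mean(returns) - risk_free_rate / periods_per_year

    # Maximum drawdown: worst peak-to-trough decline, as a negative fraction.
    peak, max_drawdown = prices[0], 0.0
    for value in prices:
        peak = max(peak, value)
        max_drawdown = min(max_drawdown, value / peak - 1)

    annualizer = math.sqrt(periods_per_year)
    return {
        "total_return": prices[-1] / prices[0] - 1,
        "annualized_volatility": period_vol * annualizer,
        "sharpe_ratio": (mean_excess / period_vol * annualizer
                         if period_vol > 0 else float("nan")),
        "max_drawdown": max_drawdown,
    }


# Made-up portfolio values for illustration.
print(performance_metrics([100, 120, 90, 110]))
```

Crypto trades every day, which is why the sketch annualizes with 365 periods. Use 252 for assets that trade only on business days.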

Once comfortable with basic index investing, consider implementing advanced strategies to enhance returns and manage risk more effectively.

Tactical Overweighting

While maintaining core index allocations, temporarily overweight specific sectors experiencing favorable market conditions.

During periods of heightened interest in AI, increase allocation to the AI and Machine Learning Index by 5-10% at the expense of other sector indices.

Return to strategic allocation once the catalyst dissipates.

Combining Indices with Individual Tokens

Use Token Metrics indices for 70-80% of your crypto allocation while dedicating 20-30% to individual tokens identified through the platform's Moonshots feature.

This hybrid approach provides professional management while allowing you to pursue high-conviction opportunities.

Market Cycle Positioning

Adjust index allocations based on broader market cycles.

During bull markets, increase exposure to aggressive growth indices.

As conditions turn bearish, shift toward conservative indices with strong fundamentals.

Token Metrics' AI Indicator provides valuable signals for market positioning.

Even with sophisticated AI-driven indices, cryptocurrency investing carries substantial risks.

Implement robust risk management practices to protect your wealth.

Diversification Beyond Crypto

Never allocate so much to cryptocurrency that a market crash would devastate your financial position.

Most financial advisors recommend limiting crypto exposure to 5-30% of investment portfolios depending on age and risk tolerance.

Maintain substantial allocations to traditional assets—stocks, bonds, real estate—that provide diversification and stability.

Position Sizing and Security

Consider implementing portfolio-level stop-losses if your crypto allocation declines significantly from its peak.

Use hardware wallets or secure custody solutions for significant holdings.

Implement strong security practices including two-factor authentication and unique passwords.

Tax Optimization

Cryptocurrency taxation typically involves capital gains taxes on profits.

Consult tax professionals to optimize your strategy through tax-loss harvesting and strategic rebalancing timing.

Token Metrics' transaction tracking helps maintain accurate records for tax reporting.

Several factors distinguish Token Metrics indices from alternatives and explain their consistent outperformance.

Token Metrics indices respond to market changes in real-time rather than waiting for scheduled monthly or quarterly rebalancing.

This responsiveness proves crucial in crypto markets where opportunities can appear and disappear rapidly.

The platform's AI evaluates dozens of factors simultaneously—technical patterns, fundamental strength, on-chain metrics, sentiment analysis, and exchange dynamics.

This comprehensive approach identifies tokens that traditional indices would miss.

The AI continuously learns from outcomes, improving predictive accuracy over time.

Models that underperform receive reduced weighting while successful approaches gain influence, creating an evolving system that adapts to changing market dynamics.
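One generic way to implement this kind of performance-based reweighting is a multiplicative-weights update, sketched below. This illustrates the general idea only. The source does not describe Token Metrics' actual weighting method, and the model names, error values, and learning rate are hypothetical.

```python
import math


def update_weights(weights: dict, errors: dict, learning_rate: float = 0.5) -> dict:
    """Shrink each model's weight exponentially in its recent error, then renormalize."""
    scaled = {name: w * math.exp(-learning_rate * errors[name])
              for name, w in weights.items()}
    total = sum(scaled.values())
    return {name: value / total for name, value in scaled.items()}


# Three hypothetical models start with equal influence.
weights = {"gradient_boosting": 1 / 3, "rnn": 1 / 3, "random_forest": 1 / 3}
# Hypothetical recent prediction errors (lower is better).
errors = {"gradient_boosting": 0.2, "rnn": 0.9, "random_forest": 0.4}
print(update_weights(weights, errors))
```

After the update, the model with the smallest error carries the largest weight, and the weights still sum to one.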

Token Metrics' extensive coverage of 6,000+ tokens provides exposure to emerging projects before they gain mainstream attention, positioning investors for maximum appreciation potential.

To illustrate practical application, consider several investor profiles and optimal index strategies.

Profile 1: Conservative 55-Year-Old Preparing for Retirement

Total portfolio: $500,000

Crypto allocation: $25,000 (5%)

Strategy: $20,000 in Investor Grade Index (80%), $4,000 in Balanced Index (16%), $1,000 in DeFi Index (4%)

This conservative approach provides crypto exposure with minimal volatility, focusing on established assets likely to appreciate steadily without risking retirement security.

Profile 2: Moderate 35-Year-Old Building Wealth

Total portfolio: $150,000

Crypto allocation: $30,000 (20%)

Strategy: $12,000 in Investor Grade Index (40%), $9,000 in Balanced Index (30%), $6,000 in Layer-1 Index (20%), $3,000 in Aggressive Growth Index (10%)

This balanced approach captures crypto growth potential while maintaining stability through substantial conservative and balanced allocations.

Profile 3: Aggressive 25-Year-Old Maximizing Returns

Total portfolio: $50,000

Crypto allocation: $15,000 (30%)

Strategy: $4,500 in Investor Grade Index (30%), $3,000 in Balanced Index (20%), $4,500 in Aggressive Growth Index (30%), $3,000 in Memecoin Index (20%)

This aggressive strategy embraces volatility and maximum growth potential, appropriate for younger investors with decades to recover from potential downturns.

Ready to begin building wealth with Token Metrics indices?

Follow this action plan:

• Week 1-2: Sign up for Token Metrics' 7-day free trial and explore available indices, historical performance, and educational resources. Define your investment goals, risk tolerance, and allocation strategy using the frameworks outlined in this guide.

• Week 3-4: Open necessary exchange accounts and wallets. Fund accounts and begin implementing your strategy through dollar-cost averaging. Set up tracking systems and calendar reminders for quarterly reviews.

• Ongoing: Follow Token Metrics' index recommendations, execute rebalancing transactions as suggested, monitor performance quarterly, and adjust strategy as your financial situation evolves.

Cryptocurrency represents one of the most significant wealth-building opportunities in modern financial history, but capturing this potential requires sophisticated approaches that most individual investors cannot implement alone.

Token Metrics AI Indices democratize access to professional-grade investment strategies, leveraging cutting-edge machine learning, comprehensive market analysis, and real-time responsiveness to build winning portfolios.

Whether you're a conservative investor seeking measured exposure or an aggressive trader pursuing maximum returns, Token Metrics provides indices tailored to your specific needs.

The choice between random coin picking and strategic, AI-driven index investing is clear.

One approach relies on luck and guesswork; the other harnesses data, technology, and proven methodologies to systematically build wealth while managing risk.

Your journey to crypto investment success begins with a single decision: commit to a professional, strategic approach rather than speculative gambling.

Token Metrics provides the tools, insights, and management to transform crypto investing from a game of chance into a calculated path toward financial freedom.

Start your 7-day free trial today and discover how AI-powered indices can accelerate your wealth-building journey.

The future of finance is decentralized, intelligent, and accessible—make sure you're positioned to benefit.

Token Metrics stands out as a leader in AI-driven crypto index solutions.

With over 6,000 tokens analyzed daily and indices tailored to every risk profile, the platform provides unparalleled analytics, real-time rebalancing, and comprehensive investor education.

Its commitment to innovation and transparency makes it a trusted partner for building your crypto investment strategy in today's fast-evolving landscape.

Token Metrics indices use advanced AI models to analyze technical, fundamental, on-chain, and sentiment data across thousands of cryptocurrencies.

They construct balanced portfolios that are automatically rebalanced in real-time to adapt to evolving market conditions and trends.

There are conservative, balanced, aggressive growth, and sector-specific indices including DeFi, Layer-1, AI, and memecoins.

Each index is designed for a different investment objective, risk tolerance, and market outlook.

No mandatory minimum is specified for following Token Metrics index recommendations.

You can adapt your allocation based on your personal investment strategy, capacity, and goals.

Token Metrics indices are rebalanced automatically based on dynamic AI analysis, but it is recommended to review your overall crypto allocation at least quarterly to ensure alignment with your targets.

Token Metrics provides analytics and index recommendations; investors maintain custody of their funds and should implement robust security practices such as hardware wallets and two-factor authentication.

No investing approach, including AI-driven indices, can guarantee profits.

The goal is to maximize risk-adjusted returns through advanced analytics and professional portfolio management, but losses remain possible due to the volatile nature of crypto markets.

This article is for educational and informational purposes only.

It does not constitute financial, investment, or tax advice.

Cryptocurrency investing carries risk, and past performance does not guarantee future results. Always consult your own advisor before making investment decisions.

Web3 promises to revolutionize the internet by decentralizing control, empowering users with data ownership, and eliminating middlemen. The technology offers improved security, higher user autonomy, and innovative ways to interact with digital assets. With the Web3 market value expected to reach $81.5 billion by 2030, the potential seems limitless. Yet anyone who's interacted with blockchain products knows the uncomfortable truth: Web3 user experience often feels more like punishment than promise. From nerve-wracking first crypto transactions to confusing wallet popups and sudden unexplained fees, Web3 products still have a long way to go before achieving mainstream adoption. If you ask anyone in Web3 what the biggest hurdle for mass adoption is, UX is more than likely to be the answer. This comprehensive guide explores why Web3 UX remains significantly inferior to Web2 experiences in 2025, examining the core challenges, their implications, and how platforms like Token Metrics are bridging the gap between blockchain complexity and user-friendly crypto investing.

To understand Web3's UX challenges, we must first recognize what users expect based on decades of Web2 evolution. Web2, the "read-write" web that started in 2004, enhanced internet engagement through user-generated content, social media platforms, and cloud-based services with intuitive interfaces that billions use daily without thought.

Web2 applications provide seamless experiences: one-click logins via Google or Facebook, instant account recovery through email, predictable transaction costs, and familiar interaction patterns across platforms. Users have become accustomed to frictionless digital experiences that just work.

Web3, by contrast, introduces entirely new paradigms requiring users to manage cryptographic wallets, understand blockchain concepts, navigate multiple networks, pay variable gas fees, and take full custody of their assets. This represents a fundamental departure from familiar patterns, creating immediate friction.

The first interaction with most decentralized applications asks users to "Connect Wallet." If you don't have MetaMask or another compatible wallet, you're stuck before even beginning. This creates an enormous barrier to entry where Web2 simply asks for an email address. Setting up a Web3 wallet requires understanding seed phrases—12 to 24 random words that serve as the master key to all assets. Users must write these down, store them securely, and never lose them, as there's no "forgot password" option. One mistake means permanent loss of funds.
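The 12-to-24 word range is not arbitrary. Assuming the wallet follows the widely used BIP-39 standard, each word encodes 11 bits, and the phrase covers the wallet's entropy plus a short checksum. A minimal sketch of that arithmetic:

```python
def mnemonic_word_count(entropy_bits: int) -> int:
    """Number of words in a BIP-39 mnemonic for a given entropy size."""
    if entropy_bits not in (128, 160, 192, 224, 256):
        raise ValueError("BIP-39 allows 128, 160, 192, 224, or 256 bits of entropy")
    checksum_bits = entropy_bits // 32          # one checksum bit per 32 entropy bits
    return (entropy_bits + checksum_bits) // 11  # each word encodes 11 bits


for bits in (128, 160, 192, 224, 256):
    print(bits, "bits ->", mnemonic_word_count(bits), "words")
```

A 12-word phrase therefore protects 128 bits of entropy and a 24-word phrase protects 256 bits, which is why losing or exposing the phrase is equivalent to losing or exposing the wallet itself.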

Most DeFi platforms and crypto wallets nowadays still have cumbersome and confusing interfaces for wallet creation and management. The registration process, which in Web2 takes seconds through social login options, becomes a multi-step educational journey in Web3.

Most challenges in UX/UI design for blockchain stem from lack of understanding of the technology among new users, designers, and industry leaders. Crypto jargon and complex concepts of the decentralized web make it difficult to grasp product value and master new ways to manage funds. Getting typical users to understand complicated blockchain ideas represents one of the main design challenges. Concepts like wallets, gas fees, smart contracts, and private keys must be streamlined without compromising security or usefulness—a delicate balance few projects achieve successfully.

Blockchain itself is a complex technology that takes significant study to understand fully. Web3 tries to turn this specialized domain knowledge into general-purpose applications that novices can use to complete tasks successfully. The earliest blockchain products were mostly built by experts for experts, which produced extreme pain points, accessibility problems, and complex user flows.

Another common headache in Web3 is managing assets and applications across multiple blockchains. Today, it's not uncommon for users to interact with Ethereum, Polygon, Solana, or several Layer 2 solutions—all in a single session. Unfortunately, most products require users to manually switch networks in wallets, manually add new networks, or rely on separate bridges to transfer assets. This creates fragmented and confusing experiences where users must understand which network each asset lives on and how to move between them. Making users distinguish between different networks creates unnecessary cognitive burden. In Web2, users never think about which server hosts their data—it just works. Web3 forces constant network awareness, breaking the illusion of seamless interaction.

Transaction costs in Web3 are variable, unpredictable, and often shockingly expensive. Users encounter sudden, unexplained fees that can range from cents to hundreds of dollars depending on network congestion. There's no way to know the exact cost before initiating a transaction, which creates anxiety and hesitation. Most Web3 applications run on public chains where every user competes for the same limited throughput, so gas fees climb as activity grows, an unsustainable model as more users adopt applications. Users shouldn't have to worry about high gas fees every time they act. Web2 transactions happen at predictable costs or are free to users, with businesses absorbing payment processing fees. Web3's variable cost structure creates friction at every transaction.
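To make the fee problem concrete, here is a minimal sketch of how an application could turn raw gas numbers into a dollar range before the user confirms. It assumes an Ethereum-style fee market in which the fee is the gas limit multiplied by the sum of a base fee and a priority fee. The function names, the `surge_factor` parameter, and every number in the example are illustrative assumptions, not values from any real wallet or from Token Metrics.

```python
from dataclasses import dataclass

GWEI_PER_ETH = 1_000_000_000  # 1 ETH = 10**9 gwei


@dataclass(frozen=True)
class FeeQuote:
    typical_usd: float    # estimate at the current base fee
    congested_usd: float  # estimate if the base fee rises before inclusion


def estimate_fee_usd(gas_limit: int, base_fee_gwei: float,
                     priority_fee_gwei: float, eth_price_usd: float) -> float:
    """Estimate a transaction fee in USD from gas parameters."""
    fee_eth = gas_limit * (base_fee_gwei + priority_fee_gwei) / GWEI_PER_ETH
    return fee_eth * eth_price_usd


def quote_range(gas_limit: int, base_fee_gwei: float, priority_fee_gwei: float,
                eth_price_usd: float, surge_factor: float = 2.0) -> FeeQuote:
    """Return a typical and a congested estimate.

    surge_factor is a hypothetical multiplier on the base fee, used only to
    show the user how far the cost could move.
    """
    typical = estimate_fee_usd(gas_limit, base_fee_gwei,
                               priority_fee_gwei, eth_price_usd)
    congested = estimate_fee_usd(gas_limit, base_fee_gwei * surge_factor,
                                 priority_fee_gwei, eth_price_usd)
    return FeeQuote(round(typical, 2), round(congested, 2))


if __name__ == "__main__":
    # 21,000 gas is the cost of a plain ETH transfer; the prices are made up.
    quote = quote_range(21_000, 30.0, 2.0, 3_000.0)
    print(f"Estimated network fee: ${quote.typical_usd:.2f} "
          f"(up to ${quote.congested_usd:.2f} if the network is busy)")
```

Showing a range rather than a single number does not remove the variability, but it replaces a surprise with an expectation, which is closer to what Web2 users are used to.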

In Web2, mistakes are forgivable. Sent money to the wrong person? Contact support. Made a typo? Edit or cancel. Web3 offers no such mercy. Blockchain's immutability means transactions are permanent—send crypto to the wrong address and it's gone forever. This creates enormous anxiety around every action. Users must triple-check addresses (long hexadecimal strings impossible to memorize), verify transaction details, and understand that one mistake could cost thousands. The nerve-wracking experience of making first crypto transactions drives many users away permanently.
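An interface can take some of the triple-checking off the user. The sketch below assumes Ethereum-style addresses (`0x` followed by 40 hexadecimal characters) and a hypothetical saved-contact flow. It only checks the format and compares against a known address; it does not verify a checksum, and the helper names are invented for illustration.

```python
import re

# Ethereum-style address: "0x" followed by exactly 40 hex characters.
ADDRESS_PATTERN = re.compile(r"0x[0-9a-fA-F]{40}")


def is_well_formed(address: str) -> bool:
    """Format check only; this does not prove the address belongs to anyone."""
    return ADDRESS_PATTERN.fullmatch(address) is not None


def shorten(address: str, visible: int = 6) -> str:
    """Show the first and last characters so a human can compare them."""
    return f"{address[:2 + visible]}...{address[-visible:]}"


def confirm_recipient(entered: str, expected: str) -> str:
    """Return a plain-language message for a hypothetical confirmation screen."""
    if not is_well_formed(entered):
        return ("This does not look like a valid address. "
                "Check for missing or extra characters.")
    if entered.lower() != expected.lower():
        return (f"Address {shorten(entered)} does not match "
                f"your saved contact {shorten(expected)}.")
    return f"Sending to {shorten(entered)}. This cannot be reversed."


if __name__ == "__main__":
    saved = "0x" + "ab" * 20
    print(confirm_recipient("0x123", saved))
    print(confirm_recipient(saved, saved))
```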

Web2 platforms offer customer service: live chat, email support, phone numbers, and dispute resolution processes. Web3's decentralized nature eliminates these safety nets. There's no one to call when things go wrong, no company to reverse fraudulent transactions, no support ticket system to resolve issues. This absence of recourse amplifies fear and reduces trust. Users accustomed to consumer protections find Web3's "code is law" philosophy terrifying rather than empowering, especially when their money is at stake.

Web3 applications often provide cryptic error messages that technical users struggle to understand, let alone mainstream audiences. "Transaction failed" without explanation, "insufficient gas" without context, or blockchain-specific error codes mean nothing to average users. Good UX requires clear, actionable feedback. Web2 applications excel at this—telling users exactly what went wrong and how to fix it. Web3 frequently leaves users confused, frustrated, and unable to progress.
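One way to close the feedback gap is a thin translation layer between raw node or wallet errors and what the user sees. The sketch below is illustrative only: the error fragments are examples of strings such systems commonly emit, not an exhaustive or authoritative list, and the wording of each message is an assumption.

```python
# Map fragments of raw error text to plain-language guidance.
# The fragments are illustrative examples, not a complete catalogue.
FRIENDLY_MESSAGES = {
    "insufficient funds": ("Your balance is too low to cover the amount plus "
                           "the network fee. Add funds or send less."),
    "out of gas": ("The transaction ran out of its fee budget. "
                   "Try again with a higher gas limit."),
    "nonce too low": ("An earlier transaction from this wallet is already "
                      "confirmed. Refresh and try again."),
    "user rejected": "You cancelled the request in your wallet. Nothing was sent.",
}

DEFAULT_MESSAGE = "The transaction did not go through. Try again in a few minutes."


def explain(raw_error: str) -> str:
    """Return an actionable message for a raw error string."""
    lowered = raw_error.lower()
    for fragment, message in FRIENDLY_MESSAGES.items():
        if fragment in lowered:
            return message
    return DEFAULT_MESSAGE


if __name__ == "__main__":
    print(explain("insufficient funds for gas * price + value"))
    print(explain("execution reverted"))
```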

Crypto designs are easily recognizable by dark backgrounds, pixel art, and Web3 color palettes. But when hundreds of products have the same mysterious look, standing out while maintaining blockchain identity becomes challenging. More problematically, there are no established UX patterns for Web3 interactions. Unlike Web2, where conventions like hamburger menus, shopping carts, and navigation patterns are universal, Web3 reinvents wheels constantly. Every application handles wallet connections, transaction confirmations, and network switching differently, forcing users to relearn basic interactions repeatedly.

The problem with most DeFi startups and Web3 applications is that they're fundamentally developer-driven rather than consumer-friendly. When blockchain products first launched, they were created by technical experts who didn't invest effort in user experience and usability. This technical-first approach persists today. Products prioritize blockchain purity, decentralization orthodoxy, and feature completeness over simplicity and accessibility. The result: powerful tools that only experts can use, excluding the masses these technologies purportedly serve.

The Web3 revolution caught UI/UX designers by surprise. The Web3 community values privacy and anonymity, making traditional user research challenging. How do you design for someone you don't know and who deliberately stays anonymous? Researching without compromising user privacy becomes complex, yet dedicating time to deep user exploration remains essential for building products that resonate with actual needs rather than developer assumptions.

Despite years of development and billions in funding, Web3 UX remains problematic for several structural reasons:

Despite challenges, innovative solutions are emerging to bridge the Web3 UX gap:

While many Web3 UX challenges persist, platforms like Token Metrics demonstrate that sophisticated blockchain functionality can coexist with excellent user experience. Token Metrics has established itself as a leading crypto trading and analytics platform by prioritizing usability without sacrificing power.

The ultimate success of Web3 hinges on user experience. No matter how revolutionary the technology, it will remain niche if everyday people find it too confusing, intimidating, or frustrating. Gaming, FinTech, digital identity, social media, and publishing will likely become Web3-enabled within the next 5 to 10 years—but only if UX improves dramatically.

UX as Competitive Advantage: Companies embracing UX early see fewer usability issues, higher retention, and more engaged users. UX-driven companies continually test assumptions, prototype features, and prioritize user-centric metrics like ease-of-use, task completion rates, and satisfaction—core measures of Web3 product success.

Design as Education: Clear, comprehensible Web3 design helps educate newcomers, deliver effortless experiences, and build trust in technology. Design becomes the bridge between innovation and adoption.

Convergence with Web2 Patterns: Successful Web3 applications increasingly adopt familiar Web2 patterns while maintaining decentralized benefits underneath. This convergence represents the path to mass adoption—making blockchain invisible to end users who benefit from its properties without confronting its complexity.

Web3 UX remains significantly inferior to Web2 in 2025 due to fundamental challenges: complex onboarding, technical jargon, multi-chain fragmentation, unpredictable fees, irreversible errors, lack of support, poor feedback, inconsistent patterns, developer-centric design, and constrained user research. These aren't superficial problems solvable through better visual design—they stem from blockchain's architectural realities and the ecosystem's technical origins. However, they're also not insurmountable. Innovative solutions like account abstraction, email-based onboarding, gasless transactions, and unified interfaces are emerging.

Platforms like Token Metrics demonstrate that Web3 functionality can be delivered through Web2-familiar experiences. By prioritizing user needs over technical purity, abstracting complexity without sacrificing capability, and maintaining intuitive interfaces, Token Metrics shows the path forward for the entire ecosystem.

For Web3 to achieve its transformative potential, designers and developers must embrace user-centric principles, continuously adapting to users' needs rather than forcing users to adapt to technology. The future belongs to platforms that make blockchain invisible—where users experience benefits without confronting complexity.

As we progress through 2025, the gap between Web2 and Web3 UX will narrow, driven by competition for mainstream users, maturing design standards, and recognition that accessibility determines success. The question isn't whether Web3 UX will improve—it's whether improvements arrive fast enough to capture the massive opportunity awaiting blockchain technology.

For investors navigating this evolving landscape, leveraging platforms like Token Metrics that prioritize usability alongside sophistication provides a glimpse of Web3's user-friendly future—where powerful blockchain capabilities enhance lives without requiring technical expertise, patience, or tolerance for poor design.

Web3 promises to revolutionize the internet by decentralizing control, empowering users with data ownership, and eliminating middlemen. The technology offers improved security, higher user autonomy, and innovative ways to interact with digital assets. With the Web3 market value expected to reach $81.5 billion by 2030, the potential seems limitless. Yet anyone who's interacted with blockchain products knows the uncomfortable truth: Web3 user experience often feels more like punishment than promise. From nerve-wracking first crypto transactions to confusing wallet popups and sudden unexplained fees, Web3 products still have a long way to go before achieving mainstream adoption. If you ask anyone in Web3 what the biggest hurdle for mass adoption is, UX is more than likely to be the answer. This comprehensive guide explores why Web3 UX remains significantly inferior to Web2 experiences in 2025, examining the core challenges, their implications, and how platforms like Token Metrics are bridging the gap between blockchain complexity and user-friendly crypto investing.

To understand Web3's UX challenges, we must first recognize what users expect based on decades of Web2 evolution. Web2, the "read-write" web that started in 2004, enhanced internet engagement through user-generated content, social media platforms, and cloud-based services with intuitive interfaces that billions use daily without thought.

Web2 applications provide seamless experiences: one-click logins via Google or Facebook, instant account recovery through email, predictable transaction costs, and familiar interaction patterns across platforms. Users have become accustomed to frictionless digital experiences that just work.

Web3, by contrast, introduces entirely new paradigms requiring users to manage cryptographic wallets, understand blockchain concepts, navigate multiple networks, pay variable gas fees, and take full custody of their assets. This represents a fundamental departure from familiar patterns, creating immediate friction.

The first interaction with most decentralized applications asks users to "Connect Wallet." If you don't have MetaMask or another compatible wallet, you're stuck before even beginning. This creates an enormous barrier to entry where Web2 simply asks for an email address.

Setting up a Web3 wallet requires understanding seed phrases—12 to 24 random words that serve as the master key to all assets. Users must write these down, store them securely, and never lose them, as there's no "forgot password" option. One mistake means permanent loss of funds.
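The 12-to-24-word range is not arbitrary. Assuming the wallet follows the widely used BIP-39 scheme (an assumption, since the text above does not name a standard), each word encodes 11 bits from a 2,048-word list, and the phrase carries the underlying entropy plus a short checksum. A small worked calculation:

```python
BITS_PER_WORD = 11  # 2,048-word list: 2**11 = 2048
VALID_LENGTHS = (12, 15, 18, 21, 24)


def entropy_bits(word_count: int) -> int:
    """Entropy encoded by a BIP-39 style phrase of the given length.

    Total bits = entropy + checksum, where the checksum is entropy / 32 bits,
    so entropy = total * 32 / 33.
    """
    if word_count not in VALID_LENGTHS:
        raise ValueError(f"unsupported phrase length: {word_count}")
    total_bits = word_count * BITS_PER_WORD
    return total_bits * 32 // 33


if __name__ == "__main__":
    for words in VALID_LENGTHS:
        print(f"{words} words carry {entropy_bits(words)} bits of entropy")
```

That strength is exactly why there is no "forgot password" fallback: the phrase is the key itself, not a credential checked against a server.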

Modern crypto wallets embrace account abstraction enabling social recovery (using trusted contacts to restore access), seedless wallet creation via Multi-Party Computation, and biometric logins. These features make self-custody accessible without sacrificing security.

Forward-looking approaches tie Web3 wallets to email credentials. Companies like Magic and Web3Auth create non-custodial wallets behind familiar email login interfaces using multi-party computation techniques, removing seed phrases from the user experience entirely.

Some platforms absorb transaction costs or implement Layer 2 solutions dramatically reducing fees, creating predictable cost structures similar to Web2.

Progressive platforms abstract blockchain complexity, presenting familiar Web2-like experiences while handling Web3 mechanics behind the scenes. Users interact through recognizable patterns without needing to understand underlying technology.

Treating UX as a competitive advantage, focusing on design early, and converging with Web2 patterns are critical strategies for adoption. Designing for education and familiarity helps build trust, so that blockchain becomes an invisible part of daily digital interactions.

Web3 UX remains significantly inferior to Web2 in 2025 due to fundamental challenges: complex onboarding, technical jargon, multi-chain fragmentation, unpredictable fees, irreversible errors, lack of support, poor feedback, inconsistent patterns, developer-centric design, and constrained user research. These stem from blockchain's architectural realities and the technical origins of the ecosystem. However, emerging solutions like account abstraction, email onboarding, gasless transactions, and unified interfaces demonstrate that blockchain’s power can be delivered through familiar and accessible user experiences.

Platforms like Token Metrics exemplify how prioritizing user needs and abstracting complexity enables mainstream adoption. To succeed, designers and developers must focus on user-centric principles, continuously adapting technology to meet user expectations rather than forcing users to adapt to blockchain complexities. The future belongs to platforms that make blockchain invisible, delivering benefits seamlessly and intuitively. As 2025 progresses, the gap between Web2 and Web3 UX will narrow, driven by competition, standardization, and the recognition that accessibility is key to success. Leveraging platforms like Token Metrics provides a glimpse of this user-friendly future, where powerful blockchain capabilities enhance everyday digital life without requiring technical expertise or patience.

The prediction revolution is transforming crypto investing in 2025. From AI-powered price prediction platforms to blockchain-based event markets, today's tools help investors forecast everything from token prices to election outcomes with unprecedented accuracy. With billions in trading volume and cutting-edge AI analytics, these platforms are reshaping how we predict, trade, and profit from future events. Whether you're forecasting the next 100x altcoin or betting on real-world outcomes, this comprehensive guide explores the top prediction tools dominating 2025.

Before diving in, it's crucial to distinguish between two types of prediction platforms:

Both serve valuable but different purposes. Let's explore the top tools in each category.

Token Metrics stands as the premier AI-driven crypto research and investment platform, scanning over 6,000 tokens daily to provide data-backed predictions and actionable insights. With a user base of 110,000+ crypto traders and $8.5 million raised from 3,000+ investors, Token Metrics has established itself as the industry's most comprehensive prediction tool.

Unlike basic charting tools or single-metric analyzers, Token Metrics combines time series data, media news, regulator activities, coin events like forks, and traded volumes across exchanges to optimize forecasting results. The platform's proven track record and comprehensive approach make it indispensable for serious crypto investors in 2025.

Best For: Investors and traders seeking AI-powered crypto price predictions, portfolio optimization, and early altcoin discovery.

Polymarket dominates the event prediction market space with unmatched liquidity and diverse betting opportunities.

What Sets It Apart: Polymarket proved its forecasting superiority when it accurately predicted election outcomes that traditional polls missed. The platform's user-friendly interface makes blockchain prediction markets accessible to mainstream audiences.

Kalshi has surged from 3.3% market share last year to 66% by September 2025, overtaking Polymarket as the trading volume leader.

Best For: U.S. residents seeking regulated prediction markets with crypto deposit options and diverse event contracts.

For traders demanding instant settlement and minimal fees, Drift BET represents the cutting edge of prediction markets on Solana.

Why It Matters: Leveraging Solana's near-instant transaction finality, Drift BET solves scalability issues faced by Ethereum-based prediction markets, with low transaction fees making smaller bets feasible across a wider audience.

Launched in 2018, Augur was the first decentralized prediction market, pioneering blockchain-based forecasting and innovative settlement methods secured by the REP token.

Legacy Impact: Augur v1 settled around $20 million in bets—impressive for 2018-19. Though its DAO has dissolved, Augur's technological innovations influence the DeFi sphere.

With a market cap of $463 million, Gnosis is the biggest prediction market project by market capitalization.

Ecosystem Approach: Founded in 2015, Gnosis evolved into a multifaceted ecosystem covering decentralized trading, wallet services, and infrastructure tools beyond prediction markets.

Smart investors use Token Metrics to identify promising cryptocurrencies, then use prediction markets like Polymarket or Kalshi to hedge positions or speculate on specific events.

Example Strategy: Use Token Metrics to identify a token with strong Trader Grade and bullish AI signals. Build a position through AI trading, then use prediction markets to bet on price milestones or events, monitoring alerts for exit points. This blends AI-driven predictions with market-based event forecasting.

The era of blind speculation is over. Between AI-powered platforms like Token Metrics analyzing thousands of data points per second and blockchain-based prediction markets aggregating collective wisdom, today's investors have unprecedented tools for forecasting the future. Token Metrics leads the charge in crypto price prediction with its comprehensive AI-driven approach, while platforms like Polymarket and Kalshi dominate event-based forecasting. Together, they represent a new paradigm where data, algorithms, and collective intelligence converge to illuminate tomorrow's opportunities.

Whether you're hunting the next 100x altcoin or betting on real-world events, 2025's prediction platforms put the power of foresight in your hands. The question isn't whether to use these tools—it's how quickly you can integrate them into your strategy.

Disclaimer: This article is for informational purposes only and does not constitute financial advice. All investing involves risk, including potential loss of capital. Price predictions and ratings are provided for informational purposes and may not reflect actual future performance. Always conduct thorough research and consult qualified professionals before making financial decisions.

Why It Matters: By leveraging Solana's near-instant transaction finality, Drift BET solves many scalability issues faced by Ethereum-based prediction markets, with low transaction fees making smaller bets feasible for wider audiences.

Launched in 2018, Augur was the first decentralized prediction market, pioneering blockchain-based forecasting and innovative methods for settlement secured by the REP token.

Legacy Impact: Augur v1 settled around $20 million in bets—impressive for 2018-19. While the DAO has dissolved, Augur's technological innovations now permeate the DeFi sphere.

With a market cap of $463 million, Gnosis is the biggest prediction market project by market capitalization.

Ecosystem Approach: Founded in 2015, Gnosis evolved into a multifaceted ecosystem encompassing decentralized trading, wallet services, and infrastructure tools beyond mere prediction markets.

Smart investors often use Token Metrics for identifying which cryptocurrencies to invest in, then leverage prediction markets like Polymarket or Kalshi to hedge positions or speculate on specific price targets and events.

Example Strategy:

This combines the best of AI-driven price prediction with market-based event forecasting.

Market Growth Trajectory: The prediction market sector is projected to reach $95.5 billion by 2035, with underlying derivatives integrating with DeFi protocols.

Key Growth Drivers:

What's Coming: Token Metrics Evolution—Expect deeper AI agent integration, automated portfolio management, and enhanced moonshot discovery as machine learning models become more sophisticated.

Prediction Market Expansion: Kalshi aims to integrate with every major crypto app within 12 months, while tokenization of positions and margin trading will create new financial primitives.

Cross-Platform Integration: Future platforms will likely combine Token Metrics-style AI prediction with Polymarket-style event markets in unified interfaces.

DeFi Integration: The prediction market derivatives layer is set to integrate with DeFi protocols to create more complex financial products.

→ Token Metrics - Unmatched AI analytics, moonshot discovery, and comprehensive scoring

The era of blind speculation is over. Between AI-powered platforms like Token Metrics analyzing thousands of data points per second and blockchain-based prediction markets aggregating collective wisdom, today's investors have unprecedented tools for forecasting the future. Token Metrics leads the charge in crypto price prediction with its comprehensive AI-driven approach, while platforms like Polymarket and Kalshi dominate event-based forecasting. Together, they represent a new paradigm where data, algorithms, and collective intelligence converge to illuminate tomorrow's opportunities.

Whether you're hunting the next 100x altcoin or betting on real-world events, 2025's prediction platforms put the power of foresight in your hands. The question isn't whether to use these tools—it's how quickly you can integrate them into your strategy.



If you've heard phrases like "the S&P 500 is up today" or "crypto indices are gaining popularity," you've encountered indices in action. But what are indices, exactly, and why do millions of investors rely on them? This guide breaks down everything you need to know about indices, from traditional stock market benchmarks to modern crypto applications.

An index (plural: indices or indexes) is a measurement tool that tracks the performance of a group of assets as a single metric. Think of it as a portfolio formula that selects specific investments, assigns them weights, and updates on a regular schedule to represent a market, sector, or strategy.
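To make the "portfolio formula" idea concrete, here is a minimal sketch of one common approach, market-cap weighting. The asset symbols, market caps, returns, and the starting level of 1,000 are all hypothetical values chosen for illustration; real index providers apply additional rules (float adjustments, caps, divisors) that are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    symbol: str           # hypothetical ticker
    market_cap: float     # hypothetical market cap
    period_return: float  # hypothetical return over one period (0.10 = +10%)


def cap_weights(assets):
    """Weight each asset by its share of the basket's total market cap."""
    total = sum(a.market_cap for a in assets)
    return {a.symbol: a.market_cap / total for a in assets}


def index_return(assets, weights):
    """The index return is the weighted average of constituent returns."""
    return sum(weights[a.symbol] * a.period_return for a in assets)


basket = [
    Asset("AAA", 600.0, 0.10),
    Asset("BBB", 300.0, -0.05),
    Asset("CCC", 100.0, 0.20),
]

weights = cap_weights(basket)  # selection + weighting
level = 1000.0 * (1 + index_return(basket, weights))  # new index level

print(weights)
print(round(level, 2))
```

The largest asset dominates the result here, which is the usual trade-off of cap weighting: the index follows the market's own sizing rather than treating every constituent equally.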

Indices serve as benchmarks that answer questions like:

Important distinction: An index itself is just a number—like a thermometer reading. To actually invest, you need an index fund or index product that holds the underlying assets to replicate that index's performance.

Every index follows a systematic approach built on three core components:

The most established category tracks equity performance:

Track fixed-income securities:

Monitor raw materials and resources:

The newest category tracks digital asset performance:

Instead of researching and buying dozens of individual stocks or cryptocurrencies, one index investment gives you exposure to an entire market. If you buy an S&P 500 index fund, you instantly own pieces of 500 companies—from Apple and Microsoft to Coca-Cola and JPMorgan Chase.

This diversification dramatically reduces single-asset risk. If one company fails, it represents only a small fraction of your total investment.

Traditional financial advisors typically charge 1-2% annually to actively pick investments. Index funds charge just 0.03-0.20% because they simply follow preset rules rather than paying expensive analysts and portfolio managers.

Over decades, this cost difference compounds significantly. A 1% fee might seem small, but it can reduce your retirement savings by 25% or more over 30 years.
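A quick way to see the compounding effect is to compare a lump sum grown with and without the fee. The sketch below assumes a hypothetical 7% gross annual return, yearly compounding, and a fee charged as a flat percentage of assets; actual results depend on returns, contribution patterns, and how fees are assessed.

```python
def final_value(initial, gross_return, annual_fee, years):
    """Grow a lump sum at (gross_return - annual_fee), compounded yearly."""
    return initial * (1 + gross_return - annual_fee) ** years


def fee_drag(gross_return, annual_fee, years):
    """Fraction of the no-fee ending balance that is lost to fees."""
    no_fee = final_value(1.0, gross_return, 0.0, years)
    with_fee = final_value(1.0, gross_return, annual_fee, years)
    return 1 - with_fee / no_fee


# Hypothetical inputs: 7% gross return over 30 years.
drag_advisor = fee_drag(0.07, 0.01, 30)    # 1% annual fee
drag_index = fee_drag(0.07, 0.0005, 30)    # 0.05% annual fee

print(f"1% fee drag over 30 years:    {drag_advisor:.1%}")
print(f"0.05% fee drag over 30 years: {drag_index:.1%}")
```

Under these assumptions the 1% fee costs roughly a quarter of the ending balance, while the index-fund-style fee costs well under 2%. A higher fee or a longer horizon widens the gap further.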

Research consistently shows that 80-90% of professional fund managers fail to beat simple index funds over 10-15 year periods. By investing in an index fund, you capture the market's average return minus a small fee, which has historically beaten most active strategies after costs.

Index investing eliminates the need to:

Markets test investors' emotions. Fear drives selling at bottoms; greed drives buying at tops. Index investing removes these emotional triggers—the formula decides what to own based on rules, not feelings.

Cryptocurrency markets face unique challenges that make indices particularly valuable:

Traditional indices stay fully invested through bull and bear markets alike. If the S&P 500 drops 30%, your index fund drops 30%. Regime-switching crypto indices add adaptive risk management:

This approach aims to provide "heads you win, tails you don't lose as much": participating when conditions warrant while stepping aside when markets turn south.
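The switching rule itself can be sketched in a few lines. This is an illustrative model only: the regime labels, the `USD-STABLE` symbol, the basket weights, and the sell-off returns are hypothetical, and how a real product generates its bullish or bearish signal is not described here.

```python
def target_allocation(regime, basket_weights, stable_symbol="USD-STABLE"):
    """Return target portfolio weights for a given regime signal."""
    if regime == "bullish":
        return dict(basket_weights)   # hold the full crypto basket
    if regime == "bearish":
        return {stable_symbol: 1.0}   # step aside into stablecoins
    raise ValueError(f"unknown regime: {regime!r}")


def portfolio_return(allocation, asset_returns):
    """Weighted return; assets with no listed return (the stablecoin) count as 0%."""
    return sum(w * asset_returns.get(sym, 0.0) for sym, w in allocation.items())


# Hypothetical basket and a hypothetical sharp sell-off.
basket = {"AAA": 0.5, "BBB": 0.3, "CCC": 0.2}
selloff = {"AAA": -0.30, "BBB": -0.40, "CCC": -0.20}

passive_result = portfolio_return(target_allocation("bullish", basket), selloff)
switched_result = portfolio_return(target_allocation("bearish", basket), selloff)

print(f"fully invested: {passive_result:.1%}")
print(f"in stablecoins: {switched_result:.1%}")
```

The comparison assumes the bearish signal fired before the sell-off. In practice signals can lag or misfire, so a switching index can also miss part of a rebound.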

Choose your focus: Total stock market, S&P 500, international, or bonds

Select a provider: Vanguard, Fidelity, Schwab, or iShares offer excellent low-cost options

Open a brokerage account: Most platforms have no minimums and free trading

Buy and hold: Invest regularly and leave it alone for years

Identify your strategy: Passive broad exposure or adaptive regime-switching

Research index products: Look for transparent holdings, clear fee structures, and published methodologies

Review the details: Check rebalancing frequency, custody model, and supported funding options

Start small: Test the platform and process before committing large amounts

Monitor periodically: Track performance but avoid overtrading

Example: Token Metrics Global 100 Index

Token Metrics offers a regime-switching crypto index that holds the top 100 cryptocurrencies during bullish market signals and moves fully to stablecoins when conditions turn bearish. With weekly rebalancing, transparent holdings displayed in treemaps and tables, and a complete transaction log, it exemplifies the modern approach to crypto index investing.

The platform features embedded self-custodial wallets, one-click purchasing (typically completed in 90 seconds), and clear fee disclosure before confirmation—lowering the operational barriers that often prevent investors from accessing diversified crypto strategies.

Indices are measurement tools that track groups of assets, and index funds make those measurements investable. Whether you're building a retirement portfolio with stock indices or exploring crypto indices with adaptive risk management, the core benefits remain consistent: diversification, lower costs, emotional discipline, and simplified execution.

For most investors, index-based strategies deliver better risk-adjusted returns than attempting to pick individual winners. As Warren Buffett famously recommended, "Put 10% of the cash in short-term government bonds and 90% in a very low-cost S&P 500 index fund."

The principle behind that advice, broad and low-cost exposure held with discipline, carries over from stocks and bonds to the emerging world of cryptocurrency indices.


You've probably seen professional investors discuss tracking entire markets or specific sectors without the need to purchase countless individual assets. The concept behind this is indices—powerful tools that offer a broad yet targeted market view. In 2025, indices have advanced from simple benchmarks to sophisticated investment vehicles capable of adapting dynamically to market conditions, especially in the evolving crypto landscape.

A trading index, also known as a market index, is a statistical measure that tracks the performance of a selected group of assets. Think of it as a basket containing multiple securities, weighted according to specific rules, designed to represent a particular segment of the market or a strategy. Indices serve as benchmarks allowing investors to:

Unlike individual stocks or cryptocurrencies, indices themselves are not directly tradable assets. Instead, they are measurement tools that financial products like index funds, ETFs, or crypto indices replicate to provide easier access to markets.

Famous indices such as the S&P 500, Dow Jones Industrial Average, and Nasdaq Composite each follow particular methodologies for selecting and weighting their constituent assets.

Indices typically undergo periodic rebalancing—quarterly, annually, or based on specific triggers—to keep their composition aligned with their intended strategy as markets evolve.

The crypto market has adapted and innovated on traditional index concepts. Crypto indices track baskets of digital assets, offering exposure to broad markets or specific sectors like DeFi, Layer-1 protocols, or metaverse tokens.

What sets crypto indices apart in 2025 is their ability to operate transparently on-chain. Unlike traditional indices, which can lag in updates, crypto indices can rebalance frequently (sometimes weekly) and display current holdings and transactions in real time.

A typical crypto index might track the top 100 cryptocurrencies by market cap, automatically updating rankings and weights to keep pace with the rapid narrative shifts and asset rotations common in crypto markets. Holding a diversified basket in this way mitigates the risks associated with individual coin failures or narrative collapses.
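As a rough illustration of how a top-N index could refresh its membership at each rebalance, the sketch below ranks assets by market cap, keeps the top N, and reports which assets entered or left. The symbols and market caps are hypothetical, N is set to 3 instead of 100 for readability, and cap weighting is an assumption rather than a description of any specific product.

```python
def top_n_weights(market_caps, n):
    """Select the n largest assets by market cap and weight them by cap share."""
    ranked = sorted(market_caps.items(), key=lambda kv: kv[1], reverse=True)[:n]
    total = sum(cap for _, cap in ranked)
    return {sym: cap / total for sym, cap in ranked}


def rebalance_changes(old_weights, new_weights):
    """Return (added, removed) symbols between two rebalances."""
    added = sorted(set(new_weights) - set(old_weights))
    removed = sorted(set(old_weights) - set(new_weights))
    return added, removed


# Hypothetical market caps on two consecutive rebalance dates.
last_week = {"AAA": 500.0, "BBB": 300.0, "CCC": 150.0, "DDD": 50.0}
this_week = {"AAA": 480.0, "BBB": 320.0, "CCC": 40.0, "DDD": 200.0}

old = top_n_weights(last_week, 3)
new = top_n_weights(this_week, 3)
added, removed = rebalance_changes(old, new)

print("new weights:", new)
print("added:", added, "removed:", removed)
```

Because membership follows the ranking, an asset that collapses drops out at the next rebalance without any manual decision, which is the mechanism behind the diversification benefit described above.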

Research suggests that over 80% of active fund managers underperform their benchmarks over a decade. For individual investors, beating the market is even more challenging. Indices eliminate the need for exhaustive research, constant monitoring, and managing numerous assets, saving time while offering broad market exposure.

Passive indices have a drawback: they remain fully invested during both bull and bear markets. When markets decline sharply, index values fall with them, which may not suit investors seeking downside protection.

This led to the development of active, rules-based strategies that adjust exposure based on market regimes, blending diversification with risk management.

Regime-switching indices dynamically alter their asset allocations depending on market conditions. They identify different regimes—bullish or bearish—and adjust holdings accordingly:

This sophisticated approach combines the benefits of broad index exposure with downside risk mitigation, offering a more adaptable investment strategy.
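One way to quantify the downside mitigation is to compare maximum drawdown, the largest peak-to-trough decline, for a fully invested path and a regime-switched path. The return series and the invested/not-invested flags below are hypothetical, and time spent in stablecoins is assumed to earn 0%.

```python
def equity_curve(returns, invested_flags=None, start=100.0):
    """Compound period returns; periods flagged False sit in stablecoins (0% assumed)."""
    if invested_flags is None:
        invested_flags = [True] * len(returns)
    value = start
    curve = [value]
    for r, invested in zip(returns, invested_flags):
        value *= 1 + (r if invested else 0.0)
        curve.append(value)
    return curve


def max_drawdown(curve):
    """Largest fractional decline from any running peak."""
    peak = curve[0]
    worst = 0.0
    for v in curve:
        peak = max(peak, v)
        worst = max(worst, (peak - v) / peak)
    return worst


# Hypothetical index returns and a hypothetical regime signal per period.
returns = [0.10, -0.20, -0.25, 0.15, 0.10]
signals = [True, True, False, False, True]  # False = bearish, hold stablecoins

passive_curve = equity_curve(returns)
switched_curve = equity_curve(returns, signals)

print(f"passive max drawdown:  {max_drawdown(passive_curve):.0%}")
print(f"switched max drawdown: {max_drawdown(switched_curve):.0%}")
```

In this made-up path the switched strategy still absorbs the first decline before its signal turns, then sits out both a further drop and the start of the recovery. That is the trade-off to expect: shallower drawdowns in exchange for sometimes missing early rebounds.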

The TM Global 100 index from Token Metrics exemplifies advanced index strategies tailored for crypto in 2025. It is a rules-based, systematic index that tracks the top 100 cryptocurrencies by market cap during bullish phases, and automatically shifts fully to stablecoins in bearish conditions.

This index rebalances weekly, operates under fully transparent rules, and is available via one-click purchase through a secure, self-custodial wallet. It adapts swiftly to market changes while reducing operational complexity and risk.

Designed for both passive and active traders, it offers broad exposure, risk management, and operational simplicity—perfect for those seeking disciplined yet flexible crypto exposure.